Jupyterlab: Getting OSError: [Errno 24] Too many open files

Describe the bug

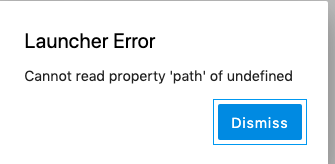

When opening new consoles, I often (though not always) get the following error:

Copy-pasted, that's:

Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/web.py", line 1592, in _execute
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 1133, in run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 1141, in run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/sessions/handlers.py", line 73, in post
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 1133, in run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 1141, in run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/sessions/sessionmanager.py", line 79, in create_session
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 1133, in run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 1141, in run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/sessions/sessionmanager.py", line 92, in start_kernel_for_session
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 1133, in run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 326, in wrapper
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/kernels/kernelmanager.py", line 160, in start_kernel
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/jupyter_client/multikernelmanager.py", line 110, in start_kernel
    km.start_kernel(**kwargs)
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/jupyter_client/manager.py", line 240, in start_kernel
    self.write_connection_file()
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/jupyter_client/connect.py", line 472, in write_connection_file
    kernel_name=self.kernel_name
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/jupyter_client/connect.py", line 98, in write_connection_file
    sock = socket.socket()
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 151, in __init__
OSError: [Errno 24] Too many open files

[I 14:57:20.667 LabApp] Adapting to protocol v5.0 for kernel 80f5b454-304b-4f77-ae2f-72b1f60bb9b6
[E 14:57:20.669 LabApp] Uncaught exception GET /api/kernels/80f5b454-304b-4f77-ae2f-72b1f60bb9b6/channels?session_id=c9436bb4-8747-41d3-911c-4c4a24da7575&token=efea1c3040a707f1504d45fe9c9e3ba0c1767cbee36f2bd1 (::1)
HTTPServerRequest(protocol='http', host='localhost:8888', method='GET', uri='/api/kernels/80f5b454-304b-4f77-ae2f-72b1f60bb9b6/channels?session_id=c9436bb4-8747-41d3-911c-4c4a24da7575&token=efea1c3040a707f1504d45fe9c9e3ba0c1767cbee36f2bd1', version='HTTP/1.1', remote_ip='::1')
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/websocket.py", line 546, in _run_callback
    result = callback(*args, **kwargs)
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/kernels/handlers.py", line 276, in open
    self.create_stream()
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/kernels/handlers.py", line 130, in create_stream
    self.channels[channel] = stream = meth(self.kernel_id, identity=identity)
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/jupyter_client/multikernelmanager.py", line 33, in wrapped
    r = method(*args, **kwargs)
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/jupyter_client/ioloop/manager.py", line 22, in wrapped
    socket = f(self, *args, **kwargs)
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/jupyter_client/connect.py", line 553, in connect_iopub
    sock = self._create_connected_socket('iopub', identity=identity)
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/jupyter_client/connect.py", line 543, in _create_connected_socket
    sock = self.context.socket(socket_type)
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/zmq/sugar/context.py", line 146, in socket
    s = self._socket_class(self, socket_type, **kwargs)
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/zmq/sugar/socket.py", line 59, in __init__
    super(Socket, self).__init__(*a, **kw)
  File "zmq/backend/cython/socket.pyx", line 328, in zmq.backend.cython.socket.Socket.__init__
zmq.error.ZMQError: Too many open files

Troubleshoot Output

(base) ➜ ~ jupyter troubleshoot
$PATH:
    /Users/Nick/anaconda3/bin
    /Users/Nick/anaconda3/bin
    /Users/Nick/anaconda3/condabin
    /usr/local/bin
    /usr/bin
    /bin
    /usr/sbin
    /sbin
    /users/nick/github/barrio_networks/code/modules
    /Library/TeX/texbin
    /opt/X11/bin
    /usr/local/git/bin
sys.path:
    /Users/Nick/anaconda3/bin
    /users/nick/github/barrio_networks/code/modules
    /Users/Nick/anaconda3/lib/python37.zip
    /Users/Nick/anaconda3/lib/python3.7
    /Users/Nick/anaconda3/lib/python3.7/lib-dynload
    /Users/Nick/.local/lib/python3.7/site-packages
    /Users/Nick/anaconda3/lib/python3.7/site-packages
    /Users/Nick/anaconda3/lib/python3.7/site-packages/aeosa
sys.executable:
    /Users/Nick/anaconda3/bin/python
sys.version:
    3.7.3 (default, Mar 27 2019, 16:54:48)
    [Clang 4.0.1 (tags/RELEASE_401/final)]
platform.platform():
    Darwin-18.6.0-x86_64-i386-64bit
which -a jupyter:
    /Users/Nick/anaconda3/bin/jupyter
    /Users/Nick/anaconda3/bin/jupyter

pip list:

Package Version

---------------------------------- -----------

alabaster 0.7.12

anaconda-client 1.7.2

anaconda-navigator 1.9.7

anaconda-project 0.8.2

appdirs 1.4.3

appnope 0.1.0

appscript 1.0.1

argh 0.26.2

asn1crypto 0.24.0

astroid 2.2.5

astropy 3.1.2

atomicwrites 1.3.0

attrs 19.1.0

Babel 2.6.0

backcall 0.1.0

backports.os 0.1.1

backports.shutil-get-terminal-size 1.0.0

bash-kernel 0.7.1

beautifulsoup4 4.7.1

bitarray 0.8.3

bkcharts 0.2

black 19.3b0

bleach 3.1.0

bokeh 1.0.4

boto 2.49.0

Bottleneck 1.2.1

certifi 2019.3.9

cffi 1.12.2

chardet 3.0.4

Click 7.0

click-plugins 1.1.1

cligj 0.5.0

cloudpickle 0.8.0

clyent 1.2.2

colorama 0.4.1

conda 4.7.5

conda-build 3.17.8

conda-package-handling 1.3.10

conda-verify 3.1.1

contextlib2 0.5.5

cryptography 2.6.1

cycler 0.10.0

Cython 0.29.6

cytoolz 0.9.0.1

dask 1.1.4

decorator 4.4.0

defusedxml 0.5.0

Deprecated 1.2.5

descartes 1.1.0

distributed 1.26.0

docutils 0.14

entrypoints 0.3

et-xmlfile 1.0.1

fastcache 1.0.2

filelock 3.0.10

Fiona 1.8.4

Flask 1.0.2

future 0.17.1

GDAL 2.3.3

geopandas 0.4.1

gevent 1.4.0

glob2 0.6

gmpy2 2.0.8

greenlet 0.4.15

h5py 2.9.0

heapdict 1.0.0

html5lib 1.0.1

idna 2.8

imageio 2.5.0

imagesize 1.1.0

importlib-metadata 0.0.0

ipykernel 5.1.0

ipython 7.4.0

ipython-genutils 0.2.0

ipywidgets 7.4.2

isort 4.3.16

itsdangerous 1.1.0

jdcal 1.4

jedi 0.13.3

Jinja2 2.10

jsonschema 3.0.1

jupyter 1.0.0

jupyter-client 5.2.4

jupyter-console 6.0.0

jupyter-core 4.4.0

jupyterlab 1.0.0

jupyterlab-code-formatter 0.2.1

jupyterlab-latex 0.4.1

jupyterlab-server 1.0.0rc0

keyring 18.0.0

kiwisolver 1.0.1

lazy-object-proxy 1.3.1

libarchive-c 2.8

lief 0.9.0

livereload 2.6.0

llvmlite 0.28.0

locket 0.2.0

lxml 4.3.2

mapclassify 2.1.0

MarkupSafe 1.1.1

matplotlib 3.0.3

mccabe 0.6.1

mistune 0.8.4

mkl-fft 1.0.10

mkl-random 1.0.2

more-itertools 6.0.0

mpmath 1.1.0

msgpack 0.6.1

multipledispatch 0.6.0

munch 2.3.2

navigator-updater 0.2.1

nbconvert 5.4.1

nbformat 4.4.0

nbsphinx 0.4.2

networkx 2.2

nltk 3.4

nose 1.3.7

notebook 5.7.8

numba 0.43.1

numexpr 2.6.9

numpy 1.16.2

numpydoc 0.8.0

olefile 0.46

openpyxl 2.6.1

packaging 19.0

pandas 0.24.2

pandocfilters 1.4.2

parso 0.3.4

partd 0.3.10

path.py 11.5.0

pathlib2 2.3.3

pathtools 0.1.2

patsy 0.5.1

pep8 1.7.1

pexpect 4.6.0

pickleshare 0.7.5

Pillow 5.4.1

pip 19.0.3

pkginfo 1.5.0.1

pluggy 0.9.0

ply 3.11

port-for 0.3.1

prometheus-client 0.6.0

prompt-toolkit 2.0.9

psutil 5.6.1

ptyprocess 0.6.0

py 1.8.0

pycodestyle 2.5.0

pycosat 0.6.3

pycparser 2.19

pycrypto 2.6.1

pycurl 7.43.0.2

pyflakes 2.1.1

Pygments 2.3.1

pylint 2.3.1

pyodbc 4.0.26

pyOpenSSL 19.0.0

pyparsing 2.3.1

pyproj 1.9.6

pyrsistent 0.14.11

PySocks 1.6.8

pytest 4.3.1

pytest-arraydiff 0.3

pytest-astropy 0.5.0

pytest-doctestplus 0.3.0

pytest-openfiles 0.3.2

pytest-remotedata 0.3.1

python-dateutil 2.8.0

pytz 2018.9

PyWavelets 1.0.2

PyYAML 5.1

pyzmq 18.0.0

QtAwesome 0.5.7

qtconsole 4.4.3

QtPy 1.7.0

requests 2.21.0

rope 0.12.0

Rtree 0.8.3

ruamel-yaml 0.15.46

scikit-image 0.14.2

scikit-learn 0.20.3

scipy 1.2.1

seaborn 0.9.0

Send2Trash 1.5.0

setuptools 40.8.0

Shapely 1.6.4.post2

simplegeneric 0.8.1

singledispatch 3.4.0.3

six 1.12.0

snowballstemmer 1.2.1

sortedcollections 1.1.2

sortedcontainers 2.1.0

soupsieve 1.8

Sphinx 1.8.5

sphinx-autobuild 0.7.1

sphinxcontrib-websupport 1.1.0

spyder 3.3.3

spyder-kernels 0.4.2

SQLAlchemy 1.3.1

stata-kernel 1.10.5

statsmodels 0.9.0

sympy 1.3

tables 3.5.1

tblib 1.3.2

terminado 0.8.1

testpath 0.4.2

toml 0.10.0

toolz 0.9.0

tornado 5.1.1

tqdm 4.31.1

traitlets 4.3.2

unicodecsv 0.14.1

urllib3 1.24.1

watchdog 0.9.0

wcwidth 0.1.7

webencodings 0.5.1

Werkzeug 0.14.1

wheel 0.33.1

widgetsnbextension 3.4.2

wrapt 1.11.1

wurlitzer 1.0.2

xlrd 1.2.0

XlsxWriter 1.1.5

xlwings 0.15.4

xlwt 1.3.0

zict 0.1.4

zipp 0.3.3

conda list:

# packages in environment at /Users/Nick/anaconda3:

#

# Name Version Build Channel

_ipyw_jlab_nb_ext_conf 0.1.0 py37_0

alabaster 0.7.12 py37_0

anaconda 2019.03 py37_0

anaconda-client 1.7.2 py37_0

anaconda-navigator 1.9.7 py37_0

anaconda-project 0.8.2 py37_0

appdirs 1.4.3 pypi_0 pypi

appnope 0.1.0 py37_0 conda-forge

appscript 1.1.0 py37h1de35cc_0

asn1crypto 0.24.0 py37_0

astroid 2.2.5 py37_0 conda-forge

astropy 3.1.2 py37h1de35cc_0 conda-forge

atomicwrites 1.3.0 py37_1

attrs 19.1.0 py37_1

babel 2.6.0 py37_0

backcall 0.1.0 py37_0

backports 1.0 py37_1

backports.os 0.1.1 py37_0

backports.shutil_get_terminal_size 1.0.0 py37_2

bash-kernel 0.7.1 pypi_0 pypi

beautifulsoup4 4.7.1 py37_1

bitarray 0.8.3 py37h1de35cc_0

bkcharts 0.2 py37_0

black 19.3b0 pypi_0 pypi

blas 1.0 mkl

bleach 3.1.0 py37_0

blosc 1.15.0 hd9629dc_0

bokeh 1.0.4 py37_0

boost-cpp 1.70.0 hd59e818_0 conda-forge

boto 2.49.0 py37_0

bottleneck 1.2.1 py37h1d22016_1

bzip2 1.0.6 h1de35cc_5

ca-certificates 2019.1.23 0

cairo 1.14.12 h9d4d9ac_1005 conda-forge

certifi 2019.3.9 py37_0 conda-forge

cffi 1.12.2 py37hb5b8e2f_1

chardet 3.0.4 py37_1

click 7.0 py37_0

click-plugins 1.1.1 py_0 conda-forge

cligj 0.5.0 py_0 conda-forge

cloudpickle 0.8.0 py37_0

clyent 1.2.2 py37_1

colorama 0.4.1 py37_0

conda 4.7.5 py37_0 conda-forge

conda-build 3.17.8 py37_0

conda-env 2.6.0 1

conda-package-handling 1.3.10 py37_0 conda-forge

conda-verify 3.1.1 py37_0

contextlib2 0.5.5 py37_0

cryptography 2.6.1 py37ha12b0ac_0

curl 7.64.0 ha441bb4_2

cycler 0.10.0 py37_0

cython 0.29.6 py37h0a44026_0 conda-forge

cytoolz 0.9.0.1 py37h1de35cc_1

dask 1.1.4 py37_1

dask-core 1.1.4 py37_1

dbus 1.13.6 h90a0687_0

decorator 4.4.0 py37_1

defusedxml 0.5.0 py37_1

deprecated 1.2.5 py_0 conda-forge

descartes 1.1.0 py_3 conda-forge

distributed 1.26.0 py37_1 conda-forge

docutils 0.14 py37_0

entrypoints 0.3 py37_0

et_xmlfile 1.0.1 py37_0

expat 2.2.6 h0a44026_0

fastcache 1.0.2 py37h1de35cc_2

filelock 3.0.10 py37_0

fiona 1.8.4 py37h8e9a8e4_1001 conda-forge

flask 1.0.2 py37_1

fontconfig 2.13.1 h1027ab8_1000 conda-forge

freetype 2.9.1 hb4e5f40_0

freexl 1.0.5 h1de35cc_1002 conda-forge

future 0.17.1 py37_1000 conda-forge

gdal 2.3.3 py37hbe65578_0

geopandas 0.4.1 py_1 conda-forge

geos 3.7.1 h0a44026_1000 conda-forge

get_terminal_size 1.0.0 h7520d66_0

gettext 0.19.8.1 h15daf44_3

gevent 1.4.0 py37h1de35cc_0 conda-forge

giflib 5.1.7 h01d97ff_1 conda-forge

glib 2.56.2 hd9629dc_0

glob2 0.6 py37_1

gmp 6.1.2 hb37e062_1

gmpy2 2.0.8 py37h6ef4df4_2

greenlet 0.4.15 py37h1de35cc_0

h5py 2.9.0 py37h3134771_0

hdf4 4.2.13 0 conda-forge

hdf5 1.10.4 hfa1e0ec_0

heapdict 1.0.0 py37_2

html5lib 1.0.1 py37_0

icu 58.2 h4b95b61_1

idna 2.8 py37_0

imageio 2.5.0 py37_0 conda-forge

imagesize 1.1.0 py37_0

importlib_metadata 0.8 py37_0 conda-forge

intel-openmp 2019.3 199

ipykernel 5.1.0 py37h39e3cac_0

ipython 7.4.0 py37h39e3cac_0

ipython_genutils 0.2.0 py37_0

ipywidgets 7.4.2 py37_0

isort 4.3.16 py37_0 conda-forge

itsdangerous 1.1.0 py37_0

jbig 2.1 h4d881f8_0

jdcal 1.4 py37_0

jedi 0.13.3 py37_0 conda-forge

jinja2 2.10 py37_0

jpeg 9b he5867d9_2

json-c 0.13.1 h1de35cc_1001 conda-forge

jsonschema 3.0.1 py37_0 conda-forge

jupyter 1.0.0 py37_7

jupyter_client 5.2.4 py37_0

jupyter_console 6.0.0 py37_0

jupyter_core 4.4.0 py37_0

jupyterlab 1.0.0 pypi_0 pypi

jupyterlab-code-formatter 0.2.1 pypi_0 pypi

jupyterlab-latex 0.4.1 pypi_0 pypi

jupyterlab-server 1.0.0rc0 pypi_0 pypi

kealib 1.4.10 hecf890f_1003 conda-forge

keyring 18.0.0 py37_0 conda-forge

kiwisolver 1.0.1 py37h0a44026_0

krb5 1.16.1 hddcf347_7

lazy-object-proxy 1.3.1 py37h1de35cc_2

libarchive 3.3.3 h786848e_5

libcurl 7.64.0 h051b688_2

libcxx 4.0.1 hcfea43d_1

libcxxabi 4.0.1 hcfea43d_1

libdap4 3.19.1 hae55d67_1000 conda-forge

libedit 3.1.20181209 hb402a30_0

libffi 3.2.1 h475c297_4

libgdal 2.3.3 h0950a36_0

libgfortran 3.0.1 h93005f0_2

libiconv 1.15 hdd342a3_7

libkml 1.3.0 hed7d534_1010 conda-forge

liblief 0.9.0 h2a1bed3_2

libnetcdf 4.6.1 hd5207e6_2

libpng 1.6.36 ha441bb4_0

libpq 11.2 h051b688_0

libsodium 1.0.16 h3efe00b_0

libspatialindex 1.9.0 h6de7cb9_1 conda-forge

libspatialite 4.3.0a h0cd9627_1026 conda-forge

libssh2 1.8.0 ha12b0ac_4

libtiff 4.0.10 hcb84e12_2

libxml2 2.9.9 hab757c2_0

libxslt 1.1.33 h33a18ac_0

llvmlite 0.28.0 py37h8c7ce04_0

locket 0.2.0 py37_1

lxml 4.3.2 py37hef8c89e_0

lz4-c 1.8.1.2 h1de35cc_0

lzo 2.10 h362108e_2

mapclassify 2.1.0 py_0 conda-forge

markupsafe 1.1.1 py37h1de35cc_0 conda-forge

matplotlib 3.0.3 py37h54f8f79_0

mccabe 0.6.1 py37_1

mistune 0.8.4 py37h1de35cc_0

mkl 2019.3 199

mkl-service 1.1.2 py37hfbe908c_5

mkl_fft 1.0.10 py37h5e564d8_0

mkl_random 1.0.2 py37h27c97d8_0

more-itertools 6.0.0 py37_0

mpc 1.1.0 h6ef4df4_1

mpfr 4.0.1 h3018a27_3

mpmath 1.1.0 py37_0

msgpack-python 0.6.1 py37h04f5b5a_1

multipledispatch 0.6.0 py37_0

munch 2.3.2 py_0 conda-forge

navigator-updater 0.2.1 py37_0

nbconvert 5.4.1 py37_3

nbformat 4.4.0 py37_0

nbsphinx 0.4.2 py_0 conda-forge

ncurses 6.1 h0a44026_1

networkx 2.2 py37_1

nltk 3.4 py37_1

nodejs 11.14.0 h6de7cb9_1 conda-forge

nose 1.3.7 py37_2 conda-forge

notebook 5.7.8 py37_0 conda-forge

numba 0.43.1 py37h6440ff4_0

numexpr 2.6.9 py37h7413580_0

numpy 1.16.2 py37hacdab7b_0

numpy-base 1.16.2 py37h6575580_0

numpydoc 0.8.0 py37_0

olefile 0.46 py37_0

openjpeg 2.3.1 hc1feee7_0 conda-forge

openpyxl 2.6.1 py37_1

openssl 1.1.1b h1de35cc_1 conda-forge

packaging 19.0 py37_0

pandas 0.24.2 py37h0a44026_0 conda-forge

pandoc 2.2.3.2 0

pandocfilters 1.4.2 py37_1

parso 0.3.4 py37_0

partd 0.3.10 py37_1

path.py 11.5.0 py37_0

pathlib2 2.3.3 py37_0

patsy 0.5.1 py37_0

pcre 8.43 h0a44026_0

pep8 1.7.1 py37_0

pexpect 4.6.0 py37_0 conda-forge

pickleshare 0.7.5 py37_0

pillow 5.4.1 py37hb68e598_0

pip 19.0.3 py37_0 conda-forge

pixman 0.34.0 h1de35cc_1003 conda-forge

pkginfo 1.5.0.1 py37_0

pluggy 0.9.0 py37_0

ply 3.11 py37_0

poppler 0.65.0 ha097c24_1

poppler-data 0.4.9 1 conda-forge

proj4 5.2.0 h6de7cb9_1003 conda-forge

prometheus_client 0.6.0 py37_0

prompt_toolkit 2.0.9 py37_0

psutil 5.6.1 py37h1de35cc_0 conda-forge

ptyprocess 0.6.0 py37_0 conda-forge

py 1.8.0 py37_0

py-lief 0.9.0 py37h1413db1_2

pycodestyle 2.5.0 py37_0

pycosat 0.6.3 py37h1de35cc_0

pycparser 2.19 py37_0

pycrypto 2.6.1 py37h1de35cc_9

pycurl 7.43.0.2 py37ha12b0ac_0

pyflakes 2.1.1 py37_0

pygments 2.3.1 py37_0

pylint 2.3.1 py37_0 conda-forge

pyodbc 4.0.26 py37h0a44026_0 conda-forge

pyopenssl 19.0.0 py37_0 conda-forge

pyparsing 2.3.1 py37_0

pyproj 1.9.6 py37h01d97ff_1002 conda-forge

pyqt 5.9.2 py37h655552a_2

pyrsistent 0.14.11 py37h1de35cc_0 conda-forge

pysocks 1.6.8 py37_0

pytables 3.5.1 py37h5bccee9_0

pytest 4.3.1 py37_0 conda-forge

pytest-arraydiff 0.3 py37h39e3cac_0

pytest-astropy 0.5.0 py37_0

pytest-doctestplus 0.3.0 py37_0

pytest-openfiles 0.3.2 py37_0

pytest-remotedata 0.3.1 py37_0

python 3.7.3 h359304d_0

python-dateutil 2.8.0 py37_0

python-libarchive-c 2.8 py37_6

python.app 2 py37_9

pytz 2018.9 py37_0

pywavelets 1.0.2 py37h1d22016_0

pyyaml 5.1 py37h1de35cc_0 conda-forge

pyzmq 18.0.0 py37h0a44026_0

qt 5.9.7 h468cd18_1

qtawesome 0.5.7 py37_1

qtconsole 4.4.3 py37_0

qtpy 1.7.0 py37_1

readline 7.0 h1de35cc_5

requests 2.21.0 py37_0

rope 0.12.0 py37_0

rtree 0.8.3 py37h666c49c_1002 conda-forge

ruamel_yaml 0.15.46 py37h1de35cc_0

scikit-image 0.14.2 py37h0a44026_0 conda-forge

scikit-learn 0.20.3 py37h27c97d8_0

scipy 1.2.1 py37h1410ff5_0

seaborn 0.9.0 py37_0

send2trash 1.5.0 py37_0

setuptools 40.8.0 py37_0 conda-forge

shapely 1.6.4 py37h79c6f3e_1005 conda-forge

simplegeneric 0.8.1 py37_2

singledispatch 3.4.0.3 py37_0

sip 4.19.8 py37h0a44026_0

six 1.12.0 py37_0

snappy 1.1.7 he62c110_3

snowballstemmer 1.2.1 py37_0

sortedcollections 1.1.2 py37_0

sortedcontainers 2.1.0 py37_0

soupsieve 1.8 py37_0 conda-forge

sphinx 1.8.5 py37_0 conda-forge

sphinxcontrib 1.0 py37_1

sphinxcontrib-websupport 1.1.0 py37_1

spyder 3.3.3 py37_0

spyder-kernels 0.4.2 py37_0 conda-forge

sqlalchemy 1.3.1 py37h1de35cc_0 conda-forge

sqlite 3.27.2 ha441bb4_0

stata-kernel 1.10.5 pypi_0 pypi

statsmodels 0.9.0 py37h1d22016_0

sympy 1.3 py37_0 conda-forge

tblib 1.3.2 py37_0

terminado 0.8.1 py37_1 conda-forge

testpath 0.4.2 py37_0

tk 8.6.8 ha441bb4_0 conda-forge

toml 0.10.0 pypi_0 pypi

toolz 0.9.0 py37_0

tornado 5.1.1 pypi_0 pypi

tqdm 4.31.1 py37_1

traitlets 4.3.2 py37_0 conda-forge

unicodecsv 0.14.1 py37_0

unixodbc 2.3.7 h1de35cc_0

urllib3 1.24.1 py37_0

wcwidth 0.1.7 py37_0

webencodings 0.5.1 py37_1

werkzeug 0.14.1 py37_0

wheel 0.33.1 py37_0 conda-forge

widgetsnbextension 3.4.2 py37_0

wrapt 1.11.1 py37h1de35cc_0 conda-forge

wurlitzer 1.0.2 py37_0

xerces-c 3.2.2 h44e365a_1001 conda-forge

xlrd 1.2.0 py37_0

xlsxwriter 1.1.5 py37_0

xlwings 0.15.4 py37_0 conda-forge

xlwt 1.3.0 py37_0

xz 5.2.4 h1de35cc_4

yaml 0.1.7 hc338f04_2

zeromq 4.3.1 h0a44026_3

zict 0.1.4 py37_0

zipp 0.3.3 py37_1

zlib 1.2.11 h1de35cc_3

zstd 1.3.7 h5bba6e5_0

I have also seen these errors. Are you on a Mac? What is the output of `ulimit -n`? If it is a small number (e.g. 256), does the problem persist after you run `ulimit -n 4096`? Note that `ulimit -n` only affects the current shell session, so you will have to either change the limit permanently (OS- and version-dependent) or run this command in your shell startup script.
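For a server started from a Python script, the same limit can be inspected and raised from inside the process with the standard-library `resource` module (Unix only). This is a minimal sketch under that assumption, not a Jupyter setting: `raise_open_file_limit` is a hypothetical helper, and the 4096 target simply mirrors the value suggested in this comment. Like `ulimit -n`, it changes only the soft limit of the current process (inherited by processes it starts afterwards) and never goes above the hard limit.

```python
import resource


def raise_open_file_limit(target: int = 4096) -> tuple:
    """Raise this process's soft RLIMIT_NOFILE toward `target` (hypothetical helper).

    Roughly what `ulimit -n 4096` does for a shell, but scoped to the calling
    Python process and any child processes it starts afterwards.
    """
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    if hard != resource.RLIM_INFINITY:
        # An unprivileged process cannot raise its soft limit above the hard limit.
        target = min(target, hard)
    if soft == resource.RLIM_INFINITY or soft >= target:
        return soft, hard  # already high enough; leave it unchanged
    resource.setrlimit(resource.RLIMIT_NOFILE, (target, hard))
    return resource.getrlimit(resource.RLIMIT_NOFILE)


if __name__ == "__main__":
    print("before:", resource.getrlimit(resource.RLIMIT_NOFILE))
    print("after: ", raise_open_file_limit())
```

On macOS the hard limit is often reported as unlimited (`RLIM_INFINITY`), which is why the sketch caps the target explicitly instead of copying the hard limit into the soft limit.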

I am getting this same error with just one notebook open on Ubuntu 18.04 LTS, using the R kernel.

My `ulimit -n` output was 1024 and I increased it to 2048. It still happened after only about 20 minutes.

Though I guess it could be different? Mine has no connection to opening a console; it happens when the notebook/lab interface is just sitting idle.

It has only been happening since I upgraded the jupyterlab-server package today.

Do you see anything suspicious in any of the processes when you run `ps -aef` (or the other usages listed here)?

@BoPeng, I updated my ulimit to 4096 and haven't had this issue again, but:

$ ps -aef | grep jupyter-lab -m 1 | cut -d ' ' -f 2 | xargs lsof -p | wc -l
2432

That seems like a lot of open files for one notebook and one Jupyter instance on a machine.

I'm having the same problem (running under Arch Linux). It started after the update to version 1 and was not solved by yesterday's update. Also, when I get the error message, one CPU goes to 100% and stays there until I close JupyterLab.

I'm also having the same issue: a single-user server running within JupyterHub, accessing JupyterLab from Safari on macOS 10.14.5. The issue only appeared after updating to v1 yesterday.

Same issue, but with only one notebook open (a rather large one, 16.1 MB), when I tried to save it. High load across all 4 CPUs, averaging around 30%-50% usage.

Ubuntu 18.04, Firefox. I tried setting `ulimit -n 4096` and it seems to be behaving better. Originally it was set to 1024.

Does `ulimit` limit the number of files opened over the life of a shell session, or the number of files open at a given moment? I probably keep the same terminal session open for a long time, ending Jupyter sessions but leaving the shell window in the corner and using it to restart `jupyter lab` later...

@nickeubank, I'm pretty sure it's for the lifetime of the shell session. There are also ways to change this permanently. However, I think this many open files at once must be a bug.
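Whether the limit counts files opened over a lifetime or files open at the same time can be checked directly. The sketch below (Unix only; `demo_nofile_limit` is a hypothetical helper) temporarily lowers the current process's soft `RLIMIT_NOFILE`, opens and closes ten times that many files one at a time, then holds files open until the OS refuses, and finally restores the original limit. It assumes the process starts with well under 256 descriptors open. If the limit were cumulative, step 1 would fail; instead only step 2 fails, with `EMFILE`, the same `[Errno 24] Too many open files` seen in the tracebacks.

```python
import errno
import os
import resource


def demo_nofile_limit(limit: int = 256) -> tuple:
    """Show that RLIMIT_NOFILE caps descriptors open at the same time.

    Temporarily lowers this process's soft limit to `limit`, then restores it.
    Assumes the process currently holds well under `limit` descriptors.
    """
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    resource.setrlimit(resource.RLIMIT_NOFILE, (limit, hard))
    try:
        # 1) Open and close ten times more files than the limit, one at a time.
        for _ in range(limit * 10):
            with open(os.devnull):
                pass  # the descriptor is released as soon as the block exits

        # 2) Hold files open without closing them until the OS refuses.
        held = []
        error_code = None
        try:
            for _ in range(limit * 2):
                held.append(open(os.devnull))
        except OSError as exc:
            error_code = exc.errno  # expected: errno.EMFILE ("Too many open files")
        finally:
            for f in held:
                f.close()
        return len(held), error_code
    finally:
        resource.setrlimit(resource.RLIMIT_NOFILE, (soft, hard))  # restore original limit


if __name__ == "__main__":
    opened, code = demo_nofile_limit()
    print(f"held {opened} files before failing with errno {code} ({errno.errorcode.get(code)})")
```

The setting itself still persists for the shell (or process) that set it, which is consistent with the reply above; what it bounds is how many descriptors are open at once.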

I think I'm still having this issue. If I open one of my large notebooks (~16 MB) and a smaller notebook (~10 KB), even with `ulimit -n 4096`, and work on the large notebook for a while (continually running a cell that generates a plot and shows it without closing it), the issue appears.

I also should have mentioned in the first place that these notebooks are in Julia 0.7. I haven't needed to use Python in a while so I'm not sure if the error is related to the language used or not.

I was working with a combination of bash and Python kernels, so it's safe to say this is not kernel-dependent, then.

(In reply to emc2's comment of Sun, Jul 7, 2019 at 4:38 PM.)

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

https://github.com/jupyterlab/jupyterlab/issues/6727?email_source=notifications&email_token=ACJ4F3OTYDW6O5I7NHKOCWTP6JO55A5CNFSM4H4IESRKYY3PNVWWK3TUL52HS4DFVREXG43VMVBW63LNMVXHJKTDN5WW2ZLOORPWSZGODZLTSYA#issuecomment-509032800,

or mute the thread

https://github.com/notifications/unsubscribe-auth/ACJ4F3KBFE63QXTUOTF4IZ3P6JO55ANCNFSM4H4IESRA

.

Might be related to #4017 and/or jupyter/notebook#3748

I’m experiencing this with my IRkernel. Before the last jupyterlab/pyzmq/… update, everything was fine. Now:

$ lsof 2>/dev/null | grep philipp.angerer | cut -f 1 -d ' ' | sort | uniq -c
     21 cut
     23 grep
   2172 jupyter-l
     31 lsof
    807 R
      4 (sd-pam
     25 sort
      4 ssh-agent
      4 sshd
     52 systemd
     25 tmux
     21 uniq
     48 zsh

900 of these are open ports, 1000 are Unix sockets, ~1200 are libraries.

Update: 3180 ports, 3553 sockets. The numbers keep exploding until the error occurs.
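A breakdown like this can also be collected without `lsof`. On Linux, each entry in `/proc/<pid>/fd` is a symbolic link whose target names what the descriptor refers to, so a short script can tally them. A minimal sketch, Linux only, with a hypothetical helper name; it groups TCP and Unix-domain sockets together (telling them apart would need `/proc/net`), and reading another user's process requires permission. `lsof` also lists memory-mapped files such as shared libraries, which are not descriptors and do not count against `ulimit -n`, so its totals will usually be higher than this tally.

```python
import os
from collections import Counter


def classify_open_fds(pid="self") -> Counter:
    """Count a process's open file descriptors by kind (Linux only, via /proc).

    Each entry in /proc/<pid>/fd is a symlink whose target names the resource:
    'socket:[inode]', 'pipe:[inode]', 'anon_inode:[...]', or a filesystem path.
    """
    fd_dir = f"/proc/{pid}/fd"
    kinds = Counter()
    for name in os.listdir(fd_dir):
        try:
            target = os.readlink(os.path.join(fd_dir, name))
        except OSError:
            # Descriptor closed between listing and reading, e.g. the one
            # listdir() itself used to read the directory.
            continue
        if target.startswith("socket:"):
            kinds["socket"] += 1  # TCP, UDP and Unix-domain sockets alike
        elif target.startswith("pipe:"):
            kinds["pipe"] += 1
        elif target.startswith("/"):
            kinds["file"] += 1  # regular files, devices, directories
        else:
            kinds["other"] += 1  # anon_inode:[eventpoll] and similar
    return kinds


if __name__ == "__main__":
    print(classify_open_fds())
```

Calling it repeatedly with the server's PID (for example `classify_open_fds(12345)`, where 12345 is a placeholder) shows which category is growing.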

I can confirm this issue only happens for notebooks with cells that generate a lot of plot output over a long period of time.

Does it also happen in the classic notebook? If so, it's likely not a JupyterLab issue, but an issue with the kernel and/or the code the kernel is running.

For me, the problem started after the upgrade to JupyterLab v1, in a notebook with Julia code that was unchanged and had been executed without problems in v0.35. There was no Julia update in the meantime. In view of all the other reports, there is pretty strong evidence the problem is related to the JupyterLab upgrade.

(In reply to Jason Grout's comment of Mon, 8 Jul 2019 at 17:21.)

Are you sure that JupyterLab was the only thing upgraded? Often the notebook server is also upgraded, as well as tornado, etc., and those upgrades might be the source of the issues.

That's why I suggest checking, on that exact same system with the now-current packages, whether the problem appears when using the classic notebook (please use the JLab Help menu to open the classic notebook on the exact same server that JLab is using).

You're probably right. I think I remember the server was updated at the same time as JupyterLab.

(In reply to Jason Grout's comment of Mon, 8 Jul 2019 at 17:32.)

Since this is hard to replicate, can anyone (preemptively) suggest what data / output people can collect when it's happening to aid in diagnosing and troubleshooting this?
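One kind of data that could help here is how the server's descriptor count evolves while the problem develops: a steady climb that never comes back down suggests a leak, while a sudden jump points to a burst of activity such as opening consoles. A minimal sampling sketch with hypothetical helper names; it uses the third-party `psutil` package when it is installed (psutil 5.6.1 appears in the reporter's pip list) and otherwise falls back to Linux's `/proc`.

```python
import os
import time

try:
    import psutil  # optional third-party package; works on Linux and macOS
except ImportError:
    psutil = None


def count_fds(pid: int) -> int:
    """Number of file descriptors currently open in process `pid`."""
    if psutil is not None:
        return psutil.Process(pid).num_fds()
    # Linux-only fallback. When pid is this process, the count includes the
    # descriptor listdir() uses to read the directory.
    return len(os.listdir(f"/proc/{pid}/fd"))


def watch_fds(pid: int, interval: float = 60.0, samples: int = 10) -> list:
    """Sample the descriptor count of `pid` every `interval` seconds and print the trend."""
    history = []
    start = time.monotonic()
    for i in range(samples):
        count = count_fds(pid)
        elapsed = time.monotonic() - start
        delta = count - history[-1][1] if history else 0
        history.append((elapsed, count))
        print(f"t={elapsed:7.1f}s  open fds={count:5d}  change={delta:+d}")
        if i < samples - 1:
            time.sleep(interval)
    return history


if __name__ == "__main__":
    watch_fds(os.getpid(), interval=0.1, samples=3)
```

For example, `watch_fds(<PID>, interval=60, samples=30)` would log one line per minute for half an hour; the server's PID can be found with `pgrep -f jupyter-lab`.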

If it's helpful, I can send you the notebook in which it happens, of course. Just let me know.

(In reply to Nick Eubank's comment of Mon, 8 Jul 2019 at 17:42.)

It’s a general problem. I assume the more kernels are running, the faster it happens, but it should be possible to trigger this with most notebooks (maybe it won’t happen if you don’t plot or something).

I've tried using the same notebook for a whole morning, continually regenerating plots, and haven't gotten the same "too many files" error yet. I did open the notebook using the JupyterLab menu as requested.

I have Linux x86, libzmq 3.0.0, and the newest Python packages for everything except matplotlib, which is at 3.0.3 (due to an incompatibility in 3.1).

Back to having problems. No figures have been created in my current session. Does anyone have advice on how to get more meaningful information about the possible source of the problem while the error is occurring?

Also, only one kernel is running:

I'm also getting different error reports:

OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
[E 10:20:08.440 LabApp] Uncaught exception GET /api/contents/github/practicaldatascience/source?content=1&1563117608435 (::1)
HTTPServerRequest(protocol='http', host='localhost:8888', method='GET', uri='/api/contents/github/practicaldatascience/source?content=1&1563117608435', version='HTTP/1.1', remote_ip='::1')
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/web.py", line 1699, in _execute
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 209, in wrapper
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/contents/handlers.py", line 112, in get
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/contents/filemanager.py", line 431, in get
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/contents/filemanager.py", line 313, in _dir_model
OSError: [Errno 24] Too many open files: '/Users/Nick/github/practicaldatascience/source'
[W 10:20:08.441 LabApp] Unhandled error
[E 10:20:08.441 LabApp] { "Host": "localhost:8888", "Connection": "keep-alive", "Authorization": "token 3115048e2c1b948d2e153280145c2fce7803050589d4c888", "Dnt": "1", "X-Xsrftoken": "2|0ca294cf|2763a99108e0f6abff0c53c025e82121|1562689740", "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_5) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.100 Safari/537.36", "Content-Type": "application/json", "Accept": "*/*", "Referer": "http://localhost:8888/lab", "Accept-Encoding": "gzip, deflate, br", "Accept-Language": "en-US,en;q=0.9", "Cookie": "username-localhost-8889=\"2|1:0|10:1562267991|23:username-localhost-8889|44:NGQ3YjU3OTlhMzFhNDViOGJhZjBhNzdhMzYyZDZmYjY=|191c351e768221b47963cf5ec150635ff3788788aba3851e0491ebe4c6dc99d4\"; _xsrf=2|0ca294cf|2763a99108e0f6abff0c53c025e82121|1562689740; username-localhost-8888=\"2|1:0|10:1563117608|23:username-localhost-8888|44:ZDRhZWZmYjUzNWZmNDkxMmFjMmI2ZjY2NGZlZWI5NTY=|372342209030764dbd3b76e009d48657de870afa3ed69e3b29e1aa5d5cceb4b7\"" }
[E 10:20:08.441 LabApp] 500 GET /api/contents/github/practicaldatascience/source?content=1&1563117608435 (::1) 2.32ms referer=http://localhost:8888/lab
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
Exception in callback BaseAsyncIOLoop._handle_events(7, 1)
handle:
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/asyncio/events.py", line 88, in _run
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler
  File "/Users/Nick/anaconda3/lib/python3.7/socket.py", line 212, in accept
OSError: [Errno 24] Too many open files
[E 10:20:18.449 LabApp] Uncaught exception GET /api/contents/github/practicaldatascience/source?content=1&1563117618445 (::1)
HTTPServerRequest(protocol='http', host='localhost:8888', method='GET', uri='/api/contents/github/practicaldatascience/source?content=1&1563117618445', version='HTTP/1.1', remote_ip='::1')
Traceback (most recent call last):
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/web.py", line 1699, in _execute
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/tornado/gen.py", line 209, in wrapper
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/contents/handlers.py", line 112, in get
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/contents/filemanager.py", line 431, in get
  File "/Users/Nick/anaconda3/lib/python3.7/site-packages/notebook/services/contents/filemanager.py", line 313, in _dir_model
OSError: [Errno 24] Too many open files: '/Users/Nick/github/practicaldatascience/source'
[W
10:20:18.450 LabApp] Unhandled error [E 10:20:18.451 LabApp] { "Host": "localhost:8888", "Connection": "keep-alive", "Authorization": "token 3115048e2c1b948d2e153280145c2fce7803050589d4c888", "Dnt": "1", "X-Xsrftoken": "2|0ca294cf|2763a99108e0f6abff0c53c025e82121|1562689740", "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_5) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.100 Safari/537.36", "Content-Type": "application/json", "Accept": "*/*", "Referer": "http://localhost:8888/lab", "Accept-Encoding": "gzip, deflate, br", "Accept-Language": "en-US,en;q=0.9", "Cookie": "username-localhost-8889=\"2|1:0|10:1562267991|23:username-localhost-8889|44:NGQ3YjU3OTlhMzFhNDViOGJhZjBhNzdhMzYyZDZmYjY=|191c351e768221b47963cf5ec150635ff3788788aba3851e0491ebe4c6dc99d4\"; _xsrf=2|0ca294cf|2763a99108e0f6abff0c53c025e82121|1562689740; username-localhost-8888=\"2|1:0|10:1563117618|23:username-localhost-8888|44:YTFjYjI2NDJkNDViNGFiMGI1Yzc3NTdjZGE2N2IzOWQ=|749086c2491525684bf6d8951dc5468fa5e3573e00c00ebcf07e07b1311602df\""

Does anyone have advice on how to get more meaningful information about the possible source of the problem while the error is happening?

Perhaps the following?

- Create a new conda env with only JLab installed. Do not install any extensions or other kernels.

- Reduce your system ulimit to a smaller number, such as 128.

- Try to reproduce the problem. If you can't, add extensions and/or kernels back and repeat.
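Lowering the limit for the server alone, rather than system-wide, can be sketched with the standard resource and subprocess modules. This is a minimal sketch, not a JupyterLab feature: the helper names lowered_nofile and run_with_nofile_limit are hypothetical, and preexec_fn works only on POSIX systems such as macOS and Linux.

```python
import resource
import subprocess
import sys


def lowered_nofile(soft_limit):
    """Return a preexec_fn that lowers the child's soft open-file limit
    (the value `ulimit -n` reports) just before the child program starts."""
    def _apply():
        _, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
        # The soft limit may not exceed the hard limit.
        if hard == resource.RLIM_INFINITY:
            soft = soft_limit
        else:
            soft = min(soft_limit, hard)
        resource.setrlimit(resource.RLIMIT_NOFILE, (soft, hard))
    return _apply


def run_with_nofile_limit(cmd, soft_limit=128, **kwargs):
    """Run cmd under a reduced soft RLIMIT_NOFILE; the parent keeps its own limit."""
    return subprocess.run(cmd, preexec_fn=lowered_nofile(soft_limit), **kwargs)


# Sanity check: a child Python interpreter reports the reduced limit.
probe = [sys.executable, "-c",
         "import resource; print(resource.getrlimit(resource.RLIMIT_NOFILE)[0])"]
result = run_with_nofile_limit(probe, 128, capture_output=True, text=True)
print(result.stdout.strip())
```

To start the server itself under the reduced limit, something like run_with_nofile_limit(["jupyter", "lab"], 128) keeps its log on the terminal; the plain shell equivalent is (ulimit -n 128; jupyter lab). The subprocess documentation warns that preexec_fn is not safe when the parent process uses threads, which is acceptable for a one-off launcher script like this.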

OK, is there any way to see what these thousands of open files are right now, while they're causing the problem? It seems like that might give some pretty clear diagnostic information, if there's a way to see them. Sorry, I'm not an operating system wizard, I'm afraid. :)


These are most likely not 'files' but sockets created by zmq. The lsof command lists open files and sockets, and you can filter its output by the Jupyter process.
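lsof is the right tool for inspecting another process such as the Jupyter server. To see that sockets and regular files draw on the same per-process limit, a small Python sketch can classify the descriptors of the process that runs it. It assumes /dev/fd (or /proc/self/fd on Linux) lists the caller's own descriptors; fd_kinds is a hypothetical helper name.

```python
import os
import socket
import stat
from collections import Counter

# /dev/fd lists the calling process's own descriptors on macOS and most Linux
# systems; /proc/self/fd is the Linux-specific equivalent.
FD_DIR = "/dev/fd" if os.path.isdir("/dev/fd") else "/proc/self/fd"


def fd_kinds(fd_dir=FD_DIR):
    """Count this process's open descriptors by type."""
    kinds = Counter()
    for name in os.listdir(fd_dir):
        try:
            mode = os.fstat(int(name)).st_mode
        except OSError:
            # Typically the descriptor os.listdir itself used, already closed.
            continue
        if stat.S_ISSOCK(mode):
            kinds["socket"] += 1
        elif stat.S_ISREG(mode):
            kinds["file"] += 1
        elif stat.S_ISFIFO(mode):
            kinds["pipe"] += 1
        elif stat.S_ISDIR(mode):
            kinds["dir"] += 1
        else:
            kinds["other"] += 1  # terminals, /dev/null and other special descriptors
    return kinds


# Two new sockets are counted as sockets, not files, yet both use up descriptors.
baseline = fd_kinds()
demo = [socket.socket(), socket.socket()]
print(fd_kinds()["socket"] - baseline["socket"])  # 2
for s in demo:
    s.close()
```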

Thanks @BoPeng.

Here's my full lsof:

Here's the output of @flying-sheep's trick:

(base) ➜ ~ lsof | grep Nick | cut -f 1 -d ' ' | sort | uniq -c

10 AGMServic 7 APFSUserA 69 Activity 8 AdobeCRDa 118 AdobeRead 14 AirPlayUI 6 AlertNoti 45 Antivirus 185 AppleSpel 12 AssetCach 188 Atom 205 Atom\x20H 16 AudioComp 10 CMFSyncAg 36 CalNCServ 84 CalendarA 77 CallHisto 95 Cardhop 55 Citations 11 CloudKeyc 35 CommCente 7 ContactsA 12 Container 8 ContextSe 18 CoreLocat 16 CoreServi 251 Dock 64 DocumentP 656 Dropbox 14 DropboxAc 12 DropboxFo 7 EscrowSec 155 Evernote 24 EvernoteH 42 ExternalQ 8 FMIPClien 92 Finder 55 Freedom 9 FreedomPr 290 GitHub 516 Google 11 IMAutomat 52 IMDPersis 37 IMRemoteU 8 IMTransco 14 Keychain 145 Kindle 15 LaterAgen 27 LocationM 7 LoginUser 75 MTLCompil 203 Messages 667 Microsoft 80 Notificat 22 Notify 25 OSDUIHelp 15 PAH_Exten 66 Preview 10 PrintUITo 17 Protected 53 QuickLook 124 R 106 RdrCEF 12 ReportCra 9 SafariBoo 8 SafariClo 15 SafeEject 11 ScopedBoo 61 Screens 8 SidecarRe 19 Siri 14 SiriNCSer 68 Skim 117 Slack 152 Slack\x20 8 SocialPus 115 Spotlight 72 SystemUIS 68 Terminal 208 Things3 16 Trackball 6 USBAgent 16 UsageTrac 179 UserEvent 79 VTDecoder 9 ViewBridg 17 WiFiAgent 12 WiFiProxy 98 accountsd 16 adprivacy 19 akd 15 appstorea 92 assistant 10 atsd 31 avconfere 15 backgroun 96 bird 27 bzbmenu 44 callservi 7 cdpd 7 cfprefsd 11 chrome_cr 396 cloudd 10 cloudpair 53 cloudphot 7 colorsync 788 com.apple 7 com.flexi 24 commerce 8 coreauthd 8 corespeec 57 corespotl 9 crashpad_ 8 ctkahp 7 ctkd 6 cut 6 dbfsevent 10 deleted 14 diagnosti 6 distnoted 8 dmd 9 familycir 8 fileprovi 8 findmydev 569 firefox 17 fmfd 8 followupd 12 fontd 8 fontworke 80 garcon 6 grep 18 icdd 343 iconservi 48 identitys 18 imagent 12 imklaunch 22 keyboards 22 knowledge 7 loginitem 52 loginwind 13 lsd 8 lsof 9 mapspushd 9 mdworker 169 mdworker_ 10 mdwrite 11 media-ind 7 mediaremo 23 nbagent 12 networkse 16 nsurlsess 169 nsurlstor 6 org.spark 12 parsecd 7 pboard 9 pbs 64 photoanal 24 photolibr 9 pkd 3341 plugin-co 13 printtool 325 python3.7 54 quicklook 23 rapportd 16 recentsd 11 reversete 56 routined 25 secd 13 secinitd 11 sharedfil 60 sharingd 7 silhouett 10 siriknowl 49 soagent 7 softwareu 7 spindump_ 7 storeacco 16 storeasse 9 storedown 8 storelega 16 storeuid 120 suggestd 9 swcd 19 talagent 14 tccd 36 trustd 15 universal 12 useractiv 19 usernoted 12 videosubs

Unlike his, I don't have a "jupyter-lab" process. Looks like it's hiding in plugin-container?

Here is the lsof of plugin-container files (lsof | grep plugin-co > lsof_plugins.txt):

(base) ➜ ~ lsof | grep plugin-co | grep Fonts | wc -l

2636

It seems like the problem may be that every font on my system is open?

I'm working under JupyterHub, and I have noticed the same problem since I upgraded to JLab 1.0.0.

I have an intuition about what is generating such behavior. It seems that the new status bar is sending update queries at a fixed rate to check the status of the environment. Each of these queries opens a socket that REMAINS open until the session ends. When the number of open sockets reaches the ulimit... boom! Errno 24.

Does anybody know if there is a way to deactivate the status bar update queries?
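The mechanism suggested above (a socket opened per poll and never closed) can be reproduced in isolation. The sketch below is a generic illustration, not JupyterLab's status-bar code: it temporarily lowers this process's soft limit to 256, the macOS default mentioned in this thread, then compares a loop that keeps every socket with one that closes each socket before opening the next. The function names are hypothetical.

```python
import contextlib
import errno
import resource
import socket


@contextlib.contextmanager
def nofile_limit(soft_limit):
    """Temporarily set this process's soft RLIMIT_NOFILE (what `ulimit -n` shows)."""
    old_soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    new_soft = soft_limit if hard == resource.RLIM_INFINITY else min(soft_limit, hard)
    resource.setrlimit(resource.RLIMIT_NOFILE, (new_soft, hard))
    try:
        yield
    finally:
        resource.setrlimit(resource.RLIMIT_NOFILE, (old_soft, hard))


def leaky_polls(n):
    """Each 'poll' opens a socket and keeps it (the hypothesized leak).
    Returns the errno of the first failure, or None if all n polls succeed."""
    kept = []
    try:
        for _ in range(n):
            kept.append(socket.socket())
        return None
    except OSError as exc:
        return exc.errno
    finally:
        for s in kept:  # clean up so the demo itself does not leak
            s.close()


def tidy_polls(n):
    """Each 'poll' closes its socket before the next one starts."""
    for _ in range(n):
        with socket.socket():
            pass


with nofile_limit(256):
    print(leaky_polls(1000) == errno.EMFILE)  # True: Errno 24 once 256 descriptors are in use
    tidy_polls(1000)                          # never holds more than one extra socket
```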

~updating to 1.0.2 fixed this issue for me (at least for now)...~ Never mind, the problem is back. 1.0.2 has the same problem.

@jtmatamalas would that explain the fact the files being open are all fonts? https://github.com/jupyterlab/jupyterlab/issues/6727#issuecomment-511214271

Does anyone else have ideas for workarounds?

We are considering moving from Jupyter notebooks to JupyterLab for a class of 100 students this fall, and this issue will affect more than half of them.

@firasm Same! Well, 50 students. But agreed: this is a big usability issue that doesn't seem to be getting much attention, though it seems like we're getting some diagnostic traction. I'll post on the Jupyter Discourse.

I did try setting this in the terminal: ulimit -n 1024

I will report back on whether it works.

I can't see why 'plugin-container' would be JupyterLab. That's an assumption above that I think merits checking.

@jasongrout Well, it didn't seem that I had any JupyterLab processes, though JupyterLab was clearly open (you can see my full dump in my posts above). Also, JupyterLab was the only place I was getting the error.

But I know nothing about web development tools, so I don't really know. I'm just trying to move the diagnosis forward. As I posted a while ago, I'm happy to collect any additional data a dev suggests would be useful next time it happens.

Here's what I tried to narrow things down more:

# Create a new conda environment:

conda create -n del-lsof jupyterlab

conda activate del-lsof

ulimit -n

ulimit -n gave 4096 on my system. That's the maximum number of open file descriptors (files, sockets, pipes) a single process may have.

Then I ran the following summary command before starting up JupyterLab to get a baseline for my system.

lsof 2>/dev/null | grep <USERNAME> | cut -f 1 -d ' ' | sort | uniq -c | sort -n

Note that plugin-co is already there with many open files.
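For repeated baselines, the same summary can be produced from Python. This sketch swaps grep <USERNAME> for lsof -u <USERNAME>, which restricts output to that user's processes instead of matching the name anywhere on a line (including file paths), so totals may differ slightly from the pipeline. count_by_command and lsof_summary are hypothetical names, and lsof must be on the PATH.

```python
import subprocess
from collections import Counter


def count_by_command(lsof_text):
    """Tally lsof rows by their first column (COMMAND), like the
    `cut -f 1 -d ' ' | sort | uniq -c | sort -n` part of the pipeline."""
    counts = Counter()
    for line in lsof_text.splitlines():
        fields = line.split()
        if not fields or fields[0] == "COMMAND":  # skip blank lines and the header
            continue
        counts[fields[0]] += 1
    # Ascending by count, like `sort -n`; ties broken by name.
    return sorted(counts.items(), key=lambda item: (item[1], item[0]))


def lsof_summary(user):
    """Summarize `lsof -u USER`; stderr is discarded, like `2>/dev/null`."""
    result = subprocess.run(["lsof", "-u", user],
                            stdout=subprocess.PIPE, stderr=subprocess.DEVNULL,
                            text=True)
    return count_by_command(result.stdout)
```

For example, lsof_summary("Nick") returns (command, count) pairs in ascending order. lsof truncates COMMAND to 9 characters by default, which is why names such as plugin-co appear cut off in the dumps in this thread.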

Then I started JupyterLab and ran my lsof count again in another terminal. There was a new python3 process with about 85 open handles, which matches the more specific summary command for the jlab process:

ps | grep jupyter-lab -m 1 | cut -d ' ' -f 1 | xargs lsof -p | wc -l
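A Python sketch of that per-process count follows. It reads /proc/<pid>/fd where available (Linux) and otherwise falls back to lsof -p, as in the command above. open_fd_count is a hypothetical name, and the two sources are not strictly comparable because lsof also lists entries such as the working directory and memory-mapped libraries.

```python
import os
import subprocess


def open_fd_count(pid):
    """Count the open descriptors of process `pid`.

    On Linux, /proc/<pid>/fd holds one entry per descriptor. Elsewhere (e.g.
    macOS) fall back to `lsof -p PID`, which also lists non-descriptor rows
    such as cwd, txt and mapped libraries, so expect a somewhat larger number.
    """
    proc_dir = f"/proc/{pid}/fd"
    if os.path.isdir(proc_dir):
        return len(os.listdir(proc_dir))
    result = subprocess.run(["lsof", "-p", str(pid)],
                            stdout=subprocess.PIPE, stderr=subprocess.DEVNULL,
                            text=True)
    rows = result.stdout.splitlines()
    return max(len(rows) - 1, 0)  # minus the header row that `wc -l` would count
```

The PID can come from pgrep -f jupyter-lab, which exists on both macOS and Linux, for example open_fd_count(int(subprocess.check_output(["pgrep", "-f", "jupyter-lab"]).split()[0])).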

Opening a notebook only bumped the jlab process's open file handles up to 115 or so.

Furthermore, looking up the PID of plugin-co and then grepping the output of ps for that process ID shows that plugin-co is /Applications/Firefox.app/Contents/MacOS/plugin-container.app/Contents/MacOS/plugin-container. It makes sense that Firefox would have files open on the system.
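Looking up what a PID actually is, as done here for plugin-co, can also be scripted. This is a minimal sketch, assuming Linux's /proc/<pid>/cmdline when present and ps -p <pid> -o command= otherwise (the trailing = suppresses the header row); command_line is a hypothetical helper name.

```python
import os
import subprocess


def command_line(pid):
    """Return the full command line of process `pid`, or '' if it is unknown."""
    cmdline = f"/proc/{pid}/cmdline"
    if os.path.exists(cmdline):
        # Linux: arguments are separated (and terminated) by NUL bytes.
        with open(cmdline, "rb") as fh:
            raw = fh.read()
        return raw.replace(b"\0", b" ").decode(errors="replace").strip()
    # Elsewhere (e.g. macOS): ask ps for the command column without a header.
    result = subprocess.run(["ps", "-p", str(pid), "-o", "command="],
                            stdout=subprocess.PIPE, stderr=subprocess.DEVNULL,
                            text=True)
    return result.stdout.strip()
```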

Indeed, shutting down Firefox makes plugin-co disappear from the lsof summary, and reopening Firefox and opening tabs makes it come back.

Thanks @jasongrout for helping! Let me know if you'd like me to run anything while I'm seeing the errors.

So is JupyterLab causing the browser it's open in to do something weird?

BTW, re: replication: I think the consensus is that this doesn't happen immediately. In my case, I usually find I hit this after I've left jupyterlab open overnight...

Agreed, it takes a couple of hours for the errors to start. The default ulimit on my system (macOS 10.14.6) was 256.

Doing the same debugging steps would help. Get a baseline for your system before starting jlab, then monitor that lsof summary over time to see what is increasing. I would do it both with and without jlab open, to see what difference jlab makes.
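Comparing two snapshots by eye is error-prone, so a small helper can list which processes grew. This sketch assumes each snapshot was saved with one COUNT NAME pair per line, as uniq -c emits them (for example by redirecting the summary pipeline to before.txt and later to after.txt); the flattened single-line dumps pasted in this thread would need their line breaks restored first. parse_uniq_c and growth are hypothetical names, and the sample numbers below are made up.

```python
from collections import Counter


def parse_uniq_c(text):
    """Parse `uniq -c` output, one 'COUNT NAME' pair per line, into a Counter."""
    counts = Counter()
    for line in text.splitlines():
        parts = line.split(None, 1)
        if len(parts) == 2 and parts[0].isdigit():
            counts[parts[1].strip()] += int(parts[0])
    return counts


def growth(before_text, after_text):
    """(name, increase) for every process whose count rose, largest increase first."""
    delta = parse_uniq_c(after_text)
    delta.subtract(parse_uniq_c(before_text))
    rising = [(name, n) for name, n in delta.items() if n > 0]
    return sorted(rising, key=lambda item: (-item[1], item[0]))


# Made-up sample snapshots, for illustration only.
before = "  10 Dropbox\n   5 firefox\n"
after = "  10 Dropbox\n  45 firefox\n  81 python3.7\n"
print(growth(before, after))  # [('python3.7', 81), ('firefox', 40)]
```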

That ulimit of 256 is asking for trouble. I would say that needs to be raised no matter what.

OK, I'll set that up and run it overnight tonight.

(To be clear, I don't have to be doing anything during those hours; there's no code running. It just seems to require JupyterLab to be open.)

I would also do it from a clean environment, with no other kernel activity running. In other words, let's try to just have jlab doing things, and not any extra activity from kernels, etc.

> To be clear, I don't have to be _doing_ anything during those hours (there's no code running), it just seems to require it to be open.

Not even a notebook open? Just the single Launcher tab?

And this is a clean jlab with no extensions installed, right? 1.0.4?

Anyway, the point here is to try to eliminate any other sources for file handles to be exhausted, and just have stock JLab with nothing else.

There may be a notebook / kernel open, but no running code.

Will try with all the cleanliness you suggest tonight!


@Zsailer is there a chance this is related to the tornado issue you helped to debug a few months back?

@jasongrout OK, experiment running.

FWIW, my ulimit is apparently 256. I'm working on a Mac and have never touched that setting, so I assume that's the default (unless my terminal (oh-my-zsh) changed it?). If that's the problem, maybe we need a way to bump that number when jupyter lab runs?

But a few people in the comments above have hit this with their limits set to 1024 and 2048...

256 is the default on macOS (10.14.6), and if you just run ulimit -n 1024, it resets the next time you launch Terminal. There are ways to set it persistently system-wide, but I haven't gone to that trouble because the same errors came back when I bumped it up to 1024.
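Because ulimit -n applies only to the shell that ran it and the processes started from it, a launcher script can raise its own limit before starting anything. This is a minimal sketch using the standard resource module; raise_nofile_limit is a hypothetical name and the default target of 4096 is an arbitrary illustration. On macOS, very large targets (above the kernel's per-process maximum) may be rejected even when the hard limit reports as unlimited.

```python
import resource


def raise_nofile_limit(target=4096):
    """Raise this process's soft open-file limit toward `target`, capped at the
    hard limit, and return the resulting soft limit. Never lowers the limit.

    Like `ulimit -n`, this affects only the current process and children it
    starts afterwards; nothing persists to new Terminal sessions.
    """
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    if hard != resource.RLIM_INFINITY:
        target = min(target, hard)
    if soft == resource.RLIM_INFINITY or soft >= target:
        return soft  # already high enough
    resource.setrlimit(resource.RLIMIT_NOFILE, (target, hard))
    return resource.getrlimit(resource.RLIMIT_NOFILE)[0]


print("soft open-file limit:", raise_nofile_limit())
```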

That being said, I haven't had a chance to run the tests as cleanly as you suggest above yet...

My lsof before launching JupyterLab. Note that I had to tweak the Linux command you gave to:

lsof | grep <USERNAME> | cut -f 1 -d ' ' | sort | uniq -c | sort -n

6 dbfsevent 6 distnoted 6 grep 7 APFSUserA 7 ContactsA 7 EscrowSec 7 LoginUser 7 cdpd 7 cfprefsd 7 colorsync 7 ctkd 7 loginitem 7 mediaremo 7 pboard 7 silhouett 7 softwareu 7 storeacco 8 DiskArbit 8 FMIPClien 8 SafariClo 8 SidecarRe 8 SocialPus 8 assertion 8 corespeec 8 ctkahp 8 diskutil 8 dmd 8 findmydev 8 followupd 8 lsof 8 spindump_ 8 storelega 9 FreedomPr 9 SafariBoo 9 ViewBridg 9 crashpad_ 9 familycir 9 fileprovi 9 mapspushd 9 pbs 9 rcd 10 AGMServic 10 CMFSyncAg 10 DiskUnmou 10 IMTransco 10 PrintUITo 10 atsd 10 cloudpair 10 coreauthd 10 deleted 10 mdwrite 10 siriknowl 10 swcd 11 AirPort 11 CloudKeyc 11 IMAutomat 11 ScopedBoo 11 media-ind 11 parsecd 11 pkd 11 reversete 11 sharedfil 12 AssetCach 12 DropboxFo 12 WiFiProxy 12 fontd 12 imklaunch 12 networkse 12 useractiv 12 videosubs 13 Container 13 ReportCra 13 lsd 13 printtool 13 secinitd 14 AirPlayUI 14 DropboxAc 14 Keychain 14 SiriNCSer 14 diagnosti 14 tccd 15 LaterAgen 15 PAH_Exten 15 SafeEject 15 backgroun 15 universal 16 AppleMobi 16 AudioComp 16 CoreServi 16 QuickLook 16 Trackball 16 UsageTrac 16 nsurlsess 16 storeuid 17 Protected 17 Visualize 17 adprivacy 17 adservice 17 fmfd 17 recentsd 17 storeasse 17 talagent 18 CoreLocat 18 WiFiAgent 18 icdd 19 ContextSe 19 Siri 19 avconfere 19 imagent 19 storedown 19 usernoted 21 akd 21 appstorea 22 Notify 22 keyboards 22 knowledge 22 rapportd 23 nbagent 25 EvernoteH 25 photolibr 25 secd 26 LocationM 27 OSDUIHelp 27 bzbmenu 27 commerce 29 mdworker 36 CalNCServ 36 CommCente 36 trustd 36 zsh 42 callservi 43 UIKitSyst 45 Antivirus 45 MTLCompil 47 Disk\x20U 47 VTDecoder 47 soagent 47 studentd 48 garcon 53 IMDPersis 53 loginwind 55 Citations 55 identitys 55 routined 61 Activity 62 sharingd 63 corespotl 65 photoanal 67 IMRemoteU 69 Freedom 70 Skim 73 SystemUIS 75 Terminal 76 Photos 78 Stata 81 CalendarA 82 CallHisto 82 cloudphot 94 Notificat 97 bird 100 Spotlight 103 accountsd 104 assistant 107 Dock 125 suggestd 129 iTunes 135 Finder 137 USBAgent 153 Evernote 164 nsurlstor 167 Messages 169 mdworker_ 179 UserEvent 191 Atom 203 AppleSpel 221 Atom\x20H 247 iconservi 250 Things3 261 GitHub 396 cloudd 490 Papers 507 firefox 539 com.apple 655 Microsoft 687 Dropbox 1536 plugin-co 2463 ath

This is quite odd: I've been running JupyterLab 1.x pretty much since it was released, and this problem just started this weekend! The only change I can think of is that Ubuntu pushed out a kernel update.

@david-waterworth do you mean you just started seeing it on Ubuntu?

The reported issues here by myself and @nickeubank are on macOS - so is this problem now cross-platform?

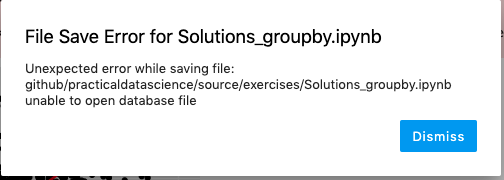

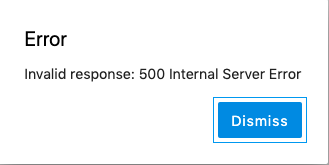

@firasm yes, I've been seeing it all weekend on Ubuntu 18.04 + jupyterlab 1.0.1. It's driving me nuts, because once it starts I can no longer save my work. I didn't actually realise the original reports were macOS, so yeah, this is cross-platform. I originally thought the issue might be Firefox-related, so I switched to Chrome, but got the same issue (plus after a while in Chrome, JupyterLab crashed, although that's probably not browser-related).

And after a while:

OSError: [Errno 12] Cannot allocate memory

There are several Linux reports above (both Arch and Ubuntu), as well as reports from people using different kernels (Python, R, and Julia).


Here's the output after leaving my new clean install open all night, but with NOTHING open in JupyterLab (except the default Launcher tab).

(base) ➜ ~ lsof | grep Nick | cut -f 1 -d ' ' | sort | uniq -c | sort -n

6 AlertNoti 6 cut 6 dbfsevent 6 distnoted 6 grep 7 APFSUserA 7 ContactsA 7 EscrowSec 7 LoginUser 7 cdpd 7 cfprefsd 7 colorsync 7 ctkd 7 loginitem 7 mediaremo 7 pboard 7 silhouett 7 softwareu 7 storeacco 8 DiskArbit 8 FMIPClien 8 SafariClo 8 SidecarRe 8 SocialPus 8 assertion 8 corespeec 8 ctkahp 8 diskutil 8 dmd 8 findmydev 8 followupd 8 lsof 8 spindump_ 8 storelega 9 FreedomPr 9 SafariBoo 9 ViewBridg 9 chrome_cr 9 crashpad_ 9 familycir 9 fileprovi 9 mapspushd 9 mdworker 9 pbs 9 rcd 10 AGMServic 10 CMFSyncAg 10 DiskUnmou 10 IMTransco 10 PrintUITo 10 atsd 10 cloudpair 10 coreauthd 10 deleted 10 mdwrite 10 siriknowl 10 swcd 11 AirPort 11 CloudKeyc 11 IMAutomat 11 ScopedBoo 11 media-ind 11 parsecd 11 pkd 11 reversete 11 sharedfil 12 AssetCach 12 DropboxFo 12 WiFiProxy 12 fontd 12 imklaunch 12 networkse 12 useractiv 12 videosubs 13 Container 13 ReportCra 13 lsd 13 printtool 13 secinitd 14 AirPlayUI 14 DropboxAc 14 Keychain 14 SiriNCSer 14 tccd 15 LaterAgen 15 PAH_Exten 15 SafeEject 15 backgroun 15 diagnosti 15 universal 16 AppleMobi 16 AudioComp 16 CoreServi 16 QuickLook 16 Trackball 16 UsageTrac 16 nsurlsess 16 storeuid 17 Protected 17 Visualize 17 adprivacy 17 adservice 17 fmfd 17 recentsd 17 storeasse 17 talagent 18 CoreLocat 18 WiFiAgent 18 icdd 19 ContextSe 19 Siri 19 avconfere 19 imagent 19 storedown 19 usernoted 21 akd 21 appstorea 21 rapportd 22 Notify 22 keyboards 22 knowledge 23 nbagent 25 EvernoteH 25 photolibr 25 secd 26 LocationM 27 OSDUIHelp 27 bzbmenu 27 commerce 36 CalNCServ 36 CommCente 36 trustd 42 callservi 43 UIKitSyst 45 Antivirus 45 MTLCompil 47 Disk\x20U 47 soagent 47 studentd 48 garcon 53 IMDPersis 53 loginwind 55 Citations 55 identitys 55 routined 55 zsh 56 VTDecoder 61 Activity 62 sharingd 63 corespotl 65 photoanal 66 mdworker_ 67 IMRemoteU 69 Freedom 70 Skim 73 SystemUIS 76 Photos 78 Stata 78 Terminal 81 CalendarA 81 python3.7 82 CallHisto 82 cloudphot 94 Notificat 97 bird 99 accountsd 100 Spotlight 104 assistant 107 Dock 110 iTunes 125 suggestd 135 Finder 137 USBAgent 153 Evernote 164 nsurlstor 167 Messages 179 UserEvent 200 Atom 207 AppleSpel 222 Atom\x20H 247 iconservi 250 Things3 261 GitHub 396 cloudd 468 Google 491 Papers 504 firefox 550 com.apple 655 Microsoft 687 Dropbox 1136 plugin-co 3129 ath

But when I open a notebook, edit it, and try to save, I don't have a problem. It could be the clean environment, or it could be that no notebook was open.

So I'm gonna leave this clean install of jupyter lab sitting around with the notebook open now and report back later.

Nothing yet. :/ Typical of this kind of problem not to crop up when you actually want it to. It's always seemed intermittent, though, so I don't know that that's dispositive yet.

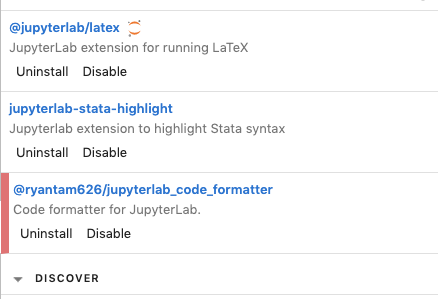

With that said, here's my extension list (in the version I usually use -- testing now in a clean environment), in case anyone else with this problem wants to cross reference:

@ellisonbg

> @Zsailer is there a chance this is related to the tornado issue you helped to debug a few months back?

I've never seen this error specifically, but @nickeubank, it might be worth upgrading to tornado>=6.0.3 and seeing if that fixes your issue (I see your original post says you're running tornado 5.1.1).
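Checking the installed version before and after such an upgrade can be scripted. This is a minimal sketch, assuming Python 3.8+ for importlib.metadata (on the Python 3.7 installs in this thread, import tornado; print(tornado.version) gives the same information). It compares only the numeric parts of version strings and is not a full PEP 440 implementation; the helper names are hypothetical.

```python
import re
from importlib import metadata  # Python 3.8+


def version_tuple(version):
    """'6.0.3' -> (6, 0, 3). Keeps the leading digits of each dot-separated
    part and stops at the first part without any ('6.1b1' -> (6, 1))."""
    numbers = []
    for piece in version.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        numbers.append(int(match.group()))
    return tuple(numbers)


def at_least(installed, minimum):
    """Compare numeric version parts, padding the shorter one with zeros."""
    have, need = version_tuple(installed), version_tuple(minimum)
    width = max(len(have), len(need))
    have += (0,) * (width - len(have))
    need += (0,) * (width - len(need))
    return have >= need


def installed_at_least(package, minimum):
    """True if `package` is installed at a version >= minimum."""
    try:
        return at_least(metadata.version(package), minimum)
    except metadata.PackageNotFoundError:
        return False


print("tornado >= 6.0.3:", installed_at_least("tornado", "6.0.3"))
```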

Upgraded to tornado 6.0.3 (I was on 5.1.1 as well). It did not help.

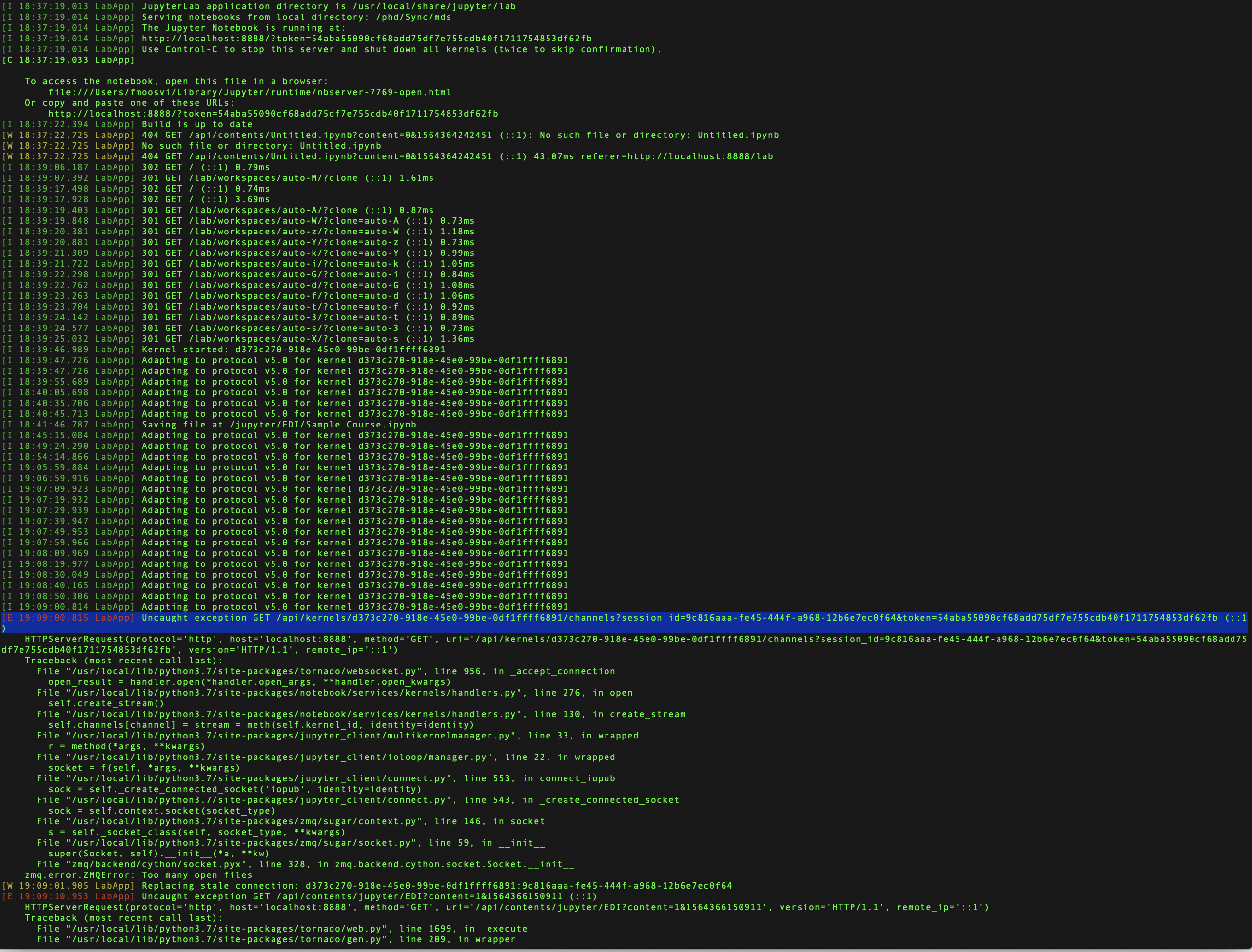

This time, I managed to catch this error just as it was happening:

Terminal output

Firass-15-rMBP:~ fmoosvi$ pip3 install tornado

Requirement already satisfied: tornado in /usr/local/lib/python3.7/site-packages (5.1.1)

Firass-15-rMBP:~ fmoosvi$ pip3 install --upgrade tornado

Collecting tornado

Installing collected packages: tornado

Found existing installation: tornado 5.1.1

Uninstalling tornado-5.1.1:

Successfully uninstalled tornado-5.1.1

Successfully installed tornado-6.0.3

Firass-15-rMBP:~ fmoosvi$ mdsj

[I 18:37:18.993 LabApp] [jupyter_nbextensions_configurator] enabled 0.4.1

[I 18:37:19.005 LabApp] JupyterLab extension loaded from /usr/local/lib/python3.7/site-packages/jupyterlab

[I 18:37:19.006 LabApp] JupyterLab application directory is /usr/local/share/jupyter/lab

[W 18:37:19.008 LabApp] JupyterLab server extension not enabled, manually loading...

[I 18:37:19.013 LabApp] JupyterLab extension loaded from /usr/local/lib/python3.7/site-packages/jupyterlab

[I 18:37:19.013 LabApp] JupyterLab application directory is /usr/local/share/jupyter/lab

[I 18:37:19.014 LabApp] Serving notebooks from local directory: /path/is/redacted

[I 18:37:19.014 LabApp] The Jupyter Notebook is running at:

[I 18:37:19.014 LabApp] http://localhost:8888/?token=

[I 18:37:19.014 LabApp] Use Control-C to stop this server and shut down all kernels (twice to skip confirmation).

[C 18:37:19.033 LabApp]

To access the notebook, open this file in a browser:

file:///Users/fmoosvi/Library/Jupyter/runtime/nbserver-7769-open.html

Or copy and paste one of these URLs:

http://localhost:8888/?token=<REDACTED>

[I 18:37:22.394 LabApp] Build is up to date

[W 18:37:22.725 LabApp] 404 GET /api/contents/Untitled.ipynb?content=0&1564364242451 (::1): No such file or directory: Untitled.ipynb

[W 18:37:22.725 LabApp] No such file or directory: Untitled.ipynb

[W 18:37:22.725 LabApp] 404 GET /api/contents/Untitled.ipynb?content=0&1564364242451 (::1) 43.07ms referer=http://localhost:8888/lab

[I 18:39:06.187 LabApp] 302 GET / (::1) 0.79ms

[I 18:39:07.392 LabApp] 301 GET /lab/workspaces/auto-M/?clone (::1) 1.61ms

[I 18:39:17.498 LabApp] 302 GET / (::1) 0.74ms

[I 18:39:17.928 LabApp] 302 GET / (::1) 3.69ms

[I 18:39:19.403 LabApp] 301 GET /lab/workspaces/auto-A/?clone (::1) 0.87ms

[I 18:39:19.848 LabApp] 301 GET /lab/workspaces/auto-W/?clone=auto-A (::1) 0.73ms

[I 18:39:20.381 LabApp] 301 GET /lab/workspaces/auto-z/?clone=auto-W (::1) 1.18ms

[I 18:39:20.881 LabApp] 301 GET /lab/workspaces/auto-Y/?clone=auto-z (::1) 0.73ms

[I 18:39:21.309 LabApp] 301 GET /lab/workspaces/auto-k/?clone=auto-Y (::1) 0.99ms

[I 18:39:21.722 LabApp] 301 GET /lab/workspaces/auto-i/?clone=auto-k (::1) 1.05ms

[I 18:39:22.298 LabApp] 301 GET /lab/workspaces/auto-G/?clone=auto-i (::1) 0.84ms

[I 18:39:22.762 LabApp] 301 GET /lab/workspaces/auto-d/?clone=auto-G (::1) 1.08ms

[I 18:39:23.263 LabApp] 301 GET /lab/workspaces/auto-f/?clone=auto-d (::1) 1.06ms

[I 18:39:23.704 LabApp] 301 GET /lab/workspaces/auto-t/?clone=auto-f (::1) 0.92ms

[I 18:39:24.142 LabApp] 301 GET /lab/workspaces/auto-3/?clone=auto-t (::1) 0.89ms

[I 18:39:24.577 LabApp] 301 GET /lab/workspaces/auto-s/?clone=auto-3 (::1) 0.73ms

[I 18:39:25.032 LabApp] 301 GET /lab/workspaces/auto-X/?clone=auto-s (::1) 1.36ms

[I 18:39:46.989 LabApp] Kernel started: d373c270-918e-45e0-99be-0df1ffff6891

[I 18:39:47.726 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 18:39:47.726 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 18:39:55.689 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 18:40:05.698 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 18:40:35.706 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 18:40:45.713 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 18:41:46.787 LabApp] Saving file at /jupyter/EDI/Sample Course.ipynb

[I 18:45:15.084 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 18:49:24.290 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 18:54:14.866 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:05:59.884 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:06:59.916 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:07:09.923 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:07:19.932 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:07:29.939 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:07:39.947 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:07:49.953 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:07:59.966 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:08:09.969 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:08:19.977 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:08:30.049 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:08:40.165 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:08:50.306 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[I 19:09:00.814 LabApp] Adapting to protocol v5.0 for kernel d373c270-918e-45e0-99be-0df1ffff6891

[E 19:09:00.815 LabApp] Uncaught exception GET /api/kernels/d373c270-918e-45e0-99be-0df1ffff6891/channels?session_id=9c816aaa-fe45-444f-a968-12b6e7ec0f64&token=

HTTPServerRequest(protocol='http', host='localhost:8888', method='GET', uri='/api/kernels/d373c270-918e-45e0-99be-0df1ffff6891/channels?session_id=9c816aaa-fe45-444f-a968-12b6e7ec0f64&token=

Traceback (most recent call last):

File "/usr/local/lib/python3.7/site-packages/tornado/websocket.py", line 956, in _accept_connection

open_result = handler.open(*handler.open_args, **handler.open_kwargs)

File "/usr/local/lib/python3.7/site-packages/notebook/services/kernels/handlers.py", line 276, in open

self.create_stream()

File "/usr/local/lib/python3.7/site-packages/notebook/services/kernels/handlers.py", line 130, in create_stream

self.channels[channel] = stream = meth(self.kernel_id, identity=identity)

File "/usr/local/lib/python3.7/site-packages/jupyter_client/multikernelmanager.py", line 33, in wrapped

r = method(*args, **kwargs)

File "/usr/local/lib/python3.7/site-packages/jupyter_client/ioloop/manager.py", line 22, in wrapped

socket = f(self, *args, **kwargs)

File "/usr/local/lib/python3.7/site-packages/jupyter_client/connect.py", line 553, in connect_iopub

sock = self._create_connected_socket('iopub', identity=identity)

File "/usr/local/lib/python3.7/site-packages/jupyter_client/connect.py", line 543, in _create_connected_socket

sock = self.context.socket(socket_type)

File "/usr/local/lib/python3.7/site-packages/zmq/sugar/context.py", line 146, in socket

s = self._socket_class(self, socket_type, **kwargs)

File "/usr/local/lib/python3.7/site-packages/zmq/sugar/socket.py", line 59, in __init__

super(Socket, self).__init__(*a, **kw)

File "zmq/backend/cython/socket.pyx", line 328, in zmq.backend.cython.socket.Socket.__init__

zmq.error.ZMQError: Too many open files

[W 19:09:01.905 LabApp] Replacing stale connection: d373c270-918e-45e0-99be-0df1ffff6891:9c816aaa-fe45-444f-a968-12b6e7ec0f64

[E 19:09:10.953 LabApp] Uncaught exception GET /api/contents/jupyter/EDI?content=1&1564366150911 (::1)

HTTPServerRequest(protocol='http', host='localhost:8888', method='GET', uri='/api/contents/jupyter/EDI?content=1&1564366150911', version='HTTP/1.1', remote_ip='::1')

Traceback (most recent call last):

File "/usr/local/lib/python3.7/site-packages/tornado/web.py", line 1699, in _execute

File "/usr/local/lib/python3.7/site-packages/tornado/gen.py", line 209, in wrapper

File "/usr/local/lib/python3.7/site-packages/notebook/services/contents/handlers.py", line 112, in get

File "/usr/local/lib/python3.7/site-packages/notebook/services/contents/filemanager.py", line 431, in get

File "/usr/local/lib/python3.7/site-packages/notebook/services/contents/filemanager.py", line 313, in _dir_model

OSError: [Errno 24] Too many open files: '/path/is/redacted/jupyter/EDI'

[W 19:09:10.954 LabApp] Unhandled error

[E 19:09:10.955 LabApp] {

"Host": "localhost:8888",

"Pragma": "no-cache",

"Accept": "*/*",

"Authorization": "token 54aba55090cf68add75df7e755cdb40f1711754853df62fb",

"X-Xsrftoken": "2|334e1f38|3944eb4731e3366f845f9afcfb0d8789|1564188958",

"Accept-Language": "en-ca",

"Accept-Encoding": "gzip, deflate",

"Cache-Control": "no-cache",

"Content-Type": "application/json",

"User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_6) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/12.1.2 Safari/605.1.15",

"Referer": "http://localhost:8888/lab",

"Connection": "keep-alive",

"Cookie": "username-localhost-8888=\"2|1:0|10:1564366140|23:username-localhost-8888|44:MWFhYmViNWE4NTU4NGNhN2IwMDVhZDhmOTlkNWQ3YmQ=|2834597008995426258d56d33bc4a3e5be1d213eaa3026b020504645c33c6306\"; username-localhost-8889=\"2|1:0|10:1564268254|23:username-localhost-8889|44:NzUzZjcxMWNlNmEzNDUzMmI4ZTYyMjllYTZhNTEwYTI=|d391fdd9ef091b843c6d0591879ec18d8b8edb85d04353b6f2a679c97525854b\"; _xsrf=2|334e1f38|3944eb4731e3366f845f9afcfb0d8789|1564188958"

}

[E 19:09:10.955 LabApp] 500 GET /api/contents/jupyter/EDI?content=1&1564366150911 (::1) 2.69ms referer=http://localhost:8888/lab

Exception in callback BaseAsyncIOLoop._handle_events(7, 1)

handle:

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/asyncio/events.py", line 88, in _run

File "/usr/local/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events

File "/usr/local/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/socket.py", line 212, in accept

OSError: [Errno 24] Too many open files

Exception in callback BaseAsyncIOLoop._handle_events(7, 1)

handle:

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/asyncio/events.py", line 88, in _run

File "/usr/local/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events

File "/usr/local/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/socket.py", line 212, in accept

OSError: [Errno 24] Too many open files

Exception in callback BaseAsyncIOLoop._handle_events(7, 1)

handle:

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/asyncio/events.py", line 88, in _run

File "/usr/local/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events

File "/usr/local/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/socket.py", line 212, in accept

OSError: [Errno 24] Too many open files

Exception in callback BaseAsyncIOLoop._handle_events(7, 1)

handle:

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/asyncio/events.py", line 88, in _run

File "/usr/local/lib/python3.7/site-packages/tornado/platform/asyncio.py", line 138, in _handle_events

File "/usr/local/lib/python3.7/site-packages/tornado/netutil.py", line 260, in accept_handler

File "/usr/local/Cellar/python/3.7.3/Frameworks/Python.framework/Versions/3.7/lib/python3.7/socket.py", line 212, in accept
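`[Errno 24]` is `EMFILE`: the process has used up its per-process allowance of file descriptors, so any call that needs a new one fails, whether that is `socket.accept`, creating a ZMQ socket, or listing a directory. That is why the same error surfaces in several unrelated places in the logs above. A minimal sketch (Unix only, standard library) that reproduces the error safely by temporarily lowering the soft limit of the current Python process; `demo_emfile` is a hypothetical helper name and the limit of 64 is arbitrary.

```python
import errno
import os
import resource


def demo_emfile(limit: int = 64) -> int:
    """Temporarily lower this process's soft RLIMIT_NOFILE, open /dev/null
    until the OS refuses, then close everything and restore the limit.
    Returns the errno of the failure."""
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    # Never raise the limit here; only lower it (or keep it if already small).
    new_soft = limit if soft == resource.RLIM_INFINITY or soft > limit else soft
    opened = []
    resource.setrlimit(resource.RLIMIT_NOFILE, (new_soft, hard))
    try:
        # RLIMIT_NOFILE bounds descriptor numbers, so at most new_soft
        # slots exist; one more open than that is guaranteed to fail.
        for _ in range(new_soft + 1):
            opened.append(os.open(os.devnull, os.O_RDONLY))
    except OSError as exc:
        return exc.errno
    finally:
        for fd in opened:
            os.close(fd)
        resource.setrlimit(resource.RLIMIT_NOFILE, (soft, hard))
    return 0


print(errno.errorcode[demo_emfile()])
```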

FYI: I just updated from jupyterlab 1.0.2 to 1.0.4 and the errors seem to have stopped... for now. Jupyterlab has been running for about 4 hours now with no issue. Will report back if they come back.

@david-waterworth - I noticed you're on 1.0.1; try upgrading to 1.0.4 and see whether you still have the issue.

@firasm do you have any extensions installed?

@jasongrout My attempt to replicate on a clean install failed. :/ Seems like that suggests it was (a) fixed by 1.0.4, or (b) it's extension related, right? I'll upgrade to 1.0.4 now in my regular environment and see what happens...

My guess is it is extension related if it's coming from JLab, but either could be true. Let us know what you find!

Will do, thanks!

I've disabled all extensions but @jupyter-widgets/jupyterlab-manager and updated to 1.0.4. I also updated tornado from 5.1.1 to 6.0.3.

I notice, though, that the Help > About JupyterLab screen still says 1.0.2, while pip list says 1.0.4.

I have not had this issue since the upgrade to 1.0.4 either.

Same. Updated to 1.0.4 yesterday, did lots of work yesterday, left open overnight, and am doing lots of work this morning. No problems. I'd suggest leaving this open a few more days to be sure, but this may have been accidentally fixed!

EDIT: sorry, "accidentally fixed" suggests no one put work into fixing this, and obviously all bug fixes take work. I just mean someone appears to have fixed this particular problem while patching something out, as opposed to fixing it by trying to directly address this error.

Just curious - when people saw this problem, were they working with the Jupyter python kernel, or some other kernel?

A range of kernels has been reported. I usually see it when working with a Python kernel, but have also seen it with R kernels. There are also reports above of it happening with the Julia kernel.

In 1.0.3, we relaxed validation of kernel messages, since strict validation was causing errors with kernels that weren't quite compliant with the kernel spec (which apparently included R and Julia kernels). In looking at the 1.0.3 PRs, that was the one that jumped out to me as possibly fixing something like what was noted on this thread. If we were seeing these issues with the python kernel, though, that wasn't the fix, since the python kernel was compliant.

Are there any reports of these issues with jlab 1.0.4, or should we close the issue as resolved?

Seems like everyone is doing well so far -- seems reasonable to close to me.

No issues yet on 1.0.4! Thanks, all.

My errors were on the R kernel.

Closing as fixed in 1.0.4, then (and probably 1.0.3, since 1.0.4 was a one-PR fix for 1.0.3). Perhaps related to #6860

We can reopen this if someone is still noticing an issue.

Just encountered this in 1.0.4. My ulimit was 256, and I was running 6 notebooks, but only 1 or 2 of them had cells executed, and they weren't using significant resources.
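A ulimit of 256 is a common default soft limit on open files per process (for example, in macOS shells), and one server process holding sockets for several kernels can reach it. A minimal sketch, using Python's standard `resource` module (Unix only), of how one might inspect that limit and raise the soft limit toward the hard limit before launching a server from Python. The name `raise_nofile_soft_limit` and the 4096 target are illustrative assumptions, not JupyterLab settings.

```python
import resource
from typing import Tuple


def raise_nofile_soft_limit(target: int = 4096) -> Tuple[int, int]:
    """Raise this process's soft RLIMIT_NOFILE toward `target`.

    The soft limit can never exceed the hard limit, so the new value is
    capped at the hard limit unless the hard limit is unlimited.
    Returns the (soft, hard) pair in effect afterwards.
    """
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    if hard == resource.RLIM_INFINITY:
        new_soft = target
    else:
        new_soft = min(target, hard)
    # Only ever raise the limit; never lower an already-higher soft limit.
    if soft != resource.RLIM_INFINITY and new_soft > soft:
        resource.setrlimit(resource.RLIMIT_NOFILE, (new_soft, hard))
    return resource.getrlimit(resource.RLIMIT_NOFILE)


print("soft/hard open-file limit:", raise_nofile_soft_limit())
```

The limit applies to the process that calls `setrlimit` and to children it starts afterwards, so it would have to run before the server starts; in a shell, `ulimit -n 4096` before `jupyter lab` has the same effect.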

Same issue on our side: no more than 3-4 notebooks running, only a few of them actually executing jobs, and the error keeps coming back after some time :( This is on Ubuntu 18.04 with JupyterLab 1.0.4; we tried both JupyterHub 1.0.0 and 0.9.6.

The system-wide limit was set to fs.file-max = 500000.
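On Linux, `fs.file-max` caps the total number of file handles across the whole system; it does not raise the per-process limit that `ulimit -n` reports (often 1024 by default), and a single `jupyter-lab` process hits that per-process limit first. A minimal Linux-oriented sketch that reads both values so they can be compared; the helper name `file_limits` is hypothetical, and where `/proc/sys/fs/file-max` cannot be read the system-wide value is reported as `None`.

```python
import resource
from pathlib import Path
from typing import Optional, Tuple


def file_limits() -> Tuple[Optional[int], int, int]:
    """Return (system-wide fs.file-max, per-process soft limit, hard limit)."""
    system_max: Optional[int] = None
    try:
        # Linux procfs; absent on macOS and other platforms.
        system_max = int(Path("/proc/sys/fs/file-max").read_text().split()[0])
    except (OSError, ValueError, IndexError):
        system_max = None
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    return system_max, soft, hard


system_max, soft, hard = file_limits()
print(f"fs.file-max (system-wide): {system_max}")
print(f"ulimit -n (this process, soft/hard): {soft}/{hard}")
```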

Issue still happens

Reopening since there are still people apparently seeing this.

If you are still seeing this in 1.0.4, can you please use lsof to try to narrow down where the issue is coming from? For example, see jupyterlab/jupyterlab#6727 (comment). Also, can you try a fresh, clean environment with no extensions and only minimal dependencies (e.g., conda create -n jlab3748 -c conda-forge jupyterlab=1.0.4)?
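As a rough cross-check on `lsof`, on Linux each open descriptor of a process appears as an entry in `/proc/<pid>/fd`. A minimal sketch, assuming Linux procfs, that counts descriptors for processes whose command line contains `jupyter`; both function names are hypothetical, and processes owned by other users are skipped unless the script runs as root.

```python
import os
from typing import Dict


def count_open_fds(pid: int) -> int:
    """Number of open file descriptors of `pid` (Linux /proc only)."""
    return len(os.listdir(f"/proc/{pid}/fd"))


def jupyter_fd_counts(pattern: str = "jupyter") -> Dict[int, int]:
    """Map pid -> open-fd count for processes whose cmdline contains `pattern`."""
    counts: Dict[int, int] = {}
    for entry in os.listdir("/proc"):
        if not entry.isdigit():
            continue
        pid = int(entry)
        try:
            with open(f"/proc/{pid}/cmdline", "rb") as f:
                cmdline = f.read().replace(b"\0", b" ").decode(errors="replace")
            if pattern in cmdline:
                counts[pid] = count_open_fds(pid)
        except OSError:
            # Process exited meanwhile, or belongs to another user.
            continue
    return counts


# Print processes with the most open descriptors first.
for pid, n in sorted(jupyter_fd_counts().items(), key=lambda kv: -kv[1]):
    print(pid, n)
```

Running it periodically and watching whether the count for the `jupyter-lab` process only ever grows is one way to tell a leak from a limit that is simply too low.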

Hello, here is the lsof output:

root@*:/home/*# lsof 2>/dev/null | grep jupyter | cut -f 1 -d ' ' | sort | uniq -c

1652 jupyterhu

102769 jupyter-l
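A row count from `lsof` may overstate the number of real descriptors: without `-p` it also prints entries that are not descriptors (memory-mapped libraries, the working directory) and, with some Linux builds of lsof, one row per thread. To see which kind of descriptor is accumulating (for example, sockets from kernel channels versus regular files), a minimal Linux-only sketch can group the link targets in `/proc/<pid>/fd` by type; `fd_breakdown` is a hypothetical helper name.

```python
import os
from collections import Counter


def fd_breakdown(pid: int) -> Counter:
    """Group the open descriptors of `pid` by kind (Linux /proc only).

    Link targets look like 'socket:[12345]', 'pipe:[678]',
    'anon_inode:[eventfd]' or an absolute filesystem path.
    """
    kinds: Counter = Counter()
    fd_dir = f"/proc/{pid}/fd"
    for fd in os.listdir(fd_dir):
        try:
            target = os.readlink(os.path.join(fd_dir, fd))
        except OSError:
            continue  # descriptor closed between listdir and readlink
        if target.startswith("/"):
            kinds["file"] += 1
        else:
            # e.g. 'socket', 'pipe', 'anon_inode'
            kinds[target.split(":", 1)[0]] += 1
    return kinds


print(fd_breakdown(os.getpid()))
```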

root@*:/home/**# jupyter serverextension list

config dir: /root/.jupyter

sparkmagic enabled

- Validating...

sparkmagic 0.12.9 OK

@jupyter-widgets/jupyterlab-manager enabled

- Validating...

Error loading server extension @jupyter-widgets/jupyterlab-manager

X is @jupyter-widgets/jupyterlab-manager importable?

jupyterlab enabled

- Validating...

jupyterlab 1.0.4 OK

config dir: /usr/etc/jupyter

jupyterlab enabled

- Validating...

jupyterlab 1.0.4 OK

config dir: /usr/local/etc/jupyter

jupyterlab enabled

- Validating...

jupyterlab 1.0.4 OK

I also disabled the extension with issues:

@jupyter-widgets/jupyterlab-manager disabled

- Validating...

Error loading server extension @jupyter-widgets/jupyterlab-manager

X is @jupyter-widgets/jupyterlab-manager importable?

Hello everyone,

After further investigation on my end, I seem to have found a working combination of components for JupyterLab.

After trying multiple versions of JupyterHub, JupyterLab, and the Tornado server, the following combination seems to have solved the "too many open files" issue on my Ubuntu 18.04 instance:

Tornado 5.1.1

JLab 1.0.4

JHub 0.9.6
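To confirm that an environment actually matches the combination listed above, a minimal sketch using the standard `importlib.metadata` module compares installed distribution versions against those pins. `importlib.metadata` needs Python 3.8+; the Python 3.7 environments in this thread would need the `importlib_metadata` backport instead. `PINS` restates the versions from this comment, and `check_pins` is a hypothetical helper, not part of any Jupyter tool.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Callable, Dict, Optional

# Versions reported in this comment as a working combination on Ubuntu 18.04.
PINS: Dict[str, str] = {
    "tornado": "5.1.1",
    "jupyterlab": "1.0.4",
    "jupyterhub": "0.9.6",
}


def check_pins(
    pins: Dict[str, str],
    get_version: Callable[[str], str] = version,
) -> Dict[str, Optional[str]]:
    """Return {package: installed_version} for every package that differs
    from its pin; None means the package is not installed."""
    mismatches: Dict[str, Optional[str]] = {}
    for name, wanted in pins.items():
        try:
            installed: Optional[str] = get_version(name)
        except PackageNotFoundError:
            installed = None
        if installed != wanted:
            mismatches[name] = installed
    return mismatches


print(check_pins(PINS) or "environment matches the pins")
```

The `get_version` parameter exists only so the comparison can be exercised without installing anything; by default it queries the current environment.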