JupyterLab: Extension Development Wishlist

I am opening this issue to compile thoughts and plans for a refactor of the build system we are planning to work on during our team sprint in December (https://github.com/jupyterlab/team-compass/issues/19).

I want to solicit input from any users who have pain points with the extension build system. Please comment with suggestions and I will add them to this comment.

Then, we can collate these issues into a number of new requirements and determine which combination of technical changes will be necessary to address them.

Current State

Please edit this or ping me to fix it if this is incorrect

Currently, when you install a new extension, it is added to the package.json in the staging directory of your JupyterLab application directory. It is also added to the list of installed extensions. These are used to generate an index.js file that imports each installed extension and registers it at runtime. This file is then built with webpack using our config.
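As a rough illustration of the generation step described above, here is a minimal sketch. It is not JupyterLab's actual build code: the function name `generateIndexSource`, the `register` callback, and the example package names are all hypothetical.

```javascript
// Hypothetical sketch: turn a list of installed extension package names
// into the source text of an index.js that imports and registers each one.
function generateIndexSource(extensionNames) {
  const lines = ['// Generated file: do not edit by hand.'];
  extensionNames.forEach((name, i) => {
    // JSON.stringify quotes the package name as a valid JS string literal.
    lines.push(`import * as ext${i} from ${JSON.stringify(name)};`);
  });
  lines.push('');
  // The generated module exports one function that registers every extension.
  lines.push('export function registerAll(register) {');
  extensionNames.forEach((_, i) => {
    lines.push(`  register(ext${i});`);
  });
  lines.push('}');
  return lines.join('\n');
}

// Example with made-up extension names.
const source = generateIndexSource(['my-first-extension', '@scope/second-extension']);
console.log(source);
```

The generated text would then be handed to the bundler, which is why every install currently implies a rebuild.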

New features

- Allow installing extensions without webpack by pre-bundling them: https://github.com/jupyterlab/jupyterlab/issues/5672#issuecomment-526278264

- Related: allow doing extension development without a network https://github.com/jupyterlab/jupyterlab/issues/5236

- Allow backend extensions to automatically ship or install frontend extensions: https://github.com/jupyterlab/jupyterlab/issues/6228

- Add sibling is broken in some cases: https://github.com/jupyterlab/jupyterlab/issues/6911 Overall, it should be possible to try out your extension with a local version of jupyterlab without including your code in the repo.

- Allow extensions to customize webpack build: https://github.com/jupyterlab/jupyterlab/issues/4328

- Allow third party packages to be verified as singletons: https://github.com/jupyterlab/jupyterlab/issues/3193

- Support third party extensions to depend on other third party extensions: https://github.com/jupyterlab/jupyterlab/issues/7467 https://github.com/jupyterlab/jupyterlab/issues/4245 https://github.com/jupyterlab/jupyterlab/issues/7064

- Consolidate `link` and `install` commands if possible, since they cause confusion: https://discourse.jupyter.org/t/about-jupyter-labextension-link-v-s-install/2201

- Allow developing extensions in JupyterLab itself https://github.com/jupyterlab/jupyterlab/issues/7469

Suggestions

- Webpack alternatives

- Yarn 2.0? https://github.com/jupyterlab/jupyterlab/issues/4705

- Use runtime loading, like custom require JS? https://github.com/jupyterlab/jupyterlab/issues/5672#issuecomment-526443943

- See how we can use what observablehq is doing here, using d3-require and jsDelivr https://github.com/jupyterlab/jupyterlab/issues/5672#issuecomment-526565078

Bugs

- It currently requires a really ugly workaround to install local extensions that aren't uploaded to NPM: https://github.com/jupyterlab/jupyterlab/issues/5852

All 91 comments

extension are not feeling lego-style :

- webpack apparently doesn't work on windows 32 bit,

- having to rebuild the jupyterlab on your PC each time you add a new plugin is a bet,

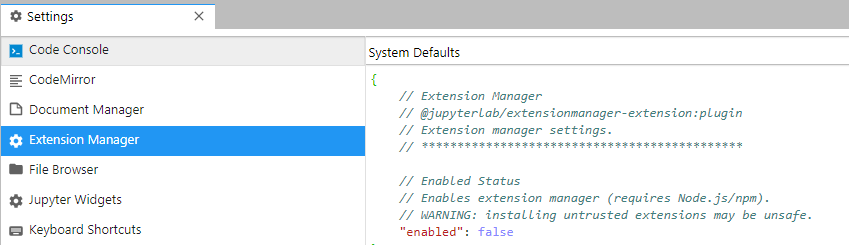

- there is (is there ?) no simple tool (graphical in Windows term) to:

. understand why things go wrong when Jupyterlab build goes south for the mere standard user,

. see which extension plugin is active, create problems, check it/activate/desactivate it, - do I read the big staging directory must not be cleared ?

I had a hard time getting jest to work for unit testing my extensions and it's still not flawless (using react).

I think it would be nice to have a unit testing configured in the cookie cutter extension project. Or maybe have a react version of it?

Notes from our conversation today:

The plan is to investigate the following in a separate repository:

- Extensions are pip/conda packages that provide entry points

- Extensions can list `externals` that are provided by the application. They can list `internals` to override core-provided externals.

- JupyterLab core will provide a set of extensions that are globally available (such as `@lumino/*`)

- Each extension provides itself as a globally available module.

- We use a Webpack loader to produce a module format that uses `define` and `require`.

  - For extensions, they provide a global `define` for themselves and then their dependencies are scoped (e.g. `define('my_extension/foo_dependency')`).

  - For core, we provide several top level `define` modules (such as `@lumino/*`).

  - We use a scoped `require()` unless an extension is marked as `external`

- There are two types of entry points: `jupyterlab_extension` and `jupyterlab_provider` (that for example provides a utility or a `token` and can be listed as an `external`).

- We continue to use `/share/jupyter/lab/settings` (`override.json`, `page_config.json`, `build_config.json`)

- We will give extensions a `public_url` that allows us to route to their `site_packages` location to serve their static assets

- We will template our `index.html` to load the currently installed JupyterLab extensions

Questions:

- Can we scrape entry_points data from PyPI for discoverability?

For completeness, would it be possible to also list the motivation for each of the points? It is not immediately clear to me what the purpose of some of the points is, or what problems they will solve.

> We use a Webpack loader to produce a module format that uses `define` and `require`.
>
> - For extensions, they provide a global `define` for themselves and then their dependencies are scoped (e.g. `define('my_extension/foo_dependency')`).

Does this mean we are going back to a requirejs dependency, and not trying to use something like systemjs or jspm or whatever might be out there now?

In our current meeting, @blink1073 mentioned that he has been looking into this and settling on:

- AMD as our format

- Use system.import

- systemjs as our loader

@blink1073 I unfortunately missed our meeting yesterday and Max said you had figured some things about how we wanna proceed. Do you think you could summarize that here?

@bollwyvl mentioned snowpack elsewhere which seems pretty interesting.

I don't have the experience to judge if it would be a good fit but I'd be curious why not (expert advice about what I should beware of before giving it a go! 😜 )

Background

JupyterLab uses a dependency injection (DI) system, as well as some shared state between modules. Because of this, we have a need to share modules at run time. The current best practice for web applications is to use a tool like Rollup or Webpack to create a set of bundle files that are loaded in the browser. Those bundle files typically contain local state that is not shared between bundles.

In the case of JupyterLab, we want to enable the user to extend the application by installing new extensions that load alongside the core extensions. Up until now, this was accomplished by adding the extensions to a monolithic single page web application build using Webpack. This had the advantage of using standard tooling and workflows. It has the disadvantage of requiring a node runtime and access to an npm repository for dependencies.

Here we seek to find a solution that enables JupyterLab to be extensible without requiring a node runtime or access to an npm repository. We still have the requirement of sharing modules for DI, as well as things like lumino messages that share state.

We propose a solution where the extension author uses a JupyterLab-provided tool to build an extension asset that is able to be dynamically loaded by the JupyterLab application and share state. The extension should be installable as a conda package, so that users can use a single manifest and dependency source to provision their environments.

The next step is to find a bundler and loader solution that accomplishes the above design goals. The following bundlers were considered: Webpack, Rollup, Parcel, and snowpack. The following loaders were considered: Webpack, Parcel, snowpack, RequireJS, SystemJS, and StealJS.

Bundlers

Webpack was used in a previous attempt to make a custom bundler for JupyterLab. The previous version relied on the parsed output of the module in order to create an AMD module for each of the output modules, as opposed to the standard Webpack module. However, neither Webpack 4.0 nor the upcoming Webpack 5.0 expose the transpiled output to plugins.

Rollup is ruled out because it inlines modules.

Snowpack is ruled out because it leaves existing modules in place and loads them as singular files.

Parcel provides the generated JavaScript for each module, allowing us to generate AMD modules for each input module. It is in the process of migrating to a new plugin format, but the underlying bundle and module structure is staying the same.

Loaders

Webpack and Parcel are ruled out because their loaders operate on self-contained bundles, unless explicitly loading an external bundle.

Snowpack is ruled out because it expects one file per module, which would take too long for our application.

SystemJS is ruled out because it does not support the require function variant of the AMD module.

Both RequireJS and StealJS support loading the require function variant of the AMD module. RequireJS has not seen much activity on GitHub, but it is stable and widely used. StealJS is actively maintained, but not as widely used.

Bundling and Loading Proposal

We target Parcel as the bundler and RequireJS as the module loader. We would write a transformer that rewrites Parcel imports to use an AMD module name, and then wrap the output in an AMD definition that uses a require function. We would use the sourcemap information given by Parcel and update it given our changes to produce the final source map. We do not expose window.require, so that we keep our loader as an implementation detail.
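The wrapping step in this proposal could look roughly like the following sketch. It does not use Parcel or RequireJS: `wrapAsAmd` and `createPrivateLoader` are hypothetical helpers, and the tiny loader only stands in for a private RequireJS instance to show the "require function" form of an AMD definition being loaded without exposing anything on `window`.

```javascript
// Wrap CommonJS-style module source in a named AMD definition that uses the
// require-function form: define(name, function (require, exports, module) {...}).
function wrapAsAmd(moduleName, source) {
  return [
    `define(${JSON.stringify(moduleName)}, function (require, exports, module) {`,
    source,
    '});'
  ].join('\n');
}

// A tiny private loader (an assumption standing in for RequireJS).
function createPrivateLoader() {
  const factories = new Map();
  const cache = new Map();
  const define = (name, factory) => factories.set(name, factory);
  const requireModule = (name) => {
    if (cache.has(name)) return cache.get(name).exports;
    const factory = factories.get(name);
    if (!factory) throw new Error(`Unknown module: ${name}`);
    const module = { exports: {} };
    cache.set(name, module);
    factory(requireModule, module.exports, module);
    return module.exports;
  };
  // Evaluate wrapped source with `define` passed in as a parameter,
  // so the loader never has to be attached to the global object.
  const evaluate = (wrapped) => new Function('define', wrapped)(define);
  return { evaluate, require: requireModule };
}

const loader = createPrivateLoader();
loader.evaluate(wrapAsAmd('greeter/message', "exports.text = 'hello';"));
loader.evaluate(
  wrapAsAmd('greeter', "module.exports = require('greeter/message').text.toUpperCase();")
);
const greeting = loader.require('greeter');
console.log(greeting);
```

A real transformer would also have to rewrite import specifiers to AMD module names and adjust the source map, which this sketch leaves out.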

Our extension builder would have a set of known external packages from JupyterLab and the extension. It would use unqualified names for those modules, and not include them in the output. The bundles could optionally be optimized using a JS optimizer. These bundles would then be included in either a pip or conda package, and would be loaded dynamically by the server from site-packages. Eventually, a build step would be used to create optimized bundles in the application directory.

Packaging and Dependency Management

Currently extensions are typically installed from an npm registry, or from a tarball included in a pip or conda package. Extensions cannot declare a direct dependency on another extension. There is no way for two packages to share another dependency for the purposes of shared state other than requiring the same version range and relying on yarn de-duplication. If we utilize python entry points, then we have a single source of truth for dependency resolution: either conda or pip. Extensions would be installed as standard packages alongside JupyterLab, and declare their dependency on other extensions or on provider packages, which are built the same way as extensions but are meant to provide shared state as opposed to an extension.

Remaining technical tasks for a minimum product

- Write an AMD module transformer as a Parcel plugin that takes a list of external and top level modules

- Write the logic that produces extension bundles for a given version of JupyterLab and its singletons

- Write the logic that produces the core JupyterLab bundles

- Write the server logic to load bundles from package entry points from site-packages

- Implement a watch mode for core that leverages Parcel’s watch

- Implement a watch mode for extension authors

Thanks @dhirschfeld for the hint about snowpack, it looks pretty cool but not well suited to our needs (see above).

Thanks for the detailed explanation @blink1073 - that's really helpful! Since I'll likely be doing a bit of frontend dev I'm keen to align with the jupyter tech-stack...

Thanks for the detailed break-down! I have one question about extensions depending on other extensions though. Take this case:

- Extension A depends on extension B

- I want to use extension C instead of extension B (i.e. extension C implements the token that extension B declares).

How would I as an end user achieve this?

@vidartf One option would be to split the tokens into separate packages:

- Split extension B into two NPM packages `B` and `B-token`. `B` depends on `B-token`

- Create one Python package for each of these B packages, where again `B` depends on `B-token`.

- Have `A` depend on `B-token` python package.

- Have `C` depend on `B-token` as well.

- In the install instructions of `A` say "either `pip install B` or `pip install C`".

- Alternatively, you could have `A` depend on the `B` python package and add some metadata to `C` that says "When installed, don't load the code in extension `B`"
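The token split above can be sketched with a toy dependency-injection registry. Everything here is hypothetical: the plugin shape (`id`, `provides`, `requires`, `activate`) is only loosely modelled on how plugins declare tokens, `activateAll` is made up for this example, and for brevity providers are assumed to have no requirements of their own.

```javascript
// "B-token" package: only the token, no implementation.
const IGreeter = Symbol('B-token:IGreeter');

// Package B: one implementation of the token.
const pluginB = {
  id: 'B', provides: IGreeter, requires: [],
  activate: () => ({ greet: () => 'hello from B' })
};
// Package C: an alternative implementation of the same token.
const pluginC = {
  id: 'C', provides: IGreeter, requires: [],
  activate: () => ({ greet: () => 'hello from C' })
};
// Package A: depends only on the token, not on B or C.
const pluginA = {
  id: 'A', provides: null, requires: [IGreeter],
  activate: (greeter) => greeter.greet()
};

function activateAll(plugins) {
  const services = new Map();
  const results = {};
  // Activate providers first; a token may have exactly one provider.
  for (const p of plugins.filter((q) => q.provides)) {
    if (services.has(p.provides)) {
      throw new Error(`Token already provided; cannot also load ${p.id}`);
    }
    services.set(p.provides, p.activate());
  }
  // Then activate consumers with their resolved services.
  for (const p of plugins.filter((q) => !q.provides)) {
    const deps = p.requires.map((token) => {
      if (!services.has(token)) throw new Error(`${p.id} is missing a provider`);
      return services.get(token);
    });
    results[p.id] = p.activate(...deps);
  }
  return results;
}

const withB = activateAll([pluginA, pluginB]).A;
const withC = activateAll([pluginA, pluginC]).A;
console.log(withB, '|', withC);
```

Installing both B and C in this sketch raises an error, which is the situation the "either `pip install B` or `pip install C`" instruction is meant to avoid.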

@saulshanabrook The assumption in the case was that someone had written A with a hard dependency on B. As such, only the last point "Alternatively, you could have ..." seems relevant here. Under this regime, I think we would need to allow this configuration system to specify "don't register this plugin from X", as an extension can contain many plugins, and you might just want to replace one of them. Some thought would need to go into what level would be most appropriate for such a config system (static declaration in the python/js package? dynamic python code? dynamic client-side JS? Would there be alternative sources of configuration data?).

@asmeurer mentioned that the conda "Features" feature might be a way to express to the solver "Hey I need some package that implements XXX but I don't care which one it is, as long as one of them does!".

It might be nice if we could punt this problem to the version solver, instead of creating a bespoke feature ourselves. I think it would be reasonable to tell users if they want this kind of automatic solving they could use Conda, otherwise they can use pip and manually uninstall the package they don't want.

Ah @teoliphant just pointed out that actually this is now called "Variants" instead of "features".

Please don't take Saul's @ of me as me endorsing any conda functionality or anything in that comment being accurate. I haven't worked closely on conda in several years and don't know what the current state of things is.

Some of the best docs on the current state of variants are by MinRK at:

https://conda-forge.org/docs/maintainer/knowledge_base.html#message-passing-interface-mpi

Just a small data point from a user perspective. Currently two points are really painful in the current extension system.

- The TypeScript extension version and Python (or other) package do not check their respective version compatibility by default. This leads to all kinds of mismatch scenarios, with errors ranging from subtle bugs to total crashes of the JupyterLab interface. Some extension authors are now including version checking in their packages, but that is far from the norm and should either be highly encouraged or, even better, be the default through some JupyterLab mechanism.

- The build process feels "fragile". For lack of a deeper technical understanding, what I observe is that an uninstalled extension is sometimes not completely uninstalled (jupyter-matplotlib is one example). Another case is that a couple of times I got no error messages after the build but the extension did not work; uninstalling and reinstalling helped, and then it worked.

I try to report concrete bugs as often as I can but these are more like meta-issues so I wanted to bring them up here.

Thanks @asteppke that's helpful! Yeah the plan that @blink1073 is working on would I think address both of these, because all packages would be python packages and so we could use conda/pip to verify that versions are pinned correctly. And each package would come pre-built so you would have less global shared state, unless you chose to bundle them all together for performance.

Interesting! Webpack 5 will have federated bundles baked in: adds config for shared (shared packages), exposes (exported packages) and remotes (remote bundle providers).

I'm going to do more research into this.

https://dev.to/marais/webpack-5-and-module-federation-4j1i

https://github.com/webpack/webpack/issues/10352

> Interesting! Webpack 5 will have federated bundles baked in

I read the issue and the article. Here's another article written by the issue's author (might need to go into private browsing mode to get past the paywall): https://medium.com/@ScriptedAlchemy/webpack-5-module-federation-a-game-changer-to-javascript-architecture-bcdd30e02669

It sounds like they came up with a solution to a completely different problem, but that there's enough overlap that it may solve our problem (or at least the "extensions should live in their own bundles" part of it) as well.

I am a little confused by their description of their implementation. For example, in:

```js
const ModuleFederationPlugin = require("webpack/lib/container/ModuleFederationPlugin");

module.exports = {
  // The plugin instance from the article, shown inside a config's plugins array.
  plugins: [
    new ModuleFederationPlugin({
      name: 'app_two',
      library: { type: 'global', name: 'app_a' },
      remotes: {
        app_one: 'app_one',
        app_three: 'app_three'
      },
      exposes: {
        AppContainer: './src/App'
      },
      shared: ['react', 'react-dom', 'relay-runtime']
    }),
  ],
};
```

how exactly do you specify the "paths" to the remotes, and where do the associated bundles/resources need to live?

Wow that looks amazing! I also haven't looked that closely... But it seems like what we have been looking for.

We are planning a meeting at 11:30 PT on Friday Apr 3 on the normal Jupyter Zoom channel to discuss how to move forward with the extension rework.

From the issue @blink1073 linked to:

> I want to share resources between separate bundles at runtime. Similar to DLL plugin but without needing to provide Static build context , and I want to do this at runtime in the browser. Not at build time. Thus enabling tech like micro-frontends to be efficient, easy to manage, and most importantly - erase page reloads between separate apps and Enable independent deploys of standalone applications.

This is exactly what we want! If we can really get webpack to make multiple separate builds, and have it join them in the browser, this will be perfect, since then we don't need to do another build on install time.

It might be helpful to have a call with @ScriptedAlchemy, if he is available, to get his take on what we are trying to do and how we could leverage the work they are pursuing.

I have played a bit with https://github.com/module-federation/module-federation-examples and posted a question on @ScriptedAlchemy twitter timeline https://twitter.com/ScriptedAlchemy/status/1245141523764674560

The shared, exposes and remotes attributes work pretty well, but I have not seen a feature that would avoid a complete rebuild when you add a dependency that is not present in your runtime.

Food for thought True dynamic loaded components in React (with rollup) https://www.youtube.com/watch?v=GjkQS5J6K6k

Some thoughts:

- Adopt AMD as the module format, something that isn't going to change

- Use Webpack to build AMD modules and RequireJS to load them

We provide:

- A tool that creates webpack configuration to create multiple AMD libraries that include the source package name and requested package version in the library name, e.g. [email protected]/[email protected]. This produces a set of files that is a mini-registry.

- A tool that uses `package.json` files and their associated registries to create AMD bundles that are de-duped. The script also handles RequireJS aliasing, e.g. ([email protected]/[email protected] -> [email protected]/[email protected])

- A private version of RequireJS (not on the window object), for the loading.

There is still a build step, but it doesn't require any external tools.

- Workflows like watch mode and hot swapping get much easier because they're just AMD modules.

Open questions:

- How to handle lazy imports? Do we mark certain modules as lazy in a manifest and then promote them to not lazy if they get used by an immediate import?

- How will CSS and other asset types get handled - will the CSS loaders etc. still work?

- How to handle source maps - this one shouldn't be too bad from @telamonian's impressions

- Could we potentially leave the files unwrapped and use a manifest to write the AMD header as we're building?

cc @oigewan

@echarles that's possible to do, we just don't really disclose it. You can also dynamically load remotes at runtime without adding them to the remotes array. I tweeted about it today.

Doing the same for overrides (shared) would involve executing `__webpack_overrides__()` - adding new overrides at runtime. Not something I thought about doing, but I believe it's been designed such that you could. If you look at the testCases/container folder, you'll see us manually attaching vendors to shared. Because the ModuleFederation plugin is just a small orchestrator on top of several larger plugins, accessing the overridables and calling it on its own with additional vendors is possible.

A remote has a getter, which is how expose {} is able to work, then there's a setter-like function that enables hosts to write overrides into the remote. There might be some limitations, because shared actually causes the bundle to change its structure. whatever is shared is replaced with SharedModule - which looks for an override, if one isn't found - it loads its own dependency.

If the functionality isn't accessible already, it could likely be introduced as a supplemental plugin
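The getter and setter-like behaviour described above can be pictured with a purely conceptual sketch. This is not webpack's container API: `createRemote`, `get`, and `override` are hypothetical names, and the point is only to show a shared module that prefers a host-supplied override and otherwise falls back to its own bundled copy.

```javascript
// Conceptual model of a remote: `bundled` holds the remote's own copies of
// shared dependencies, `exposes` maps exposed names to factories.
function createRemote(bundled, exposes) {
  const overrides = new Map();
  // Shared lookup: use the host's override if present, else the bundled copy.
  const shared = (name) => (overrides.has(name) ? overrides.get(name) : bundled[name]);
  return {
    // Getter: how a host obtains an exposed module.
    get(name) {
      if (!(name in exposes)) throw new Error(`Not exposed: ${name}`);
      return exposes[name](shared);
    },
    // Setter-like function: lets the host write overrides into the remote.
    override(modules) {
      for (const [name, mod] of Object.entries(modules)) overrides.set(name, mod);
    }
  };
}

const remote = createRemote(
  { dep: { version: '1.4.0' } },
  { Widget: (shared) => `Widget using dep ${shared('dep').version}` }
);

const before = remote.get('Widget');          // falls back to the bundled copy
remote.override({ dep: { version: '1.1.0' } });
const after = remote.get('Widget');           // now uses the host's override
console.log(before);
console.log(after);
```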

Reading this thread, I'm realizing there's more questions. Could do a zoom call or hangout if you've got questions.

In short, Module Federation can do everything webpack can. JS, CSS, Loaders, side effects, SSR, AMD/UMD, commonjs,systemjs and so on. No real limitations from that perspective - if webpack can do it, it'll work.

Vendor versioning and management, a simple solution would be:

One could do the same to expose object as well. And you could walk a directory - generating exposed files dynamically.

Paths to remotes, that's an implementation detail - webpack only manages the capability. So how I do it at the moment - the plugin emits a special runtime, I call it remoteEntry.js. I use cache headers to bust browser cache, but you could also have a script injected that looks like http://localhost:9000/remoteEntry.js?Date.now() - it gets the job done. That's the only reference point a "host" needs to interface with a remote, all other JS files are hashed as usual. remoteEntry contains no code other than a specialized webpack runtime. So it's pretty small to load.

Not entirely sure of your use case, but the underlying ContainerPlugin, OverridablePlugin, ContainerReferencePlugin are pretty powerful. Hooks could be added (and likely will) to enable more flexibility.

Importing code - the mechanics underneath enable us to do things like import RemoteThing from "website1/something" - which will be a synchronous import. Pretty useful for code that won't work under sync conditions (like react suspense SSR)

How we actually pull that off is by hoisting that requirement to the nearest dynamic import higher up in the module graph. I usually leave a dynamic import in the entry point to ensure webpack always has a promise it can hoist to.

Rollup has some interesting dynamic concepts as well, I've not used rollup and am a die-hard webpack contributor. In some form or another, I wanted multiple bundles to work as one at runtime, in Webpack.

All I was focused on was that webpack doesn't work well with micro-frontend or distributed JavaScript systems. Something I've wanted for a long time in the compiler I always use.

I think a nice next step here would be for someone to make a new repo which experiments with the Module Federation to demonstrate how it could work for a use case like ours...

Which means:

- A set of packages that are "core". These together form into one bundle.

- Another extensions package that imports the "core" packages and interacts with them. It also includes third party packages. This is the extension.

- Possibly a shared dependency between multiple extensions packages, this would be another bundle.

It would be nice to see each of these built separately, then served together and linked together at runtime in the browser.

EDIT: Also then trying to combine all those bundles locally, to dedupe where possible and pre-bundle a larger one would also be helpful! If that's possible, then we could have everyone ship module federation bundles and either use them in the browser or build a larger bundle from them server side.

For further documentation, it seems the thread to read about the federated module support in webpack is https://github.com/webpack/webpack/issues/10352 (make sure to expand the comments hidden by default)

As noted in https://github.com/webpack/webpack/issues/10352#issuecomment-591087935, the three main PRs were merged into webpack on 26 Feb 2020.

@jasongrout A new example has just landed in https://github.com/module-federation/module-federation-examples/pull/3 for more dynamic loading and is closer to what we are looking for. See also https://www.youtube.com/watch?v=27QbUycVrHg&feature=youtu.be (especially the 2nd part of the video for details on the new example)

Thanks. That is closer to what we need. I suppose the shared line in https://github.com/module-federation/module-federation-examples/blob/f3fa9c911bee9c01d156ee453b6a6de54e4ce6d5/dynamic-system-host/app1/webpack.config.js#L30 for us would contain basically all the module's dependencies? Transitive dependencies?

Thinking out loud: the host (JupyterLab) would define a set of deps shared by most of the extensions, e.g. @jupyterlab/apputils ... so maybe yes, all the core JupyterLab extensions. I am not sure for now about the impact of those definitions on the remote bundle sizes, still experimenting with that, but the expectation would be that only transitive deps that are not already loaded would be pulled.

Update on module federation, few requests to be able to dynamically add remotes and shared. This would enable you to perform contextless federation at runtime. It's not pretty, but works. Also handy for webpack 4 to consume webpack 5 federated code

Thanks @ScriptedAlchemy!

Do you have any sense for when webpack 5 is slated to be released? We're also trying to match with our shipping timelines.

It's OSS, so you know how it is. Mid-year is the best timeline I can hammer out. I'm working with Zeit as they've started to prep dual support canary release. Module federation has 2 tickets before it will appear in the next NPM beta of webpack.

The main holdup is docs, module federation, maybe some work left around multi-target entry points.

Might get a proposal to enhance HMR API to support not reloading the parent module to reload the child.. not sure if that would impact timelines tho (don't think so)

I screen-casted a more robust example of federation. Interleaving 5 separately deployed MFEs into a SPA. I'm not great at text lol

https://youtu.be/-LNcpralkjM

> Mid-year is the best timeline I can hammer out.

That's incredibly helpful. We're planning to feature freeze around June and ship around August, so these timelines might just work out.

It seems like our use case might require a bit more control over how the dependencies are overridden. As far as I can tell, in your examples each microfrontend tries to load a shared dependency from the host application first, but then falls back to its own copy if the shared dependency is not available from the host (this is the self-healing example, for instance).

In our case, we have a number of extension plugins that are each compiled separately, each bundling a version of their dependencies. In my mind, in an ideal world, the host application would essentially do the same as yarn-deduplicate --strategy fewer at runtime to override dependency imports from each extension bundle. In other words, each extension would label most of its dependencies as shared, and would also publish the allowable semver range for the dependency. The host application would:

- collect the information from each extension bundle about what dependency/versions are available in each bundle.

- collect the information about what dependency/semver range are required by each extension

- run the `yarn-deduplicate --strategy fewer` algorithm to maximize dependency sharing, essentially deciding which version of each dependency to use to maximize the number of clients using the dependency and minimize the number of loaded dependencies. This takes into account the dependency semver range each extension requires.

- override the dependency loading for all extensions to load these deduplicated dependencies.

As a concrete example, suppose we have a host application and extension bundles A, B, and C that each require some version of dependency D, which is not supplied or bundled by the host application (so the host knows nothing at compile time about dependency D). In our example, we have the following:

- extension A requires D version `^2` and bundles D version 2.0

- extension B requires D version `1.0 || 1.1` and bundles D version 1.1

- extension C requires D version `^1.1` and bundles D version 1.4.

In our scenario, the host application will deduplicate and dynamically determine the overrides so that extension A gets its own bundled dependency, and B and C both get the dependency version 1.1 that B bundles, since it satisfies both semver requirements and can be deduplicated.

Is this type of dynamic resolution feasible in the system you created? It's a little more complicated than the self-healing example where each extension just falls back to its own bundled version, and requires the host to both know about what each bundle is providing and what each bundle is requiring before deciding the overrides.
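The A/B/C scenario above can be worked through with a short sketch. Two loud assumptions: the range matcher below is a deliberately minimal stand-in that only understands the forms used in the example (`^M`, `^M.m`, `M.m`, `M.m.p`, joined by `||`, with major version 1 or higher), not a full semver implementation; and `dedupe` is a hypothetical greedy approximation of a "fewer" strategy, not yarn-deduplicate's actual code.

```javascript
// Parse "1.4" or "2.0.1" into [major, minor, patch]; missing parts become 0.
function parseVersion(v) {
  const parts = v.split('.').map(Number);
  while (parts.length < 3) parts.push(0);
  return parts;
}

function compare(a, b) {
  for (let i = 0; i < 3; i++) if (a[i] !== b[i]) return a[i] - b[i];
  return 0;
}

// Minimal range check (see the assumptions in the lead-in).
function satisfies(version, range) {
  const v = parseVersion(version);
  return range.split('||').some((raw) => {
    const part = raw.trim();
    const caret = part.startsWith('^');
    const nums = (caret ? part.slice(1) : part).split('.').map(Number);
    const lower = [nums[0], nums[1] || 0, nums[2] || 0];
    let upper;
    if (caret || nums.length === 1) upper = [nums[0] + 1, 0, 0]; // same major
    else if (nums.length === 2) upper = [nums[0], nums[1] + 1, 0]; // same minor
    else return compare(v, lower) === 0; // full version: exact match
    return compare(v, lower) >= 0 && compare(v, upper) < 0;
  });
}

// Greedy: repeatedly pick the bundled version that satisfies the most
// still-unassigned extensions (ties go to the higher version).
function dedupe(extensions) {
  const assignment = {};
  let pending = extensions.slice();
  while (pending.length > 0) {
    let best = null;
    for (const provider of extensions) {
      const users = pending.filter((e) => satisfies(provider.bundles, e.requires));
      const better =
        best === null ||
        users.length > best.users.length ||
        (users.length === best.users.length &&
          compare(parseVersion(provider.bundles), parseVersion(best.provider.bundles)) > 0);
      if (better) best = { provider, users };
    }
    if (best.users.length === 0) throw new Error('Some requirement cannot be satisfied');
    for (const e of best.users) {
      assignment[e.name] = { version: best.provider.bundles, from: best.provider.name };
    }
    pending = pending.filter((e) => !best.users.includes(e));
  }
  return assignment;
}

const result = dedupe([
  { name: 'A', requires: '^2', bundles: '2.0' },
  { name: 'B', requires: '1.0 || 1.1', bundles: '1.1' },
  { name: 'C', requires: '^1.1', bundles: '1.4' }
]);
console.log(result);
```

With these inputs the sketch assigns A its own bundled 2.0, and gives both B and C the 1.1 copy bundled by B, matching the outcome described in the scenario.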

Hmm, on second thought, we could gather the extension manifest information and run the deduplication when we template the original html page, and generate on the html page a list of overrides to be used at runtime (e.g., extension A provides dependency D which is used by extensions B and C).

Yes, I think we'll need a build step. That will also prevent serving JS modules that never get loaded.

@jasongrout @blink1073 I feel like there is a way to go about this that avoids a build step... and avoids this dynamic version resolution thing. It's a "hack" but maybe it would be enough to work and cut down a ton on our complexity of building?

- Extensions bundle anything inside of them that they don't mind if there are multiple versions of.

- Extensions don't bundle anything inside them they want shared. For JupyterLab libraries, obviously there will be a version loaded they can depend on. For other libraries that are not part of the core JupyterLab bundle (including our dependencies), they must also be distributed as a Python package so that conda/pip takes care of making sure only one exists.

I worry about building an install system that is technically "correct" in terms of matching semver for everything and creating the perfect optimal bundle, but adds a ton of complexity and maintenance burden for us. I would much prefer something dumb and understandable and relying on existing technologies that we don't build ourselves.

EDIT: This still allows us to deal with versioning requirements but pushes all of them to the Python level instead of the JS level. So we don't have to build our own resolution software, but can rely on the downstream one.

@ScriptedAlchemy I am trying out the dynamic-system-hosts example! It makes sense, basically it just sets a global var to be the module, and then you grab that var. Nice and simple. It does seem like it has the side effect of polluting the globals? Curious if we are able to use different types for the library arg, like requirejs?

Ideally, we would want to dynamically provide a module so when we use native ES6 imports, that module is available, but unfortunately there is no spec yet on how to dynamically provide modules to the frontend...

2. For other libraries that are not part of the core JupyterLab bundle (including our dependencies), they must also be distributed as a Python package so that conda/pip takes care of making sure only one exists.

The problem here is that we basically need to repackage a (huge?) number of js libraries. I suppose it's really a question of scope. If it's 10 shared python packages, fine. If it's 1000...

I suppose it's really a question of scope. If it's 10 shared python packages, fine. If it's 1000.

Totally agree here! Do you have a sense, off the top of your head, that this would be more like 1000? Maybe we should collect some data here:

Out of the top extensions published, how many rely on NPM packages that need to be globals and aren't included in our core bundle?

2. For other libraries that are not part of the core JupyterLab bundle (including our dependencies), they must also be distributed as a Python package so that conda/pip takes care of making sure only one exists.

Thinking through this, I think the python package is an implementation detail and probably the wrong level to solve the deduping problem. It would be trivial, for example, to have two python packages that make the same js library available to the frontend, and then you're right back to square one since you've deduped at a different level than where things are being actually used.

In the end, a shared js package needs to be available in the browser for multiple packages to import. Note that since these packages importing this shared library may not be extensions, we can't use the jlab dependency injection registry to provide these libraries and do the deduplication, we need some sort of registry that works at a deeper level and interfaces with how we are distributing and loading the js packages, such as an AMD registry like requirejs, or import maps and es modules, or systemjs, or this federated module idea.

It would be trivial, for example, to have two python packages that make the same js library available to the frontend, and then you're right back to square one since you've deduped at a different level than where things are being actually used.

Python libraries would only make available to frontend themselves, even if they bundle other libraries. So it's unclear to me how you would get into the state where the same JS library is available from multiple python libraries. Could you give an example?

In the end, a shared js package needs to be available in the browser for multiple packages to import. Note that since these packages importing this shared library may not be extensions, we can't use the jlab dependency injection registry to provide these libraries and do the deduplication, we need some sort of registry that works at a deeper level and interfaces with how we are distributing and loading the js packages, such as an AMD registry like requirejs, or import maps and es modules, or systemjs, or this federated module idea.

But this shared JS package can just be shipped as a Python package. Yeah this is what the federated modules does. We just have to choose for each extension what to inline and what not to.

Python libraries would only make available to frontend themselves, even if they bundle other libraries. So it's unclear to me how you would get into the state where the same JS library is available from multiple python libraries. Could you give an example?

Author A writes several packages that depend on d3, so they bundle a shared d3 as jlabd3. Author B also has a few libraries that rely on d3, so they create a different python jupyterd3. jlab loads both copies of d3 into the system.

Of course, they could coordinate. But perhaps Author A requires d3 v2 and Author B requires d3 v3. Again, how are things resolved at runtime?

Of course, they could coordinate. But perhaps Author A requires d3 v2 and Author B requires d3 v3. Again, how are things resolved at runtime?

Yeah, they would have to coordinate.

JupyterLab could also give an error if there are two python libraries installed that provide the same node module name, i.e. in the case of jupyterd3 and jlabd3.

If they require different versions, then they depend on different versions of the python package. Only one is installed. With conda, this would be an error. Currently with pip it would print a warning.

The point is, if you need something to be global, you can't have two versions. If you don't need it to be global, just bundle it in with yourself.
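The conflict check suggested above (erroring when two installed Python packages provide the same node module name) could be sketched as follows. The record shape `{ pythonPackage, nodeModule }` and the function name `findConflicts` are assumptions made for illustration; nothing here is an existing JupyterLab API.

```javascript
// Hypothetical input: one record per (Python package, provided node module) pair.
function findConflicts(provided) {
  const byModule = new Map();
  for (const { pythonPackage, nodeModule } of provided) {
    if (!byModule.has(nodeModule)) {
      byModule.set(nodeModule, []);
    }
    byModule.get(nodeModule).push(pythonPackage);
  }
  // A node module with more than one provider is a conflict.
  const conflicts = [];
  for (const [nodeModule, providers] of byModule) {
    if (providers.length > 1) {
      conflicts.push({ nodeModule, providers });
    }
  }
  return conflicts;
}

const conflicts = findConflicts([
  { pythonPackage: "jlabd3", nodeModule: "d3" },
  { pythonPackage: "jupyterd3", nodeModule: "d3" },
  { pythonPackage: "jupyterlab", nodeModule: "@lumino/widgets" }
]);
```

With the jlabd3 and jupyterd3 example from this thread, the only conflict reported is for `d3`, which the server could turn into a startup error.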

The point is, if you need something to be global, you can't have two versions. If you don't need it to be global, just bundle it in with yourself.

Deduplicating at runtime allows you to share dependencies not because they have to be global to work, but for efficiency.

Though the counterargument there is that if the end user cares about efficiency, they should build a monolithic package like we have now that does deduping at build time.

Though the counterargument there is that if the end user cares about efficiency, they should build a monolithic package like we have now that does deduping at build time.

Yes, I would say trying to also get a version of this that we can precompile, if we want, would be a way to cover this base.

I guess that's a question for @ScriptedAlchemy (unless it's already been answered). If I have a couple of different libraries that all produce bundles, is it possible to ingest all those bundles and output a composite bundle that dedupes things where possible?

But it would be so nice if we could also do it without this step. It would just make things conceptually a lot simpler and reduce our long term maintenance burden. It would also make the dynamic loading of libraries so much easier, because the whole system is loading stuff on the fly and just hoping that the non-bundled deps are available.

As a sysadmin I'd like to build & deploy JupyterLab with a curated set of extensions. It would be great if users could take their base install and add/remove extensions as they wish without having to recompile the entire application requiring dev tools and multiple GBs of memory.

...so I see this capability as a bit of an escape hatch. If an extension becomes popular enough it can be added as part of the default bundle for those users.

As a sysadmin I'd like to build & deploy JupyterLab with a curated set of extensions. It would be great if users could take their base install and add/remove extensions as they wish without having to recompile the entire application requiring dev tools and multiple GBs of memory.

Yes, that's a primary goal for all of us here.

Yes, I think we'll need a build step. That will also prevent serving JS modules that never get loaded.

To be clear, I'm advocating for no build step. Getting rid of the end-user build step, and the requirement for nodejs for the end user, is a primary goal for me.

I'm advocating for no mandatory build step, but see no problem with an "optimize-my-delivery build" (if the default case isn't already optimal).

There are examples of fully dynamic federated apps, similar to a Grafana-style dashboard where users install “remotes” at will and the “host” isn’t aware of them.

So yes, you can supply both vendor dependencies and other remote code at runtime without the application shell being aware of them at build time. That saves you from needing to redeploy the app when new extensions are used.

To achieve this, you’d need to use the lower level implementation and not the sugar syntax of import()

100% dynamic capabilities are possible. You’d just use the webpack functions directly to instrument the application

@ScriptedAlchemy I am trying out the dynamic-system-hosts example! It makes sense, basically it just sets a global var to be the module, and then you grab that var. Nice and simple. It does seem like it has the side effect of polluting the globals? Curious if we are able to use different types for the library arg, like requirejs?

Ideally, we would want to dynamically provide a module so when we use native ES6 imports, that module is available, but unfortunately there is no spec yet on how to dynamically provide modules to the frontend...

I’m going to walk over the source code on YouTube today. But it supports all library types webpack can process. Common js, systemjs, global, window, amd, UMD

But it supports all library types webpack can process. Common js, systemjs, global, window, amd, UMD

Ah perfect!

All supported types:

    getSourceString(runtimeTemplate, moduleGraph, chunkGraph) {
      const request =
        typeof this.request === "object" && !Array.isArray(this.request)
          ? this.request[this.externalType]
          : this.request;
      switch (this.externalType) {
        case "this":
        case "window":
        case "self":
          return getSourceForGlobalVariableExternal(request, this.externalType);
        case "global":
          return getSourceForGlobalVariableExternal(
            request,
            runtimeTemplate.outputOptions.globalObject
          );
        case "commonjs":
        case "commonjs2":
        case "commonjs-module":
          return getSourceForCommonJsExternal(request);
        case "amd":
        case "amd-require":
        case "umd":
        case "umd2":
        case "system":
        case "jsonp":
          return getSourceForAmdOrUmdExternal(
            chunkGraph.getModuleId(this),
            this.isOptional(moduleGraph),
            request,
            runtimeTemplate
          );
        case "module":
          throw new Error("Module external type is not implemented yet");
        case "var":
        case "const":
        case "let":
        case "assign":
        default:
          return getSourceForDefaultCase(
            this.isOptional(moduleGraph),
            request,
            runtimeTemplate
          );
      }
    }

Also if you're interested in a deep-dive. I made a screencast going through the entire source of ModuleFederation - it's a little dense but very informative.

Also if you're interested in a deep-dive. I made a screencast going through the entire source of ModuleFederation - it's a little dense but very informative.

Thanks, definitely! I've been diving into the source as time allows over the past few days to see how to make it work for our usecase, and I really appreciate a guided tour!

I'm new to screencasts and all that, writing medium articles just cannot effectively dump the amount of information required lol. Ive got a playlist on youtube if you're looking to kill time lol.

https://www.youtube.com/playlist?list=PLWSiF9YHHK-DqsFHGYbeAMwbd9xcZbEWJ

Even just watching the first few minutes answered some basic questions I had about the code structure. Again, thanks!

@echarles brought up a question about remote imports and typing: https://github.com/module-federation/module-federation-examples/issues/20#issue-612053587

I think we shouldn't need to use the dynamic import statement in extensions, so this should be ok (see https://github.com/webpack/webpack/issues/10352#issuecomment-622149436 and comments later on).

My current take is:

- Extension authors will just use regular imports. But their webpack config (or our CLI that wraps this) uses federated modules to make sure those imports are resolved remotely.

- In our main bundle, we can dynamically import a list of all the installed extensions. In this case it is just using globals, so it just grabs the globals (https://github.com/module-federation/module-federation-examples/blob/cf2d070c7bbb0d5f447cfe33f69e07bcf85f8ddb/dynamic-system-host/app1/src/App.js#L3-L9), but we could also use requirejs instead; it doesn't matter to end users. Yes, this won't be type safe, but it also isn't type safe now and there really isn't a way it could be, because we are basically taking a list of module-name strings provided in the environment, resolving each, and hoping that it is a JupyterLab extension type.
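As a rough illustration of the second point, the sketch below looks up a list of names on a global object and keeps only the values that look like a JupyterLab plugin (an object with a string `id` and an `activate` function). The function name `collectExtensions`, the global names, and the handling of a `default` export are assumptions for this example; extensions that export an array of plugins are not handled.

```javascript
// Looks up each named global and keeps those shaped like a JupyterLab plugin.
function collectExtensions(names, globalObject) {
  const loaded = [];
  const rejected = [];
  for (const name of names) {
    const candidate = globalObject[name];
    // A bundle may expose the plugin directly or as a default export.
    const plugin = candidate && candidate.default ? candidate.default : candidate;
    if (plugin && typeof plugin.id === "string" && typeof plugin.activate === "function") {
      loaded.push(plugin);
    } else {
      rejected.push(name);
    }
  }
  return { loaded, rejected };
}

// Stand-in for `window`, with made-up global names.
const fakeGlobals = {
  ext_one: { default: { id: "ext-one:plugin", autoStart: true, activate: () => {} } },
  ext_two: { notAPlugin: true }
};
const { loaded, rejected } = collectExtensions(
  ["ext_one", "ext_two", "ext_missing"],
  fakeGlobals
);
```

The check only happens at runtime, which matches the point above that this step cannot be made type safe.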

Ah so this (https://github.com/webpack/webpack/issues/10352#issuecomment-622016042) says we can't use remotes with non dynamic imports.

I think that works fine. So for extension authors, all imports are shared imports, not remotes. If an extension imports another extension, it's also as a shared import.

Sounds good @saulshanabrook. I am beyond excited to try this out in an actual Webpack beta release!

@blink1073 me too!! I am very thankful for @ScriptedAlchemy and @sokra for their work on this.

We have liftoff! https://github.com/webpack/webpack/issues/10352#issuecomment-624339188

Dynamic import issues fixed. It’s on npm now. I’m able to use import or import(). If you want sync imports, just make sure you have an async import somewhere higher in the tree: if you “import from” a remote, that file needs a promise somewhere in its execution chain, for example by lazy-loading the parent file. That is very useful for redux stores and other things that can’t be a promise. Otherwise just use import(), and then the module has a promise to work with. I repeat: as long as there’s an import() somewhere in the chain of execution, you can use sync import/require(); otherwise you need to dynamic import the remotes. Webpack will hoist remotes to the nearest promise, so if you import() a remote, that’s the nearest dynamic import webpack will hoist dependencies to.

I also own github.com/module-federation, which has an example repo under the org showing a lot of capabilities. https://twitter.com/7rulnik/status/1257967681954877442?s=21

Lastly,

Thank you for considering this as a solution. The enthusiasm from the community has been a driving factor to try and change something for us all. It’s really appreciated, a lot of work went into making this all happen. I really hope it saves you time and enables you more✨

Thank you Tobias for all the support and work you invested. We couldn’t have built this without your help. You made this work server and client side. This is a stable and powerful solution because of your changes to the API, webpack core, and ideas on refining the initial proposal.

@ScriptedAlchemy and @sokra, I can't thank you both enough for the work you do at the foundation layer that enables platforms like ours to succeed.

Improved vendor federation for dynamic containers now under review. Please note well.

We also include versioning internally and you can set singletons or min required version as you wish

https://github.com/webpack/webpack/pull/10960
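To make the singleton and minimum-version options mentioned above concrete, here is a small sketch that builds a `shared` map of the kind passed to webpack's ModuleFederationPlugin. The option names `singleton` and `requiredVersion` follow webpack 5's shared-module configuration; the helper name `buildShared`, the prefix rule, and the version ranges are assumptions for illustration only.

```javascript
// Builds a `shared` map from a package.json-style dependency map.
// Packages whose name starts with one of `singletonPrefixes` are marked singleton.
function buildShared(dependencies, singletonPrefixes) {
  const shared = {};
  for (const [name, range] of Object.entries(dependencies)) {
    const isSingleton = singletonPrefixes.some(prefix => name.startsWith(prefix));
    shared[name] = { requiredVersion: range, singleton: isSingleton };
  }
  return shared;
}

// Illustrative dependency ranges, not taken from a real extension.
const shared = buildShared(
  {
    "@jupyterlab/application": "^3.0.0",
    "@lumino/widgets": "^1.0.0",
    "lodash": "^4.17.0"
  },
  ["@jupyterlab/", "@lumino/"]
);
```

Marking the JupyterLab and Lumino packages as singletons reflects the earlier point in this thread that anything carrying shared state cannot exist in two versions at once.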

Thanks for the heads up!

Cool, got it running.

    function loadComponent(scope, module) {
      return async () => {
        // Initializes the share scope. This fills it with known provided modules from this build and all remotes
        await __webpack_init_sharing__("default");
        const container = window[scope]; // or get the container somewhere else
        // Initialize the container, it may provide shared modules
        await container.init(__webpack_share_scopes__.default);
        const factory = await window[scope].get(module);
        const Module = factory();
        return Module;
      };
    }

you can also create sub-scopes if you do not wish to blanket override the entire subset.

https://github.com/module-federation/module-federation-examples/pull/63/files
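The loadComponent snippet above depends on webpack runtime globals, so it cannot be run on its own. The sketch below exercises the same sequence (`init` with a share scope, then `get`, then calling the returned factory) against a stand-in container. `loadFromContainer` and `makeFakeContainer` are hypothetical helpers written for this example; real containers are generated by webpack.

```javascript
// Loads `moduleName` from an object exposing init(shareScope) and get(moduleName).
async function loadFromContainer(container, shareScope, moduleName) {
  await container.init(shareScope);
  const factory = await container.get(moduleName);
  return factory();
}

// A stand-in container for illustration only.
function makeFakeContainer(modules) {
  let scope = null;
  return {
    init(shareScope) {
      scope = shareScope;
      return Promise.resolve();
    },
    get(name) {
      if (!(name in modules)) {
        return Promise.reject(new Error(`Module ${name} not found`));
      }
      // Like a real container, resolve to a factory rather than the module itself.
      return Promise.resolve(() => modules[name](scope));
    }
  };
}

const container = makeFakeContainer({
  "./extension": scope => ({ id: "demo-extension", sharedKeys: Object.keys(scope) })
});
const result = loadFromContainer(container, { react: {} }, "./extension");
```

Passing the container and share scope as arguments, instead of reading them from `window` and the webpack globals, is only a convenience for testing the control flow.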

We should be cutting a new beta.17 for webpack very soon

Amazing, thank you for being so responsive with those examples, they have already helped us tremendously.

Seconded - thanks for your excellent work and communication! webpack/webpack#10960 in particular looks like it addresses the problems we were trying to reason through.

leave comments on that thread for sure, im just a direct line to Tobias and internal webpack - for discussions and so on - the thread on webpack is best.

Advanced API: Module Federation for power users https://youtu.be/9F7GoDMrzeQ

Copying and elaborating on https://github.com/jupyterlab/jupyterlab/pull/8385#issuecomment-652065046 since we've now closed that PR:

- Create a new repo to iterate on the rest of the design

- Port those pieces back to JupyterLab as:

  - Two new scripts in buildutils (core build and extension build)

  - New endpoint in jupyterlab_server (notionally /lab/ext/<foo>)

- Then, make the other changes to the build system and the app example as appropriate:

  - Installed extensions are found on the system, respecting the appropriate Jupyter paths. Extensions at one level may override extensions at another level

  - The data is encoded into the templated HTML file
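The level-override behavior in the last two items can be sketched as a simple precedence merge. This assumes the usual Jupyter ordering of user over sys-prefix over system; the input shape, the function name `resolveExtensions`, and the paths are made up for illustration and do not describe the actual server implementation.

```javascript
// Levels listed from highest to lowest precedence (assumed ordering).
const LEVELS = ["user", "sys-prefix", "system"];

// Hypothetical input: { level: { extensionName: path } }.
function resolveExtensions(found) {
  const resolved = {};
  for (const level of LEVELS) {
    for (const [name, path] of Object.entries(found[level] || {})) {
      // An extension already found at a higher level overrides lower levels.
      if (!(name in resolved)) {
        resolved[name] = { level, path };
      }
    }
  }
  return resolved;
}

const resolved = resolveExtensions({
  user: { "my-ext": "/example/user/my-ext" },
  "sys-prefix": {
    "my-ext": "/example/env/my-ext",
    "other-ext": "/example/env/other-ext"
  },
  system: {}
});

// The result can be encoded as JSON for the templated HTML page.
const pageData = JSON.stringify(resolved);
```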

Create a new repo to iterate on the rest of the design

In progress: https://github.com/blink1073/juptyerlab-module-federation

Other follow up tasks tracked from https://github.com/jupyterlab/jupyterlab/pull/8385#issuecomment-646335100:

- Update the server to template the new index file and handle paths to extension assets. These paths should follow the Jupyter convention for overriding levels user, sys-prefix, and system.

- Update commands.py to handle extensions spread throughout the Jupyter levels

- In the new share application directory, we'll just put the pre-built core packages, we won't build anymore

- Publish an alpha version

- Test with both a third-party extension and another extension that depends on it (for example, ipywiywi)

- The server should be able to still load core and all extensions from a CDN

Can be done in parallel:

- Add a cookiecutter that uses the extension CLI to generate a package suitable for PyPI or conda-forge

- Write documentation on using the new build tool for extension authors

- Ensure that source maps work for both core packages and extensions

Core mode should only have the effect of not loading extensions (if not bundled)

An update: #8772 and #8802 implement the basic functionality, released in 3.0a8.

@git-mn-b and I made a lot of progress on getting a better webpack config that allows extensions to depend on other extensions, and he is putting in a PR to update the extension webpack config.

Can we add an inline whiteboard to the wishlist?

@pylang, this issue is about what we want the new extension system framework and architecture to be, not about specific extensions.

If you'd like to propose a new extension idea, please open a new issue and we'll tag it as an extension idea. We collect these as issues so that if a community member is looking for an idea, there are a few recorded. Of course, you are welcome to implement the extension yourself (you can see the extension tutorial in the docs).

All of the above issues are tracked in other issues, closing this mega-issue.

Most helpful comment

Background

JupyterLab uses a dependency injection (DI) system, as well as some shared state between modules. Because of this, we have a need to share modules at run time. The current best practice for web applications is to use a tool like Rollup or Webpack to create a set of bundle files that are loaded in the browser. Those bundle files typically contain local state that is not shared between bundles.
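A small sketch of why bundle-local state is a problem for DI, assuming services are looked up by the identity of a token object. The registry class, the token name, and `evaluateTokenModule` are hypothetical stand-ins, not JupyterLab code.

```javascript
// Each call simulates evaluating the same module source inside a separate bundle,
// so every bundle gets its own private copy of the token object.
function evaluateTokenModule() {
  return { IThemeToken: { name: "example:IThemeToken" } };
}

// A tiny identity-keyed service registry, standing in for a DI container.
class Registry {
  constructor() {
    this.services = new Map();
  }
  provide(token, service) {
    this.services.set(token, service);
  }
  resolve(token) {
    return this.services.get(token);
  }
}

const registry = new Registry();
const bundleA = evaluateTokenModule();
const bundleB = evaluateTokenModule();

registry.provide(bundleA.IThemeToken, { theme: "dark" });

// Bundle B holds a different token object, so the lookup fails.
const missing = registry.resolve(bundleB.IThemeToken);
// The lookup succeeds only with the very same token object.
const found = registry.resolve(bundleA.IThemeToken);
```

The two tokens have the same name but are different objects, which is why the module defining them has to be loaded once and shared at run time.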

In the case of JupyterLab, we want to enable the user to extend the application by installing new extensions that load alongside the core extensions. Up until now, this was accomplished by adding the extensions to a monolithic single page web application build using Webpack. This had the advantage of using standard tooling and workflows. It has the disadvantage of requiring a node runtime and access to an npm repository for dependencies.

Here we seek to find a solution that enables JupyterLab to be extensible without requiring a node runtime or access to an npm repository. We still have the requirement of sharing modules for DI, as well as things like lumino messages that share state.

We propose a solution where the extension author uses a JupyterLab-provided tool to build an extension asset that is able to be dynamically loaded by the JupyterLab application and share state. The extension should be installable as a conda package, so that users can use a single manifest and dependency source to provision their environments.

The next step is to find a bundler and loader solution that accomplishes the above design goals. The following bundlers were considered: Webpack, Rollup, Parcel, and snowpack. The following loaders were considered: Webpack, Parcel, snowpack, RequireJS, SystemJS, and StealJS.

Bundlers

Webpack was used in a previous attempt to make a custom bundler for JupyterLab. The previous version relied on the parsed output of the module in order to create an AMD module for each of the output modules, as opposed to the standard Webpack module. However, neither Webpack 4.0 nor the upcoming Webpack 5.0 expose the transpiled output to plugins.

Rollup is ruled out because it inlines modules.

Snowpack is ruled out because it leaves existing modules in place and loads them as singular files.

Parcel provides the generated JavaScript for each module, allowing us to generate AMD modules for each input module. It is in the process of migrating to a new plugin format, but the underlying bundle and module structure is staying the same.

Loaders

Webpack and Parcel are ruled out because their loaders operate on self-contained bundles, unless explicitly loading an external bundle.

Snowpack is ruled out because it expects one file per module, which would take too long to load for our application.

SystemJS is ruled out because it does not support the require function variant of the AMD module.

Both RequireJS and StealJS support loading the require function variant of the AMD module. RequireJS has not seen much activity on GitHub, but it is stable and widely used. StealJS is actively maintained, but not as widely used.

Bundling and Loading Proposal

We target Parcel as the bundler and RequireJS as the module loader. We would write a transformer that rewrites Parcel imports to use an AMD module name, and then wrap the output in an AMD definition that uses a require function. We would use the sourcemap information given by Parcel and update it given our changes to produce the final source map. We do not expose window.require, so that we keep our loader as an implementation detail.
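The wrapping step in this proposal can be illustrated without Parcel or RequireJS. The sketch below wraps CommonJS-style source text in an AMD definition that uses a require function, then evaluates it against a minimal in-memory registry. `wrapAsAmd` and `createLoader` are hypothetical helpers, the registry is only a stand-in for RequireJS, and source map handling is omitted.

```javascript
// Wraps source text as: define(name, function (require, exports, module) { ... });
function wrapAsAmd(name, source) {
  return `define(${JSON.stringify(name)}, function (require, exports, module) {\n${source}\n});`;
}

// A minimal synchronous registry standing in for RequireJS, for illustration only.
function createLoader() {
  const factories = new Map();
  const cache = new Map();
  function define(name, factory) {
    factories.set(name, factory);
  }
  function require(name) {
    if (cache.has(name)) {
      return cache.get(name).exports;
    }
    const factory = factories.get(name);
    if (!factory) {
      throw new Error(`Module not defined: ${name}`);
    }
    const module = { exports: {} };
    cache.set(name, module);
    factory(require, module.exports, module);
    return module.exports;
  }
  return { define, require };
}

const loader = createLoader();
const sources = {
  "demo/greeting": "exports.greeting = 'hello';",
  "demo/main": "const g = require('demo/greeting'); exports.message = g.greeting + ' world';"
};
for (const [name, source] of Object.entries(sources)) {
  // Evaluate the wrapped text with the loader's `define` in scope.
  new Function("define", wrapAsAmd(name, source))(loader.define);
}
const message = loader.require("demo/main").message;
```

Because each module is registered under an unqualified name, a second bundle that calls `require('demo/greeting')` through the same loader gets the same instance, which is the sharing behavior the proposal is after.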

Our extension builder would have a set of known external packages from JupyterLab and the extension. It would use unqualified names for those modules, and not include them in the output. The bundles may optionally be optimized using a JS optimizer. These bundles would then be included in either a pip or conda package, and would be loaded dynamically by the server from site-packages. Eventually, a build step would be used to create optimized bundles in the application directory.

Packaging and Dependency Management

Currently extensions are typically installed from an npm registry, or from a tarball included in a pip or conda package. Extensions cannot declare a direct dependency on another extension. There is no way for two packages to share another dependency for the purposes of shared state other than requiring the same version range and relying on yarn de-duplication. If we utilize python entry points, then we have a single source of truth for dependency resolution: either conda or pip. Extensions would be installed as standard packages alongside JupyterLab, and declare their dependency on other extensions or on provider packages, which are built the same way as extensions but are meant to provide shared state as opposed to an extension.

Remaining technical tasks for a minimum product