Puppeteer: Question: How do I get puppeteer to download a file?

Question: How do I get puppeteer to download a file or make additional http requests and save the response?


I'll look into the specifics after I work on another issue (if no one gets to it before me). But I feel like we'd need to watch for the request going off and then save the buffer of that response body. I'm not sure how you'd trigger the download programmatically, though "clicking" like normal on the right part of the page should do it.

Let me elaborate a little bit. In my use case, I want to visit a website which contains a list of files. Then I want to trigger HTTP requests for each file and save the response to disk. The list may not be hyperlinks to the files, just the plain-text name of each file, but from that I can derive the actual URL of the file to download.
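As a rough illustration of deriving a download URL from a plain-text file name: the base URL and file name below are made up for the example, and a real site may build its URLs differently.

```javascript
// Hypothetical helper: build an absolute download URL from a file name
// scraped as plain text. BASE_URL is an assumption, not a real endpoint.
const BASE_URL = 'https://example.com/files/';

function fileUrl(name, base = BASE_URL) {
  // encodeURIComponent escapes spaces and other characters that are not
  // valid in a path segment; new URL() resolves the result against the base.
  return new URL(encodeURIComponent(name), base).href;
}

console.log(fileUrl('report 2017.pdf'));
// https://example.com/files/report%202017.pdf
```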

I saw this Chromium issue some time ago. It addresses downloads and seems to be moving along well. Due to security reasons I have the impression that headless Chrome does not support downloading by clicking on the download button. But above issue opens up that possibility for this important use case.

It seems that a key use case of this project is to use it for web scraping and downloading content from the internet. For example CasperJS has a download method for this purpose.

I've been using CasperJS for a couple of years. Yep, that actually sends raw XMLHttpRequests to the specified URL to grab the contents of a file. However, in practice it is not foolproof. For example, try scripting it to fetch the download zip URL of a GitHub repo: it will produce a zero-byte file. I guess a method like that can be revisited to see if it can be improved to cover edge cases.

Fundamentally, though, my preference is to have the ability to click a download button and get the file directly. This seems easier to implement in scripting, because for some types of downloads you never see the actual URL in the DOM. The download only starts after clicking and going through some JS code, as in a normal browser. A download method may not be applicable to that type of setup because there is no full URL to give to the method in the first place.

Yea, Chromium doesn't support it. But if you can trigger the request you should be able to at least get the buffer content and write it to disk using the filesystem API in Node. Or get the URL to then initiate a manual request, if prevented as a download outright, which you'd then do the same with the buffer from.

Chromium may not support it, but it should be possible to work around it.

@kensoh Ideally, it could support both downloading by clicking a link and downloading from URL.

@Garbee There may be a way for me to just use NodeJS to make the requests, but if I use a headless browser, it will send the proper sessions cookies with my requests.

Support for downloads is on the way. It needs changes to Chromium that are under review.

@pavelfeldman im waiting~~~~

Upstream has landed as r496577, we now need to roll.

I'm having file download issues as well, not sure if it's the same thing. I'm visiting a link that triggers a download like: somewhere.com/download/en-GB.po.

I'm creating a new page per language file I need to download so they're run in parallel and then trying to Promise.all() them before closing the browser. It seems that even after the downloads all finish, the page.goto is never resolved:

const urls = languages.map(lang => `${domain}/download/${lang}/${project}/${lang}.${extension}`);

await Promise.all(urls.map(url => browser.newPage().then(async tab => await tab.goto(url))));

browser.close();

@aslushnikov is the change you mentioned in your last commit, now shipped?

If yes, then I am looking for some examples to download a file in headless mode. I couldn't find any documentation on it.

@nisrulz additional work is required upstream, we're working on it.

@aslushnikov 496577 landed a week ago, it should be few lines of code on your end.

Looks like aslushnikov@ bakes something more upstream to deliver an event upon download.

@pavelfeldman r496577 is not enough for a complete story; I'm working on Page.FileDownloaded event to notify about successful download.

One workaround I found (not ideal, for sure): open Chrome with a profile that has a download directory set. It worked for me when I clicked a link that downloaded an audio file. Then in your puppeteer script, just wait for that file to appear and copy it to where you need it.

const browser = await puppeteer.launch({headless: false, args: ['--profile-directory=Default']});

see here for how to find your profile

@mmacaula I am looking for a way to download a file when Chrome is running in headless mode. If I am not running in headless mode, the file downloads perfectly into the Downloads folder which is the default location I guess.

It's a much sought-after feature, already available in projects such as CasperJS.

Oh yeah my mistake, It doesn't work with headless mode. :(

Is there a way to just capture the request and have it stored in another remote location instead of local to Chrome/puppeteer?

@dagumak couldn't you catch the responses and write the files to a location of your choice?

const puppeteer = require('puppeteer');

const fs = require('fs');

const URL = require('url').URL;

(async() => {

const browser = await puppeteer.launch();

const page = await browser.newPage();

const responses = [];

page.on('response', resp => {

responses.push(resp);

});

page.on('load', () => {

responses.map(async (resp, i) => {

const request = await resp.request();

const url = new URL(request.url);

const split = url.pathname.split('/');

let filename = split[split.length - 1];

if (!filename.includes('.')) {

filename += '.html';

}

const buffer = await resp.buffer();

fs.writeFileSync(filename, buffer);

});

});

await page.goto('https://news.ycombinator.com/', {waitUntil: 'networkidle'});

browser.close();

})();

You may need to adjust the timing for your page. Waiting for the load event and networkidle might not be enough.
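One way to reduce the timing problem is to keep a promise for every response and wait for all of them before closing the browser. The sketch below is only an illustration: `trackResponses` and `save` are hypothetical names, and `save` is assumed to be an async function that reads the response body and writes it to disk.

```javascript
// Register a 'response' listener that starts saving each response right away
// and remembers the resulting promise. Returns a function that resolves once
// every save started so far has settled.
function trackResponses(page, save) {
  const pending = [];
  page.on('response', resp => {
    // A failure for one response is logged and does not abort the others.
    pending.push(save(resp).catch(err => console.error(err.message)));
  });
  return () => Promise.all(pending);
}
```

With a real page this might be used as `const done = trackResponses(page, saveToDisk);` before `page.goto(...)`, followed by `await done();` before `browser.close()`, where `saveToDisk` stands for the buffer-writing logic from the snippet above.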

@ebidel Sorry if I'm missing something, but where are you getting the buffer from in that code?

edit: response.buffer seems to be a function, but when I call it and await the promise it returns I get this error:

Unhandled promise rejection (rejection id: 1): Error: Protocol error (Network.getResponseBody):

No data found for resource with given identifier undefined

This seems to only happen when the file gets downloaded by the browser -- that is to say, when the file appears in the download bar in non-headless mode.

This is the code I used:

// this works

// const downloadUrl = 'https://nodejs.org/dist/v6.11.3/'

// this doesn't work

const downloadUrl = 'https://nodejs.org/dist/v6.11.3/SHASUMS256.txt.sig'

const responseHandler = async (response) => {

if (response.url !== downloadUrl) {

return

}

const buffer = await response.buffer()

console.log('response buffer', buffer)

browser.close()

}

page.on('response', responseHandler)

page.goto(downloadUrl)

Version info:

Windows 10 64-bit

Puppeteer 0.10.2

Chromium 62.0.3198.0 (Developer Build) (64-bit)

@ebidel I'm going to give that a shot. Thank you!!

@mickdekkers updated the snippet to include const buffer = await resp.buffer();. Bad copy and paste.

@ebidel alright, thanks! Do you know if the issue I described in my edit is a bug or expected behavior? I couldn't find any info about it and I'd like to report it somewhere if it is, but I'm not sure what's the best place for it. I can make a new issue for it on this tracker if it's a puppeteer bug.

For resource types that the renderer doesn't support, the default browser behavior is to download the file. That's probably what's going on here. Could you use pure node apis to fetch/write the file instead of waiting for the page response? You could also intercept requests page.on('request') and fetch the file.

@aslushnikov would know for sure. He's been working on a download API. There may be a cleaner way to handle cases like this in the future.

Been trying the code snippet above and run into the following issue on the line

const buffer = await resp.buffer();

Error: Protocol error (Network.getResponseBody): No data found for resource with given identifier undefined

On normal Chrome it will download a pdf. Maybe this is related to #610?

Couldn't find a way to work around it.

@Apfelmann it sounds like you're experiencing the same issue I mentioned in my comment earlier.

In my case the solution turned out to be to use node to fetch the file itself, as @ebidel suggested. It wasn't possible to use node right away because the file I wanted to download requires you to be logged in, but I was able to work around it. I used puppeteer to log in and obtain a session cookie, then included that in the request headers when I made the download request using node.

One benefit of using node for the download is that if you store the session cookie somehow, you don't need puppeteer to log in every time you want to download a file. You can just keep using the same session cookie until it expires.

Keep in mind that if you do decide to store the session cookie, you'll want to keep it secure. Anyone that has access to it essentially has access to your account, and can do almost all the things you can do when you're logged in.
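A minimal sketch of that approach, assuming the cookies come from `await page.cookies()` and that the file is served over HTTPS without redirects. The helper names are made up for this example.

```javascript
const https = require('https');
const fs = require('fs');

// Turn the array returned by `await page.cookies()` into a Cookie header value.
function cookieHeader(cookies) {
  return cookies.map(c => `${c.name}=${c.value}`).join('; ');
}

// Download `url` to `destPath`, sending the browser session's cookies.
// Redirects are not followed in this sketch.
function downloadWithCookies(url, cookies, destPath) {
  return new Promise((resolve, reject) => {
    const options = {headers: {Cookie: cookieHeader(cookies)}};
    https.get(url, options, res => {
      if (res.statusCode !== 200) {
        res.resume(); // discard the body so the socket is released
        reject(new Error(`Unexpected status ${res.statusCode}`));
        return;
      }
      const file = fs.createWriteStream(destPath);
      res.pipe(file);
      file.on('finish', () => resolve(destPath));
      file.on('error', reject);
    }).on('error', reject);
  });
}
```

After logging in with puppeteer, the call could look like `await downloadWithCookies(fileUrl, await page.cookies(), './file.zip')`, where `fileUrl` is whatever URL the download link points to.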

As @kensoh mentioned in his earlier comment, there are a few important use cases where downloading out-of-band might not be possible. I tried a suggestion from a comment on a related bug, using Chromium Version 64.0.3240.0 (Developer Build) (64-bit) on a Mac, but it did not work in either full Chromium with puppeteer (puppeteer.launch({devtools:true})) or headless Chromium. I have noticed a similar issue in PhantomJS that has remained open for years. @aslushnikov If there is a timeline for when this is expected to land upstream, that would be great!

I too am fighting with Chrome stealing my downloads!

In some instances, apps are designed such that they really only work with a browser and attempting to emulate the browser behavior from inside Node isn't realistically feasible.

I too am receiving this error:

Error: Protocol error (Network.getResponseBody): No data found for resource with given identifier undefined

And it's very frustrating! The browser steals my response bodies but doesn't save them anywhere useful!

The only way I've been able to download a file is if I know the URL before-hand, and call fetch in an evaluate. downloadUrl is a string with the URL of the file you want to download.

const downloadedContent = await page.evaluate(async downloadUrl => {

const fetchResp = await fetch(downloadUrl, {credentials: 'include'});

return await fetchResp.text();

}, downloadUrl);

You can then use downloadedContent to write to a file.
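For completeness, a small sketch of the write step. It assumes the content is text (for example CSV); the directory and file name are placeholders, and a stand-in string is used instead of a real download.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Save text returned from page.evaluate(). Suitable for text formats such as
// CSV; binary content should not be passed through response.text().
function saveText(content, dir, filename) {
  const target = path.join(dir, filename);
  fs.writeFileSync(target, content, 'utf8');
  return target;
}

// Example with a stand-in string in place of downloadedContent.
const saved = saveText('a,b\n1,2\n', os.tmpdir(), 'example-download.csv');
console.log(saved);
```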

@jonesnc The file URL can always be worked out somehow. Even if there is no hard-coded link in the HTML source, it's easy to find what it is, for example simply by looking in the Chrome download manager.

Now, to bring some additional information regarding your solution: I think there are a few caveats, because the page.evaluate method (like page.exposeFunction) only accepts and returns serializable values, and sometimes seems to handle string encoding incorrectly.

I ran into this when using your exact method (with the Fetch API) to download a PDF: I got its contents as a string (which is effectively a binary string) and returned that string to the Node.js context. There it was impossible to save the binary string as a valid PDF file, even using write methods along with Buffers.

So I found a workaround (duplicated comment from #610):

My idea was to use the Fetch API, and then use the arrayBuffer or blob response type in order to send the result back to an exposed function, which in turn would write the data to a file.

But the page.exposeFunction() method serializes the exposed function's parameters using JSON.stringify, meaning we cannot pass data such as ArrayBuffers or Blobs (these types would be lost).

Using the Fetch API DOMString response type (string) did not work either, for some reason the string was somehow altered (its encoding I think) when passed into the exposed function.

Eventually, I decided to fetch the PDF data as an ArrayBuffer, convert the ArrayBuffer to a string with one character per byte, pass that string into the exposed function, and then do the reverse on the other side: the exposed function converts the string back to an ArrayBuffer, which can be used to write the data to a file on the user's file system.

Some bits of my code here:

page.exposeFunction("writeABString", async (strbuf, targetFile) => {

return new Promise((resolve, reject) => {

// Convert the ArrayBuffer string back to an ArrayBuffer, which in turn is converted to a Buffer

const buf = strToBuffer(strbuf);

// Try saving the file

fs.writeFile(targetFile, buf, (err, text) => {

if(err) reject(err);

else resolve(targetFile);

});

});

function strToBuffer(str) { // Convert a one-character-per-byte string to an ArrayBuffer

let buf = new ArrayBuffer(str.length); // 1 byte for each char

let bufView = new Uint8Array(buf);

for (var i=0, strLen=str.length; i < strLen; i++) {

bufView[i] = str.charCodeAt(i);

}

return Buffer.from(buf);

}

});

And then, from within an evaluate call, you would have the following :

page.evaluate( async () => {

function arrayBufferToString(buffer){ // Convert an ArrayBuffer to a string with one character per byte

let bufView = new Uint8Array(buffer);

let length = bufView.length;

let result = '';

let addition = Math.pow(2,8)-1;

for(var i = 0;i<length;i+=addition){

if(i + addition > length){

addition = length - i;

}

result += String.fromCharCode.apply(null, bufView.subarray(i,i+addition));

}

return result;

}

let geturl = "https://whateverurl.example.com";

return fetch(geturl, {

credentials: 'same-origin', // useful when we are logged into a website and want to send cookies

// fetch() has no responseType option; the body is read as an ArrayBuffer via response.arrayBuffer() below

})

.then(response => response.arrayBuffer())

.then( arrayBuffer => {

var bufstring = arrayBufferToString(arrayBuffer);

return window.writeABString(bufstring, '/tmp/downloadtest.pdf');

})

.catch(function (error) {

console.log('Request failed: ', error);

});

});

The PDF file is eventually well downloaded and saved at the /tmp/downloadtest.pdf location :)
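A note on the Node side of this round trip: a string built with String.fromCharCode from single bytes holds one character per byte, which corresponds to what Node calls the 'latin1' encoding. Under that reading, the conversion back to a Buffer can be sketched with a built-in call. The function names here are illustrative, and `bytesToBinaryString` only mimics what the in-page function produces.

```javascript
// Stand-in for the in-page conversion: one character per byte.
function bytesToBinaryString(bytes) {
  let result = '';
  for (const b of bytes) result += String.fromCharCode(b);
  return result;
}

// Node side: 'latin1' maps each character code 0-255 back to one byte.
function binaryStringToBuffer(str) {
  return Buffer.from(str, 'latin1');
}

// Bytes chosen to include a NUL and values above 0x7f.
const original = Uint8Array.from([0x25, 0x50, 0x44, 0x46, 0x00, 0xff, 0x80]);
const restored = binaryStringToBuffer(bytesToBinaryString(original));
console.log(restored.equals(Buffer.from(original))); // true
```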

@Xavatar I implemented your solution and it works pretty well, although I changed it a little. Instead of a string I'm using a dumb JS StringBuilder (an array joined at the end). For larger files, I think this might make a difference.

var result = [];

var addition = Math.pow(2,8)-1;

for(var i = 0;i<length;i+=addition){

if(i + addition > length){

addition = length - i;

}

result.push(String.fromCharCode.apply(null, bufView.subarray(i,i+addition)));

}

return result.join('');

@jonesnc In the end I used your method to solve my CSV download problem. I had tried page.on('load') and setting the profile directory, but neither worked in headless mode. Thanks!

@ebidel in my case your code doesn't save the file to the path of my choice; it always ends up in the download folder. I assume that if I keep changing that folder I will get my solution. In the meantime, I'm still trying.

@titusfx that shouldn't be. fs.writeFileSync should save to the path you specify. Unless you're doing something else.

I am trying to download a file by clicking a link (which goes through JS instead of a URL, as @kensoh mentioned here). The file gets downloaded when I am running in headless: false mode, but the problem is that it gets downloaded to the default location. How can I change the default download location? Also, the file doesn't download when running in headless: true mode.

@ebidel What is the solution or work around to capture the download buffer while running headless?

@aslushnikov I couldn't find any documentation about the page.FileDownloaded event. I tried it, and it looks like it doesn't work either.

Here is what I am doing -

const puppeteer = require('puppeteer');

(async () => {

const browser = await puppeteer.launch({headless: true});

const page = await browser.newPage();

await page.goto('http://niftyindices.com/resources/holiday-calendar');

await page.click('#exportholidaycalender');

await page.waitFor(5000);

await browser.close();

})();

@sohailalam2 upstream Chrome DevTools Protocol has the new method Page.setDownloadBehavior. More info on its parameters. Puppeteer's team probably already has something more elegant and robust in mind to address downloads.

In the meantime you can try this comment (force the behavior to 'allow' and explicitly set a valid download path) - https://github.com/GoogleChrome/puppeteer/pull/888#issuecomment-334998415 For me it works for both visible and headless Chrome. I use websocket directly but the behavior should be the same using Puppeteer, I believe.

Thanks @kensoh 👍 It works.

await page._client.send('Page.setDownloadBehavior', {behavior: 'allow', downloadPath: './'})

There is one possible issue with this hack currently -

If you download a file and another file with the same name already exists, puppeteer overwrites the existing file with the new content instead of changing the filename. :(
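One possible way around the overwrite, sketched under the assumption that each download can be given its own directory: create a fresh directory per download and pass it as downloadPath. `makeDownloadDir` is a hypothetical helper name.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Create a fresh, empty directory for one download so that a file with the
// same name from an earlier download cannot be overwritten.
function makeDownloadDir(baseDir = os.tmpdir()) {
  return fs.mkdtempSync(path.join(baseDir, 'download-'));
}

const dirA = makeDownloadDir();
const dirB = makeDownloadDir();
console.log(dirA !== dirB); // true
```

The returned path could then be used as the downloadPath value in the Page.setDownloadBehavior call shown above.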

@sohailalam2 is there a way to set this in the browser class rather than the page?

@paynoattn if you are asking about _setDownloadBehavior_ then no, there isn't a method to do so at browser level. You can read the documentation here.

Hi @sohailalam2 I need small help. niftyindices.com is newly launched site by nseindia.com.

Previously I used to download historic data from url : https://www.nseindia.com/products/dynaContent/equities/indices/total_returnindices.jsp?indexType=NIFTY%20BANK&fromDate=30-09-2017&toDate=31-10-2017

In this URL, the part "NIFTY%20BANK&fromDate=30-09-2017&toDate=31-10-2017" was created dynamically using Excel macros, with the index name and the start and end dates changed as required.

Now the same data is available for download from niftyindices.com -> Reports -> Historic Data, and I am not able to figure out the JS/URL from which it is downloaded.

How to identify new link from where the data is downloaded in CSV format on niftyindices.com.

Pls open this link for better clarity. https://www.nseindia.com/products/dynaContent/equities/indices/total_returnindices.jsp?indexType=NIFTY%20BANK&fromDate=30-09-2017&toDate=31-10-2017

Thanks in advance.

Hi @jdvisaria :

Nothing happens when clicking on the "_Download file in csv format_" link. I suspect there is something wrong with their page's Javascript code / link click event handling.

How to identify new link from where the data is downloaded in CSV format on niftyindices.com.

There doesn't seem to be any hard link, since it's more likely that a new CSV file is created "on the fly" by Javascript, using a blob, but the link click event seems to be broken.

Anyway, this has no relation at all with Puppeteer..

@Xavatar Thank you for the reply. I hope you went to http://niftyindices.com/reports/historical-data to do the analysis. Once you are on this page, select "Total Return Index" from the top left side and select any older start date from the date menu. Once you click on submit, it will show the table on the right-hand side with a "csv format" link.

Now the csv file will be downloaded. I am trying to figure out the URL it was downloaded from. For example, try opening this link in the Google Chrome browser: the table will open in the browser itself without downloading the file. "nseindia.com" was the older site, which has now moved to "niftyindices.com".

Hope I am able to explain my query.

@jdvisaria Yes indeed as suspected, there is no HTTP request made to their server to query for the CSV file.

The CSV data is entirely generated on-the-fly and is being sent as data url, as follows:

data:application/csv;charset=utf-8, "Date","Total Returns Index"

"06 Nov 2017","14274.03"

"03 Nov 2017","14274.97"

"02 Nov 2017","14232.79"

"01 Nov 2017","14255.58"

"31 Oct 2017","14108.55"

The Javascript code responsible for this part is located in http://blob.niftyindices.com/assets/js/IISLComponet.js

$("#exportTotalindex").on("click", function (a) {

exportTableToCSV.call(this, $("#historytotalindexexport"), "HistoricalTotalReturn_Data.csv")

})

function exportTableToCSV(a, b) {

function j(a) { return a.get().join(f).split(f).join(h).split(e).join(g) }

function k(a, b) { var c = $(b), d = c.find("td"); return d.length || (d = c.find("th")), d.map(l).get().join(e) }

function l(a, b) { return $(b).text().replace('"', '""') }

var c = a.find("tr:has(th)"), d = a.find("tr:has(td)"), e = String.fromCharCode(11), f = String.fromCharCode(0), g = '","', h = '"\r\n"', i = '"'; i += j(c.map(k)), i += h, i += j(d.map(k)) + '"', csvData = "data:application/csv;charset=utf-8," + encodeURIComponent(i), window.navigator.msSaveBlob ? window.navigator.msSaveOrOpenBlob(new Blob([i], { type: "text/plain;charset=utf-8;" }), "csvname.csv") : $(this).attr({ download: b, href: csvData, target: "_blank" })

}

All you have to do is handle this csvData differently and return a CSV string from your Puppeteer evaluate closure:

i = '"'; i += j(c.map(k)), i += h, i += j(d.map(k)) + '"'

@Xavatar Thanks for the reply. I will try to figure out URL. Thanks again.

@Xavatar I tried finding the URL. However, I came to know that data is retrieved in JSON and I need to parse it. If you can help with that as I know nothing about JS

Really looking forward to Page.fileDownloaded event. Could we expect it any time soon? @aslushnikov

@piilupart88 we're working on bringing proper support for this into DevTools protocol; it turned out to be a bit more involved than we expected. The upstream bug could be tracked at https://crbug.com/696481, but there're no time estimates.

Is it possible to download files when they are just downloaded to browser? I mean do we have to wait browser to trigger the whole "load" event? Something like "Page.on('responseLoad', resp => { ... " ?

@ebidel wrote:

const buffer = await resp.buffer();

Error: Protocol error (Network.getResponseBody): No data found for resource with given identifier undefined

This happens only in the new Version 0.13.0, if i use Version 0.12.0 all works fine!

I think I got my answer, too. :) @do-web The code you wrote works on v0.13.0 inside of the on('response') event on my end.

OS X 10.13.1

Node v8.2.1

So far, using:

await page._client.send('Page.setDownloadBehavior', {behavior: 'allow', downloadPath: downloadDir});

Has worked for me when "clicking" on a link that starts a download.

Any ideas on how to declare the name of the file to be downloaded? Right now I have to scan the folder for the default name of the file then change it.

v0.13.0
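The scan-and-rename step can be sketched roughly as follows. This assumes Chrome marks unfinished downloads with a ".crdownload" suffix and that `before` is a directory listing taken just before the click. The helper names and the polling interval are arbitrary choices for the example.

```javascript
const fs = require('fs');
const path = require('path');

// Names present in `after` but not in `before`, ignoring Chrome's
// in-progress ".crdownload" files.
function newFiles(before, after) {
  const seen = new Set(before);
  return after.filter(f => !seen.has(f) && !f.endsWith('.crdownload'));
}

// Poll `dir` until a finished new file appears, then rename it to `newName`.
async function waitAndRename(dir, before, newName, timeoutMs = 30000) {
  const deadline = Date.now() + timeoutMs;
  while (Date.now() < deadline) {
    const [found] = newFiles(before, fs.readdirSync(dir));
    if (found) {
      const target = path.join(dir, newName);
      fs.renameSync(path.join(dir, found), target);
      return target;
    }
    await new Promise(resolve => setTimeout(resolve, 250));
  }
  throw new Error(`No download appeared in ${dir} within ${timeoutMs} ms`);
}
```

A script might call `const before = fs.readdirSync(downloadDir);` before clicking the link and `await waitAndRename(downloadDir, before, 'report.csv');` afterwards, instead of a fixed wait.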

@ecovirtual thanks for that solution 👍

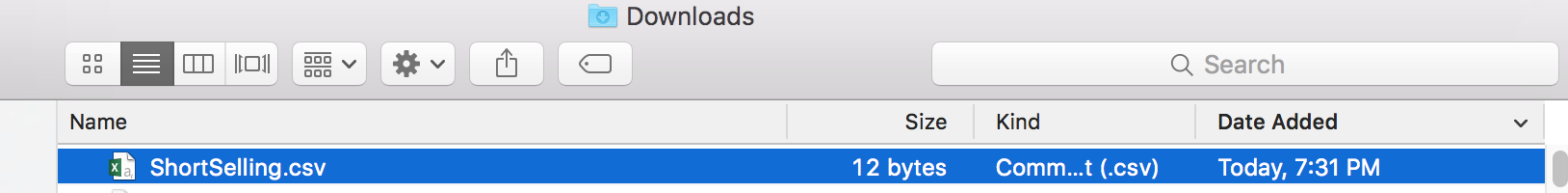

@ecovirtual Please find below sample code.

const puppeteer = require('puppeteer');

(async() => {

const browser = await puppeteer.launch({headless: false});

const page = await browser.newPage();

await page.goto('https://www.nseindia.com/products/content/equities/equities/homepage_eq.htm');

await page._client.send('Page.setDownloadBehavior', {behavior: 'allow', downloadPath: __dirname});

await page.waitFor(1000);

page.evaluate(async () => {

var ele = document.evaluate('//a[text()="Short Selling (csv)"]', document, null, XPathResult.FIRST_ORDERED_NODE_TYPE, null).singleNodeValue;

if(ele)

{

ele.click();

}

});

await page.waitFor(5000);

await browser.close();

})();

I want the file to be downloaded into the directory where my node script is running, but the file is downloaded into "Downloads".

await page._client.send('Page.setDownloadBehavior', {behavior: 'allow', downloadPath: __dirname});

Am I doing something wrong?

@mithung Well, it looks ok. I'm declaring the setDownloadBehavior before actually going to the page. Also, you could try just a relative path.

await page._client.send('Page.setDownloadBehavior', {behavior: 'allow', downloadPath: './myDownloadDir'});

await page.goto('https://www.nseindia.com/products/content/equities/equities/homepage_eq.htm');

@ecovirtual Thanks for quick response.

I have made suggested modifications.

const puppeteer = require('puppeteer');

(async() => {

const browser = await puppeteer.launch({headless: false});

const page = await browser.newPage();

await page._client.send('Page.setDownloadBehavior', {behavior: 'allow', downloadPath: './myDownloadDir'});

await page.goto('https://www.nseindia.com/products/content/equities/equities/homepage_eq.htm');

await page.waitFor(1000);

page.evaluate(async () => {

var ele = document.evaluate('//a[text()="Short Selling (csv)"]', document, null, XPathResult.FIRST_ORDERED_NODE_TYPE, null).singleNodeValue;

if(ele)

{

ele.click();

}

});

await page.waitFor(5000);

await browser.close();

})();

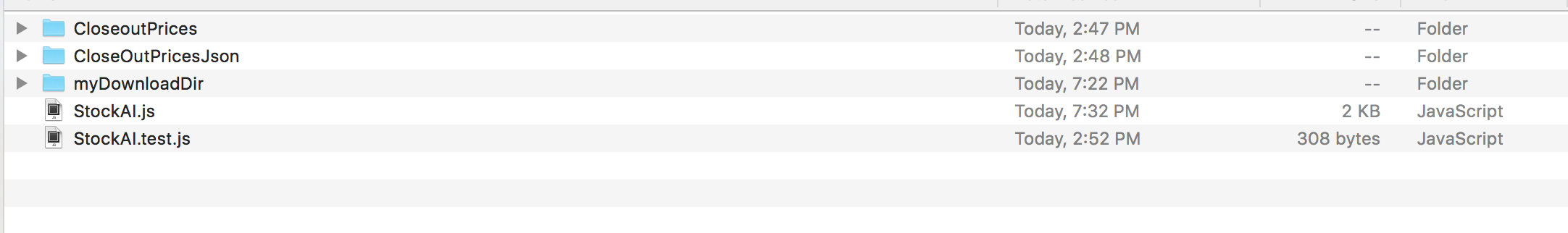

Below is my folder structure :

Mmm, the only thing that occurs to me now is that OS X is handling chrome/chromium in a different way. My tests are being done on Ubuntu 17.10 with Puppeteer v0.13 and Node v8.9.3.

Is anyone else getting Error - path too long when using the mentioned workaround?

await page._client.send('Page.setDownloadBehavior', { behavior: 'allow', downloadPath: downloadsDirectoryPath })

I am on Windows 10 and I get the error even with paths like C:/files (existing folder).

I just found a way to pass any fetch response back to Node.js:

https://intoli.com/blog/saving-images/

fetch() -> blob -> base64 --(pass to node)-> blob -> write
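The base64 hand-off in that pipeline might look like the sketch below. It is not taken from the linked article; the function names are made up. In a real script the first function would have to be defined inside the page.evaluate callback, since it runs in the browser.

```javascript
// In-page half (would run inside page.evaluate): ArrayBuffer -> base64.
function arrayBufferToBase64(buffer) {
  const bytes = new Uint8Array(buffer);
  let binary = '';
  const chunk = 0x8000; // keep apply() argument lists small
  for (let i = 0; i < bytes.length; i += chunk) {
    binary += String.fromCharCode.apply(null, bytes.subarray(i, i + chunk));
  }
  return btoa(binary);
}

// Node half: base64 -> Buffer, ready to hand to fs.writeFile.
function base64ToBuffer(b64) {
  return Buffer.from(b64, 'base64');
}

// Round trip with a few bytes, including values above 0x7f.
const bytes = Uint8Array.from([0, 1, 127, 128, 255]);
const b64 = arrayBufferToBase64(bytes.buffer);
console.log(base64ToBuffer(b64).equals(Buffer.from(bytes))); // true
```

Base64 is plain ASCII, so the string passes through page.evaluate or an exposed function without any encoding concerns.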

Can some one help me out for downloading the PDF and Excel file using puppeteer ?

My HTML code:

1) PDF :== < img src="L001/consumer/images/ButtonImage_PDFDownload.jpg?mtime=1472814764000" alt="Adobe - PDF Format" title="Adobe - PDF Format" class="m_excelPDF" id="arrowImage" border="0" onclick="generateEStatementPDF();">

2) Excel file:== < img src="L001/consumer/images/ButtonImage_ExcelDownload.jpg?mtime=1472814759000" alt="Microsoft - Excel Format" title="Microsoft - Excel Format" class="m_excelPDF" id="Image32997277" border="0" onclick="generateEStatementXLS();">

Hi @kensoh and @sohailalam2

I've tried the following setup but it doesn't download for some reason using headless: true ( with false the download works like a charm!)

```
const puppeteer = require('puppeteer');

(async () => {
  const browser = await puppeteer.launch({headless: true});
  const page = await browser.newPage();
  await page.goto('http://niftyindices.com/resources/holiday-calendar');
  await page._client.send('Page.setDownloadBehavior', {behavior: 'allow', downloadPath: '/tmp'});
  await page.click('#exportholidaycalender');
  await page.waitFor(5000);
  await browser.close();
})();
```

I'm using the latest puppeteer version 1.1.1

What am I missing?

Thanks for the help

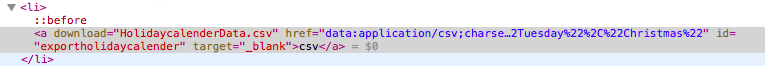

@antoniogomezalvarado I can replicate this with a framework I use to automate Chrome through the DevTools protocol. Two observations: 1. the link has nothing before clicking; 2. on clicking, you'll see the properties below. I'm not sure whether headless Chrome handles data URLs differently from visible Chrome or whether there is some other cause. I don't have a solution, just sharing findings.

@kensoh that looks easy. Just click the link and then grab the inline csv file via .href?

await page.click('a#exportholidaycalender')

const data = await page.evaluate(() => document.querySelector('#exportholidaycalender').href)

then using something like https://www.npmjs.com/package/data-uri-to-buffer to get it into a buffer then just fs.writeFile? 👍
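If adding a dependency is not desirable, a data: URI can also be decoded by hand. This is a minimal sketch that assumes the payload is either base64 or percent-encoded UTF-8 text. The sample href is made up and only mimics the shape of the real one.

```javascript
// Minimal data: URI decoder for base64 and percent-encoded UTF-8 payloads.
function dataUriToBuffer(uri) {
  if (!uri.startsWith('data:')) throw new Error('Not a data: URI');
  const comma = uri.indexOf(',');
  if (comma === -1) throw new Error('Malformed data: URI');
  const meta = uri.slice(5, comma); // e.g. "application/csv;charset=utf-8"
  const payload = uri.slice(comma + 1);
  return /;base64$/i.test(meta)
    ? Buffer.from(payload, 'base64')
    : Buffer.from(decodeURIComponent(payload), 'utf8');
}

// Made-up href in the same shape as the one produced by the export link.
const href = 'data:application/csv;charset=utf-8,%22Date%22%2C%22Holiday%22%0D%0A';
console.log(JSON.stringify(dataUriToBuffer(href).toString('utf8')));
```

The resulting Buffer can be passed straight to fs.writeFile.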

I can confirm

await page._client.send('Page.setDownloadBehavior', { behavior: 'allow', downloadPath: '/tmp' })

Works well (in non headless mode)

Thanks @albinekb got it, does it work in headless mode? The issue by @antoniogomezalvarado only happens in headless mode. Hi @antoniogomezalvarado you can check out @albinekb solution.

PS - sorry for sounding noob 🤣 I actually don't have a Node.js environment

Thanks @albinekb @kensoh your solution is similar to what was suggested here : https://stackoverflow.com/questions/49245080/how-to-download-file-with-puppeteer-using-headless-true

Which eventually grabs the event and writes the data into a file ( and page._client isn't required to make this work - don't know why )

What made it work for me was adding:

```
const browser = await puppeteer.launch({
  headless: true
});

browser.on('targetcreated', async (target) => {
  let s = target.url();
  // the test opens an about:blank to start - ignore this
  if (s == 'about:blank') {
    return;
  }
  // unencode the characters after removing the content type
  s = s.replace("data:text/csv;charset=utf-8,", "");
  // clean up string by unencoding the %xx
  ...
  fs.writeFile("/tmp/download.csv", s, function(err) {
    if (err) {
      console.log(err);
      return;
    }
    console.log("The file was saved!");
  });
});

const page = await browser.newPage();
....
```

@antoniogomezalvarado I am not able to get your code working. Do you have a full example you could point me to?

@CanterJ here it is:

#!/usr/bin/env node

// Configs

var fs = require('fs');

// Methods

function downloadTest () {

try {

const puppeteer = require('puppeteer');

(async () => {

const browser = await puppeteer.launch({headless: true});

browser.on('targetcreated', async (target) => {

let s = target.url();

//the test opens an about:blank to start - ignore this

if (s == 'about:blank') {

return;

}

// Strip the content type, then percent-decode the payload and drop carriage returns

s = s.replace("data:application/csv;charset=utf-8,", "");

s = decodeURIComponent(s).replace(/\r/g, "");

//

fs.writeFile("/tmp/download.csv", s, function(err) {

if(err) {

console.log(err);

return;

}

console.log("The file was saved!");

});

});

const page = await browser.newPage();

await page.goto('http://niftyindices.com/resources/holiday-calendar');

await page.click('#exportholidaycalender');

await page.waitFor(5000);

await browser.close();

})();

}

catch (err) {

console.log("ERROR while creating report: " + err);

process.exit(1);

}

}

// Sequence

console.log('Running download test')

downloadTest()

@antoniogomezalvarado You are awesome!! Thank you! FYI, the reason I was having issues is because I was using puppeteer 1.2.0. I had to downgrade to 1.1.1 to get this working.

I'm glad it works @CanterJ :) - puppeteer versioning is indeed very delicate - I'd recommend running these in https://hub.docker.com/r/alekzonder/puppeteer/ which provides all the relevant dependencies without needing to setup the painful dependencies

So is there official puppeteer support for this yet?

@aslushnikov can you help me understand how to implement the functionality that I want, with the puppeteer?

I want to download the file in headless mode (when I use {headless: false} all is OK).

On the page I have the link:

<a target="_blank" href="https://...Response-80-33194866.zip=/"></a>

If I understand correctly, puppeteer should go to the new tab after clicking, download the file, and save it using page._client.send('Page.setDownloadBehavior', {behavior: 'allow', downloadPath: './'})

But I don't know how to implement it.

Hello!

Any news about Page.FileDownloaded event?

@gersonfs I'm sorry this is taking so long. Our initial approach turned out to be quite involved and vastly complicated the Chromium codebase. We're now evaluating a different approach that sounds promising, but there's no ETA. See https://crbug.com/831887

@TomasHubelbauer I was just having the File too long issue on windows 10. I ended up changing my path from c:/somedir/foo to c:\\somedir\\foo\\ and I was able to successfully download a file. Maybe that points you in the right direction. Cheers.

I spent hours poring through this thread and Stack Overflow yesterday, trying to figure out how to get Puppeteer to download a csv file by clicking a download link in headless mode in an authenticated session. This article saved the day. In short, fetch:

const res = await this.page.evaluate(() =>

{

return fetch('https://example.com/path/to/file.csv', {

method: 'GET',

credentials: 'include'

}).then(r => r.text());

});

@mycompassspins what you're doing isn't quite what is being asked about in this thread. You are getting the contents of a remote file using the current session and returning them to the Puppeteer script for processing. The issue here is about triggering a download of a remote file to the local filesystem within the session, without needing to return the contents and save them to the filesystem manually.

I had a similar situation, but more complicated: the site was built with ASP and every action was performed using the same URL. The server would choose what to do on the backend. This meant I couldn’t use anything like the fetch trick above (though that’s a neat one; thanks for the link @mycompassspins!).

What I used to solve it was something like this:

https://gist.github.com/Aankhen/b15132b141fc4c7ee6db0597aba4083c#file-download-ts-L1-L37

I was already using request-promise for something else, so I just continued with that.

@Garbee: true, the thread splintered in a couple different directions, but I thought my experience and solution might help another wayward cyber wanderer or two down the line.

I've created a working (at least for my purpose), 'waitForFileDownload'

https://github.com/kmbang/puppeteer_downFile/blob/master/temp.js

The idea is:

- Use 'Page.setDownloadBehavior' to have the file saved in the folder we want.

- Use CDP (Chrome DevTools Protocol) to catch 'Network.responseReceived'. A file download usually comes with a response header that carries the filename in Content-Disposition, so we know which file to watch for. Record the timestamp at this point.

- Poll the target folder for the file (use a regex, because Chrome sometimes renames the file: -0, -1, etc.), accepting only a file created later than the timestamp from step 2.

- Put a timeout on the entire operation.

So far it seems to be working for me. Any comments or improvements are welcome.
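The polling step described above could look roughly like this. It is only a sketch using Node's fs and path modules: findDownloadedFile and waitForDownloadedFile are hypothetical names, and the accepted rename suffixes ("-1" or " (1)") are an assumption about how Chrome renames duplicates.

```javascript
const fs = require('fs');
const path = require('path');

// Hypothetical helper: one polling pass over the download folder.
// Returns the path of a file named like `expectedName` (optionally with a
// "-1" or " (1)" style suffix before the extension, which is an assumption)
// that was modified at or after `sinceMs`, or null if there is none yet.
function findDownloadedFile(dir, expectedName, sinceMs) {
  const { name, ext } = path.parse(expectedName);
  const escape = (s) => s.replace(/[.*+?^${}()|[\]\\]/g, '\\$&');
  const pattern = new RegExp(`^${escape(name)}(-\\d+| \\(\\d+\\))?${escape(ext)}$`);
  for (const entry of fs.readdirSync(dir)) {
    if (!pattern.test(entry)) continue;
    const fullPath = path.join(dir, entry);
    if (fs.statSync(fullPath).mtimeMs >= sinceMs) return fullPath;
  }
  return null;
}

// Poll until the file shows up or the timeout expires.
async function waitForDownloadedFile(dir, expectedName, sinceMs, timeoutMs = 15000) {
  const deadline = Date.now() + timeoutMs;
  while (Date.now() < deadline) {
    const found = findDownloadedFile(dir, expectedName, sinceMs);
    if (found) return found;
    await new Promise((resolve) => setTimeout(resolve, 200));
  }
  throw new Error(`Timed out waiting for ${expectedName} in ${dir}`);
}
```

Here `sinceMs` would be the Date.now() value recorded when the response was seen, and `expectedName` the filename taken from Content-Disposition.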

You can get the file size and the name of the file from the response, and then use a watch script to check the size of the downloaded file so you know when to close the browser.

And then you can use browser.on('disconnected') to do something else after the download is done.

This is an example:

const filename = <set this with some regex in response>;
const dir = <watch folder or file>;

// Register the response listener before triggering the download
page.on('response', response => {
  const headers = response.headers();
  // If the response carries the expected file
  if (headers['content-disposition'] === `attachment;filename=${filename}`) {
    // Get the size
    const expectedSize = parseInt(headers['content-length'], 10);
    console.log('Size from header: ', expectedSize);
    // Watch the download folder or file
    fs.watchFile(dir, (curr, prev) => {
      // Once the size on disk equals the size from the response, stop watching and close
      if (curr.size === expectedSize) {
        fs.unwatchFile(dir);
        browser.close();
      }
    });
  }
});

// Start the download
await page.click('#DownloadFile');

// Trigger some event when the browser closes.
browser.on('disconnected', async () => {
  <something else>
});

The way of searching the response can certainly be improved, but I hope you'll find this useful.
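One way to make the header check less brittle than an exact string comparison is to parse the filename out of Content-Disposition. This is a rough sketch, not a full RFC 6266 parser; filenameFromContentDisposition is a hypothetical helper name.

```javascript
// Hypothetical helper: pull the file name out of a Content-Disposition value.
// Handles filename="x", filename=x and the extended filename*=UTF-8''x form.
function filenameFromContentDisposition(header) {
  if (!header) return null;
  // Extended form: filename*=charset'lang'percent-encoded-name (takes priority)
  const extended = /filename\*\s*=\s*[\w-]+'[^']*'([^;]+)/i.exec(header);
  if (extended) return decodeURIComponent(extended[1].trim());
  // Plain form, quoted or unquoted
  const plain = /filename\s*=\s*(?:"([^"]*)"|([^;]+))/i.exec(header);
  if (!plain) return null;
  return (plain[1] !== undefined ? plain[1] : plain[2]).trim();
}

console.log(filenameFromContentDisposition('attachment; filename="test.pdf"'));
```

In the response handler this could be called as `filenameFromContentDisposition(response.headers()['content-disposition'])`, with a null result meaning the response is not a download.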

Not sure if my use case is the same as yours, but if you don't provide a path to page.pdf it returns a buffer. You can return this from the endpoint and use it however you want on the client side

Server side

const pdf = await page.pdf({

fullPage: true,

printBackground: true,

});

await browser.close();

res.set({

'Content-Disposition': 'attachment; filename="test.pdf"',

'Content-Type': 'application/pdf'

});

res.send(pdf);

then client side, I used a get request and filesaver.

.get(`/pdf-gen`, {

responseType: 'arraybuffer',

headers: { Accept: 'application/pdf' }

})

.then(response => {

console.log(response);

const blob = new Blob([response.data], {

type: 'application/pdf'

});

saveAs(blob, 'file.pdf');

});

Can someone else add fileDownloadCompleted event to puppeteer.Page or puppeteer.Browser?

I spent hours poring through this thread and Stack Overflow yesterday, trying to figure out how to get Puppeteer to download a csv file by clicking a download link in headless mode in an authenticated session. This article saved the day. In short, fetch:

const res = await this.page.evaluate(() => {
  return fetch('https://example.com/path/to/file.csv', {
    method: 'GET',
    credentials: 'include'
  }).then(r => r.text());
});

Any ideas on how to fetch it if I need to save a binary file, such as .pdf? I'm trying blob() instead of text() and other ideas but so far unsuccessful.

@msprancis : see my solution here

@xprudhomme Thank you, it worked!

@msprancis: You're welcome, happy to help :)
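For readers landing here with the same binary-file question: one approach that should work is to base64-encode the bytes inside the page and decode them in Node, since page.evaluate can only return serializable values. This is a sketch under that assumption, not necessarily the linked solution; fetchBinaryInPage is a hypothetical helper name.

```javascript
// Hypothetical helper: fetch a binary file with the page's own session
// (cookies included) and hand it back to Node as a Buffer.
async function fetchBinaryInPage(page, url) {
  const base64 = await page.evaluate(async (fileUrl) => {
    const response = await fetch(fileUrl, { method: 'GET', credentials: 'include' });
    if (!response.ok) throw new Error(`HTTP ${response.status}`);
    const bytes = new Uint8Array(await response.arrayBuffer());
    // Build a binary string in chunks to stay below argument-count limits
    let binary = '';
    for (let i = 0; i < bytes.length; i += 0x8000) {
      binary += String.fromCharCode.apply(null, bytes.subarray(i, i + 0x8000));
    }
    return btoa(binary); // base64 survives the trip back to Node intact
  }, url);
  return Buffer.from(base64, 'base64');
}
```

The result can then be saved with something like `fs.writeFileSync('file.pdf', await fetchBinaryInPage(page, pdfUrl))`, where `pdfUrl` is whatever URL the download link points to.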

Issue #831887 is fixed now!

I also saw that the chromium issue was marked as fixed. Are there docs on how to use the new feature to download a binary file?

@gersonfs @aslushnikov Is there anything that needs to be done to get this working that is either in progress or one of the community members can submit a PR for?

Well to be honest, I don't really see where there is an issue at this point, everything seems to be working like a charm.

I've even been able to get Puppeteer to download a file, simply by clicking on the "Download file" button that triggers the download process.

It works this way:

function setDownloadBehavior(downloadPath='/tmp/puppeteer/downloads/') {

return page._client.send('Page.setDownloadBehavior', {

behavior: 'allow',

downloadPath

});

}

await setDownloadBehavior();

await page.click(downloadButtonSelector);

If the download button triggers a PDF file download, the PDF ends up in the downloadPath location. In the case above it would be located at '/tmp/puppeteer/downloads/whateverPDFname.pdf'.

Hi @xprudhomme, for me the problem is that I don't know the filename of the downloaded file or when the download has finished. There are workarounds, but they are quite cumbersome. Also, using your snippet without some kind of wait at the end means the file will not be downloaded unless it is super tiny.

Hi @verglor ,

I understand the issues you are dealing with. However, they are easy to overcome.

We can :

- Determine the downloaded file name by catching it from a customized response handler (because even if we don't know the exact file name, at least we know what the requested url looks like)

- Trigger the file download

- Wait for the downloaded file name to be 'ready' / caught, and get it

- Wait for the downloaded file to be 'ready' / written to local storage

With this code:

function responseHandler(response) { // Related to step 1

const url = response.url();

const dlRequestUriPattern = /whatever-pattern\/download/i;

const urlMatchesDownloadRequestURI = dlRequestUriPattern.test(url);

// Only deal with requests matching our file download URL pattern

if(!urlMatchesDownloadRequestURI) {

return;

}

console.log(` [responseHandler] Got response for file download: ${url}`);

let dlFilename = decodeURIComponent(url.replace(/http.+\//g, '').replace(/\?.+/g, ''));

setDLFileName(dlFilename);

}

function setDLFileName(filename) { // Related to step 1

return page.evaluate((name) => {

window._dlFilename_ = name;

}, filename);

}

function waitForDLFileName() { // Related to step 3

return page.waitForFunction(() => (window._dlFilename_ !== null && window._dlFilename_ !== undefined) );

}

function getDLFileName() { // Related to step 3

return waitForDLFileName()

.then( () => page.evaluate( () => window._dlFilename_));

}

/**

* Check if file exists, watching containing directory meanwhile.

* Resolve if the file exists, or if the file is created before the timeout occurs

* @param {string} filePath

* @param {integer} timeout

*/

function checkFileExists(filePath, timeout=15000) { // Related to step 4

return new Promise(function (resolve, reject) {

let timer = setTimeout(function () {

watcher.close();

reject(new Error(' [checkFileExists] File does not exist, and was not created during the timeout delay.'));

}, timeout);

fs.access(filePath, fs.constants.R_OK, function (err) {

if (!err) {

clearTimeout(timer);

watcher.close();

resolve();

}

});

let dir = path.dirname(filePath);

let basename = path.basename(filePath);

let watcher = fs.watch(dir, function (eventType, filename) {

if (eventType === 'rename' && filename === basename) {

clearTimeout(timer);

watcher.close();

resolve();

}

});

});

}

page.on('response', responseHandler); // Step 1

await page.click('whateverDownloadButtonSelector'); // Step 2

const downloadedFilename = await getDLFileName(); // Step 3

// Step 4

const filePath = `/tmp/puppeteer/downloads/${downloadedFilename}`;

await checkFileExists(filePath);

Hi @xprudhomme, thank you for sharing.

As I said, a workaround exists but it is cumbersome.

Mine was the following:

const puppeteer = require('puppeteer')

const expect = require('expect-puppeteer')

const { setDefaultOptions } = require('expect-puppeteer')

setDefaultOptions({ timeout: 5000 })

const fs = require('fs')

const mkdirp = require('mkdirp')

const path = require('path')

const uuid = require('uuid/v1')

async download(page, selector) {

const downloadPath = path.resolve(__dirname, 'download', uuid())

mkdirp(downloadPath)

console.log('Downloading file to:', downloadPath)

await page._client.send('Page.setDownloadBehavior', { behavior: 'allow', downloadPath: downloadPath })

await expect(page).toClick(selector)

let filename = await this.waitForFileToDownload(downloadPath)

return path.resolve(downloadPath, filename)

}

async waitForFileToDownload(downloadPath) {

console.log('Waiting to download file...')

let filename

while (!filename || filename.endsWith('.crdownload')) {

filename = fs.readdirSync(downloadPath)[0]

await sleep(500)

}

return filename

}

@verglor Your solution involving Page.setDownloadBehavior doesn't work for me; the Chromium process hard crashes (native code crash) on macOS.

@xprudhomme The issue with your solution is it's required to know in advance what the URL of the file is. If you have some kind of complicated way of resolving the URL of the file (e.g. the server spits out a URL from an XHR in clientside JS upon clicking a button), you'll not be able to write any meaningful code with a regex for const dlRequestUriPattern = /whatever-pattern\/download/i;.

Also, you should probably parameterize that pattern. What if I want to download two completely separate files in the same script that have nothing to do with one another in terms of their pattern?

This is very unwieldy. +1 for an easy, baked-in way to do this!

I got xprudhomme's solution to work. None of the other solutions worked for me, mostly because the page.on('response' never seemed to show the download.

@allquixotic

If you don't mind, I would kindly remind you that the topic here is "Question: How do I get puppeteer to download a file?", a question I have answered with a working solution.

The question is not "How do I get the best improved solution ever". I agree there is room for improvement, such as parameterizing the download URL regex pattern; that totally makes sense.

The solution works, and not just for me. Unfortunately, when you deal with many different websites, you will always have to first figure out the URL pattern for the files you are trying to download, which always requires a bit of reverse engineering work on the target website.

I guess that your point is, or could be: "How do we get a fully automated way to get Puppeteer to download files for us, without any customization of any kind", which is not the question here from my point of view :)
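Parameterizing the pattern, as discussed above, might look something like this. A sketch only: filenameFromUrl is a hypothetical helper, and it still assumes the last path segment of the URL is the file name.

```javascript
// Hypothetical helper: derive a file name from a response URL, but only when
// the URL matches a caller-supplied pattern, so each download can use its own.
// Pass a pattern without the `g` flag, since test() is stateful with it.
function filenameFromUrl(url, pattern) {
  if (!pattern.test(url)) return null;
  const { pathname } = new URL(url); // drops the query string and hash
  const lastSegment = pathname.split('/').pop();
  return lastSegment ? decodeURIComponent(lastSegment) : null;
}

console.log(
  filenameFromUrl(
    'https://example.com/whatever-pattern/download/my%20file.pdf?token=1',
    /whatever-pattern\/download/i
  )
);
```

A response handler could then be built per download, for example `page.on('response', (r) => { const name = filenameFromUrl(r.url(), pattern); ... })`.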

I did a workaround using puppeteer and axios.

await page.setRequestInterception(true);

const interceptedRequest = await new Promise((resolve) => {

page.goto(downloadLink);

page.on('request', (request) => {

request.abort();

resolve(request);

});

});

const cookies = await page.cookies();

const headers = interceptedRequest._headers;

headers.Cookie = cookies.map(cookie => `${cookie.name}=${cookie.value}`).join(';');

const response = await axios.get(interceptedRequest._url, {

headers,

responseType: 'blob',

});
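The cookie-forwarding step is the part of this workaround that carries the session over to axios. Pulled out on its own, it is roughly the following; toCookieHeader is a hypothetical helper name, and the input is assumed to have the shape returned by page.cookies().

```javascript
// Hypothetical helper: turn the array returned by page.cookies() into a
// Cookie request header value ("name=value" pairs joined by "; ").
function toCookieHeader(cookies) {
  return cookies.map(({ name, value }) => `${name}=${value}`).join('; ');
}

// Made-up cookies, shaped like page.cookies() output.
console.log(toCookieHeader([
  { name: 'session', value: 'abc123' },
  { name: 'lang', value: 'en' },
]));
```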

In my case the server generates a filename dynamically, and I can't predict what the filename will be.

page._client.send('Page.setDownloadBehavior', { ... }

sees all the files, except for the file downloaded by clicking a download link.

@GerryOnGithub: in our case too, we are not able to predict what the filename will be; that's why our solution guesses it: const downloadedFilename = await getDLFileName();

Sorry, the client (browser) is creating the filename and downloading it locally - there is no url.

Node.js, running puppeteer on the server can't see the filename. I think I will have the client write the filename to a hidden div/span and then puppeteer should be able to extract it from the page.

Oh I see, is this some kind of data url ?

This answer works fine for clicking a link and downloading the file. You can try the same thing by using .goto with a specific file URL you created. - https://github.com/GoogleChrome/puppeteer/issues/299#issuecomment-434000021

So instead of clicking a button, you navigate to that URL with .goto, and Chromium should be able to download the file into the defined directory.

This has been something that's been asked of in browserless, so it's now a first-class feature. The overview is:

- You POST a script of application/javascript to run.

- It takes care of making a temporary directory for _just_ that script.

- It executes your script (goto a page, click something, whatever).

- It watches the pre-made temp directory for a file download.

- Once downloaded we resolve the request with that file (including an appropriate content-type).

- The temporary folder and file are deleted from disk.

Quick docs here: https://docs.browserless.io/docs/download.html#docsNav. Would love to hear feedback if something is missing!

@joelgriffith Along the same lines, I am trying to solve another problem: what if I want to use something like AWS Lambda, without any EC2 (or other) Linux instance, so that nothing keeps running when we don't need it?

The only challenge is how to store the file directly on some CDN or AWS S3, so that I don't need the Linux node forever.

Thanks

How do I download a file when the download is triggered by clicking a button? There isn't a URL.

Hi @kselax, you can try my workaround - you need just selector to click.

Hi, @kselax

await page.setRequestInterception(true);

const interceptedRequest = await new Promise((resolve) => {

page.click(YOUR_CUSTOM_SELECTOR_HERE);

page.on('request', (request) => {

request.abort();

resolve(request);

});

});

const cookies = await page.cookies();

const headers = interceptedRequest._headers;

headers.Cookie = cookies.map(cookie => `${cookie.name}=${cookie.value}`).join(';');

const response = await axios.get(interceptedRequest._url, {

headers,

responseType: 'blob',

});

@gufranco Thanks for your code, it worked, but I still have two questions:

1) I had to filter out, with request._url, some tracker urls which trigger, so that they don't resolve

2) How can I get back to the un-intercepted state afterwards? I tried adding

await page.setRequestInterception(false);

after the axios.get but my page.goto("logout-url") still gets intercepted?

@leotulipan

You have to specify request.continue()

Well to be honest, I don't really see where there is an issue at this point, everything seems to be working like a charm.

I've even been able to get Puppeteer to download a file, simply by clicking on the "Download file" button that triggers the download process.

It works this way:

function setDownloadBehavior(downloadPath='/tmp/puppeteer/downloads/') {
  return page._client.send('Page.setDownloadBehavior', {
    behavior: 'allow',
    downloadPath
  });
}

await setDownloadBehavior();

await page.click(downloadButtonSelector);

If the download button triggers a PDF file download, then you will end up with this PDF file being downloaded at the downloadPath location, in the case above it would be located at '/tmp/puppeteer/downloads/whateverPDFname.pdf'

I am doing the same thing, but I cannot get the excel file to be downloaded to the folder in my node js project, it only downloads the file to the downloads directory when I run the script in non-headless mode. Is there any way you can help?

@leotulipan

You have to specify request.continue()

But where do I actually put that continue? I tried doing it in an else but I get Error: Request is already handled!

Here's my code so far. Note: Download works perfectly, but the code doesn't continue past the writeFileSync

await page.setRequestInterception(true)

const interceptedRequest = await new Promise((resolve) => {

page.mouse.click(xy[0], xy[1])

// CHANGE THIS. SEE BELOW

page.on('request', (request) => {

request.abort()

console.log('Intercepted ' + request._url.substr(0, 50))

if (request._url !== 'https://www.etracker.de/api/tracking/v5/webEvents') {

resolve(request)

}

// doesnt work here

// else {

// request.continue()

// }

})

})

// CHANGE END

const cookies = await page.cookies()

const headers = interceptedRequest._headers

headers.Cookie = cookies.map(cookie => `${cookie.name}=${cookie.value}`).join(';')

const response = await axios.get(interceptedRequest._url, {

headers,

responseType: 'text'

})

try {

fs.writeFileSync('data.csv', response.data)

} catch (err) {

console.error(err)

}

so, thanks to xprudhomme's comment below, I changed the page.on('request') part like this and it works perfectly now:

page.on('request', (interceptedRequest) => {

console.log('interceptedRequest.url(): ' + interceptedRequest.url())

if (interceptedRequest.url().endsWith('.csv') ||

interceptedRequest.url().startsWith('https://example.com/DownloadCsvFile')) {

interceptedRequest.abort()

console.log('Intercepted + downloading: ' + interceptedRequest.url().substr(0, 50))

resolve(interceptedRequest)

} else {

if (!interceptedRequest.url().startsWith('https://www.etracker.de/api/tracking/v5/webEvents')) {

interceptedRequest.continue()

}

}

})

@leotulipan you cannot use both request.abort() and request.continue(), it can only be either one.

As per what the doc says:

Activating request interception enables request.abort, request.continue and request.respond methods. This provides the capability to modify network requests that are made by a page.

Once request interception is enabled, every request will stall unless it's continued, responded or aborted

For instance:

const puppeteer = require('puppeteer');

puppeteer.launch().then(async browser => {

const page = await browser.newPage();

await page.setRequestInterception(true);

page.on('request', interceptedRequest => {

if (interceptedRequest.url().endsWith('.png') || interceptedRequest.url().endsWith('.jpg'))

interceptedRequest.abort();

else

interceptedRequest.continue();

});

await page.goto('https://example.com');

await browser.close();

});

In your code, the first thing you do is a straight request.abort(), meaning the request has already been handled at that point. Then you try to call request.continue(), which cannot work since you have already aborted the request.

The right mechanism is an if/else block: call request.continue() if some condition is met, otherwise call request.abort().

You should not use it the way you did, but rather as in the example above.
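Putting the if/else rule together with the earlier question about getting back to the un-intercepted state, a sketch could look like this. interceptFirstMatch is a hypothetical helper; it relies only on page.on/page.off and on request.url(), request.abort() and request.continue().

```javascript
// Hypothetical helper: resolve with the first request whose URL satisfies
// `matches`, aborting only that one and letting every other request continue.
// The listener removes itself once the match is found.
function interceptFirstMatch(page, matches) {
  return new Promise((resolve) => {
    const handler = async (request) => {
      try {
        if (matches(request.url())) {
          page.off('request', handler); // stop handling further requests
          resolve(request);
          await request.abort();        // exactly one of abort/continue per request
        } else {
          await request.continue();
        }
      } catch (err) {
        // The request was already handled elsewhere; nothing more to do.
      }
    };
    page.on('request', handler);
  });
}

// Usage sketch (selector and URL test are placeholders):
//   await page.setRequestInterception(true);
//   const pending = interceptFirstMatch(page, (url) => url.endsWith('.csv'));
//   await page.click('#download');
//   const request = await pending;
//   await page.setRequestInterception(false); // later navigations are no longer stalled
```

Turning interception off right after the match matters here: once the listener is removed, any request that still arrives while interception is on would stall.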

Chromium crashes when setDownloadBehavior is used to download files that open in a new window. If the download starts on the same page, everything is fine and the file is saved to the folder. Is this a bug, or are there any solutions?

const puppeteer = require('puppeteer');

const path = require('path');

const DOWNLOAD_PATH = path.resolve(__dirname, 'downloads');

(async() => {

const browser = await puppeteer.launch({

headless: false,

dumpio: true,

});

const page = await browser.newPage();

const client = await page.target().createCDPSession();

await client.send('Page.setDownloadBehavior', {

behavior: 'allow',

downloadPath: DOWNLOAD_PATH

});

// example site that start download in new window

await page.goto('https://turnkeyinternet.net/speed-test/', {waitUntil: 'networkidle2'});

})();

Here is the dumpio

[0227/222012.378:ERROR:process_memory_win.cc(73)] ReadMemory at 0x268de00025c of 512 bytes failed: Only part of a ReadProcessMemory or WriteProcessMemory request was completed. (0x12B)

@verglor Dear verglor,

how may I use your snippet? I'm getting SyntaxError: Unexpected identifier at the line defining the function async download(page, selector) {

@chazanov That looks like you're executing in an environment that doesn't natively support async/await. Node.js has supported it natively since v7.6.

So, you'll likely need to either upgrade your version of Node.js or transpile it using something like Babel.
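Another possible cause, even on a recent Node.js: `async download(page, selector) {` is method shorthand, which only parses inside a class or object literal. Pasted at the top level of a file it also fails with "SyntaxError: Unexpected identifier". A minimal sketch of the waiting half of the snippet as a standalone function; the `sleep` helper is an assumption, since the original snippet calls it without defining it.

```javascript
const fs = require('fs');

// Stand-in for the `sleep` the snippet calls but never defines (an assumption).
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Same loop as the method in the snippet, declared with the `function`
// keyword so it parses outside a class body.
async function waitForFileToDownload(downloadPath) {
  let filename;
  while (!filename || filename.endsWith('.crdownload')) {
    filename = fs.readdirSync(downloadPath)[0];
    await sleep(100);
  }
  return filename;
}
```

The `download` method can be converted the same way, replacing `this.waitForFileToDownload(...)` with a direct call to the function.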

The file URL can always be guessed somehow. Even if there is no hard-coded link within the HTML source code, it's easy to find what it is, for example simply by looking in the Chrome download manager.

@xprudhomme it's more than just knowing the URL. You also have to know what request body is submitted as well (if any). Yes, I could also figure that out, but if I wanted to write/develop my own simulation of a browser, I wouldn't be using Puppeteer.

Self-contained, working example (thanks @verglor)

const fs = require('fs');

const path = require('path');

const puppeteer = require('puppeteer');

const util = require('util');

// set up, invoke the function, wait for the download to complete

async function download(page, f) {

const downloadPath = path.resolve(

process.cwd(),

`download-${Math.random()

.toString(36)

.substr(2, 8)}`,

);

await util.promisify(fs.mkdir)(downloadPath);

console.error('Download directory:', downloadPath);

await page._client.send('Page.setDownloadBehavior', {

behavior: 'allow',

downloadPath: downloadPath,

});

await f();

console.error('Downloading...');

let fileName;

while (!fileName || fileName.endsWith('.crdownload')) {

await new Promise(resolve => setTimeout(resolve, 100));

[fileName] = await util.promisify(fs.readdir)(downloadPath);

}

const filePath = path.resolve(downloadPath, fileName);

console.error('Downloaded file:', filePath);

return filePath;

}

// example usage

(async function() {

const browser = await puppeteer.launch({ headless: true });

try {

const page = await browser.newPage();

await page.goto(

'http://file-examples.com/index.php/text-files-and-archives-download/',

{ waitUntil: 'domcontentloaded' },

);

const path = await download(page, () =>

page.click(

'a[href="http://file-examples.com/wp-content/uploads/2017/02/file_example_CSV_5000.csv"]',

),

);

const { size } = await util.promisify(fs.stat)(path);

console.log(path, `${size}B`);

} finally {

await browser.close();

}

})().catch(e => {

console.error(e.stack);

process.exit(1);

});

Download directory: /home/paul/test/download-7al32vrb

Downloading...

Downloaded file: /home/paul/rivet/test/download-7al32vrb/file_example_CSV_5000.csv

/home/paul/test/download-7al32vrb/file_example_CSV_5000.csv 284042B

Yes you are right, things have evolved a lot since my initial proposed solution back in 2017.

@verglor 's solution is perfect : )

On Windows, Page.setDownloadBehavior does not work if downloadPath uses forward slashes.

@char101 it is recommended to use path.resolve to solve OS path issues
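To illustrate what path.resolve does with such a path, here is a small sketch. It uses path.win32 so the Windows behavior is visible on any platform; on Windows itself, plain path.resolve applies the same rules. The directory name is just the example from earlier in the thread.

```javascript
const path = require('path');

// Forward slashes are normalized to backslashes under Windows path rules.
const downloadPath = path.win32.resolve('c:/somedir/foo');
console.log(downloadPath); // c:\somedir\foo
```

The resolved value is what would be passed as `downloadPath` to Page.setDownloadBehavior.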

Does anyone have best-practices for handling downloads that occur in newly popped-up tab? I believe these don't respect the downloadPath set previously because they're in a new page.

In my case

- navigate to a URL

- click on a link to download (this click invokes some JS)

- a new tab pops-up, and the download starts there

I could certainly construct a fetch request instead, but would love to leverage "click()" since it would be much simpler to simulate the actual user interaction.

My issue is similar to this Stack Overflow question. Based on their input I plan to try targetcreated event handling. I'm not yet sure whether I'll be able to set the downloadPath for the new page before the actual download begins. We'll see!

Thank you all for the discussion and great examples.

cc @verglor @pauldraper

Quick update: I haven't had any success with targetcreated, as it doesn't seem to fire this event. So I am fetch-ing the URL directly.

// url must be passed in explicitly: Node variables are not visible inside the page
await page.evaluate((url) => {
  return fetch(url, {
    method: "GET",
    credentials: "include"
  }).then(r => r.text());
}, url);

I also found another puppeteer issue that was similar (https://github.com/GoogleChrome/puppeteer/issues/2895)... I explored using page.goto to avoid the pop-up, and this does work and gets the file into the specified download path, however I have to swallow the ERR_ABORTED error
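Swallowing only that one error, and nothing else, could be done along these lines. This is a sketch: gotoDownload is a hypothetical helper, and matching on the 'net::ERR_ABORTED' text in the error message is an assumption about how the rejection is reported.

```javascript
// Hypothetical helper: navigate straight to a file URL and ignore the
// ERR_ABORTED rejection reported once the navigation turns into a download.
async function gotoDownload(page, url) {
  try {
    await page.goto(url);
  } catch (err) {
    if (!String(err && err.message).includes('net::ERR_ABORTED')) {
      throw err; // anything else is a real failure
    }
  }
}
```

The download directory still has to be set beforehand, and the script still has to wait for the file to appear on disk before closing the browser.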

Yes, you definitely have to catch the targetcreated event to learn the URL of the resource you need to download, but you also need to catch the response event on the page, like below:

function downloadFile(resourceUrl)

{

const file = fs.createWriteStream(path.resolve(__dirname, 'ExcelFile.xls'));

const request = http.get(resourceUrl, function(res) {

var data = [];

res.on('data', function(chunk) {

data.push(chunk);

}).on('end', function() {

//at this point data is an array of Buffers

//so Buffer.concat() can make us a new Buffer

//of all of them together

var buffer = Buffer.concat(data);

file.write(buffer);

file.end();

console.log('File saved.');

});

});

}

page.on('response', async resp => {

// if you are trying to download excel file, you need to enter the extension of the file to check to see if the response has the file type you need.

if(resp.url().indexOf(".xls") != -1)

{

downloadFile(resp.url());

}

});

browser.on('targetcreated', async (target) => {

// this line below gets the url for the resource, you need to download

let resourceUrl = target.url();

await page.goto(resourceUrl); // go to this resource url, so that the page gets response event.

page.waitFor(10000);

});

@nathanleiby I'm also facing a similar problem, where the download starts in a new tab on click. Can you elaborate on how you are using fetch as a workaround for this?

Thanks

As in the self-contained examples, when a new tab is opened for the download we need to call Page.setDownloadBehavior for that new page. The snippet below sets the download path on the new page before the file starts downloading. This works completely for me.

Insert this code after the browser has been created.

await browser.on('targetcreated', async () =>{

const pageList = await browser.pages();

console.log(pageList.length);

pageList.forEach((page) => {

page._client.send('Page.setDownloadBehavior', {

behavior: 'allow',

downloadPath: OUTDIR,

});

});

});
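A variation that targets only the newly created page, rather than re-sending the command to every open page, might look like this. It is a sketch: allowDownloadsOnNewPages is a hypothetical helper, and whether the command reaches the new tab before its download starts is not guaranteed.

```javascript
// Hypothetical helper: apply the download directory to each page target as it
// is created, instead of looping over browser.pages() every time.
function allowDownloadsOnNewPages(browser, downloadPath) {
  browser.on('targetcreated', async (target) => {
    if (target.type() !== 'page') return; // skip workers and other target types
    try {
      const client = await target.createCDPSession();
      await client.send('Page.setDownloadBehavior', {
        behavior: 'allow',
        downloadPath,
      });
    } catch (err) {
      // The tab may already have closed itself after starting the download.
      console.error('Could not set download behavior:', err.message);
    }
  });
}
```

It would be called once, right after puppeteer.launch(), for example `allowDownloadsOnNewPages(browser, OUTDIR)`.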

I have an issue too. When the app is in headless mode, the files don't download at all by clicking on some links. But when I set "headless: false", it works fine.

"puppeteer": "^1.17.0"

Hej,

The code above works well, thanks @pauldraper

How would you get the url that the file was downloaded from?

In my case, I use page.click() and it opens new tab where download is fired automatically with latter tab closing. I need to get the url puppeteer accessed to get the file. I've checked request.url() using setRequestInterception as shown below, but I've got different url.

const filePath = await downloadFile(page, browser, () => page.click('#selector'));

await page.setRequestInterception(true);

const promise = new Promise(resolve => {

page.on('request', request => {

const url = request.url();

console.log(url);

request.continue();

});

});

Any ideas?

Thank you

Hello

In the last lines of my code, when Puppeteer clicks the link, a new tab opens and the download starts there. But the file is saved to the default downloads folder, not to my folder.

async function main() {

const browser = await puppeteer.launch({ headless: false });

const page = await browser.newPage();

await page.setViewport({ width: 1366, height: 800 });

await page.goto(endpoint, { waitUntil: "networkidle2", timeout: 0 });

await page.waitFor(3000);

await page.type("#username", login);

await page.type("#password", pass);

// click and wait for navigation (start waiting before the click so the navigation is not missed)

await Promise.all([

page.waitForNavigation({ waitUntil: "networkidle2" }),

page.click("#login"),

]);

await page.waitFor(2000);

await page.waitForXPath('//*[@id="ctl00_ctl00_masterMain_cphMain_repViewable_ctl01_thisItemLink"]');

const [setting] = await page.$x('//*[@id="ctl00_ctl00_masterMain_cphMain_repViewable_ctl01_thisItemLink"]');

if (setting) setting.click();

await page.waitFor(3000);

let pages = await browser.pages();

await page.waitFor(2000);

await pages[2].waitForXPath('/html/body/table/tbody/tr[17]/td[6]/a');

const [newVID] = await pages[2].$x('/html/body/table/tbody/tr[17]/td[6]/a');

if (newVID) newVID.click();

await pages[2].waitFor(3000)

await pages[2].close()

await pages[2].waitFor(5000)

pages = await browser.pages()

for (const page of pages) {

console.log(page.url()) // new page now appear!

}

await pages[2].waitForXPath('//*[@id="freeze"]/table/tbody/tr[2]/td/table/tbody/tr[3]/td/a');

const [neww] = await pages[2].$x('//*[@id="freeze"]/table/tbody/tr[2]/td/table/tbody/tr[3]/td/a');

if (neww) neww.click();

await pages[2].waitFor(4000)

await pages[2].waitFor(4000)

await pages[2].waitForXPath('/html/body/div/table/tbody/tr[3]/td/a');

const [fin] = await pages[2].$x('/html/body/div/table/tbody/tr[3]/td/a');

if (fin) fin.click();

await browser.on('targetcreated', async () =>{

const pageList = await browser.pages();

console.log(pageList.length);

pageList.forEach((page) => {

page._client.send('Page.setDownloadBehavior', {

behavior: 'allow',

downloadPath: './',

});

});

});

}

main()

@pauldraper Thank you, this is the only code that worked for me. I would suggest the following change:

[fileName] = await util.promisify(fs.readdir)(downloadPath);

to

fileName = (await util.promisify(fs.readdir)(downloadPath)).pop();

When dealing with large files, Chrome takes time to rename file.zip.crdownload to file.zip. Because the directory listing is sorted lexically, file.zip comes first, so the loop can exit once file.zip has been created but before it is complete, which means the file is not actually available to the code that follows. With .pop(), the check always looks at the last file in the list.
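Rather than relying on the position of a name in the listing, the check can filter on the `.crdownload` suffix explicitly. The following is a minimal sketch under the assumption that Chrome marks in-progress files with that suffix, as described in this thread; `completedDownloads` and `hasPendingDownloads` are hypothetical helper names.

```javascript
const fs = require('fs');

// Sketch: list finished downloads, ignoring Chrome's in-progress
// ".crdownload" files. `dir` is the download directory.
function completedDownloads(dir) {
  return fs.readdirSync(dir).filter((name) => !name.endsWith('.crdownload'));
}

// True while Chrome is still writing at least one file into `dir`.
function hasPendingDownloads(dir) {
  return fs.readdirSync(dir).some((name) => name.endsWith('.crdownload'));
}
```

A wait loop can then continue while `hasPendingDownloads(dir)` is true or `completedDownloads(dir)` is empty.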

In my case

navigate to a URL

click on a link to download (this click invokes some JS)

a new tab pops-up, and the download starts there

Similar to self contained examples, when new tab is opened to download we need to Page.setDownloadBehavior for that new page. Below snippet will set download_path in new page before file gets downloading. This works for me completely.

Insert this code after the browser been created.

await browser.on('targetcreated', async () =>{
  const pageList = await browser.pages();
  console.log(pageList.length);
  pageList.forEach((page) => {
    page._client.send('Page.setDownloadBehavior', {
      behavior: 'allow',
      downloadPath: OUTDIR,
    });
  });
});

I couldn't get this to work, but in my particular case I just modified the anchor directly. Instead of opening in _blank, I just emptied the target attribute and it worked.

await page.$eval('.downloadLink', el => el.target = '')
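The same idea can be applied to every link on the page at once. This is a sketch only: `forceSameTab` is a hypothetical helper, the default selector is an assumption, and it will not affect tabs opened from JavaScript via `window.open`.

```javascript
// Sketch: make every link that would open a new tab open in the current
// one instead. `page` is assumed to be a Puppeteer Page; the selector
// default is illustrative.
async function forceSameTab(page, selector = 'a[target="_blank"]') {
  await page.$$eval(selector, (links) => {
    links.forEach((link) => link.removeAttribute('target'));
  });
}
```

Call `await forceSameTab(page)` after the page has loaded and before clicking the download link.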

The only way I've been able to download a file is if I know the URL beforehand and call fetch in an evaluate. downloadUrl is a string with the URL of the file you want to download.

const downloadedContent = await page.evaluate(async downloadUrl => {
  const fetchResp = await fetch(downloadUrl, {credentials: 'include'});
  return await fetchResp.text();
}, downloadUrl);

You can then use downloadedContent to write to a file.

I'm using the following code, for storing a small PDF into a buffer:

let myPDF = await page.evaluate(

async function () {

const _URI = await ( document.querySelector('form').action + '?' + Array.from(document.querySelectorAll('form input')).filter((el) => (el.type != 'submit' || (el.type == 'submit' && el.value == 'Imprimir'))).map( el => (el.name + '=' + el.value)).join('&') );

const _response = await fetch(_URI);

const _PDF = await new Uint8Array(await _response.arrayBuffer());

if (await !_response.ok) throw 'RESPONSE NOT OK';

if (await _response.status != await 200) throw 'RESPONSE NOT 200';

if (await _response.headers.get("content-length") != await _PDF.byteLength) throw 'SIZE ERROR'

return await btoa(String.fromCharCode(..._PDF));

}

);

myPDF = await Buffer.from(myPDF, 'base64');
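The snippet above is described as working for a small PDF. For larger files, spreading the whole array into `String.fromCharCode(...)` may fail with a RangeError, since engines limit how many arguments a call can take (the exact limit is engine-dependent, so treat this as an assumption). A chunked conversion, sketched below with the hypothetical name `bytesToBinaryString`, avoids the single huge call.

```javascript
// Sketch: convert bytes to a "binary string" in chunks so that no single
// String.fromCharCode call receives too many arguments.
// `chunkSize` is an illustrative default.
function bytesToBinaryString(bytes, chunkSize = 0x8000) {
  let out = '';
  for (let i = 0; i < bytes.length; i += chunkSize) {
    out += String.fromCharCode.apply(null, bytes.subarray(i, i + chunkSize));
  }
  return out;
}
```

To use it inside `page.evaluate`, the function has to be defined within the evaluated function, because code passed to `evaluate` runs in the browser and cannot see Node-side helpers. The result can then be passed to `btoa` as before.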

Hej,

The code above works well, thanks @pauldraper

How would you get the url that the file was downloaded from?

In my case, I use page.click() and it opens new tab where download is fired automatically with latter tab closing. I need to get the url puppeteer accessed to get the file. I've checked request.url() using setRequestInterception as shown below, but I've got different url.

const filePath = await downloadFile(page, browser, () => page.click('#selector'));
await page.setRequestInterception(true);
const promise = new Promise(resolve => {
  page.on('request', request => {
    const url = request.url();
    console.log(url);
    request.continue();
  });
});

Any ideas?

Thank you

Use

page.on('popup', async (request) => {

console.log(request.target().url())

});

Or something of that nature - and I was able to get the link of the new tab it was opening. Still working on the complete solution myself, though. :/

I found a solution that works for me. When clicking a link opens a new page with an embedded PDF, I first take the target URL of the "popup" page and push it to an array, which I later navigate to with page.goto().

page.on('popup', async (request) => {

if (request.target().url().startsWith('pdf.url')) {

responses.push(request.target().url())

} else {

return;

}

});

Later I use page.goto(responses[index]) in some loop to access the page. Since that sends a new request - I capture it using:

const rp = require('request-promise');

const fs = require('fs');

await page.setRequestInterception(true);

page.on('request', async (request) => {

if (request.url().startsWith('urlofPDF')) {

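// use the public accessors instead of the private _method/_url/_postData/_headers fields

const options = {

method: request.method(),

uri: request.url(),

body: request.postData(),

headers: request.headers(),

encoding: "binary"

}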

let cookies = await page.cookies();

options.headers.Cookie = cookies.map(ck => ck.name + '=' + ck.value).join(';');

let rand = Math.floor((Math.random() * 100) + 1)

rp(options).then(function (body) {

fs.writeFile(`./myfile${rand}.pdf`, body, "binary", (err) => { console.log(err) })

}).catch(err => { })

request.abort();

} else {

request.continue()

}

});

Which basically intercepts the request, copies it, then sends it again using another library and I get back my PDF response.
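The "copy the request" step can be isolated into a small function, which makes it easier to check on its own. This is a sketch: `buildReplayOptions` is a hypothetical name, and it assumes the request library accepts `encoding: null` to return the body as a Buffer (as `request` and `request-promise` do), so that the file can be written without a string encoding.

```javascript
// Sketch: build options for replaying an intercepted request outside the
// browser. `request` is assumed to be a Puppeteer request object and
// `cookies` the array returned by page.cookies().
function buildReplayOptions(request, cookies) {
  // Copy the headers so the intercepted request is not mutated.
  const headers = Object.assign({}, request.headers());
  if (cookies.length > 0) {
    headers.Cookie = cookies.map((ck) => `${ck.name}=${ck.value}`).join('; ');
  }
  return {
    method: request.method(),
    uri: request.url(),
    body: request.postData(),
    headers,
    encoding: null, // ask the request library for a Buffer, not a string
  };
}
```

Inside the `request` handler this would be used as `rp(buildReplayOptions(request, await page.cookies()))`, followed by `fs.writeFile(path, body, callback)`.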

If the link doesn't open a new page but starts the download directly, just setting the request interception handler to send a new request (exactly as in my example above) will download the file to a specified directory. This has been doing wonders for me.

Page.FileDownloaded

any news?

Hi @xprudhomme, thank you for sharing.

As I said, a workaround exists but is cumbersome.

Mine was the following:

const puppeteer = require('puppeteer')
const expect = require('expect-puppeteer')
const { setDefaultOptions } = require('expect-puppeteer')
setDefaultOptions({ timeout: 5000 })
const fs = require('fs')
const mkdirp = require('mkdirp')
const path = require('path')
const uuid = require('uuid/v1')

async download(page, selector) {
  const downloadPath = path.resolve(__dirname, 'download', uuid())
  mkdirp(downloadPath)
  console.log('Downloading file to:', downloadPath)
  await page._client.send('Page.setDownloadBehavior', { behavior: 'allow', downloadPath: downloadPath })
  await expect(page).toClick(selector)
  let filename = await this.waitForFileToDownload(downloadPath)
  return path.resolve(downloadPath, filename)
}

async waitForFileToDownload(downloadPath) {
  console.log('Waiting to download file...')
  let filename
  while (!filename || filename.endsWith('.crdownload')) {
    filename = fs.readdirSync(downloadPath)[0]
    await sleep(500)
  }
  return filename
}
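One detail in the helper above: `mkdirp(downloadPath)` is called without waiting for it, and depending on the mkdirp version the directory may not exist yet when the next line runs (an assumption, since the thread does not state the version). A sketch using only Node built-ins is shown below; `makeDownloadDir` is a hypothetical name, and `fs.mkdirSync` with `recursive: true` needs Node 10.12 or later.

```javascript
const crypto = require('crypto');
const fs = require('fs');
const path = require('path');

// Sketch: create a fresh, uniquely named download directory and return
// its absolute path. `baseDir` is an assumed parent directory.
function makeDownloadDir(baseDir) {
  const dir = path.resolve(baseDir, crypto.randomBytes(8).toString('hex'));
  fs.mkdirSync(dir, { recursive: true }); // finishes before returning
  return dir;
}
```

Using one directory per download also keeps the "first file in the directory" check unambiguous.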

Hi @verglor & thank you for your solution, very helpful.

Sometimes I do have a file that is not online anymore, and then the download is stuck because the resource does not exist anymore. Would you have a simple solution to deal with this case ?

I imported the system-sleep library to handle the sleep(500).

Is that my mistake?

Thanks.

This often hangs. I replaced it with page.waitFor.
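A possible cause of the hang, offered as an assumption rather than a diagnosis: if the imported sleep is synchronous, it blocks Node's event loop while waiting. A promise-based sleep avoids that, and adding a deadline also covers the case raised earlier where the file no longer exists and the download never completes. The sketch below uses hypothetical names (`waitForDownload`, `timeoutMs`, `pollMs`) and assumes the directory is used for a single download.

```javascript
const fs = require('fs');

// Non-blocking sleep: yields to the event loop while waiting.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Sketch: poll `dir` until it holds a finished download and no
// ".crdownload" file, or give up after `timeoutMs`.
async function waitForDownload(dir, { timeoutMs = 30000, pollMs = 500 } = {}) {
  const deadline = Date.now() + timeoutMs;
  while (Date.now() < deadline) {
    const names = fs.readdirSync(dir);
    const pending = names.some((n) => n.endsWith('.crdownload'));
    const done = names.filter((n) => !n.endsWith('.crdownload'));
    if (!pending && done.length > 0) return done[0];
    await sleep(pollMs);
  }
  throw new Error(`No completed download in ${dir} after ${timeoutMs} ms`);
}
```

The caller can catch the timeout error and skip the missing file instead of waiting forever.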

In case someone is looking for code to download a file given the URL in Puppeteer, here's what works for me: (from https://github.com/ndabas/WhatDidIBuy/blob/master/lib/utils.js)

/**

* @param page { import("puppeteer").Page }

* @param {RequestInfo} input

* @param {RequestInit=} init

*/

exports.downloadBlob = async (page, input, init) => {

const data = await page.evaluate(async (input, init) => {

const resp = await window.fetch(input, init);

if (!resp.ok)

throw new Error(`Server responded with ${resp.status} ${resp.statusText}`);

const data = await resp.blob();

const reader = new FileReader();

return new Promise(resolve => {

reader.addEventListener('loadend', () => resolve({

url: reader.result,

mime: resp.headers.get('Content-Type')

}));

reader.readAsDataURL(data);

});

}, input, init);

return { buffer: Buffer.from(data.url.split(',')[1], 'base64'), mime: data.mime };

};

The downloadBlob function executes window.fetch in the browser context to do the business. The input and init parameters are the parameters to that function, as documented here. The function will return an object with the buffer and the mime type.
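The last line of downloadBlob relies on the shape of the data URL that `FileReader.readAsDataURL` produces. A slightly more defensive version of that decoding step might look like the sketch below; `parseDataUrl` is a hypothetical helper, not part of the linked repository.

```javascript
// Sketch: split a base64 data URL (as produced by
// FileReader.readAsDataURL) into its MIME type and raw bytes.
function parseDataUrl(dataUrl) {
  const comma = dataUrl.indexOf(',');
  if (!dataUrl.startsWith('data:') || comma === -1) {
    throw new Error('Not a data URL');
  }
  const meta = dataUrl.slice(5, comma); // e.g. "application/pdf;base64"
  if (!meta.endsWith(';base64')) throw new Error('Expected base64 data');
  return {
    mime: meta.slice(0, -';base64'.length),
    buffer: Buffer.from(dataUrl.slice(comma + 1), 'base64'),
  };
}
```

The returned `buffer` can be written to disk directly, for example with `fs.writeFileSync(filePath, buffer)`, where `filePath` is whatever destination the caller chooses.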

After pressing download button

const myDownloadPath = path.resolve(${Directory Where you want to download})

mkdirp(myDownloadPath)

await page._client.send('Page.setDownloadBehavior', {behavior: 'allow', downloadPath: myDownloadPath });

imported lib:

const mkdirp = require('mkdirp')

const path = require('path')

Hi all