PIConGPU: cudaErrorIllegalAddress

Hello,

Here is an error that I just got when running the attached files on a single GPU. I am using the development version, installed a few weeks ago. My feeling is that it has something to do with the arrangement of particles inside the grid cells, because the error appeared after setting the following in grid.param:

constexpr double SIDEX = 32.81e-09;

constexpr double SIDEY = 47.16e-09;

constexpr double SIDEZ = 37.88e-09;

instead of allowing the code to compute the three simulation domain sides as

constexpr double SIDEX = XA-XE;

constexpr double SIDEY = hTL + mYL;

constexpr double SIDEZ = ZO-ZF;

Please have a look.

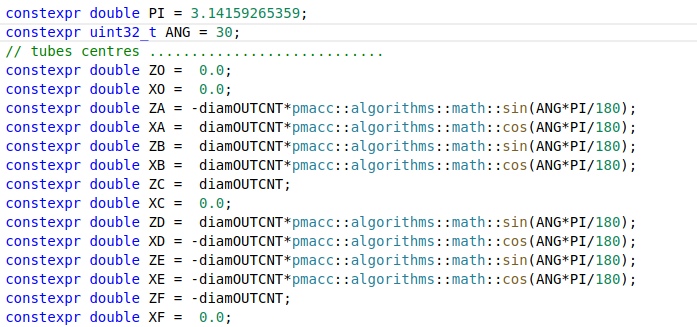

If you review the code, please let me know how I can use power and trigonometric functions in density.param, because it is silly to work with constexpr double COS30 = 0.86602540378; and (z - ZOf)*(z - ZOf). My attempts to use the cmath:: syntax failed, probably because of the CUDA-specific code, and each new attempt shows the error only after a few minutes of compilation, so I stopped investigating this problem.

All 8 comments

Sorry, I got confused and deleted the previous message; this is unrelated to #3202.

Yes, this seems to be a different error, but it may be related at a deeper level.

@cbontoiu I have not looked at the essence of the issue yet. Regarding the math functions question: you generally cannot use any of the C++ standard library on the device side (only the language itself). We provide wrappers for math functions that work on both the host and device sides; these should be available as pmacc::algorithms::math::cos( x ); and similarly for other common math functions.

Thank you,

I tried, but I get this error. What can it be?

function call must have a constant value in a constant expression


The math functions in PIConGPU/PMacc cannot be used for constexpr evaluation. For constant expressions you can use functions from std::, provided they are evaluated at compile time.
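To make the constexpr point concrete, here is a minimal sketch of one way to avoid hard-coded constants such as COS30 without calling a non-constexpr function. It assumes C++14 or newer. The helper names `square` and `cosTaylor` and the value given to `ZOf` are hypothetical and are not part of PIConGPU or PMacc. Note that in standard C++ up to C++20 `std::cos` is not specified as constexpr, although some compilers evaluate it at compile time as an extension, so a small hand-written constexpr helper is the more portable choice.

```cpp
#include <cassert>

// Hypothetical helper: replaces the repeated (z - ZOf) * (z - ZOf) pattern.
constexpr double square(double x)
{
    return x * x;
}

// Hypothetical helper: cosine from its Taylor series around 0.
// Ten terms are more than enough for small arguments such as pi/6.
constexpr double cosTaylor(double x)
{
    double term = 1.0; // current series term, starts at x^0 / 0!
    double sum = 1.0;
    for(int n = 1; n <= 10; ++n)
    {
        // next term: multiply by -x^2 / ((2n - 1) * (2n))
        term *= -x * x / ((2 * n - 1) * (2 * n));
        sum += term;
    }
    return sum;
}

constexpr double PI = 3.14159265358979323846;

// Evaluated by the compiler, so it is valid in a constant expression.
constexpr double COS30 = cosTaylor(PI / 6.0);
static_assert(COS30 > 0.8660254 && COS30 < 0.8660255, "COS30 out of range");

// ZOf is a name from the original post; its value here is made up.
constexpr double ZOf = 1.0e-9;
constexpr double DZ2 = square(3.0e-9 - ZOf);

// Run-time check of the same values, for illustration only.
inline void checkExamples()
{
    assert(square(3.0) == 9.0);
    assert(DZ2 > 3.9e-18 && DZ2 < 4.1e-18);
}
```

Values that depend on run-time quantities, such as a cell position `z`, cannot be `constexpr`. For those, the device-side wrappers mentioned above are the appropriate tool, assigned to an ordinary `const` variable.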


Could you please post the full output file? I assume that you are running out of memory when you change the density of your target. I am not sure which target you use, but I think that with the changed density you will get more macroparticles in regions where the density was previously near vacuum.

Please check whether you need to increase MIN_WEIGHTING in particles.param when you go to higher density.

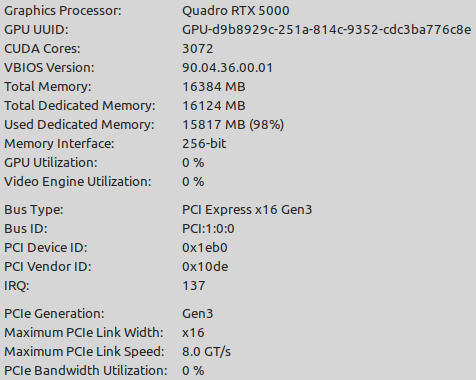

What kind of device do you use? GPU/CPU? Amount of device memory?

Hello, yes, I now understand MIN_WEIGHTING in particles.param.

My GPU is as shown in the figure below.

Unfortunately, I don't have the output file anymore. I will post it here together with the input files, if the error appears again.

Regards

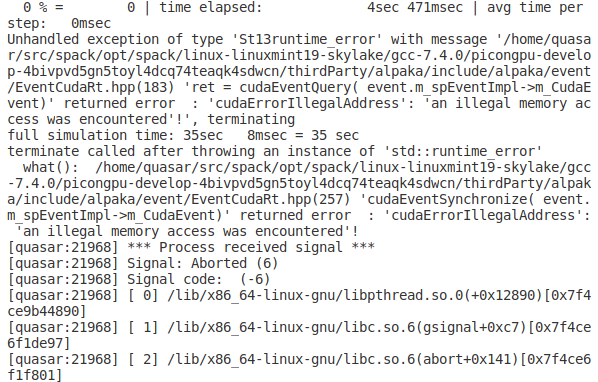

For example, I got the error attached in the file (unfortunately I have only the tail of it) once at run time, but when I tried again, without changing anything and without recompiling, the simulation ran smoothly.
