Apollo: [Question] Obstacle recognition issue with point clouds in the perception module

Hi,

I added 1000 random points (outside the ROI) to a pcd, and the offline perception tool missed 3 obstacles in front of the vehicle. Is the algorithm or model behind point-cloud obstacle recognition vulnerable to noise attacks (points outside the ROI)? My Apollo code was checked out on Jan 31, 2018.

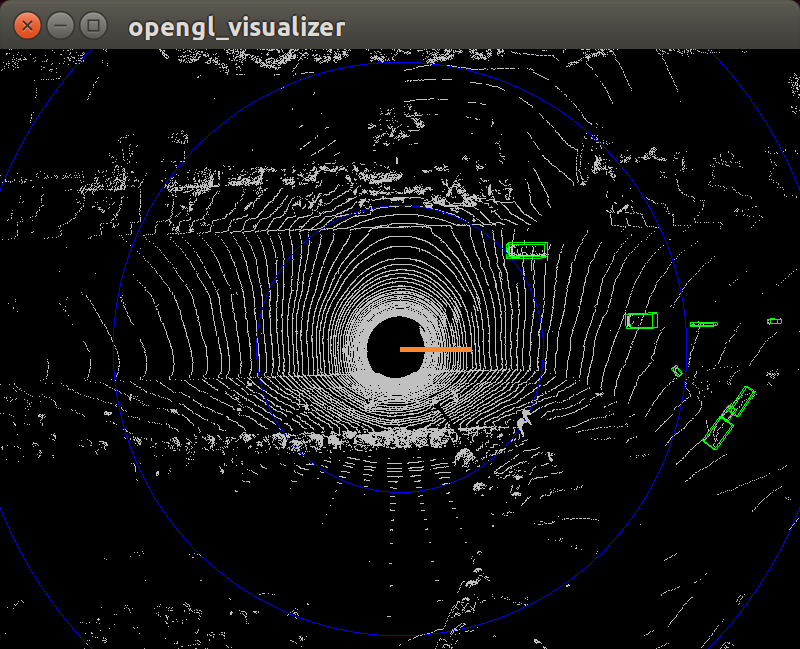

This is the original pcd.

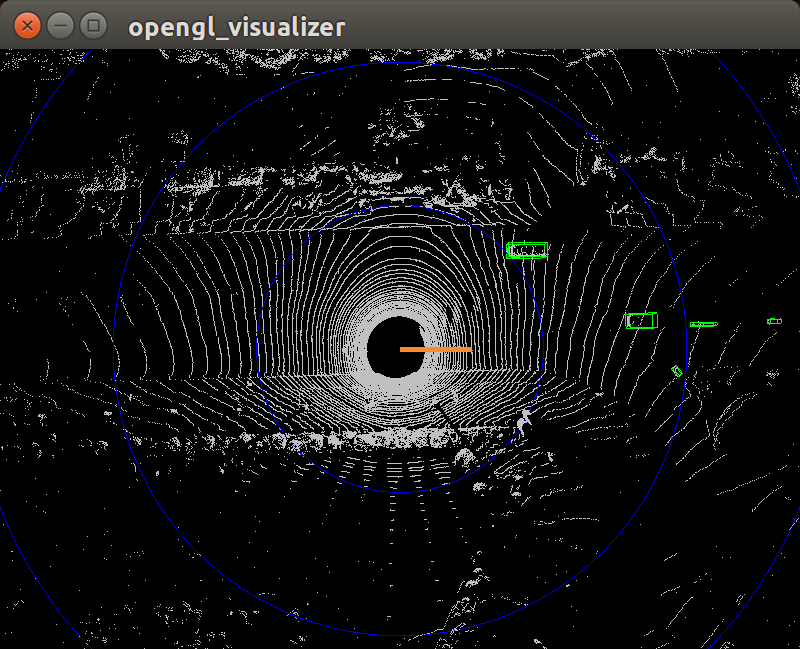

This is the pcd with 1000 random noise points outside the ROI.

pose.zip

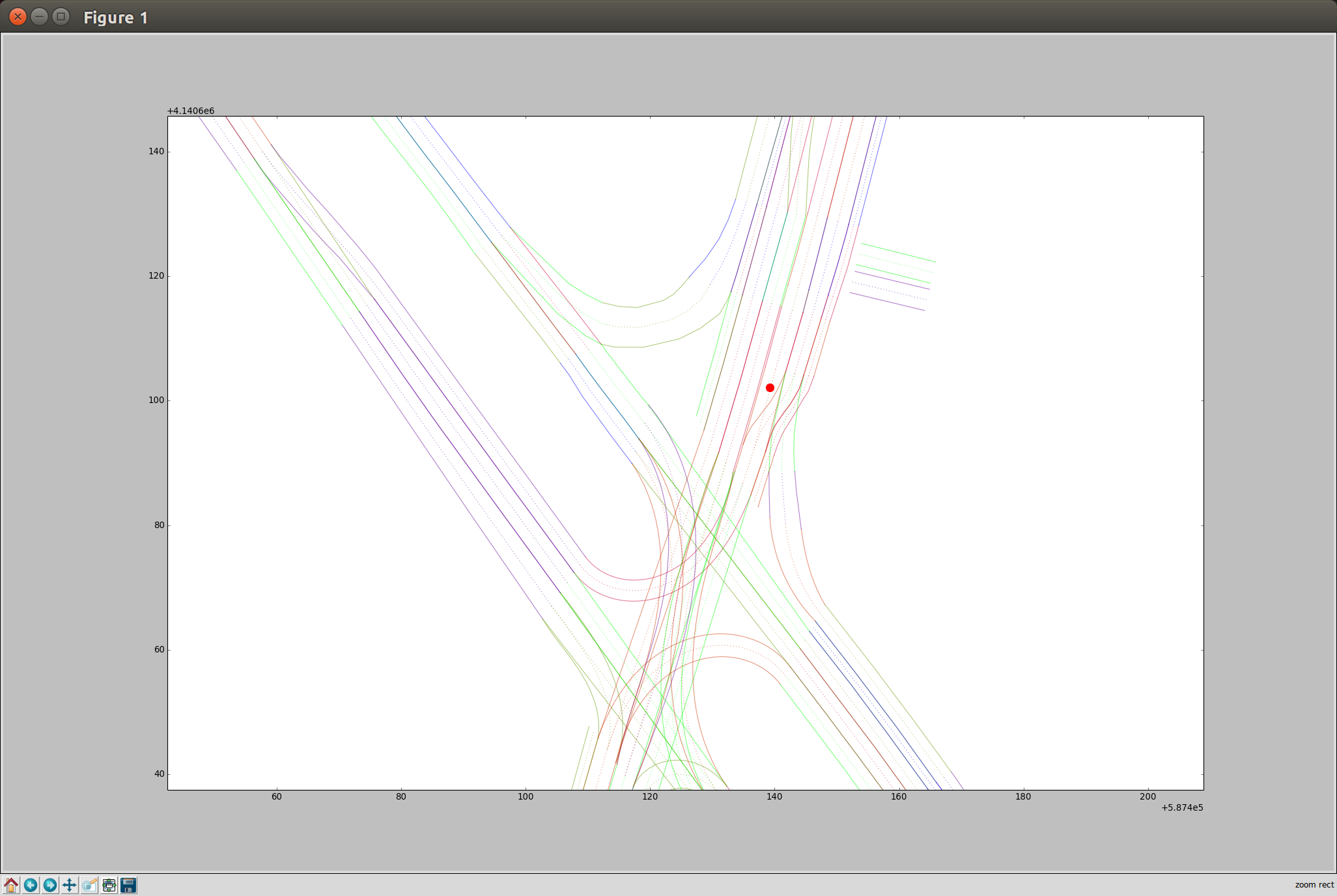

Position in sunnyvale_loop map

Regards,

Liqun Sun

>All comments

Thanks for the post. Since CNN Seg is a trained deep learning model, this can happen when you add noise points to the data itself. In practice, there could be multiple pipelines doing segmentation, based on traditional methods as well as deep learning methods. Also, for cases like this, models can be fine-tuned using data augmentation.
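To make the data augmentation idea concrete, here is a minimal sketch of injecting random noise points outside the ROI into a point cloud before training or testing. It is not Apollo code: the function name `add_noise_outside_roi`, its parameters, and the `(x, y, z, intensity)` point layout are hypothetical, and the ROI is approximated as a circle around the vehicle, whereas Apollo derives its ROI from the HD map.

```python
import math
import random


def add_noise_outside_roi(points, num_noise=1000, roi_radius=60.0,
                          max_range=120.0, z_range=(-2.0, 2.0), seed=None):
    """Return a copy of `points` with random noise points appended.

    Assumptions (not taken from Apollo): points are (x, y, z, intensity)
    tuples in the vehicle frame, and the ROI is a circle of `roi_radius`
    metres around the origin.
    """
    if not 0.0 <= roi_radius < max_range:
        raise ValueError("need 0 <= roi_radius < max_range")

    rng = random.Random(seed)
    augmented = list(points)  # leave the caller's list untouched
    for _ in range(num_noise):
        # Sample the squared radius uniformly so that points are spread
        # evenly over the ring between roi_radius and max_range.
        r = math.sqrt(rng.uniform(roi_radius ** 2, max_range ** 2))
        theta = rng.uniform(0.0, 2.0 * math.pi)
        z = rng.uniform(z_range[0], z_range[1])
        intensity = rng.uniform(0.0, 255.0)
        augmented.append((r * math.cos(theta), r * math.sin(theta), z, intensity))
    return augmented


# Usage: two real points plus 1000 noise points outside the assumed ROI.
original = [(5.0, 0.0, 0.0, 10.0), (10.0, 1.0, 0.2, 30.0)]
noisy = add_noise_outside_roi(original, num_noise=1000, seed=0)
print(len(noisy))  # 1002
```

Training or fine-tuning on a mix of clean and noisy frames like this is one way a segmentation model might become less sensitive to such points; whether it closes the gap reported above would need to be checked against real data.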