Skaffold: Port Forwarding does not work with Helm deployer on Windows.

Expected behavior

Running skaffold dev --portforward or skaffold debug --portforward should open up ports to any deployed services then available over localhost.

Actual behavior

The skaffold output claims that the port has been open.

Any attempt to interact with my service over localhost fails to connect.

Information

- Skaffold version: version 1.3.1

- Operating system: Windows

- Contents of skaffold.yaml:

apiVersion: skaffold/v2alpha3

kind: Config

build:

artifacts:

- image: node-example

context: backend

deploy:

helm:

releases:

- name: node

chartPath: chart

values:

image: node-example

imageStrategy:

helm: {}

Steps to reproduce the behavior

- Clone my fork of skaffold and updated the nodejs sample

cd examples/nodejsskaffold dev --port-forward- Observe how the console output suggests that port forwarding has been enabled and a local port assigned

curl 127.0.0.1:[local-port]- Notice how it fails to connect

All 12 comments

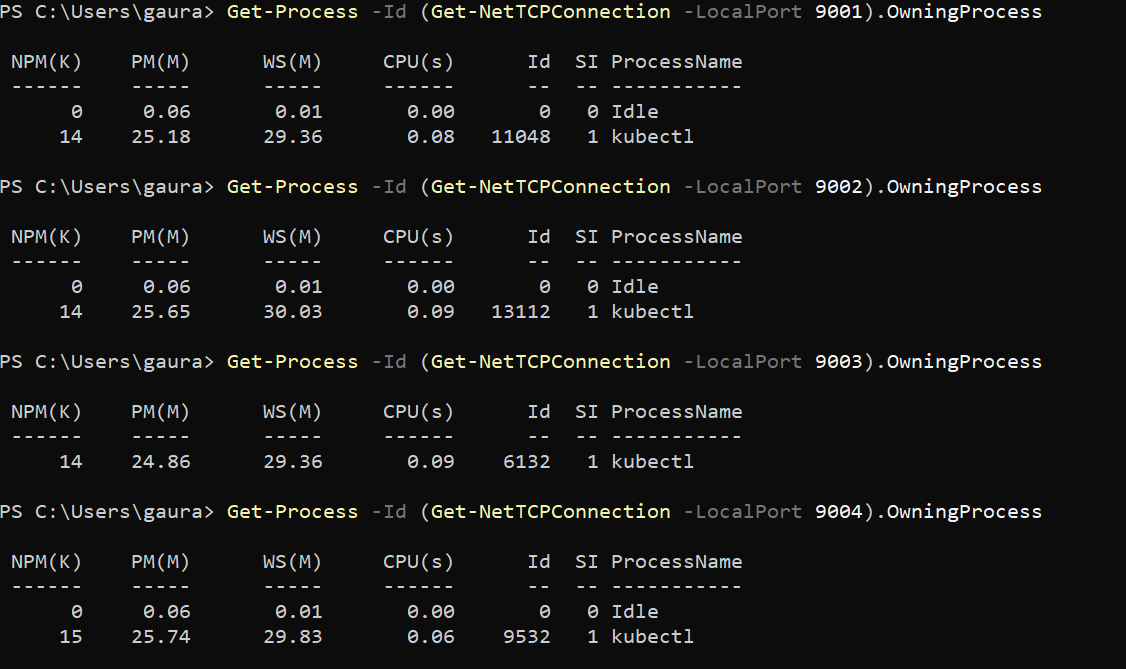

Following previous advice to scan for local ports I don't see anything to suggest ports are open.

Update:

Team members using a Mac suggest that port forwarding works fine for them.

Some light testing of port forwarding using the kubectl deployer appears to work on Windows.

~No longer relevant, see later comments below~

@GavinOsborn sorry we never got back to you on this one. would you mind trying with the latest version of skaffold and verifying this is still an issue? I'll be trying to get a windows machine set up next week so I can have a look if it still exists. also, providing relevant version info (windows version, kubectl version, etc.) as well as logs running with -v DEBUG would be great.

@nkubala have a pretty solid day ahead but should be able to re-test this tomorrow.

Hey @nkubala,

So, things have improved quite a bit since I last looked into this but it isn't all good.

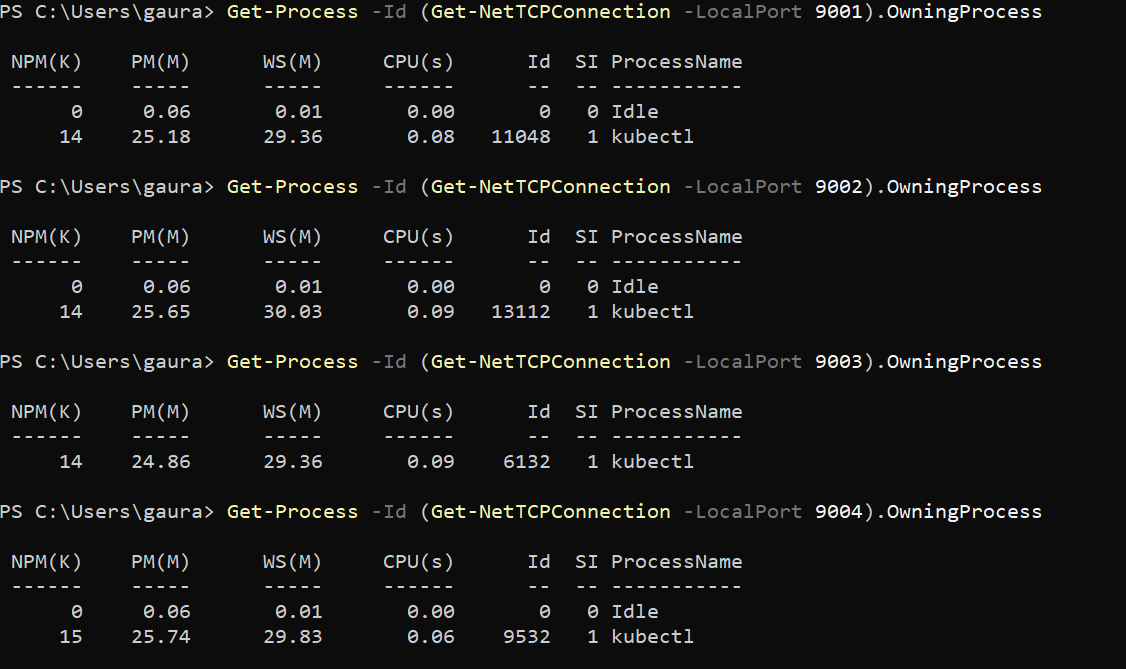

Port forwarding does now mostly work on windows the main exception being that port numbers do not seem to survive hot reloading. As you'll see from the logs below each time I triggered a rebuild I was assigned a new port. This is particularly frustrating to work with.

I also notice, running side-by-side tests on my Mac that port number assignments are different. 80,81,82 on Windows and 4053 on Mac. Is that deliberate?

My environment details:

- Windows

10 (v1909) - Git Bash

2.25.0.windows.1 - Minikube

1.9.2 - Kubernetes version

1.14.8 - Kubectl version

1.15.3 - Helm version

2.16.1 - Skaffold version

1.8

I have included the logs you requested below. I have scrubbed it of environment variables and any other potentially sensitive data. Please let me know if I've removed too much :)

Logs from running skaffold dev -v DEBUG --port-forward

time="2020-04-29T17:12:41+10:00" level=info msg="starting gRPC server on port 50051"

time="2020-04-29T17:12:41+10:00" level=info msg="starting gRPC HTTP server on port 50052"

time="2020-04-29T17:12:41+10:00" level=info msg="Skaffold &{Version:v1.8.0 ConfigVersion:skaffold/v2beta2 GitVersion: GitCommit:bd280192092e28067f0f52584c8bcb4f4dc480e4 GitTreeState:clean BuildDate:2020-04-17T23:01:25Z GoVersion:go1.14 Compiler:gc Platform:windows/amd64}"

time="2020-04-29T17:12:41+10:00" level=debug msg="config version (skaffold/v2alpha3) out of date: upgrading to latest (skaffold/v2beta2)"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct SkaffoldConfig"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct Metadata"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct Pipeline"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct BuildConfig"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct Artifact"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct ArtifactType"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct DockerArtifact"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct TagPolicy"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct GitTagger"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct BuildType"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct LocalBuild"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct DeployConfig"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct DeployType"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct HelmDeploy"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct HelmRelease"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct HelmOverrides"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct HelmImageStrategy"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct HelmImageConfig"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct HelmConventionConfig"

time="2020-04-29T17:12:41+10:00" level=debug msg="validating yamltags of struct HelmDeployFlags"

time="2020-04-29T17:12:41+10:00" level=info msg="Using kubectl context: minikube"

time="2020-04-29T17:12:41+10:00" level=debug msg="Using builder: local"

time="2020-04-29T17:12:41+10:00" level=debug msg="Running command: [minikube docker-env --shell none]"

time="2020-04-29T17:12:41+10:00" level=debug msg="Command output: [DOCKER_TLS_VERIFY=1\nDOCKER_HOST=tcp://192.168.99.110:2376\nDOCKER_CERT_PATH=C:\\Users\\Gav\\.minikube\\certs\nMINIKUBE_ACTIVE_DOCKERD=minikube\n]"

time="2020-04-29T17:12:41+10:00" level=debug msg="setting Docker user agent to skaffold-v1.8.0"

Listing files to watch...

- service-name-here

time="2020-04-29T17:12:45+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

time="2020-04-29T17:12:45+10:00" level=info msg="List generated in 3.8947347s"

Generating tags...

- service-name-here -> time="2020-04-29T17:12:45+10:00" level=debug msg="Running command: [git describe --tags --always]"

time="2020-04-29T17:12:45+10:00" level=warning msg="Unable to find git commit: running [git describe --tags --always]\n - stdout: \n - stderr: \"fatal: not a git repository (or any of the parent directories): .git\\n\": exit status 128"

service-name-here:dirty

time="2020-04-29T17:12:45+10:00" level=info msg="Tags generated in 24.3863ms"

Checking cache...

time="2020-04-29T17:12:45+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

- service-name-here: Not found. Building

time="2020-04-29T17:12:45+10:00" level=info msg="Cache check complete in 1.9556ms"

Found [minikube] context, using local docker daemon.

Building [service-name-here]...

time="2020-04-29T17:12:45+10:00" level=debug msg="Running docker build: context: ., dockerfile: Dockerfile"

time="2020-04-29T17:12:45+10:00" level=debug msg="Skipping credential configuration because docker-credential-gcloud is not on PATH."

time="2020-04-29T17:12:45+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

Sending build context to Docker daemon 19.46kB

Step 1/8 : FROM node:12-alpine

12-alpine: Pulling from library/node

cbdbe7a5bc2a: Pulling fs layer

8f3938f7d3bd: Pulling fs layer

6e3c12f5dc10: Pulling fs layer

ce0cb7a9eeee: Pulling fs layer

ce0cb7a9eeee: Waiting

cbdbe7a5bc2a: Verifying Checksum

cbdbe7a5bc2a: Download complete

6e3c12f5dc10: Verifying Checksum

6e3c12f5dc10: Download complete

cbdbe7a5bc2a: Pull complete

ce0cb7a9eeee: Verifying Checksum

ce0cb7a9eeee: Download complete

8f3938f7d3bd: Verifying Checksum

8f3938f7d3bd: Download complete

8f3938f7d3bd: Pull complete

6e3c12f5dc10: Pull complete

ce0cb7a9eeee: Pull complete

Digest: sha256:84f5c9090cc741421b2376b2393e7d5fa2f89d36167c12eb57aef16dd64ecf6c

Status: Downloaded newer image for node:12-alpine

---> 7a48db49edbf

Step 2/8 : WORKDIR /app

---> Running in 49586f67bca1

---> 721b8113e065

Step 3/8 : ADD ./package.json ./package.json

---> af904cc02929

Step 4/8 : ADD ./package-lock.json ./package-lock.json

---> c35ecbac66c3

Step 5/8 : RUN npm ci --production

---> Running in e46721d3267e

added 49 packages in 0.99s

---> 631dec8dec6b

Step 6/8 : ADD ./index.js ./index.js

---> 7ed859b0ff6f

Step 7/8 : ADD ./app ./app

---> b4a93b5a7456

Step 8/8 : CMD ["npm", "start"]

---> Running in 80f854521c97

---> 67f825e8cfd0

Successfully built 67f825e8cfd0

Successfully tagged service-name-here:dirty

time="2020-04-29T17:12:58+10:00" level=info msg="Build complete in 12.3006911s"

time="2020-04-29T17:12:58+10:00" level=info msg="Test complete in 0s"

Tags used in deployment:

- service-name-here -> service-name-here:67f825e8cfd0d1c34fbf36671f5de35f52fefd93d5781433b91610f1a38beb70

local images can't be referenced by digest. They are tagged and referenced by a unique ID instead

Starting deploy...

time="2020-04-29T17:12:58+10:00" level=debug msg="Running command: [helm version]"

time="2020-04-29T17:12:58+10:00" level=info msg="Deploying with helm v2.16.1 ..."

time="2020-04-29T17:12:58+10:00" level=debug msg="Executing template &{envTemplate 0xc000472000 0xc000150940 } with environment map[<redacted>]"

time="2020-04-29T17:12:58+10:00" level=debug msg="Running command: [helm --kube-context minikube get service-name-here]"

Helm release service-name-here not installed. Installing...

time="2020-04-29T17:12:58+10:00" level=info msg="Building helm dependencies..."

time="2020-04-29T17:12:58+10:00" level=debug msg="Running command: [helm --kube-context minikube dep build ./helm-chart]"

No requirements found in ./helm-chart/charts.

time="2020-04-29T17:12:58+10:00" level=debug msg="EnvVarMap: map[DIGEST:service-name-here:67f825e8cfd0d1c34fbf36671f5de35f52fefd93d5781433b91610f1a38beb70 DIGEST_ALGO:service-name-here DIGEST_HEX:67f825e8cfd0d1c34fbf36671f5de35f52fefd93d5781433b91610f1a38beb70 IMAGE_NAME:service-name-here]\n"

time="2020-04-29T17:12:58+10:00" level=debug msg="Running command: [helm --kube-context minikube install --name service-name-here ./helm-chart --set-string image.repository=service-name-here,image.tag=67f825e8cfd0d1c34fbf36671f5de35f52fefd93d5781433b91610f1a38beb70 --wait]"

NAME: service-name-here

LAST DEPLOYED: Wed Apr 29 17:12:58 2020

NAMESPACE: core

STATUS: DEPLOYED

RESOURCES:

==> v1/Deployment

NAME AGE

service-name-here-deployment 8s

==> v1/Pod(related)

NAME AGE

service-name-here-deployment-d59d8f5b-95m4r 8s

==> v1/Service

NAME AGE

service-name-here 8s

time="2020-04-29T17:13:06+10:00" level=debug msg="Running command: [helm --kube-context minikube get service-name-here]"

time="2020-04-29T17:13:07+10:00" level=info msg="error decoding parsed yaml: Object 'Kind' is missing in 'REVISION: 1\nRELEASED: Wed Apr 29 17:12:58 2020\nCHART: service-name-here-0.1.0\nUSER-SUPPLIED VALUES:\nimage:\n repository: service-name-here\n tag: 67f825e8cfd0d1c34fbf36671f5de35f52fefd93d5781433b91610f1a38beb70\n\nCOMPUTED VALUES:\nimage:\n pullPolicy: IfNotPresent\n repository: service-name-here\n tag: 67f825e8cfd0d1c34fbf36671f5de35f52fefd93d5781433b91610f1a38beb70\nports:\n containerPort: 3000\n servicePort: 80\nreplicaCount: \"1\"\nrevisionHistoryLimit: 5\n\nHOOKS:\nMANIFEST:\n\n'"

time="2020-04-29T17:13:07+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:13:07+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:13:07+10:00" level=debug msg="Patching service-name-here in namespace core"

time="2020-04-29T17:13:07+10:00" level=debug msg="Patching service-name-here-deployment in namespace core"

time="2020-04-29T17:13:07+10:00" level=info msg="Deploy complete in 8.9940888s"

Waiting for deployments to stabilize...

time="2020-04-29T17:13:07+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:13:07+10:00" level=debug msg="checking status core:deployment/service-name-here-deployment"

time="2020-04-29T17:13:07+10:00" level=debug msg="Running command: [kubectl --context minikube rollout status deployment service-name-here-deployment --namespace core --watch=false]"

time="2020-04-29T17:13:07+10:00" level=debug msg="Command output: [deployment \"service-name-here-deployment\" successfully rolled out\n]"

- core:deployment/service-name-here-deployment is ready.

Deployments stabilized in 322.323ms

time="2020-04-29T17:13:07+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:13:07+10:00" level=debug msg="getting client config for kubeContext: ``"

Port forwarding service/service-name-here in namespace core, remote port 80 -> address 127.0.0.1 port 80

time="2020-04-29T17:13:07+10:00" level=debug msg="found open port: 81"

time="2020-04-29T17:13:07+10:00" level=debug msg="Running command: [kubectl --context minikube port-forward --pod-running-timeout 1s service/service-name-here 80:80 --namespace core]"

time="2020-04-29T17:13:07+10:00" level=debug msg="Change detected <nil>"

time="2020-04-29T17:13:07+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

Watching for changes...

time="2020-04-29T17:13:07+10:00" level=info msg="Streaming logs from pod: service-name-here-deployment-d59d8f5b-95m4r container: api"

time="2020-04-29T17:13:07+10:00" level=debug msg="Running command: [kubectl --context minikube logs --since=10s -f service-name-here-deployment-d59d8f5b-95m4r -c api --namespace core]"

[service-name-here-deployment-d59d8f5b-95m4r api]

[service-name-here-deployment-d59d8f5b-95m4r api] > [email protected] start /app

[service-name-here-deployment-d59d8f5b-95m4r api] > node index.js

[service-name-here-deployment-d59d8f5b-95m4r api]

time="2020-04-29T17:13:08+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

time="2020-04-29T17:13:59+10:00" level=debug msg="Change detected notify.Write: \"C:\\dev\\src\\ntx\\skaffold-3679-repro\\app\\index.js\""

time="2020-04-29T17:13:59+10:00" level=debug msg="Change detected notify.Write: \"C:\\dev\\src\\ntx\\skaffold-3679-repro\\app\\index.js\""

time="2020-04-29T17:14:00+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

time="2020-04-29T17:14:00+10:00" level=info msg="files modified: [app\\index.js]"

Generating tags...

- service-name-here -> time="2020-04-29T17:14:00+10:00" level=debug msg="Running command: [git describe --tags --always]"

time="2020-04-29T17:14:00+10:00" level=warning msg="Unable to find git commit: running [git describe --tags --always]\n - stdout: \n - stderr: \"fatal: not a git repository (or any of the parent directories): .git\\n\": exit status 128"

service-name-here:dirty

time="2020-04-29T17:14:00+10:00" level=info msg="Tags generated in 26.8472ms"

Checking cache...

time="2020-04-29T17:14:00+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

- service-name-here: Not found. Building

time="2020-04-29T17:14:00+10:00" level=info msg="Cache check complete in 4.8953ms"

Found [minikube] context, using local docker daemon.

Building [service-name-here]...

time="2020-04-29T17:14:00+10:00" level=debug msg="Running docker build: context: ., dockerfile: Dockerfile"

time="2020-04-29T17:14:00+10:00" level=debug msg="Skipping credential configuration because docker-credential-gcloud is not on PATH."

time="2020-04-29T17:14:00+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

Sending build context to Docker daemon 19.46kB

Step 1/8 : FROM node:12-alpine

---> 7a48db49edbf

Step 2/8 : WORKDIR /app

---> Using cache

---> 721b8113e065

Step 3/8 : ADD ./package.json ./package.json

---> Using cache

---> af904cc02929

Step 4/8 : ADD ./package-lock.json ./package-lock.json

---> Using cache

---> c35ecbac66c3

Step 5/8 : RUN npm ci --production

---> Using cache

---> 631dec8dec6b

Step 6/8 : ADD ./index.js ./index.js

---> Using cache

---> 7ed859b0ff6f

Step 7/8 : ADD ./app ./app

---> cd55b1c9820c

Step 8/8 : CMD ["npm", "start"]

---> Running in dd0970d93566

---> 47bfc802693d

Successfully built 47bfc802693d

Successfully tagged service-name-here:dirty

time="2020-04-29T17:14:00+10:00" level=info msg="Build complete in 341.0147ms"

time="2020-04-29T17:14:00+10:00" level=info msg="Test complete in 0s"

time="2020-04-29T17:14:00+10:00" level=debug msg="Terminating port-forward service-service-name-here-core-80"

Tags used in deployment:

- service-name-here -> service-name-here:47bfc802693df53b36d3642064d36e9b9e73693b1a1dfb34421ae852b043c53f

local images can't be referenced by digest. They are tagged and referenced by a unique ID instead

Starting deploy...

time="2020-04-29T17:14:00+10:00" level=info msg="Deploying with helm v2.16.1 ..."

time="2020-04-29T17:14:00+10:00" level=debug msg="Executing template &{envTemplate 0xc0000bab00 0xc0007a4140 } with environment map[<redacted>]"

time="2020-04-29T17:14:00+10:00" level=debug msg="Running command: [helm --kube-context minikube get service-name-here]"

time="2020-04-29T17:14:00+10:00" level=info msg="Building helm dependencies..."

time="2020-04-29T17:14:00+10:00" level=debug msg="Running command: [helm --kube-context minikube dep build ./helm-chart]"

No requirements found in ./helm-chart/charts.

time="2020-04-29T17:14:00+10:00" level=debug msg="EnvVarMap: map[DIGEST:service-name-here:47bfc802693df53b36d3642064d36e9b9e73693b1a1dfb34421ae852b043c53f DIGEST_ALGO:service-name-here DIGEST_HEX:47bfc802693df53b36d3642064d36e9b9e73693b1a1dfb34421ae852b043c53f IMAGE_NAME:service-name-here]\n"

time="2020-04-29T17:14:00+10:00" level=debug msg="Running command: [helm --kube-context minikube upgrade service-name-here --force ./helm-chart --set-string image.repository=service-name-here,image.tag=47bfc802693df53b36d3642064d36e9b9e73693b1a1dfb34421ae852b043c53f --wait]"

time="2020-04-29T17:14:02+10:00" level=info msg="Streaming logs from pod: service-name-here-deployment-5bdcf7c4dd-gx9kn container: api"

time="2020-04-29T17:14:02+10:00" level=debug msg="Running command: [kubectl --context minikube logs --since=65s -f service-name-here-deployment-5bdcf7c4dd-gx9kn -c api --namespace core]"

Release "service-name-here" has been upgraded.

LAST DEPLOYED: Wed Apr 29 17:14:01 2020

NAMESPACE: core

STATUS: DEPLOYED

RESOURCES:

==> v1/Deployment

NAME AGE

service-name-here-deployment 69s

==> v1/Pod(related)

NAME AGE

service-name-here-deployment-5bdcf7c4dd-gx9kn 6s

service-name-here-deployment-d59d8f5b-95m4r 69s

==> v1/Service

NAME AGE

service-name-here 69s

time="2020-04-29T17:14:07+10:00" level=debug msg="Running command: [helm --kube-context minikube get service-name-here]"

time="2020-04-29T17:14:07+10:00" level=info msg="error decoding parsed yaml: Object 'Kind' is missing in 'REVISION: 2\nRELEASED: Wed Apr 29 17:14:01 2020\nCHART: service-name-here-0.1.0\nUSER-SUPPLIED VALUES:\nimage:\n repository: service-name-here\n tag: 47bfc802693df53b36d3642064d36e9b9e73693b1a1dfb34421ae852b043c53f\n\nCOMPUTED VALUES:\nimage:\n pullPolicy: IfNotPresent\n repository: service-name-here\n tag: 47bfc802693df53b36d3642064d36e9b9e73693b1a1dfb34421ae852b043c53f\nports:\n containerPort: 3000\n servicePort: 80\nreplicaCount: \"1\"\nrevisionHistoryLimit: 5\n\nHOOKS:\nMANIFEST:\n\n'"

time="2020-04-29T17:14:07+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:14:07+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:14:07+10:00" level=debug msg="Patching service-name-here in namespace core"

time="2020-04-29T17:14:07+10:00" level=debug msg="Patching service-name-here-deployment in namespace core"

time="2020-04-29T17:14:07+10:00" level=info msg="Deploy complete in 6.7150603s"

Waiting for deployments to stabilize...

time="2020-04-29T17:14:07+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:14:07+10:00" level=debug msg="checking status core:deployment/service-name-here-deployment"

time="2020-04-29T17:14:07+10:00" level=debug msg="Running command: [kubectl --context minikube rollout status deployment service-name-here-deployment --namespace core --watch=false]"

time="2020-04-29T17:14:07+10:00" level=debug msg="Command output: [deployment \"service-name-here-deployment\" successfully rolled out\n]"

- core:deployment/service-name-here-deployment is ready.

Deployments stabilized in 320.6682ms

time="2020-04-29T17:14:07+10:00" level=debug msg="getting client config for kubeContext: ``"

Watching for changes...

time="2020-04-29T17:14:07+10:00" level=debug msg="found open port: 82"

Port forwarding service/service-name-here in namespace core, remote port 80 -> address 127.0.0.1 port 82

time="2020-04-29T17:14:07+10:00" level=debug msg="found open port: 83"

time="2020-04-29T17:14:07+10:00" level=debug msg="Running command: [kubectl --context minikube port-forward --pod-running-timeout 1s service/service-name-here 82:80 --namespace core]"

time="2020-04-29T17:14:18+10:00" level=debug msg="Change detected notify.Write: \"C:\\dev\\src\\ntx\\skaffold-3679-repro\\app\\index.js\""

time="2020-04-29T17:14:18+10:00" level=debug msg="Change detected notify.Write: \"C:\\dev\\src\\ntx\\skaffold-3679-repro\\app\\index.js\""

time="2020-04-29T17:14:19+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

time="2020-04-29T17:14:19+10:00" level=info msg="files modified: [app\\index.js]"

Generating tags...

- service-name-here -> time="2020-04-29T17:14:19+10:00" level=debug msg="Running command: [git describe --tags --always]"

time="2020-04-29T17:14:19+10:00" level=warning msg="Unable to find git commit: running [git describe --tags --always]\n - stdout: \n - stderr: \"fatal: not a git repository (or any of the parent directories): .git\\n\": exit status 128"

service-name-here:dirty

time="2020-04-29T17:14:19+10:00" level=info msg="Tags generated in 25.4801ms"

Checking cache...

time="2020-04-29T17:14:19+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

- service-name-here: Not found. Building

time="2020-04-29T17:14:19+10:00" level=info msg="Cache check complete in 2.9308ms"

Found [minikube] context, using local docker daemon.

Building [service-name-here]...

time="2020-04-29T17:14:19+10:00" level=debug msg="Running docker build: context: ., dockerfile: Dockerfile"

time="2020-04-29T17:14:19+10:00" level=debug msg="Skipping credential configuration because docker-credential-gcloud is not on PATH."

time="2020-04-29T17:14:19+10:00" level=debug msg="Found dependencies for dockerfile: [{package.json /app/package.json false} {package-lock.json /app/package-lock.json false} {index.js /app/index.js false} {app /app/app false}]"

Sending build context to Docker daemon 19.46kB

Step 1/8 : FROM node:12-alpine

---> 7a48db49edbf

Step 2/8 : WORKDIR /app

---> Using cache

---> 721b8113e065

Step 3/8 : ADD ./package.json ./package.json

---> Using cache

---> af904cc02929

Step 4/8 : ADD ./package-lock.json ./package-lock.json

---> Using cache

---> c35ecbac66c3

Step 5/8 : RUN npm ci --production

---> Using cache

---> 631dec8dec6b

Step 6/8 : ADD ./index.js ./index.js

---> Using cache

---> 7ed859b0ff6f

Step 7/8 : ADD ./app ./app

---> 0552cd5aea50

Step 8/8 : CMD ["npm", "start"]

---> Running in 0e3ef5a86525

---> 0ecb7fc52910

Successfully built 0ecb7fc52910

Successfully tagged service-name-here:dirty

time="2020-04-29T17:14:20+10:00" level=info msg="Build complete in 283.0358ms"

time="2020-04-29T17:14:20+10:00" level=info msg="Test complete in 0s"

time="2020-04-29T17:14:20+10:00" level=debug msg="Terminating port-forward service-service-name-here-core-80"

Tags used in deployment:

- service-name-here -> service-name-here:0ecb7fc529105a2a45516aa6e862ce2ad118fa0b6876ddd2b679703e2a7094dc

local images can't be referenced by digest. They are tagged and referenced by a unique ID instead

Starting deploy...

time="2020-04-29T17:14:20+10:00" level=info msg="Deploying with helm v2.16.1 ..."

time="2020-04-29T17:14:20+10:00" level=debug msg="Executing template &{envTemplate 0xc0000fd000 0xc000419fc0 } with environment map[<redacted>]"

time="2020-04-29T17:14:20+10:00" level=debug msg="Running command: [helm --kube-context minikube get service-name-here]"

time="2020-04-29T17:14:20+10:00" level=info msg="Building helm dependencies..."

time="2020-04-29T17:14:20+10:00" level=debug msg="Running command: [helm --kube-context minikube dep build ./helm-chart]"

No requirements found in ./helm-chart/charts.

time="2020-04-29T17:14:20+10:00" level=debug msg="EnvVarMap: map[DIGEST:service-name-here:0ecb7fc529105a2a45516aa6e862ce2ad118fa0b6876ddd2b679703e2a7094dc DIGEST_ALGO:service-name-here DIGEST_HEX:0ecb7fc529105a2a45516aa6e862ce2ad118fa0b6876ddd2b679703e2a7094dc IMAGE_NAME:service-name-here]\n"

time="2020-04-29T17:14:20+10:00" level=debug msg="Running command: [helm --kube-context minikube upgrade service-name-here --force ./helm-chart --set-string image.repository=service-name-here,image.tag=0ecb7fc529105a2a45516aa6e862ce2ad118fa0b6876ddd2b679703e2a7094dc --wait]"

time="2020-04-29T17:14:21+10:00" level=info msg="Streaming logs from pod: service-name-here-deployment-6d8474746c-llz9d container: api"

time="2020-04-29T17:14:21+10:00" level=debug msg="Running command: [kubectl --context minikube logs --since=84s -f service-name-here-deployment-6d8474746c-llz9d -c api --namespace core]"

Release "service-name-here" has been upgraded.

LAST DEPLOYED: Wed Apr 29 17:14:20 2020

NAMESPACE: core

STATUS: DEPLOYED

RESOURCES:

==> v1/Deployment

NAME AGE

service-name-here-deployment 92s

==> v1/Pod(related)

NAME AGE

service-name-here-deployment-5bdcf7c4dd-gx9kn 29s

service-name-here-deployment-6d8474746c-llz9d 10s

==> v1/Service

NAME AGE

service-name-here 92s

time="2020-04-29T17:14:30+10:00" level=debug msg="Running command: [helm --kube-context minikube get service-name-here]"

time="2020-04-29T17:14:31+10:00" level=info msg="error decoding parsed yaml: Object 'Kind' is missing in 'REVISION: 3\nRELEASED: Wed Apr 29 17:14:20 2020\nCHART: service-name-here-0.1.0\nUSER-SUPPLIED VALUES:\nimage:\n repository: service-name-here\n tag: 0ecb7fc529105a2a45516aa6e862ce2ad118fa0b6876ddd2b679703e2a7094dc\n\nCOMPUTED VALUES:\nimage:\n pullPolicy: IfNotPresent\n repository: service-name-here\n tag: 0ecb7fc529105a2a45516aa6e862ce2ad118fa0b6876ddd2b679703e2a7094dc\nports:\n containerPort: 3000\n servicePort: 80\nreplicaCount: \"1\"\nrevisionHistoryLimit: 5\n\nHOOKS:\nMANIFEST:\n\n'"

time="2020-04-29T17:14:31+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:14:31+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:14:31+10:00" level=debug msg="Patching service-name-here in namespace core"

time="2020-04-29T17:14:31+10:00" level=debug msg="Patching service-name-here-deployment in namespace core"

time="2020-04-29T17:14:31+10:00" level=info msg="Deploy complete in 10.7541526s"

Waiting for deployments to stabilize...

time="2020-04-29T17:14:31+10:00" level=debug msg="getting client config for kubeContext: ``"

time="2020-04-29T17:14:31+10:00" level=debug msg="checking status core:deployment/service-name-here-deployment"

time="2020-04-29T17:14:31+10:00" level=debug msg="Running command: [kubectl --context minikube rollout status deployment service-name-here-deployment --namespace core --watch=false]"

time="2020-04-29T17:14:31+10:00" level=debug msg="Command output: [deployment \"service-name-here-deployment\" successfully rolled out\n]"

- core:deployment/service-name-here-deployment is ready.

Deployments stabilized in 327.0336ms

time="2020-04-29T17:14:31+10:00" level=debug msg="getting client config for kubeContext: ``"

Watching for changes...

time="2020-04-29T17:14:31+10:00" level=debug msg="found open port: 84"

Port forwarding service/service-name-here in namespace core, remote port 80 -> address 127.0.0.1 port 84

time="2020-04-29T17:14:31+10:00" level=debug msg="found open port: 85"

time="2020-04-29T17:14:31+10:00" level=debug msg="Running command: [kubectl --context minikube port-forward --pod-running-timeout 1s service/service-name-here 84:80 --namespace core]"

time="2020-04-29T17:14:45+10:00" level=debug msg="Terminating port-forward service-service-name-here-core-80"

Cleaning up...

time="2020-04-29T17:14:45+10:00" level=debug msg="Executing template &{envTemplate 0xc0000bab00 0xc000663980 } with environment map[<redacted>]"

In addition connectivity is not always re-established after a reload. Some times port-forwarding is not re-established after reload despite the success reported in the console, once this starts, connectivity is never re-established no matter how many times you trigger a reload.

Unfortunately since I turned on -v DEBUG I'm having a hard time reproducing this - will keep trying.

You can clone this repo to see the exact configuration I'm using to run this test.

Thanks @GavinOsborn. Just wanted to update that the team is trying to get their hands on windows machine and reproduce this. Due to the new norms, i am not able to my login into windows desktop at work.

We will keep you posted.

Thanks for the update.

This isn't the first time I've heard both you and the minikube teams talk about the struggles of getting access to Windows devices.

Have you explored the idea of using a cloud vm?

@GavinOsborn skaffold tried to terminate the kubectl port-forward process by cancelling the child process's context which on windows, might does not kill the process.

@gaghosh is helping me run this on their personal machine.

We will try to see if this patch to explicitly terminate the process fixes the issue

git diff pkg/skaffold/kubernetes/portforward/kubectl_forwarder.go

diff --git a/pkg/skaffold/kubernetes/portforward/kubectl_forwarder.go b/pkg/skaffold/kubernetes/portforward/kubectl_forwarder.go

index 256be4be0..f411e5238 100644

--- a/pkg/skaffold/kubernetes/portforward/kubectl_forwarder.go

+++ b/pkg/skaffold/kubernetes/portforward/kubectl_forwarder.go

@@ -95,10 +95,13 @@ func (k *KubectlForwarder) forward(parentCtx context.Context, pfe *portForwardEn

}

ctx, cancel := context.WithCancel(parentCtx)

- pfe.cancel = cancel

buf := &bytes.Buffer{}

cmd := portForwardCmd(ctx, k.kubectl, pfe, buf)

+ pfe.cancel = func() {

+ cmd.Process.Kill()

+ cancel()

+ }

logrus.Debugf("Running command: %s", cmd.Args)

if err := cmd.Start(); err != nil {

tejaldesai@tejaldesai-macbookpro2 skaffold (add_protos)

I could verify that it's because the kubectl port-forward processes are not getting terminated.

@tejal29 I tried this patch but it didn't fix the issue. I'm gonna keep digging.

I am not sure if this will work, but kaniko does this to ensure all children are killed

https://github.com/GoogleContainerTools/kaniko/blob/2fc8a7f4bcd4f91052c7defd80f90631b9e2b4cb/pkg/commands/run.go#L113

So it seems that on windows running kubectl portforward creates a child process that binds to the port and doesn't terminate when we terminate the parent process unlike in macos where a single process does that. I've tried a few ways including the above but those didn't work. I tried job objects in a sample app and that worked so I'm trying to incorporate that into skaffold and see if that works.

Most helpful comment

I could verify that it's because the

kubectl port-forwardprocesses are not getting terminated.@tejal29 I tried this patch but it didn't fix the issue. I'm gonna keep digging.