Rclone: Any plan to add support to Google Photos?

If possible, please add support for uploading photo/video files to Google Photos directly!

Although it's possible to add a "Google Photos" folder in Google Drive, where all your Google Photos appear (organized into date folders), photos uploaded into this folder do not seem to show up in Google Photos.

Also, if we upload in "High Quality" then there is unlimited storage for photos and videos. I am not sure whether the "down-sizing" is done locally or remotely by the Google Photos server, however...

I realize Google Photos is not a good place to organize photos, but it's a good place to share photos with others. And with a stock of 300k+ photos I really don't want to have my PC running for God-knows-how-long for the upload... It's a job for the RPi!

Thanks for the suggestion.

There seems to be a comprehensive API here

https://developers.google.com/picasa-web/docs/2.0/developers_guide_protocol

Yes, but I believe Picasa and its API will be deprecated from May 1...

Foo! Is there a different API for google photos that you can find?

The Picasa API will continue to support uploading images:

Beginning May 1st, 2016, we’ll start rolling out changes to the Picasa Web Albums Data API and no longer support the following functionality:

- Flash support

- Community search

- Mutation operations other than uploads

- All support for tags, comments, and contacts

The API will still support other functions, including reading photos, reading albums, reading photos in albums, and uploading new photos. Although these operations will continue to be supported and the protocol will remain the same, the content included in the responses and the operation behavior may change.

https://developers.google.com/picasa-web/

Edit: Judging by this answer, it will however count towards the storage quota.

Even if it counts towards the storage quota, it is still possible to use "reclaim space" in the settings of Photos.google.com, which will re-compress (or just convert?) the photos into non-quota "high resolution" photos.

Thus, until Google comes up with a new Photos API (God knows when...), do you think this is the best solution we can get so far?

I've been trying to accomplish this for a long time. I have a huge local store of photos that I want to store in google photos at 'high resolution' as to have them NOT count towards the quotas but instead only use it to be able to see these photos across google devices. If we upload to drive, we get hit with quotas. It would be absolutely awesome to be able to sync them with rclone at a 'under the quota' limit to G-Photos.

+1

Another useful functionality could be to arrange photos/videos in albums, i.e. using the folder name for that.

Nick, do you think it would be possible to have Google Photos on the roadmap sooner or later?

Thanks!

I was able to sync photos using this option from Google Drive:

This created a folder called Google Photos which I could sync using:

rclone --exclude '*.{dng,CR2}' --include '{IMG,PANO,VID}_*.{jpg,mp4}' sync 'MyRemoteName:/Google Photos/2016' /mnt/raid0/GooglePhotos/2016/

This example excludes the RAW images because they come from my DSLR, which is already backed up. I'm only interested in the pics uploaded from mobile.
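For anyone who wants to script this, the same command can be assembled from Python. This is only a sketch: the helper name `build_sync_command` and its parameters are made up for illustration, while the flags and patterns are copied unchanged from the command above.

```python
from typing import List


def build_sync_command(remote: str, year: str, dest_root: str) -> List[str]:
    """Return the argv list for the rclone sync shown above (hypothetical helper)."""
    return [
        "rclone",
        "--exclude", "*.{dng,CR2}",                  # skip the DSLR RAW files
        "--include", "{IMG,PANO,VID}_*.{jpg,mp4}",   # keep the phone uploads
        "sync",
        f"{remote}:/Google Photos/{year}",
        f"{dest_root.rstrip('/')}/{year}/",
    ]


if __name__ == "__main__":
    cmd = build_sync_command("MyRemoteName", "2016", "/mnt/raid0/GooglePhotos")
    # To actually run it: subprocess.run(cmd, check=True)
    print(" ".join(cmd))
```

Passing the list to `subprocess.run` rather than a single string means the patterns need no shell quoting.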

@BenoitDuffez nice writeup thanks - a great way of downloading your pics from google photos.

It doesn't solve the upload part though which is what this issue is about.

@ncw that's strange that Google still doesn't have official API for that..

I'm the author of picasawebsync and believe that the picasaweb API is the only one that currently works for reasons that baffle me. However the binding libraries don't support OAUTH2 which is the only one supported by the REST API, so you have to make a manual workaround.

A quick look though seems to imply that google now does offer a two way sync between drive and photos (https://support.google.com/photos/answer/6156103?co=GENIE.Platform%3DAndroid&hl=en-GB) though I can't readily test it as I'm a google apps user

2 comments based on tests I just did:

a) You can upload to Google Drive and have them displayed in Google Photos if you turn the setting on as someone may have said above. With the exception that Panasonic RW2 raw files render in GDrive but do not show in GPhotos.

b) GDrive is quite a nice medium to display and share photos. But does not have all the polish of GPhotos. And does not have a free option for JPEGs (won't affect me - I want to archive my RW2s and processed TIFFs/DNGs).

But it would be nice to have rclone support if it can be figured out :)

Sadly the reclaim space feature wouldn't work since the photos are uploaded via google drive according to this

Hi, I know this is not a new topic, but is there any solution to the problem @TioBorracho was talking about? I'm facing the same situation, as related on Google Photos support forum topic.

Any help is appreciated!

Just seen this thread. You may be interested in the little app I put together to solve this:

https://github.com/Webreaper/GooglePhotoSync/

It was primarily designed for running on a Mac desktop (it sits in the menu bar, like the Google Drive app, and just uploads/downloads photos to GPhotos). But I also introduced a 'headless' mode, so you can run it on a box without a GUI. I have it running on my Synology NAS, and used it for a few months to upload pictures to the cloud.

The app currently uploads at full res but once I remember the setting I plan to make it an option to upload at the 16MP setting (which is free and doesn't count against your quota) - the idea being that I'll use my unlimited Amazon Cloud storage for proper backup (via rclone) and then have a low-res copy in GPhotos to take advantage of their advanced image search (at least until Amazon bring their intelligent search, aka Family Vault, to the UK).

You could also run leocrawford's excellent sync tool, which would work too.

As has been discussed, the 'two-way' sync between drive and GPhotos is massively limited:

- Despite uploading all photos into named, organised folders (albums) into GPhotos, if you make them available through GDrive they just appear in month/year folders, which is really annoying.

- As has been mentioned, uploading to GDrive and then making the pictures available in GPhotos will not upload them as 'free' resolution. However, you could periodically click the 'Recover storage' option after doing this, and it'll resize them for you. I did this earlier this week (having moved all my full-res images to Amazon Drive) and it resized 1.2TB of images (around 200k x 5MB-9MB images) in about 36 hours.

Perhaps a neat solution would be to see if there's an API for 'Recover Storage' - you could then run rclone to upload to Gdrive, and then automatically trigger a 'recover storage' process once it's uploaded. :D

Hi Mark Otway,

is there any chance to run GooglePhotoSync on a standard Linux-ARM box?

Thanks,

Livio

Don't see why not, it's just vanilla Java. I've run it on a Synology box which is basically Linux. Unfortunately though, it won't work any more because Google recently killed the API access for creating albums, and haven't replaced it. You just get a 500 error now. It's caused a great deal of consternation on the picasaweb API developer forum....

Any prediction for this feature?

I would also love to be able to rclone to/from Google Photos.

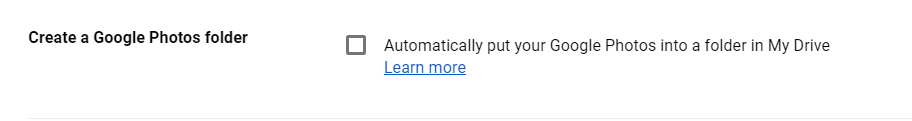

Many items which are in my Google Photos are not showing up or syncing via the Google Photos folder in my Google Drive as they're supposed to.

The "Create a Google Photos folder" option in Drive is apparently unreliable; it does not show all items from Google Photos.

It's a bit pointless requesting this feature when Google have shut down the API that allows you to write to GPhotos. Also, I can tell you from my experience of writing a client back when the API was still available, it's in no way rich enough or functional enough to be able to implement with rclone.

If you're putting photos in Gdrive and they're not showing up in GPhotos, you should raise a support ticket with Google. I have a quarter of a million photos in Gdrive and they all show up just fine in Photos.

@Webreaper Actually I'm more concerned with GP > GD ... My phone auto uploads all items to GP, then I use GD to sync them to my desktop. I noticed today that many items in my GP are not showing up in my GD.

Ah, yes, well, given the lack of support for GPhotos, that doesn't surprise me at all. I had all sorts of problems with GPhotos not backing up photos, and so on. In the end I've moved to an entirely Gdrive based workflow.

- Turn off Google photos backup

- Install this: https://play.google.com/store/apps/details?id=com.ttxapps.drivesync

- Configure to sync to a particular folder when any new pics are taken

- Use rclone to sync from Gdrive back down to my pc.

Works a treat, and a billion times more reliable (and faster too) than using Google's backup and autosync.

What about Google space usage? I suppose that uploading pictures through Gdrive will not be free, as it is when using the native Gphotos backup... am I wrong?

If you upload photos to either GDrive or GPhotos and they're less than 16MP, then they don't count against your storage tariff. However, I'm using this as a primary offsite backup, so need photos stored in full ('original', as Google calls it) resolution, so yes, it's not free.

@Webreaper -- That sync app looks cool; but in the past I've always had issues with 3rd party syncers, mainly their not running until I launch them manually. Does this reliably upload in the background immediately upon file creation? Thanks

I've been using it for about a year, and it's worked totally reliably. On my wife's phone I had to enable the constant notification for the instant upload to work, but that's not a big deal.

Many items which are in my Google Photos are not showing up or syncing via the Google Photos folder in my Google Drive as they're supposed to.

The "Create a Google Photos folder" option in Drive is apparently unreliable; it does not show all items from Google Photos.

@Webreaper – I'm having this same issue and it's driving me nuts. Have you made any progress on debugging? The checkbox seems to have a mind of its own.

If I can fix that functionality, I'll be able to use rclone to back up my Google Photos. Until then, I'm stuck with running Takeout every once in a while. 🙄

As I said earlier in the thread, do it the other way around. Push the photos into Drive, and use the "show drive photos in Google photos" option.

@Webreaper ... but if you "Show Google Drive photos & videos in your Photos library" won't it show ALL photos/videos from ALL folders in Drive? I really don't want that; I just want my CAMERA photos in Photos. Not all the junk from my millions of other folders.

Then you're out of luck....

I use rclone to backup google photos via drive. I find it works very well, but it is necessary to run rclone dedupe on it every now and again as it often seems to have duplicate file names in.

@Webreaper wrote:

It's a bit pointless requesting this feature when Google have shut down the API that allows you to write to GPhotos. Also, I can tell you from my experience of writing a client back when the API was still available, it's in no way rich enough or functional enough to be able to implement with rclone.

Do you think I should close this issue then now that the Google photos API is gone? When did that go - I seemed to have missed that?

The new Backup and Sync client from Google (which replaces the old Drive-only and Photos-only backup clients) interacts with Google Photos via a new Drive API in a very similar way to how "Computers" are backed up ("descendantOfRoot": false so it only shows under Computers in the Drive web UI and not under the normal Drive folder hierarchy) see #1773. This issue should probably be repurposed to implement that new Drive-based API (since it appears to only be some extra flags on existing Drive API calls to control send to Photos ("alwaysShowInPhotos": true) and original vs high-quality) instead of the old and dead picasa web albums API. Not sure if Google has documented the Drive-based Photos API anywhere yet though.

@neocryptek – Any more info on this part?

The new Backup and Sync client from Google (which replaces the old Drive-only and Photos-only backup clients) interacts with Google Photos via a new Drive API in a very similar way to how "Computers" are backed up ("descendantOfRoot": false so it only shows under Computers in the Drive web UI and not under the normal Drive folder hierarchy)

Not sure if I understand how Google Photos relates to descendantOfRoot.

@neocryptek interesting... If you come across any docs can you link them?

Good news on the horizon - TechCrunch

Direct link to API reference:

https://developers.google.com/photos/library/reference/

I'm using that API and I'm getting two URLs per picture:

productUrl: Contains a private link to the working Google Photos application which loads the photo in full resolution (which is publicly accessible by the way)

baseUrl: Contains a public link to a thumbnail ~500x300

I haven't found a way to get the full resolution picture URL from the API. If someone finds it, please let me know.

EDIT: Nevermind, you have to read the file metadata and append the required resolution to the baseUrl:

const { baseUrl, mediaMetadata } = media;

const { width, height } = mediaMetadata;

return `${baseUrl}=w${width}-h${height}`;
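The same idea in Python, as a minimal sketch. It assumes the media item has already been decoded into a dict with the `baseUrl` and `mediaMetadata` fields used in the snippet above; the function name `full_resolution_url` is made up for illustration.

```python
def full_resolution_url(media_item: dict) -> str:
    """Append the item's own width and height to baseUrl, as described above."""
    meta = media_item["mediaMetadata"]
    # int() guards against junk values; the dimensions may arrive as strings.
    width, height = int(meta["width"]), int(meta["height"])
    return f'{media_item["baseUrl"]}=w{width}-h{height}'


if __name__ == "__main__":
    item = {
        "baseUrl": "https://example.invalid/abc",  # placeholder URL
        "mediaMetadata": {"width": "3036", "height": "4048"},
    }
    print(full_resolution_url(item))
```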

Hi @amatiasq ,

Are you saying that you already implemented this new remote?

@prateeksriv No, I was creating a standalone sync program from Google Photos API to Amazon S3 when I found this project. I don't know how to create a remote for it.

After checking through the Google Photos API, I propose the following ideas for rclone to fully support two-way sync with Google Photos:

A: from local upload to GP

- Put all files in a Year/Month directory structure

- (Optionally) upload at full res or free size

- (Optionally) create albums based on the local directory structure...

B: from GP download to local

- If album information is present, add the downloaded photo to a local folder named after the album

- If no album is available, just maintain the GP structure (Year/Month)

What do you guys think?
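Part B of the proposal could be sketched roughly as follows. This is not rclone code: `local_path_for` is a hypothetical helper, and it assumes the creation time arrives as an ISO-style UTC string such as `2018-07-13T23:17:56Z`.

```python
from datetime import datetime
from pathlib import PurePosixPath
from typing import Optional


def local_path_for(filename: str, creation_time: str,
                   album: Optional[str] = None) -> PurePosixPath:
    """Use the album folder when an album title is known, else Year/Month."""
    if album:
        return PurePosixPath(album) / filename
    # Assumed timestamp layout; a real client may need to handle variants.
    taken = datetime.strptime(creation_time, "%Y-%m-%dT%H:%M:%SZ")
    return PurePosixPath(f"{taken.year:04d}") / f"{taken.month:02d}" / filename


if __name__ == "__main__":
    print(local_path_for("IMG_0001.jpg", "2018-07-13T23:17:56Z"))
    print(local_path_for("IMG_0001.jpg", "2018-07-13T23:17:56Z", album="Holiday"))
```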

That's exactly how I did it in my sync utility (mentioned earlier in the thread). Would be like a very simple 1-directory-deep file sync.

@lssong99 I really like the approach you've sketched out. My only suggestion would be to make the formatting of the directory & album names minimally customizable: e.g. yyyy/MM, yyyy-MM-dd, etc.

Also, AFAIK GP does not allow for nested folders, so the albums named Year/Month would not actually be a subfolder. Perhaps that could be glossed over from local -> remote / remote -> local, but should be kept in mind.
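Both points can be illustrated with a small sketch. Everything here is hypothetical (the function names, the `" - "` separator, the default pattern); the patterns use Python's strftime codes, so `yyyy/MM` becomes `%Y/%m` and `yyyy-MM-dd` becomes `%Y-%m-%d`.

```python
from datetime import date
from pathlib import PurePosixPath


def album_name_for(relative_dir: str, separator: str = " - ") -> str:
    """Flatten a nested local directory such as 'Trips/2018/Rome' into one album title."""
    parts = [p for p in PurePosixPath(relative_dir).parts if p not in ("/", ".")]
    return separator.join(parts)


def date_folder(day: date, pattern: str = "%Y/%m") -> str:
    """Format the date-based folder name with a user-supplied strftime pattern."""
    return day.strftime(pattern)


if __name__ == "__main__":
    print(album_name_for("Trips/2018/Rome"))
    print(date_folder(date(2018, 7, 13)))
    print(date_folder(date(2018, 7, 13), "%Y-%m-%d"))
```

Flattening like this can make two different local directories produce the same album title, so a real implementation would need some way to keep the mapping unambiguous.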

For the mapping, you need to do it in a way that remains distinct, otherwise two photos on one side might resolve to the same name on the other side. My Python code to do this is available here if needed:

Looks like Google is coming up with a proper API interface:

https://developers.google.com/photos/library/guides/get-started

The Go library is here: http://godoc.org/google.golang.org/api/photoslibrary/v1

You can find a couple of example apps: https://godoc.org/google.golang.org/api/photoslibrary/v1?importers

Probably the best plan is to use the id field as the filename. As mentioned in a previous comment (https://github.com/ncw/rclone/issues/369#issuecomment-391292753) you essentially request the baseUrl with the width and height from the metadata field appended.

{
  "mediaItems": [
    {
      "id": "super_long_string",
      "productUrl": "https://photos.google.com/lr/photo/super_long_string",
      "baseUrl": "https://lh3.googleusercontent.com/lr/private_unauthenticated_long_string",
      "mimeType": "image/jpeg",
      "mediaMetadata": {
        "creationTime": "2018-07-13T23:17:56Z",
        "width": "3036",
        "height": "4048",
        "photo": {
          "cameraMake": "Google",
          "cameraModel": "Pixel",
          "focalLength": 4.67,
          "apertureFNumber": 2,
          "isoEquivalent": 494
        }
      }
    }
  ]
}
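As a rough sketch of the "id as filename" idea, the response above could be turned into file names like this. The helper `filenames_by_id` and the small extension table are assumptions made for illustration, not part of any Google or rclone API.

```python
import json

# Assumption: a small hand-written MIME-to-extension table; extend as needed.
EXTENSIONS = {"image/jpeg": ".jpg", "image/png": ".png", "video/mp4": ".mp4"}


def filenames_by_id(response_text: str) -> dict:
    """Map each media item id to a file name built from the id and MIME type."""
    items = json.loads(response_text).get("mediaItems", [])
    return {
        item["id"]: item["id"] + EXTENSIONS.get(item.get("mimeType", ""), "")
        for item in items
    }


if __name__ == "__main__":
    sample = '{"mediaItems": [{"id": "super_long_string", "mimeType": "image/jpeg"}]}'
    print(filenames_by_id(sample))
```

Ids are unique, so names built this way cannot collide, at the cost of not being human-readable.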

If sync'ing wouldn't you want to use the original filename, rather than create one based on an ID?

I didn't see a way to get to the original filename. Do you @ebridges?

Perhaps I'm misunderstanding! My understanding is that since rclone is a sync library the client would be reading a list of photos as gathered from local disk and then uploading them to GPhotos. As such, the client for the GPhotos storage system would have the original filenames. Please advise if I'm missing your point.

My use case is copying from Google Photos to S3, essentially like the http source in rclone already.

Regardless, I don't think gphotos preserves filenames on upload.

Gphotos does preserve the filename. That's how I was able to write the Google Photos sync tool I mentioned earlier in the thread.

By the way, if you just want to copy from Gphotos to S3, and you don't care about filenames or folders, then just enable "Show Google photos in Drive" and use rclone to sync from Gdrive to S3.

I don't see filename in the API. Can you link please?

https://developers.google.com/photos/library/reference/rest/v1/mediaItems#MediaItem

Ah, no idea about that. My tool used the old API. I was more meaning that Gphotos does store the filename. Whether the new API exposes that is a different question. Sorry!

I'm sure that Google stores the original filename of each media item. It shows when downloading the file and in the info panel.

I found a feature request asking for the original filename to be included in the media item object (https://issuetracker.google.com/issues/79656863), and it includes an ugly workaround. The response for the baseUrl carries a header containing the actual original file name, so requesting a 1x1 pixel image could do the trick. The header looks like content-disposition:inline;filename="signal-2016-08-09-211213.jpg"
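Extracting the name from such a header is straightforward. The sketch below only parses a header value that has already been fetched; it makes no request, and the helper name `filename_from_content_disposition` is made up for illustration. It relies on the standard library's `email.message.Message` to handle the quoting.

```python
from email.message import Message
from typing import Optional


def filename_from_content_disposition(header_value: str) -> Optional[str]:
    """Return the filename parameter of a Content-Disposition value, if any."""
    msg = Message()
    msg["Content-Disposition"] = header_value
    return msg.get_filename()  # None when no filename parameter is present


if __name__ == "__main__":
    header = 'inline;filename="signal-2016-08-09-211213.jpg"'
    print(filename_from_content_disposition(header))
```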

They fixed it! :)

https://developers.google.com/photos/library/support/release-notes#2018-07-31

Can somebody summarize where are we? Is the API usable at least in theory to meet the original request (upload to Google Photos)?

I haven't uploaded any photos, but I have consumed the API and it worked well enough. I see endpoints for uploading new content but I haven't used them.

I'm pretty sure the API is ready for this feature.

I'm pretty sure the API is ready for this feature.

Anyone want to work on it?

I haven't found any way to upload pictures in "high quality" (the unlimited storage offer) through the Google Photos upload API, other than calling the "Save some space" trigger, which converts any already uploaded pictures to "high quality" (this can take days).

Any alternatives?

@aslafy-z correct, I don't think there's any way to upload via the API as "high quality": https://developers.google.com/photos/library/guides/api-limits-quotas

"All media items uploaded to Google Photos using the API are stored in full resolution at original quality. They count toward the user’s storage.

Note: If your uploads exceed 25MB per user, your application should remind the user that these uploads will count towards storage in their Google Account."

I'd only want original quality. But for anyone who does want "high quality", they could either use ImageMagick/GraphicsMagick themselves or, if we wanted to be really fancy, have rclone call either of those if available to shrink pics before upload. 😁
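To give an idea of what "shrink before upload" would involve, here is a sketch that computes target dimensions under a megapixel budget while keeping the aspect ratio. The 16 MP default is taken from the figure quoted in this thread; whether Google would then treat such an upload as free is not something this code can answer. The function name is hypothetical.

```python
import math
from typing import Tuple


def fit_within_megapixels(width: int, height: int,
                          max_megapixels: float = 16.0) -> Tuple[int, int]:
    """Scale (width, height) down so the pixel count fits the budget."""
    max_pixels = max_megapixels * 1_000_000
    if width * height <= max_pixels:
        return width, height  # already small enough, leave untouched
    scale = math.sqrt(max_pixels / (width * height))
    # Round down so the result never exceeds the budget.
    return max(1, math.floor(width * scale)), max(1, math.floor(height * scale))


if __name__ == "__main__":
    print(fit_within_megapixels(6000, 4000))
    print(fit_within_megapixels(3036, 4048))
```

The resulting dimensions could then be handed to whichever resizing tool is available.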

My use case would be the opposite: only upload "for free". Do you know if resizing (via ImageMagick) before uploading would still be considered "free storage" by Google? Are the specs for resolution, compression rate and file size needed to qualify as "free storage" documented anywhere?

I always assumed that only Google-authored tools would be able to flag images as "free" when uploading them, and that manually uploading any files, even small ones, would always count toward your quota...

No, it doesn't work like that. If you upload images that are small enough ("less than 16MP" in Google parlance) then they don't count against your quota. They don't have to be converted by Google, or flagged explicitly.

The biggest challenge is that nobody - to my knowledge - has ever found a definitive answer to the question of "what does Google count as 16MP". From a bit of googling, that seems to mean "the longest side of the image exceeds 2048 pixels".
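To make the "16MP" idea concrete, here is a minimal Python sketch of a pre-upload size check. It assumes the limit is a plain pixel count (width times height) of 16 million, which is only one possible reading. As noted above, nobody in this thread has a definitive definition, so the threshold and the function names are hypothetical.

```python
def megapixels(width: int, height: int) -> float:
    """Return the pixel count of an image in megapixels (millions of pixels)."""
    return width * height / 1_000_000


def fits_limit(width: int, height: int, limit_mp: float = 16.0) -> bool:
    """True if the image is at or below the ASSUMED megapixel limit."""
    return megapixels(width, height) <= limit_mp


def scale_to_limit(width: int, height: int, limit_mp: float = 16.0):
    """Return (width, height) scaled down to fit the limit, keeping aspect ratio."""
    if fits_limit(width, height, limit_mp):
        return width, height
    # Pixel count scales with the square of the linear factor.
    factor = (limit_mp * 1_000_000 / (width * height)) ** 0.5
    # int() truncates, so the result never exceeds the limit.
    return int(width * factor), int(height * factor)
```

The target dimensions from `scale_to_limit` could then be handed to a resize tool such as ImageMagick. Whether Google would actually treat the result as "free" is not something this sketch can answer.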

Is this something that can be added? I'm not using the free storage, I want to store my photos in the original format. My current use case is actually that I want to sync all of my photos from one Google account to another.

Have you tried the library share feature? (Although you can only share with a max of one account)

Thanks @ammgws !

Anyone want to work on it?

@ncw I'm new to Go and this codebase, but I'd be willing to give it a shot if no one has started already

Really love the app btw

@skennedy if you (or anyone else) would like to give it a go then I'd be delighted!

See the CONTRIBUTING guide and in particular the writing a new backend section :-)

It is likely this would need a custom set of integration tests as this isn't a "general purpose" remote, only being able to store photos.

a Go library written for this

Any news on this?

https://www.androidpolice.com/2019/06/12/google-drive-photos-sync/ as a heads-up for those relying on that as a fix..

Well, that sucks big-time. :(

Well actually things are already very bad so it's hard to suck more than it does now. Actually it might be better. Why it sucks? Photos->Drive was actually the only way I know to bulk-delete things in Photos (as in you enable Photos to be shown in Drive and then you have some folders in Drive just by year where you can very well delete stuff and it'll get deleted in Photos). Otherwise except for shift-click or some other nonsense I don't know how to delete stuff in Photos.

NOW for the other direction (Drive->Photos) it doesn't work at all for Gsuite users! And for the "normal" (usually free, 15GB) users where it works it is next to useless as they'll run out of space immediately if uploading with rclone to gdrive (with the idea that the pictures will end up in Photos as well).

How it can be better? "If you really want to move photos and videos between Drive and Photos, Google will have a new option in Photos called "Upload from Drive." That lets you manually choose images in Drive to import into Photos. That's better than nothing, I suppose." This could be very useful, assuming you don't need to manually do much and that you can just point it to a big folder in Drive (uploaded with rclone) and tell it: put it to Photos too!

I don't see filename in the API. Can you link please?

Just going back to this conversation earlier in the thread - I just tested the API, and if you use the Media Item 'get' endpoint, it definitely returns the filename.

https://developers.google.com/photos/library/reference/rest/v1/mediaItems/get

Here's a snippet of output. So doing the sync should be straightforward. That said, they seem to only return the creation date, not the last-update date, which is an impediment to writing decent sync code.

    {
      "id": "some unique image ID",
      "productUrl": "https://photos.google.com/lr/photo/some unique ID",
      "baseUrl": "https://lh3.googleusercontent.com/lr/blah blah blah some huge long ID",
      "mimeType": "image/jpeg",
      "mediaMetadata": {
        "creationTime": "2017-05-24T19:08:27Z",
        "width": "3024",
        "height": "4032",
        "photo": {
          "cameraMake": "samsung",
          "cameraModel": "SM-G935F",
          "focalLength": 4.2,
          "apertureFNumber": 1.7,
          "isoEquivalent": 100
        }
      },
      "filename": "20170524_200827.jpg"
    }
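As an illustration of how a sync tool might consume a response like the one above, here is a minimal Python sketch that pulls out the fields relevant to syncing. The sample document is a trimmed copy of the snippet above. The function name and the returned dictionary layout are hypothetical, and the timestamp parsing assumes the second-precision `...Z` format shown in the sample.

```python
import json
from datetime import datetime, timezone

# Trimmed copy of the sample response quoted above.
SAMPLE = """
{
  "id": "some unique image ID",
  "mimeType": "image/jpeg",
  "mediaMetadata": {
    "creationTime": "2017-05-24T19:08:27Z",
    "width": "3024",
    "height": "4032"
  },
  "filename": "20170524_200827.jpg"
}
"""


def parse_media_item(raw: str) -> dict:
    """Extract the fields a sync tool would care about from a media item."""
    item = json.loads(raw)
    meta = item["mediaMetadata"]
    # Assumes second-precision UTC timestamps, as in the sample.
    created = datetime.strptime(
        meta["creationTime"], "%Y-%m-%dT%H:%M:%SZ"
    ).replace(tzinfo=timezone.utc)
    return {
        "filename": item["filename"],
        "created": created,
        # Width and height arrive as strings in the sample, so convert them.
        "width": int(meta["width"]),
        "height": int(meta["height"]),
        "mime_type": item["mimeType"],
    }


info = parse_media_item(SAMPLE)
print(info["filename"], info["created"].isoformat())
```

Note that only a creation time is available here, so a tool built this way has no field telling it whether an item changed after upload.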

Just want to chime in & re-share more clearly what @xeor already obtusely shared: Google is deprecating the Google Photos <-> Google Drive sync capability (in mother frakking less than a month! frelllllll!!!).

This is what I've been using to access my Google Photos collection, & has been my primary use of rclone. All of a sudden native Google Photos support has become a lot more important to me. I went hunting through issues to find Google Photos support, after I heard of this new addition to the gcemetary & just want to clearly share the state of affairs for this ticket that I suddenly care much more about.

@Webreaper thanks for finding that filename. I'd challenge someone to try uploading two different files with the same filename & see what happens, to be sure we have our bases covered.

I feel like I may have reset my camera in the past & ended up with multiple different files having the same file name, & gphotos dealing with it ok. I don't know how/if rclone could/should handle that!

The way I handled it in my sync app using the old API was to assume that there's a 1:1 relationship between folders and albums. However, you're right, I think it's possible to have multiple files with the same filename in a single folder/album. We'd probably need to have the same sort of functionality as the GDrive dedupe in rclone (since Drive can also have multiple files with the same name in a single folder).

Yep. I came to the same conclusion.

Thing is... It appears that although drive and photos won't sync, their docs sure do imply that the user can sync directly to both services. So it may all be okay in the end.

Switching off drive to photos syncing is going to affect how I backup my google photos, so I think it is time I put some effort into this!

It looks like google have now got a decent API

https://developers.google.com/photos/library/guides/overview

There doesn't appear to be a go module to help with that as google have withdrawn https://google.golang.org/api/photoslibrary/v1 that https://github.com/nmrshll/google-photos-api-client-go is based on.

However the API is simple enough that I propose building a google photos backend just using the REST API.

If anyone else is already working on this then please let me know and we can collaborate.

Nick, it's great to see you prioritizing this work! Do you intend to make this a two way backend? I would like to use rclone to upload new local photos from my camera into google photos.

+1 ! It would be great to be able to use rclone instead of the clunky and unreliable web interface to download/upload photos!

My first goal will be a read only backend at which point I'll release a beta. I would like it to be a full read-write backend for release if possible.

What the rclone filesystem should look like for google photos needs some discussion.

Google photos aren't stored in directories like a normal file system. The natural way to show them would be all 50,000 files in a single directory which isn't very useful.

There are albums (a photo can appear in several of them), and there are ways of searching through the photos; of these, the date-based ones look the most useful.

So I think the gphotos backend should have a synthetic root directory, something like this

- media
  - all
    - image
    - image
  - by-year
    - 2019
      - image
      - image
    - 2018
      - image
      - image
  - by-year-month
    - 2019-01
      - image
      - image
    - 2019-02
      - image
      - image
  - by-media-type
    - photo
    - video
  - other filters here
- album
  - name of album
    - picture
    - picture
  - name of album
    - picture
    - picture
- shared albums
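A rough Python sketch of how one media item could be mapped onto the date-based parts of the proposed layout. This is only an illustration of the idea, not rclone's implementation; the function name is hypothetical and the directory names are taken from the proposal above.

```python
from datetime import datetime


def synthetic_paths(filename: str, created: datetime) -> list:
    """Return the virtual paths under which one media item would be listed."""
    return [
        f"media/all/{filename}",
        f"media/by-year/{created:%Y}/{filename}",
        f"media/by-year-month/{created:%Y-%m}/{filename}",
    ]


for path in synthetic_paths("20170524_200827.jpg", datetime(2017, 5, 24, 19, 8, 27)):
    print(path)
```

The same item appears under several directories, which is why the root is described as synthetic: the paths are views computed from the creation date rather than real storage locations.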

Flags could also (or in addition) be provided for the filters.

What would people think if uploads were only possible to albums? That would simplify the syncing logic as these behave the most like directories.

For me, uploading only to albums makes the most sense. When I wrote the sync (https://github.com/Webreaper/GooglePhotoSync) for the old Photos API, that's how I did it.

Interestingly this is what I used to want, but the nature of Google Photos has changed my use case. I dump everything into Google now as the primary as our phones are already doing this - I actually like just dragging files into the browser and forgetting about it. This comes after years of trying to do it "well" myself. However, I would like to have a local backup arranged by year... ideally. This is what I have been trying to find a solution to.

Interestingly this is what I used to want to but the nature of Google Photos has changed my use case. I dump everything into Google now as the primary as our phones are already doing this - I actually like just dragging files into the browser and forgetting about it. This comes after years of trying to do it "well" myself.

Just dumping stuff in would argue for a /upload folder which doesn't show any files except those which have been uploaded recently. This isn't very sync friendly though but would be great for one off dumps of files. Maybe rclone could search for a duplicate before uploading.

I'm trying to avoid rclone having a folder with all the google media in a flat directory structure which might be millions of items.

However, I would like to have a local backup arranged by year... ideally. This is what I have been trying to find a solution to.

That is my preference for backing up google photos.

Presumably for those who favour backing up by year - and don't really care about albums - then they would organise their local structure into:

- root
  - 2019
    - picture1
    - picture2
  - 2018
    - picture3
    - picture4

And then sync using the album-based structure. They would end up with an album for each year. But that would be a reasonable approach?

Also, albums being a virtual concept is actually quite useful. Photos are photos - albums have a one to many concept and work well this way.

I have found that having photos organized in albums by year can still leave one with too many photos in each album. The folder structure that I've found to work best is like this:

- root
  - 2019
    - 2019-01-01
      - picture1
      - picture2
      - picture3
    - 2019-01-02
      - picture1
      - picture2
      - picture3

etc...

A primary issue that I faced in trying to keep photos in sync with Google Photos is that their API does not (currently) offer a way to delete images. https://issuetracker.google.com/issues/109759781

I have found that having photos organized in albums by year can still leave one with too many photos in each album. The folder structure that I've found to work best is like this:

- root - 2019 - 2019-01-01

That is the exact scheme I use for my photo archive so I'll implement that for definite!

A primary issue that I faced in trying to keep photos in sync with Google Photos is that their API does not (currently) offer a way to delete images. https://issuetracker.google.com/issues/109759781

I failed to notice that :-(

This means that this backend can't possibly be a full featured backend. That is probably OK but it will make writing integration tests very difficult if you can't upload photos and then delete them.

I'm going to concentrate on two use cases

- backing up your gphotos

- uploading new photos into either an album or a general /uploads directory.

- If we can't delete then it can't be the destination for rclone sync, only rclone copy.

I'd enjoy helping out on that -- I do have some experience working with the API and am always looking for ways to brush up on Go.

How do you normally plan features like this? Is it just via this thread?

I have found that having photos organized in albums by year can still leave one with too many photos in each album. The folder structure that I've found to work best is like this:

- root - 2019 - 2019-01-01

That is the exact scheme I use for my photo archive so I'll implement that for definite!

+1 to that! ;-)

- backing up your gphotos

- uploading new photos into either an album or a general /uploads directory.

- If we can't delete then it can't be the destination for rclone sync, only rclone copy.

That would work just great for my case: not having photos deleted in Google Drive after deleting them locally and then sync'ing is not such a big deal.

One observation: how is the "picture quality" thing going to be handled? You folks know GooglePhotos only allow unlimited photos (ie, without counting towards your total Google quota) in "High Quality", not in "Original" form, right? Additionally, wouldn't that change the size of the pictures on the remote and force a (re)copy every time? (I'm not familiar with the Google Photos API, perhaps these are non-issues -- I'm just bringing them up so they can be discussed).

One observation: how is the "picture quality" thing going to be handled? You folks know GooglePhotos only allow unlimited photos (ie, without counting towards your total Google quota) in "High Quality", not in "Original" form, right? Additionally, wouldn't that change the size of the pictures on the remote and force a (re)copy every time? (I'm not familiar with the Google Photos API, perhaps these are non-issues -- I'm just bringing them up so they can be discussed).

I was imagining "high quality" vs "original" would be a command-line option, but I read this in the API docs:

All media items uploaded to Google Photos using the API are stored in full resolution at original quality. They count toward the user’s storage.

Note: If your uploads exceed 25MB per user, your application should remind the user that these uploads will count towards storage in their Google Account.

Not uploading duplicates is a problem I haven't figured out yet.

I'd enjoy helping out on that -- I do have some experience working with the API and am always looking for ways to brush up on Go.

Great!

How do you normally plan features like this? Is it just via this thread?

Normally one issue per feature. If it gets really complicated then we can make a design doc.

Not uploading duplicates is a problem I haven't figured out yet.

I worked around this with a combination of file-size, filename, created date and so on, to identify uniqueness within an album. Assuming your local storage is sane, it shouldn't be too difficult. The downside is that presumably if there's no 'delete' API, you also can't 'overwrite' a photo, which is a real pain.
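The kind of uniqueness check described above could be sketched in Python like this. It is an assumption-laden illustration: the dictionary keys (`filename`, `size`, `created`) and both function names are hypothetical, and it presumes the remote listing can supply a size, which the sample API response earlier in the thread does not show.

```python
def identity_key(item: dict) -> tuple:
    """Combine filename, size and creation date into one comparison key."""
    return (item["filename"], item["size"], item["created"])


def files_to_upload(local_files: list, album_items: list) -> list:
    """Return the local files with no matching item already in the album."""
    existing = {identity_key(item) for item in album_items}
    return [f for f in local_files if identity_key(f) not in existing]


album = [{"filename": "a.jpg", "size": 100, "created": "2019-01-01T10:00:00Z"}]
local = [
    {"filename": "a.jpg", "size": 100, "created": "2019-01-01T10:00:00Z"},
    {"filename": "a.jpg", "size": 250, "created": "2019-03-02T09:30:00Z"},
]
print(files_to_upload(local, album))  # only the second a.jpg is new
```

Because the key includes size and date, two different photos that share a filename (for example after a camera counter reset) are treated as distinct.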

The other problem I had with the old API (and it looks to persist in the new API - I thought these guys at Google were supposed to be good at coding/design?!?) is that there's no lastUpdated date or other metadata, so even if there is an overwrite API, it's really hard to figure out when to apply it without re-uploading everything from scratch every time. In the end, I did something super-hacky, writing the last-updated date to the EXIF/IPTC tags of the photo before uploading, and then querying that when I decided whether a photo had changed and needed uploading. It's pretty horrible though.

All media items uploaded to Google Photos using the API are stored in full resolution at original quality. They count toward the user’s storage.

Oh crap! :-( This will make it unfeasible for my usage here, and I guess for almost anyone :-(

@ncw: would it be possible to use this new feature to copy files between different Google Photo accounts? If yes, I would at least be able to work around the limitation by using my unlimited EDU account...

This will make it unfeasible for my usage here, and I guess for almost anyone

Not sure about that. I pay £7 a month for my Google One account and that gives me 2TB for backing up all my stuff, including photos. So it'll be extremely useful. ;)

Not sure about that. I pay £7 a month for my Google One account and that gives me 2TB for backing up all my stuff, including photos. So it'll be extremely useful. ;)

That's just because you are not an old Scrooge^H^H^H^H^H^H^H^H^H^H frugal person like me ;-)

Seriously, I have a separate, unlimited EDU account and I plan to make full use of it before paying Google anything :-)

If anyone wants to have a go, here is a prototype google photos backend. It is read only for the time being. I implemented a few different ways of reading the media.

https://beta.rclone.org/branch/v1.48.0-003-g8551241c-gphotos-beta/ (uploaded in 15-30 mins)

Fixed the Entry doesn't belong in directory bug:

https://beta.rclone.org/branch/v1.48.0-003-gf2c25527-gphotos-beta/ (uploaded in 15-30 mins)

Just tried it, and it works perfectly for my use case! Currently (finally) in the process of backing up all my pictures from Google Photos. Thanks a lot!

I have tried it too and it looks great. How does it currently manage changes to the local file system? If I change the date on a photo in GP will this "move" to the new year folder locally? I thought I saw that happening but cannot now reproduce.

Fixed downloading videos (rclone was downloading them as images).

So if anyone downloaded videos, please delete them and re-download!

https://beta.rclone.org/branch/v1.48.0-006-g8aea25d7-gphotos-beta/ (uploaded in 15-30 mins)

If I change the date on a photo in GP will this "move" to the new year folder locally? I thought I saw that happening but cannot now reproduce.

Yes, it should do if you can change the Created date on Google Photos (I don't know whether that is possible or not).

So, I noticed that the file sizes of the downloaded media differ hugely from the sizes listed on Photos itself. For example, the photos are generally less than half the size (but at the same resolution), and one 2160p HEVC video with a bitrate of ~42 mbit/s and a size of 380 MB changed to 2.7 MB of 1080p, ~180 kbit/s H.264 video. I backup everything in original quality. Did I miss an option to change the download quality? Does the API only serve compressed files? Looking forward to hearing some information on this.

I am also following and looking forward to this. I would like to be able to use the Synology to backup Google Photos

Oh man!

Nearly started to investigate in the API and go for myself but luckily found this first.

You saved me many many hours.

Thx a lot!

So, I noticed that the file sizes of the downloaded media differ hugely from the sizes listed on Photos itself. For example, the photos are generally less than half the size (but at the same resolution), and one 2160p HEVC video with a bitrate of ~42 mbit/s and a size of 380 MB changed to 2.7 MB of 1080p, ~180 kbit/s H.264 video. I backup everything in original quality. Did I miss an option to change the download quality? Does the API only serve compressed files? Looking forward to hearing some information on this.

Here is what the docs say about downloading

https://developers.google.com/photos/library/guides/access-media-items#base-urls

Rclone is using =d to download images.

- This strips EXIF location (according to the docs and my tests)

- The download direct from google photos website has the EXIF location

- See: https://issuetracker.google.com/issues/112096115

Rclone is using =dv to download videos

- This downloads a really compressed version of the video compared to downloading it via the google photos web interface

- The docs don't state the video isn't the original

- See: https://issuetracker.google.com/issues/113672044
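For readers unfamiliar with the base URL scheme, here is a small Python sketch of the suffix selection described above: `=d` appended for images and `=dv` for videos. The function name and the example URL are hypothetical, and this is not rclone's actual code.

```python
def download_url(base_url: str, mime_type: str) -> str:
    """Append the download parameter matching the media type to a base URL."""
    # "=dv" requests the video download, "=d" the image download,
    # as described in the comment above.
    suffix = "=dv" if mime_type.startswith("video/") else "=d"
    return base_url + suffix


print(download_url("https://example.invalid/base-url-id", "image/jpeg"))
print(download_url("https://example.invalid/base-url-id", "video/mp4"))
```

As the issues linked above describe, neither suffix appears to return the untouched original, so this only selects between the two documented download variants.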

Unfortunately I think the Photos web UI must be using a different API, one which isn't publicly available.

This is all rather annoying - if you can't download the originals via the API I'm wondering whether this backend will be useful at all. I guess it would make a backup of last resort.

@ncw thanks for the information. Yeah, without having the same quality as Google Takeout, it doesn't really make a lot of sense. I hope Google extends/improves the API to fix this shortcoming - it's still our own data after all, so why shouldn't we be allowed to download it in its original quality?

I've commented on the issues and I've also signed up to be a Google Photos partner.

So should I release this backend?

- I can put lots of warnings in the docs about it not downloading original quality pics

- But there will always be people that don't notice those warnings

So worth releasing anyway?

If there are warnings in the backend setup, then people can't really claim they haven't seen them, as configuring the back-end is pretty interactive.

I haven't been keeping up, but is this download-only for now? My interest is really to upload to GPhotos, although given all the restrictions (can't delete, etc) I'm going to stick with Amazon Prime Photos for my 'original' photos backups.

I second those comments. Frankly I don't "trust" Google with my originals. They have shut down too many services and are too free with reducing quality for me to trust them with data that I intend to keep long-term (lifetime). I do however love their interface and would like to use them as one of the ways to view/browse my photo collection which is primarily housed and protected in other ways.

Well, I'd probably not go that far. I trust Google with my originals - they've been my go-to for Cloud backup of photos for years. My problem was that when I hit > 1TB, it was going to cost me $80/month to go to the next plan. So I switched to Prime Photos, where I get unlimited original storage of photos, for free. I currently have 2TB of pics on there. Sadly, no rclone support. ;)

What I use Google Photos for is their search. I resize all my pictures and upload 800x600 thumbs to Google Photos, and their image recognition is so smart that it helps me find things that aren't tagged or named (e.g., search for "red car"). Currently, I can just rclone the thumbs to GDrive, and they feed into GPhotos automatically. In future, I'd need some sort of sync tool to put them in GPhotos directly. So annoying!

It's not so much that I worry about them losing data as them making it inaccessible or unusable. I've got no problem storing data on Drive because it has an industry-standard way of accessing the data and is easy to get the data out of.

I spent loads of time tagging pictures in Picasa and uploading to picasaweb only to find that Google Photos doesn't respect any of those tags. I did find a 3rd party program that would write the tags Picasa created into the metadata of the pictures though. Now I'm worried to use Photos for tagging because I haven't seen a good way to export that info into a standard format a year or two from now when they decide to move onto the next iteration of photo storage. There doesn't seem to be any way to update the metadata on a photo if you for instance change the capture date or tag someone. Given that, how can we invest time in creating that info inside Photos?

It's of doubtful usage, to me at least. Is there a way to mark a backend as "alpha" or something?

I think you should still publish it. For me though I need the ability to write to photos instead of read. I'm going to sync to drive myself and then upload to photos for the app interface.

I spent loads of time tagging pictures in Picasa and uploading to picasaweb only to find that Google photos doesn't respect any of those tags.

Google Photos stores the IPTC tags - if you upload a photo to it, and then re-download, the tags are still there. It makes me sad that GPhotos doesn't read those tags and index them for searching, but what would I know.

It stores them but do any of the changes that you make inside the photos app make it into the metadata? That's why I'd want to sync TO photos instead of FROM photos. To me it's a read only interface because any changes there are not persisted as far as I know.



I'm confused. When July 10 hits, will there be no option for the Google folder?

@calisro wrote:

I think you should still publish it. For me though I need the ability to write to photos instead of read. I'm going to sync to drive myself and then upload to photos for the app interface.

OK I'll publish it :-)

I'd like a bit of advice on the upload...

I'm planning that you can make albums in /albums with mkdir and upload to them.

If you upload to an album then the sync process will work pretty naturally, except for the fact that there can't be a nested directory structure within an album.

I did have a plan where you'd be able to copy files to /uploads. These will remain in uploads for the duration of the run of rclone but will disappear afterwards, so acting like an uploads directory for an FTP server. The files would then appear in the /media directories according to their creation date.

This will solve just general uploads but it will make repeated syncing impossible without making lots of duplicates. That has made me think this isn't such a good idea and I should scrap it.

General media uploads can always be done to an album, then the album can be removed and the media will remain.

Is upload to an album sufficient for everyone's needs?

Any thoughts?

I don't use the album feature really at all. I was always concerned with spending time making albums only to have them go away if Google made changes or if I wanted to move photos elsewhere. And given that it's hard to extract stuff now (except using Takeout), I doubt I'd use them.

For my needs, I'm looking to just copy (or preferably sync if possible) many thousands of photos to the photos app so they are presented in the GUIs. The directories I'm trying to sync though will have nested folders. I'm not really concerned about losing the folder names since I'm not using 'Google Photos' as the source of record but rather just a GUI. The key for me is that the date data is correct based on the photo metadata, which I think Google Photos will implicitly scrape from the EXIF data after upload.

Additionally, it would be nice to upload these to lower resolution since it isn't the source of record and I can save space on my account. But even if they are full quality, that works as well.

I think the most important aspect first and foremost is the ability to 'sync' correctly without creating large duplicates.

@ncw Thank you for making this backend and publishing it. GPhotos is a decent photos bucket, but I really want a backup of my photos on a different provider / local drive of some quality. I personally don't need the original quality, or even metadata. I just don't want to lose them.

Uploads to albums sound fine to me...

Per my comment earlier in the thread, albums are nice if they're automatically created based on the folders in which the uploading images are stored locally. So if I have:

photo

- Birthday

- Christmas

- New Puppy

the upload would upload the photos into those 3 albums. This is exactly how the folders within GDrive are represented today - each folder is mapped to an Album.

There are a couple of reasons it would be good to do this:

- For those of us who have photos organised into album folders, the same albums would be created in GPhotos.

- For people who just want a single upload of all the photos, they could either have one huge album, or put all their images in the root 'photo' folder, and they'll all just be uploaded without an album.

It's worth mentioning that when you actually upload to GPhotos (rather than GPhotos being a 'view' on a GDrive folder) I believe there's a limit of something like 1,000 photos in an album. So uploading hundreds of thousands of photos to GPhotos without segregating them into albums might be problematic.

@Webreaper I like that approach. I'd also like a flag though to disable album creation but I could live without a flag as well.

There is no limit to upload to gphotos (non-album) but there is an 'album' content limit yes. :(

Here is the latest with a directory listing bug fixed (it was skipping the first item) and the ability to make albums and upload to albums. Note you can't remove albums (it isn't in the api) and you can't upload to albums you didn't create with rclone (also not in the API).

Note that uploads count against your drive quota.

https://beta.rclone.org/branch/v1.48.0-010-g63014f46-gphotos-beta/ (uploaded in 15-30 mins)

@calisro wrote:

For my needs, I'm looking to just copy (or preferably sync if possible) many thousands of photos to the photos app so they are presented in the GUIs. The directories I'm trying to sync though will have nested folders. I'm not really concerned about losing the folder names since I'm not using 'Google Photos' as the source of record but rather just a GUI. The key for me is that the date data is correct based on the photo metadata, which I think Google Photos will implicitly scrape from the EXIF data after upload.

Sync should work to albums now. However they won't support nested folders...

See below for an idea on how this might work.

Additionally, it would be nice to upload these to lower resolution since it isn't the source of record and I can save space on my account. But even if they are full quality, that works as well.

I don't think that the API allows anything but full res uploads. Ideally it would allow the low res uploads that don't count against your quota but I don't think it does.

I think the most important aspect first and foremost is the ability to 'sync' correctly without creating large duplicates.

Sync to albums should work now...

@Webreaper wrote:

Per my comment earlier in the thread, albums are nice if they're automatically created based on the folders in which the uploading images are stored locally. So if I have:

photo

- Birthday

- Christmas

- New Puppy

the upload would upload the photos into those 3 albums. This is exactly how the folders within GDrive are represented today - each folder is mapped to an Album.

If you had a 2 level deep layout like the above then rclone will work as you wish now.

That gives me an idea though - what if rclone represented nested directories

- root

- sub

- subsub

- subsub2

- sub

As albums with names "root", "root/sub", "root/sub/subsub", "root/sub/subsub2"? That would be one way of trying to flatten a hierarchical filesystem into Google Photos rather than munging the file names. (Those / would probably be a Unicode equivalent.)

That would have the advantage that listing the albums only would create the directory tree. I already cache the albums so this might work quite neatly.
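A rough sketch of that flattening idea, for illustration only: each directory path becomes one album title, with the path separator swapped for a Unicode look-alike. The separator choice (`U+FF0F`, fullwidth solidus) and the function names `album_name`, `album_names` and `directory_of` are assumptions made up for this example, not what rclone actually does.

```python
from pathlib import PurePosixPath

# Assumed stand-in for "/" inside album titles. The thread only says
# "a unicode equivalent probably", so this particular character is a guess.
SEPARATOR = "\uff0f"  # FULLWIDTH SOLIDUS


def album_name(directory: str, sep: str = SEPARATOR) -> str:
    """Flatten a nested directory path into a single album title."""
    parts = [p for p in PurePosixPath(directory).parts if p != "/"]
    return sep.join(parts)


def directory_of(album: str, sep: str = SEPARATOR) -> str:
    """Reverse the mapping: recover the directory path from an album title."""
    return album.replace(sep, "/")


def album_names(file_paths):
    """Return the sorted album titles needed to hold the given files."""
    names = set()
    for path in file_paths:
        parent = PurePosixPath(path).parent
        if str(parent) != ".":  # files at the top level get no album
            names.add(album_name(str(parent)))
    return sorted(names)
```

Because the mapping is reversible, listing the album titles alone is enough to rebuild the directory tree, which is the advantage described above.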

@calisro wrote:

I like that approach. I'd also like a flag though to disable album creation but I could live without a flag as well.

Do you mean a --read-only flag? Or rclone could offer you a read only option on backend creation so when it gets the oauth token it requests a read only one.

As albums with names "root", "root/sub", "root/sub/subsub", "root/sub/subsub2"?

I was actually about to write a comment proposing this exact convention. At that point, you can support arbitrary nesting of folders.

Thanks for the fast response on all of this!! :boom:

Here is a nearly complete Google Photos backend :-)

The docs are here: googlephotos.md - I'd appreciate a read through to check them over and see if they make sense.

I implemented the hierarchical album scheme and it makes the space under album/ behave pretty much like a normal file system.

https://beta.rclone.org/branch/v1.48.0-013-gbb4eeedf-gphotos-beta/ (uploaded in 15-30 mins)

Is there a reason that we can only write to albums? Albums have file limits and I rarely use them. Is it possible to also write direct without albums like nmrshll/gphotos-uploader-cli does?

Also I see severe rate limiting. Is that typical? I could barely list 50 photos on my first lsl.

2019/06/26 13:28:12 DEBUG : pacer: low level retry 1/10 (error Quota exceeded for quota metric 'photoslibrary.googleapis.com/read_requests' and limit 'ReadsPerMinutePerUser' of service 'photoslibrary.googleapis.com' for consumer 'project_number:480718780999'. (429 RESOURCE_EXHAUSTED))

Thank you @ncw
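For readers unfamiliar with what a "low level retry 1/10" on a 429 means in practice, here is a generic retry-with-exponential-backoff sketch. It is not rclone's pacer; `RateLimited`, `call_with_backoff` and all the delay values are hypothetical names and numbers chosen for illustration, with the limit of 10 tries simply mirroring the "1/10" in the log line.

```python
import random
import time


class RateLimited(Exception):
    """Stand-in for an HTTP 429 RESOURCE_EXHAUSTED response."""


def call_with_backoff(request, max_tries=10, base_delay=1.0,
                      max_delay=60.0, sleep=time.sleep):
    """Call request(), retrying with growing, jittered delays on RateLimited."""
    for attempt in range(max_tries):
        try:
            return request()
        except RateLimited:
            if attempt == max_tries - 1:
                raise  # out of retries: surface the error to the caller
            # Double the delay on each attempt, capped at max_delay.
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Jitter keeps many clients from retrying in lockstep.
            sleep(delay * random.uniform(0.5, 1.0))
```

With a per-minute read quota, a listing of a large library spends most of its time waiting like this, which would be consistent with the slow first `lsl` reported above.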

Is there a reason that we can only write to albums? Albums have file limits and I rarely use them. Is it possible to also write direct without albums like nmrshll/gphotos-uploader-cli does?

Any files you write to albums will appear in the general files list, so you could upload to an album then delete it...

You can read the contents of albums and delete files from them, both of which will make the rclone copy/sync experience relatively normal.

The problem with making a general upload is that rclone needs to be able to see if the file is already uploaded and there is no API for searching for a filename. Rclone can't even list the recently uploaded files, it can only list files by EXIF info which bears no relation to the datestamp on the file.

The best I could do is implement an /upload directory. This would upload any files copied in, and it would list out any files that have been uploaded in this run of rclone. This means that if rclone had to do --retries then duplicates wouldn't be uploaded.

However, if you quit rclone and restarted it then it would upload everything again. I note in my tests that if you upload an image twice, then Google only stores it once, so this only costs you bandwidth, not storage at Google.

I could store the uploaded files on disk I suppose.

I could also list all the media and find the file name like that, however (as you noted) google photos is quite slow and that would take some time!

Which one of those options would work for you?

- /upload directory which only shows files uploaded in this session of rclone

- /upload directory which shows all files uploaded in the last X days (cached on disk)

- /upload directory which would be really slow on first access but would show all the files.

Option 1 is relatively easy and could be upgraded to option 2 if required. I guess 2 and 3 could be combined: a slow first read to fill up a local db of files. Though there isn't an API method to keep that updated, which is a bit painful...
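To make the second option concrete, here is a minimal sketch of an on-disk record of uploads that expires after X days. Everything in it is an assumption for illustration: the `UploadLog` class, the JSON file format, and keying entries by file name plus size (chosen only because the API offers no filename search). It is not how rclone stores anything.

```python
import json
import time
from pathlib import Path


class UploadLog:
    """Remember which files were uploaded so a later run can skip them."""

    def __init__(self, path, max_age_days=30.0):
        self.path = Path(path)
        self.max_age = max_age_days * 86400  # seconds
        self.entries = {}
        if self.path.exists():
            self.entries = json.loads(self.path.read_text())

    @staticmethod
    def _key(name, size):
        # Assumed identity of a file: its name and size.
        return f"{name}:{size}"

    def seen(self, name, size, now=None):
        """True if this file was recorded within the last max_age seconds."""
        now = time.time() if now is None else now
        stamp = self.entries.get(self._key(name, size))
        return stamp is not None and now - stamp <= self.max_age

    def record(self, name, size, now=None):
        """Note a successful upload and persist the log to disk."""
        self.entries[self._key(name, size)] = time.time() if now is None else now
        self.path.write_text(json.dumps(self.entries))


def files_to_upload(candidates, log):
    """Filter (name, size) pairs down to those not already recorded."""
    return [(name, size) for name, size in candidates if not log.seen(name, size)]
```

Keeping `entries` only in memory and never writing the file would give the behaviour of the first option, where a restart uploads everything again.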