Prometheus-operator: Grafana dashboards not getting persisted.

What did you do?

Installed prometheus operator and kube prometheus using helm.

Configured storagespec for grafana. (To persist grafana data)

What did you expect to see?

Expect the dashboards created on grafana UI to persist even after pod restart.

What did you see instead? Under which circumstances?

Grafana dashboards are not persisted

Environment

Kubernetes version information:

Client Version: version.Info{Major:"1", Minor:"9", GitVersion:"v1.9.6", GitCommit:"9f8ebd171479bec0ada837d7ee641dec2f8c6dd1", GitTreeState:"clean", BuildDate:"2018-03-21T15:21:50Z", GoVersion:"go1.9.3", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"9", GitVersion:"v1.9.6", GitCommit:"9f8ebd171479bec0ada837d7ee641dec2f8c6dd1", GitTreeState:"clean", BuildDate:"2018-03-21T15:13:31Z", GoVersion:"go1.9.3", Compiler:"gc", Platform:"linux/amd64"}

Kubernetes cluster kind:

Kops

Manifests:

grafana:
  storageSpec:
    class: px-repl1
    accessMode: ReadWriteOnce
    resources:
      requests:
        storage: 2Gi
    selector: {}

Other details

The issue is not with persisting the data. When I exec into the pod and check the path where the volume is mounted, grafana.db (file), sessions (folder), and plugins (folder) are there, and this data is persisted. But after a pod restart, newly created dashboards are not available in the UI even though the data is there.

All 38 comments

If you use helm and you modify helm/grafana, you should run helm dependency update for kube-prometheus. Could you share the Grafana Deployment configuration from your cluster?

Try

I deleted the pod and let it start again; my PVC was still in place.

Result

The dashboards in the Grafana UI are gone.

Try

Delete the PVC and start the pod.

Result

It throws the error "Can't get persistentvolumeclaim".

This means the pod does get its data from the PVC, but I don't know why the dashboards are gone.

@fumanne I am not making any changes to the helm Grafana config at all. I create a ConfigMap separately and reference that ConfigMap name in the helm Grafana config (while installing kube-prometheus).

Hi, I have a similar issue. I have created a PV and on the first helm install it works fine. My PV gets bound to the PVC created by this:

storageSpec:
  class: standard
  accessMode: "ReadWriteOnce"
  resources:
    requests:
      storage: 250Gi
  selector:
    matchLabels:
      app: "grafana"

After deleting it, my PV is in state Released, and when I install kube-prometheus with helm install again, a new PV and PVC are created.

Isn't it possible to configure this the way it is done for Prometheus?

storageSpec:
  volumeClaimTemplate:
    spec:
      storageClassName: standard
      accessModes: ["ReadWriteOnce"]
      resources:
        requests:
          storage: 250Gi
      selector:
        matchLabels:
          app: "prometheus-server"

Maybe someone can provide a working example?

I am working on GKE.

This is because Grafana uses a Deployment and Prometheus uses a StatefulSet. The StatefulSet makes sure that PVCs are assigned consistently.

How can I solve this issue?

When I install Grafana on its own with this, it's not a problem:

persistence:
  enabled: true
  storageClassName: standard
  accessModes:
    - ReadWriteOnce
  size: 250Gi
  # annotations: {}
  # subPath: ""
  existingClaim: grafana-pvc

The problem is that with a Deployment you will always get a new PVC and thus a new PV, whereas a StatefulSet will attempt to reuse the existing ones.
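
For illustration, here is a minimal sketch of the StatefulSet mechanism described above. Each replica gets its claim from `volumeClaimTemplates` under a stable name (`<template name>-<StatefulSet name>-<ordinal>`), so a recreated pod is pointed at the same claim again. The names `grafana` and `grafana-data`, the `standard` storage class, the size, and the headless Service are assumptions for the example; this is not what the chart generates.

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: grafana              # hypothetical name
  namespace: monitoring
spec:
  serviceName: grafana       # headless Service, assumed to exist
  replicas: 1
  selector:
    matchLabels:
      app: grafana
  template:
    metadata:
      labels:
        app: grafana
    spec:
      containers:
        - name: grafana
          image: grafana/grafana:7.0.3
          volumeMounts:
            - name: grafana-data
              mountPath: /var/lib/grafana
  volumeClaimTemplates:
    # The resulting claim is named grafana-data-grafana-0 and is reused
    # whenever pod grafana-0 is recreated.
    - metadata:
        name: grafana-data
      spec:
        accessModes: ["ReadWriteOnce"]
        storageClassName: standard   # assumption
        resources:
          requests:
            storage: 10Gi            # assumption
```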

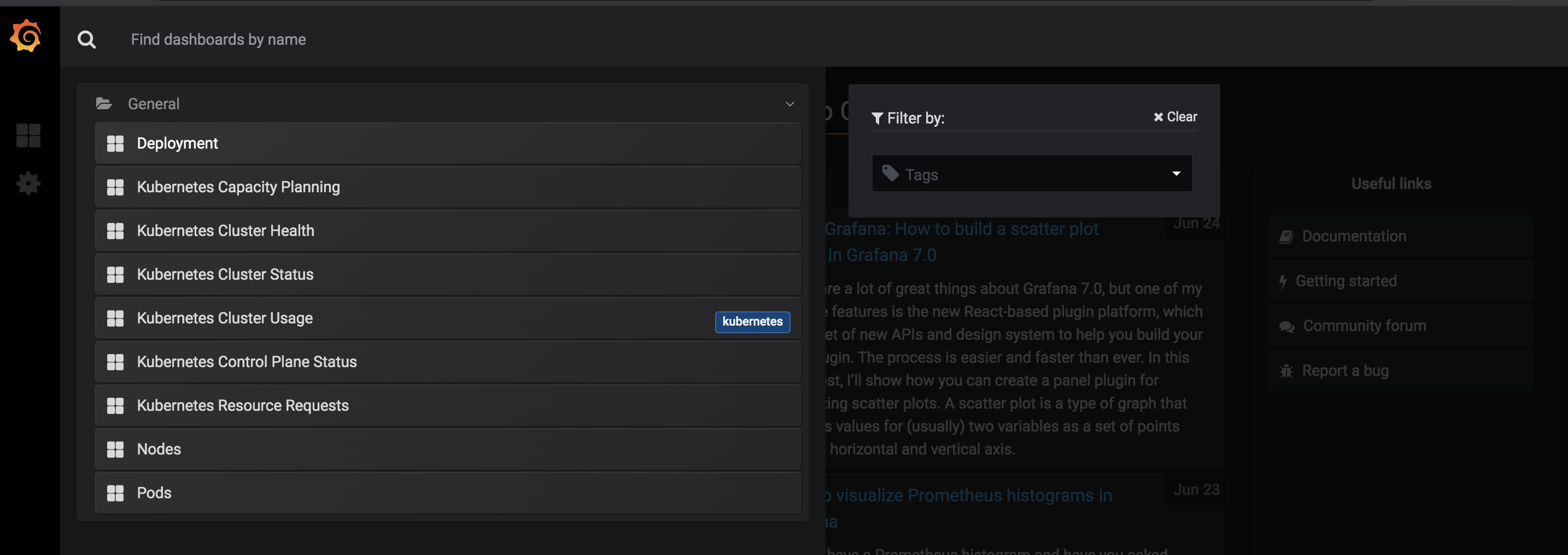

I just installed Grafana on my own now. Can you give me the dashboard included in kube-prometheus?

What else is different from stable/grafana version?

All the dashboards are already files, you just need to make Grafana load them, which is what this setup automates.

I have faced the same issue.

Every time the Grafana pod goes down, the new one does not have the old data.

What did you do?

Installed prometheus operator and kube prometheus using helm.

Configured storagespec for grafana. (To persist grafana data)

What did you expect to see?

Expect the dashboards created on grafana UI to persist even after pod restart.

What did you see instead? Under which circumstances?

Grafana dashboards are not persisted

Environment

Kubernetes version

kubectl version

Client Version: version.Info{Major:"1", Minor:"10", GitVersion:"v1.10.3", GitCommit:"2bba0127d85d5a46ab4b778548be28623b32d0b0", GitTreeState:"clean", BuildDate:"2018-05-21T09:17:39Z", GoVersion:"go1.9.3", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"10", GitVersion:"v1.10.5", GitCommit:"32ac1c9073b132b8ba18aa830f46b77dcceb0723", GitTreeState:"clean", BuildDate:"2018-06-21T11:34:22Z", GoVersion:"go1.9.3", Compiler:"gc", Platform:"linux/amd64"}

Kubernetes cluster kind

bare-metal + kubespray

Repository Prometheus-operator tag version

v0.20.0

prometheus-operator/helm/grafana/values.yaml has:

```
storageSpec:
  class: ceph-rbd
  accessMode: ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
  selector: {}
```

where ceph-rbd is:

```
kubectl get storageclass ceph-rbd
NAME       PROVISIONER         AGE
ceph-rbd   kubernetes.io/rbd   5d
```

The PVC is always the same:

```
13:33 user:[~]: kubectl describe pod grafana-grafana-75df687764-jw9dh |grep pvc
  Normal  SuccessfulMountVolume  1m  kubelet, k8s-node-3  MountVolume.SetUp succeeded for volume "pvc-daada967-90a3-11e8-8372-9c5c8e90f472"
13:33 user:[~]: kubectl delete pod grafana-grafana-75df687764-jw9dh
pod "grafana-grafana-75df687764-jw9dh" deleted
13:33 user:[~]: kubectl describe pod grafana-grafana-75df687764-frt5h |grep pvc
  Normal  SuccessfulMountVolume  55s  kubelet, k8s-node-3  MountVolume.SetUp succeeded for volume "pvc-daada967-90a3-11e8-8372-9c5c8e90f472"
13:34 user:[~]:
```

The /var/lib/grafana folder saves data as expected; restarting the pod does not affect the data in this folder.

But settings and the dashboards I added myself are not persisted.

We're also running into some weirdness. But what is strange is that _some_ assets are persisted. The following objects are persisted between pod restarts (things I have found so far):

- Users

- Playlists (but totally corrupted and can't be removed)

- Plugins

Unfortunately Dashboards, Data Sources and other objects are totally lost after a pod deletion.

I am also looking for a solution to persist all saved configs/plugins in Grafana.

+1. In my case Grafana can't even start with persistent storage:

GF_PATHS_DATA='/var/lib/grafana' is not writable.

You may have issues with file permissions, more information here: http://docs.grafana.org/installation/docker/#migration-from-a-previous-version-of-the-docker-container-to-5-1-or-later

mkdir: cannot create directory '/var/lib/grafana/plugins': Permission denied

I've got the PersistentVolume created and the users and other items are persisted but not the dashboards or data sources. This is really a pain, I'd love to be able to not worry about my dashboards disappearing if the pod is restarted.

This is a huge pain and makes grafana unusable :-( Really need a fix for this.

I too am seeing the same issue with pod restart and disappearing dashboards.

This repository is very opinionated about how Grafana is managed. If you don't want to configure Grafana declaratively, that's fine, but supporting that is a non-goal for this project. I'd highly recommend trying the declarative approach: a stateful Grafana is an incredible pain to manage, it isn't necessary, and rollbacks are near impossible. We've been running Grafana in an entirely stateless way for years and have had no problems with the approach.

I hope you understand. I'm closing this here as it's out of scope for this project.

@brancz

I don't think we are trying to force a certain way of managing Grafana. We are (at least I am) only describing a problem we ran into, and it bothers us because we can't find (or think of) a good solution apart from making Grafana stateful.

So would you be kind enough to share how you (and your team) manage Grafana when the following configuration has been saved:

- dashboard created/updated

- datasource created/updated

- user created/updated

- alert notification created/updated (Slack token etc.)

More importantly, how do you (and your team) deal with the setup above when the Grafana pod is replaced (crash/update)?

Sharing your experience would be greatly appreciated, thank you!

Dashboards are written as jsonnet code and checked into version control. When updated in version control, a redeploy of Grafana is triggered and Grafana is fully provisioned (both dashboards and datasources) from files using the Grafana provisioning features.
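
As a rough sketch of what "provisioned from files" can look like, the two documents below follow Grafana's provisioning file format: a dashboard provider that loads every JSON file under a directory, and a datasource definition. The provider name, the dashboard path (borrowed from mount paths that appear later in this thread), and the Prometheus URL are assumptions for illustration, not the project's actual generated files.

```yaml
# /etc/grafana/provisioning/dashboards/dashboards.yaml (file name is an assumption)
apiVersion: 1
providers:
  - name: "0"                  # hypothetical provider name
    orgId: 1
    folder: ""
    type: file
    options:
      # Directory containing dashboard JSON files, e.g. mounted from ConfigMaps
      path: /grafana-dashboard-definitions/0
---
# /etc/grafana/provisioning/datasources/prometheus.yaml (file name is an assumption)
apiVersion: 1
datasources:
  - name: prometheus
    type: prometheus
    access: proxy
    # Assumed in-cluster Service address; adjust to your Prometheus Service
    url: http://prometheus-k8s.monitoring.svc:9090
```

Grafana reads these files at startup, so a freshly scheduled pod comes up with the same dashboards and datasources without needing a database on a persistent volume.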

Users are managed by an external identity provider using the bitly/oauth2_proxy, and the following Grafana configurations:

[auth]

disable_login_form = true

disable_signout_menu = true

[auth.basic]

enabled = false

[auth.proxy]

auto_sign_up = true

enabled = true

header_name = X-Forwarded-User

[paths]

data = /var/lib/grafana

logs = /var/lib/grafana/logs

plugins = /var/lib/grafana/plugins

provisioning = /etc/grafana/provisioning

[server]

http_addr = 127.0.0.1

http_port = 3001

As jsonnet code, that configuration looks like this (the original comment linked to more detail on how to customize your Grafana install via jsonnet):

{
  _config+:: {
    grafana+:: {
      config: {
        sections: {
          paths: {
            data: '/var/lib/grafana',
            logs: '/var/lib/grafana/logs',
            plugins: '/var/lib/grafana/plugins',
            provisioning: '/etc/grafana/provisioning',
          },
          server: {
            http_addr: '127.0.0.1',
            http_port: '3001',
          },
          auth: {
            disable_login_form: true,
            disable_signout_menu: true,
          },
          'auth.basic': {
            enabled: false,
          },
          'auth.proxy': {
            enabled: true,
            header_name: 'X-Forwarded-User',
            auto_sign_up: true,
          },
        },
      },
    },
  },
}

We have Grafana bind to the loopback interface, and because of the settings above, any user who authenticates successfully is automatically created in Grafana. In the future we would like Grafana to also use the user's login token for requests against Prometheus. Then we could make use of authorization proxies for Prometheus and limit what a user can see through Grafana without any customization or configuration.

We manage all alerts as Prometheus alerts that are also checked in to version control and deployed and reloaded by Prometheus whenever checked in.
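
To make the "alerts in version control" idea concrete, here is a minimal sketch of an alerting rule expressed as a prometheus-operator `PrometheusRule` object. The rule itself, the object name, and the `prometheus: k8s` / `role: alert-rules` labels are assumptions for illustration. The labels have to match whatever `ruleSelector` your Prometheus resource uses, and older operator versions loaded rules from ConfigMaps instead.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: grafana-example-rules      # hypothetical name
  namespace: monitoring
  labels:
    prometheus: k8s                # must match the Prometheus ruleSelector (assumption)
    role: alert-rules
spec:
  groups:
    - name: grafana.rules
      rules:
        - alert: GrafanaDown       # hypothetical alert
          # Fires when no target with job="grafana" has been up for 5 minutes
          expr: absent(up{job="grafana"} == 1)
          for: 5m
          labels:
            severity: warning
          annotations:
            message: Grafana has disappeared from Prometheus target discovery.
```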

@brancz What is your workflow for developing new dashboards with this setup, particularly for exploratory work, where the desired dashboard is not fully known?

For example, create dashboard in grafana UI, export JSON from UI, refactor into jsonnet?

That’s a workflow we’ve been doing a lot. Recently we’ve been thinking that running a Grafana instance locally and pointing it at the same data source would be even better as we can just generate out the dashboards and Grafana will automatically reload them. We haven’t set this up yet, but I think that experience will be even better as we can write the code immediately but also have immediate feedback.

(Did not read everything)

You can use ConfigMaps to persist the dashboards instead, and prometheus reloads them into your Grafana server. That's what I do.

@MrD2 how do you do it? Let's say I create a dashboard from the Grafana UI and save it. How do I tell the Grafana pod to save that into a ConfigMap and then load it the next time the pod is scheduled on another node?

Edit:

I see in the docs that if the sidecar is enabled, ConfigMaps with the grafana_dashboard=1 label will be loaded into Grafana as dashboards. I will try that.
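
A minimal sketch of the Helm values that turn this sidecar on, assuming the stable/grafana chart is used as the `grafana` subchart (as in stable/prometheus-operator). If you install the Grafana chart on its own, drop the top-level `grafana:` key.

```yaml
grafana:
  sidecar:
    dashboards:
      enabled: true
      # ConfigMaps carrying this label are picked up and loaded as dashboards
      label: grafana_dashboard
```

Note that this loads dashboards from ConfigMaps into Grafana; it does not write dashboards edited in the UI back to a ConfigMap.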

@infa-ddeore Probably that way: https://stackoverflow.com/questions/52046908/are-kubernetes-configmaps-writable

But it looks like an abuse of a feature that is not intended to be used that way.

Hi @MrD2, can you please share the steps you took to persist the data using ConfigMaps?

@KavithaBNagaraju @infa-ddeore

Sorry for only responding now.

For now we only save the dashboards to Kubernetes ConfigMaps with the grafana_dashboard=1 label. If you change the values in the Grafana UI, the modifications won't propagate to the ConfigMap (to the best of my knowledge). This is something that we want to change, or find a way for users to freely edit their dashboards in the Grafana UI and have these dashboards persisted somehow.

If you find a way to do this, give me a buzz ;)

@den-is try this:

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: grafana-storage
  namespace: monitoring
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi
  storageClassName: csi-rbd
---
apiVersion: apps/v1beta2
kind: Deployment
metadata:
  labels:
    app: grafana
  name: grafana
  namespace: monitoring
spec:
  replicas: 1
  selector:
    matchLabels:
      app: grafana
  template:
    metadata:
      labels:
        app: grafana
    spec:
      initContainers:
        - command:
            - sh
            - -c
            - "whoami; chmod 777 /var/lib/grafana"
          image: harbor.geniusafc.com/docker.io/busybox
          name: volume-permissions
          volumeMounts:
            - mountPath: /var/lib/grafana
              name: grafana-storage
              readOnly: false
      containers:
        - image: harbor.geniusafc.com/docker.io/grafana/grafana:6.0.0-beta1
          name: grafana
          securityContext:
            runAsNonRoot: true
            runAsUser: 65534
          ports:
            - containerPort: 3000
              name: http
          readinessProbe:
            httpGet:
              path: /api/health
              port: http
          resources:
            limits:
              cpu: 200m
              memory: 200Mi
            requests:
              cpu: 100m
              memory: 100Mi
          volumeMounts:
            - mountPath: /var/lib/grafana
              name: grafana-storage
              readOnly: false
            - mountPath: /etc/grafana/provisioning/datasources
              name: grafana-datasources
              readOnly: false
            - mountPath: /etc/grafana/provisioning/dashboards
              name: grafana-dashboards
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/etcd
              name: grafana-dashboard-etcd
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/k8s-cluster-rsrc-use
              name: grafana-dashboard-k8s-cluster-rsrc-use
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/k8s-node-rsrc-use
              name: grafana-dashboard-k8s-node-rsrc-use
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/k8s-resources-cluster
              name: grafana-dashboard-k8s-resources-cluster
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/k8s-resources-namespace
              name: grafana-dashboard-k8s-resources-namespace
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/k8s-resources-pod
              name: grafana-dashboard-k8s-resources-pod
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/nodes
              name: grafana-dashboard-nodes
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/persistentvolumesusage
              name: grafana-dashboard-persistentvolumesusage
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/pods
              name: grafana-dashboard-pods
              readOnly: false
            - mountPath: /grafana-dashboard-definitions/0/statefulset
              name: grafana-dashboard-statefulset
              readOnly: false
      nodeSelector:
        beta.kubernetes.io/os: linux
      serviceAccountName: grafana
      volumes:
        - name: grafana-storage
          persistentVolumeClaim:
            claimName: grafana-storage
            readOnly: false
        - name: grafana-datasources
          secret:
            secretName: grafana-datasources
        - configMap:
            name: grafana-dashboards
          name: grafana-dashboards
        - configMap:
            name: grafana-dashboard-etcd
          name: grafana-dashboard-etcd
        - configMap:
            name: grafana-dashboard-k8s-cluster-rsrc-use
          name: grafana-dashboard-k8s-cluster-rsrc-use
        - configMap:
            name: grafana-dashboard-k8s-node-rsrc-use
          name: grafana-dashboard-k8s-node-rsrc-use
        - configMap:
            name: grafana-dashboard-k8s-resources-cluster
          name: grafana-dashboard-k8s-resources-cluster
        - configMap:
            name: grafana-dashboard-k8s-resources-namespace
          name: grafana-dashboard-k8s-resources-namespace
        - configMap:
            name: grafana-dashboard-k8s-resources-pod
          name: grafana-dashboard-k8s-resources-pod
        - configMap:
            name: grafana-dashboard-nodes
          name: grafana-dashboard-nodes
        - configMap:
            name: grafana-dashboard-persistentvolumesusage
          name: grafana-dashboard-persistentvolumesusage
        - configMap:
            name: grafana-dashboard-pods
          name: grafana-dashboard-pods
        - configMap:
            name: grafana-dashboard-statefulset
          name: grafana-dashboard-statefulset

@zgfh thanks for your YAML.

Maybe we should remove the initContainers config.

@Ghostbaby The initContainers entry changes the directory permissions. Without it, the pod fails with an error like this:

GF_PATHS_DATA='/var/lib/grafana' is not writable.

You may have issues with file permissions, more information here: http://docs.grafana.org/installation/docker/#migration-from-a-previous-version-of-the-docker-container-to-5-1-or-later

mkdir: cannot create directory '/var/lib/grafana/plugins': Permission denied
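
One possible alternative to the `chmod 777` init container is to let Kubernetes set group ownership on the volume through the pod-level `securityContext`. This is only a sketch of the relevant fragment of a Deployment. It assumes the Grafana image runs as UID 472 (the default user in the official images since 5.1) and that your volume plugin honors `fsGroup`, which not all do; verify both for your image version and storage class.

```yaml
spec:
  template:
    spec:
      securityContext:
        runAsUser: 472   # assumed UID of the grafana user in the image
        fsGroup: 472     # volume files are made group-accessible to this GID on mount
      containers:
        - name: grafana
          volumeMounts:
            - mountPath: /var/lib/grafana
              name: grafana-storage
```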

These are the steps we take to make dashboards outlive pod deletion:

1. Create your custom dashboard through the UI.

2. Click the “Save” button, which pops up a “Cannot save provisioned dashboard” window. There you can click the “Save JSON to file” button.

3. Save the JSON file to your computer.

4. Using the kubectl command-line tool, configured to communicate with your k8s cluster, create a new ConfigMap with the following command:

_kubectl create configmap my-custom-dashboard --from-file=path-to-file.json_

5. Add a label to the newly created ConfigMap:

_kubectl label configmaps my-custom-dashboard grafana_dashboard=1_
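
The two kubectl commands above can also be written as a single manifest and checked into version control. The sketch below assumes the `monitoring` namespace and uses a placeholder dashboard body; in practice the `data` entry would hold the JSON exported from the UI.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: my-custom-dashboard
  namespace: monitoring            # assumption: namespace watched by the sidecar
  labels:
    grafana_dashboard: "1"         # same label the kubectl label command sets
data:
  # Key name becomes the file name; the value is the exported dashboard JSON.
  my-custom-dashboard.json: |
    {
      "title": "My custom dashboard",
      "uid": "my-custom-dashboard",
      "panels": []
    }
```

Applying it with `kubectl apply -f` is repeatable, so updating the dashboard means editing the file and applying it again.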

How are you supposed to create users with the stateless deployment?

We went the PVC way to persist users.

FYI for people caught off guard by this like me: When using the stable/prometheus-operator helm chart it seems to be sufficient to set the grafana.persistence.enabled value to true.
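
As a sketch, the corresponding values file entry might look like the following. Only `grafana.persistence.enabled` comes from the comment above; the remaining keys mirror the Grafana chart's `persistence` block quoted earlier in this thread, and the storage class and size are assumptions.

```yaml
grafana:
  persistence:
    enabled: true
    storageClassName: standard   # assumption: use a class that exists in your cluster
    accessModes:
      - ReadWriteOnce
    size: 10Gi                   # assumption
```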

(Did not read everything)

You can use ConfigMaps to persist the dashboards instead, and prometheus reloads them into your Grafana server. That's what I do.

We are using ConfigMaps, but we still have the same issue, where all the dashboards are deleted if the Grafana pod is deleted or restarted.

I'm looking for a workaround. Does anyone have a fix?

Here is my Grafana Deployment spec:

grafana-persistent-storage.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  annotations:
    pv.kubernetes.io/bind-completed: "yes"
    pv.kubernetes.io/bound-by-controller: "yes"
    volume.beta.kubernetes.io/storage-provisioner: kubernetes.io/aws-ebs
  creationTimestamp: "2019-11-21T06:29:08Z"
  finalizers:
    - kubernetes.io/pvc-protection
  name: grafana-persistent-storage
  namespace: monitoring
  resourceVersion: "20086164"
  selfLink: /api/v1/namespaces/monitoring/persistentvolumeclaims/grafana-persistent-storage
  uid: 38eb1a4f-0c28-11ea-aeb1-02dad98e3180
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi
  storageClassName: gp2
  volumeMode: Filesystem
  volumeName: pvc-38eb1a4f-0c28-11ea-aeb1-02dad98e3180
status:
  accessModes:
    - ReadWriteOnce
  capacity:
    storage: 5Gi
  phase: Bound

grafana-deploy.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  annotations:
    deployment.kubernetes.io/revision: "3"
  creationTimestamp: "2019-11-21T06:29:08Z"
  generation: 3
  labels:
    app: grafana
  name: grafana
  namespace: monitoring
  resourceVersion: "63098583"
  selfLink: /apis/apps/v1/namespaces/monitoring/deployments/grafana
  uid: 38ecabdc-0c28-11ea-aeb1-02dad98e3180
spec:
  progressDeadlineSeconds: 600
  replicas: 1
  revisionHistoryLimit: 2
  selector:
    matchLabels:
      app: grafana
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: grafana
    spec:
      containers:
        - env:
            - name: GF_AUTH_BASIC_ENABLED
              value: "true"
            - name: GF_AUTH_ANONYMOUS_ENABLED
              value: "true"
            - name: GF_SECURITY_ADMIN_USER
              valueFrom:
                secretKeyRef:
                  key: user
                  name: grafana-credentials
            - name: GF_SECURITY_ADMIN_PASSWORD
              valueFrom:
                secretKeyRef:
                  key: password
                  name: grafana-credentials
          image: grafana/grafana:7.0.3
          imagePullPolicy: IfNotPresent
          name: grafana
          ports:
            - containerPort: 3000
              name: web
              protocol: TCP
          resources:
            limits:
              cpu: 200m
              memory: 200Mi
            requests:
              cpu: 100m
              memory: 100Mi
          terminationMessagePath: /dev/termination-log
          terminationMessagePolicy: File
          volumeMounts:
            - mountPath: /var/grafana-storage
              name: grafana-storage
            - mountPath: /var/lib/grafana
              name: grafana-persistent-storage
              subPath: grafana
        - args:
            - --watch-dir=/var/grafana-dashboards
            - --grafana-url=http://localhost:3000
          env:
            - name: GRAFANA_USER
              valueFrom:
                secretKeyRef:
                  key: user
                  name: grafana-credentials
            - name: GRAFANA_PASSWORD
              valueFrom:
                secretKeyRef:
                  key: password
                  name: grafana-credentials
          image: quay.io/coreos/grafana-watcher:v0.0.8
          imagePullPolicy: IfNotPresent
          name: grafana-watcher
          resources:
            limits:
              cpu: 100m
              memory: 32Mi
            requests:
              cpu: 50m
              memory: 16Mi
          terminationMessagePath: /dev/termination-log
          terminationMessagePolicy: File
          volumeMounts:
            - mountPath: /var/grafana-dashboards
              name: grafana-dashboards
            - mountPath: /var/grafana-ingress-ctrl-dashboards
              name: nginx-ingress-ctrl-dashboard
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext:
        fsGroup: 472
      terminationGracePeriodSeconds: 30
      volumes:
        - emptyDir: {}
          name: grafana-storage
        - name: grafana-persistent-storage
          persistentVolumeClaim:
            claimName: grafana-persistent-storage
        - configMap:
            defaultMode: 420
            name: grafana-dashboards
          name: grafana-dashboards
        - configMap:
            defaultMode: 420
            name: nginx-ingress-ctrl-dashboard
          name: nginx-ingress-ctrl-dashboard

nginx-ingress-ctrl-dashboard.yaml

apiVersion: v1
data:
  nginx-ingress-controller-dashboard.json: |
    {
      "annotations": {
        "list": [
          {
            "$$hashKey": "object:995",
            "builtIn": 1,
            "datasource": "-- Grafana --",
            "enable": true,
            "hide": true,
            "iconColor": "rgba(0, 211, 255, 1)",
            "name": "Annotations & Alerts",
            "type": "dashboard"
          },
          {
            "$$hashKey": "object:996",
            "da................................................
    ..........................
    }
kind: ConfigMap
metadata:
  creationTimestamp: "2020-06-18T15:40:11Z"
  labels:
    grafana_dashboard: "1"
  name: nginx-ingress-ctrl-dashboard
  namespace: monitoring
  resourceVersion: "63098134"
  selfLink: /api/v1/namespaces/monitoring/configmaps/nginx-ingress-ctrl-dashboard
  uid: 9c289bb1-98e9-46e6-9a59-29c24b41a649

You can set up a PVC for your Grafana data.

See here: https://review.opendev.org/#/c/722396/2/magnum/drivers/common/templates/kubernetes/helm/prometheus-operator.sh

I'm having the same problem, using Grafana as an add-on within Home Assistant. As soon as Grafana is restarted, the (already saved) dashboards get deleted.

I am able to persist a few of the dashboards using the same ConfigMap, but all the other dashboards are deleted when the pod is deleted or restarted.

Here is my Grafana dashboard. I believe there is some issue with the format. Can anyone help? Thanks in advance.

{

"annotations": {

"list": [

{

"$$hashKey": "object:745",

"builtIn": 1,

"datasource": "-- Grafana --",

"enable": true,

"hide": true,

"iconColor": "rgba(0, 211, 255, 1)",

"name": "Annotations & Alerts",

"type": "dashboard"

}

]

},

"description": "",

"editable": true,

"gnetId": 9614,

"graphTooltip": 1,

"id": 159,

"iteration": 1587106084087,

"links": [],

"panels": [

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"description": "Total time taken for nginx and upstream servers to process a request and send a response",

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 12,

"x": 0,

"y": 0

},

"hiddenSeries": false,

"id": 91,

"legend": {

"avg": false,

"current": false,

"max": false,

"min": false,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"options": {

"dataLinks": []

},

"percentage": false,

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": "histogram_quantile(\n 0.5,\n sum by (le)(\n rate(\n nginx_ingress_controller_request_duration_seconds_bucket{\n ingress =~ \"$ingress\"\n }[1m]\n )\n )\n)",

"format": "time_series",

"interval": "",

"intervalFactor": 1,

"legendFormat": ".5",

"refId": "D"

},

{

"expr": "histogram_quantile(\n 0.95,\n sum by (le)(\n rate(\n nginx_ingress_controller_request_duration_seconds_bucket{\n ingress =~ \"$ingress\"\n }[1m]\n )\n )\n)",

"format": "time_series",

"interval": "",

"intervalFactor": 1,

"legendFormat": ".95",

"refId": "B"

},

{

"expr": "histogram_quantile(\n 0.99,\n sum by (le)(\n rate(\n nginx_ingress_controller_request_duration_seconds_bucket{\n ingress =~ \"$ingress\"\n }[1m]\n )\n )\n)",

"format": "time_series",

"interval": "",

"intervalFactor": 1,

"legendFormat": ".99",

"refId": "A"

}

],

"thresholds": [],

"timeFrom": null,

"timeRegions": [],

"timeShift": null,

"title": "Total Request Handling Time",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "s",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"description": "The time spent on receiving the response from the upstream server",

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 12,

"x": 12,

"y": 0

},

"hiddenSeries": false,

"id": 94,

"legend": {

"avg": false,

"current": false,

"max": false,

"min": false,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"options": {

"dataLinks": []

},

"percentage": false,

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": "histogram_quantile(\n 0.5,\n sum by (le)(\n rate(\n nginx_ingress_controller_response_duration_seconds_bucket{\n ingress =~ \"$ingress\"\n }[1m]\n )\n )\n)",

"instant": false,

"interval": "",

"intervalFactor": 1,

"legendFormat": ".5",

"refId": "D"

},

{

"expr": "histogram_quantile(\n 0.95,\n sum by (le)(\n rate(\n nginx_ingress_controller_response_duration_seconds_bucket{\n ingress =~ \"$ingress\"\n }[1m]\n )\n )\n)",

"interval": "",

"legendFormat": ".95",

"refId": "B"

},

{

"expr": "histogram_quantile(\n 0.99,\n sum by (le)(\n rate(\n nginx_ingress_controller_response_duration_seconds_bucket{\n ingress =~ \"$ingress\"\n }[1m]\n )\n )\n)",

"interval": "",

"legendFormat": ".99",

"refId": "A"

}

],

"thresholds": [],

"timeFrom": null,

"timeRegions": [],

"timeShift": null,

"title": "Upstream Response Time",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "s",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 12,

"x": 0,

"y": 8

},

"hiddenSeries": false,

"id": 93,

"legend": {

"alignAsTable": true,

"avg": false,

"current": false,

"max": false,

"min": false,

"rightSide": true,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"options": {

"dataLinks": []

},

"percentage": false,

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": " sum by (method)(\n rate(\n nginx_ingress_controller_request_duration_seconds_count{\n ingress =~\"$ingress\"\n }[1m]\n )\n )\n",

"format": "time_series",

"interval": "",

"intervalFactor": 1,

"legendFormat": "{{ method }}",

"refId": "A"

}

],

"thresholds": [],

"timeFrom": null,

"timeRegions": [],

"timeShift": null,

"title": "Request Volume Time by Method",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "none",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "none",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"description": "For each path observed, its median upstream response time",

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 12,

"x": 12,

"y": 8

},

"hiddenSeries": false,

"id": 98,

"legend": {

"alignAsTable": true,

"avg": false,

"current": false,

"max": false,

"min": false,

"rightSide": true,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"options": {

"dataLinks": []

},

"percentage": false,

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": "histogram_quantile(\n .5,\n sum by (le, method)(\n rate(\n nginx_ingress_controller_response_duration_seconds_bucket{\n ingress =~ \"$ingress\"\n }[1m]\n )\n )\n)",

"format": "time_series",

"interval": "",

"intervalFactor": 1,

"legendFormat": "{{ method }}",

"refId": "A"

}

],

"thresholds": [],

"timeFrom": null,

"timeRegions": [],

"timeShift": null,

"title": "Median Upstream Response Time by Method",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "s",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"description": "Percentage of 4xx and 5xx responses among all responses.",

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 12,

"x": 0,

"y": 16

},

"hiddenSeries": false,

"id": 100,

"legend": {

"alignAsTable": true,

"avg": false,

"current": false,

"max": false,

"min": false,

"rightSide": true,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"options": {

"dataLinks": []

},

"percentage": false,

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": "sum by (method) (rate(nginx_ingress_controller_request_duration_seconds_count{\n status =~ \"[4-5].*\"\n}[1m])) / sum by (method) (rate(nginx_ingress_controller_request_duration_seconds_count{\n}[1m]))",

"format": "time_series",

"interval": "",

"intervalFactor": 1,

"legendFormat": "{{ method }}",

"refId": "A"

}

],

"thresholds": [],

"timeFrom": null,

"timeRegions": [],

"timeShift": null,

"title": "Response Error Rate by Method",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "percentunit",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 12,

"x": 12,

"y": 16

},

"hiddenSeries": false,

"id": 101,

"legend": {

"alignAsTable": true,

"avg": false,

"current": false,

"max": false,

"min": false,

"rightSide": true,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"options": {

"dataLinks": []

},

"percentage": false,

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": " count (\n rate(\n nginx_ingress_controller_request_duration_seconds_count{\n ingress =~ \"$ingress\",\n status =~\"[4-5].*\",\n }[1m]\n )\n ) by(method, status)\n",

"format": "time_series",

"hide": false,

"interval": "",

"intervalFactor": 1,

"legendFormat": "{{ method }} {{ status }}",

"refId": "A"

}

],

"thresholds": [],

"timeFrom": null,

"timeRegions": [],

"timeShift": null,

"title": "Response Error Volume by Path",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "none",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "none",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"description": "For each path observed, the sum of upstream request time",

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 12,

"x": 0,

"y": 24

},

"hiddenSeries": false,

"id": 102,

"legend": {

"alignAsTable": true,

"avg": false,

"current": false,

"max": false,

"min": false,

"rightSide": true,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"options": {

"dataLinks": []

},

"percentage": false,

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": "sum by (method) (rate(nginx_ingress_controller_response_duration_seconds_sum{}[1m]))",

"format": "time_series",

"interval": "",

"intervalFactor": 1,

"legendFormat": "{{ method }}",

"refId": "A"

}

],

"thresholds": [],

"timeFrom": null,

"timeRegions": [],

"timeShift": null,

"title": "Upstream Time Consumed by Method",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "s",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 12,

"x": 12,

"y": 24

},

"hiddenSeries": false,

"id": 99,

"legend": {

"alignAsTable": true,

"avg": false,

"current": false,

"max": false,

"min": false,

"rightSide": true,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"options": {

"dataLinks": []

},

"percentage": false,

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": "sum (\n rate (\n nginx_ingress_controller_response_size_sum {\n ingress =~ \"$ingress\",\n }[1m]\n )\n) by (method) / sum (\n rate(\n nginx_ingress_controller_response_size_count {\n ingress =~ \"$ingress\",\n }[1m]\n )\n) by (method)\n",

"format": "time_series",

"hide": false,

"instant": false,

"interval": "",

"intervalFactor": 1,

"legendFormat": "{{ method }}",

"refId": "D"

},

{

"expr": " sum (rate(nginx_ingress_controller_response_size_bucket{\n namespace =~ \"$namespace\",\n ingress =~ \"$ingress\",\n }[1m])) by (le)\n",

"format": "time_series",

"hide": true,

"intervalFactor": 1,

"legendFormat": "{{le}}",

"refId": "A"

}

],

"thresholds": [],

"timeFrom": null,

"timeRegions": [],

"timeShift": null,

"title": "Average response size by Method",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "decbytes",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"fill": 1,

"gridPos": {

"h": 9,

"w": 24,

"x": 0,

"y": 32

},

"id": 104,

"legend": {

"alignAsTable": true,

"avg": false,

"current": false,

"max": false,

"min": false,

"rightSide": true,

"show": true,

"sideWidth": 200,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"percentage": false,

"pointradius": 5,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": "topk(20, avg by(method, path) (rate(rest_api_time_seconds_sum[600m]) / rate(rest_api_time_seconds_count[600m])))",

"format": "time_series",

"intervalFactor": 1,

"legendFormat": "{{ method }} : {{ path }}",

"refId": "A"

}

],

"thresholds": [],

"timeFrom": null,

"timeShift": null,

"title": "Top 20 API Request Latency",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "s",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": "prometheus",

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 24,

"x": 0,

"y": 41

},

"hiddenSeries": false,

"id": 96,

"legend": {

"avg": false,

"current": false,

"max": false,

"min": false,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"links": [],

"nullPointMode": "connected",

"options": {

"dataLinks": []

},

"percentage": false,

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": "sum (\n rate(\n nginx_ingress_controller_ingress_upstream_latency_seconds_sum {\n ingress =~ \"$ingresss\",\n }[1m]\n)) / sum (\n rate(\n nginx_ingress_controller_ingress_upstream_latency_seconds_count {\n ingress =~ \"$ingresss\",\n }[1m]\n )\n)\n",

"format": "time_series",

"hide": false,

"instant": false,

"interval": "",

"intervalFactor": 1,

"legendFormat": "{{ ingresss }}",

"refId": "B"

}

],

"thresholds": [],

"timeFrom": null,

"timeRegions": [],

"timeShift": null,

"title": "Upstream Service Latency",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "s",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

}

],

"refresh": "30s",

"schemaVersion": 16,

"style": "dark",

"tags": [

"nginx"

],

"templating": {

"list": [

{

"allValue": ".*",

"current": {

"text": "All",

"value": "$__all"

},

"datasource": "prometheus",

"definition": "label_values(nginx_ingress_controller_requests, ingress) ",

"hide": 0,

"includeAll": true,

"label": "ingress",

"multi": false,

"name": "ingress",

"options": [],

"query": "label_values(nginx_ingress_controller_requests{namespace=~\"$namespace\",controller_class=~\"$controller_class\",controller=~\"$controller\"}, ingress) ",

"refresh": 1,

"regex": "",

"skipUrlSync": false,

"sort": 2,

"tagValuesQuery": "",

"tags": [],

"tagsQuery": "",

"type": "query",

"useTags": false

},

{

"allValue": ".*",

"current": {

"text": "All",

"value": "$__all"

},

"datasource": "prometheus",

"hide": 0,

"includeAll": true,

"label": "Controller",

"multi": false,

"name": "controller",

"options": [],

"query": "label_values(nginx_ingress_controller_config_hash{namespace=~\"$namespace\",controller_class=~\"$controller_class\"}, controller_pod) ",

"refresh": 1,

"regex": "",

"sort": 0,

"tagValuesQuery": "",

"tags": [],

"tagsQuery": "",

"type": "query",

"useTags": false

},

{

"allValue": ".*",

"current": {

"text": "All",

"value": "$__all"

},

"datasource": "prometheus",

"hide": 0,

"includeAll": true,

"label": "Controller Class",

"multi": false,

"name": "controller_class",

"options": [],

"query": "label_values(nginx_ingress_controller_config_hash{namespace=~\"$namespace\"}, controller_class) ",

"refresh": 1,

"regex": "",

"sort": 0,

"tagValuesQuery": "",

"tags": [],

"tagsQuery": "",

"type": "query",

"useTags": false

},

{

"allValue": ".*",

"current": {

"text": "All",

"value": "$__all"

},

"datasource": "prometheus",

"hide": 0,

"includeAll": true,

"label": "namespace",

"multi": false,

"name": "namespace",

"options": [],

"query": "label_values(nginx_ingress_controller_config_hash, controller_namespace)",

"refresh": 1,

"regex": "",

"sort": 0,

"tagValuesQuery": "",

"tags": [],

"tagsQuery": "",

"type": "query",

"useTags": false

},

{

"allValue": ".*",

"current": {

"text": "All",

"value": "$__all"

},

"datasource": "prometheus",

"hide": 0,

"includeAll": true,

"label": "ingresss",

"multi": false,

"name": "ingresss",

"options": [],

"query": "label_values(nginx_ingress_controller_ingress_upstream_latency_seconds_sum{namespace=~\"$namespace\",controller_class=~\"$controller_class\"}, ingress) ",

"refresh": 1,

"regex": "",

"sort": 0,

"tagValuesQuery": "",

"tags": [],

"tagsQuery": "",

"type": "query",

"useTags": false

}

]

},

"time": {

"from": "now-15m",

"to": "now"

},

"timepicker": {

"refresh_intervals": [

"5s",

"10s",

"30s",

"2m",

"5m",

"15m",

"30m",

"1h",

"2h",

"1d"

],

"time_options": [

"5m",

"15m",

"1h",

"6h",

"12h",

"24h",

"2d",

"7d",

"30d"

]

},

"timezone": "browser",

"title": "Request Handling Performance",

"uid": "4GFbkOsZk",

"version": 1

}

You can set up a PVC for your Grafana data.

See here: https://review.opendev.org/#/c/722396/2/magnum/drivers/common/templates/kubernetes/helm/prometheus-operator.sh
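For reference, a minimal sketch of what such a claim could look like. It reuses the storage class, access mode and size from the storageSpec in the original report; the claim name and namespace are placeholders I made up, not values from this thread or from the linked script.

```yaml
# Sketch only: name and namespace are hypothetical placeholders.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: grafana-storage        # hypothetical claim name
  namespace: monitoring        # hypothetical namespace
spec:
  storageClassName: px-repl1   # class from the storageSpec in the original report
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 2Gi
```

The Grafana Deployment would then need a volume that references this claim, mounted at Grafana's data directory (/var/lib/grafana by default). Note that the original report already shows grafana.db surviving restarts, so a claim on its own may not be enough to bring the dashboards back.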

I did not use Helm to deploy the Prometheus operator. To confirm: I deleted the PVC and the Deployment and redeployed Grafana from scratch, but the result is the same. I still don't see the imported dashboards. The dashboard name and JSON are above ("Request Handling Performance").

Most helpful comment

I've got the PersistentVolume created, and the users and other items are persisted, but not the dashboards or data sources. This is really a pain; I'd love to not have to worry about my dashboards disappearing when the pod is restarted.