PIConGPU: ADIOS for 3D data

I have results for a 3D model obtained through ADIOS.

For 2D structures I could read, for example, the electron charge density in Python with a function like:

    import openpmd_api as io

    def get_electronsCharge(series, iteration, coord=io.Mesh_Record_Component.SCALAR):
        it = series.iterations[iteration]
        mesh = it.meshes['e_chargeDensity']
        component = mesh[coord]
        factor = component.unit_SI
        data = component[()]  # schedules the read of the whole dataset
        series.flush()        # the array holds valid values only after flushing
        return data * factor, factor

but I don't know how to do this for 3D data files. I suspect I need to replace coord=io.Mesh_Record_Component.SCALAR, but I couldn't find any documentation on it. Could you help, please?

For comparison, when working with HDF5 files in the past I could use a call like ts.get_field(iteration=it, field='e_chargeDensity', slice_across='z', slice_relative_position=cutZ), with cutZ = 0, to get a slice of data through the middle of the simulation domain.

Thank you

Cristian


Hey Cristian, it seems you are already using openPMD-api or openPMD-viewer? (I am just guessing from what I see in your code snippet.)

Neither tool belongs (directly) to PIConGPU; both are separate projects. We can therefore only be of limited help. See the respective repositories at @openPMD.

For your specific problem, I would recommend having a look at the second of the Jupyter tutorial notebooks, 2_Specific-field-geometries.ipynb, of the openPMD/openPMD-viewer project (https://github.com/openPMD/openPMD-viewer/tree/dev/tutorials). It explicitly shows how to load 3D field data sets using openPMD-viewer. Whether they are in ADIOS or HDF5 format does not matter.

Does this help already?
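For the openPMD-api route used in the question, a scalar record such as e_chargeDensity is, as far as I know, still accessed through io.Mesh_Record_Component.SCALAR in 3D; what changes is that the dataset has three axes, so a slice has to be selected explicitly. The following is only a minimal sketch under that assumption. The helper names load_slice and relative_to_index are made up for illustration, the mapping of the relative position imitates the slice_relative_position convention (0 = middle), and the axis index must be checked against the axis order of your files.

```python
def relative_to_index(n_cells, relative_position):
    """Map a position in [-1, 1] (0 = middle) to a cell index in [0, n_cells - 1]."""
    if not -1.0 <= relative_position <= 1.0:
        raise ValueError("relative_position must lie in [-1, 1]")
    return int(round(0.5 * (relative_position + 1.0) * (n_cells - 1)))


def load_slice(series, component, axis, relative_position=0.0):
    """Load one 2D slice of a 3D record component, scaled to SI units.

    `series` and `component` are expected to behave like an openPMD-api
    Series and Mesh_Record_Component: `component.shape`, `component.unit_SI`,
    slicing via `component[...]`, and `series.flush()`.
    """
    shape = tuple(component.shape)
    selection = [slice(None)] * len(shape)
    selection[axis] = relative_to_index(shape[axis], relative_position)
    data = component[tuple(selection)]  # only schedules the read
    series.flush()                      # the buffer is filled here
    return data * component.unit_SI


# Hypothetical usage (file pattern and iteration number are placeholders):
# import openpmd_api as io
# series = io.Series("simData_%T.bp", io.Access.read_only)
# mesh = series.iterations[100].meshes["e_chargeDensity"]
# rho = load_slice(series, mesh[io.Mesh_Record_Component.SCALAR], axis=0)
```

Reading only the selected slice avoids loading the whole 3D volume into memory. Depending on the openPMD-api version, the returned array may keep a length-1 axis for the sliced dimension, and the read-only access flag may be spelled differently.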

@steindev Thank you for your reply. I will check it in detail. Meanwhile, I have another problem: the --adios.period option is not recognized. All I did was fetch the LWFA example from the distribution and add the following to the 1.cfg file:

TBG_adios="--adios.period 100 --adios.file simData --adios.source 'species_all,fields_all' --adios.disable-meta 0 \
           --adios.compression blosc:threshold=2048,shuffle=bit,lvl=1,threads=8,compressor=zstd"

and, accordingly, include the adios plugin in TBG_plugins="..."

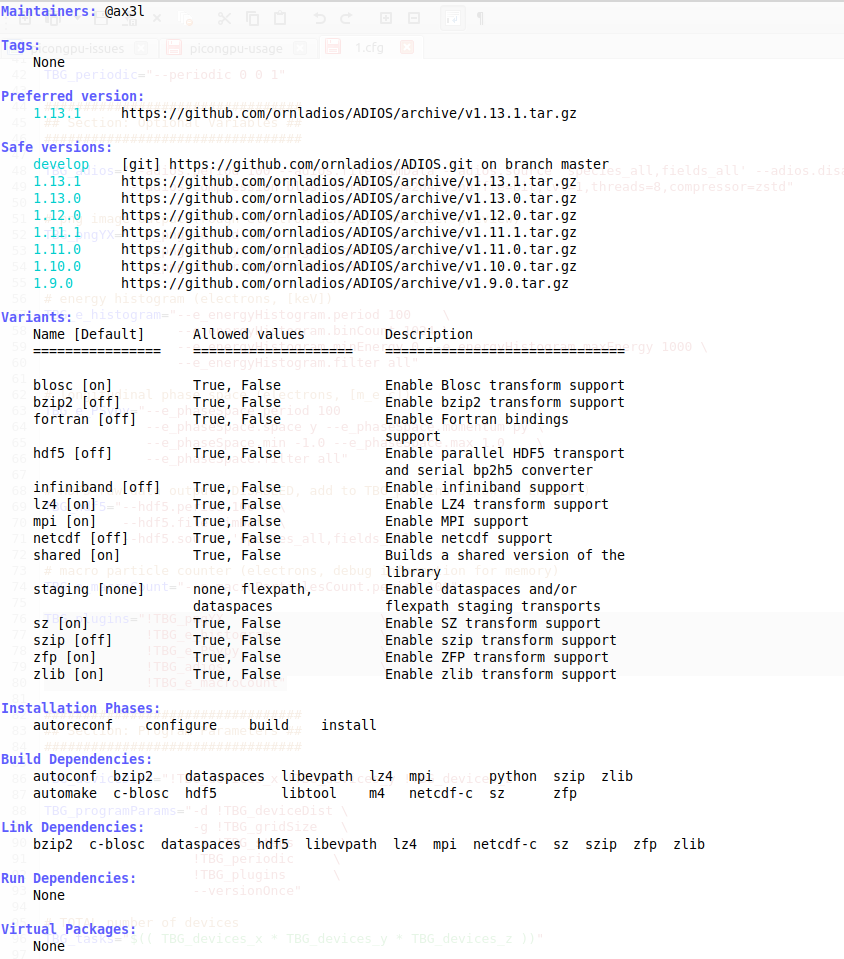

I load the code with spack load picongpu +adios %[email protected], but I still get ADIOS: NOTFOUND, although adios is present in the Spack folder.

Hello @cbontoiu. Unfortunately, I could not reproduce this on our system. What I did was

spack install picongpu +adios

spack load picongpu +adios

with the fresh contents of the spack-repo.

I assume you did the same? Could you check the part of the spack install output that follows ==> Installing adios and post it here?

For me it looked like

==> Installing adios

==> No binary for adios found: installing from source

==> adios: Executing phase: 'autoreconf'

==> adios: Executing phase: 'configure'

==> adios: Executing phase: 'build'

==> adios: Executing phase: 'install'

[+] /home/bastra54/src/spack/opt/spack/linux-centos7-broadwell/gcc-7.3.0/adios-1.13.1-omek3vzsiv3i5xykecxt567bqufbodxy

[+] /home/bastra54/src/spack/opt/spack/linux-centos7-broadwell/gcc-7.3.0/hdf5-1.10.6-ipavsffhywrthtmrjtdlgdipw32wp6vg

[+] /home/bastra54/src/spack/opt/spack/linux-centos7-broadwell/gcc-7.3.0/libsplash-1.7.0-br6ozbg3pwgzp23y56otzfiaim56sh7o

@sbastrakov Hello,

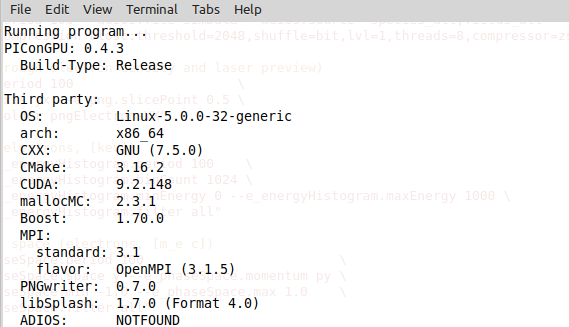

I reinstalled the whole Spack folder with all dependencies and adios. It works now and I am satisfied. Still, you might want to note that a misleading message appears just as the simulation starts running, as shown below:

(base) quasar@quasar:~/PIC_INPUT/PICONGPU/TESTS/myLaserWakefield$ tbg -s bash -c etc/picongpu/1.cfg -t etc/picongpu/bash/mpiexec.tpl /media/quasar/RawDataDisk/PICONGPU/TESTS/myLaserWakefield_02

Running program...

using default compiler

==> Error: Spec '[email protected]%[email protected]+adios+hdf5+isaac+png backend=cuda cudacxx=nvcc arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+blosc~bzip2~fortran~hdf5~infiniband+lz4+mpi~netcdf+shared+sz~szip+zfp+zlib patches=01113e9efb929d71c28bf33cc8b7f215d85195ec700e99cb41164e2f8f830640,8ae17f655248e87cbab1d1ed794e15364a38d2f5f8d971b1086702f72d79bd42,d24b79b795f66e40ddcd331ea4be896ac9c393d6f68f4318616d23928b0694e9 staging=none arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+atomic+chrono~clanglibcpp~container~context~coroutine+date_time~debug+exception~fiber+filesystem+graph~icu+iostreams+locale+log+math~mpi+multithreaded~numpy~pic+program_options~python+random+regex+serialization+shared+signals~singlethreaded+system~taggedlayout+test+thread+timer~versionedlayout+wave cxxstd=11 visibility=hidden arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+shared arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+avx2~ipo build_type=RelWithDebInfo patches=cd40604a26157a0e018ea496cf3267e116e6ec5ff80a7d1cef11b841c154c388 arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~doc+ncurses+openssl+ownlibs~qt patches=bf695e3febb222da2ed94b3beea600650e4318975da90e4a71d6f31a6d5d8c3d arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+libbsd arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+bzip2+curses+git~libunistring+libxml2+tar+xz arch=linux-linuxmint19-skylake ^[email protected]%[email 
protected]~cxx~debug~fortran~hl~java+mpi+pic+shared~szip~threadsafe api=none arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~ipo build_type=RelWithDebInfo arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+cuda~ipo build_type=RelWithDebInfo arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~ipo build_type=RelWithDebInfo arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~ipo+shared build_type=RelWithDebInfo arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] patches=26f26c6f29a7ce9bf370ad3ab2610f99365b4bdd7b82e7c31df41a3370d685c0 arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~ipo+mpi build_type=RelWithDebInfo patches=669608721dfce0ada7cef1ac84344352791a8916b7bb98ca8a0d4e6d4670e744 arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~ipo build_type=RelWithDebInfo arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~python arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+sigsegv patches=3877ab548f88597ab2327a2230ee048d2d07ace1062efe81fc92e91b7f39cd00,fc9b61654a3ba1a8d6cd78ce087e7c96366c290bc8d2c299f09828d793b853c8 arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~symlinks+termlib arch=linux-linuxmint19-skylake ^[email protected]%[email 
protected]~atomics~cuda~cxx~cxx_exceptions+gpfs~java~legacylaunchers~lustre~memchecker~pmi~singularity~sqlite3+static~thread_multiple+vt+wrapper-rpath fabrics=none schedulers=none arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+systemcerts arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+cpanm+shared+threads arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~ipo build_type=RelWithDebInfo arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+bz2+ctypes+dbm~debug+libxml2+lzma~nis~optimizations+pic+pyexpat+pythoncmd+readline+shared+sqlite3+ssl~tix~tkinter~ucs4+uuid+zlib patches=0d98e93189bc278fbc37a50ed7f183bd8aaf249a8e1670a465f0db6bb4f8cf87 arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~ipo+pic+shared build_type=RelWithDebInfo patches=c9cfecb1f7a623418590cf4e00ae7d308d1c3faeb15046c2e5090e38221da7cd arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+column_metadata+fts~functions~rtree arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~fortran~hdf5~ipo~netcdf~pastri~python~random_access+shared~stats~time_compression build_type=RelWithDebInfo arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected] arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~pic arch=linux-linuxmint19-skylake ^[email protected]%[email protected]~aligned~fasthash~ipo~profile+shared~strided~twoway bsws=64 build_type=RelWithDebInfo arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+optimize+pic+shared arch=linux-linuxmint19-skylake ^[email protected]%[email protected]+pic 
arch=linux-linuxmint19-skylake' matches no installed packages.

PIConGPU: 0.5.0

Build-Type: Release

Third party:

OS: Linux-5.0.0-32-generic

arch: x86_64

CXX: GNU (7.5.0)

CMake: 3.18.4

CUDA: 9.2.148

mallocMC: 2.3.1

Boost: 1.70.0

MPI:

standard: 3.1

flavor: OpenMPI (3.1.5)

PNGwriter: 0.7.0

libSplash: 1.7.0 (Format 4.0)

ADIOS: 1.13.1

PIConGPUVerbose PHYSICS(1) | Sliding Window is OFF

PIConGPUVerbose PHYSICS(1) | used Random Number Generator: RNGProvider3XorMin seed: 42

PIConGPUVerbose PHYSICS(1) | Courant c*dt <= 1.00229 ? 1

PIConGPUVerbose PHYSICS(1) | Resolving plasma oscillations?

Estimates are based on DensityRatio to BASE_DENSITY of each species

(see: density.param, speciesDefinition.param).

It and does not cover other forms of initialization

PIConGPUVerbose PHYSICS(1) | species e: omega_p * dt <= 0.1 ? 0.0247974

PIConGPUVerbose PHYSICS(1) | y-cells per wavelength: 18.0587

PIConGPUVerbose PHYSICS(1) | macro particles per device: 4718592

PIConGPUVerbose PHYSICS(1) | typical macro particle weighting: 6955.06

PIConGPUVerbose PHYSICS(1) | UNIT_SPEED 2.99792e+08

PIConGPUVerbose PHYSICS(1) | UNIT_TIME 1.39e-16

PIConGPUVerbose PHYSICS(1) | UNIT_LENGTH 4.16712e-08

PIConGPUVerbose PHYSICS(1) | UNIT_MASS 6.33563e-27

PIConGPUVerbose PHYSICS(1) | UNIT_CHARGE 1.11432e-15

PIConGPUVerbose PHYSICS(1) | UNIT_EFIELD 1.22627e+13

PIConGPUVerbose PHYSICS(1) | UNIT_BFIELD 40903.8

PIConGPUVerbose PHYSICS(1) | UNIT_ENERGY 5.69418e-10

initialization time: 10sec 719msec = 10 sec

0 % = 0 | time elapsed: 2sec 307msec | avg time per step: 0msec

4 % = 102 | time elapsed: 6sec 132msec | avg time per step: 13msec

9 % = 204 | time elapsed: 9sec 981msec | avg time per step: 13msec

14 % = 306 | time elapsed: 14sec 549msec | avg time per step: 14msec

19 % = 408 | time elapsed: 19sec 359msec | avg time per step: 15msec

24 % = 510 | time elapsed: 23sec 499msec | avg time per step: 16msec

29 % = 612 | time elapsed: 27sec 674msec | avg time per step: 16msec

34 % = 714 | time elapsed: 32sec 447msec | avg time per step: 17msec

39 % = 816 | time elapsed: 37sec 394msec | avg time per step: 17msec

44 % = 918 | time elapsed: 41sec 622msec | avg time per step: 17msec

49 % = 1020 | time elapsed: 46sec 49msec | avg time per step: 18msec

54 % = 1122 | time elapsed: 50sec 404msec | avg time per step: 19msec

59 % = 1224 | time elapsed: 55sec 7msec | avg time per step: 20msec

64 % = 1326 | time elapsed: 59sec 787msec | avg time per step: 23msec

69 % = 1428 | time elapsed: 1min 4sec 645msec | avg time per step: 24msec

74 % = 1530 | time elapsed: 1min 9sec 466msec | avg time per step: 24msec

79 % = 1632 | time elapsed: 1min 15sec 125msec | avg time per step: 25msec

84 % = 1734 | time elapsed: 1min 20sec 394msec | avg time per step: 21msec

89 % = 1836 | time elapsed: 1min 25sec 342msec | avg time per step: 20msec

94 % = 1938 | time elapsed: 1min 30sec 329msec | avg time per step: 20msec

99 % = 2040 | time elapsed: 1min 34sec 666msec | avg time per step: 21msec

calculation simulation time: 1min 34sec 829msec = 94 sec

full simulation time: 1min 45sec 709msec = 105 sec

(base) quasar@quasar:~/PIC_INPUT/PICONGPU/TESTS/myLaserWakefield$

Another thing is that I compared the ADIOS and HDF5 runtimes for the LWFA model:

| data type | total size | initialization time | calculation time |
|-----------|------------|---------------------|------------------|
| ADIOS     | 2.8 GB     | 134 sec             | 81 sec           |
| HDF5      | 7 GB       | 10 sec              | 94 sec           |

I was just surprised by how long the initialization takes when working with ADIOS.
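For anyone who wants to repeat such a comparison over several runs, the timing summary that PIConGPU prints at the end of a run (as in the log above) can be collected with a few lines of Python. This is only a sketch: the function name parse_timings is made up here, and the regular expression assumes the summary lines look like the ones in the posted output.

```python
import re

# Matches the three summary lines and captures the rounded value in seconds.
_TIMING = re.compile(
    r"^\s*(initialization time|calculation\s+simulation time|full simulation time)"
    r":.*=\s*(\d+)\s*sec\s*$",
    re.MULTILINE,
)


def parse_timings(log_text):
    """Return a dict mapping each timing label to its value in whole seconds."""
    timings = {}
    for label, seconds in _TIMING.findall(log_text):
        key = " ".join(label.split())  # normalize repeated spaces in the label
        timings[key] = int(seconds)
    return timings


# Lines copied from the run shown above.
example_log = """\
initialization time: 10sec 719msec = 10 sec
99 % = 2040 | time elapsed: 1min 34sec 666msec | avg time per step: 21msec
calculation simulation time: 1min 34sec 829msec = 94 sec
full simulation time: 1min 45sec 709msec = 105 sec
"""

timings = parse_timings(example_log)
print(timings)
# {'initialization time': 10, 'calculation simulation time': 94, 'full simulation time': 105}
```

Running the same parser on the output of an ADIOS run and an HDF5 run gives the two rows of the table directly.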

Interesting. @steindev @PrometheusPi @n01r by any chance did you observe anything similar?

I don't use HDF5, so I have never compared. I have seen such initialization times when restarting large simulations. The initialization times I usually observe with ADIOS are between half a minute and one minute for multi-GPU simulations.

Hmm, I don't remember any cases where I did an exact comparison of HDF5 and ADIOS.

Suffice it to say that for very large (>TB / output iteration) scenarios the initialization time was always a few minutes but the time spent on I/O was drastically reduced for ADIOS compared to HDF5.

On second thought, I stopped using HDF5 because I had initialization times of more than one hour in a larger simulation. The main cause was probably a bad filesystem, since the delay came from very slow writing of the initial checkpoint/simulation output to the file system. I don't know whether this counts towards the initialization time; I think not. However, as ADIOS was able to do it in a few minutes, I have used ADIOS exclusively since then.

My impression, although not backed by any quantitative measurements, is that ADIOS was always much faster than HDF5 for me.
