PIConGPU: ADIOS buffer problem

Hi!

I have a problem with buffering with adios/1.13.1.

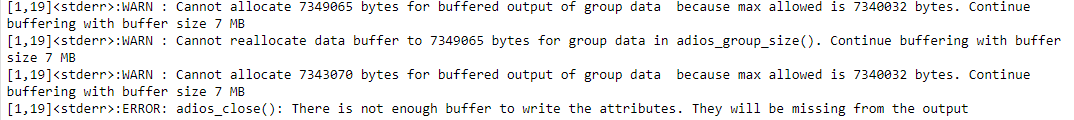

Here is part of the error message:

and path to the files

/bigdata/hplsim/external/mukhar40/ColloidalMelting_TF_I=3.0_1ps.

I tried simply recompiling and resubmitting once, but it didn't help.

This simulation was restarting automatically as was discussed in https://github.com/ComputationalRadiationPhysics/picongpu/issues/2618#issuecomment-394273964

and for some time it worked perfectly but then that error appeared.


The error message seems to originate from here in the adios code.

from common_adios.c

    if (*total_size > fd->buffer_size && fd->bufstate == buffering_ongoing) {
        if (adios_databuffer_resize(fd, *total_size)) {
            log_warn("Cannot reallocate data buffer to %" PRIu64
                     " bytes "
                     "for group %s in adios_group_size(). Continue buffering "
                     "with buffer size %" PRIu64 " MB\n",
                     *total_size, fd->group->name, fd->buffer_size / 1048576L);
        }
    }

The calculation of the adios group buffer size is done around here in PIConGPU.

from ParticleAttributeSize.hpp

    ...
    params->adiosGroupSize += elements * components * sizeof(ComponentType);
    ...
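To make the accumulation above concrete, here is a minimal, self-contained sketch. It is not PIConGPU code: `AttributeInfo`, `estimateGroupSize` and the attribute layout in the usage note are hypothetical names chosen for illustration. Only the formula `elements * components * sizeof(ComponentType)` is taken from the snippet.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical description of one particle attribute (not a PIConGPU type).
struct AttributeInfo
{
    std::size_t components;    // e.g. 3 for a 3D position or momentum
    std::size_t componentSize; // sizeof(ComponentType), in bytes
};

// Sum elements * components * sizeof(ComponentType) over all attributes,
// mirroring the accumulation into params->adiosGroupSize.
inline std::uint64_t estimateGroupSize(
    std::uint64_t elements,
    std::vector<AttributeInfo> const& attributes)
{
    std::uint64_t groupSize = 0;
    for (AttributeInfo const& attr : attributes)
        groupSize += elements * attr.components * attr.componentSize;
    return groupSize;
}
```

For example, 1,000,000 particles with two 3-component and one 1-component 4-byte attributes give 1,000,000 * 7 * 4 = 28,000,000 bytes. Any attribute left out of the list makes the estimate too small by exactly its share.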

Any ideas @psychocoderHPC ?

@NastasiaM Do you run the job on our k20 or k80? It looks like the host is running out of CPU memory.

On k20

We are probably just missing some attributes in the buffer estimation.

Can you change this line:

to

size_t buffer_mem=static_cast<size_t>(1.2 * static_cast<float_64>(writeBuffer_in_MiB));

?
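The suggested line scales the estimated buffer size by a safety factor and truncates the result back to an integer. A minimal sketch of that arithmetic, assuming `float_64` is an alias for `double`; the helper name `withHeadroom` is hypothetical and not part of PIConGPU:

```cpp
#include <cstddef>

// Assumption: float_64 is an alias for double.
using float_64 = double;

// Hypothetical helper: scale a buffer size (in MiB) by a headroom factor.
// The static_cast truncates toward zero, exactly as in the suggested line.
inline std::size_t withHeadroom(std::size_t writeBuffer_in_MiB, float_64 factor)
{
    return static_cast<std::size_t>(factor * static_cast<float_64>(writeBuffer_in_MiB));
}
```

Because of the truncation, the result is rounded down to whole MiB, so small inputs gain less headroom than the factor suggests.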

> It looks like the host is running out of CPU memory.

Could also be the case!

I honestly have no experience in this matter, so this may very well be nonsense. But doesn't the provided error message indicate that the buffer size we request is larger than the maximum allowed in ADIOS? If so, it can probably be fixed by capping params->adiosGroupSize at 7 MB. Currently there seems to be no hard cap on this value.

Don't forget to recompile after the change and replace the old binary in your run directory with the new <paramSet>/bin/picongpu binary if you try @ax3l's solution. (You can also rename the old one)

That is because you're restarting automatically from the same simulation directory.

@sbastrakov those are exactly the lines I linked, which use the value from threadParams->adiosGroupSize.

But it looks to me as if the malloc call inside adios_databuffer_resize fails, indicating insufficient memory on the CPU, as @psychocoderHPC stated.

https://github.com/ornladios/ADIOS/blob/v1.13.1/src/core/buffer.c#L58

Uh, it's actually a hard-coded param in ADIOS that we cap:

https://github.com/ornladios/ADIOS/blob/v1.13.1/src/core/buffer.c#L64

https://github.com/ornladios/ADIOS/blob/v1.13.1/src/core/buffer.c#L101-L104

https://github.com/ornladios/ADIOS/blob/v1.13.1/src/core/buffer.c#L30-L38

We set this var with the lines I linked above:

https://github.com/ComputationalRadiationPhysics/picongpu/blob/c3054c36ef5cc482233b4779fc9d8dbaed8d1967/src/picongpu/include/plugins/adios/ADIOSWriter.hpp#L1052-L1058

We could also just comment out the adios_set_max_buffer_size(buffer_mem); - it's a large default value now and optional.

> We could also just comment out the adios_set_max_buffer_size(buffer_mem); - it's a large default value now and optional.

Yes, I think that should work. It was only required in very old ADIOS versions.

@NastasiaM could you please comment out the line @ax3l suggested, recompile, and let us know whether it works?

OK, I commented out this line, compiled, and submitted, and now I'm waiting to see whether something crashes. I will write to you when I see something.

@NastasiaM how did it work?

Looks like everything is fine now. Thanks!

Ok, will update the mainline then!

Is there any chance that we could crash later again if we, for instance, create a species with a very large number of attributes?

Nope, nothing to worry about.

The max size has been sane since ADIOS 1.10, and we no longer allocate the buffer explicitly (via adios_group_size).

Ref: https://github.com/ornladios/ADIOS/commit/9fae0d12fea535a4366e81c0c04d7a3be54c3179

ADIOS re-allocates on the fly on adios_write.
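As a rough mental model of "grow the buffer on write, bounded by a maximum", consider the toy class below. It is a hypothetical illustration only and does not reproduce ADIOS internals; the real logic lives in the buffer.c lines linked earlier in this thread.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Toy model of a write buffer that grows on demand up to a fixed cap.
// Hypothetical class for illustration; not ADIOS code.
class GrowingBuffer
{
public:
    explicit GrowingBuffer(std::size_t maxBytes) : m_maxBytes(maxBytes) {}

    // Append `size` bytes. Returns false and leaves the buffer unchanged
    // if the write would exceed the cap.
    bool write(void const* data, std::size_t size)
    {
        // m_bytes.size() never exceeds m_maxBytes, so this cannot underflow.
        if (size > m_maxBytes - m_bytes.size())
            return false;
        auto const* first = static_cast<std::uint8_t const*>(data);
        // std::vector reallocates internally whenever capacity runs out.
        m_bytes.insert(m_bytes.end(), first, first + size);
        return true;
    }

    std::size_t size() const { return m_bytes.size(); }

private:
    std::size_t m_maxBytes;
    std::vector<std::uint8_t> m_bytes;
};
```

In this model no up-front size estimate is needed: a write only fails once the cap itself is reached, which is why a too-small manual cap can cause errors that a generous default avoids.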

Hi!

Now I have another problem: it looks like at some point the simulation output cannot be written, and the simulation is stuck. It should restart after each cycle (about 1 hour), but now it doesn't restart and doesn't give any output. And there are no error messages either! Any ideas what it could be?

    $ df -h /bigdata/hplsim/production/
    Filesystem  Size  Used  Avail  Use%  Mounted on
    bigdata     725T  680T    46T   94%  /bigdata

We wouldn't be running into quota issues, would we?

The soft limit for external users is 80T, but I can't check because I don't have permission.

    $ df -h /bigdata/hplsim/external/
    Filesystem  Size  Used  Avail  Use%  Mounted on
    bigdata      85T   74T    12T   87%  /bigdata

At first I thought ../external used the same space as ../production but I don't think that's true.

Well, the quota command doesn't work. But you can always do a du -hs <your/parent/directory>. It takes a while but you can check that way.

Can you qdel the simulation and check stderr/stdout? Sounds to me like running out of memory during the sim.

Done - nothing new in stderr/stdout. Should I send it to you?

And I checked my own disk usage: 8.2 T out of my 10 T limit.

This issue is solved with #2670.

@NastasiaM I would close this issue because the initial problem is solved. If you have other issues, please open one issue per problem so that we don't mix different topics. It is no problem to work on different issues at the same time.
