Maskrcnn-benchmark: RuntimeError: CUDA error: out of memory Singal GPU Navida 1080TI

❓ Questions and Help

Hello,

I am trying to train the

model on a self created dataset ,

and I keep getting the following error,

I have resized the images to be no larger then 400*400

tried to mask as small as I can.

However I keep receiving the following error: RuntimeError: CUDA error: out of memory

Traceback (most recent call last):

File "tools/train_net.py", line 187, in

main()

File "tools/train_net.py", line 180, in main

model = train(cfg, args.local_rank, args.distributed)

File "tools/train_net.py", line 35, in train

model.to(device)

File "/home/portablelinux/anaconda3/envs/maskrcnnHeadstones/lib/python3.7/site-packages/torch/nn/modules/module.py", line 384, in to

return self._apply(convert)

File "/home/portablelinux/anaconda3/envs/maskrcnnHeadstones/lib/python3.7/site-packages/torch/nn/modules/module.py", line 190, in _apply

module._apply(fn)

File "/home/portablelinux/anaconda3/envs/maskrcnnHeadstones/lib/python3.7/site-packages/torch/nn/modules/module.py", line 190, in _apply

module._apply(fn)

File "/home/portablelinux/anaconda3/envs/maskrcnnHeadstones/lib/python3.7/site-packages/torch/nn/modules/module.py", line 190, in _apply

module._apply(fn)

[Previous line repeated 1 more time]

File "/home/portablelinux/anaconda3/envs/maskrcnnHeadstones/lib/python3.7/site-packages/torch/nn/modules/module.py", line 196, in _apply

param.data = fn(param.data)

File "/home/portablelinux/anaconda3/envs/maskrcnnHeadstones/lib/python3.7/site-packages/torch/nn/modules/module.py", line 382, in convert

return t.to(device, dtype if t.is_floating_point() else None, non_blocking)

RuntimeError: CUDA error: out of memory

I am runinig the model : e2e_mask_rcnn_X_101_32x8d_FPN_1x

with the following parameters:

(all the other parmater in the config file has not been modified )

SOLVER:

BASE_LR: 0.0025

BIAS_LR_FACTOR: 2

CHECKPOINT_PERIOD: 2500

GAMMA: 0.1

IMS_PER_BATCH: 1

MAX_ITER: 180000

MOMENTUM: 0.9

STEPS: (0, 30000, 40000)

WARMUP_FACTOR: 0.3333333333333333

WARMUP_ITERS: 500

WARMUP_METHOD: linear

WEIGHT_DECAY: 0.0001

WEIGHT_DECAY_BIAS: 0

I am trying to run with a 18.04 linux ubuntu :

PyTorch version: 1.0.0.dev20190207

Is debug build: No

CUDA used to build PyTorch: 10.0.130

OS: Ubuntu 18.04.2 LTS

GCC version: (Ubuntu 7.3.0-27ubuntu1~18.04) 7.3.0

CMake version: Could not collect

Python version: 3.7

Is CUDA available: Yes

CUDA runtime version: 10.0.130

GPU models and configuration: GPU 0: GeForce GTX 1080 Ti

Nvidia driver version: 410.79

cuDNN version: Probably one of the following:

/usr/lib/x86_64-linux-gnu/libcudnn.so.7.4.2

/usr/lib/x86_64-linux-gnu/libcudnn_static_v7.a

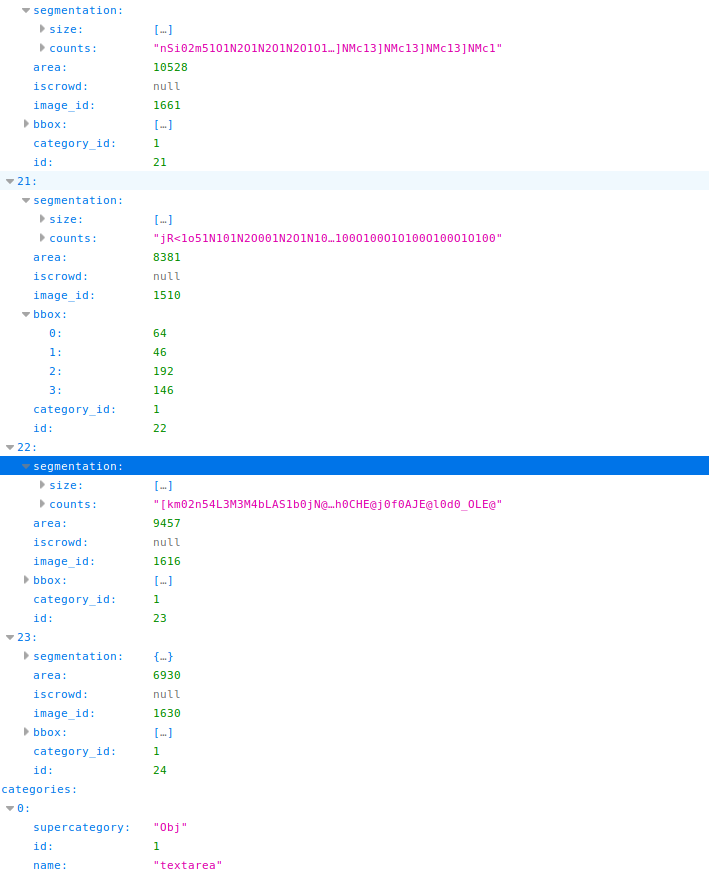

In addition here is a sample of my dataset annotation file:

Thanks for helping !

All 3 comments

You don't need to pre-resize the images in the dataset.

Just change the following parameters.

INPUT:

MIN_SIZE_TRAIN: (800,)

MAX_SIZE_TRAIN: 1333

MIN_SIZE_TEST: 800

MAX_SIZE_TEST: 1333

In your case, you can adjust the MIN_SIZE_TRAIN and MIN_SIZE_TEST to 400 and MAX_SIZE_TRAIN and MAX_SIZE_TEST to 667.

Or you can lower to (300, 500).

@chengyangfu

oddly after changing data format to iscrowded = 0 (no usage of rle ) , and working with polygons instead of masks ,

the training has began successfully, with max mem being 5711.

thanks for your help!

Glad that things work for you now. Closing this issue then

Most helpful comment

You don't need to pre-resize the images in the dataset.

Just change the following parameters.

In your case, you can adjust the

MIN_SIZE_TRAINandMIN_SIZE_TESTto 400 andMAX_SIZE_TRAINandMAX_SIZE_TESTto 667.Or you can lower to (300, 500).