Maskrcnn-benchmark: Strange output and interruption when performing multi-GPU training

❓ Questions and Help

Hi @fmassa, thank you for the great maskrcnn-benchmark.

We have run into a problem. When we perform multi-GPU training with our own network, the following strange output appears (screenshot below):

However, when we train the Faster R-CNN network that you provide on multiple GPUs, the problem does not occur.

Training our own network on a single GPU does not have this problem either.

We have made sure that our parameter settings (batch size, etc.) are appropriate.

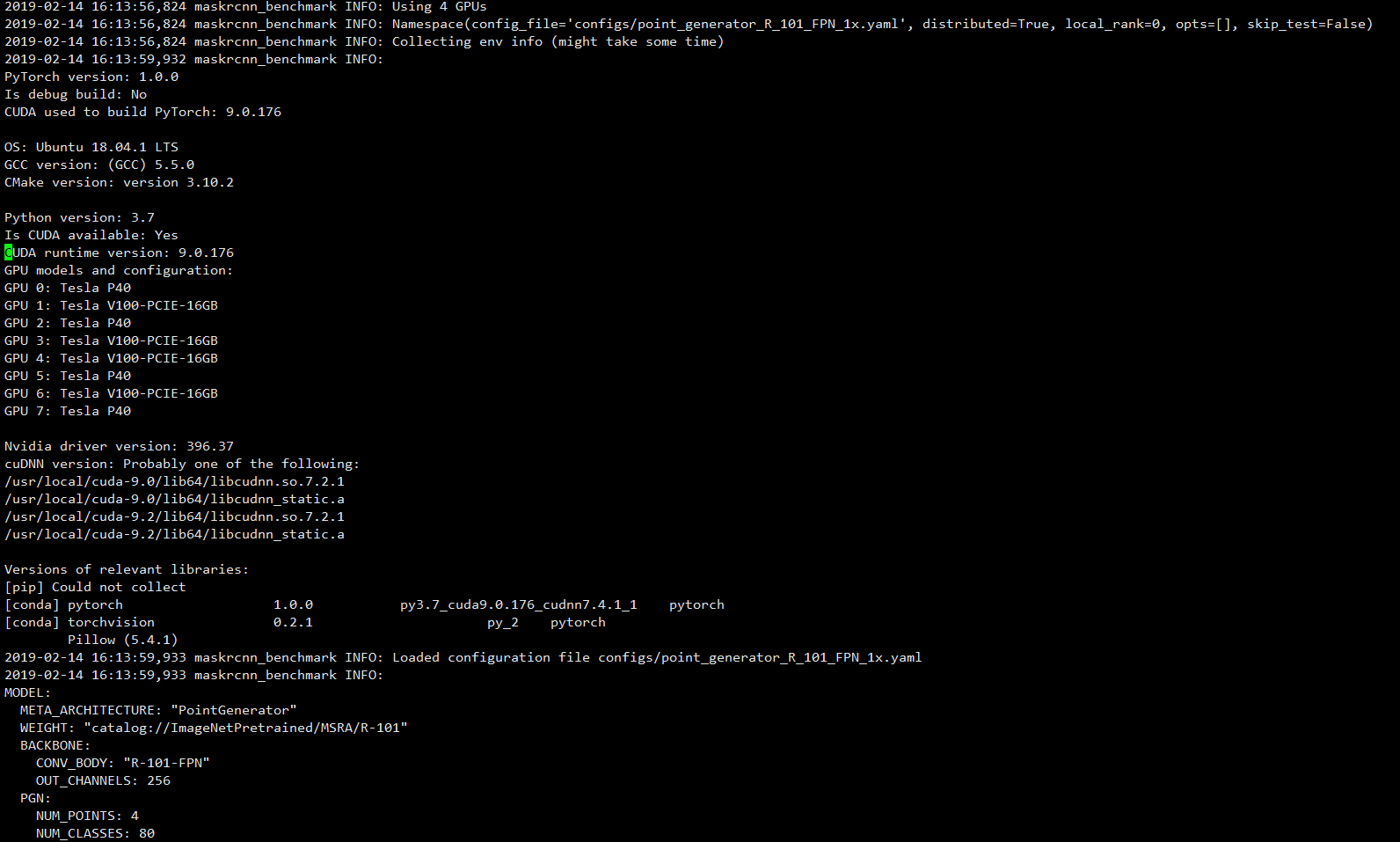

Here is some information:

Thank you! @fmassa


Hmm… I have run into the same problem. Can anyone help?

Hi,

It looks like your model didn't train properly. It might need different learning rates / hyperparameters to train.

I would recommend checking whether the loss decreases over time, and also evaluating the model on the different checkpoints that were generated, to see whether it is actually learning something.
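A minimal sketch of the "check whether the loss decreases" step, assuming the training log uses the maskrcnn-benchmark style of lines such as `eta: ...  iter: 20  loss: 1.2345 (1.3456)  ...` (the exact format is an assumption; adjust the regular expressions if your log differs). The helper names `parse_losses` and `loss_is_decreasing` are hypothetical, and comparing the mean of the first and last few logged values is just one simple heuristic.

```python
import math
import re
from typing import Iterable, List, Tuple

# Assumed log format: "... iter: <N>  loss: <value> (<global avg>) ..."
ITER_RE = re.compile(r"iter: (\d+)")
# Match the total "loss:" field only, not "loss_classifier:" etc.
LOSS_RE = re.compile(r"(?:^|\s)loss: (\S+)")


def parse_losses(lines: Iterable[str]) -> List[Tuple[int, float]]:
    """Extract (iteration, loss) pairs from training log lines."""
    points = []
    for line in lines:
        it = ITER_RE.search(line)
        loss = LOSS_RE.search(line)
        if it and loss:
            points.append((int(it.group(1)), float(loss.group(1))))
    return points


def loss_is_decreasing(points: List[Tuple[int, float]], window: int = 3) -> bool:
    """Heuristic: the mean of the last `window` losses is below the mean of the first."""
    if len(points) < 2 * window:
        raise ValueError("not enough logged iterations to compare")
    losses = [loss for _, loss in points]
    # A NaN or infinite loss means training has diverged.
    if any(math.isnan(v) or math.isinf(v) for v in losses):
        return False
    head = sum(losses[:window]) / window
    tail = sum(losses[-window:]) / window
    return tail < head
```

Running this on each log, together with evaluating the saved checkpoints, gives a quick signal of whether the model is learning at all.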

Hi, @fmassa

It cannot be trained at all. The program is interrupted as soon as training starts, and it prints these strange numbers.

Thanks for your help.

Did you change anything in the implementation?

Also, are you using a custom dataset?

I can't think of any part of the codebase where I deliberately print a tensor, so maybe the code has been modified in some way?

I also ran into this problem when training on two GPUs. @ChenJoya, did you solve it?

@ChenJoya @2678918253 Hi, I have run into a similar bug. I suspect it is caused by the distributed launch: if your model has parameters that are not used in the forward pass, distributed training may trigger this bug.

I think you can check your log for an error message like this:

> **`TypeError: _queue_reduction(): incompatible function arguments. The following argument types are supported:`**
>
> - `(process_group: torch.distributed.ProcessGroup, grads_batch: List[List[at::Tensor]], devices: List[int]) -> Tuple[torch.distributed.Work, at::Tensor]`
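To search a long training log for this error, a small sketch like the following can help. It only looks for the `_queue_reduction(): incompatible function arguments` text quoted above; treating that substring as a sufficient signature is an assumption, and the function names are hypothetical.

```python
from pathlib import Path
from typing import Iterable, List

# Substring of the error quoted in the comment above (assumed to be distinctive).
SIGNATURE = "_queue_reduction(): incompatible function arguments"


def find_queue_reduction_errors(lines: Iterable[str]) -> List[int]:
    """Return the 1-based line numbers of log lines that contain the error."""
    return [i for i, line in enumerate(lines, start=1) if SIGNATURE in line]


def scan_log_file(path: str) -> List[int]:
    """Scan a log file on disk; undecodable bytes are replaced, not fatal."""
    with Path(path).open(encoding="utf-8", errors="replace") as fh:
        return find_queue_reduction_errors(fh)
```

An empty result suggests the interruption has a different cause than the unused-parameter issue described here.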

If you see this error, I recommend two ways to fix it. One is to find the parameters that are not used in your model's forward pass and delete them. The other is to wrap your model with NVIDIA apex.
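For the first suggestion, one way to locate unused parameters is to run a single forward/backward pass on one GPU (or CPU) and list the trainable parameters whose `.grad` is still `None`. The sketch below assumes the model returns either a tensor or a dict of losses, as maskrcnn-benchmark models do in training mode; `find_unused_parameters` and `ToyHead` are hypothetical names used only for illustration.

```python
import torch
import torch.nn as nn


def _to_scalar(output):
    """Reduce a model output (tensor or dict of loss tensors) to one scalar."""
    if isinstance(output, dict):
        return sum(v.sum() for v in output.values())
    return output.sum()


def find_unused_parameters(model: nn.Module, *inputs):
    """Names of trainable parameters that get no gradient from one backward pass."""
    for p in model.parameters():
        p.grad = None  # clear stale gradients so "None" really means "unused"
    _to_scalar(model(*inputs)).backward()
    return [name for name, p in model.named_parameters()
            if p.requires_grad and p.grad is None]


class ToyHead(nn.Module):
    """Hypothetical module with a layer that is defined but never called."""

    def __init__(self):
        super().__init__()
        self.used = nn.Linear(4, 2)
        self.unused = nn.Linear(4, 2)  # never used in forward()

    def forward(self, x):
        return {"loss_toy": self.used(x).pow(2).mean()}


if __name__ == "__main__":
    print(find_unused_parameters(ToyHead(), torch.randn(3, 4)))
```

With a real model, any names this reports are candidates to delete (or to actually use in `forward`) before launching distributed training.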

Thank you!

@Lausannen Thank you, the problem has been solved by following your suggestion!
