Machinelearning: Can I use ML.NET to train a face recognition model?

Hi

I'm new to ML, so this might seem like a stupid question, but I'd like to use C# to train a face recognition model.

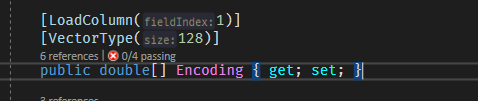

The face detection part would be done by Dlib, which extracts the face encodings (a `double[128]` per face).

So my question is: can I use ML.NET to train a model that takes face vectors as input?

Input would be:

- Label: `string`
- Feature: `double[]`

The trained model should then predict which person a new image shows, once I extract the face encodings from it. (I have about 2,000 people with 2-3 images per person, i.e. 2-3 encodings each.)

Is this possible, and which algorithms are suitable for this task?

Thanks for any answers,

Mati


While you could run this in ML.NET as a multi-class classification problem, you may instead want to write your own distance calculation, for instance L2 (Euclidean) distance. Your output class is the person whose training-image embedding has the lowest distance to the embedding of the image you are scoring.
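A minimal sketch of that nearest-neighbor lookup, written in Python for brevity (the plain loops port directly to C# over `double[]`). It assumes the training data is a list of `(label, encoding)` pairs with one entry per training image; `l2_distance` and `predict_person` are hypothetical helpers, not part of ML.NET or Dlib.

```python
import math
from typing import List, Sequence, Tuple

def l2_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean (L2) distance between two equal-length embeddings."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def predict_person(gallery: List[Tuple[str, Sequence[float]]],
                   query: Sequence[float]) -> Tuple[str, float]:
    """Return the label of the closest training embedding and its distance."""
    best_label, best_encoding = min(gallery, key=lambda item: l2_distance(item[1], query))
    return best_label, l2_distance(best_encoding, query)

# Toy 3-dimensional "embeddings"; real Dlib encodings have 128 values.
gallery = [
    ("alice", [0.1, 0.2, 0.3]),
    ("alice", [0.1, 0.25, 0.3]),
    ("bob",   [0.9, 0.1, 0.4]),
]
print(predict_person(gallery, [0.12, 0.22, 0.31]))
```

Taking the minimum over every training image, rather than over a per-person average, keeps all 2-3 encodings of each person in play; with about 2,000 people and 3 images each, that is only around 6,000 distance computations per query.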

If you want to run this in ML.NET, use each person as your label and your pre-computed face embedding as your feature column, then train a multi-class classifier. I'm unsure which model type will work best, though I suspect trees. KNN would be an ideal model type, but ML.NET does not have an implementation. I'd recommend letting AutoML try out the various models for you. Expect the run to be slow, since you will have 2K classes.
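Since ML.NET has no KNN trainer, a hand-rolled k-nearest-neighbors vote is another option. Below is a minimal sketch in Python (plain loops that port directly to C#), assuming a list of `(label, encoding)` pairs with one entry per training image; `knn_predict` and its default `k=3` are hypothetical, and breaking ties by the smaller summed distance is one possible choice among several.

```python
import math
from collections import defaultdict
from typing import List, Sequence, Tuple

def l2_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def knn_predict(gallery: List[Tuple[str, Sequence[float]]],
                query: Sequence[float], k: int = 3) -> str:
    """Majority vote among the k nearest training embeddings."""
    neighbors = sorted(gallery, key=lambda item: l2_distance(item[1], query))[:k]
    votes = defaultdict(int)
    total_dist = defaultdict(float)
    for label, encoding in neighbors:
        votes[label] += 1
        total_dist[label] += l2_distance(encoding, query)
    # Most votes wins; among equal vote counts, the smaller summed distance wins.
    return min(votes, key=lambda label: (-votes[label], total_dist[label]))

gallery = [
    ("alice", [0.10, 0.20]),
    ("alice", [0.12, 0.21]),
    ("bob",   [0.15, 0.18]),
    ("bob",   [0.90, 0.90]),
]
print(knn_predict(gallery, [0.11, 0.20], k=3))
```

With only 2-3 images per person, keep `k` small: once `k` exceeds a person's image count, the extra neighbors necessarily belong to other people.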

If you're not refitting your face model, it should be safe to pre-featurize your whole dataset, i.e. compute the embeddings once up front. If you are refitting the face model, make sure you refit it only on the training split of the dataset, so that no test images leak into it.
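A minimal sketch of such a split in Python, assuming the same `(label, encoding)` pairs: it holds out one encoding per person for testing and trains on the rest, so every person is still represented in training. `split_per_person` and its `seed` parameter are hypothetical.

```python
import random
from collections import defaultdict
from typing import List, Sequence, Tuple

Sample = Tuple[str, Sequence[float]]

def split_per_person(samples: List[Sample], seed: int = 0
                     ) -> Tuple[List[Sample], List[Sample]]:
    """Hold out one encoding per person for testing; keep the rest for training.

    People with a single encoding go entirely into the training split,
    since holding out their only image would leave nothing to match against.
    """
    by_person = defaultdict(list)
    for label, encoding in samples:
        by_person[label].append((label, encoding))
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    train, test = [], []
    for items in by_person.values():
        rng.shuffle(items)
        if len(items) > 1:
            test.append(items[0])
            train.extend(items[1:])
        else:
            train.extend(items)
    return train, test

samples = [("a", [1.0]), ("a", [2.0]), ("a", [3.0]),
           ("b", [4.0]), ("b", [5.0]), ("c", [6.0])]
train, test = split_per_person(samples)
print(len(train), "train /", len(test), "test")
```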

Missing components in ML.NET _(which would help with problems like this)_:

- KNN model (PR https://github.com/dotnet/machinelearning/pull/1889 needs to be finished; see issue https://github.com/dotnet/machinelearning/issues/1712)

- A transform to calculate the distance between two vectors (L1, L2, cosine similarity)
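Until such a transform exists, the three measures are short enough to compute by hand. A minimal Python sketch with plain loops that map one-to-one to C#; the function names are hypothetical. Note that cosine *similarity* grows as vectors get closer, so for nearest-neighbor matching you would pick the highest similarity (or use `1 - similarity` as a distance).

```python
import math
from typing import Sequence

def _check_lengths(a: Sequence[float], b: Sequence[float]) -> None:
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")

def l1_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Manhattan (L1) distance: sum of absolute differences."""
    _check_lengths(a, b)
    return sum(abs(x - y) for x, y in zip(a, b))

def l2_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean (L2) distance: square root of the summed squared differences."""
    _check_lengths(a, b)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between a and b: 1.0 = same direction, 0.0 = orthogonal."""
    _check_lengths(a, b)
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

a, b = [1.0, 0.0], [0.0, 1.0]
print(l1_distance(a, b), l2_distance(a, b), cosine_similarity(a, b))
```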

Note: Be sure to use your ML models ethically. There are plenty of ways to misuse a face model. From the nature of your question, I assume you're just experimenting out of intellectual curiosity.

Hi, thanks, the L2 distance works well. Just for my information, though:

When I try to build an ML.NET model (let's say OneVersusAll with LinearSvm) and my data is in a double array format, how can I preserve the double format? It keeps saying that it expected a VectorType of Single.

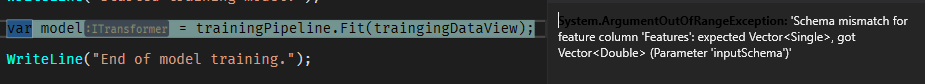

Then I get an exception at

How can I tell the model builder that it is a vector of double?

tl;dr: Single will work well.

@MatiKingloom: Generally in ML, a Single (32-bit float) works well to represent your data. The full 64 bits of a double slow down the ML process and take twice the RAM for your features, without improving how well the model generalizes. Doubles are still used internally in ML.NET where precision matters, for instance when logistic regression accumulates its loss.
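In practice that means converting the encodings to Single before handing them to ML.NET; in C# this is a one-liner such as `Array.ConvertAll(encoding, d => (float)d)`, with the feature column declared as `float[]` (typically annotated with `[VectorType(128)]`). The Python sketch below uses the standard `array` module's `'f'` type to emulate a 32-bit float and shows how little is lost on small-magnitude values like embedding components; the sample values and the `to_single` helper are made up for illustration.

```python
from array import array

def to_single(encoding):
    """Round each double to the nearest 32-bit float (C#'s Single)."""
    return array('f', encoding)

encoding = [0.123456789012345, -0.0987654321, 0.5, -0.333333333333]
single = to_single(encoding)
max_error = max(abs(d - s) for d, s in zip(encoding, single))
print(f"bytes per value: double=8, single={single.itemsize}")
print(f"max absolute rounding error: {max_error:.2e}")
```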

Taking the bit reduction further, bfloat16 is used in ML packages like TensorFlow and PyTorch for better speed and memory efficiency. A bfloat16 is a 32-bit float truncated to its upper 16 bits: it keeps the sign bit and the full 8-bit exponent but only 7 bits of mantissa. Though it is not currently used in ML.NET, I've experimented with reducing our Singles to bfloat16s without degrading our training process.
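A minimal Python sketch of the truncation described above, using `struct` to read a float's 32-bit pattern and then splitting the kept 16 bits into the sign, exponent, and mantissa fields; the helper names are hypothetical.

```python
import struct

def float32_bits(x: float) -> int:
    """Bit pattern of x rounded to a 32-bit float."""
    return struct.unpack('<I', struct.pack('<f', x))[0]

def to_bfloat16(x: float) -> int:
    """Truncate a float32 to bfloat16 by keeping its upper 16 bits."""
    return float32_bits(x) >> 16

def from_bfloat16(bits: int) -> float:
    """Widen bfloat16 bits back to a float by zero-filling the lower 16 bits."""
    return struct.unpack('<f', struct.pack('<I', bits << 16))[0]

b = to_bfloat16(3.14159)
sign, exponent, mantissa = b >> 15, (b >> 7) & 0xFF, b & 0x7F
print(hex(b), sign, exponent, mantissa, from_bfloat16(b))
```

This truncates, as described above; many implementations instead round to nearest even when converting, which halves the worst-case error.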

Side note: native bfloat16 support in ML.NET would require bfloat16 support in .NET itself. Until then, ML.NET could use bfloat16 as an optional lossy compression mechanism within our in-memory caches by manually packing and unpacking the values, which halves memory use and gains the related speedup from moving less data over the memory bus.
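A minimal Python sketch of that manual packing for a whole cached vector, assuming truncation as described above: each 32-bit float is stored as its upper 16 bits in an unsigned 16-bit array and widened back on read. `pack_bfloat16` and `unpack_bfloat16` are hypothetical names, not ML.NET APIs.

```python
import struct
from array import array
from typing import Sequence

def pack_bfloat16(values: Sequence[float]) -> array:
    """Lossy-compress floats to bfloat16 by keeping the upper 16 bits of each float32."""
    n = len(values)
    bits32 = struct.unpack(f'<{n}I', struct.pack(f'<{n}f', *values))
    return array('H', (b >> 16 for b in bits32))

def unpack_bfloat16(packed: array) -> array:
    """Restore float32 values by zero-filling the dropped lower 16 bits."""
    n = len(packed)
    raw = struct.pack(f'<{n}I', *(b << 16 for b in packed))
    return array('f', struct.unpack(f'<{n}f', raw))

cache = array('f', [0.5, -1.25, 3.14159, 0.001])
packed = pack_bfloat16(cache)
restored = unpack_bfloat16(packed)
print(cache.itemsize * len(cache), "bytes ->", packed.itemsize * len(packed), "bytes")
print(list(restored))
```

The `'H'` array takes half the bytes of the `'f'` array, which is the memory saving mentioned above; the price is that only about 2-3 significant decimal digits survive.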

It seems the question has been answered; feel free to reopen if necessary. Thanks!
