Leela-zero: 40x256 training note.

All 305 comments

@gcp @roy7 Can you match 40b in queue?

can you add information of training?

learning rate 0.0001

current round: v152-v155 + elf

released version: 328k.

still going

previous round: v148-v152 + elf

final version: 384k

I forgot the rounds before these two. But I remembered that bjiyxo posted it in some thread.

ps: 328k vs 224k = 76:61, not tested vs elf0, elf1

224k vs elf0 = 86:83

224k vs elf1 = 53:79

all matches with 3200 visits

So released version should probably be in between Elf0 and Elf1, which is quite much stronger than LZ160, so nice! Let's wait for the test results anyway, that'll clarify it's Elo

Thanks Bjiyxo, can't wait to see this...

I'm a bit worried by the LR however: isn't it a bit too small? If yes, we risk being stuck in a local minima and it will be hard to improve it by selfplay (without going down quite significantly, which is not allowed by gating). BTW, isn't it the problem we face with current 20b net?

@Friday9i if it is much better than current 20b, it will generate much better games. That and with new elf1 games, it will maybe be possible to see improvement and pass gating with a higher LR maybe.

i dont even know if this learning rate is bad at current LZ state.

@Friday9i I tried 0.0005 learning rate for the same training data, and found that the result is poor (worse than the previous weight).

Thx @bubblesld, interesting

I could be wrong, but I feel that the poor results from offiicial 20b is due to the high learning rate.

I don't remember, what was the learning rate at the end of 15b ? didn't @gcp lowered it at the end ?

Maybe LZ has enough step and is at a point where LR must be reduced to 0.0001, whatever the size of the net being trained.

@bubblesld Is the 40b net2net from a smaller lz network, or did you bootstrap it from a random net?

@NhanHo from bjiyxo's 20b. @bjiyxo did the net2net.

@NhanHo @bubblesld No, I didn't n2n this time. I trained it from Xavier Initialization.

@bjiyxo How many games were the network trained in total? It seems like my net needs to run through the last 2million games to match the current network strength

@NhanHo

How many games were the network trained in total?

40b was trained from the beginning of 10b.

It seems like my net needs to run through the last 2million games to match the current network strength.

Maybe you can post your training schedule, including your window, lr, bs and data.

What is this Xavier Initialization ? It was a clean 40b blank net ?

You can regard Xavier Initialization as clean 40b blank net.

okay thanks for all the informations !

Test matches queued.

Promising start against Elf1, let's cross fingers!

I would not expect 40b to be close to elf1.

From your comment above 328 vs 224 vs Elf1 that should be quite close...

@Friday9i It is not. From my experience, the best weight in each round is much better than that of the previous round. But the winning rate against elf0 increases very slowly (even worse sometimes).

If the 40 block network comes our really strong, why don't we go all out and use it? Mabye even lower the visit amount to 800 and see what happens, I think it would be very interesting to see.

If the 40 block network comes our really strong, why don't we go all out and use it

We could. It's "only" half the speed as 256x20. We would skip an optimal 256x20 network then, but we have ELFv1 too, so there's options in that range.

Looks like the Elf v1 is still much stronger. Sorry it may off-topic here. May I ask that doest it make sense to produce self-play game between two network? (elf v1 vs current best) I am currious that it can know how to win it self from elf v1.

Note the file size on 40 block is huge though. Wonder if we eventually need a torrent based solution for that.

@roy7 I don't think a torrent based solution would work since some people might not have access to that since it is blocked for them.

Since this net has also been trained with very low learning rates, it is likely that promoting it would encounter the same initial problem as we're having right now: Reinforcement learning with the usual learning rates would likely fail to produce networks which pass gating.

@MartinDevelopment "I don't think a torrent based solution would work since some people might not have access to that since it is blocked for them"

A big file can be split into parts and joined with a very simple utility.

Well, seems like the 40b net is way too good to not use.

@2ji3150 I guess that current match is with 1600v. elf has advantage in low visit due to its sharper values in policy. I believe that 40b can produce at least 1/3 w.r. against elf1 for higher visit.

@gcp 40b self play games are very useful to produce any lower block weight, and whatever you want. We do not care half speed for 40b training and current 20b is hopeless and just a aimless lost in elf trap

Most guys in our training group (500 persons] also wish to keep going on 40b and it’s very attractive and we will enjoy this great promotion.

Reinforcement learning with the usual learning rates would likely fail to produce networks which pass gating.

We can drop learning rate then. I don't see any other options to progress. The next run for the current 256x20 will be at bs=128 @ 0.0001. (By the way, if you mention learning rate, the number is pointless if you don't include the batch size!)

I didn't manage to train a net competitive with the best 192x15 with larger rates.

current 20b is hopeless

Well, by this kind of reasoning, we don't need to try the 256x40 because it'll be more of the same. (Luckily I don't agree with you)

Note the file size on 40 block is huge though. Wonder if we eventually need a torrent based solution for that.

Data traffic from my webserver was 3.5TB in July. If we go above 5TB, it will be throttled (but not dramatically). I suspect we'll be fine. Most of the traffic seems to come in huge spikes, so that won't be the clients who are downloading new networks day to day, but rather people who are downloading all networks or something. I hope there won't be people running the clients that suddenly get a scare when they see their data traffic stats.

I'd like to see if we can get any movement on the 256x20 before we go to 256x40 though. Let's give it one more cycle at the lower rate at least.

20b progressed.

Data traffic from my webserver was 3.5TB in July. If we go above 5TB, it will be throttled (but not dramatically).

If you do end up needing some bandwidth, I'd be happy to provide a server with 60TB/month.

i think experimenting with 20 blocks is fine and above all interesting

after all, elf v1 is 20 blocks but still much stronger than this lz 40 blocks

this just shows how much potential the 20 block has

i think its really interesting to keep waiting for 100-150k games on the 20 block lz to see how it influences new networks strength and direction of play

20b is not stalled or hopeless, its just its in early stage, i think

there seems to be a trend on the 20b, progressively getting stronger and learning things (fixing its own holes ?)

i'm curious to see how things will develop for the 20b network, remembering that elf v1 is 20 blocks but so much stronger

i think theres no need to upgrade immediately to 40b

40 blocks may be be stronger at the start, but like elf v0 and v1 showed, 20 blocks has the potential to be stronger than current 40 blocks lz

so as long as 20b is not stalling, it may be more efficient to use it as a training point (starting point is lower, but we can expect it to learn faster than 40b if we look at elo gained per week, with equal computing power between 20b and 40b)

also, this will require less ressource to train and less ressource to play matches (which can be useful to play against it on weak computers, smartphones, etc...)

and finally, like i said, i'm curious to see how this 20b experiment will develop, going directly to 40b makes us miss a chance to learn knowledge and experience about bots

if we made some mistake, its a good opportunity to learn from it, no need to rush things

you should also consider that by the time lz 40 blocks catches up to elf v1, elf team can release a 40b elf v2 which will be insanely stronger than the 40b you want to achieve, so again rushing for strength is pointless imo

I think we have to leave this decision to @gcp, there are certainly arguments both for and against going to 40 blocks soon. If a majority of self-play contributors favor upgrading sooner rather than later, we might as well upgrade early this time, but there's no harm in at least waiting out another few training cycles while trying different learning rates.

I am no expert on this. I am very interested in seeing how high leelaz can reach with a 40-block network. Also, it is unlikely that we will see leelaz surpass ELFv1 in its strength anytime soon. So the fans' enthusiasm might be dampened at a certain point if we keep training a 20-block network that is far below the strength of ELF.

The only worry I have is that it appears we do not know exactly why we are having problems with the current 20-block networks. It could be the learning rate, the ratio of the ELF games being used, the use of 1601 visits, or something else. I would think that we want to at least have a better idea of the problem before moving onto 40 blocks since it will take double the amount of time to experiment to find the problem if we are stuck again at the 40-block level.

Perhaps, all what we are seeing is the nature of the network when it reaches a certain level of strength. I suspect that if we are stuck in 40 blocks again in the future, we will have to try the Alpha Zero approach of no gating to move a network out of a local minima.

Since we're considering going to 40 blocks soon, one possible change that would very likely help in either case would be to up the ELF fraction in game production, from 25% to half or even higher. Regular self-play games at 1600 visits are currently somewhat weaker than 15 block games at 3200 visits, but ELF v1 games are definitely more useful than either. Having more of them sooner may help the 20 block nets to pass gating, and would also be more useful in case we switch to 40 blocks.

It's hard to believe the current lack of improvement can largely be attributed to the training process hitting a plateau at the current network size, considering the smaller ELF networks that are significantly stronger than not only the current best 20x256, but also the bootstrapped 40x256 one. The training process is meant to maximize improvement per time unit (which was the motivation behind decreasing the number of visits). In the absence of a legitimate plateau, it simply makes no sense to have a larger and thus slower network generate training games. That has always been the purpose of the current "official" network and the very fact a larger, stronger network could be created from the training games by a separate endeavor in the first place means that no one should somehow feel deprived of anything.

I think the network size of the training process should not be increased until there are no more 192x15 games in the training window, and maybe even that then there should be a full pass through all the typical learning rates (to have a chance of getting out of local maxima), just to make sure this isn't a transitional issue and 20x256 is indeed irredeemable beyond a reasonable doubt. Don't be impatient and worry about a few weeks.

@TFiFiE What you don't consider is that if a net is WAY better (even if it's a bigger net) than a smaller net, it's better to generate the selfplay game with it, because the net you will train on these way better self play games will be a lot better (even if you want to train a smaller net with these games).

If the 40b net only had something like 60 or 65% WR it would be debattable what's better for the fastest training possible : the 40b net and it's 60% wr ? or two times faster 20b net with slightly worse game quality ? But with an insane 40b net with 85% WR, there is not even a contest.

Doesn't mean it's not interesting to continue with the 20b net to see what can happen and what's wrong with it's training for now and test things and parameters, but for efficiency it's 100% the 40b net.

@herazul

Recall that AlphaZero was trained from scratch. If human games were used, the training would

hit a lower "ceiling". In other words, an AlphaZero trained by human games would have a faster start,

but the final result might be a weaker network, as it might miss some "blind spots".

@MacErlang The concern was that the weight is contaminated by the weakness of the human games. Then what is your point comparing to skip 20b-net? In fact, the 40b weight is already contaminated by the weakness of the lower block games since it is trained from those self-games.

What's the evidence that human games resulted in a lower ceiling?

@bubblesld You have a good point. I am no expert on this. But, perhaps one implication is that

the 40B network (or any new blocks) should be trained from scratch, despite the poor performance

at the outset. Does this make any sense at all? Since potential moves are generated randomly, a

clean slate might reduce possible sampling bias created by weaker games from a lower block count.

It is necessary to conduct experiments and data analysis to find technical details not mentioned in the paper.

No, I didn't n2n this time. I trained it from Xavier Initialization.

So the problem may be n2n? I dont know much about training, but if the 40b network was trained in absolute different way, it may worth a try to train a new 20b with the same training methods and parameters to find out why the 20b network is so poor now. It may not be just the problem of network size.

@alphaladder

I think 40 blocks will get the same chance.

But is there any guarantee that the current trap will not occur in 40 blocks? We need more time to test until we find a way to solve this problem.

Efforts to solve this problem at 40 blocks are much more expensive.

ELF shows that the limit of 20 blocks is much higher than we are.

If the problem is solved it is a good thing. If not, go to 40 blocks.

Wait for the results with more patience.

It is not a great help to continue to speak the same opinion of yours.

gcp will choose in the right direction.

@jkiliani I think I read in some of the issues that someone (I think our Chinese contributors) tried to produce networks with varying amounts of ELF generated games and found that even 25% may be too much. That few games spice the training, but perhaps too much makes it stuck in between both optimal ELF as well as LZ plays (and being weaker).

I personally would have probably liked to adopting ELF games altogether (we had a slow progress in the last month anyway, we might have had it the same by not adopting ELF generated games somewhat earlier). I kind of fear that we will be always chasing ELF. As soon as we catch them they release a new, bigger and better network (perhaps not to spite us, just out of coincidence), the end result will be the same: 1,5 publicly available network (ELF and ELF derived LZ) instead of 2 clearly independent.

But in the news we have to trust GCP, I don't even remember if he ever made a clearly wrong choice regarding the project, so let's wait and see, I trust him :-). In 2-3 days we will know if lowering LR helps 20x256. Even if I might prefer going to 40x256 for the bigger final potential it is not that important. Though I do wonder where we got all the new hundreds contributors?

@sheeryjay

i was wondering about something similar

wouldnt it be better to stop using elf selfplay until 20b catches up to current best 20b network ?

because elf will make our current 20b stronger in a faster way and using stronger plays, but the weaknesses ("holes") in the network architechture will still remain, and these weaknesses may possibly prevent the stronger elf moves from being used in the best conditions

perhaps it is better to let the 20b figure out the "weaknesses" by itself and fix them, and when the new networks generated get back to the same strength as our current best 20b, then introducing elf selfplay may be more effective ?

the way i see it, getting new generated 20b networks's strength back to best 20b may be a prerequired step to scale the 20b development

it is also possible to do both elf + 20b selfplay at the same time, but isnt stopping elf until 20b catches up an interesting idea ?

The only worry I have is that it appears we do not know exactly why we are having problems with the current 20-block networks. It could be the learning rate, the ratio of the ELF games being used, the use of 1601 visits, or something else. I would think that we want to at least have a better idea of the problem before moving onto 40 blocks since it will take double the amount of time to experiment to find the problem if we are stuck again at the 40-block level.

Yes, this is exactly my reasoning as well.

If we can improve the 20 blocks, we can still see what to do. If it improves at 55.01%, well, then switching to the 40 blocks could speed us up. But if the 20 blocks starts promoting every cycle at 66%, then it's a different matter entirely.

@MacErlang

Recall that AlphaZero was trained from scratch. If human games were used, the training would hit a lower "ceiling".

I think we don't know that in fact. Alphago and AlphagoZero have very different training process and architecture, we don't really know what would have happened with an AlphaGo Zero initialised from human pro data if i'm not mistaken.

We don't even know if all Zero bots who are improving are converging toward the same style of play or not.

@GCP will you try to lower next cycle %Elf games in training ? @bjiyxo had succes with 30-40% of elf games if i recall correctly, might be worth a try too.

I suspect the current issue is more likely learning rate, so let's see what that gives first.

I think we don't know that in fact.

We don't. It's unfortunate that the graphs in the DeepMind papers are rather unclear, but I think the suspicion was that Alpha Go Master was actually stronger at 20 blocks than Alpha Go Zero, so they did Alpha Go Zero at 40 blocks to beat them both. But of course, what would have happened with Alpha Go Master at 40 blocks?

Why not for now wait until 20b self-play games are more than half of the window and, then, if we still don't have a promotion, lower the LR gradually until we get one.

The very low scoring (20%-30%) suggests that training with more and new data, even if few, is making the network considerably weaker, which I think is a different situation than nets that are about the same strength (40%-54%).

Two things have changed at the same time, the network and the visits. Wouldn't it be wise to just going back to 3200v? We don't care about game generation slowdown on the short term, what matters is to quickly put the new net on track, no? You may have to play with learning rate for a while.

Once promotions have resumed, visits reduction effect can be considered, against the new baseline.

Yes, this is exactly my reasoning as well.

If we can improve the 20 blocks, we can still see what to do. If it improves at 55.01%, well, then switching to the 40 blocks could speed us up. But if the 20 blocks starts promoting every cycle at 66%, then it's a different matter entirely.

——-/////——

very reasonable.

@gcp we set up the random play in the whole training games, thus, is it a problem in the current step?

Too many short games in our current training games.

Previously only in the first 30 steps.

The whole game t=1 training worked really well for the 15x192 networks. Why would it suddenly cause problems?

that is what we are trying to slove...

See? 20b is progressing.

yes,if problem could be solved in these 20b tests, then we have more reason to start 40b.

Test any bugs in 20b and start to run 40b weight mixed with some portion of elf games. 20b is just for trouble shooting.

Running 20b with elf is just a copy of elf v2 ,not our LZ. 20b is the field of Dr. Tian’s. 40b belong to lz

I can't believe people now appear to be rooting against whatever progress might be happening under 20x256 (if I'm correctly interpreting the thumbs-downs 1-punchMan's comments are getting). :confused:

@alphaladder, you can't have it both ways here à la Morton's fork. The fact is, that if the promotions start coming in again, then we're (ideally) only about 7.5 minimal promotions away from a 20 block that is as least as strong as 1fdfb1c5 under equal visits, let alone under equal time, meaning that a hypothetical parallel training process of 40x256 would likely be overtaken in the medium term.

"_No plateau, no size increase_" seems like a perfectly fine motto to adhere to and being that 20x256 was one of the sizes DeepMind settled on (for who knows what reasons), it deserves some proper treatment by the project that seeks to replicate their work.

20b 7a8a0c1a is stronger than 511034f4 79 : 75 (51.30%)now

@TFiFiE see gcp review above. I can not get your point since we have other better fast promotion methds for Lz in 40b level. Just to follow elf v1 in this 20b using elf games.

I bet people prefer to train a 40b network if 40 has any opportunity in the queue of training.we should fully make use of elf in 40b , rather simple copy !

40b can run on a mobile phone/low-end computer, but it is too slow to use, while 20b can run nicely on those platforms @alphaladder

@I1T1 You can use 40b games for any weight in any size of block. Do not worry.

Otherwise we should stop in 15b and go home, which is enough for most guys. Just kidding.

In our training group with 500 persons, they have great enthusiasm in 40b training.

can you training out a 40b weight which is stronger than 20b elf? @alphaladder

See? 20b is progressing.

The question is: Is it because of the new learning rate or is it because we have more self-play games now?

I vote for learning rate.

Did @gcp say that he lowered the LR?

I vote for learning rate,too.

can you training out a 40b weight which is stronger than 20b elf?

————

@I1T1. You do not get my point. We have already a very good 40b candidate in the queue. If we could start this 40b scenario, it should easily exceed elf. In most cases, 40b should have much possibility than 20b to beat elf. It is very hard to exceed elf series since Tian is still working on his 20b.

Let us wait for gcp’s decision after recent test in 20x256.

@alphaladder

i think someone has to say it directly to you :

just because you have the right to speak doesnt mean you can say whatever nonsense you feel like depending on your mood, its better not to speak if you dont know what you're saying

if you want to ask questions, to learn information, or if you have an opinion, express it in a humble way, being open to be wrong and corrected

all this is adding a lot of work to the contributors, making the work more exhausting and less efficient

of course, i'm saying this to you but i'm the first one trying to apply it to myself

hope this helps...

@wonderingabout

I do not understand what you are focusing on . It looks like that you are interested in initiating a useless “nonsense” or aggressive argue in person

Since it is not associated with this issue any more, please stop @me .Thank you.

I'm not sure how relevant for this particular problem at hand, but I have always found the bigger and more complex a net is, the lower the usable learning rate is. Even with non-constant / decaying lr schedules, I saw complex tasks where the net converged but only to a trivial "I don't know" middle result, and only started to do actual work with lower initial lrs.

Net size have more than doubled between and 192x15 and 256x20 (not to mention the increased understanding and complexity one hopes for), so it wouldn't be surprising if rather different lr would be necessary.

EDIT: Likely unrelated to the training problem, but just now I noticed that not just selfplay but even matches are reduced to 1600 visits now. This is very surprising and will certainly affect match results! Since we know that the difference in result scales up with more visits, achieving 55% is significantly harder now than before. Was this change accounted for somehow?

Just adding my 5 cent to the debate. I think chasing after ELF and comparing LZ continuously to that is counter-productive: Facebook has and will always have superior resources, and so long as they pursue the bot, it is quite guaranteed we will be following their tail if we obsess about comparisons, and probably lose our unique way doing that.

I feel that just switching to 40b to "easily have stronger than ELF" network before seeing if we can make 20b work is not the long-term solution. There is even no proof that "easily" is true at all, it might as well be "harder and slower than through 20b" (maybe we encounter same issues as with 20b, or it takes 3 months and we could've gotten there with 20b in 2.5, who knows?). I'm having a gut feeling myself that the "40b is 2x better than 20b" is too intuitive to people and "because AZ had 40b" people think that somehow just having the Bigger Network will boost the results.

Silly analogy: If I had a 500 hp motorbike that goes 100 mph and could not get it to go 200 mph (when that Mark guy next door has a 400 hp bike that goes 250 mph), I would not buy a 1000 hp bike that already goes 150 mph. I would first try to get my 500 hp one to go 300 mph, and THEN buy the bigger one, or build it myself using the learnings.

It's true that the 40b network is currently stronger, and maybe it might be best to train a 20b net but use new ELF and 40b to generate the games. But I think the strength of Leela Zero project is the long-term view, and while it may feel frustrating to wait for several days, even a week or two, and not immediately adopt the currently biggest and meanest, I'd vote for patience and maybe even doing things a bit sub-optimally, if we can get experience that might save us bigger (40b, 50b, 100b, whatever) headaches in the future.

I like analogies. :) If you have brains of a fly, a mouse, a dog, a human, a superhuman. There is no much sense to produce the best go playing dog. But you should learn how to teach best the game of go by testing with the smaller sized brains.

My conclusion: Continue with 20b up to the moment, we know, what may go wrong and how to insure that the network improves.

Probably this is the same idea as @jokkebk just voted for.

@jokkebk

"it might be best to train a 20b net but use new ELF and 40b to generate the games. "

Generating self-games is the part consuming most of resources. Training a weight from self-game is much easier. The debate will be generating 20b or 40b self-games, not training 20b or 40b weight.

@jokkebk That's not a good comparison. For one thing, clearly the issue with the bikes is the transmission/gear ratios, and that 500hp bike is getting to 100mph lightning fast and will smoke the neighbor's bike off the line every day of the week.

The big advantage that having more blocks will confer is the bot will be able to better see larger parts of the board, and therefore better avoid the big problems that smaller networks face, such as large, nontrivial ladders or the life and death and semeais of very large groups. Having the extra information will allow bots to either win these large fights, or find nuanced, expert ways to avoid them. And there's also the other potential benefits that come from better pattern recognition and increased capacity, which humans can only speculate about.

It's not that a 40b is twice as good as a 20b; it's that a 40b will learn strategies that a 20b will miss completely, and that fundamental difference can never be overcome, no matter how much you train a 20b or how much search you use, because the 20b is sub-optimally pruning and narrowing the search space based on a relatively smaller sample of information about the state of the game.

Incrementally increasing the size of the network makes for an interesting experiment to see how bootstrapping larger networks from smaller ones (and the training data set produced by the smaller ones) impacts the efficacy of the training process and the quality of the final network, if at all, but there's no proof yet that we're actually gaining a computational time advantage from doing it, as we don't have a control group that we can make comparisons to and use to draw meaningful conclusions about our methods.

We're basically just wandering about in the dark while tinkering with some things here and there and making inferences to refine our training methods, using statistical reasoning and otherwise, that are likely subject to a multitude of systemic biases and in a manner that's very difficult to reproduce. That's not to say that the LZ project doesn't have a lot of value, however, as we're the only group providing a fully open source zero-style dcnn go bot with a large training data set and a tool that is completely reshaping the world of go and leading to better understanding and appreciation for the game.

The point is that where we're at now, there's not much significance in the choice between continuing with a 20b or a 40b, except that there's no actual evidence that going through a 20b first will actually be faster in the long run, and a 40b should, in the long run, produce better results. Even if we know that increasing the number of blocks affects the rate of game generation in polynomial time, and therefore a 20b should generate games twice as fast as a 40b with equal visits, if it becomes necessary to decrease the learning rate and step size of larger networks to get them to continue to converge, or potentially be forced to lower or remove gating to try and escape a local extrema, it could potentially be a wash in the long run. What's the chance of reaching an adverse outcome? We simply don't know.

Again, there's no actual evidence to support either path, so really it just comes down to preference.

@crazyeight01

Thank you for your great review! Very reliable and objective.

We're basically just wandering about in the dark while tinkering with some things here and there and making inferences to refine our training methods, using statistical reasoning and otherwise, that are likely subject to a multitude of systemic biases and in a manner that's very difficult to reproduce.

So, then, business as usual when it comes to programming neural networks. :P

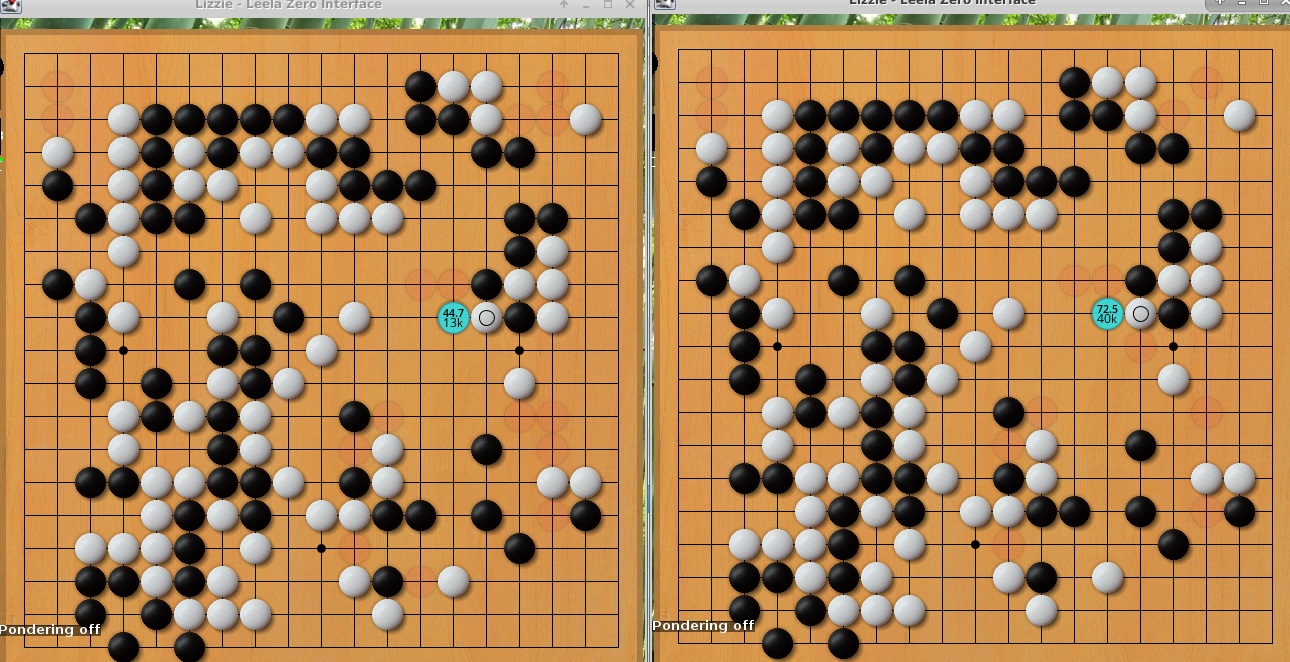

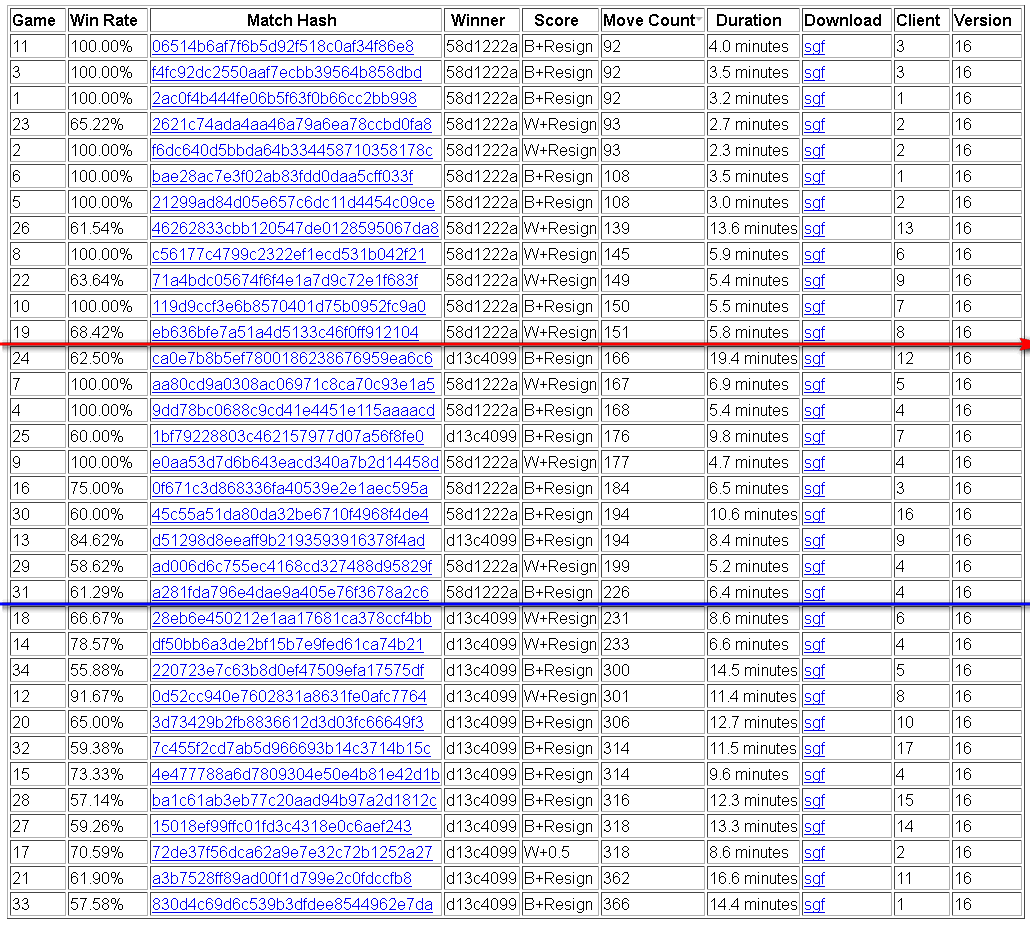

Before I present my proposal, I would like to show the results of 400 matches between 40b (new round 112k) with 1600 visits and 20b (v160) with 3200 visits.

40b (1600v) vs 20b (3200v) = 231 : 169

The running time for 40b with 1600v is a little longer than 20b with 3200v, but very close. That means, we can generate self-games with better quality from 40b than from 20b for the same period of time.

Now, I would like to propose the next version of LZ to generate 20b self-games as default, but people with high-end machines can add some parameter to generate 40b self-games instead. This will be a win-win. The total amount of self-games will stay about the same.

For the 20b training, there are some better quality games. And remember that the weight of 40b in some sense is much closer to that of 20b than elf to 20b, which may produce greater help. The bottom is: there is no harm to the 20b training.

For the 40b training, the part of better quality games stays the same. And the most important thing is that the self-play games generated by itself can correct some mistakes learned in the previous round of training. From the experience of 20b training by bjiyxo, the weights of 20b once had a serious ladder problem that the weights of 15b did not have. The weight of 20b does not know that it has such a problem because no its self-play game for training.

In summary:

- Let the clients select the type of self-games they want to generate.

- The total amount of training games will be about the same by this approach.

- It is good for both 20b and 40b trainings.

What do you think?

@gcp problem is solved in 20b,thus, could you please let us know any possibility to run 40b ? Thanks.

@bubblesld Great plan!

We could simply run 50% LZ-20B (1600visits), 25% LZ-40B(800 visits), and 25% elf-v1 (1600visits).

Then we might be able to improve both the 20B and 40B networks faster.

I am a strong bot supporter. But if you match 40b & 20b in normal match setting ( 30min, 1min byo-yomi), 40b really can't compete with 20b weight because 20b get as twice the playouts as 40b. 40B is only good when you have 500K or 1M+ playouts/min ( at least you should have 2 or more 1080Ti). It only benefits to very few people who has extraordinary ambition on FoxGo server. And I like to point out here, 500 persons in QQ group is just nothing, most people there only take and not give, and manipulated by few persons. @alphaladder is keeping blablabla and try to influence the direction of LZ project. why should the Leela Zero project to satisfy your need or ambition?

@jamesho8743

Did you test what you wrote? 40b is not as good as 20b in normal match setting?

My tested results show 231:169 when 20b gets twice visits. Furthermore, from my experience, it will favor 40b more if both visits are doubled.

I only have 1070 card, in my test, 40B lost more often than 20B because the playouts is poor and in any case, 20B always has the "double" playouts than 40B. 231:169 please describe your time settings and setup. As far as I know even Octopus can win ELF with more playouts. To most people, I think one 1080Ti is top gear setup, and 30min/1min is very standard and universal.

And as your 231:169, it's only 57.75% winrate. What is the advantage of 40B? Let alone the majority of people who has mediocre card.

@jamesho8743

@bubblesld said its 40b (1600v) vs 20b (3200v).

So it's 57.5% win rate in 400 games at time-parity independent of hardware.

The 40b is not my personal ambition. It is our final goal for lz program.And most guys will support 40b.

I do not think you really learn our training group in QQ. In fact, we produce at least thousands of self play games per day but in your eye it is nothing. Are you kidding? How many self play games do you make daily? 30? @jamesho8843

I just consult gcp on the possibility based on his previous response, which is not judged by your preference of 40bphobia.

At last, kindly reminder is that your blablabla supported by your noble graphic card could not make the direction of lz. Thanks.

@jamesho8743 What is the sample size of your tests ?

in my test, 40B lost more often than 20B

The strength of the current 40B network is not so important. It will only be the starting point for more training on 40 blocks. The theory is that after a couple of weeks / months, a 20B network cannot become as strong as a 40B network.

@betterworld

Yes. The debate is just to find the efficient way to our final goal of az 40b.

We could learn a lot from the fall and rise of 20b training, which is also benefit for potential 40b block.

@bubblesld I like your idea. But I think there is one potential risk. According to your training scheme, for either 20b or 40 b network, the training data is partly self-played and partly supervised (by the other network and elf). Both networks might be like students taught by three different teachers that often contradict one another. By trying to reach a compromise among conflicting teachings, the students might end up worse, not better.

No, I didn't n2n this time. I trained it from Xavier Initialization.

@bjiyxo Could Xavier Initialization for 40b and net2net for 20b have made a difference in their performance? 40b seems to be doing a lot better than expected with similar training data.

@estonte

I have no idea. It's hard to compare after millions of steps.

good~

@bjiyxo did you also try Xavier Initialization for the 20b networks, as it apparently worked very well for the 40b net?

@Friday9i No. It will need ~2 weeks to train a 20b on 2x1080Ti if you use a similar schedule from my 40b.

Didn't know it was so long..., I understand you didn't! Thx for the answer

Sorry, I match 40b vs 20b by accident and if 40b wins it will promote. So if @gcp or @roy7 is not here, I will match 20b vs 5b to get a quick fix.

mistake happen, i'm sure roy can correct it =)

Well played on the training anyway, seems like the new 40b is very strong ! Did you start using elf v1 games in the training ?

Fixed by roy7

40b-157-360k (e2be4815) trains to 157, the last 15b. It didn't train neither elf-v1 (d13c4099) nor 20b yet.

Okay thank you.

I'm quite hyped to see the results when the training includes some 20b games and elf v1 games !

Because the 40b-157-360k was trained to the last 15b, so before we get into 20b, it shall be a good reference there. After this 40b, I will test 40b when 20b is ~5 promotion.

@bjiyxo

The new 40b look so good!

How about 30b? Do you have the intrest about 30b? Maybe it is a good trade-off for the strengh and speed.

My original idea was that 20b was used for training, and 40b was used for competition, and now I don't have enough machine to train another network. I'm training another 20x256 from scratch, but I am not sure if it works.

I see, Thanks!

Looks like elf v1 is still stronger than 40b. And it is much much stronger than our 20x256 network.

That't very strange that our 20x256 network still progresses slow.

Does it worth to train a 20x256 network based on elf v1 and see whether its strengh is near elf v1 or not ? If it isn't that should be some problem on our training . And if it is much stronger than we can know there is still some potential. And it can be the new teacher or we can just mix the network with our 20x256.

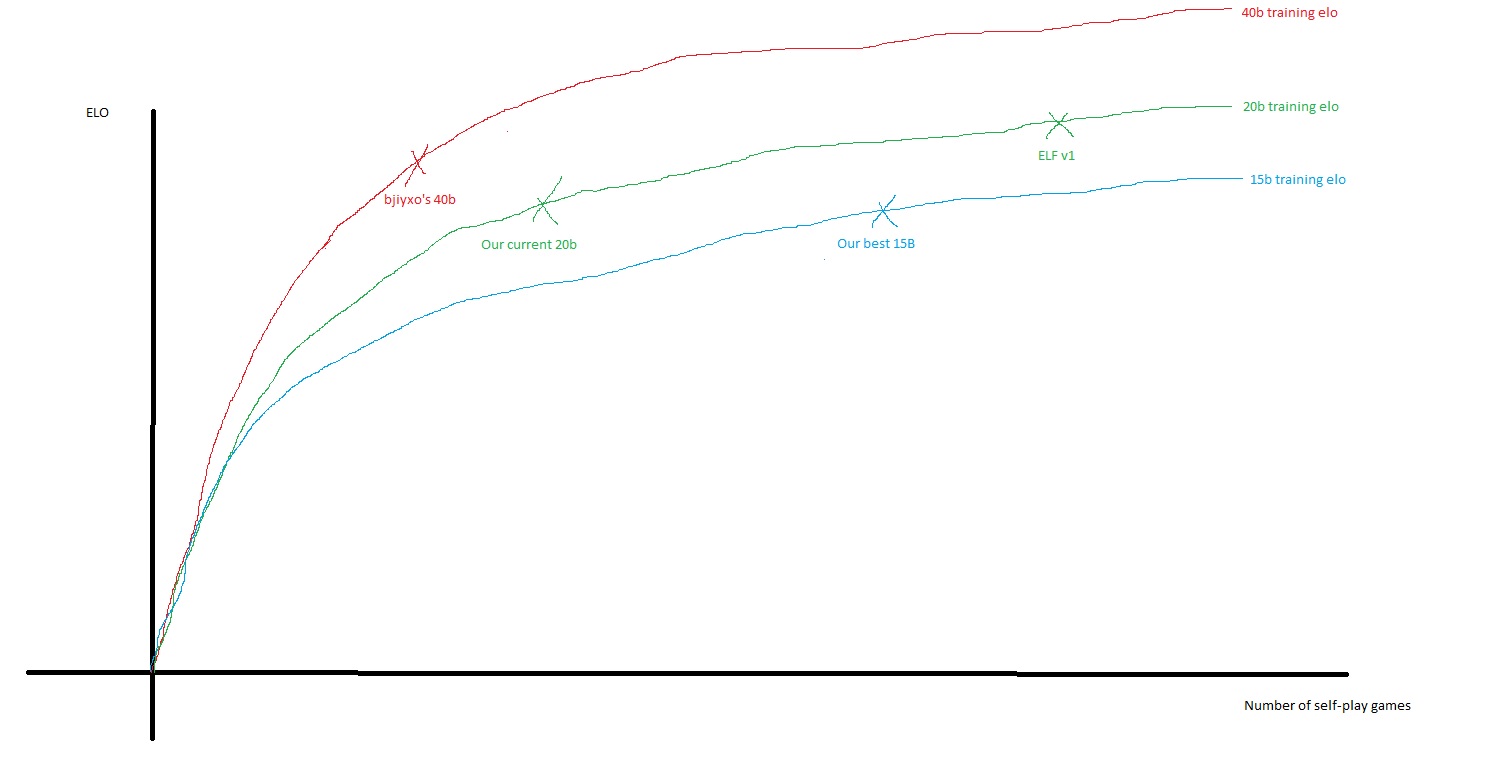

The slow progress of 20x256 is not strange, because its potential is relatively limited, probably less than 1000 Elo (selfplay)... A net improves fast in the beginning, then slowly when it's close to the asymptot: we are close to the asymptot, so it is slow. And we are close because we trained very well the 15b net and because the difference of potential between 15b and 20b is not huge (we know it from Elf v0 and v1)

That's why I think we should switch to 40b, because it has for sure much more potential, so we should enter a new cycle of frequent promotions and improvements, which is nice and motivating for everyone.

Our 20b is nowhere near as strong as ELF though, so there should be plenty of room for improvement.

Only 400 Elo difference, which in selfplay is less than 1000 selfplay Elo: this is "relatively close to the asymptot", and it will take quite a lot of time to go there (several months probably).

I find it much more exciting and motivating to switch to 40b, where the potential is probably 2000 or 3000 selfplay Elo points: we would get frequent improvements and fast LZ would be an ultra super human bot :-)

on intuition, i see things kinda like it, but i don't know if th'at remotly true :

But yeah if bjiyxo's 40b is already at elf v1 elo, a very well trained network, i think the 20b will take a very very long time to cath up, furthermore if it's true that elf v1 is realmly close to 20b skill ceiling. (and it may be, because opengo said it took them a LOT of additional steps to get 65% wr against their v0 net)

The test match had a winning rate around 52% at first 220 matches. It was close to my personal test. I have 40b win 96 out of 200. I do not know what happened to the last 180 games.

At the moment I thought that the w.r. will be close to 48%, I would like to suggest to keep training 20b but replacing elf self-games with 40b self-games. I believe that the 40b weight is closer to the 20b one so that 20b is easier to learn from 40b than elf. Now that elf1 is still better than 40b from the official test. Maybe we should wait a little longer.

Small update of our pretrained model for ELF OpenGo. This is v0 after finetuning with approximately 250000 additional minibatches (learning rate 1e-4).

Elf v1 is said use 250000 mini batches for fineturing and it is 67% stronger than elf v0 by our test. Does it means 250000 games? If it does, how elf can grow so fast by just 250000 games.

Elf v1 is said use 250000 mini batches

Are we talking about mini-batch size or number of mini-batches per epoch? The first one (increasing the batch size) is a known solution to keep learning rate high and speed up fine-tuning. Although 250k samples would be a really high mini-batch size.....

It looks like the 40b is very close to the best ELF now.

I said before I don't think we need to max out 20b as there's already ELF in that size that people can use (or just any other size!). So it makes a lot of sense to jump to 40b. The gain in Elo is much higher than the x2 speed loss.

I am catching up on uploading data now. I assume it will give an updated 40b another boost. By the time that network is up I think we can queue it for promotion?

Elf v1 is said use 250000 mini batches for fineturing and it is 67% stronger than elf v0 by our test. Does it means 250000 games? If it does, how elf can grow so fast by just 250000 games.

No it's not only 250k selfplay games (or i didn't understand something). I just asked them on their github, maybe we will have an answer.

@gcp in the next @bjiyxo 40b net, there will be 20b games and some elf v1 games, it could be interesting to match it versus previous 40b iteration to see if it didn't cause problem to the training before promoting.

@gcp also i have several questions when you promote 40b :

- what LR do you plan to use ? same as 20b or will you try a little higher LR at first ?

- will you keep 384k and 512k net ?

- still 1601 visits ?

Probably go one step back with the learning rate, i.e. 0.0001 @ bs=128. But I doubt it will work.

I don't think I'll keep >256k, a net did promote but several of the other steps were already >54% so I think that was just a bit random. Most importantly, I don't see a sign the score gradually increases with more steps.

We'll stay on 1600 visits for now. It's probably a little low now but a) we know this is efficient as long as we can pass the promotion bar b) I suspect this is the kind of thing that matters more if you are trying to max out a size and 40b shouldn't be there for a long time?

Yeah ok makes sense.

For the LR if it don't work no big deal, at worst you will have some cool github issue ! :p ( " THIS IS INTOLERABLE §§§!!! NO PROMOTION FOR A WEEK !!!§§ WE DEMAND THE KING'S HEAD !!§§§§§§§ " )

Thx for these precisions.

However I don't understand your last point "40b shouldn't be there for a long time", could you clarify please?

@Friday9i i think he just mean that 40b is far from it's skill ceiling, we should have a lot of progression before we start approaching it. So no hurry to optimize training strength, we can go for training speed in the mean time.

Indeed @herazul, that's the point, thx

(I was wondering if he spoke about a step further, eg 60b, but it didn't really make sense :-)

From my experience of 40b training, learning rate of 0.005 did not work well (i.e. become weaker). But things may be different with 40b self-games.

Now that the server issue has been resolved. Should a 40B network be queued for promotion match?

@diadorak I imagine they'll probably do it after bjiyxo's 40b catches up to the full training data set and produces good results. The 162 version showed only marginal improvement over the 157 version, so it probably needs additional steps or some other adjustments first.

@bjiyxo @bubblesld Just curious. Are the 40b-162 networks trained with ELF v1 games as well?

@diadorak

Yes, all 40b-162 was trained with ELF v1. 40b-162 was trained with 15b 137k games, 20b ~250k games, elf v0 ~210k games and elf v1 ~80k games.

it looks new games' contribution are small, and new 20b still much weaker than elf, then increase elf v1 games proportion ,since elf team says they played 2.5M games

This might be a good time to give 40b a try if the server is ready to handle 40b networks with the help from the colab trainers' effort of diverting network traffic. While 20b is making steady progress, it is concerning that the win rate of 9c56ae62, the current best 20b network, is actually worse than d351f06e, the best 15b network, when matched against ELF open go v0.

If 40b is to be used, 66ba9e7a appears to be a good network that can provide an immediate elo boost. e2be4815 can also be a good candidate for consideration.

@iteachcs: " it is concerning that the win rate of 9c56ae62, the current best 20b network, is actually worse than d351f06e, the best 15b network"

If so, perhaps 224x20 is the way to go? (d13c4 is

224x20)

@Marcin1960

224x20 is certainly a good choice if network strength is the only consideration. However, we already have Dr. Tian's team working on ELF Open Go networks. It is very likely that Dr. Tian will release new networks in the future when progress is made for Open Go. Therefore, there is not much point in competing with Dr. Tian by further advancing Open Go networks in the Leela Zero project. On other hand, the 40b network has Leela's own identity while providing a sizable ELO boost at the same time.

@iteachcs "224x20 is certainly a good choice if network strength is the only consideration."

Hmm, isn't the network strength the main consideration?

"the 40b network has Leela's own identity"

What I meant, that it is possible to have 224x20 net with Leela's own identity.

a new 40b weight need about 10 days to trainning by bjiyxo, 20b promoted 5 weights(163-166) in those days. it's hard to choose.

@Marcin1960

I understand and respect your point. My main worry of using 224x20 is about competing with Dr. Tian, which does not seem to be very productive considering Dr. Tian is still working on the same network. Leelaz's identity is also important to me personally--although I provided no contribution to the code base, I have been running training games since last November.

@iteachcs " My main worry of using 224x20 is about competing with Dr. Tian"

Than perhaps to try 224x21 would be OK? Unless you think it would be too close? I wonder why Dr. Tian picked 224x20? Could it be some sweet spot based on empirical data?

@Marcin1960

It is very possible that the choice of block and feature numbers are based on empirical evaluation. Your suggestion of 224x21 networks could be stronger than 224x20 ones but there is no empirical data to support it. In addition, we do not have a good existing 224x21 network to start with and the performance improvement of 224x21 might be too small to make a difference.

My guess is that people's suggestions of 256x20 or 256x40 networks were mostly based on Google's agz studies. It is reasonable to believe that Google must have empirically evaluted and decided that the 256x40 network size is the most useful in producing strong networks since it is the final product that they presented in the agz paper.

when matched against ELF v0, d351f06e used 3200v and 9c56ae62 used 1600v, so it's not fair to say the 20b network is actually worse. In fact, the winrate is improved compared to the previous match between 20b and ELF (79.50% to 73.93%). Of course, big gaps are always frustrating, especially as the slope of the 20b progress curve doesn't look good, compare to the 15b one.

@kfc51151271

Thank you for pointing out the difference in visit numbers. The only remaining argument I could offer would be that the visit number of ELF v0 was also reduced to v1600 so it might still be possible that d351f06e was stronger than 9c56ae62.

Either way, 20b is clearly making progress so the only question is whether we want to embrace 40b now, for the ELO increase, or stay with 20b and run its course. I think I have said enough and should leave it to the more knowledgeable to decide.

@iteachcs "Your suggestion of 224x21 networks could be stronger than 224x20 ones but there is no empirical data to support it. "

You missed my point. You expressed concern that "using 224x20 is about competing with Dr. Tian".

If this is not the case, than let 224x20 be the net. Certainly there are VERY strong "empirical data to support it".

In case you missed it, I posted my test result 12 days ago in this thread

40b (1600v) vs 20b (3200v) = 231 : 169

20b was v160.

when matched against ELF v0, d351f06e used 3200v and 9c56ae62 used 1600v, so it's not fair to say the 20b network is actually worse.

Exactly because of this, losing to 73.93% at 1600 visits is worse result than 72.46% at 3200 visits. As I wrote in the other thread, lowering match visits pulls results towards 50%, and at higher visits ELF would win more.

a new 40b weight need about 10 days to trainning by bjiyxo, 20b promoted 5 weights(163-166) in those days. it's hard to choose.

@l1t1 I do not think that it is a fair comparison.

I am helping the 40b training with 1080ti x4. In each cycle, we have at least 400k self-games. With 240 batch size, it is about 64k steps each day. For 360k, it is about 5 days. Usually we would not go beyond 500k steps.

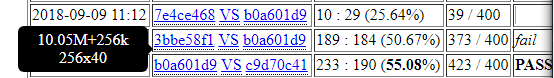

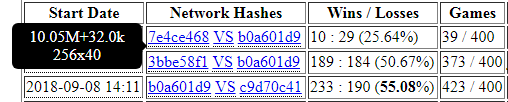

We tried to find the best one in each cycle, and test it. I do believe that we will have some passed 55% if we test t for each 32k (to be analyzed later). For the cycle up to v162, the 360k one has 69:45 against the previous 40b. The winning rate is above 60% and the p-value that it is not better is 0.0154 (which is rate than winning 220 out of 400). We tried to have it tested. But then there was server issue.

When the server issue is gone, we found that 416k is even stronger (37:16 aginst the previous, with p-value at 0.0027). We have 416k tested first. failed. We then try the 256k one. Failed again. I can not believe the results. But, well.... it happens, and everything is possible with probabilities...

Back to statistical theory, the chance of a weight with 51% w.r. to win 220 or more out 400 is about 6%. That means, in average, we can have a weight with 51% passed in 17 trials. From my personal testing, I believe that the weight in this cycle is at least slightly better than the previous, so I believe that I can have one passed if we test the weight as often as the 20b. However, I do not think that promotion is the most important thing. The real improvement is more important.

BTW, we are now going to the next cycle with a few more elf1 and v163-5. From my test, 16k one is 28:22 to the previous one. I would say that it has some probability to pass. Well, we are not going to have it tested until we find a much stronger one.

Omg,training lz in 20x224? thus we do bacame the cheap copy of elf.

@alphaladder "Omg,training lz in 20x224? thus we do became the cheap copy of elf."

What I meant is that elf size might be advantageous for some peculiar reason. Maybe shape of the net matters?

@alphaladder

close your free colab if its what you want..

just because some colab is contributing doesnt mean you can blackmail with it

everyone is free of will, this includes the colab choice to support leela zero, as well as leela zero project's choice to stay at 20b or go to 30/40/50/100 blocks..

@alphaladder please go away

@alphaladder

i was just pointing out how flawed your reasoning is (your comment about "the free colab that is contributing a lot might not be here anymore if you dont move to 40b soon" , (something like that) , you seem to have deleted this comment by now)

i personally dont care how slow a thing is, it was like that before the colab and other people were here btw, but i cant stand people who think like you, that just because they help, people will bow down their heads and beg for help.. dont look down on people, people are proud about their work and care about the how, and btw again, history showed countless times that people with such attitude often suffered horrible deaths by the people they hurt, begging for their lives and promising to give a huge quantity of money if they spare and protect them..

to go back to the point, i think leela zero project welcomes you and is thankful to you if you want to help and contribute on your own free will, but if you think you deserve everything just because you contribute, thats really a disgusting view... it reminds me of those rich men (or women) who think they can do whatever they want to people(insult, hurt, humiliate, harass, dirty one's pride, etc..) just because they have money...

please, let's think in a human and caring way

I appreciated your technical explanation and my opinion to gcp &roy7 is based on the opinions of many Chinese users who have at least hundreds of colab accouts while can not express their worrying on future training in english on this github. All of them are not rich and need the help of free but unreliable help of colab gpu, which is the main reason why we prefer 40b .You can not bear it but I fully understand.

Blackmail, nonsense,go away... Those are the words that you guys like to use. How about the next phrase you preferred? So I am very happy you guys are not Gcp otherwise .....Thus, I really have nothing to say. Do not @our humble colab trainers,thanks.

This isn't an opinion sharing forum. It's mainly for coders to discuss the code. People who contribute machine time aren't really a part of this conversation. Myself included. I hate posting here because I don't belong, but I do every few months when a really arrogant person comes and annoys everyone all day long. Back to my silence now.

@alphaladder "All of them are not rich and need the help of free but unreliable help of colab gpu, which is the main reason why we prefer 40b ."

Tell me friend. What personal computer would you recommend for people to buy, to handle 40b nets and quite possibly larger nets?

@alphaladder

i understand you, but if you want to speak in the name of all your chinese friends that dont speak english, then first of all you should have a more diplomatic, open minded, and understanding attitude

i believe most of chinese users, just like all users, are mostly reasonable people, so you dont do them a service by forcing your opinion and being impatient..

then, about their "worrying on the future of training", leela zero project is already considering moving to 40 blocks at some point, and they train and test networks for that, but there no immediate need or reason to move immediately to 40 blocks, also, there are some disadvantages to that, including losing low power users (and other applications like smartphone apps for example, it may be good to max out every block size)

also, everyone worries, not only chinese people, so there is no point to mention that chinese people, specifically, worry, everyone here is on the same boat and should think of all users as one single community sharing the same goals, amibitions, and worries

so, can you tell them that there is a plan and to be patient like everyone else for now, and see with an interesting eye the daily improvements made by leela zero project on whatever blocks ? rather than being desperate to reach alphago level (which is going to take many years at least on this project)

also, if they dont like the direction of the project no one is forcing anyone to do anything, and no one has debts to anyone, i want to stress this point again, it is important to understand. this is includes all users, all contributors, and even project owners, that are not forced or commited to their project forever, its only as long as they want to continue it, dont forget

personally, i'm wondering about moving to 30 blocks rather, which may be faster and still have some potential for strength improvement (when 20b starts to stall), but its just my opinion and i express it patiently without trying to force it, and if this is not accepted, i will respect the decision

hope all this helped

" rather than being desperate to reach alphago level (which is going to take many years at least on this project)"

That is why I like an idea of two main branches.

One for those with powerful machines or access to special resources, who love competition and tournaments, based on 40b nets and larger.

Second branch for those who are interested in clever algorithms and artificial intelligence.

De facto it is already is taking place:

RTX 2080 and RTX 2080 Ti will be announce tomorrow, they will be good at training

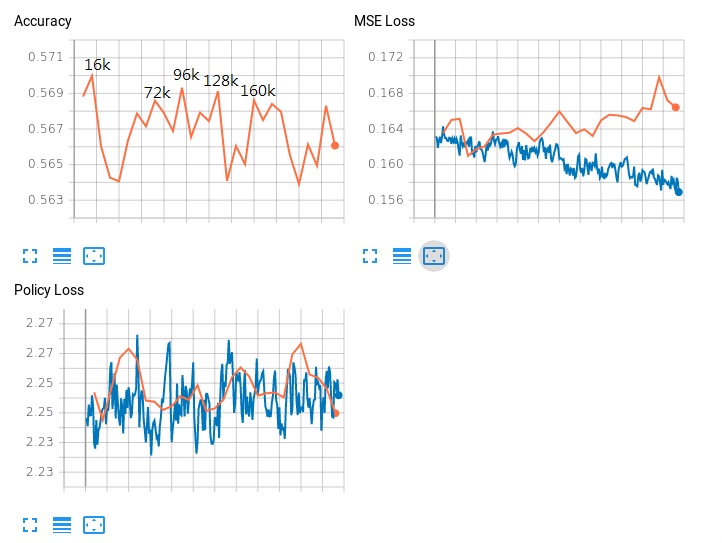

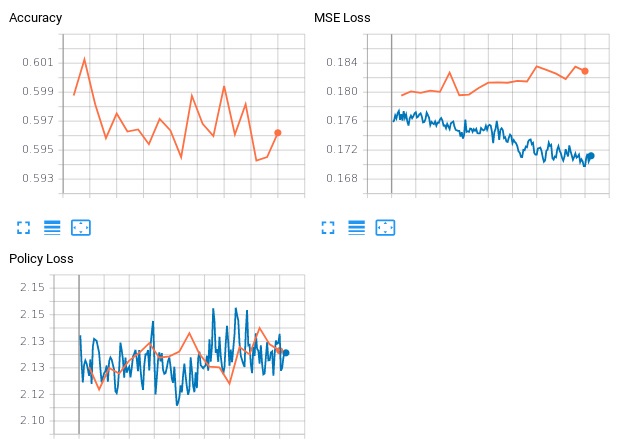

I am still puzzled at the official 40b test result. In the current cycle, we have training data with v158-v164 + elf1 + some elf0 (3500-4294). Learning rate 0.0001, batch size 240. Training with 1080ti x4.

We tested the peaks from the figure above (from our experience, a good weight is usually generated from the peak of accuracy). With 1600v against the previous 40b:

16k 57:49 0.5377

72k 107:77 0.5815

96k 54:49 0.5243

128k 61:57 0.5169

160k 63:49 0.5625

We have 72k tested in the official website. The result is 176:174 (0.5029). I do not know how to explain that all what we tested are more than 51.6%, and it turned out that the best one we picked has only 50.23% in the official test. We wonder whether the resign rate is the difference. We set it as 18 in our tests. What is the number for the official tests? In our tests, we never see a game end with non-resignation.

Then I want to say that people may think that the training of 40b seems stalled. But it may not be the case. The training data are self-games from 20b and elf. 40b training is trying to learned from them. The improvement will be probably on increasing the chance to beat 20b and elf. There is no self-game of 40b, so that it will not learn how to beat itself.

I ran 400 test matches against elf1 with the result 203:197. I am also running test matches against v167 with current result being 54:7. Since my results are often very different from official results, can we have test matches of 40b against elf1 and 20b to check the progress of 40b?

updated result vs v167

59:11

stopped at

117:26

(to test other peak)

@gcp @roy7

Do you think that we can have test matches against elf1 and 20b as I suggested above? Thanks.

Thank you very much for the great data in details, which is the real thing that we should focus on.

I think one reason is that all your tests are performed in 1080ti gpu while the lz official tests are also involved in 980 or 1050 gpu?

Similarly, in the cpu only machine , the performance of zen 7 is better than the that of 15b 157. As we know that 15b 157 is far strong than zen7.

Just a reference. Anyway, direct match between elf1 and new 40b is very reasonable.

@alphaladder

GPU should be irrelevant since visits are specified, not time.

@bubblesld

The resignation threshold is set at 5% in the official test. Here are the settings:

Leela Zero options: -p 0 -v 1600 -r 5 -m 0 -t 1 -d --noponder -s 4508320485158256009 -g -q -w networks/e2be4815f74d5293db054a706ecbed3c9586e110ecbf4917ee58942bd1c836a3

@bubblesld Either it's bad luck to get less winrate vs what you tested, or it is the resignation threshold. As you tested several nets, bad luck is very unlikely: hence, it must be the resignation threshold...

I saw several games where a net had a winrate below 18% and then won, so this 18% threshold is probably too high: you should probably test with 5% as in the official test.

@bubblesld I think you are getting way to many false positive resigns. But that doesn't explain all of the extreme difference. Therefor we need to know a few things. What version/commit of LZ are you using? What are the exact settings you are using? What hardware are you using?

I am using the latest LZ with command like

gogui-twogtp -black "~/leelaz -g --noponder -t 12 -q -v 1600 -r 18 -w ~/40b_157_360k.gz --gpu 1 --gpu 2 --gpu 3" -white "~/leelaz -g --noponder -t 12 -q -v 1600 -r 18 -w ~/40b_165_256k.gz --gpu 1 --gpu 2 --gpu 3" -games 400 -sgffile 40b_157_360k_165_256k -size 19 -komi 7.5 -alternate -auto&

It is using -v 1600, so it should not matter to what hardware I am using. Anyway, the machine is with ubuntu and 1080ti x8. I am using 4 gpu for training.

I do feel that the setting on the w.r. is the difference. I think that "-r 5" will lead the result closer to 0.5, since there will be some mistakes that should not happen for higher visit after the w.r. is below 18%.

I vaguely remember that "-t 12" could introduce more noise too. Maybe just use "-t 1" like the official tests?

And "-r 18" would hurt ELF much more than LZ's networks since ELF's win rates vary more quickly. It might resign prematurely in many winnable games.

@diadorak For sure indeed, high threshold favors LZ vs ELF, good point.

In the same way, it may favor old 40B vs newer 40b if the new ones are a bit more "stable" and get a sharper vision of winrates: that would also result in an optimistic result with high 18% resgin threshold, and lower result with lower resign threshold.

All in all, I think you should do your tests with -r 5

@bjiyxo congrats, the a3047139 network seems like a beast. 75-85% winrate against best 20x256, that might mean even a time parity based victory.

If gcp is ready to switch over to 40x256, this seems like a good candidate. Though I guess he will want the binary weight format merged before that so a few more days are to be expected?

@bubblesld it seems a304 is a monster against LZ168, now above 87% winrate and it scored 39 wins in a row, just totally amazing!

Maybe new iterations of 40b learned to better play against ELFv1 and 20b-LZ, but not so much against itself (40b), which would explain it did not improve significantly (vs e2be)? If that is the case, it's even more promising to switch to 40b selfplay, as it would probably improves even faster ;-).

Anyway, a test against ELFv1 seems useful.

Though I guess he will want the binary weight format merged before that so a few more days are to be expected?

That's not a consideration at all for a lot of reasons. (See format discussion, but also the need to stabilize the current code so we can actually make a release and get clients to update...)

I'll start a test of the quantized version of this 40b.

The only real concern is the possibility that we lost some clients that are out of RAM for 40b. But I think a) on master it's less of an issue b) on next we just had some fixes c) those are going to be slow clients.

This isn't an opinion sharing forum. It's mainly for coders to discuss the code. People who contribute machine time aren't really a part of this conversation. Myself included.

I wouldn't say that. If your feedback is: the 40b seems 10 times slower on my machine because my GPU runs out of memory, this is important to know :-)

I'm also very interested in what the people who actually play go think. I sometimes wonder how many there are here :-) All the ones I talked to care about komi and getting sensible analysis in lopsided situations (still hoping for a series of small pull requests from alreadydone there at some point).

It is true that there are so many opinions shared here (you know what I said about opinions vs data) that it's been long impossible to follow all, or maybe even most of it, and my priority in time is to unblock the people who are trying to develop the code, i.e. putting the reviews out.

Given that 20b is now progressing normally, 20b vs 40b is a bit of judgement how fast the 20b progresses vs how much stronger the 40b is. The latest 40b looks very good, and we have addressed some of the issues that would've made it problematic.

The quantized test is running now. If it's good, I'll switch to 40b (and the server will output quantized nets). (I am traveling next week so I'd rather do it sooner rather than later)

One argument for sticking to 20b for a while is to test whether our net is definitely stuck in some local optima. If 256x20 fails to eventually catch up to both Elves, this would confirm a sub-optimal configuration of weights, due to the inability of current learning rates to reorganise the net sufficiently. Right now, 256x20 is improving at a good rate, I'm very curious where this will take us.

Quantized 40b seems to work well (around 50% after 70 games), we may advance soon to 40b ;-)).

@gcp On another subject, it seems almost 50% of the time spent by clients are on endgame moves for no resign games: these moves are necessary to avoid score errors, but is there any need to spend so much time on them? Note: as they are quite "random", there's almost no tree reuse and 1600 visits leads to almost 1600 playouts, hence no resign games are extremely long. A low number of visits may be enough for that job... Naphthalin suggested on discord a simple approach: to halve visits each time the 2 players passes non-consecutively. What do you think of that? That would for sure help the transition to 40b ;-)

@friday9i. The 0 resignation strategy should greatly improve the endgame technique of lz,especially for some special shape we called blind point in the endgame. Another benefit is what we called “”no slack”. Those are the opinions of professional players, just for your reference. :) Thanks. It looks Dr.Tian prefered it in elf training.

Note the dynamic komi branch already reduces slack moves. Leela will just continue to crush you after already winning.

@alphaladder I know, and I'm not suggesting to suppress it!! I just suggest to lower visits for the no-resign moves after both players passed (non-consecutively), which is a clear sign we entered the "no-resign endgame" and time spent on moves just appears wasted: 100 or 200 visits should be more than enough to fill the empty spaces ;-)

Are there any plans yet for challenging Haylee again, or another professional, with one of the 40b nets and the dynamic komi branch?

@Friday9i

Yes.very agree on your idea.

I planned to invite her to new handicap games once dynamic komi got merged into /next.

Sorry for always pushing on the same line (don't want to annoy or harass anyone) or if I fail to understand something:

@Friday9i commented:

@gcp On another subject, it seems almost 50% of the time spent by clients are on endgame moves for no resign games: these moves are necessary to avoid score errors, but is there any need to spend so much time on them?

Training data have not meta-data relative to the resign threshold used to genrate them. Thus they cannot and are not used to verify the resign threshold validity ('avoid score errors'). Only offline generated data are used to verify -r threshold validity (false positive rate).

Note: as they are quite "random", there's almost no tree reuse and 1600 visits leads to almost 1600 playouts, hence no resign games are extremely long.

They are quite random because the mcts makes the visits distribution very flat under extreme win rate values AND because t=1 is used. Games become weird way before the first one-side pass. And while moving to 800v or 400v once resign theshold is hit, doing so while still using t=1 would likely keep the games quite random. They should 'look' normal and should follow 'sound' trajectories by switching to t=0 (or a low value) once the resign threshold is hit. Most of the training data would still be quite flat but at least the games would no more be more or less random, providing meaningfull positions to learn from.

Although it's true that learning from late end game positions is important (endgame move account fir 1/3 to 1/2 of the total moves and playing good endgame is often decisive), I don't see that -r0 games should help much to discover new things in that area of the game, due to the issue of completely out of balance values flattening the search.

Moving to t=0 once resign threshold hit means relying on Dirichlet noise only to discover usefull things, i.e. less exploration. But still better than learning from random moves. Just MHO.

Whereas turning of temperature once bestscore hit resign threshold is still 'zero' approach, I believe it is: we are just paliating a deficiency of the search algorithm, nothing soecific to the game of go.

@bjiyxo @bubblesld

Looks like you have another 40b candidate network. Is it quantized? It seems we are gonna use quantized networks from now on.

@diodorak I check 4caca2a8 and 8993cd24, and both of them are ~90 MB. If gcp start to use quantized networks, then I will quantize it, too.

Are we gonna queue 530a or its quantized version for promotion match?

It might be stronger than elf v1 at visit parity!

530a or b072 which one is better

Thus they cannot and are not used to verify the resign threshold validity ('avoid score errors')

They are not used to verify our threshold, but they allow the net to discover situations where "oops this wasn't 100% winning after all", due, for example, score or counting errors in the net, or moves incorrectly pruned by the policy (for which purpose the flat distribution isn't necessarily hurting).

If you'd play LZ against a stronger net, you'd have a reasonable amount of games where both think they are 100%. You need to play out some of those games.

And again, our resign threshold is what it is BECAUSE WE KNOW this (completely incorrect scores) happen in a fair percentage of the games (as you pointed out, measured offline), and making it even lower leads to a quickly increasing amount of games where this happens and the program REINFORCES this wrong knowledge instead of discovering the error by not resigning.

@gcp thanks for these precisions. Any opinion regarding the idea to lower visits each time both nets passes non-consecutively? Currently, no-resign games represent almost 50% of the time spent by clients (mostly to fill the board "not so intelligently" ;-). I know we need it, but 50% of the time is a lot!

@gcp: To be clear, because I'm afraid there is misunderstanding here : I'm by no way suggesting any change to the resign threshold value. Or to the way it's fixed. Nor suggesting that game reversal or no incorrect score evaluation doesn't happen past the resign threshold.

Anyway, I'll stop asking questions on the ins and outs of no-resign games and randomness, because I'm more and more convinced I must be badly missing some important point on the training process, since I feel I understand your answers for themselves but not how they fit my questions/remarks. What amounts to say I don't truly understand your answers. So better not annoy you any longer and better dig by myself in the papers/code and let the important concepts sink. With more time maybe, everything may fall into place one day ;-) Thank you anyway for your replies and for your patience with newbies like me.

FYI, 40b-99b6 leads 48-32 on my PC against ELFv1 with 500 visits: 60%, nice achievement!

I did not test 1600 visits as it is very slow... Hard to assess, but it may be even better for 40b at 1600 visits: that would be interesting to test it on the server :-).

Edit: tonight I'll get 200 games, it will be better statistically speaking. I also uses -m 10 for both bots in order to ensure some variability in the games.

need 400 games, ref http://zero.sjeng.org/match-games/5b7b67d9cc3dde4a3e75ec80 0.66 at 100 games

@Ishinoshita I'm not saying what you said was wrong (it seems to have been a correction of a previous post) . If I understand correctly, you're suggesting the program speeds up (uses less visits) once the resign threshold is reached, and perhaps drops randomness. It may very well be that that is fine but it's untested complication I'm not interested in.

Generally speaking, as long as the resign threshold is fine (i.e. there's still a number of scoring errors "below"), the effort of the no-resign games is far from wasted. They will generate a huge error signal during training for all involved positions.

@l1t1 Yeah, 400 games are better of course, but it takes a lot of time. If you want to make 200 of them, that'll do 400 with the 200 I do ; -).

And statistically speaking, 200 is already good, with a 1 sigma variance of ~3.5 points.

Note: on a single computer, there is less variance than for distributed matches. Indeed, for distributed matches, short games arrive on average before long games: when win rates for short vs long games are significantly different, the win rate can evolve a lot more than expected (when games are played one after the other and arrive in the same order, which is the case on a single computer).

For 40b vs elf1, I have some personal test results:

with -v 1600 -t12 -r 18 gpu x3

66ba9e7a (54:46)

960fe0e8 (62:72)

c72877cd (40:69)

a3047139 (203:197)

with -v 1600 -t1 -r 5 gpu x1

530af2dc (229:237)

99b64745 (63:97)

I believe that elf1 is still stronger than 40b, while 40b learned some tricks to beat elf1 in the early stage of games. 530af2dc should have a good chance to get 45+% vs elf1 (which means not statistically weaker). That is why I hope to have a test match for 530af2dc vs elf1.

==================

update: forgot to paste the one a3047139

Nice!

Contrasting results, I'll update with 200 games tonight, and I'll probably launch some games of 99b6 against Elfv1 with 1600 visits (and -r 5 instead of the -r 2 that I used for the 500 visits match)

@bubblesld

530a looks really promising. Maybe we should just promote it :)

with -v 1600 -t1 -r 5 gpu x1, 530af2dc (229:237)

Can we let 40b and 20b generate self-play games simultaneously and train them in parallel?

One downside is that too many matches are needed. But, we could use AZ style no-gating method for the 40B networks and continue to use the 55%-gating method for the 20B networks. And from time to time we do a match between the 40B and the 20B networks to see if they are converging in strength.

Can we let 40b and 20b generate self-play games simultaneously and train them in parallel?

We can train them in parallel regardless, this has been happening for weeks.

Why would we self-play them both, what would be the point? We want the most time/strength efficient network for self-play I think, and it looks like that is 40 blocks right now. We are switching soon.

But, we could (...overly complicated proposal follows)

No.

Updated results of 40b-99b64745 vs ELFv1 after 200 games with -v 500 (and -r 2 -t 2 -m 10): 40b won easily with a 64.5% winrate (3.4 point of standard deviation), ie 129 vs 71!

Quite impressive, but also a bit surprising compared to @bubblesld test "99b64745 (63:97)", where it was clearly beaten @1600 visits.

I'm wondering about a possible explanation for the difference: I used -m 10, but it may generate not so good moves for both, but Elf plays badly if too much behind? Or simply the difference in visits, but such a big difference just between 500 and 1600 visits is surprising.

Anyway, 40b is good, waiting so much for the transition :-)

99b64745(bjiyxo 40b-167-72k quantized) was trained by v158-v167 + elf-v1.

68a8e58e(bjiyxo 40b-167a-72k quantized) was trained by v158-v167 + elf-v1 + 60k elf-v0.

We retrain 40x256 with slightly different data because we test 99b64745 vs elf-v1 =63:97. We will keep on observation and decide which one to continue training.

it seems that so far no 40b network can extract enough strength from all 20b games to be stronger than the 40b trained on 15b games e2be4815 (40b-157-360k), even after including all these new elf v1 games

after all, there are 400k games of 20b bewteen lz 157 and lz 167

is everything going all right with the 20b and 40b training ?

we may be missing something to understand

also, how about a 30b training ?

it would be faster to run than 40b while stronger than 20b

p.s : you also said @bjiyxo at https://github.com/gcp/leela-zero/issues/1681#issuecomment-411767448

that 40b-157 didnt train on any elf v1 game, and we have arround 200k elf v1 games now

it may be interesting to try to train a net based on lz 157 games, with no 20 b games, and including these 200k elf v1 games, to see how it influences the strength of 40b

@wonderingabout even though 40b is slower than 20b it still is much stronger. On my PC with 40b network I get like 1k playouts playing 20sec/move, with 20b network I get 2.5k playouts/move. On my tests (not big, 10 games) 40b won 9 out of 10.

It'll always be like this - how about 25b training? it would be faster to run than 30b while stronger than 20b... ;)

@nukee86

i'm starting to wonder if the 400k games of 20b are not dragging down the strength of the 40b network, and that we dont see it because of the strength contribution of the 200k elf v1 games

this is why i'm interested to test a 40b-157-elfv1 network, that does not include any 20b game

it may also be a good opportunity to train a 30b network instead of a 40b one

remember that time when no good 20b network was able to be stronger than lz157, we still dont know the cause yet, and it may be the same one

another test can be to train a 40b-167 network without any elf v1 game, and compare it to 40b-157 (which didnt train with any elf v1 game too)

if lz 20b is indeed stronger than 15b, its 400k games should contribute to increase 40b strength, even without elf v1 games

if 40b is not stronger or even weaker, then there may be a problem, and if thats the case, the soone rit is fixed the less consequences

edit : 400k, not 900k, my bad

@wonderingabout

There is only less than 400k 40b available. (You have your number from Training #, which is incorrect)