Eksctl: regression: external-dns Route53 controller has insufficient rights to operate

In eksctl 0.1.17 and 0.1.18, the permissions of the created DNS role seem insufficient for external-dns to operate properly. It works as intended in versions 0.1.16 and earlier.

Here is an example log after creating an Ingress object in a cluster created by eksctl 0.1.18:

time="2019-01-13T14:24:20Z" level=info msg="Created Kubernetes client https://10.100.0.1:443"

time="2019-01-13T14:24:20Z" level=error msg="AccessDenied: User: arn:aws:sts::337236057593:assumed-role/eksctl-prod-nodegroup-ng-8e393a03-NodeInstanceRole-BCMMCJ54BUIC/i-0b5c9b5633c5ab02c is not authorized to perform: route53:ListHostedZones\n\tstatus code: 403, request id: eaf310b3-173e-11e9-9923-339fa910c16a"

time="2019-01-13T14:25:20Z" level=error msg="AccessDenied: User: arn:aws:sts::337236057593:assumed-role/eksctl-prod-nodegroup-ng-8e393a03-NodeInstanceRole-BCMMCJ54BUIC/i-0b5c9b5633c5ab02c is not authorized to perform: route53:ListHostedZones\n\tstatus code: 403, request id: 0ead55e6-173f-11e9-bd6d-75fd7b08b429"

time="2019-01-13T14:26:20Z" level=error msg="AccessDenied: User: arn:aws:sts::337236057593:assumed-role/eksctl-prod-nodegroup-ng-8e393a03-NodeInstanceRole-BCMMCJ54BUIC/i-0b5c9b5633c5ab02c is not authorized to perform: route53:ListHostedZones\n\tstatus code: 403, request id: 326ddda1-173f-11e9-8465-b7070858f103"

time="2019-01-13T14:27:20Z" level=error msg="AccessDenied: User: arn:aws:sts::337236057593:assumed-role/eksctl-prod-nodegroup-ng-8e393a03-NodeInstanceRole-BCMMCJ54BUIC/i-0b5c9b5633c5ab02c is not authorized to perform: route53:ListHostedZones\n\tstatus code: 403, request id: 5633e29c-173f-11e9-9204-17db2e779598"

time="2019-01-13T14:28:20Z" level=error msg="AccessDenied: User: arn:aws:sts::337236057593:assumed-role/eksctl-prod-nodegroup-ng-8e393a03-NodeInstanceRole-BCMMCJ54BUIC/i-0b5c9b5633c5ab02c is not authorized to perform: route53:ListHostedZones\n\tstatus code: 403, request id: 79f63de5-173f-11e9-aef4-6d3cf9c8b3bd"

...

All 11 comments

Thanks for reporting this! Could you please confirm whether you used the --external-dns-access flag?

If you did, route53:ListHostedZones should be included:

https://github.com/weaveworks/eksctl/blob/3661acc8d0f274af97507f2066dc515feb667bb9/pkg/cfn/builder/iam.go#L121-L129

Yes I did, the precise command was:

eksctl create cluster --name=prod --nodes=3 --nodes-min=2 --nodes-max=5 --auto-kubeconfig --node-type=m5.2xlarge --region=us-west-2 --node-ami=auto --asg-access --external-dns-access --full-ecr-access -P

It works on version 0.1.16 without problems.

I've tested it recently, and it worked. I don't think anything changed around this particular feature, but I'd recommend upgrading to the latest version (0.1.18).

I can see assumed-role in the logs, I wonder if that's normal when instance roles are used, or there is something in your account that makes it work differently?

To fix this, please try to update the instance role and let us know what changes need to be made to make it work.

We certainly do set route53:ListHostedZones, among the other permissions required for the external-dns add-on.

I checked the created policies and found the difference. On 0.1.18 the Route53 policy is:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Action": [
        "route53:ChangeResourceRecordSets",
        "route53:ListHostedZones",
        "route53:ListResourceRecordSets"
      ],
      "Resource": "arn:aws:route53:::hostedzone/*",
      "Effect": "Allow"
    }
  ]
}

On version 0.1.16 the effective policies look like:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Action": [
        "route53:ChangeResourceRecordSets"
      ],
      "Resource": "arn:aws:route53:::hostedzone/*",
      "Effect": "Allow"
    }
  ]
}

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Action": [
        "route53:ListHostedZones",
        "route53:ListResourceRecordSets"
      ],
      "Resource": "*",
      "Effect": "Allow"
    }
  ]
}

The difference is in Resource: field, which was more general in previous versions.

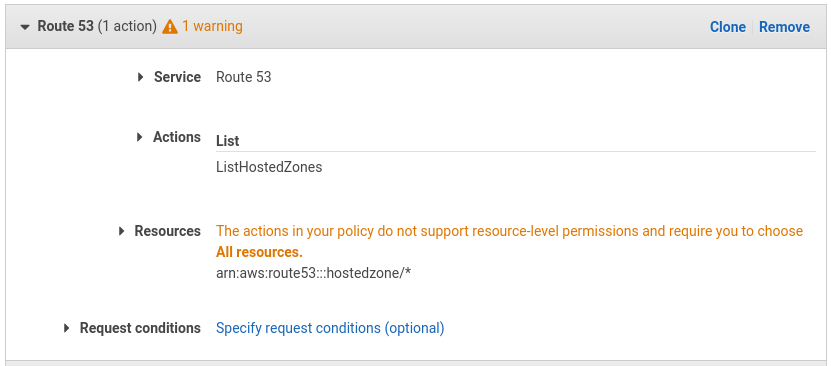

There is also Warning in the policy window about ListHostedZones action policy:

After fixing the warning, following policy works properly:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "route53:ChangeResourceRecordSets",
        "route53:ListResourceRecordSets"
      ],
      "Resource": "arn:aws:route53:::hostedzone/*"
    },
    {
      "Effect": "Allow",
      "Action": "route53:ListHostedZones",
      "Resource": "*"
    }
  ]
}

The ListHostedZones action must be allowed for all resources.

After further investigation I discovered that the above config is too limited. The error disappears, but the controller is still unable to work properly because it cannot call ListResourceRecordSets. The proper configuration was the initial one from 0.1.16:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "route53:ChangeResourceRecordSets",
      "Resource": "arn:aws:route53:::hostedzone/*"
    },
    {
      "Effect": "Allow",
      "Action": [
        "route53:ListHostedZones",
        "route53:ListResourceRecordSets"
      ],
      "Resource": "*"
    }
  ]
}
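To check an existing policy document against the finding above, a small script can flag List actions that are never granted on Resource "*". This is only a minimal sketch: the function name misscoped_actions and the WILDCARD_ACTIONS set are illustrative and not part of eksctl or the AWS CLI, and the set of actions simply mirrors the 0.1.16 policy that worked in this thread. It does not expand wildcard action names such as route53:*.

```python
import json

# Actions that the working 0.1.16 policy in this thread grants on Resource "*".
# Assumption: this set mirrors the thread's finding; adjust it for your setup.
WILDCARD_ACTIONS = {"route53:ListHostedZones", "route53:ListResourceRecordSets"}


def as_list(value):
    """IAM allows a single string or a list for Action/Resource; normalize to a list."""
    return value if isinstance(value, list) else [value]


def misscoped_actions(policy_json, wildcard_actions=WILDCARD_ACTIONS):
    """Return the watched actions that are allowed somewhere but never on Resource "*"."""
    policy = json.loads(policy_json)
    seen = set()
    granted_on_all = set()
    for stmt in as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        actions = set(as_list(stmt.get("Action", []))) & set(wildcard_actions)
        seen |= actions
        if "*" in as_list(stmt.get("Resource", [])):
            granted_on_all |= actions
    return sorted(seen - granted_on_all)


# The policy generated by eksctl 0.1.18, as quoted earlier in this thread.
POLICY_0_1_18 = """
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Action": [
        "route53:ChangeResourceRecordSets",
        "route53:ListHostedZones",
        "route53:ListResourceRecordSets"
      ],
      "Resource": "arn:aws:route53:::hostedzone/*",
      "Effect": "Allow"
    }
  ]
}
"""

print(misscoped_actions(POLICY_0_1_18))
# prints ['route53:ListHostedZones', 'route53:ListResourceRecordSets']
```

An empty list means every watched action that appears in the policy is also allowed on all resources, which is the case for the 0.1.16 configuration above.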

I've faced that issue too; I had to go and apply the permissions manually.

eksctl version

[ℹ] version.Info{BuiltAt:"", GitCommit:"", GitTag:"0.1.19"}

I've also encountered this, and had to manually change the permissions of the policy according to the AWS tutorial docs for external-dns.

Thanks

Same here, I had to fix the permissions manually:

EKS_CLUSTER_NAME='your-cluster'

# Policy matching the working 0.1.16 configuration: the List actions apply to all resources.
EKS_EXTERNAL_DNS_POLICY='{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "route53:ChangeResourceRecordSets"
      ],
      "Resource": [
        "arn:aws:route53:::hostedzone/*"
      ]
    },
    {
      "Effect": "Allow",
      "Action": [
        "route53:ListHostedZones",
        "route53:ListResourceRecordSets"
      ],
      "Resource": [
        "*"
      ]
    }
  ]
}'

# Find the node group instance role that eksctl created for this cluster.
# With several node groups this matches more than one role; handle each role separately.
EKS_NODEGROUP_ROLE_NAME=$(aws iam list-roles --query 'Roles[*].{RoleName:RoleName}' --output text --max-items 10000 | grep "eksctl-${EKS_CLUSTER_NAME}-nodegroup-ng" | xargs)

# Find the name of the inline external-dns policy on that role.
EKS_EXTERNAL_DNS_POLICY_NG=$(aws iam list-role-policies --role-name "${EKS_NODEGROUP_ROLE_NAME}" --output text | grep -Eo '[a-z0-9-]+-PolicyExternalDNS' | xargs)

# Overwrite the inline policy with the corrected document.
aws iam put-role-policy --role-name "${EKS_NODEGROUP_ROLE_NAME}" --policy-name "${EKS_EXTERNAL_DNS_POLICY_NG}" --policy-document "${EKS_EXTERNAL_DNS_POLICY}"

This should be a trivial one to fix. Could someone please open a PR? If someone wants to do it but needs help, please ping me on Slack.

