I tested this today and it worked as intended, but we need more testing before we can declare that Weave Net is fully supported.

From an eksctl perspective, we should make Weave Net an add-on (see #17 and #18), especially because of all the extra steps required.

First, create a cluster with eksctl create cluster.

Next, back up and delete kube-system:DaemonSet/aws-node:

kubectl get ds --namespace=kube-system aws-node --output=yaml --export > aws-node.ds.yaml

kubectl delete ds --namespace=kube-system aws-node

Now, install Weave Net:

curl --silent --location \
  "https://cloud.weave.works/k8s/v1.10/net" \
  --output weave-net.yaml

kubectl apply -f weave-net.yaml

After kube-system:DaemonSet/weave-net is created, all pods will need to be recycled.

The following script can be used to recycle all pods properly:

#!/bin/bash

set -o errexit
set -o pipefail
set -o nounset

## Wait until all Weave Net pods are running
weave_net_pods_are_ready() {
  local count
  count="$(
    kubectl get pods \
      --namespace=kube-system \
      --selector='name=weave-net' \
      --output='jsonpath={range .items[*]}{@.metadata.name}:{@.status.phase}{"\n"}{end}' \
      | grep -c -v Running
  )"
  test "${count}" -eq 0
}

## Delete all pods that were created before Weave Net CNI plugin was installed
delete_stale_pods() {
  local namespaces
  namespaces=($(kubectl get namespaces --output='jsonpath={range .items[*]}{@.metadata.name}{"\n"}{end}'))
  for ns in "${namespaces[@]}" ; do
    kubectl delete pods --namespace="${ns}" --selector='name!=weave-net'
  done
  unset ns
}

until weave_net_pods_are_ready ; do sleep 1 ; done

delete_stale_pods
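One edge case in the readiness check above: when no weave-net pods are listed yet, grep -c prints 0, so the check passes immediately. Below is a minimal sketch of a stricter check, assuming the same name:phase lines arrive on standard input. The helper name all_pods_running is hypothetical and not part of the original script.

```shell
#!/bin/bash

# Hypothetical helper: reads "name:phase" lines on stdin and succeeds only
# if there is at least one line and every line ends in ":Running".
all_pods_running() {
  local total=0 not_running=0 line
  # The "|| [ -n ... ]" part also handles a last line without a newline.
  while IFS= read -r line || [ -n "${line}" ]; do
    [ -n "${line}" ] || continue
    total=$((total + 1))
    case "${line}" in
      *:Running) ;;
      *) not_running=$((not_running + 1)) ;;
    esac
  done
  [ "${total}" -gt 0 ] && [ "${not_running}" -eq 0 ]
}
```

It could be fed by the same kubectl get pods command and jsonpath output used in weave_net_pods_are_ready, piped into all_pods_running instead of grep.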

NOTE: when we do this, we should be able to bypass the --max-pods setting that is derived from the instance type, as that value is based on ENI limits.
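For context, the ENI-based limit mentioned in the note is usually computed from the number of network interfaces and the number of IPv4 addresses per interface, as described in the AWS documentation for the VPC CNI. The sketch below only illustrates that arithmetic. The function name is hypothetical, and the per-instance-type numbers in the comments are examples that should be checked against the AWS instance tables.

```shell
#!/bin/bash

# Hypothetical helper: ENI-based pod limit for the AWS VPC CNI.
#   max pods = ENIs * (IPv4 addresses per ENI - 1) + 2
eni_max_pods() {
  local enis="$1" ips_per_eni="$2"
  echo $(( enis * (ips_per_eni - 1) + 2 ))
}

# Example values (assumed from the AWS instance tables):
#   eni_max_pods 3 10   # 3 ENIs, 10 addresses each (e.g. m5.large) -> 29
#   eni_max_pods 3 6    # 3 ENIs, 6 addresses each (e.g. t3.medium) -> 17
```

With an overlay network such as Weave Net, pod addresses no longer come from ENIs, which is why this limit stops being the relevant one.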

I was expecting access to the kubernetes service to break, which is what happened during the tests I did earlier. However, what appears to break now is DNS. Either way, this is most likely because the ENI network was removed. There must be a way to make this work, potentially without having to use both networks, although we might have to do that for compatibility/stability. Using multiple CNI networks is not mainstream, but from what I have heard it can be done.

Has anyone been able to figure out the DNS issue yet? I'm hitting the same problem. I'm not sure I understand enough about why it doesn't work.

Same here. Tried installing CoreDNS to see if this was some kind of limitation of KubeDNS, didn't seem to help. Starting to wonder if this is an issue with iptables on the worker node, or security groups limiting network activity in some way. Not sure though.

@murali-reddy said that he will be looking into it soon.

Cross-posting the steps from this comment:

I am able to make the pod-to-pod, pod-to-node, and pod-service-pod communication paths work with Weave. I don't see any issues with services. Please try it and let me know if it works for you.

- eksctl create cluster

- kubectl delete ds aws-node -n kube-system

- delete /etc/cni/net.d/10-aws.conflist on each of the nodes

- edit instance security group to allow UDP, TCP on 6783, 6784 ports

- flush iptables nat, mangle, filter

- restart kube-proxy pods

- apply weave-net daemonset
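The node-side steps in the list above (removing the AWS CNI config and flushing iptables) could be sketched roughly as follows. This is an illustration only: run and node_cleanup are hypothetical helper names, the script would need to run as root on each node, and flushing iptables is disruptive, so the sketch defaults to being previewed with DRY_RUN=1.

```shell
#!/bin/bash

# Hypothetical wrapper: prints the command when DRY_RUN=1, otherwise runs it.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Hypothetical helper for one worker node: remove the AWS VPC CNI config
# file and flush the nat, mangle and filter tables.
node_cleanup() {
  run rm -f /etc/cni/net.d/10-aws.conflist
  local table
  for table in nat mangle filter; do
    run iptables --table "${table}" --flush
  done
}
```

Calling DRY_RUN=1 node_cleanup prints the four commands without executing them. The kube-proxy pods would still need to be restarted afterwards, as the list says.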

Just to clarify, another approach that doesn't require manual steps on the nodes could be:

- eksctl create cluster

- kubectl delete ds aws-node -n kube-system

- edit instance security group to allow UDP, TCP on 6783, 6784 ports

- apply weave-net daemonset

- recycle all nodes
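The security group step could look roughly like the sketch below. It assumes the ports listed in the Weave Net documentation (TCP 6783 and UDP 6783-6784) and a single node security group that references itself as the traffic source. The function name and the group ID in the usage note are placeholders, and the function only prints the AWS CLI calls so they can be reviewed first.

```shell
#!/bin/bash

# Hypothetical helper: prints (does not run) the AWS CLI calls that would
# allow Weave Net traffic between members of one security group.
weave_sg_commands() {
  local sg_id="$1"
  local rule proto ports
  for rule in tcp:6783 udp:6783-6784; do
    proto="${rule%%:*}"   # text before the colon
    ports="${rule#*:}"    # text after the colon
    printf 'aws ec2 authorize-security-group-ingress --group-id %s --protocol %s --port %s --source-group %s\n' \
      "${sg_id}" "${proto}" "${ports}" "${sg_id}"
  done
}
```

For example, weave_sg_commands sg-0123456789abcdef0 prints one command for TCP and one for UDP; the ID shown here is made up.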

@jacobtomlinson thanks for your suggestion! Actually, there is now an even better way.

- You can use a config file and set overrideBootstrapCommand to delete /etc/cni/net.d/10-aws.conflist.

- You can create a cluster with eksctl create cluster --config-file=cluster.yaml --without-nodegroup

- Then delete the native driver with kubectl delete ds aws-node -n kube-system

- Now apply the Weave Net config

- Next use eksctl create ng --config-file=cluster.yaml to create your nodegroups

- You shouldn't need to patch any security groups, as all ports are open between all nodes

- You shouldn't need to flush iptables, as the native driver didn't get a chance to run

- You shouldn't need to restart kube-proxy pods

I am happy to help more, but I don't have the time to fully document it. Help here would be appreciated, especially in the form of a blog post to begin with.

Ok great thanks. Is deleting /etc/cni/net.d/10-aws.conflist actually necessary if the nodes are going to be created after the daemonsets are swapped from aws-node to weave-net?

@jacobtomlinson good point! Worth checking, I just thought it's possible that the file may exist on the image.

The instructions above are missing a crucial --max-pods-per-node flag (maxPodsPerNode in config file) which must be set for a nodegroup, as otherwise the maximum number of pods per node will be limited based on AWS VPC CNI requirements (see pkg/nodebootstrap/maxpods.go).
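Putting the config-file approach and the maxPodsPerNode setting together, a config file might look like the sketch below, written out by a small shell helper. Everything specific here is an assumption: the apiVersion, cluster name, region, instance type, capacity and the value 110 are placeholders, and the sketch swaps in preBootstrapCommands rather than the overrideBootstrapCommand mentioned in the thread, so the node's default bootstrap is left in place. Check the field names against the eksctl version in use.

```shell
#!/bin/bash

# Hypothetical helper: writes an example eksctl config to the given path.
write_cluster_config() {
  local path="$1"
  cat > "${path}" <<'EOF'
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: weave-demo          # placeholder
  region: us-west-2         # placeholder
nodeGroups:
  - name: ng-1
    instanceType: m5.large  # placeholder
    desiredCapacity: 2
    # Placeholder value; without this, the limit is derived from ENI counts.
    maxPodsPerNode: 110
    # Remove the AWS VPC CNI config in case it exists on the image.
    preBootstrapCommands:
      - "rm -f /etc/cni/net.d/10-aws.conflist"
EOF
}
```

After write_cluster_config cluster.yaml, the file would be used with the eksctl create cluster --config-file=cluster.yaml --without-nodegroup and eksctl create ng --config-file=cluster.yaml commands described earlier in the thread.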

Please forgive me if this is the wrong place to state this, but why does eksctl not just have a simple flag that tells it to install Weave Net, like eksctl create --cni=weavenet? I'm surprised this behavior isn't built in, since both Weave Net and eksctl are created by Weaveworks.

Hello,

This is a re-post of a comment I posted on Friday on an issue that was closed:

https://github.com/weaveworks/weave/issues/3335

I am not sure the instructions given in that issue work correctly, but I think this issue is still open to address them.

kubectl -n kube-system logs weave-net-x57lt -c weave-npc

Error from server: Get https://172.17.172.29:10250/containerLogs/kube-system/weave-net-x57lt/weave-npc: dial tcp 172.17.172.29:10250: connect: no route to host

But if I rerun the same command, it works after about 30 tries. Then, after about 5 minutes, it stops working again.

It seems to work, then not, then work again, and so on.

- In my case I created a fresh EKS 1.13 cluster with no workers attached at first,

- kubectl -n kube-system delete daemonset aws-node

- kubectl apply -f "https://cloud.weave.works/k8s/net?k8s-version=$(kubectl version | base64 | tr -d '\n')&env.IPALLOC_RANGE=192.168.0.0/16"

- kubectl apply -f ./EKS-Worker-Auth-ConfigMap.yaml

These instructions seem to work well for me with preBootStrap on 1.11 and 1.12, but not with 1.13: I got "no CNI driver" after the aws-cni was in the worker, and this prevented the worker node from starting. I haven't investigated it further for now. So, unless someone has instructions for 1.13, or has time to investigate what the proper instructions are for 1.13 and above, it is probably better to stick to 1.11 and 1.12 for now.

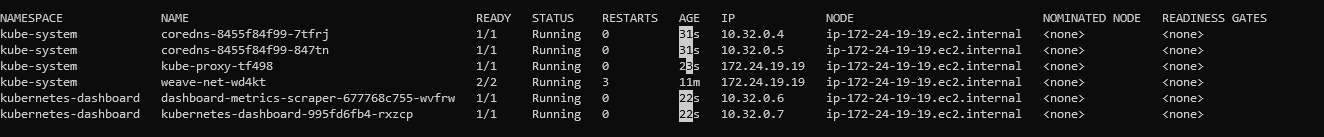

Encountered the same issue on a fresh AWS EKS 1.14 instance with the worker nodes created using a CloudFormation template. Before joining the worker nodes, I deleted the AWS CNI and installed Weave. When the nodes joined, kube-proxy had the IP of the worker node. The issue I am facing is that the proxy container is not able to connect to the kubernetes-dashboard container.

kubectl proxy

Accessing http://localhost:8001/api/v1/namespaces/kubernetes-dashboard/services/https:kubernetes-dashboard:/proxy/#!/login

gives the error message

Error: 'dial tcp 10.32.0.6:8443: i/o timeout'

Trying to reach: 'https://10.32.0.6:8443/'

Kubernetes dashboard was installed using

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta4/aio/deploy/recommended.yaml

following the official AWS documentation: https://docs.aws.amazon.com/eks/latest/userguide/dashboard-tutorial.html

That is expected behavior, because the Weave CNI cannot be installed onto the master nodes, which are managed by AWS: https://github.com/weaveworks/weave/issues/3335#issuecomment-518157683

With weave net CNI running in EKS you will not be able to use the proxy capability and you also won't be able to use admission controllers.

simple flag that can be specified on eksctl to install weave net like eksctl create --cni=weavenet

We had our staging cluster on EKS, and the limit on the number of pods was really making us burn money for no reason (we have a lot of services, but most have low memory and CPU usage).

We switched to a cluster built with kops and we are much happier now.

There is no limit on the number of pods, and you can choose the instance type for the master.

