Darknet: Training YOLOv3 with own dataset

Hi everyone,

Has anyone had success with training YOLOv3 for their own datasets? If so, could you help sort out some questions for me:

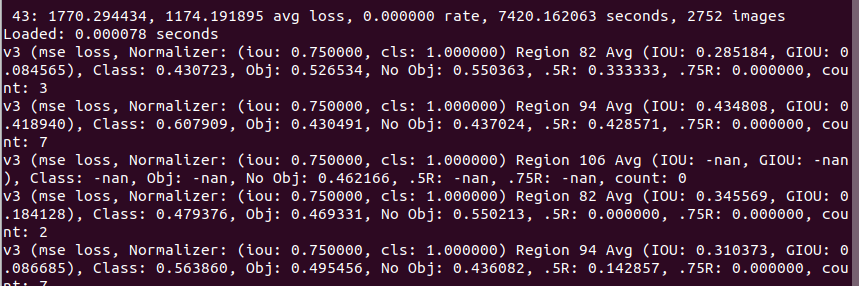

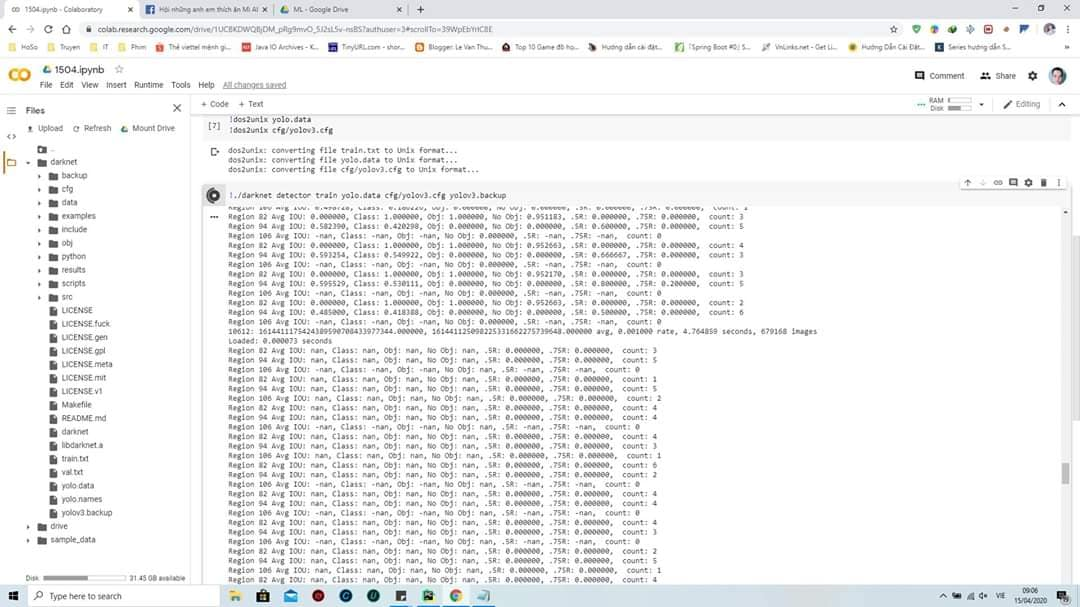

For me, I have a 5 class object detection problem. In the .cfg file, I have changed the number of classes and the number of filters to 3*(num_classes+5) = 30 in 3 different places. I can initiate the training but the loss blows up to start with and I am seeing a bunch of nans in the output massage (see snippet)

Here are my questions:

- Did you need to change the anchor box sizes and/or the number of anchors?

- Did you need to create the labels differently than for YOLO v2?

Thanks!

All 218 comments

No you don't need to change your training set.

You need to calculate your anchors as previously on yolo2 but multiply by 32 (and round).

Then split the anchors among the layers. If you have 9 anchors you can split them 3 ways, but decide based on size. Each anchor should have 5+number of objects filters.

I got ok results with the default anchors but you could recompute. Remember your anchor calc should be the same scale as the input size for the network.

@sonalambwani Just wait about 1000 iterations, and nan will disappear: https://github.com/AlexeyAB/darknet/issues/504#issuecomment-377290060

- You can re-calculate anchors, but it is not necessary. You can calculate anchors for Yolo v3 using this fork: https://github.com/AlexeyAB/darknet

and this command if in your cfg-filewidth=416andheight=416:

darknet.exe detector calc_anchors data/voc.data -num_of_clusters 9 -width 416 -heigh 416

This anchors you can use in your cfg-file (without multiplication by 32)

- You can use the same labels as for Yolo v2

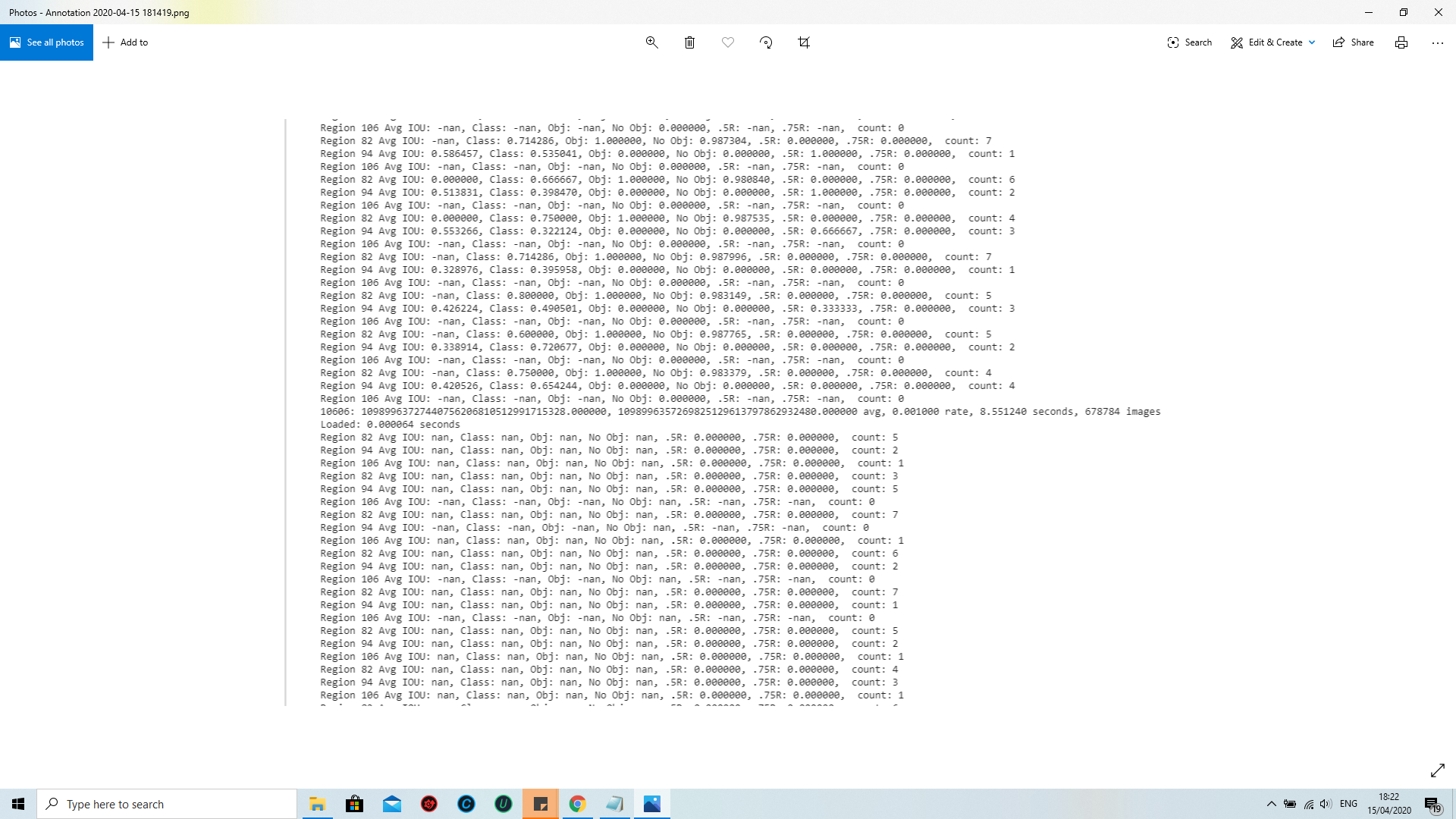

@AlexeyAB ,hello,but after waiting about 1000 iterations, and nan still appear:

Hi,

I am trying to do Training YOLO on VOC.

below command i ma using , ./darknet detector train cfg/voc.data cfg/yolov3-voc.cfg darknet53.conv.74

But nans keep on increasing. Is it normal or some issue.

Loaded: 0.000063 seconds

Region 82 Avg IOU: nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 1

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

3296: -nan, nan avg, 0.001000 rate, 0.416401 seconds, 3296 images

I have a same issue. The error is shown in second yolo layer. Did you solve this problem?

same tooooo

@ss199302

If there are only some nan then training goes well, but if there are all nan then training goes wrong.

@AlexeyAB

As you suggested, I am now training with my new dataset with the default COCO anchor boxes. I am training from "Scratch", i.e., no initialization with the pretrained convolutional weights as you have done in https://github.com/AlexeyAB/darknet/issues/504#issuecomment-377290060

For me, I see nans even after 2500 iterations. The loss (after starting off really high) has dropped within a reasonable range, but there is more of a fluctuation between the loss for each mini-batch.

Have you, or anyone else here, noticed similar behavior?

For me, I see nans even after 2500 iterations.

All lines have

nanvalues or only some lines?How many classes and images in your dataset? And what tool did you use for labeling?

What batch and subdivision do you use?

Do you use

random=1?Do you train using multi-GPU?

It's just a few lines with nans.

Used an in-house tool for labeling.

batch=16, subdivisions = 16

Not sure about random=1. Where do I check/set that??

It's a single GPU.

@AlexeyAB

"How many classes and images in your dataset? And what tool did you use for labeling?"

5 classes, ~17k images in the training set.

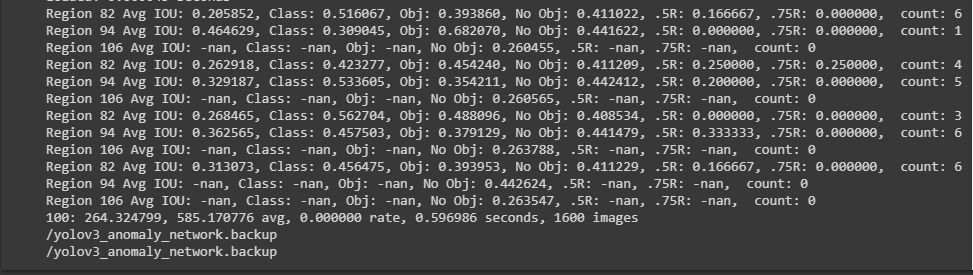

@sonalambwani Looks like normal output of training.

You have batch and subdivision 16. That means one image per iteration and depending on the density of objects in your images, it's possible that no object will be found in a given layer which will lead to nan. Also depends if the ground truths are similar to the anchors. If they are all very small for all very large then you may not detect them in the very large or very small layers.

So I agree with alexeyAB that this looks normal. Can you reduce the subdivisions so more images per mini batch.

I have a same issue

batch=64, subdivisions = 8

I have followed this instruction

I really didn't understand if I should change anchors in yolo-obj.cfg when i have own dataset.

@ndg123

Thank you for your suggestions. I am now testing with batch = 64 and subdiv=16. Right off the bat, I see fewer nans. There are a few, but it's looking better.

per my training on customer dataset, If not all of them are nans, it is fine. since 3 different scales, that means in some scale, no object is detected. you may try different input image size, or divide into 2, or 4 different scales instead of 3? then the number of nans should change.

@AlexeyAB thanks your reply,but i can't test anything

@AlexeyAB darknet.exe detector calc_anchors data/voc.data -num_of_clusters 9 -width 416 -heigh 416,this command how to write in ubuntu darknet?

@ss199302 ./darknet detector calc_anchors data/voc.data -num_of_clusters 9 -width 416 -heigh 416

I am trying to run calc_anchors in linux using what @TheMikeyR says and it returns to command line immediately and gives no output. Is it supposed to print the anchors to std out? I'm new to C. Where can I find the code this command runs?

Also, I'm training on my own data, and the bounding boxes in my training data are all the exact same size, and they are all squares. Do I still need to specifiy more than one anchor?

Is it possible to detect the Signature (or any handwritten area) in printed receipts using YOLO ? which would be the best cfg file for the same, and any suggestions before I start ?

@brieh try Alexey repo https://github.com/AlexeyAB/darknet

Here is the code https://github.com/AlexeyAB/darknet/blob/master/src/detector.c#L839

@TheMikeyR Thanks. I was using the pjreddie fork.

@AlexeyAB hello! I use this command ./darknet detector calc_anchors data/voc.data -num_of_clusters 9 -width 416 -heigh 416 to get anchors,but it don't return anything.

@ss199302 same for me. Have u found the solution?

@ss199302 @spenceryue97 did you create the labels (*.txt) files first?

@ss199302 @spenceryue97 and you're definitely using AlexeyAB's fork?

I never ended up getting it working. I didn't want to switch to AlexeyAB's fork because we've modified our fork of pjreddie's fork. I tried copy/pasting the code that does the clustering from AlexeyAB's detector.c to the one I have and remaking, but still gave no output.

@sonalambwani Yes

@brieh I'm using pjreddie's repo

@spenceryue97 @brieh you can just get AlexeyAB's fork, run the calc_anchors and then take the numbers to your cfg in pjreddie's repo.

@AlexeyAB Can you tell me when i use recall ,why my IOU appear nan,and recall and precision is 0.5% ,thanks!

@UgolUgol Have you compare the result between default anchors and the anchors calculated with the command from https://github.com/AlexeyAB/darknet?

@AlexeyAB I am training my own objects, and am weirdly getting valudes for all Region 106 results and -nan for everything else:

```Region 106 Avg IOU: 0.103513, Class: 0.206469, Obj: 0.001993, No Obj: 0.000744, .5R: 0.000000, .75R: 0.000000, count: 4

Region 82 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.001404, .5R: -nan, .75R: -nan, count: 0

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.000535, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: 0.183575, Class: 0.167765, Obj: 0.002698, No Obj: 0.000716, .5R: 0.000000, .75R: 0.000000, count: 1

Region 82 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.001364, .5R: -nan, .75R: -nan, count: 0

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.000517, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: 0.112761, Class: 0.219895, Obj: 0.001320, No Obj: 0.000692, .5R: 0.000000, .75R: 0.000000, count: 4

Region 82 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.001196, .5R: -nan, .75R: -nan, count: 0

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.000538, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: 0.518243, Class: 0.616705, Obj: 0.000801, No Obj: 0.000739, .5R: 1.000000, .75R: 0.000000, count: 1

Region 82 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.001336, .5R: -nan, .75R: -nan, count: 0

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.000534, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: 0.067241, Class: 0.113757, Obj: 0.002734, No Obj: 0.000756, .5R: 0.000000, .75R: 0.000000, count: 7

Region 82 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.001470, .5R: -nan, .75R: -nan, count: 0

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.000540, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: 0.064037, Class: 0.159617, Obj: 0.005763, No Obj: 0.000764, .5R: 0.000000, .75R: 0.000000, count: 5

Region 82 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.001454, .5R: -nan, .75R: -nan, count: 0

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.000550, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: 0.092829, Class: 0.161946, Obj: 0.004937, No Obj: 0.000723, .5R: 0.000000, .75R: 0.000000, count: 8

813: 6.122332, 6.256896 avg, 0.000437 rate, 8.432507 seconds, 26016 images

```

It's the consistency that's worrying me. I've got 16 classes with around 4500 images. The one particularly odd thing about my setup is that I've set the height and width for every identified object to 0.01 (e.g. 2 0.808552 0.933797 0.01 0.01), as I only care about the position, not the bounds of the object. Hopefully that's not messing things up?

@jfries289

every identified object to 0.01 so you will get nan for Region 82 and 94 always, but it isn't a problem. Training goes well.

But for slightly better accuracy, even if you need only position, it's better to set the real width and height of the objects, so Yolo will know which of 3 [yolo] layers (with higher receptive filed, or with higher resolution without subdiscretization) should be used to detect this object.

@AlexeyAB Thanks for the reply. It's clear I need to improve my understanding of the regions, etc.

However, I'm still not sure my training is going successfully:

2612: 3.408263, 1.774134 avg, 0.001000 rate, 12.983424 seconds, 83584 images

My avg seems to be oscillating between 1.2 and 1.7. At this stage, I would have expected my avg to be lower. Is this the system temporarily stuck in a local minimum, or has something possibly gone wrong?

@jfries289

My avg seems to be oscillating between 1.2 and 1.7. At this stage, I would have expected my avg to be lower. Is this the system temporarily stuck in a local minimum, or has something possibly gone wrong?

I think this is because Yolo can't select the optimal [yolo]-layer (1 of 3), so the last [yolo]-layer predicts objects with the big error and it increases loss, also the difference between size that predicted by Yolo during training and size that you set is very large. Also may be something wrong else. I think you will able to detect objects, but with low accuracy.

I recommend you to set real sizes for object using Yolo_mark, then recalculate anchors and then start training from the begining.

In the Yolo v3, the labels with correct sizes of objects help to choose the optimal [yolo]-layer, i.e. help to train with higher accuracy.

@AlexeyAB

In the Yolo v3, the labels with correct sizes of objects help to choose the optimal [yolo]-layer, i.e. help to train with higher accuracy.

If full-sized labels are not an option, would it be better for me to use Yolo v2? Or would I have the same issue there?

@jfries289

What is the range of the real sizes of objects in your dataset?

@AlexeyAB

I would guess anywhere from 0.1 to 0.8.

@jfries289

In the Yolo v3, the labels with correct sizes of objects help to choose the optimal [yolo]-layer, i.e. help to train with higher accuracy.

If full-sized labels are not an option, would it be better for me to use Yolo v2? Or would I have the same issue there?

- If real sizes of objects are big - then probably will be better to use Yolo v2.

- If real sizes of objects can be big and small - then you should re-label your objects and train with Yolo v3.

But I have never tested training using such dataset as your with the constant values of width and height.

I have a dataset of 21k face images. I already checked the labelled data using yolo_mark. I am using yolov3 with batch 64 and subdivisions 16

I am getting nan every where shall I wait for 1000 iterations?

here is the output:

73: -nan, -nan avg loss, 0.000000 rate, 649.007621 seconds, 4672 images

Loaded: 0.000000 seconds

Region 82 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 4

Region 94 Avg IOU: -nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 1

Region 106 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 1

Region 82 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 2

Region 94 Avg IOU: -nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 4

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: -nan, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 3

Region 94 Avg IOU: -nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 1

Region 106 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 4

Region 82 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 4

Region 94 Avg IOU: -nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 2

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: -nan, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 3

Region 94 Avg IOU: -nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R:0.000000, count: 3

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: -nan, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 3

Region 94 Avg IOU: -nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 3

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: -nan, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: -nan, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 94 Avg IOU: -nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 6

Region 106 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 2

Region 82 Avg IOU: -nan, Class: nan, Obj: -nan, No Obj: -nan, .5R: 0.000000, .75R: 0.000000, count: 5

Region 94 Avg IOU: -nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 1

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: -nan, .5R: -nan(ind), .75R: -nan(ind), count: 0

@AlexeyAB As you mentioned earlier in Only if nan occurs for avg loss for several dozen consecutive iterations, then training went wrong. Otherwise, the training goes well., can you please give some solutions on how to correct the training process if we are getting all nans? My training loss goes on increasing and after some steps, all values become -nan.

I encounter this phenomenon on my 3 classes dataset, but after training, it goes well.

I think it is from the scale mismatch of different output layer.

When no object found in the given layer it gives nan. IOU is basically Area of intersection / Area of Union.

I think that looks normal.

@AlexeyAB how many images do you think I should get if I want to add a new class to the COCO dataset?

Hello @MizbaMohammed ,

Are you already aware of the default recommendation? I guess this is not very specific for COCO, but in general:

https://github.com/AlexeyAB/darknet#how-to-improve-object-detection

desirable that your training dataset include images with objects at diffrent: scales, rotations, lightings, from different sides, on different backgrounds - you should preferably have 2000 different images for each class or more, and you should train 2000*classes iterations or more

I got confused when set up the anchor boxes: how should I arrange the sequence of the clustered anchor boxes? You know, they're not distributed well as we wish...... I might get [1, 1, 2, 2, 5, 6, 30, 32, 42] instead of [1,2,3,4,5,6,7,8,9], and I hesitated to just put them evenly at 3 scales in yolov3. And experiments of myself have just proved that the arrangement of anchor boxes just matters.

And the output Region 82 and Region 94, and Region 106 is another confusion: what do they mean?

Region 82 Avg IOU: 0.790874, Class: 0.993619, Obj: 0.970194, No Obj: 0.002241, .5R: 1.000000, .75R: 0.666667, count: 3

Region 94 Avg IOU: 0.665403, Class: 0.775035, Obj: 0.567849, No Obj: 0.000524, .5R: 0.800000, .75R: 0.200000, count: 5

Region 106 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.000002, .5R: -nan, .75R: -nan, count: 0

How can it know how many objects the batch has at each layer? And if it decides each object has a layer

to belong to, then would the distribution of anchor boxes be a big problem?

Could anyone help with this? Thanks a lot.

I have successfully trained 1-object detection YOLO-2 model, but still doesn't understand- what role anchors in cfg file plays? I has changed them, but didn't see any effect.

1) What is the meaning of anchors?

2) @AlexeyAB: Alexey, what do you mean _"real sizes of objects"_?

3) If anybody has some program choosing one best weight from the trained set of weights, based on test annotated images?

4) What is the advantage of YOLO-3 over YOLO-2 ?

Hi@AlexeyAB , yolo v3-spp sounds good, is there any tutorial on how to train it? thank you

When I am trying to calculate the anchors k-means++ can't be used without OpenCV, because there is used cvKMeans2 implementation , this error is coming. How to resolve this??

Hi @AlexeyAB I have some questions. One is how to set the iteration times in the cfg file. Is it the "steps"?

I want to set the weights file autosave rate fix at every 100 iterations as the weights-file is saved only once every 10 000 iterations if(iterations > 1000). Is the parameter the "burn in"?

@wentianl20 You should change in file detector.c in function train_detector(...):

the block with save_weights(net, buff) should be under condition if(i%100==0).

@wentianl20 steps is for learning rate policy, not for model caching frequency

@AlexeyAB Sir when i install the alexyab/darknet then after make in the directory build/darknet/x64 darknet.exe not occurs.please guide me or send the procedure.thanks

@arsal181

on Windows if you compile by using MSVS -

build/darknet/x64/darknet.exewill occuron Linux if you compile by using make -

darknetwill occur in the current directory (near with Makefile)

can i use this darknet in build/darknet/x64 directory?

On Sun, Oct 14, 2018, 4:14 PM Alexey notifications@github.com wrote:

@arsal181 https://github.com/arsal181

-

on Windows if you compile by using MSVS - build/darknet/x64

/darknet.exe will occur

-on Linux if you compile by using make - darknet will occur in the

current directory (near with Makefile)—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

https://github.com/pjreddie/darknet/issues/597#issuecomment-429617819,

or mute the thread

https://github.com/notifications/unsubscribe-auth/AqFw_bV9pqzP-wJijyHOZ9pRSS8PbGCgks5ukxyEgaJpZM4TA4ER

.

@arsal181

Just compy ./darknet to the ./build/darknet/x64/darknet

sir when i run this error permission denied shows.

On Sun, Oct 14, 2018, 4:45 PM Alexey notifications@github.com wrote:

@arsal181 https://github.com/arsal181

Just compy ./darknet to the ./build/darknet/x64/darknet—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

https://github.com/pjreddie/darknet/issues/597#issuecomment-429619685,

or mute the thread

https://github.com/notifications/unsubscribe-auth/AqFw_TQTFP8ntZhDdj5lbD6RSZfKrZFrks5ukyPwgaJpZM4TA4ER

.

I ran this command ./darknet detector train cfg/voc.data cfg/yolov3-voc.cfg darknet53.conv.74

Are these many iterations normal? I saw the posts about nan.

Loaded: 0.000025 seconds

Region 82 Avg IOU: nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 2

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

14799: -nan, -nan avg, 0.001000 rate, 0.086326 seconds, 14799 images

Loaded: 0.000039 seconds

Region 82 Avg IOU: nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 1

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

14800: -nan, -nan avg, 0.001000 rate, 0.085953 seconds, 14800 images

Saving weights to darknet/backup/yolov3-voc.backup

I ran this command ./darknet detector train cfg/voc.data cfg/yolov3-voc.cfg darknet53.conv.74

Are these many iterations normal? I saw the posts about nan.

Loaded: 0.000025 seconds

Region 82 Avg IOU: nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 2

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

14799: -nan, -nan avg, 0.001000 rate, 0.086326 seconds, 14799 images

Loaded: 0.000039 seconds

Region 82 Avg IOU: nan, Class: nan, Obj: nan, No Obj: nan, .5R: 0.000000, .75R: 0.000000, count: 1

Region 94 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

Region 106 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: nan, .5R: -nan, .75R: -nan, count: 0

14800: -nan, -nan avg, 0.001000 rate, 0.085953 seconds, 14800 images

Saving weights to darknet/backup/yolov3-voc.backup

That looks like bad data to me. nans pop up, but they shouldn't be your only result.

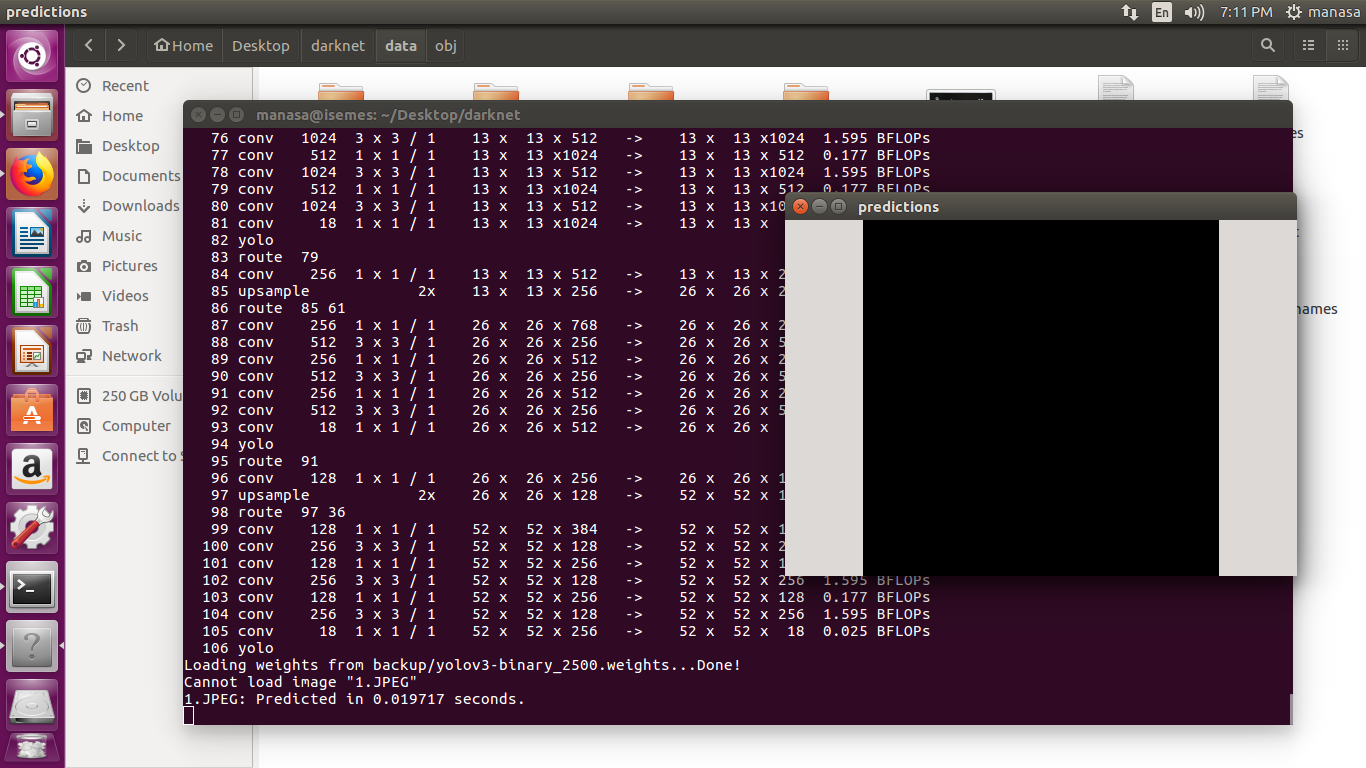

@AlexeyAB Sir I have trained custom data of 1 class with 200 images for 2500 iterations.For this, I have used yolov3.cfg file.In that I have changed

width and height=416

subdivisions=8

batch=64

classes=1

filters=18

When I tried to test with an image with the weights generated, I have seen blank screen on prediction window and showing could not load image as below.

What could be the reason?

The error message says It can't open 1.JPEG. Is this file a proper jpg? What happens if you test it with the dog and bicycle picture from the sample data?

your image path is not correct ot check the extension of image like jpg or

png

On Mon, Nov 12, 2018 at 9:56 AM PeterQuinn925 notifications@github.com

wrote:

The error message says It can't open 1.JPEG. Is this file a proper jpg?

What happens if you test it with the dog and bicycle picture from the

sample data?—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

https://github.com/pjreddie/darknet/issues/597#issuecomment-437756350,

or mute the thread

https://github.com/notifications/unsubscribe-auth/AqFw_UMMxv2KwwyiH46xqgBV6xNSMjvyks5uuP-DgaJpZM4TA4ER

.

I have placed my images (.JPEG ) and .txt files in obj folder (i.e darknet->data->obj).

And all the images are in .JPEG format only.I have my yolov3.cfg ,obj.data and obj.names in cfg (i.e darknet->cfg) folder as well as in darknet.

The error message says It can't open 1.JPEG. Is this file a proper jpg? What happens if you test it with the dog and bicycle picture from the sample data?

For the dog and bicycle picture,I am able to get the image but without labels.

Thank you..The error was in the image path.But I am now seeing only the labels but not the bounding boxes in the test image.

Do you known where will cause the "nan" in the source code? I can not find out where will cause "nan" in "yolo_layer.c".

In the function _float *parse_fields(char *line, int n)_ in utils.c there are lines like if(p == c) field[count] = nan("");

Hi!!

I have some questions:

- I have my images (in jpg format) in the directory darknet/data/images and the labels (.txt) in teh directory darknet/data/labels

- When I try to train with my own dataset I get the following in the first iterations:

Region 94 Avg IOU: 0.327257, Class: 0.436315, Obj: 0.025532, No Obj: 0.005994, .5R: 0.000000, .75R: 0.000000, count: 4

Region 106 Avg IOU: 0.218748, Class: 0.485177, Obj: 0.011414, No Obj: 0.003186, .5R: 0.125000, .75R: 0.000000, count: 8

264: 45.015869, 26.430340 avg, 0.000005 rate, 0.082373 seconds, 264 images

Loaded: 0.000038 seconds

But after a while I get the following:

Region 94 Avg IOU: nan, Class: 0.000000, Obj: 0.000000, No Obj: 0.000000, .5R: 0.000000, .75R: 0.000000, count: 1

Region 106 Avg IOU: nan, Class: 0.000000, Obj: 0.000000, No Obj: 0.000000, .5R: 0.000000, .75R: 0.000000, count: 6

1139: -nan, nan avg, 0.001000 rate, 0.094441 seconds, 1139 images

Loaded: 0.000038 seconds

I try to put the label files in the same directory(darknet/data/images) that the images, but I have the same problem.

What could be the problem?

Regards!

@jessiffmm

Did you calculate the anchor boxes and put them into 3 places of .cfg file?

Hi @andyrey

I haven't calculated the anchor boxes. How Can I calculate the anchor boxes?

Regards!

@jessiffmm

if you have build darknet, launch cmd like this:

darknet3.exe detector calc_anchors data/obj.data -num_of_clusters 9 -width 416 -height 416 -showpause

and then you'll get "anchors.txt" file with 9 pairs of digits, replace (3 places in yolo- 3) in .cfg file.

Ok @andyrey

Thanks!!

I imagine that width and height depend of the images size.

@jessiffmm

All images are brought to same size (416x416) or something 32-fold.

All anchors dependent on individual dataset.

Hi!

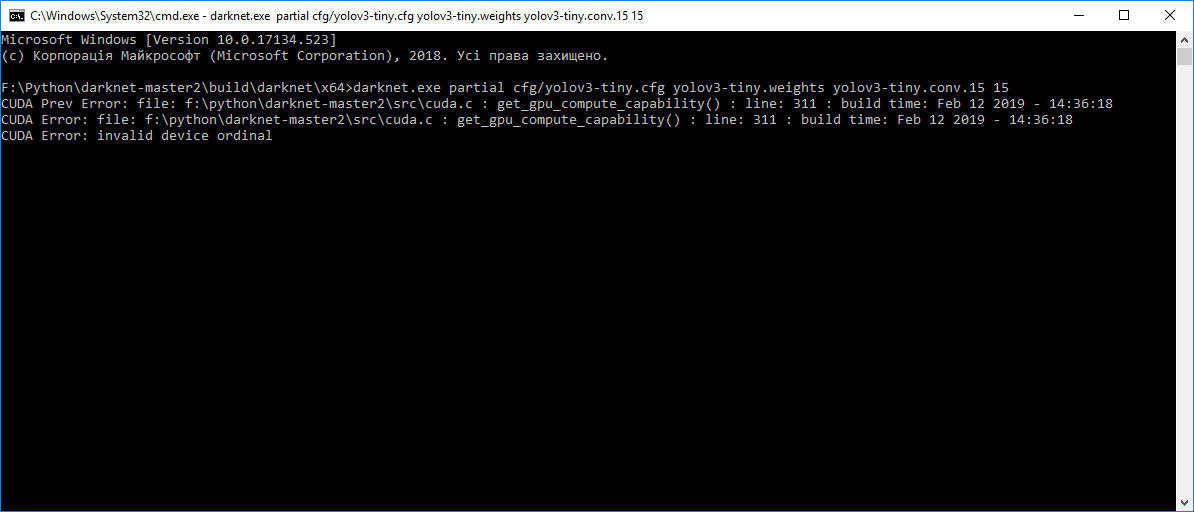

I tried to get pre-trained weights 'yolov3-tiny.conv.15' using command: 'darknet.exe partial cfg/yolov3-tiny.cfg yolov3-tiny.weights yolov3-tiny.conv.15 15', but every time I get this error:

Can you help me, please.

@Kertrop Try to update your code from GitHub.

@Kertrop Try to update your code from GitHub.

Thank you very much, it helped.

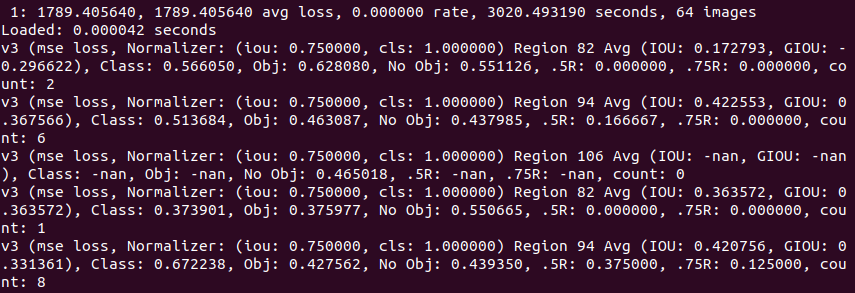

Sorry if it's inappropriate to ask here. I've been trying to train yolo3-tiny with my own dataset of 1 class and ~1000 positive samples. After 50 hours of training it tells:

Resizing

512

Loaded: 0.044990 seconds

Region 16 Avg IOU: -nan, Class: -nan, Obj: -nan, No Obj: 0.000001, .5R: -nan, .75R: -nan, count: 0

Region 23 Avg IOU: 0.737925, Class: 0.998520, Obj: 0.991458, No Obj: 0.000621, .5R: 1.000000, .75R: 0.000000, count: 1

54261: 0.292673, 0.218497 avg, 0.001000 rate, 4.347198 seconds, 54261 images

Loaded: 0.000091 seconds

I'm running it on i5 cpu in docker on windows host :) Is it possible to tell that training will complete on that system in any near future (this week). Or I should stop that misery and run on a "normal" system?

@dubtar

54261: 0.292673, 0.218497 avg, 0.001000 rate, 4.347198 seconds, 54261 images

Do you train with using yolov3-tiny.weights pretrained weights-file, or how did you train 54K iterations on CPU? )

There is enough low loss. So you can try to stop training, check wether it can detect anything, and if can't, then you should run it on a normal system with nVidia GPU )

@AlexeyAB Thanks, man! I was following article on Medium.com, so used darknet53.conv.74.

@AlexeyAB

hi,i have some question.

What reason is this?

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.870595, Class: 0.994771, Obj: 0.700115, No Obj: 0.006700, .5R: 1.000000, .75R: 1.000000, count: 5

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.678149, Class: 0.996602, Obj: 0.088626, No Obj: 0.002252, .5R: 1.000000, .75R: 0.500000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.884214, Class: 0.999505, Obj: 0.904762, No Obj: 0.009010, .5R: 1.000000, .75R: 1.000000, count: 7

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.784557, Class: 0.996936, Obj: 0.690478, No Obj: 0.002225, .5R: 1.000000, .75R: 0.500000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.818402, Class: 0.993600, Obj: 0.891443, No Obj: 0.003130, .5R: 1.000000, .75R: 0.750000, count: 4

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

@AlexeyAB

Hello, I'm trying to crawl baidu food pictures in the atlas of the training, but found that iou is not high, class objective parameters, bouncing obj parameters is very big, loss has been maintained at 0.3-0.5, this is what causes? Is the picture background is too complicated or I caused by the number of each type is not the same as picture?

@yxwLearn Hi,

hi,i have some question.

What reason is this?Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

This is normal.

Look only at avg loss: https://github.com/AlexeyAB/darknet#how-to-train-to-detect-your-custom-objects

Note: If during training you see nan values for avg (loss) field - then training goes wrong, but if nan is in some other lines - then training goes well.

and look at mAP if you train with flag -map https://github.com/AlexeyAB/darknet#when-should-i-stop-training

Hello, I'm trying to crawl baidu food pictures in the atlas of the training, but found that iou is not high, class objective parameters, bouncing obj parameters is very big, loss has been maintained at 0.3-0.5, this is what causes?

- Can you rename you cfg file to txt file and attach it?

- How many classes and images do you have?

- Show point cloud that you can get by using command

./darknet detector calc_anchors data/obj.data -num_of_clusters 9 -width 608 -height 608 -show

using this repo: https://github.com/AlexeyAB/darknet

Is the picture background is too complicated or I caused by the number of each type is not the same as picture?

What do you mean?

@AlexeyAB

sorry,something went wrong when I upload my cfg file,so I paste it.

and i have 11 classes and 5853 images,but number of pictures of each classes is different, some more or less.

I run this training on Windows .

I mean my picture quality is not very good, this is the cause of loss at 0.3 to 0.5?

`[net]

Testing

batch=1

subdivisions=1

Training

batch=64

subdivisions=32

width=416

height=416

channels=3

momentum=0.9

decay=0.0005

angle=0

saturation = 1.5

exposure = 1.5

hue=.1

learning_rate=0.001

burn_in=1000

max_batches = 500200

policy=steps

steps=400000,450000

scales=.1,.1

[convolutional]

batch_normalize=1

filters=32

size=3

stride=1

pad=1

activation=leaky

Downsample

[convolutional]

batch_normalize=1

filters=64

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=32

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=64

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

Downsample

[convolutional]

batch_normalize=1

filters=128

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=64

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=64

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

Downsample

[convolutional]

batch_normalize=1

filters=256

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

Downsample

[convolutional]

batch_normalize=1

filters=512

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

Downsample

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=2

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

[shortcut]

from=-3

activation=linear

#

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=1024

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=1024

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=1024

activation=leaky

[convolutional]

size=1

stride=1

pad=1

filters=48

activation=linear

[yolo]

mask = 6,7,8

anchors = 2.67,3.02, 3.26,4.61, 4.44,5.91, 4.76,8.50, 5.27,3.82, 6.97,7.43, 8.24,10.17, 8.97,5.41, 10.51,8.19, 11.25,11.33

classes=11

num=9

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1

[route]

layers = -4

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[upsample]

stride=2

[route]

layers = -1, 61

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=512

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=512

activation=leaky

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=512

activation=leaky

[convolutional]

size=1

stride=1

pad=1

filters=48

activation=linear

[yolo]

mask = 3,4,5

anchors = 2.67,3.02, 3.26,4.61, 4.44,5.91, 4.76,8.50, 5.27,3.82, 6.97,7.43, 8.24,10.17, 8.97,5.41, 10.51,8.19, 11.25,11.33

classes=11

num=9

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1

[route]

layers = -4

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[upsample]

stride=2

[route]

layers = -1, 36

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=256

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=256

activation=leaky

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

size=3

stride=1

pad=1

filters=256

activation=leaky

[convolutional]

size=1

stride=1

pad=1

filters=48

activation=linear

[yolo]

mask = 0,1,2

anchors = 2.67,3.02, 3.26,4.61, 4.44,5.91, 4.76,8.50, 5.27,3.82, 6.97,7.43, 8.24,10.17, 8.97,5.41, 10.51,8.19, 11.25,11.33

classes=11

num=9

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1

`

@AlexeyAB

oh,I trained for 14300 iteration,that the console parameter is very considerable,but when testing a classes, the effect is not very well。sorry about that I can't upload picture,so I paste it。

Region 82 Avg IOU: 0.872828, Class: 0.998161, Obj: 0.791894, No Obj: 0.002921, .5R: 1.000000, .75R: 1.000000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.793445, Class: 0.999805, Obj: 0.914844, No Obj: 0.002492, .5R: 1.000000, .75R: 0.500000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.878821, Class: 0.999332, Obj: 0.741771, No Obj: 0.009482, .5R: 1.000000, .75R: 1.000000, count: 9

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.864686, Class: 0.998293, Obj: 0.986454, No Obj: 0.002985, .5R: 1.000000, .75R: 1.000000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.712289, Class: 0.998681, Obj: 0.890383, No Obj: 0.003450, .5R: 1.000000, .75R: 0.000000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.960917, Class: 0.999933, Obj: 0.758110, No Obj: 0.004128, .5R: 1.000000, .75R: 1.000000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

14548: 0.230654, 0.198709 avg loss, 0.001000 rate, 7.220494 seconds, 931072 images

Loaded: 0.000000 seconds

Region 82 Avg IOU: 0.904501, Class: 0.999886, Obj: 0.683604, No Obj: 0.003879, .5R: 1.000000, .75R: 1.000000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.836755, Class: 0.999735, Obj: 0.885447, No Obj: 0.002573, .5R: 1.000000, .75R: 1.000000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.915653, Class: 0.998804, Obj: 0.946341, No Obj: 0.003264, .5R: 1.000000, .75R: 1.000000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.883266, Class: 0.999722, Obj: 0.985086, No Obj: 0.006194, .5R: 1.000000, .75R: 0.750000, count: 4

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000001, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 82 Avg IOU: 0.951540, Class: 0.998787, Obj: 0.858221, No Obj: 0.003164, .5R: 1.000000, .75R: 1.000000, count: 2

Region 94 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

Region 106 Avg IOU: -nan(ind), Class: -nan(ind), Obj: -nan(ind), No Obj: 0.000000, .5R: -nan(ind), .75R: -nan(ind), count: 0

@yxwLearn

Your objects are very small. So train with width=832 height=832 in cfg-file.

@AlexeyAB

Thank you!I will try it,and I calculate anchors by gen_anchors.py,This is necessary?

@yxwLearn No. Use default anchors.

@AlexeyAB

thank you very much!

@AlexeyAB

what‘s wrong?

1>nvcc fatal : Unsupported gpu architecture 'compute_75'

1>nvcc fatal : Unsupported gpu architecture 'compute_75

@yxwLearn

- Either install CUDA 10.0

- or change 75 to 60

open darknet.sln -> (right click on project) -> properties -> CUDA C/C++ -> Device and change here;compute_75,sm_75to;compute_60,sm_60

@AlexeyAB

sorry,I'm too careless。

@AlexeyAB

My computer video card is 1070.

but when i change the cfg-file width=832 height=832 and i also set subvisions=64,it always out of memory,I should set the random = 0?

@jfries289

sorry?

when it always out of memory,Should not increase the value of subvisions?

@AlexeyAB

I train with cfg-file width=832 height=832 and sunvisions=64,random=1,but the training very slow.

My computer video card is 1070.

Greater subdivisions, the slower training. Less subdivisions= faster training, but may be memory exceeding. Try to find trade-off.

@yxwLearn

Use width=416 height=416 random=1 batch=64 subdivisions=64

or

width=832 height=832 random=0 batch=64 subdivisions=64

Can you help me, please

'darknet.exe' n’est pas reconnu en tant que commande interne

ou externe, un programme exécutable ou un fichier de commandes.

Hi @sarratouil

Are you using ubuntu or windows?

If you are using ubuntu you have to execute with ./darknet

@AlexeyAB

Should I modify the parameter of the file makefile?

@AlexeyAB

hi,excuse me

The mAP% in the process of training is very high, but the obj has a low value?

why?

@AlexeyAB

And the classes is 1,the subvisions is 32,random=1, the value of the loss is 0.4-0.5, the iteration is 1300 steps

@AlexeyAB

Hi, I had been training 1 class with yolov3-tiny with initially 50-60 images, it is a small object. Even after 20,000 iterations it is still only giving me a average loss of 0.5-0.7 and it stopped decreasing. Is there a way to drop the detection rate? I need about 0.06 average loss. I am training with Mac with no gpu because that's the best device I could find.

Would height and width matter? What do you suggest?

My sample label text data:

0 0.246094 0.644444 0.088021 0.020370 and 0 0.819792 0.748611 0.241667 0.063889

My image dimension is 1920x1080 with resolution 72dpiDoes anchor needs to be changed?

- Is the learning rate of 0.001 okay?

Thank you so much for the help.

My tiny-yolov3

[net]

Testing

batch=1

subdivisions=1

Training

batch=64

subdivisions=2

width=416

height=416

channels=3

momentum=0.9

decay=0.0005

angle=0

saturation = 1.5

exposure = 1.5

hue=.1

learning_rate=0.001

burn_in=1000

max_batches = 500200

policy=steps

steps=400000,450000

scales=.1,.1

[convolutional]

batch_normalize=1

filters=16

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=32

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=64

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=128

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=1

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

#

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[convolutional]

size=1

stride=1

pad=1

filters=18

activation=linear

[yolo]

mask = 3,4,5

anchors = 10,14, 23,27, 37,58, 81,82, 135,169, 344,319

classes=1

num=6

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1

[route]

layers = -4

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[upsample]

stride=2

[route]

layers = -1, 8

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[convolutional]

size=1

stride=1

pad=1

filters=18

activation=linear

[yolo]

mask = 0,1,2

anchors = 10,14, 23,27, 37,58, 81,82, 135,169, 344,319

classes=1

num=6

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1

@elainewu1

Try to train with width=832 height=832 batch=64 subdivisions=8 and with default anchors

Even after 20,000 iterations it is still only giving me a average loss of 0.5-0.7 and it stopped decreasing. Is there a way to drop the detection rate? I need about 0.06 average loss. I am training with Mac with no gpu because that's the best device I could find.

It can be a normal avg loss, what mAP can you get?: https://github.com/AlexeyAB/darknet#when-should-i-stop-training

When you see that average loss 0.xxxxxx avg no longer decreases at many iterations then you should stop training. The final avgerage loss can be from 0.05 (for a small model and easy dataset) to 3.0 (for a big model and a difficult dataset).

@jessiffmm i use a windows thank you it works but i have another problem

@AlexeyAB

Hello AlexeyAB, I tried the suggestion of changing the height and width and the detection time is improved only by a second after 20,000 iterations, still needs 9 seconds to detect. What else could I do? The training set is about 60 images for 1 class and 60% of the images used for test and 40% for train.

While training (with gpu & CUDA) i am getting following error ..

can anyone please help me ?

CUDA status Error: file: ....srccuda.c : cuda_set_device() : line: 22 : build time: Mar 10 2019 - 15:38:38

CUDA Error: unknown error

Windows 10

Cuda 10.0

CUDN 7.4

@AlexeyAB you have any idea which weight of yolo that modified GoogLeNet architecture

an fast version of YOLO,

based on a neural network with fewer convolutional layers (9

instead of 24) and fewer filters in those layers.

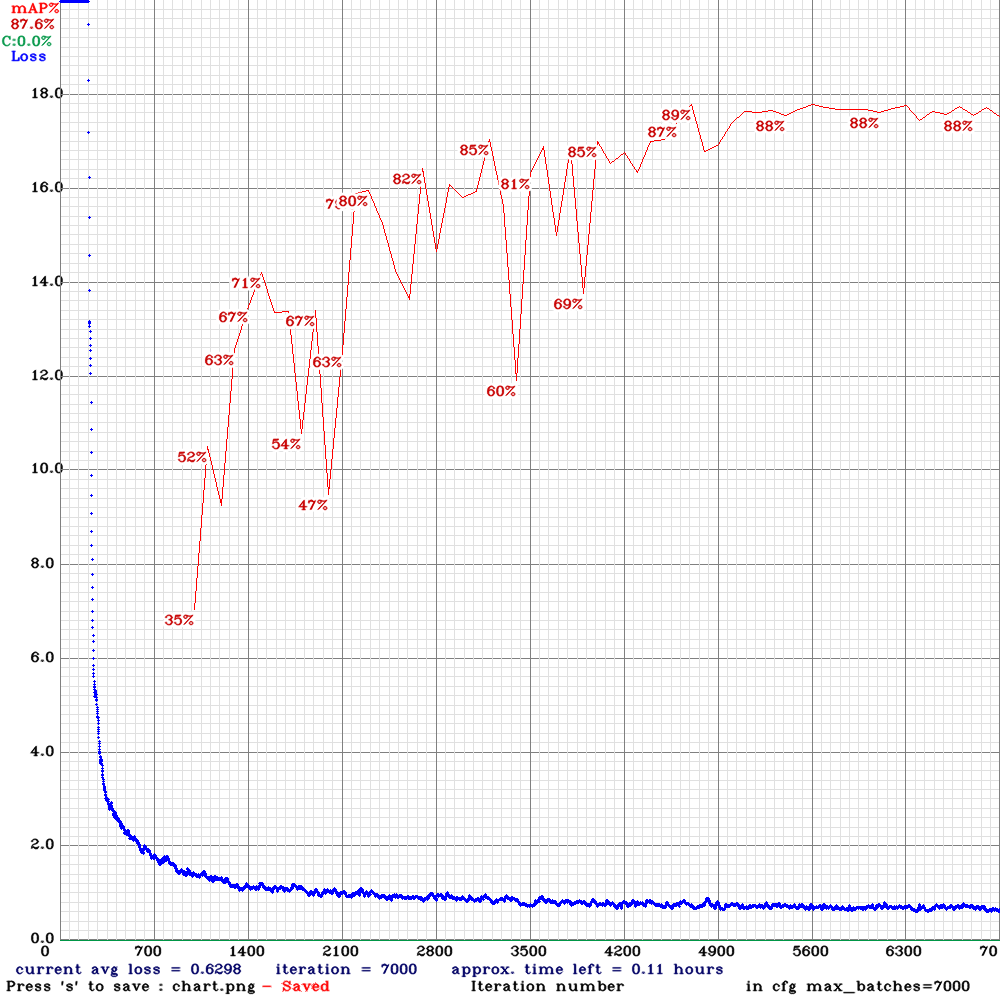

@AlexeyAB I train yolov3 to my own object such as 'iris' with 2638 images: 1500 for training and the other for the test

But I have a problem in the result

@sarratouil There is no problem.

@AlexeyAB precision=1 it's correct?

my file config

[net]

Testing

batch=64

subdivisions=64

Training

batch=64

subdivisions=64

width=416

height=416

channels=3

momentum=0.9

decay=0.0005

angle=0

saturation = 1.5

exposure = 1.5

hue=.1

learning_rate=0.001

burn_in=1000

max_batches = 1000

policy=steps

steps=400000,450000

scales=.1,.1

[convolutional]

batch_normalize=1

filters=16

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=32

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=64

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=128

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=2

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[maxpool]

size=2

stride=1

[convolutional]

batch_normalize=1

filters=1024

size=3

stride=1

pad=1

activation=leaky

#

[convolutional]

batch_normalize=1

filters=256

size=1

stride=1

pad=1

activation=leaky

[convolutional]

batch_normalize=1

filters=512

size=3

stride=1

pad=1

activation=leaky

[convolutional]

size=1

stride=1

pad=1

filters=18

activation=linear

[yolo]

mask = 3,4,5

anchors = 10,14, 23,27, 37,58, 81,82, 135,169, 344,319

classes=1

num=6

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1

[route]

layers = -4

[convolutional]

batch_normalize=1

filters=128

size=1

stride=1

pad=1

activation=leaky

[upsample]

stride=2

[route]

layers = -1, 8

[convolutional]

batch_normalize=1

filters=256

size=3

stride=1

pad=1

activation=leaky

[convolutional]

size=1

stride=1

pad=1

filters=18

activation=linear

[yolo]

mask = 0,1,2

anchors = 10,14, 23,27, 37,58, 81,82, 135,169, 344,319

classes=1

num=6

jitter=.3

ignore_thresh = .7

truth_thresh = 1

random=1

@sarratouil how did u run darknet on windows ?

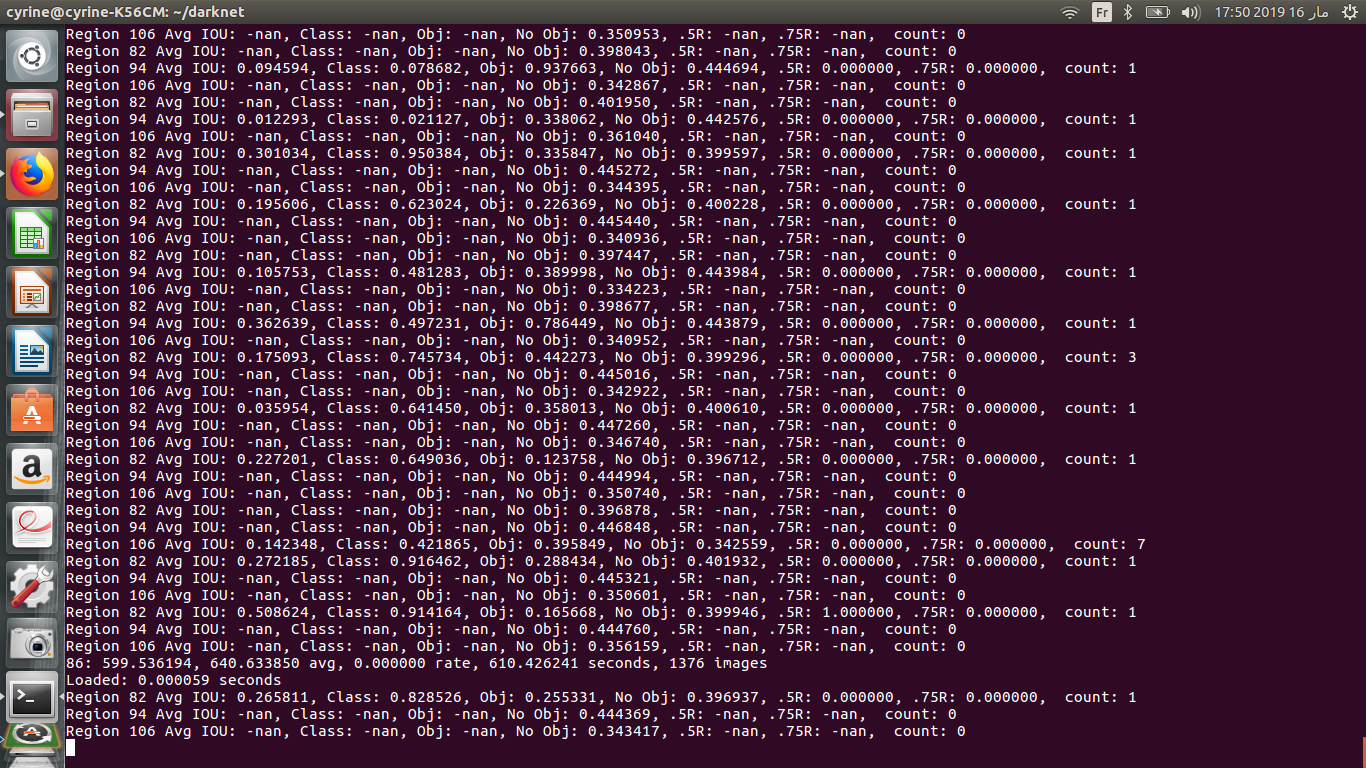

@AlexeyAB should i use different anchors for my own dataset , or i keep the default anchors ?

it my training going well ? i have too much NaN

@cyrineee you can run it with execute darknet.sln of draknet folder on visual studio

this link help you : https://github.com/AlexeyAB/darknet

@AlexeyAB Sir, I trained and detected the Thai license plates along with digits using yolov3. I can see the detected output in the terminal when I give test image as shown in the image.

Now, I want to print the detected characters on the terminal as

license plate: 11390

So to get this, I need help regarding where can I change the code and what to add to extract the detected digits and print them.

Thanks in advance

Manasa.

Dear friends, let us ask here questions specific to the topic- YOLO-3. Please, do not ask here questions referred to common items of programming on C/C++, like console output or how to use printer, just take one of many books on programming or use extensive Internet resources .

@ManasaNadimpalli Hi,

If you use this repository https://github.com/AlexeyAB/darknet and if you use Detection on Image (not on Video) then as you see the detection are already sorted from Left to Right: https://github.com/AlexeyAB/darknet/blob/9815012a01c13f70806381e0c5d556599117c76b/src/image.c#L333

So in the console output detections already sorted from left to right.

If you want to use Darknet as SO/DLL library by using your own C/C++/Python application, then you should sort detection by yourself in your code.

@ManasaNadimpalli Hi,

If you use this repository https://github.com/AlexeyAB/darknet and if you use Detection on Image (not on Video) then as you see the detection are already sorted from Left to Right: https://github.com/AlexeyAB/darknet/blob/9815012a01c13f70806381e0c5d556599117c76b/src/image.c#L333

So in the console output detections already sorted from left to right.

If you want to use Darknet as SO/DLL library by using your own C/C++/Python application, then you should sort detection by yourself in your code.

Thank you Sir.I am able to do it on image. Now I need to do it on video. Is there sort function for video to get the detection from left to right? If not,is it possible to add it to detect in video?

Regards

Manasa.

::Resolved this Error ::

Solution :: Need to install the nvidia drivers !!!

When i try to train the custom images in windows getting following error? Can any one please help ?

./darknet detector train data/obj.data yolo-obj.cfg darknet19_448.conv.23 CUDA status Error: file: ....srccuda.c : cuda_set_device() : line: 22 : build time: Mar 10 2019 - 21:51:45 CUDA Error: unknown error

@AlexeyAB and others who knows...

I got 2 strongly overlapped objects of different classes (one object's bounding box (BB) is inside another object's BB), it should not be in my case. Changing nms doesn't change result. I understand this as:

nms influence only on objects of same class, but not on the objects of different classes. Am I right or wrong?

@andyrey

Changing nms doesn't change result. I understand this as:

nms influence only on objects of same class, but not on the objects of different classes.

Yes.

Hello @AlexeyAB , Can I print the detected output on one side of the video frame while I am running on video?

Thanks in advance

@ndg123

Hi!, I have a question what of we have different input size for the network ?

what value should we take for height and width and anchors box ?!

@sonalambwani Just wait about 1000 iterations, and

nanwill disappear: AlexeyAB#504 (comment)1. You can re-calculate anchors, but it is not necessary. You can calculate anchors for Yolo v3 using this fork: https://github.com/AlexeyAB/darknet and this command if in your cfg-file `width=416` and `height=416`: `darknet.exe detector calc_anchors data/voc.data -num_of_clusters 9 -width 416 -heigh 416`This anchors you can use in your cfg-file (without multiplication by 32)

1. You can use the same labels as for Yolo v2

voc.data is not in data/

@AlexeyAB

you have any idea how i convert the model .h5 to .weight model darknet

@AlexeyAB I train yolov3 to my own object such as 'iris' with 2638 images: 1500 for training and the other for the test

But I have a problem in the result

hi,

Im sorry im very new to yolo. Can i know how calculate mAP performance and get this kind of output using the trained weight file.

thank you

@sumitra20

Use this repository to calculate mAP: https://github.com/AlexeyAB/darknet

How to calculate mAP on Darknet: https://github.com/AlexeyAB/darknet#when-should-i-stop-training

Read about mAP: https://medium.com/@jonathan_hui/map-mean-average-precision-for-object-detection-45c121a31173

@AlexeyAB As I am new to YOLO I do not know how the training works here. Can the YOLO training be stopped in the middle and continue from the same step where it got stopped, just like SSD and Faster R-CNN using Tensorflow-api?

@dinesh1218 -yes, you can stop and re-launch training process later, and YOLO takes the last saved weight for resuming training. I have done it many times.

@andyrey Thank you so much. will check it.

@sumitra20

Use this repository to calculate mAP: https://github.com/AlexeyAB/darknet

How to calculate mAP on Darknet: https://github.com/AlexeyAB/darknet#when-should-i-stop-training

Read about mAP: https://medium.com/@jonathan_hui/map-mean-average-precision-for-object-detection-45c121a31173

@AlexeyAB Thank you so much for the link, its very helpful. I have just started training the yolov3 model using my own data-set and my average loss from the beginning of the training until now (43rd training iteration) is 0.0000. Could that indicate any error?

@andyrey Can you please guide me to restart YOLO training from saved weights. When I tried to use the below command it starts from beginning.

./darknet detector train data/obj.data yolo-obj.cfg darknet53.conv.74

I have the following weights saved inside my backup folder - yolo-obj_1000.weights and yolo-obj_last.weights. Should I need to use these weights to restart my training, if so how can I do it?

Yes, instead _darknet53.conv.74_ you should set your last weight.

@sumitra20

Use this repository to calculate mAP: https://github.com/AlexeyAB/darknet

How to calculate mAP on Darknet: https://github.com/AlexeyAB/darknet#when-should-i-stop-training

Read about mAP: https://medium.com/@jonathan_hui/map-mean-average-precision-for-object-detection-45c121a31173

@AlexeyAB

When I use the repository you shared to calculate mAP, I find "TP + FP" is not equal to "detections_count". It confuse me a lot, and I wonder if that is wrong.

Looking forward to you reply, thanks!

I have the same issue, should I wait or something is going wrong?

@AlexeyAB @pjreddie @CThuw is there any way by which i can access the loss and average loss value during training so that the training gets automatically stops if the loss value comes under certain range?

@hghimanshu Hi, I don't have a programmatical answer but here is an idea :

Darknet saves weights as it goes so you have .weight files available even though the learning isn't done.

You can check your log file in real time with tail -f log and when you are satisfied with your loss and loss average you can simply use the latest weight file obtained.

@AlexeyAB or anyone else Could you please suggest me more about training?

Supposed that, I have 3 classes of objects [cat, dog, fish] and each class contains 450 of labelled images for training.

After finished the training with using pretrained weights-file (such as yolov3-tiny.conv.15) and, later that, I want to expand the objects to 6 classes [cat, dog, fish, bird, horse, bear] what should I do?

A. Using the previous trained weights-file (from 3 classes) and training with the image dataset of only additional 3 classes [bird, horse, bear]. The file obj.names are contained 6 classes as [cat, dog, fish, bird, horse, bear].

B. Using the previous trained weights-file (from 3 classes) and training with the image dataset contains all of 6 classes [cat, dog, fish, bird, horse, bear]. The images dataset is mixed between [cat, dog, fish] images from the previous training and the new images for [bird, horse, bear]. The file obj.names are contained 6 classes as [cat, dog, fish, bird, horse, bear].

C. Discard the previous train weights-file and start training from the beginning by using the yolov3-tiny.conv.15 weights-file.

I also have no idea about how to edit the cfg file for those methods. Could you please suggest me?

2BankPC. My experience:You cannot reuse weights, trained on 3 classes, for expanding to 6 classes.

You will get failure in training process if you try to use it.

Your "C" is most adequate variant. You only can reuse your previous dataset, but even here you have to renew your 3-class annotations to 6-classes.

Hello when i use this command i

./darknet detector train data/obj.data yolo-obj.cfg darknet53.conv.74

i get Couldn't open file: data/obj.data

Please help

go to cfg directory and copy the coco.data and rename the file with

obj.data and paste the data directory

On Sat, Mar 21, 2020 at 10:36 PM Lumi1717 notifications@github.com wrote:

Hello when i use this command i

./darknet detector train data/obj.data yolo-obj.cfg darknet53.conv.74

i get Couldn't open file: data/obj.data

Please help—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

https://github.com/pjreddie/darknet/issues/597#issuecomment-602076975,

or unsubscribe

https://github.com/notifications/unsubscribe-auth/AKQXB7P6QPJ2SXHXWR5SLKLRIT3H7ANCNFSM4EYDQEIQ

.

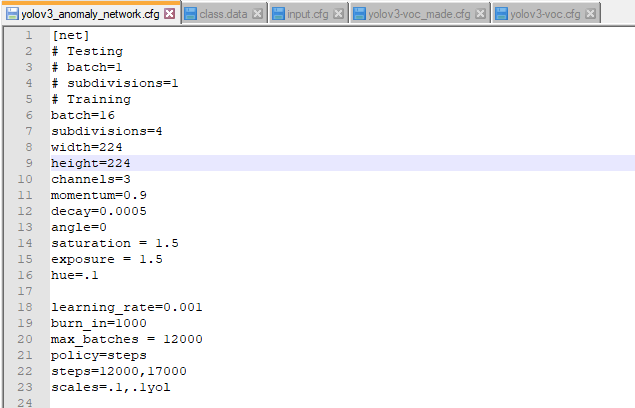

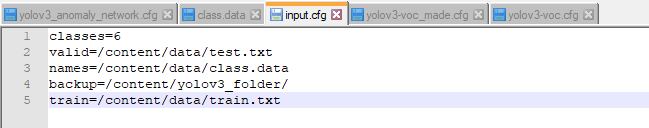

I'm trying to train yolov3 model using darknet53 on my own custom dataset. Can anyone help me with issue I have.

My training gets stopped at 100th iteration even when the max batches in yolov3.cfg is 12000 and also the backup file is not getting saved at the location given in input.cfg file. I'm attaching snippets of input.cfg and yolov3.cfg for your clear understanding. Thank you in advance.

yolov3.cfg

input.cfg

@AlexeyAB @pjreddie

Dear sir!

I use this command for the display of graph:

./darknet detector train data/obj.data yolo-obj.cfg darknet53.conv.74 -map

but it didn't show any graph .....

Please guide me through

you should use last saved weight rather than darknet53 weights

On Wed, Apr 1, 2020 at 11:52 AM Rao Abdul Rehman Khan <

[email protected]> wrote:

@AlexeyAB https://github.com/AlexeyAB

Dear sir!

I use this command for the display of graph:

./darknet detector train data/obj.data yolo-obj.cfg darknet53.conv.74 -map

but it didn't show any graph .....

Please guide me through—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

https://github.com/pjreddie/darknet/issues/597#issuecomment-607068914,

or unsubscribe

https://github.com/notifications/unsubscribe-auth/AKQXB7KLEKOE6ZP4JFHWHK3RKLQETANCNFSM4EYDQEIQ

.

@arsal181

Thank you for your reply...

i want to ask you, can i see the loss graph at runtime..

@Raoabdulrehman771

In my case, showing the plot of loss function, doesn't depend on flag _-map_ in command line. It is inside program and built in. Can you see console output to confirm the process of training has been launched at all? If yes, you should find out in main() code the proper place referred to plotting and rebuild the program.

@andyrey

can you specify a file name?

@Raoabdulrehman771

in file detector.c

check running these lines in function

_void run_detector(int argc, char **argv)_

from line 1377

_else if(0==strcmp(argv[2], "train")) train_detector(datacfg, cfg, weights, gpus, ngpus, clear, dont_show, calc_map);

else if(0==strcmp(argv[2], "valid")) validate_detector(datacfg, cfg, weights, outfile);

else if(0==strcmp(argv[2], "recall")) validate_detector_recall(datacfg, cfg, weights);

else if(0==strcmp(argv[2], "map")) validate_detector_map(datacfg, cfg, weights, thresh, iou_thresh, NULL);_

Hi,@andyrey!

My situation:

I need training to identify 7 objects. After the first training, I identified 3 objects, the second time I took the weight that had been successfully trained before to train 3 more objects. This time I still succeed. The third time, I took the weight file that was successfully trained before (the second time) to continue training, the error happens as shown in the picture?

I have studied for a long time but still can not fix.

Hope you can help me!

Can you ask me with yolov3 how many subjects can I train?

In case, I want to train more objects on the file weight before I have to do?

Currently, I am using google colab for training because I am not eligible to buy a good computer to train data.

@ThuanK42

Hello, Thuan, I don't understand your first fraze: _"I need training to identify 7 objects"_. Did you mean training to detect all objects of 7 different classes? Different images can contain various number of objects, but we can detect and distinct only those belonging to trained classes.

If you firstly train the model for 7 classes, then, after adding to your dataset new labeled samples, you can prolong training and you can use weight from the first training, but in this case you can use the same cfg file, same number of classes (7). Of course, on test you can detect any number of objects( but some limit exists) in the picture, each object belongs to one of 7 trained classes.

First, thank you for your feedback.

I want to identify with my own data set, I want to identify computer mice, motorbikes, buses, airplanes, laptops, phones, TVs. During the first 2 training sessions, I identified : computer mouse, motorbike, bus, airplane, laptop, phone. But at the last training session when I continued to get the weight file that had been successfully trained before, there was an error below

error -nan

help you , pls

@ThuanK42

Firstly you should check up, why you can't detect TV-set. Try to test one of the training images from 1st dataset,where TV-set is, with the best weight from the 1st train. Does it detected?

If you get _-nan_ in further training, do you get the process of training broken? If yes, check up your newer added dataset. Usually subprogram _anchors finding_ reports some problem samples.

If not, go on training.

And, if you prolong training, I recommend you to train over all summary dataset, not only newly added.

Hello, how can i get the mAP form my weights after training , and did yollov3 use a focal loss function ?

darknet.exe detector map data/obj.data yolo-obj.cfg

backupyolo-obj_7000.weights

I think YOLOv3 does not use focal loss because it has some problems

On Thu, Apr 16, 2020 at 2:03 AM Amal Chaari notifications@github.com

wrote:

Hello, how can i get the mAP form my weights after training , and did

yellov3 user a loss focal loss function ?—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub

https://github.com/pjreddie/darknet/issues/597#issuecomment-614278689,

or unsubscribe

https://github.com/notifications/unsubscribe-auth/AKQXB7OCMROSWP5ACO5RUKLRMYOKDANCNFSM4EYDQEIQ

.

thank you, did you know witch loss function Yolov3 use ?

@andyrey thank you

First, thank you for your feedback.

I want to identify with my own data set, I want to identify computer mice, motorbikes, buses, airplanes, laptops, phones, TVs. During the first 2 training sessions, I identified : computer mouse, motorbike, bus, airplane, laptop, phone. But at the last training session when I continued to get the weight file that had been successfully trained before, there was an error below

error -nan

help you , pls

You should decrease your learning rate more.

I encountered the same issue when training with learning_rate = 0.001 in my ~800 sample. After I changed it to 0.00005, it converged.

Hope it could help.

Hello,

Does anybody know how Darknet repository calculates the best.weights file?

Which criteria is used?

Thank you very much in advance!

Best regards.

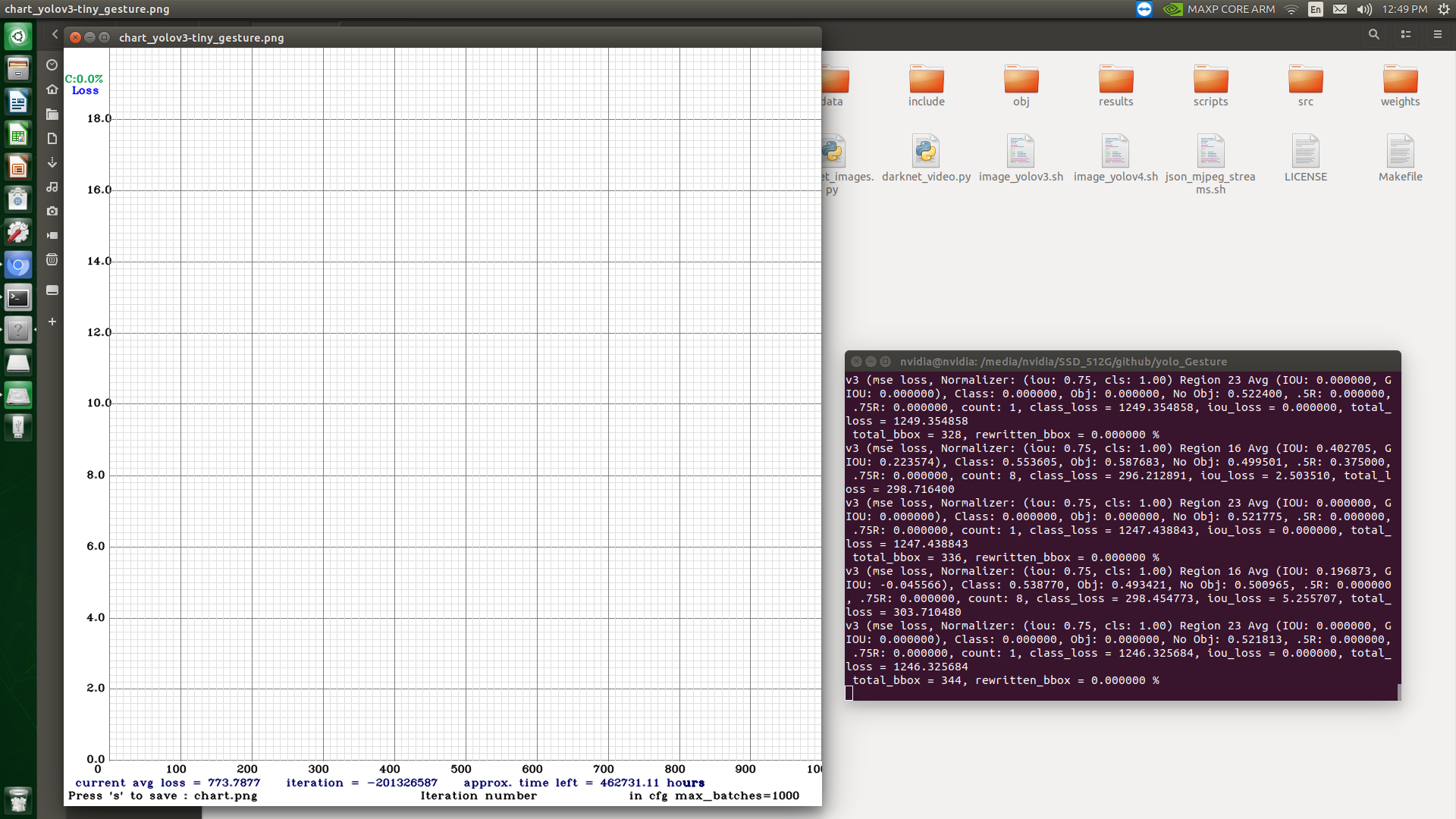

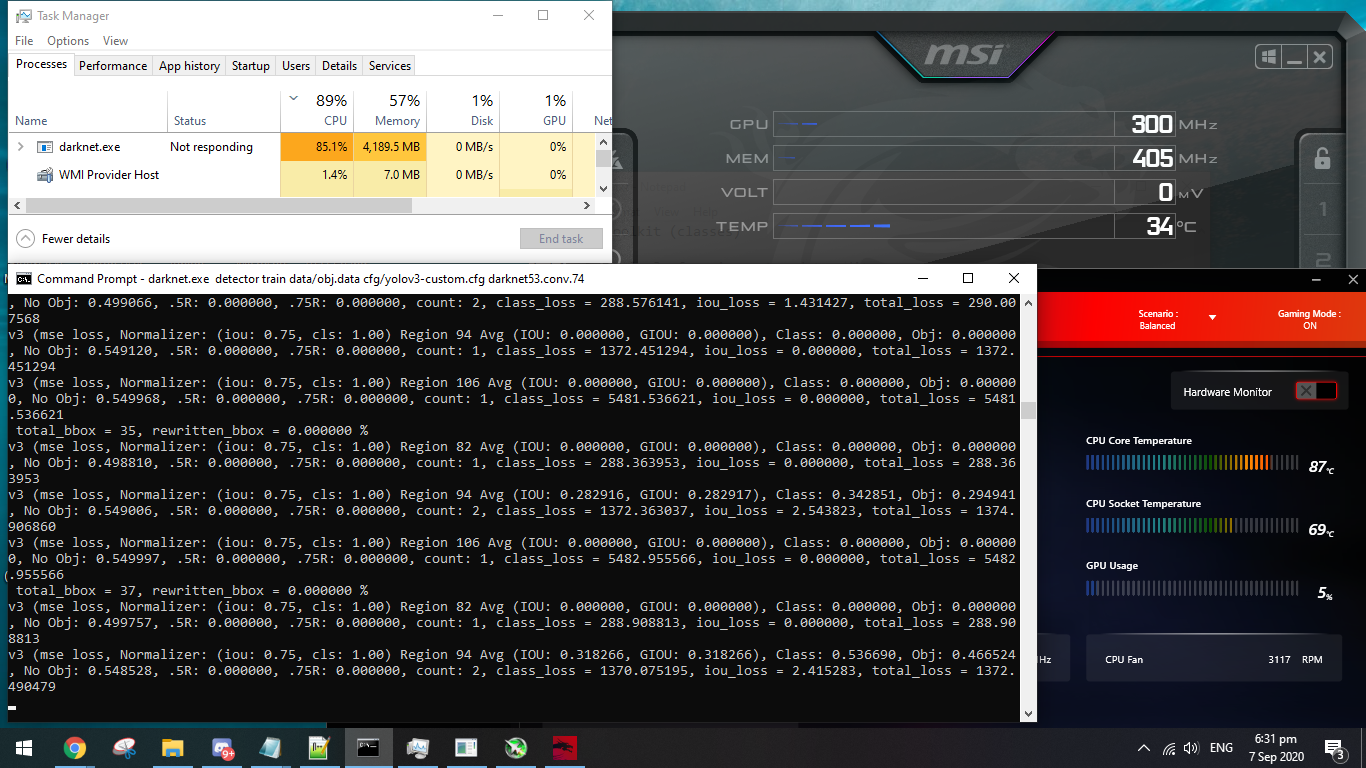

I am getting the iteration number as negative during training in the chart.png. The maximum batches are kept as 1000 in the cfg file with 64 images in each batch.

Even the weights file is getting saved as yolov3-tiny_201326000.weights where the number "201326000" stands for the iteration number.

Thank you.

Please help.

I am getting the iteration number as negative during training in the chart.png. The maximum batches are kept as 1000 in the cfg file with 64 images in each batch.

Even the weights file is getting saved as yolov3-tiny_201326000.weights where the number "201326000" stands for the iteration number.

Thank you.

Please help.

Sorted it out. The problem was because of using pretrained weights. Once I started the training from start with no weights passed, it got resolved.

Does anyone know why I get this error? Saving weights to backup/yolov3_custom_train_last.weights

Couldn't open file: backup/yolov3_custom_train_last.weights. Do I have to manually create a backup folder or why cant it open it? I tried running it with the sudo command btw

Does anyone know why I get this error? Saving weights to backup/yolov3_custom_train_last.weights

Couldn't open file: backup/yolov3_custom_train_last.weights. Do I have to manually create a backup folder or why cant it open it? I tried running it with the sudo command btw

@patrikszepesi

You need to ensure you create a folder "backup", script wont do it for you.