Cockroach: sql/logictest: TestLogic/local/aggregate/other times out under race

(sql/logictest).TestLogic/local/aggregate/other failed on master@d438437fe2b9dbec3980f9265a36c9dfb527ba8b:

Slow failing tests:

TestLogic/local/aggregate/other - 7198.97s

Slow passing tests:

<none>

Related: See this test on roachdashMore

make stressrace TESTS=TestLogic/local/aggregate/other PKG=./pkg/sql/logictest TESTTIMEOUT=5m STRESSFLAGS='-timeout 5m' 2>&1

powered by pkg/cmd/internal/issues

All 30 comments

Took an initial cursory look. It ran with a 2h timeout and some extraordinary repeated log messages:

I200923 07:37:51.316848 244 kv/kvserver/replica_rangefeed.go:610 [n1,s1,r6/1:/Table/{SystemCon…-11}] RangeFeed closed timestamp 1600846515.819343350,0 is behind by 2m35.497476727s

E200923 07:37:54.930447 374604 kv/kvserver/queue.go:1087 [n1,gc,s1,r2/1:/System/NodeLiveness{-Max}] cannot read undeclared span /{Min-System/NodeLiveness/1}

@ajwerner / @nvanbenschoten what do these log messages mean?

This seems alarming. We need the rangefeed to catch up in order to get updates for schema leases and what not.

E200923 07:37:54.930447 374604 kv/kvserver/queue.go:1087 [n1,gc,s1,r2/1:/System/NodeLiveness{-Max}] cannot read undeclared span /{Min-System/NodeLiveness/1}

This one I don't know about

The rangefeed slowdown is pretty easily reproducible with:

make stressrace TESTS=TestLogic/local/aggregate/other PKG=./pkg/sql/logictest TESTTIMEOUT=5m STRESSFLAGS='-timeout 5m' 2>&1

I'm not sure if this is expected or not during race. Who would be able to look further into this?

slowdown isn't necessarily a problem but getting stuck is. does the amount that the rangefeed is behind keep increasing?

It does increase:

I200923 14:50:58.282453 401 kv/kvserver/replica_rangefeed.go:610 [n1,s1,r6/1:/Table/{SystemCon…-11}] RangeFeed closed timestamp 1600872545.589732941,0 is behind by 1m52.692701646s

I200923 14:51:03.702171 401 kv/kvserver/replica_rangefeed.go:610 [n1,s1,r6/1:/Table/{SystemCon…-11}] RangeFeed closed timestamp 1600872545.589732941,0 is behind by 1m58.112421674s

I200923 14:51:07.854011 408 server/status/runtime.go:522 [n1] runtime stats: 682 MiB RSS, 384 goroutines, 48 MiB/63 MiB/106 MiB GO alloc/idle/total, 10 MiB/12 MiB CGO alloc/total, 0.1 CGO/sec, 97.5/3.8 %(u/s)time, 0.3 %gc (2x), 73 KiB/73 KiB (r/w)net

I200923 14:51:09.116027 401 kv/kvserver/replica_rangefeed.go:610 [n1,s1,r6/1:/Table/{SystemCon…-11}] RangeFeed closed timestamp 1600872545.589732941,0 is behind by 2m3.526277733s

I200923 14:51:14.564187 401 kv/kvserver/replica_rangefeed.go:610 [n1,s1,r6/1:/Table/{SystemCon…-11}] RangeFeed closed timestamp 1600872545.589732941,0 is behind by 2m8.974436187s

I200923 14:51:17.870399 408 server/status/runtime.go:522 [n1] runtime stats: 683 MiB RSS, 384 goroutines, 61 MiB/52 MiB/106 MiB GO alloc/idle/total, 10 MiB/12 MiB CGO alloc/total, 0.1 CGO/sec, 101.6/2.8 %(u/s)time, 0.0 %gc (1x), 54 KiB/54 KiB (r/w)net

I200923 14:51:19.987061 401 kv/kvserver/replica_rangefeed.go:610 [n1,s1,r6/1:/Table/{SystemCon…-11}] RangeFeed closed timestamp 1600872545.589732941,0 is behind by 2m14.397311033s

I200923 14:51:25.393614 401 kv/kvserver/replica_rangefeed.go:610 [n1,s1,r6/1:/Table/{SystemCon…-11}] RangeFeed closed timestamp 1600872545.589732941,0 is behind by 2m19.803863322s

I200923 14:51:27.854368 408 server/status/runtime.go:522 [n1] runtime stats: 683 MiB RSS, 384 goroutines, 72 MiB/40 MiB/106 MiB GO alloc/idle/total, 10 MiB/12 MiB CGO alloc/total, 0.1 CGO/sec, 105.5/3.2 %(u/s)time, 0.1 %gc (1x), 56 KiB/56 KiB (r/w)net

I200923 14:51:30.815443 401 kv/kvserver/replica_rangefeed.go:610 [n1,s1,r6/1:/Table/{SystemCon…-11}] RangeFeed closed timestamp 1600872545.589732941,0 is behind by 2m25.225694277s

Alright, this is bad and indicates that the closed timestamp is stuck

or rather, the resolved timestamp

cc @nvanbenschoten can you triage? This failure is happening very often

@aayushshah15 do you have free cycles to take a look at this? It looks right up your alley.

@andreimatei just want to raise the visibility on this as you proceed to work on understanding some other closed timestamp issues.

Friendly ping, this failure is happening nightly

Heads up: I think this is also a problem in 20.2 code, and has a pretty wide blast radius. Less importantly, it's failing builds on master. I'm going to tag this as a release-blocker for now so we don't forget about it.

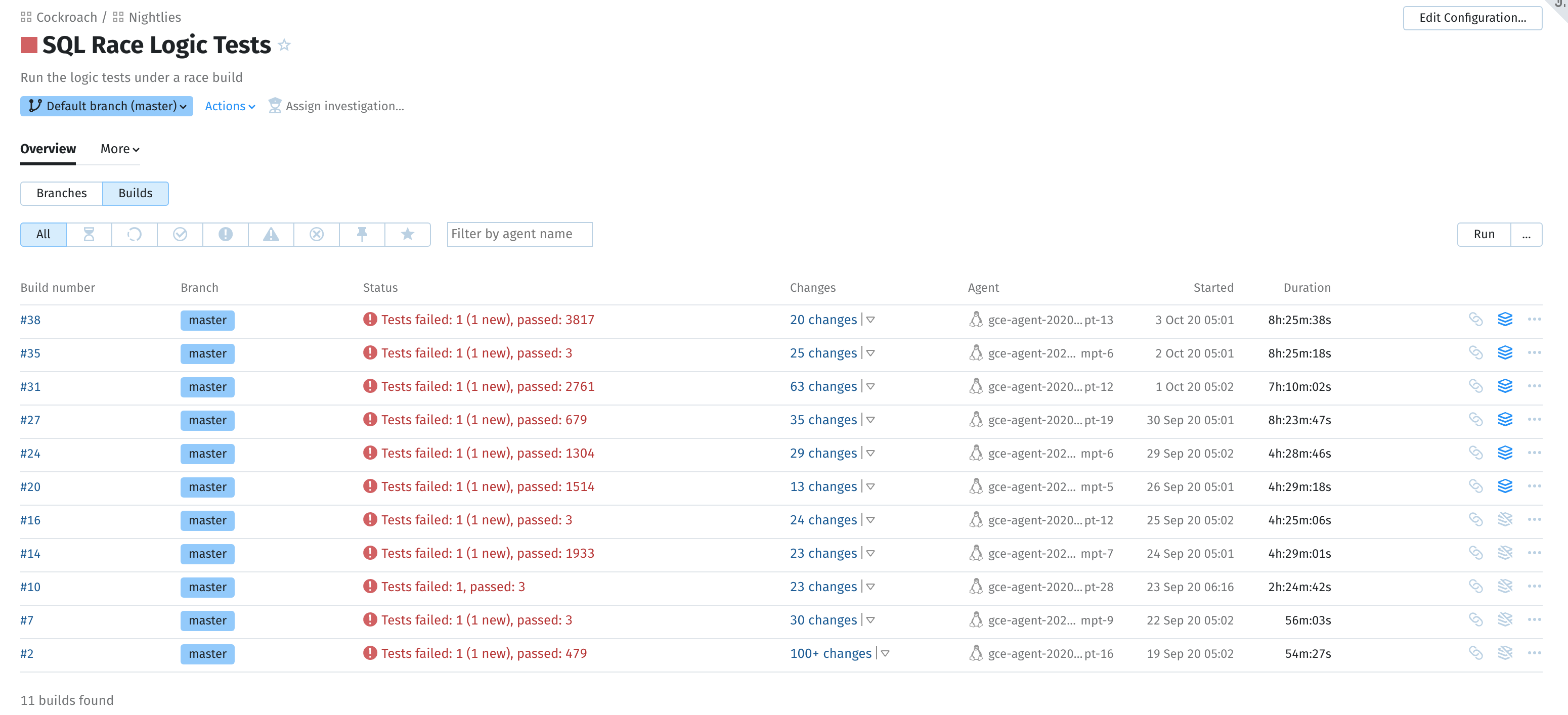

Are we sure all these logictests are supposed to work under race? I see a bunch of

past slack conversations (and this comment from the linked issue) that suggest

otherwise. The "SQL Race Logic Tests" nightly suite also seems to have only

recently been added, and has never succeeded.

I stressed this (without race) for a good while and it does not reproduce.

973 runs so far, 0 failures, over 46m10s

However, under race I can reproduce it (every single time) on a totally random

commit (25f009bfe) from a few months ago, which indicates that perhaps this has

never worked. The rangefeed closed timestamp lag seems to just be a symptom of

general slowness everywhere, and not a cause.

What if under race we try increasing the closed timestamp interval and close fraction?

I'd have (naively) guessed that setting the closed timestamp duration really

high would stall everything since the sqllliveness stuff depends on rangefeeds,

which in turn depend on closed timestamps. However, it didn't have much of an

effect at all besides making the test take slightly longer. FWIW, I don't think

closed timestamps are causing any problems here. In a ~200MB log file (~30 min

test run) I don't see the closed timestamp getting in the way too much:

> rg 'bumped write timestamp due to closed ts' ../rangefeed_stuck_rts_testrace.out | wc -l

8

I just see evaluations of large batch requests (TxnSeq values going up to

40K) taking _super_ long, which makes sense under race, and explains closed

timestamps not making progress, since the minPropTracker won't be able to close

timestamps until those evaluations complete.

Additionally, I let stressrace run overnight and this is what I see:

> make stressrace TESTS=TestLogic/local/aggregate/other PKG=./pkg/sql/logictest TESTFLAGS='-show-logs -v -vmodule=*=3' STRESSFLAGS='-p 1 -timeout 12h' TESTTIMEOUT=12h

...

21 runs so far, 0 failures, over 8h22m10s

So it seems like things are _really slow_, but not stuck. Running with 5m

timeouts is not going to cut it, and now it doesn't really surprise me that the

full logic test suite cannot complete, under race, in <8hrs on CI machines. I

feel like we've known about logic tests being infeasibly slow (or finding data

races in our testing/logging: #40336) under race for a long time, haven't we?

IIRC the problem only got worse with the move to pebble since the race detector

now has more goroutines to worry about.

Overall, I'm not sure if we have any evidence that things are actually

misbehaving?

since the sqllliveness stuff depends on rangefeeds,

The sqlliveness stuff does not depend on rangefeeds.

It looks like there's been a lot of movement on this test config over the past two weeks. First, its timeout was bumped from 30m to 4h in https://github.com/cockroachdb/cockroach/commit/e5014541745d99401c1fe8102774c0ab8698f20d. Then it was bumped to 8h in https://github.com/cockroachdb/cockroach/commit/ba05ce3d34176481139dbaaee52b3acf8a776baa.

@asubiotto do you know the history here? Is this actually an indication that anything has regressed or just that this test can't and never could handle stressrace because it has too much internal parallelism? Based on @aayushshah15's investigation, it doesn't necessarily seem like it is a recent regression.

I think @rytaft enabled these recently to increase optimizer test coverage and was trying to find a good timeout. I'm not sure why they were disabled but it's very possible that stressrace is too much for the logic tests. I do want to say that they've been useful recently in finding a race in the vectorized engine.

My suggestion here is that we should run it with something like a 24h timeout and then decide if we want to tone that down to a smaller timeout (or stop running these under race) once we learn how long the full suite really takes.

FWIW, I was wondering if the logictest executes test files in parallel, but it looks like that's disabled under race https://github.com/cockroachdb/cockroach/blob/5025c7054f81d28e0420b20ed0d266454a30ea57/pkg/sql/logictest/logic.go#L2513

I'd be curious to understand why the slow tests report that I mucked with back in the day doesn't seem to work right here. For example in #55246 it just says

Slow failing tests:

TestLogic/local-vec-off/aggregate/other - 25856.62s

Slow passing tests:

<none>

Maybe there's some particular file culprits that we should be aware off...

I can bump it to 24h if we think that will fix things... otherwise we could just try to disable this specific test under race.

I'd be curious to understand why the slow tests report that I mucked with back in the day doesn't seem to work right here.

Why do you think it's not working? TestLogic/.../aggregate/other is what has been failing each time...

Oh... And it's also the first test to run?

If we can't run any single test, then I don't think that adjusting the timeout is the right thing here...

It's not -- we've had up to ~4000 tests passing with this one failing: https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_SqlRaceLogicTests/2341042

Ah, I wish that report would tell us how many non-slow tests passed.

Anyway, it seems to me like we should be looking at this particular test under race, then.

Discussed with @andreimatei offline. Summarizing my thoughts/findings:

- This shouldn't be a release blocker since the test seems to have never passed under race (even on 20.1: see test history).

- For some reason it seems to take way longer (order of magnitude) on roachprod & teamcity workers than my personal desktop. So _maybe_ something funky is going on with the race detector on the linux version we run on roachprod & teamcity, but this is just a guess.

# 6-node cluster with 32 vCPUs each

8 runs completed, 0 failures, over 3h40m1s

# Personal computer with 16 hyper-threads

21 runs so far, 0 failures, over 8h22m10s

- The closed timestamp lag doesn't seem to have anything to do with the timeout.

We should try to understand why this test takes so long under race, and if it makes sense, raise the timeout or skip this particular test. I'm removing the blocker label and passing it back to someone from Exec.

Do you all know about this stack trace, which I saw in another timeout?

E201007 08:10:46.240401 2688251 kv/kvserver/queue.go:1087 [n1,gc,s1,r2/1:/System/NodeLiveness{-Max}] cannot read undeclared span /{Min-System/NodeLiveness/1}

declared:

read local: /Local/Range/System/NodeLiveness/RangeDescriptor at 0,0

write global: /System/NodeLiveness/1 at 1602058246.215523027,0

stack:

goroutine 2688251 [running]:

runtime/debug.Stack(0xc03b3c0800, 0xc, 0xc)

/usr/local/go/src/runtime/debug/stack.go:24 +0xab

github.com/cockroachdb/cockroach/pkg/kv/kvserver/spanset.(*SpanSet).checkAllowed(0xc017875320, 0x0, 0x0, 0x0, 0x0, 0xc03b3c0800, 0xc, 0xc, 0x70ceba8, 0x212a32f, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/spanset/spanset.go:312 +0x3ad

github.com/cockroachdb/cockroach/pkg/kv/kvserver/spanset.(*SpanSet).CheckAllowed(0xc017875320, 0x0, 0x0, 0x0, 0x0, 0xc03b3c0800, 0xc, 0xc, 0x263778f, 0xc00e57d000)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/spanset/spanset.go:243 +0xb5

github.com/cockroachdb/cockroach/pkg/kv/kvserver/spanset.(*Iterator).checkAllowed(0xc039a8ae10, 0x0, 0x0, 0x0, 0xc03b3c0800, 0xc, 0xc, 0xb1c401)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/spanset/batch.go:124 +0x187

github.com/cockroachdb/cockroach/pkg/kv/kvserver/spanset.(*Iterator).SeekLT(0xc039a8ae10, 0xc03b3c0800, 0xc, 0xc, 0x163ba6ecade911a2, 0x0)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/spanset/batch.go:92 +0x12d

github.com/cockroachdb/cockroach/pkg/storage.MVCCGarbageCollect(0x89b6560, 0xc000d32d80, 0x1509c1c26e28, 0xc03f81b4d0, 0xc030f38100, 0xc02edf6b40, 0x1, 0x1, 0x163ba77ca3295ad3, 0x0, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/storage/mvcc.go:3401 +0xf38

github.com/cockroachdb/cockroach/pkg/kv/kvserver/batcheval.GC(0x89b6560, 0xc000d32d80, 0x1509c1c26e28, 0xc03f81b4d0, 0x8b2c580, 0xc015272e70, 0x163ba77ca3295ad3, 0x0, 0x0, 0x0, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/batcheval/cmd_gc.go:77 +0x2e2

github.com/cockroachdb/cockroach/pkg/kv/kvserver.evaluateCommand(0x89b6560, 0xc000d32d80, 0xc0292f5828, 0x8, 0x0, 0x1509c1c26e28, 0xc03f81b4d0, 0x8b2c580, 0xc015272e70, 0xc030f38100, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_evaluate.go:465 +0x2d9

github.com/cockroachdb/cockroach/pkg/kv/kvserver.evaluateBatch(0x89b6560, 0xc000d32d80, 0xc0292f5828, 0x8, 0x1509c1c26e28, 0xc03f81b4d0, 0x8b2c580, 0xc015272e70, 0xc030f38100, 0xc03f81b3b0, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_evaluate.go:241 +0x471

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).evaluateWriteBatchWrapper(0xc03536f800, 0x89b6560, 0xc000d32d80, 0xc0292f5828, 0x8, 0x8b2c580, 0xc015272e70, 0xc030f38100, 0xc03f81b3b0, 0xc017875320, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_write.go:600 +0x155

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).evaluateWriteBatchWithServersideRefreshes(0xc03536f800, 0x89b6560, 0xc000d32d80, 0xc0292f5828, 0x8, 0x8b2c580, 0xc015272e70, 0xc030f38100, 0xc03f81b3b0, 0xc017875320, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_write.go:569 +0x196

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).evaluateWriteBatch(0xc03536f800, 0x89b6560, 0xc000d32d80, 0xc0292f5828, 0x8, 0xc03f81b3b0, 0xc017875320, 0x0, 0x0, 0x0, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_write.go:388 +0x22f

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).evaluateProposal(0xc03536f800, 0x89b6560, 0xc000d32d80, 0xc0292f5828, 0x8, 0xc03f81b3b0, 0xc017875320, 0x0, 0xc028f99000, 0x0)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_proposal.go:741 +0x13c

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).requestToProposal(0xc03536f800, 0x89b6560, 0xc000d32d80, 0xc0292f5828, 0x8, 0xc03f81b3b0, 0xc017875320, 0xc028f99078, 0xea309e)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_proposal.go:868 +0x9f

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).evalAndPropose(0xc03536f800, 0x89b6560, 0xc000d32d80, 0xc03f81b3b0, 0xc02aa5e690, 0xc028f99e78, 0x0, 0x0, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_raft.go:73 +0x10b

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).executeWriteBatch(0xc03536f800, 0x89b6560, 0xc000d32d80, 0xc03f81b3b0, 0x0, 0x0, 0xc02ec23d80, 0x100000001, 0x1, 0x0, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_write.go:134 +0xa6b

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).executeBatchWithConcurrencyRetries(0xc03536f800, 0x89b6560, 0xc000d32d80, 0xc03f81b3b0, 0x70ce6a8, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_send.go:355 +0x5da

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).sendWithRangeID(0xc03536f800, 0x89b6560, 0xc000d32d80, 0x2, 0xc03f81b3b0, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_send.go:100 +0xa9e

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*Replica).Send(0xc03536f800, 0x89b6560, 0xc000d32d80, 0x163ba77ca3295ad3, 0x0, 0x0, 0x0, 0x0, 0x2, 0x0, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_send.go:37 +0xdd

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*replicaGCer).send(0xc0170da930, 0x89b6560, 0xc000d32d80, 0xc00a285a60, 0xb, 0x10, 0xc00a285a70, 0xb, 0x10, 0x0, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/gc_queue.go:386 +0x3be

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*replicaGCer).GC(0xc0170da930, 0x89b6560, 0xc000d32d80, 0xc02edf6ae0, 0x1, 0x1, 0x0, 0x1)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/gc_queue.go:405 +0x13f

github.com/cockroachdb/cockroach/pkg/kv/kvserver/gc.processReplicatedKeyRange(0x89b6560, 0xc000d32d80, 0xc034fd79d0, 0x8a115e0, 0xc0170da910, 0x163ba7799c7152e8, 0xc000000000, 0x163ba6ede9a7e2e8, 0x0, 0x8968860, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/gc/gc.go:355 +0x12c7

github.com/cockroachdb/cockroach/pkg/kv/kvserver/gc.Run(0x89b6560, 0xc000d32d80, 0xc034fd79d0, 0x8a115e0, 0xc0170da910, 0x163ba7799c7152e8, 0x0, 0x163ba6ede9a7e2e8, 0x0, 0x258, ...)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/gc/gc.go:185 +0x2cb

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*gcQueue).process(0xc005fda140, 0x89b6560, 0xc000d32d80, 0xc03536f800, 0xc064fee640, 0x6b2d700, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/gc_queue.go:460 +0x797

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*baseQueue).processReplica.func1(0x89b6560, 0xc000d32d80, 0xdf8475800, 0x89b6560)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/queue.go:958 +0x24e

github.com/cockroachdb/cockroach/pkg/util/contextutil.RunWithTimeout(0x89b6560, 0xc000d32d80, 0xc016594100, 0x1a, 0xdf8475800, 0xc014c14d98, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/pkg/util/contextutil/context.go:135 +0xde

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*baseQueue).processReplica(0xc0329c0f20, 0x89b65a0, 0xc02cae9410, 0x8a3d340, 0xc03536f800, 0x0, 0x0)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/queue.go:917 +0x3aa

github.com/cockroachdb/cockroach/pkg/kv/kvserver.(*baseQueue).processLoop.func1.2(0x89b65a0, 0xc02cae93b0)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/queue.go:845 +0x116

github.com/cockroachdb/cockroach/pkg/util/stop.(*Stopper).RunAsyncTask.func1(0xc01dc45360, 0x89b65a0, 0xc02cae93b0, 0xc019d05e60, 0x26, 0x0, 0x0, 0xc02cae93e0)

/go/src/github.com/cockroachdb/cockroach/pkg/util/stop/stopper.go:347 +0x163

created by github.com/cockroachdb/cockroach/pkg/util/stop.(*Stopper).RunAsyncTask

/go/src/github.com/cockroachdb/cockroach/pkg/util/stop/stopper.go:342 +0x14b

@tbg hey, it looks like your name is on the git blame for this error - any idea what it's about?

This looks like fallout from https://github.com/cockroachdb/cockroach/commit/858541622a74121007f6759192e61f187eb7a8cd. We're calling SeekLT on an MVCC version that we know has newer versions (at higher timestamp), but the spanset.Iterator doesn't know this, so it assumes that the SeekLT is moving to a new key which has not been declared. This isn't concerning outside of the fact that the assertion is being disruptive.

There are two things here:

- if we want this to work, we're going to need to take MVCC versions into account in

spanset.Iteratorsomehow. - we shouldn't even be using latches at all during garbage collection, so I'm tempted to disable these assertions entirely. The latches aren't protecting anything and the spanset is just fooling itself. I think the easier fix is to stop using the

spanset.Iteratorwhen evaluating GC requests.

Opened https://github.com/cockroachdb/cockroach/pull/55310 to bump the test timeout to 24 hours. If that doesn't help I can try to disable this particular test under race.

@nvanbenschoten jogging my memory to remind myself what's going on here. Say we're GC'ing a key k at timestamp 50, which means we'll throw away all of the MVCC versions <=50 (correct?)

Say the versions are these (sorted in ascending engine order)

k @ meta

k @ 100

k @ 99

...

k @ 1

We'll look at the meta first and then step forward a few times hoping to get to the first gc'able version version, but here there are too many non-gcable ones so we'll end up somewhere here:

k @ meta

k @ 100

...

k @ 96 <-- iterator

k @ 95

...

k @ 1

We'll then SeekLT to k@50, meaning we get

k @ meta

k @ 100

...

k @ 51 <-- iterator

k @ 50

...

k @ 1

Then we step the iterator forward one and begin removing keys.

Ok, so what does the SpanSet not like? Your comment indicates that its SeekLT thinks that by seeking to k@50 we will necessarily and up at a key earlier than k (rather than an older version of k). We are definitely going to an older version of k here.

What I don't fully understand is why we're catching the assertion here. We're GC'ing a single key, so the iterator is bounded on [k, k.Next()]. I thought your changes in c2ecc15c9954a3c3a99e8b307fae25cd6a818459 in effect made it "ok" to seek anywhere as long as the iterator's bounds are allowed by the span set.

When we GC more than one key, I think this reasoning doesn't hold though, as the bound we set on the iterator is then the interval covering all keys (as opposed to the union of [k, k.Next()) over all keys gc'ed), so we'd still have a problem.

Ah, looking into c2ecc15c9954a3c3a99e8b307fae25cd6a818459 a bit more I see why. We enforce the spans against the seeked key as long as the iter is valid after the seek. It seems that what we want here is to check the current position of the iterator in that case. If we actually seeked to below k - that's a problem. If it's k though, we're ok. We should be able to achieve this by looking at the key here (if iter is valid) and constructing a span [iter.UnsafeKey(), k].