Candidate SHA: f990441079c686f9eec32d80044c719175a2bee5

Deployment status: Qualifying

Qualification Suite: #167 - started 8/24, 8:46p

Nightly Suite: #2327 - started 8/24, 8:48p

Admin UI for Qualification Clusters:

Prep date: Monday 8/24/2020

- [x] [Pick a SHA](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-PickingaSHA)

- [x] fill in Candidate SHA above

- [x] email thread on releases@

- [x] fill in

- [x] [Tag the provisional SHA](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-TagtheprovisionalSHA)

- [x] [Publish provisional binaries](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-Publishtheprovisionalbinaries)

- [x] Ack security@ and release-engineering-team@ on the generated AWS S3 bucket write alert to confirm these writes were part of a planned release (just reply to the alert email, acking that this was part of the release process)

Release Qualification

- [x] [Check binaries](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-Checkbinaries)

- [x] [Deploy to test clusters](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-DeploytoTestClusters)

- [x] [Verify node crash reports](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-Verifynodecrashreportsappearinsentry.io)

- [x] [Start release qualification suite](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-RuntheSuiteofNightlyRoachtests)

- [x] [Start nightly suite](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-RuntheSuiteofNightlyRoachtests)

- [x] fill in all WIP elements above (Nightly Suite, Admin UI, etc.)

One day after prep date:

- [x] [Get signoff on roachtest failures](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/328859690/Release+Qualification#Roachtest-Failure-Signoff)

- [x] [Keep an eye on clusters](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/328859690/Release+Qualification#Monitoring-the-Qualification-Clusters) until release date. Do not proceed below until the release date.

Release date: Monday 8/31/2020

- [x] [Check cluster status](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/328859690/Release+Qualification#Monitoring-the-Qualification-Clusters)

- [x] [Tag release](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-11.Tagtherelease)

- [x] [Bless provisional binaries](https://cockroachlabs.atlassian.net/wiki/spaces/ENG/pages/73105625/Release+process#Releaseprocess-Blesstheprovisionalbinaries)

- [ ] If applicable, update the map that roachtests use to map a version to a previous version, to reference the newly tagged version

For production or stable releases in the latest major release series

- [x] Update the Homebrew Formula

- [x] Update the image tag in our Orchestrator configurations

For production or stable releases

- [x] Announce version to the registration cluster

- [ ] Update docs

- [ ] External communications for release

Cleanup:

- [x] Clean up provisional tag from repository

- [x] Destroy roachprod clusters


Roachtest Nightly - GCE: Test Failure Sign-Off

Tests failed: 13 (2 new), passed: 45289, ignored: 139; Build chain finished (success: 16, failed: 3)

Roachtest Nightly - GCE:

https://teamcity.cockroachdb.com/viewLog.html?buildId=2217981&buildTypeId=Cockroach_Nightlies_NightlySuite

appdev

- [x] activerecord

- [x] django

- [x] pgjdbc

bulkio

- [x] backupTPCC

- [x] import/tpcc/warehouses=1000/nodes=32

- [x] import/tpcc/warehouses=1000/nodes=4

kv

- [x] tpccbench/nodes=9/cpu=4/multi-region

- [x] tpcc/interleaved/nodes=3/cpu=16/w=500 - @rohany

- [x] version/mixed/nodes=3 - @rohany

- [x] version/mixed/nodes=5 - @cockroachdb/sql-schema

storage

- [x] engine/switch/encrypted/nodes=3

- [x] engine/switch/nodes=3

schema

- [x] TestRandomSyntaxSchemaChangeColumn

All the bulk io failures are known and should be fixed by https://github.com/cockroachdb/cockroach/pull/53367.

Signing off on TestRandomSyntaxSchemaChangeColumn since it looks like a timeout (again). The schema changes seem to be making progress but are slow due to all the jobs overhead. I'm going to look into increasing the timeout since this happens literally every time.

All the storage ones should be fixed by #53494. Signing off.

Signed off on the appdev tests. Django has a skip PR in progress, I will add one for the flaky ActiveRecord and PGJDBC tests.

The version-upgrade failure on the three-node test is caused by

| I200825 18:11:24.341985 1 workload/cli/run.go:338 retrying after error during init: Could not postload: pq: foreign key requires an existing index on columns ("h_c_w_id", "h_c_d_id", "h_c_id")

I believe @rohany knows about those (https://github.com/cockroachdb/cockroach/pull/52931)

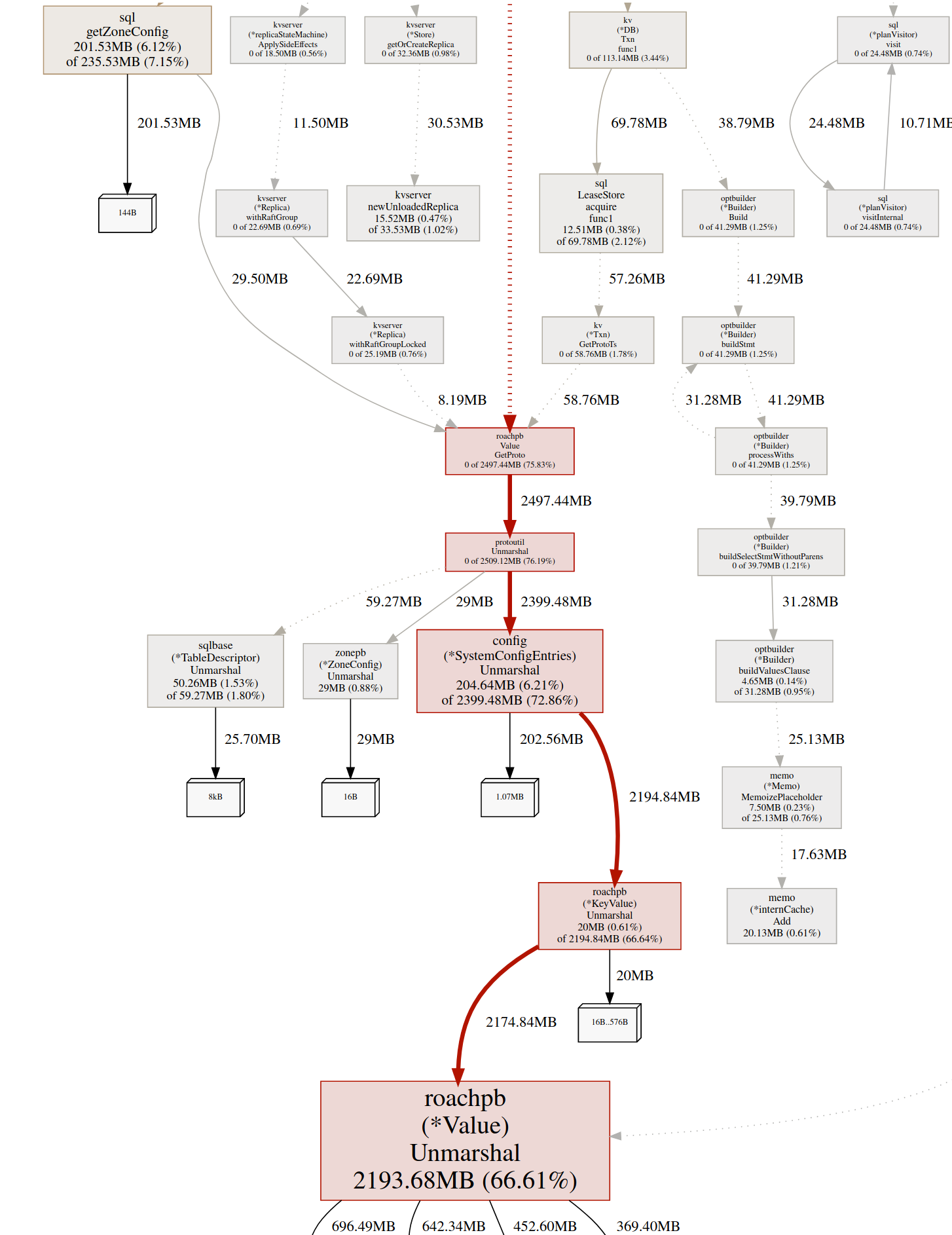

The five-node test had n5 crash. I think it is likely an OOM, though neither the log nor dmesg has an explicit message about that. The heap profiler shows this (artifacts)

A goroutine dump (taken ~hours before the actual crash) has lots of these:

github.com/cockroachdb/cockroach/pkg/sql/gcjob.schemaChangeGCResumer.Resume(0x81c005e855d0005, 0x4be8140, 0xc000c94960, 0x4304ae0, 0xc063b31b00, 0x0, 0x0, 0x0)

$ grep -cF '.Resume' ~/Downloads/goroutine_dump.2020-08-25T23_24_24.036.double_since_last_dump.000001005.txt

645
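For anyone repeating this check, the grep above can be refined to group matching stack frames by function name, which makes it easier to see whether a single resumer accounts for the count. This is a minimal sketch, assuming the dump uses the standard Go stack trace layout where a frame line looks like `pkg/path.Type.Method(0x1, 0x2)`; `count_frames` is a hypothetical helper, not part of any CockroachDB tooling. It counts frame lines only, so its totals can differ from `grep -cF`, which counts any line containing the substring.

```python
import re
from collections import Counter

# Assumed frame layout: "<function name>(<args without parentheses>)".
# The greedy name group means the *last* "(...)" on the line is the
# argument list, so names like "pkg.(*T).Method" are kept intact.
FRAME_RE = re.compile(r"^(?P<func>\S.*)\((?P<args>[^()]*)\)$")


def count_frames(lines, needle=".Resume"):
    """Count stack frame lines whose function name contains `needle`."""
    counts = Counter()
    for raw in lines:
        match = FRAME_RE.match(raw.strip())
        if match and needle in match.group("func"):
            counts[match.group("func")] += 1
    return counts


if __name__ == "__main__":
    import sys

    # Usage: python count_frames.py goroutine_dump.txt
    with open(sys.argv[1], encoding="utf-8", errors="replace") as f:
        for func, n in count_frames(f).most_common(10):
            print(n, func)
```

A single dominant entry would point at one leaking code path, while many distinct entries would suggest the substring match is too broad.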

goroutine_dump.2020-08-25T23_24_24.036.double_since_last_dump.000001005.txt

memprof.000000006725779984_2020-08-26T01_48_57.305.txt

cockroach.log

The test ran from 5pm until 2:45am the next morning, so I'm sure it got stuck somewhere in a situation where more and more memory was allocated until n5 eventually gave out.

Its final runtime stats line is this:

I200826 01:49:17.009102 244 server/status/runtime.go:498 [n5] runtime stats: 14 GiB RSS, 1382 goroutines, 5.9 GiB/2.3 GiB/7.9 GiB GO alloc/idle/total, 4.5 GiB/5.6 GiB CGO alloc/total, 17274.1 CGO/sec, 256.0/43.6 %(u/s)time, 0.1 %gc (2x), 131 MiB/131 MiB (r/w)net

Going back through the logs, this is steadily growing.
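To make the growth visible rather than eyeballing the log, the RSS and goroutine count can be pulled out of each runtime stats line. This is a rough sketch, assuming every such line follows the same shape as the one quoted above (`runtime stats: <n> <unit> RSS, <n> goroutines`); `parse_runtime_stats` and `rss_series` are hypothetical names, and other CockroachDB versions may format the line differently.

```python
import re

# Matches only the RSS and goroutine fields; the rest of the line is ignored.
STATS_RE = re.compile(
    r"runtime stats: (?P<rss>\d+(?:\.\d+)?) (?P<unit>KiB|MiB|GiB) RSS, "
    r"(?P<goroutines>\d+) goroutines"
)
UNIT_TO_GIB = {"KiB": 1 / 1024**2, "MiB": 1 / 1024, "GiB": 1.0}


def parse_runtime_stats(line):
    """Return (rss_gib, goroutines) for a runtime stats line, else None."""
    match = STATS_RE.search(line)
    if match is None:
        return None
    rss_gib = float(match.group("rss")) * UNIT_TO_GIB[match.group("unit")]
    return rss_gib, int(match.group("goroutines"))


def rss_series(lines):
    """Collect (rss_gib, goroutines) for every runtime stats line, in order."""
    return [s for s in map(parse_runtime_stats, lines) if s is not None]


if __name__ == "__main__":
    import sys

    # Usage: python rss_series.py cockroach.log
    with open(sys.argv[1], encoding="utf-8", errors="replace") as f:
        for rss_gib, goroutines in rss_series(f):
            print(f"{rss_gib:.2f} GiB RSS, {goroutines} goroutines")
```

Printing the series in log order is enough to confirm a steady climb; plotting it is optional.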

tpcc/interleaved is the same index failure:

Could not postload: pq: foreign key requires an existing index on columns ("h_c_w_id", "h_c_d_id", "h_c_id")

so I will leave it to @rohany.

tpccbench failed as follows

Attempt to create load generator failed. It's been more than 10m0s since we started trying to create the load generator so we're giving up. Last failure: failed to initialize the load generator: preparing \n\t\tUPDATE district\n\t\tSET d_next_o_id = d_next_o_id + 1\n\t\tWHERE d_w_id = $1 AND d_id = $2\n\t\tRETURNING d_tax, d_next_o_id: context deadline exceeded\nError: failed to initialize the load generator: preparing \n\t\tUPDATE district\n\t\tSET d_next_o_id = d_next_o_id + 1\n\t\tWHERE d_w_id = $1 AND d_id = $2\n\t\tRETURNING d_tax, d_next_o_id: context deadline exceeded\n

The restore previously went through in ~5h.

It looks like we didn't get a debug zip because the hard-coded 5 minute limit to do so wasn't enough.

The logs show no KV alerts (i.e. no unavailable ranges, etc.), but we see "have been waiting" messages from DistSender, which indicates that there is in fact a problem at the KV layer. I will need to investigate further.

I'm checking off the tpccbench failure since it has been failing for a long time. This needs to change, but getting this test stable should not block the release.

Not sure when this release qualification started, but PRs https://github.com/cockroachdb/cockroach/pull/53450 and https://github.com/cockroachdb/cockroach/pull/53367 need to be part of the roachtest/workload builds used for qualification to avoid the failures.

I'll sign off on the failures (tpcc/interleaved, version/nodes=3) because they are just workload import failures.

I started the test runs on 8/24, and it looks like those PRs went in 22 hours ago.

Signing off on version/mixed/nodes=5. Ultimately this was also #52931-related fallout, and I think nodes=3 didn't OOM just because it's a shorter/smaller test. See https://github.com/cockroachdb/cockroach/issues/53399#issuecomment-682309446 for more details.