Cht-core: Not all writes to CouchDB go through the audit layer

In my opinion (descent welcome) we should be writing via audit everywhere. For every version that has ever existed in CouchDB there should be an entry in the audit log for that version.

This does not appear to be the case. Specifically, I've noticed that when SMS come in a report is created and written to without a sister audit log being created (the first audit in this instance is from sentinel transitions being written).

I presume there are probably others.

We should work through the codebase and make sure we're always using audit. Ideally, we'd change our code so that you couldn't not use audit DB.

All 36 comments

So this is a much larger problem than I originally anticipated.

We are using db.medic.insert all over the place (migrations, in some transitions and transition flow, schedules etc).

We are using db.medic.request with the method of PUT or POSTall over the show as well.

We are also using CouchDB to write SMS messages into data_records (eg kujua-sms/updates.js:add_sms), thus completely skipping any form of auditing there as well.

The current scheme of having a separate db.audit.saveDoc isn't working for us, I think in hindsight because it makes it far too optional, and so no one uses it. It also doesn't account for writes that occur in CouchDB.

I think it would be much better–if we want our audit layer to not be ignorable–for us to have something more universal that you don't have to remember to use.

Here are some things I'd like to discuss with smart Medic people:

- We could change sentinel and api's

dbservice so that any write done by that service is automatically audited. This could be accomplished by releasing a new version of couchdb-audit that supports wrapping nano (and later perhaps PouchDB) and then using that instead of nano directly. - We could write a microservice that sat between api and couchdb with the sole goal of auditing requests going through it (using express or whatever to catch requests and audit them if required)

We could write a microservice that follows(nevermind, a changes feed watcher can't record who wrote the change, and can only approximate when. Not a suitable replacement)_changesand then writes to the audit db that way. This is less invasive and more encompassing than either of the two previous ideas (as it is now literally any write, automatically ignoring_localdocs etc), but you could lose data if the service goes down (if a compact happens while it's down it can't recover old versions to retroactively create audit records), so while it's the most general it is also possibly too flaky for us? We could solve the flakiness by linking it to api by linking it to api (or just embedding it in api), with the presumption that if api is down then everything is down.

The first two would also require that either we take all writes out of CouchDB and put them in api (which I know we are thinking of doing), or having manual "add audit" bits added to the api code where we know we are calling on to couchdb code, to hole up those gaps.

Anything going direct to couchdb will be difficult to audit, unless we only expose our own service instead of couchdb. I like the changes listener solution but then it seems weird if we store every rev then why even compact? Maybe have an audit log that stores diffs?

@mandric yeah I definitely like the idea of it storing diffs!

We currently store the full versions. The reason why we do it when we also compact is two things:

- now that it's in its own db audit it shouldn't affect main db performance

- Most importantly, compact is one command away, and you can't stop admins from performing it (and in the past as well as today we still require real CouchDB admins to be used by people like tech leads and stuff), so it's really dangerous and you could lose you're entire audit trail that way.

If we did it in a changes feed I think we could very easily do diffs, esp because it wouldn't impact write performance as it would do right now (since right now when you write to CouchDB through api it blocks on audit being successful).

Oh whoops! So the other main reason we have audit instead of trusting compaction is that audit gives us who wrote it and when.

Which the _changes solution can't actually do (it could approximate when, but you've lost the who).

Nevermind, that one's out.

@SCdF Doesn't couchdb-audit already wrap nano and do auditing? The problem in sentinel is we maintain both wrapped (audit) and unwrapped (db) versions which leaves developers with a choice. In retrospect I think we should only pass around audit and treat it as a full db, but that may require more complete wrapping. We really need a proxy!

@garethbowen yeah it does, I meant transparently wrap, so you don't have to go out of your way to use it. Not sure if there is a better word, I guess proxy, based on your link?

But yeah you're correct, you can use audit to save a doc with audit if you want, but the normal non-audited one is available to use, has a more complete api, more natural etc.

Will read about proxies, sounds like it would be a solution to the first proposed idea.

IMO the only way to reliably achieve this auditing is to put a proxy between couch and all services that we want to audit (api, sentinel, concierge). This proxy should then log mutating requests made, and their results.

How the logging itself could be best achieved will come down to the reasons for doing this logging, and how much time we have to devote to implementing it.

If we only envisage using the audit data _in extremis_, I assume our main concerns are (in order):

- integrity of the log

- ensuring logging can't be circumnavigated by developers by accident

- ensuring the log can't be modified on disk

- ensuring the log is reliably backed up

- not adversely affecting server performance

then the simplest way to achieve this would be to log full content of every HTTP request that can mutate couch's data (PUT, POST, DELETE), and the result (response status code and assigned IDs is probably enough for this; logging full response headers and body would be simpler). If we're concerned about mapping actions to users, then api, sentinel etc. could add request headers such as X-Medic-Service, X-Medic-User to make it easier for us to get this info quickly.

There is existing tooling for this kind of logging:

To recreate the state at a particular time, replay the HTTP requests against a fresh couch instance.

This does feel a bit bonkers because of the potential volume of logging, but I can't see it will be much different to storing this data in any other format.

@browndav what @alxndrsn is suggesting is quite a large change from what we already do. Do you (with your CTO hat on) have any thoughts here? My original plan was to just try to shore up what we already have. However, I do not have full context into the situations we are trying to solve.

Talked to Dave. I will check with the business and see how they feel about not being able to access audit from the ui at any stage, but a second nginx seems like a good solution to look into that I will detail after I've checked with the business.

Sounds like we're all good to go forward with some kind of external logging solution.

Next steps for this ticket is:

- Work out how to deploy nginx so there is a new instance / port that logs, at least: time, user, verb, request, code, and response, and calls through to CouchDB

- It should be, if not parsable right now, at least easily parsable in the future if we need to. Simplest way of achieving this is one line per request

- Change MedicOS to use this, and for api deployed on MedicOS to go through this new port (or move CouchDB's port and put this one at 5984)

- Delete code in api / sentinel associated with logging to the audit db (but don't delete the actual db, it contains legacy data!)

- Put that code in a major version and make it clear to deployers / upgraders that they need MedicOS Vwhatever

@sglangevin / @garethbowen this change is a major change, requiring that people update their version of medicos along with this change (because we'd be moving the responsibility of auditing from code to nginx).

Should we reschedule this for 3.0? Or 4.0?

3.0 makes sense to me, or 4.0 if it doesn't get done in time.

I agree with @garethbowen - let's try for 3.0.

This is scheduled for 3.0 so I'll drop it out of this milestone for now.

Deprioritised out of 3.0.0.

@vimemo Is this done now, or are we waiting on the associated infrastructure changes?

@garethbowen, we still need the updates to the infrastructure.

For AT: This needs extensive testing to ensure every request is logged in the audit layer with the correct details including the username of the user who made the initial request.

hi @Hareet, I am assigning this to you for the deployment piece of the haproxy. please let me know when you get to this. thank you.

@vimemo We should also remove the Audit Logs export from the admin app and remove the api too as they won't actually get the latest log any more.

Now that the haproxy PR is merged into medic-infrastructure I think this is ready for AT. You'll need to get the new infrastructure deployed first.

For AT:

- Make sure _all_ DB requests from all users (including admins), via all services (webapp, admin, api, sentinel, fauxton, etc) are logged to the audit log.

- Make sure log statements include the username of the original user. For example, a request for the changes feed from user Jim may cause API to make several requests to the database - these should all be listed as originating from Jim, not API or unknown.

- Make sure there's no way to access the DB without being logged.

Hi @Hareet .I would like to know how to access these log on gamma.dev now that the audit-log is no longer in use. The logs available in kibana do not seem to be consistent with the requirements of this ticket. Plus gamma.dev logs here are about 2 days behind, ie only logs from the 2nd of Feb are showing...

Feb 02 08:04:00 gamma-dev gamma-dev-medic-sentinel-logs: (gamma-dev-xxxxxxx) | [2019-02-01 19:04:00] 2019-02-01 19:04:00 INFO: checking schedule again in 5 minutes

Oh, thanks bede!

This is likely related to kibana running out of space to keep logs.

Until we change to a microservice architecture where all logs pump to a stdout/streaming engine (elasticsearch/kibana), SRE thought it was advised to not duplicate costs on logging, so the duration of logging on kibana is limited (since we will pay for the files to exist either way). I will clear the history and refresh it.

A thing to remember, you can always log into the container and view logs the old (2.x) way. kubectl-auth repo contains the details on how to connect and find the logs.

LGMT. Tested on purge-upgrade-muso-mali running latest 3.3.x

Hi @vimemo . As discussed with you and @Hareet this morning, the logs need to be saved in /srv, for as Hareet suggested, "any other folders will be cleared on container restart, and LogTrail won’t save all the data (since we currently log to files for the rest of the application, so there was no point to pay duplicate storage costs)".

@vimemo Is this now ready for AT again?

@garethbowen, looks like @Hareet has this currently in-progress.

@vimemo @garethbowen I still have to deploy the new images on gamma-dev. Will comment here when its done

@vimemo @ngaruko Fixes deployed on gamma.dev. Re-deploy of medic-os container reverted it to release 3.2.0, so you may need to upgrade it to whichever branch again.

Logs are now being saved on the server and streamed on kibana. However, it looks like these are being truncated when they are too long. Did you want to have another look at this @vimago

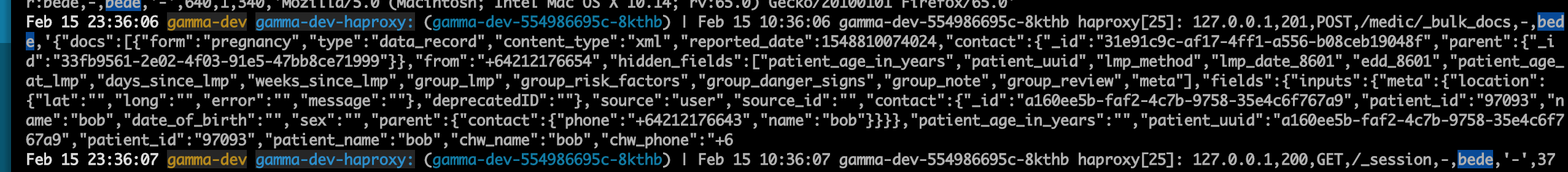

here is how it looks on the server (truncated after at hcw_phone; can be reproduced by editing an existing pregnancy report):

`Feb 15 10:36:06 gamma-dev-554986695c-8kthb haproxy[25]: 127.0.0.1,201,POST,/medic/_bulk_docs,-,bede,'{"docs":[{"form":"pregnancy","type":"data_record","content_type":"xml","reported_date":1548810074024,"contact":{"_id":"31e91c9c-af17-4ff1-a556-b08ceb19048f","parent":{"_id":"33fb9561-2e02-4f03-91e5-47bb8ce71999"}},"from":"+64212176654","hidden_fields":["patient_age_in_years","patient_uuid","lmp_method","lmp_date_8601","edd_8601","patient_age_at_lmp","days_since_lmp","weeks_since_lmp","group_lmp","group_risk_factors","group_danger_signs","group_note","group_review","meta"],"fields":{"inputs":{"meta":{"location":{"lat":"","long":"","error":"","message":""},"deprecatedID":""},"source":"user","source_id":"","contact":{"_id":"a160ee5b-faf2-4c7b-9758-35e4c6f767a9","patient_id":"97093","name":"bob","date_of_birth":"","sex":"","parent":{"contact":{"phone":"+64212176643","name":"bob"}}}},"patient_age_in_years":"","patient_uuid":"a160ee5b-faf2-4c7b-9758-35e4c6f767a9","patient_id":"97093","patient_name":"bob","chw_name":"bob","chw_phone":"+6

Feb 15 10:36:07 gamma-dev-554986695c-8kthb `

and on kibana

@ngaruko, we have a limit of 400,000 characters for this at the moment:

https://github.com/medic/medic-infrastructure/blob/master/images/haproxy/haproxy.cfg#L31

Based on your testing and this type of request, how many characters should we capture?

Here is the haproxy documentation about this:

https://www.haproxy.com/documentation/aloha/8-0/traffic-management/lb-layer7/traffic-capture/

@garethbowen, do you have a suggestion about this?

Thank you.

@ngaruko, it looks like that is not the limit we are reaching. The 1024 line length default limit is what is truncating this. I will create a pr to increase it. Still, how big do you think the request/post can be?

@vimago Maybe we should log the whole thing up to the allowable limit with haproxy, unless @garethbowen has got a different suggestion.

@vimemo @ngaruko I agree - go for the maximum.

@vimemo @ngaruko

Latest HAProxy image (with config change) has been deployed on gamma

LGMT atm. Thanks @vimago and @Hareet