Botframework-webchat: Bot with cognitive services speech services (js) not working when using subscription key instead of a token


Version

I'm using the sample "06.c.cognitive-services-speech-services-js", but with a hardcoded key for Direct Line and a hardcoded subscriptionKey for Cognitive Services Speech.

I implemented this in Electron, but the same thing happens when I use npx serve.

Describe the bug

I can use the microphone button to speak once; after that I get:

```
webchat.js:1 POST https://westeurope.tts.speech.microsoft.com/cognitiveservices/v1 400
webchat.js:1 Error: Failed to syntheis speech, server returned 400
    at VM127 webchat.js:1
Uncaught (in promise) Error: Failed to syntheis speech, server returned 400
Uncaught (in promise) {error: Error: Failed to syntheis speech, server returned 400
    at https://cdn.botframework.com/botframew…, type: "error", target: t}
```

I do get an answer from my bot, but without speech, and I cannot click the microphone button again.

To Reproduce

Steps to reproduce the behavior:

Use a Cognitive Services Speech subscription key instead of an authorization token.

Expected behavior

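Not breaking :) The bot's reply should be spoken, and the microphone button should stay usable after the first turn.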

Additional context

The sample works fine as-is; the problem started when I switched to hardcoded values.

The values are correct, though: the bot is functioning and speech-to-text works once before crashing. (I did try an invalid key to see what happens, and then I get an authentication error, as expected.)

This is my code:

```html
<script>
  (async function () {
    const token = 'XXX';
    const region = 'westeurope';
    const subscriptionKey = 'YYY';

    const webSpeechPonyfillFactory = await window.WebChat.createCognitiveServicesSpeechServicesPonyfillFactory({
      subscriptionKey,
      region
    });

    window.WebChat.renderWebChat({
      directLine: window.WebChat.createDirectLine({ token }),
      webSpeechPonyfillFactory
    }, document.getElementById('webchat'));

    document.querySelector('#webchat > *').focus();
  })().catch(err => console.error(err));
</script>
```


PS: If you can point me to documentation that explains how to get a bot (Direct Line) token and a Cognitive Services authorization token with JS, I might skip the hardcoded keys altogether (this was only a first-step check).
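For reference, a Direct Line secret can be exchanged for a short-lived token by POSTing to the Direct Line token endpoint with the secret as a bearer token. The following is a minimal sketch, assuming a runtime with a global `fetch` (modern browsers or Node.js 18+); the function names are hypothetical, and in practice this would run on a server you control so the secret never reaches the browser.

```javascript
// Hypothetical helper: exchanges a Direct Line secret for a token.
const DIRECT_LINE_TOKEN_URL =
  'https://directline.botframework.com/v3/directline/tokens/generate';

// Builds the fetch() arguments; kept separate so it can be inspected or tested.
function buildDirectLineTokenRequest(secret) {
  return {
    url: DIRECT_LINE_TOKEN_URL,
    init: {
      method: 'POST',
      headers: { Authorization: `Bearer ${secret}` }
    }
  };
}

// Performs the request and returns only the token string.
async function fetchDirectLineToken(secret) {
  const { url, init } = buildDirectLineTokenRequest(secret);
  const res = await fetch(url, init);
  if (!res.ok) {
    throw new Error(`Direct Line token request failed: ${res.status}`);
  }
  // The JSON response also carries conversationId and expires_in.
  const { token } = await res.json();
  return token;
}
```

The returned token is what `window.WebChat.createDirectLine({ token })` expects in the code above.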


I wasn't able to reproduce this error, and your Web Chat code seems fine. A 400 error usually indicates that the language code is not provided or the language isn't supported. If you want to avoid using the subscription key, take a look at the Speech-to-text REST API documentation for information on issuing a token for Cognitive Speech.

Is it possible that the problem is in my bot code?

Would it be useful if I provided my keys?

I do know the bot works with a C# client and Direct Line Speech, though.
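As a sketch of the token approach mentioned above: a Speech subscription key can be exchanged for an authorization token by POSTing to the regional `sts/v1.0/issueToken` endpoint with the `Ocp-Apim-Subscription-Key` header; the response body is the token as plain text. The function names are hypothetical, and this assumes a global `fetch` and that the call runs server-side so the key stays private.

```javascript
// Hypothetical helper: exchanges a Speech subscription key for a token.
function buildSpeechTokenRequest(region, subscriptionKey) {
  return {
    url: `https://${region}.api.cognitive.microsoft.com/sts/v1.0/issueToken`,
    init: {
      method: 'POST',
      headers: { 'Ocp-Apim-Subscription-Key': subscriptionKey }
    }
  };
}

// Performs the request; the response body is the token itself.
async function fetchSpeechToken(region, subscriptionKey) {
  const { url, init } = buildSpeechTokenRequest(region, subscriptionKey);
  const res = await fetch(url, init);
  if (!res.ok) {
    throw new Error(`Speech token request failed: ${res.status}`);
  }
  return res.text();
}
```

In the page, the token would then be passed as `createCognitiveServicesSpeechServicesPonyfillFactory({ authorizationToken, region })`, as the 06.c sample does. These tokens are short-lived (about 10 minutes), so a long-running page needs to fetch a fresh one.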

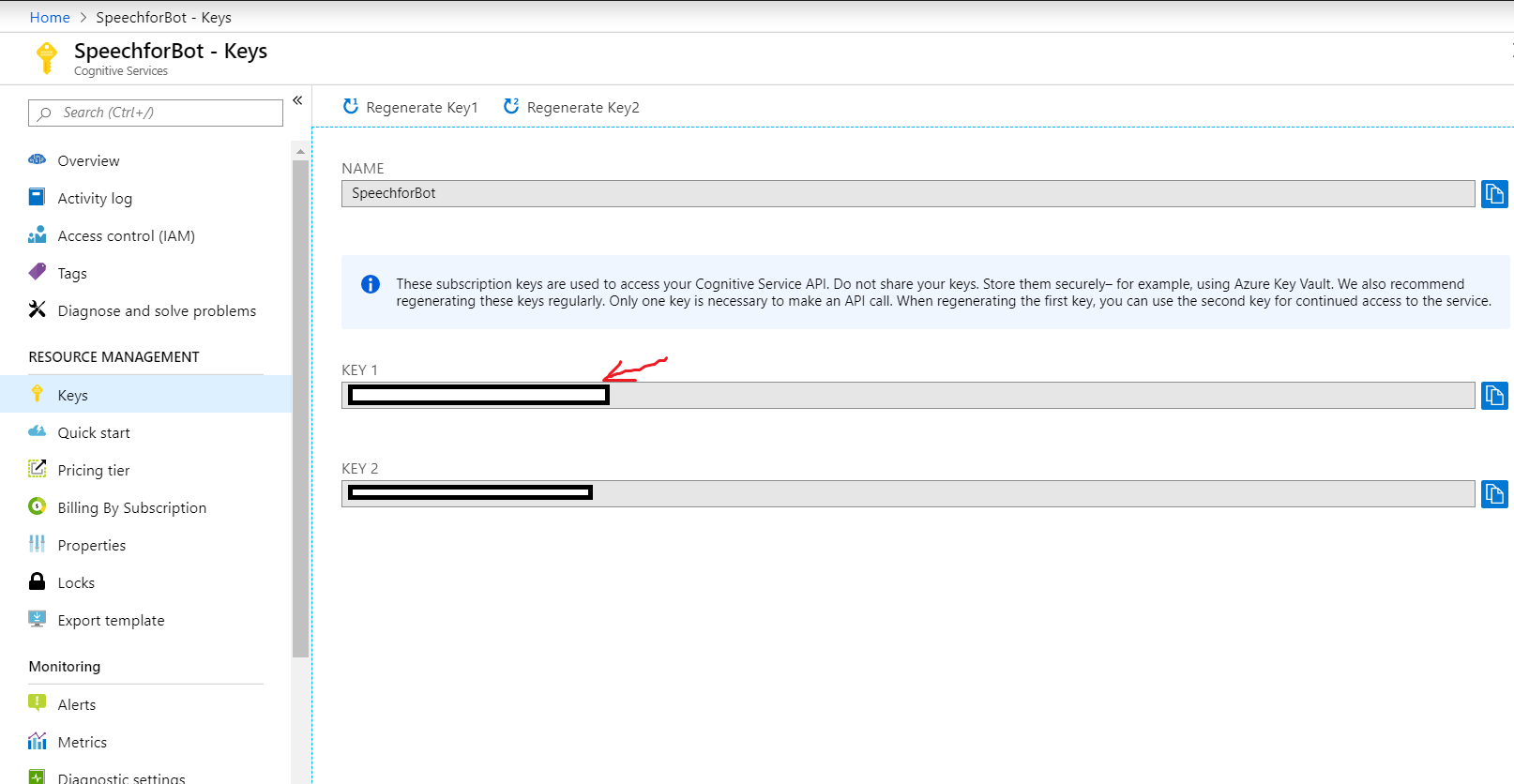

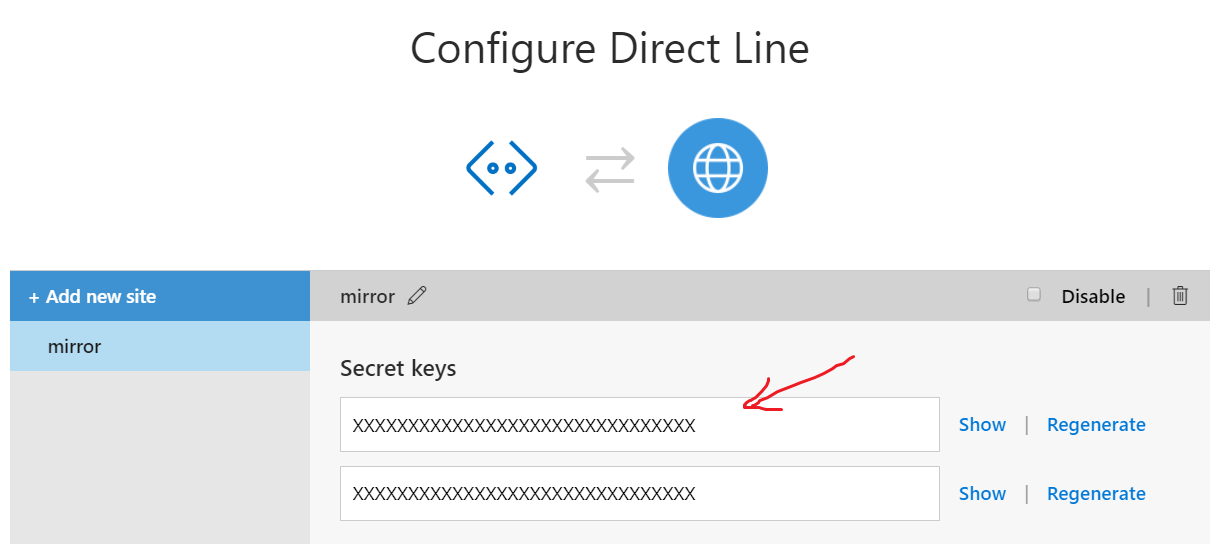

Just to make sure, I'm using these keys:

and:

I don't think your keys would be helpful. Are you sending any input hints or anything speech related in the bot's response?

To convert my Q&A bot to use speech, I followed these steps:

https://docs.microsoft.com/en-us/azure/bot-service/directline-speech-bot?view=azure-bot-service-4.0

Now that I say this, that actually seems to be the problem (?!)

Was I supposed to do something else to add speech? This guide is actually for Direct Line Speech, which is not supported in JS; that would also explain why my bot works with the C# client.

@r3dr4gon Thanks for the additional information. It looks like Web Chat is nesting the Speech Synthesis Markup Language (SSML), which causes the Cognitive Speech ponyfill to fail. If you remove the speak and voice tags from the activity's speak property, it should work fine.
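The suggested workaround amounts to reducing a pre-wrapped SSML string back to its inner content before it goes into the activity's speak property. A minimal sketch, assuming the wrapper is exactly one outer speak element that optionally contains one voice element; `stripSsmlWrapper` is a hypothetical helper, not part of Web Chat, and it is not a general SSML parser (inner tags such as break are left untouched).

```javascript
// Hypothetical helper: removes an outer <speak> and/or <voice> wrapper.
function stripSsmlWrapper(speak) {
  if (typeof speak !== 'string') return speak;
  let text = speak.trim();

  // Drop an outer <speak ...>...</speak>, keeping its contents.
  const speakMatch = /^<speak\b[^>]*>([\s\S]*)<\/speak>$/i.exec(text);
  if (speakMatch) text = speakMatch[1].trim();

  // Drop an outer <voice ...>...</voice>, keeping its contents.
  const voiceMatch = /^<voice\b[^>]*>([\s\S]*)<\/voice>$/i.exec(text);
  if (voiceMatch) text = voiceMatch[1].trim();

  return text;
}
```

Plain strings pass through unchanged, so the helper is safe to apply to every outgoing speak value. In this thread the fix was made on the bot side instead, by never wrapping the text in the first place.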

This is a known issue in Web Chat. I'm closing this issue as we are already using #1322 to track the bug.

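Awesome... Indeed, changing the Speak function to:

```csharp
// Requires: using Microsoft.Bot.Builder; using Microsoft.Bot.Schema;
public IActivity Speak(string message)
{
    var activity = MessageFactory.Text(message);
    // Plain text only, with no <speak>/<voice> SSML wrapper, so Web Chat
    // does not send nested SSML to the speech service.
    activity.Speak = message;
    return activity;
}
```

solved my problem. Thanks a lot for the help.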
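For a bot written in Node.js instead of C#, the same fix can be sketched as a plain Bot Framework activity object whose speak field holds unwrapped text. `createSpeakActivity` is a hypothetical name; it is written without dependencies so it can be passed to `context.sendActivity(...)` in botbuilder, which accepts a partial activity. `MessageFactory.text(message, message)` from botbuilder would be an equivalent one-liner.

```javascript
// Hypothetical Node.js counterpart of the C# Speak() helper.
function createSpeakActivity(message) {
  return {
    type: 'message',
    text: message,
    // Plain text, not wrapped in <speak>/<voice>, mirroring the C# fix.
    speak: message
  };
}
```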
