Azure-sdk-for-js: [Service Bus] Long-running Streaming Listener stops receiving/de-queuing messages after a while

I have a listener that follows this pattern: https://github.com/Azure/azure-sdk-for-js/blob/master/packages/%40azure/servicebus/data-plane/examples/javascript/gettingStarted/receiveMessagesStreaming.js

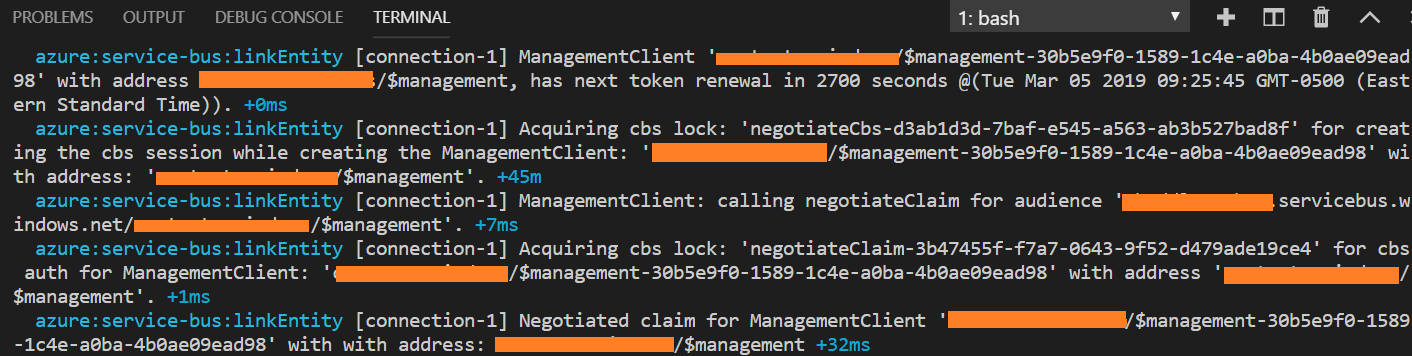

The receiver.receive() call happens in my app at startup, and it works... but after a period of time (or maybe a period of _idle_ time... not sure which), the connection to servicebus doesn't work anymore and messages are stuck in the bus and not de-queued. These are the console messages when that happens:

I can't find any tutorials or documents illustrating a different receiving paradigm that either 1) polls Service Bus or 2) keeps the connection alive and working regardless of up-time, so I'm inclined to think this is a bug in the receive() behavior.


Original issue posted by @lululeon https://github.com/Azure/azure-sdk-for-node/issues/4819

/cc @ramya-rao-a

@lululeon There have been quite a few changes since the last time you tried the library. Can you please try the latest version (1.0.0-preview.3) and let us know if you still see issues?

The logs that you have shared are related to renewing tokens for the $management link and are normal. Can you tell us what value you set for the DEBUG env variable to get those logs printed?

@ramya-rao-a - uhm... :( unfortunately we moved on from using azure service bus... so I haven't had the opportunity to use the newer versions. Sorry! But the debug messages were either from DEBUG=azure:service-bus* nodemon app.js or DEBUG=azure:service-bus:receiverstreaming nodemon app.js. Hope that helps!!

Thanks @lululeon

If I may ask, what made you move from azure service bus? And what did you move to?

Any feedback that you can provide to help us improve is much appreciated.

I think we might be seeing this as well, with version 1.0.2 and previous versions. I'll try to collect some logs but it's hard to pinpoint exactly when this occurs as there is no notice of it.

@ramya-rao-a We are also seeing this behavior. We specifically have seen this using the 1.0.0-preview.2 version and now on the 1.0.2 version. This issue is present for createReceiver on both a subscriptionClient and queueClient. As @agates said, I have also been unable to find any relevant log messages, partially due to not knowing when the issue even begins. I have noticed some receivers able to run for days without issue yet others stop their processing after only one day. Please let me know if there is anything else that I can do to help the research of this issue or if there is any further information that I may be able to provide.

Thanks for reporting @agates and @MirkSerain

The streaming receiver takes an error callback, do you see any evidence of your error callback being called at any point?

Yes @ramya-rao-a, I am seeing it with this error, will try to find something more specific.

2019-06-17T08:11:31.072494023Z 2019-06-17T08:11:31.072Z logger Error receiving message from service buss subscription

2019-06-17T08:11:31.076009537Z 2019-06-17T08:11:31.075Z logger MessageLockLostError: Failed to complete the message as the AMQP link with which the message was received is no longer alive.

2019-06-17T08:11:31.076020337Z at Object.translate (/node_modules/@azure/amqp-common/dist/index.js:741:17)

2019-06-17T08:11:31.076024237Z at throwIfMessageCannotBeSettled (/node_modules/@azure/service-bus/dist/index.js:1054:34)

2019-06-17T08:11:31.076027637Z at ServiceBusMessage.<anonymous> (/node_modules/@azure/service-bus/dist/index.js:864:13)

2019-06-17T08:11:31.076031437Z at Generator.next (<anonymous>)

2019-06-17T08:11:31.076034837Z at /node_modules/tslib/tslib.js:107:75

2019-06-17T08:11:31.076038237Z at new Promise (<anonymous>)

2019-06-17T08:11:31.076041637Z at Object.__awaiter (/node_modules/tslib/tslib.js:103:16)

2019-06-17T08:11:31.076044837Z at ServiceBusMessage.complete (/node_modules/@azure/service-bus/dist/index.js:853:24)

2019-06-17T08:11:31.076048238Z at StreamingReceiver.alertsOnMessageHandler [as _onMessage] (file:///home/site/wwwroot/lib/azure/service-bus-subscription-receiver.mjs:127:27)

2019-06-17T08:11:31.076051738Z at process._tickCallback (internal/process/next_tick.js:68:7)

Going to enable more debugging to get more relevant messages.
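For readers hitting the same MessageLockLostError from `complete()`: a minimal sketch of guarding the settle call inside the message handler, so a dead link does not surface as an unhandled failure. The helper name `settleSafely` and the `log` parameter are made up for this illustration, and matching on `err.name` is an assumption based on the error name printed in the log above, not a documented contract.

```javascript
// Sketch only: `message` is any object with an async complete() method,
// such as the ServiceBusMessage passed to the message handler.
// `settleSafely` and `log` are hypothetical names for this illustration.
async function settleSafely(message, log = console.warn) {
  try {
    await message.complete();
    return "completed";
  } catch (err) {
    if (err && err.name === "MessageLockLostError") {
      // The lock is gone (for example, the AMQP link died), so this message
      // can no longer be settled. In peek-lock mode the service makes it
      // available again once the lock expires, so processing should be idempotent.
      log(`Lock lost, message will be redelivered: ${err.message}`);
      return "lock-lost";
    }
    throw err; // Anything else is unexpected; let the caller decide.
  }
}
```

This only keeps the handler from failing on an already-lost lock; it does not bring the link back.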

@ramya-rao-a I am seeing quite a bit of this. Not sure if it's related or normally expected.

2019-06-20T08:22:14.400996932Z 2019-06-20T08:22:14.400Z rhea:io [connection-1] read 296 bytes

2019-06-20T08:22:14.401239633Z 2019-06-20T08:22:14.401Z rhea:io [connection-1] got frame of size 296

2019-06-20T08:22:14.401472434Z 2019-06-20T08:22:14.401Z rhea:raw [connection-1] RECV: 296 0000012802000001005316d00000011800000003434100531dd00000010a00000003a317616d71703a6c696e6b3a6465746163682d666f72636564a1ea546865206c696e6b20274733313a313338383830303a64656c617965642d616c657274732d30333264363930612d663362632d303634322d623163372d6434663031646133636433312720697320666f726365206465746163686564206279207468652062726f6b65722064756520746f206572726f7273206f6363757272656420696e207075626c6973686572286c696e6b32313237383532292e20446574616368206f726967696e3a20416d71704d6573736167655075626c69736865722e49646c6554696d6572457870697265643a2049646c652074696d656f75743a2030303a31303a30302e40

2019-06-20T08:22:14.401866736Z 2019-06-20T08:22:14.401Z rhea:frames [connection-1]:1 <- detach#16 {"closed":true,"error":{"condition":"amqp:link:detach-forced","description":"The link 'G31:1388800:my-topic-032d690a-f3bc-0642-b1c7-d4f01da3cd31' is force detached by the broker due to errors occurred in publisher(link2127852). Detach origin: AmqpMessagePublisher.IdleTimerExpired: Idle timeout: 00:10:00."}}

2019-06-20T08:22:14.402077236Z 2019-06-20T08:22:14.402Z rhea:events [connection-1] Link got event: sender_error

2019-06-20T08:22:14.402273537Z 2019-06-20T08:22:14.402Z rhea-promise:sender [connection-1] sender got event: 'sender_error'. Re-emitting the translated context.

2019-06-20T08:22:14.402437638Z 2019-06-20T08:22:14.402Z rhea-promise:translate [connection-1] Translating the context for event: 'sender_error'.

2019-06-20T08:22:14.403343342Z 2019-06-20T08:22:14.403Z azure:service-bus:error [connection-1] An error occurred for sender 'my-topic-032d690a-f3bc-0642-b1c7-d4f01da3cd31': { DetachForcedError: The link 'G31:1388800:my-topic-032d690a-f3bc-0642-b1c7-d4f01da3cd31' is force detached by the broker due to errors occurred in publisher(link2127852). Detach origin: AmqpMessagePublisher.IdleTimerExpired: Idle timeout: 00:10:00.

2019-06-20T08:22:14.403358742Z at Object.translate (/node_modules/@azure/amqp-common/dist/index.js:741:17)

2019-06-20T08:22:14.403363242Z at Sender.MessageSender._onAmqpError (/node_modules/@azure/service-bus/dist/index.js:1213:40)

2019-06-20T08:22:14.403367242Z at Sender.emit (events.js:182:13)

2019-06-20T08:22:14.403371042Z at emit (/node_modules/rhea-promise/dist/lib/util/utils.js:129:24)

2019-06-20T08:22:14.403384742Z at Object.emitEvent (/node_modules/rhea-promise/dist/lib/util/utils.js:140:9)

2019-06-20T08:22:14.403388742Z at Sender._link.on (/node_modules/rhea-promise/dist/lib/link.js:252:25)

2019-06-20T08:22:14.403392442Z at Sender.emit (events.js:182:13)

2019-06-20T08:22:14.403396242Z at Sender.link.dispatch (/node_modules/rhea/lib/link.js:59:37)

2019-06-20T08:22:14.403400042Z at Sender.link.on_detach (/node_modules/rhea/lib/link.js:159:32)

2019-06-20T08:22:14.403403542Z at Session.on_detach (/node_modules/rhea/lib/session.js:730:27)

2019-06-20T08:22:14.403416842Z name: 'DetachForcedError',

2019-06-20T08:22:14.403421342Z translated: true,

2019-06-20T08:22:14.403424742Z retryable: true,

2019-06-20T08:22:14.403427942Z info: null,

2019-06-20T08:22:14.403431242Z condition: 'amqp:link:detach-forced' }.

@agates

Look at the logs right before the "Failed to complete the message as the AMQP link with which the message was received is no longer alive." message. You should see the reason for the link dying.

The DetachForcedError is for a sender link and is unrelated to your receiving issue. It's just saying that the sender link has been idle, so the service killed it.

In my case it fails without throwing an error

Just wondering: in such a case, do we have access to a status so the client can know that it won't receive any messages?

This one returns false:

receiver.isClosed

I have been having a similar issue when using registerMessageHandler while listening to a queue. Occasionally, I will check my app and messages will be backed up in the queue even though no errors have been thrown in the error callback. My app runs 24x7 and I'm concerned with stability because I don't want to be checking it every day to make sure it is still reading from the queue. Are there any best practices for ensuring the AMQP link is up and running at all times?

In the onMessage event, should I be checking that the AMQP connection is still alive, or something like that? This is the closest post I've seen so far to my issue. I haven't found any good code examples or explanations to fix this other than "switch to using an azure function with service bus bindings". But I want to keep this as a node app if possible.

Thanks in advance for any input you have.

Can we re-open this issue since it appears to still be happening for multiple people?

When there is a retryable error for the receiver, the library tries to recover the link behind the scenes. But this retry only happens a finite number of times. Once the max retry count is reached, the library currently fails to call the user's error handler.

We are currently working on fixing this and should have an update soon.

@bdelville,

> In my case it fails without throwing an error
>
> Just wondering: in such a case, do we have access to a status so the client can know that it won't receive any messages?
>
> This one returns false:
>
> receiver.isClosed

You can try the isReceivingMessages property on the receiver.
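A rough sketch of how that suggestion could be turned into a periodic health check. `isReceiverHealthy` and `startWatchdog` are hypothetical helper names, and the code accepts `isReceivingMessages` as either a method or a plain property because the exact shape may differ between versions. Whether the flag actually flips in the silent-failure case described above is not confirmed in this thread.

```javascript
// Sketch only: `receiver` is whatever receiver object your app created.
// `isReceiverHealthy` and `startWatchdog` are hypothetical helper names.
function isReceiverHealthy(receiver) {
  if (!receiver || receiver.isClosed) return false;
  const flag = receiver.isReceivingMessages;
  // Accept either a method or a plain property, since that may differ by version.
  return typeof flag === "function" ? Boolean(flag.call(receiver)) : Boolean(flag);
}

// Calls onUnhealthy() whenever a periodic check finds the receiver not receiving.
// Returns a function that stops the watchdog.
function startWatchdog(getReceiver, onUnhealthy, intervalMs = 60000) {
  const timer = setInterval(() => {
    if (!isReceiverHealthy(getReceiver())) onUnhealthy();
  }, intervalMs);
  // Do not keep the process alive just for the watchdog.
  if (typeof timer.unref === "function") timer.unref();
  return () => clearInterval(timer);
}
```

`onUnhealthy` could log, raise an alert, or re-create the receiver; which of those is appropriate depends on the app.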

@Burnett2k, @bdelville,

We have just released an update (1.1.1) to the @azure/service-bus package which has the fix to the issue of the library never calling the user's error handler if it fails all its retry attempts to recover broken receivers. After upgrading to this version your error handler should be called when there is a problem with the receiver.

Thank you for the prompt release. We have not observed this issue in the past 12 days. (That also means we haven't had a chance to test catching the error if something similar happens.)

I have also not had an issue since upgrading to 1.1.1 about a week ago. The long-running receiver did lose its connection at one point, but it looks like it gracefully reconnected and none of the SB messages were stuck on the queue for a significant amount of time. 👍 It would be cool if you could add an example to the examples section which shows how you'd implement retry logic if your receiver lost its AMQP connection.
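Until an official sample exists, here is one possible shape for such retry logic, as a sketch and not the library's recommended pattern. It assumes the receiver exposes `registerMessageHandler(onMessage, onError)` and an async `close()`, as the 1.x receiver does. `superviseReceiver`, `createReceiver`, `shouldRestart`, and `schedule` are hypothetical names introduced here. Restarting on every error by default is also an assumption; a real app would probably restart only for errors that mean the receiver is gone for good.

```javascript
// Sketch only: `createReceiver` is a hypothetical factory supplied by your app
// that returns a fresh receiver each time it is called.
function superviseReceiver(createReceiver, onMessage, options = {}) {
  const baseDelayMs = options.baseDelayMs || 1000;
  const maxDelayMs = options.maxDelayMs || 60000;
  const shouldRestart = options.shouldRestart || (() => true);
  const schedule = options.schedule || setTimeout; // injectable for tests
  let attempt = 0;
  let stopped = false;
  let current = null;

  function start() {
    if (stopped) return;
    const receiver = createReceiver();
    current = receiver;
    let handled = false; // react only to the first qualifying error per receiver
    receiver.registerMessageHandler(
      async (message) => {
        attempt = 0; // a delivered message means the link works again
        await onMessage(message);
      },
      (err) => {
        if (handled || stopped || !shouldRestart(err)) return;
        handled = true;
        // Exponential backoff, capped at maxDelayMs.
        const delay = Math.min(baseDelayMs * 2 ** attempt, maxDelayMs);
        attempt += 1;
        // Close the broken receiver (best effort) before scheduling a new one.
        Promise.resolve()
          .then(() => receiver.close())
          .catch(() => {})
          .then(() => schedule(start, delay));
      }
    );
  }

  start();
  return {
    // Stops supervising and closes the current receiver.
    stop() {
      stopped = true;
      if (!current) return Promise.resolve();
      return Promise.resolve()
        .then(() => current.close())
        .catch(() => {});
    },
  };
}
```

In an app, `createReceiver` might wrap something like `queueClient.createReceiver(...)`. The old receiver is closed before the new one is scheduled because the client may refuse to open a second receiver while one is still open.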

Thanks @Burnett2k

Issue #6616 tracks the need for a sample to show the retries. Please subscribe to that issue; we will get to it right after the holidays.

Closing this issue for now as the latest version of the library seems to have fixed different problems in this area.

Please log a new issue if you find any problems after the upgrade.

@ramya-rao-a

Could you please reopen this issue? I have been facing it for the past few months.

message handler - OperationTimeoutError>

Unable to create the amqp receiver bot-incoming-message-430a5f0f-e241-5b44-84f7-d453a75d12ca due to operation timeout

@gaurav16694 Apologies for the delay in response. Comments on closed issues do not get the same attention as open ones. Can you please log a new issue if you are still having problems with the Service Bus SDK? Also, please note that we have released a new version of the Service Bus package, which we highly recommend migrating to. See the migration guide for more details.