Arctos: Add environmental data parameters to collecting event

For aquatic collections, Sevilleta, NEON, environmental samples etc.

See issue #1265


What does NEON do with environmental data?

I've modeled this out in the past, we can deal with it, but it will take some resources to develop, might require more infrastructure from TACC, and will complicate everything involving events (which is most everything). I'd rather treat it like GenBank: just enter the ID assigned by someone with an environmental data system, and use their data when it's useful.

At one point we discussed a way to deal with fish environmental data through an external link? But that would require data entry in two separate databases?

(Email reply to dustymc's comment of Sep 16, 2017, 9:24 AM.)

Data entry requirements depend on the database and how the data are collected. I would think all environmental data would be "entered" by hooking the machines which gathered it to some other machine. (Just like nobody types sequences into GenBank - I hope!) Any form of entry could happen in an Arctos interface which writes to a remote DB, or a remote DB interface which lives inside Arctos forms, or by supplying Arctos a path to data on a remote system, or by joining datasets based on temporospatial data, or WHATEVER. Arctos is designed and built to interact with other resources, and I don't see Arctos being a limiting or defining factor in how anything involving any kind of remote data might work.

In the fish case, collectors in the field are recording dissolved oxygen, streamflow, etc. with handheld instruments and writing this down with other collecting event info.

(Email reply to dustymc's comment of Sep 16, 2017, 1:06 PM.)

moved from https://github.com/ArctosDB/arctos/issues/1273;

It seems like dealing with this as collecting_event_attributes could work, so here's an expansion of https://github.com/ArctosDB/arctos/issues/1273#issuecomment-380594090 for hopefully-final approval.

New table collecting_event_attributes

collecting_event_attribute_id number: PKEY

collecting_event_id number not null: FKEY-->collecting_event.collecting_event_id number not null

determined_by_agent_id number not null: FKEY-->agent.agent_id number not null (or should this be NULL?)

event_attribute_type varchar2(255) NOT NULL: FKEY-->new table collecting_event_attribute_type

event_attribute_value varchar2(4000) NOT NULL: conditionally controlled by triggers

event_attribute_units varchar2(30) NULL: conditionally controlled by triggers

event_attribute_remark varchar2(4000) NULL

event_determination_method varchar2(4000) NULL

event_determined_date ISO8601 NULL

New table analogous to http://arctos.database.museum/info/ctDocumentation.cfm?table=CTATTRIBUTE_TYPE (controls event_attribute_type)

Possible values:

- drainage

- watershed

- temperature

- pH

(These can be added via authorities; adding them requires no code changes.)
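To make the proposed column list concrete, here is a minimal sketch of the attribute table and its type code table in SQLite, via Python's standard `sqlite3` module. It is a stand-in under stated assumptions, not the Arctos (Oracle) DDL: `varchar2`/`number` become `TEXT`/`INTEGER`, the ISO 8601 date is stored as text, `agent` and `collecting_event` are reduced to bare keys, and the code table borrows the CTCOLL_EVENT_ATTR_TYPE name that appears later in this thread. The trigger-based value/unit controls are deliberately left out.

```python
import sqlite3

# SQLite stand-in for the proposed tables (a sketch, not the Arctos DDL).
# varchar2/number -> TEXT/INTEGER; event_determined_date holds an ISO 8601 string.
SCHEMA = """
CREATE TABLE agent (agent_id INTEGER PRIMARY KEY);
CREATE TABLE collecting_event (collecting_event_id INTEGER PRIMARY KEY);

-- Code table controlling event_attribute_type.
CREATE TABLE ctcoll_event_attr_type (
    event_attribute_type TEXT PRIMARY KEY
);

CREATE TABLE collecting_event_attributes (
    collecting_event_attribute_id INTEGER PRIMARY KEY,
    collecting_event_id INTEGER NOT NULL
        REFERENCES collecting_event (collecting_event_id),
    determined_by_agent_id INTEGER NOT NULL
        REFERENCES agent (agent_id),
    event_attribute_type TEXT NOT NULL
        REFERENCES ctcoll_event_attr_type (event_attribute_type),
    event_attribute_value TEXT NOT NULL,  -- value/unit trigger rules omitted
    event_attribute_units TEXT,
    event_attribute_remark TEXT,
    event_determination_method TEXT,
    event_determined_date TEXT
);
"""


def make_db() -> sqlite3.Connection:
    """Create an in-memory database holding the sketch schema."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves FKs off by default
    conn.executescript(SCHEMA)
    # Seed the type code table with the "possible values" listed above;
    # a new type is just a new row, with no code changes.
    conn.executemany(
        "INSERT INTO ctcoll_event_attr_type VALUES (?)",
        [("drainage",), ("watershed",), ("temperature",), ("pH",)],
    )
    return conn
```

With this in place, inserting an attribute whose type is missing from the code table fails with `sqlite3.IntegrityError`, which is the "controlled by authorities" behavior in miniature.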

New table analogous to http://arctos.database.museum/info/ctDocumentation.cfm?table=CTATTRIBUTE_CODE_TABLES

- value in value_code_table-->event_attribute_value is code-table controlled

- value in unit_code_table-->event_attribute_value is numeric, event_attribute_units is code-table controlled

- no entry-->event_attribute_units must be NULL, event_attribute_value=free-text

Examples:

|attribute type|unit_code_table|value_code_table|result|
|---|---|---|---|
|drainage|NULL|ctdrainage|values are restricted to a (new) authority file|
|temperature|CTTEMPERATURE_UNITS|NULL|values are numeric; units are restricted to http://arctos.database.museum/info/ctDocumentation.cfm?table=CTTEMPERATURE_UNITS|

From those data + "Possible values" above, watershed and pH (not included in the table) would be free-text and unitless. (None of this is a recommendation for data values or controls, it's just an attempt at describing functionality.)
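The three conditional rules above can be sketched as a plain Python check. Everything here is hypothetical: `CONTROLS` stands in for the CTATTRIBUTE_CODE_TABLES-like table, `CODE_TABLES` stands in for the authority files, and their contents (including the unit spellings) are made up for illustration rather than copied from Arctos. In the proposal this logic would live in database triggers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AttributeControl:
    """Hypothetical row of a CTATTRIBUTE_CODE_TABLES-like control table."""
    value_code_table: Optional[str] = None
    unit_code_table: Optional[str] = None


# Made-up authority contents, for illustration only.
CODE_TABLES = {
    "ctdrainage": {"Pecos River", "Rio Grande"},
    "CTTEMPERATURE_UNITS": {"celsius", "fahrenheit", "kelvin"},
}

# Mirrors the example table: watershed and pH have no entry.
CONTROLS = {
    "drainage": AttributeControl(value_code_table="ctdrainage"),
    "temperature": AttributeControl(unit_code_table="CTTEMPERATURE_UNITS"),
}


def validate(attr_type: str, value: str, units: Optional[str]) -> None:
    """Raise ValueError if value/units break the rule for attr_type."""
    control = CONTROLS.get(attr_type)
    if control is None:
        # No entry: free-text value, and units must be NULL.
        if units is not None:
            raise ValueError(f"{attr_type} is unitless, got units {units!r}")
        return
    if control.value_code_table is not None:
        # Value is code-table controlled.
        if value not in CODE_TABLES[control.value_code_table]:
            raise ValueError(f"{value!r} is not in {control.value_code_table}")
    if control.unit_code_table is not None:
        # Value must be numeric; units are code-table controlled.
        try:
            float(value)
        except ValueError:
            raise ValueError(f"{attr_type} needs a numeric value, got {value!r}") from None
        if units not in CODE_TABLES[control.unit_code_table]:
            raise ValueError(f"{units!r} is not in {control.unit_code_table}")
```

For example, `validate("temperature", "5", "kelvin")` passes (unlikely but well-formed data is accepted, "warts and all"), while `validate("pH", "7.2", "units")` is rejected because pH has no unit table.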

Example (partial) data (given the above). This could all be attached to one collecting_event (which could in turn be attached to any number of specimens, media, etc.)

|type|value|units|date|determiner|method|explanation|
|---|------|-----|-----|------|----|------------|
|drainage|something|NULL|today|me|NULL|current determination|
|drainage|somethingelse|NULL|yesterday|me|NULL|previous determination|
|drainage|somethingelse|NULL|yesterday|you|NULL|consensus determination|
|temperature|5|kelvin|last week|me|felt REALLY cold|determination, "warts and all" (e.g., there's no particular reason to reject unlikely data)|
|temperature|5|celsius|last week|you|likely interpretation of unlikely Kelvin data|Curatorial "corrections" fit and can be documented in the same way as everything else|
|temperature|5.600000001|celsius|{YYYY-MM-DDTHH:MM:SSZ}|NOAA weather station|retrieved via API|These data don't necessarily have to come from any particular source (e.g., collectors) and don't have to coincide perfectly with the event (the event may span a day, while temperature readings may come with second precision)|

https://github.com/ArctosDB/arctos/issues/1294 is probably a soft blocker (I don't think it needs to STOP this, but it should share priority): these data could require hundreds of "columns" and so may need an asynchronous/normalized entry option.

Note that the structure of the data does not control the appearance of the UI - we could allow users to search "drainage" from the Locality pane and "temperature" from {wherever} and restrict pH searches to the Curatorial pane or WHATEVER.

Things in here SHOULD be related to place-at-time (collecting events), but there's some flexibility in that as well. And nothing here changes the flexibility inherent to Places (which can be defined as "THAT cubic millimeter" or "somewhere near the planet, probably") or Events (Locality plus somewhere between "THAT second" and "sometime in the last 4 billion years, probably"). I think that makes sense for these data - the "The list is not current for Alaska." comment at https://water.usgs.gov/GIS/huc_name.html suggests these data are best represented as "{drainage} (as defined by SOURCE on DATE)" rather than "{drainage} (an attribute of a place which mostly doesn't change over human timescales, even if we occasionally refine names and boundaries)" as geology data are modeled.

Does this still seem like a viable solution, is there anything I'm not yet understanding, etc?

I think this sounds like a good approach.

(Email reply to dustymc's comment of Apr 24, 2018, 9:55 AM.)

Agree

I would like to request that this get resolved quickly. PLEASE!!!

@Jegelewicz what do you need this for/do you have some example data?

Note that drainage circled back around to geography. (I'm still not absolutely sure it belongs there, although it seems to work well enough, but limiting this to things one can measure makes sense to me.)

Add to AWG agenda for prioritization?

Yes, let's prioritize and bring to AWG, but ideally work on it in advance. We need to resolve issues with our fish collection database and this has become urgent.

(Email reply to dustymc's comment of Jan 31, 2019, 3:24 PM.)

> @Jegelewicz what do you need this for/do you have some example data?

UTEP ants: a grant-funded project to catalog them! Need the following:

- air temperature (currently a specimen attribute); I feel like this should be in the collecting event
- soil temperature: temperature of soil (degrees C or F)
- humidity: the amount of water vapor present in air (quantitative; if qualitative, put in event remarks)
- wind speed: speed of wind (m/sec)
- climate description: free text

@mvzhuang can provide actual data if needed.

Actual data is always very useful.

This is "locality," and locality forms are complicated. I would very much like to write some specialized forms to simplify normal stuff (https://github.com/ArctosDB/arctos/issues/1357), those will be very complicated, and there are still grumbly remarks on https://github.com/ArctosDB/arctos/issues/1705. Can we QUICKLY finalize the locality model decisions? I'm happy to talk, but I think that has to block this until it's resolved.

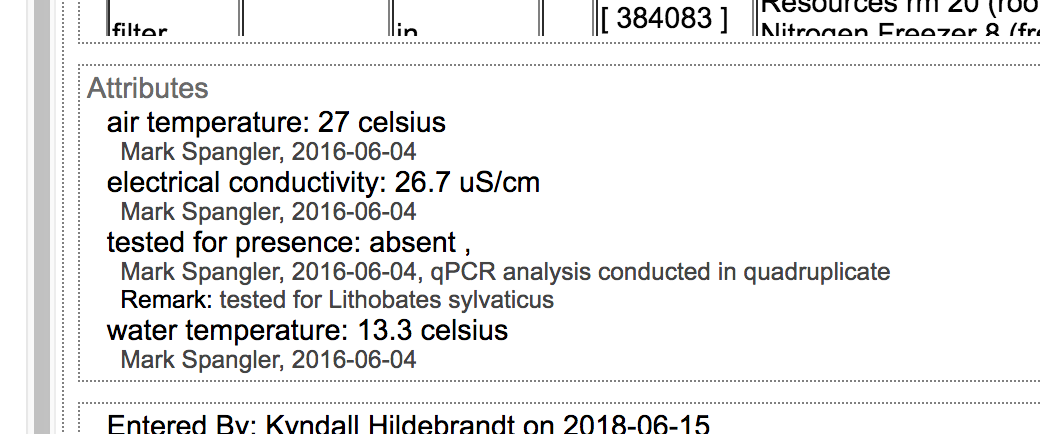

Example data: all the environmental samples at UAM! Right now, I put all the environmental data parameters into attributes, which I find to be a poor place for them.

MSB Fish environmental data; note I've included original coordinates as TRS and UTM data, which do not show up on the current cataloged records.

MSB Fish environmental variables.csv

https://drive.google.com/a/carachupa.org/file/d/1bzTfDsvk0ZC8FlOOXbOUHDngNqP_B7Ia/view?usp=drive_web

(Email reply to Kyndall Hildebrandt's comment of Apr 4, 2019, 6:02 PM.)

Thanks - does anyone see anything in those examples which won't fit in https://github.com/ArctosDB/arctos/issues/1270#issuecomment-383984668? I don't.

Can we commit to our locality model and proceed with this?

Back to next task re: https://github.com/ArctosDB/arctos/issues/1705#issuecomment-482300045

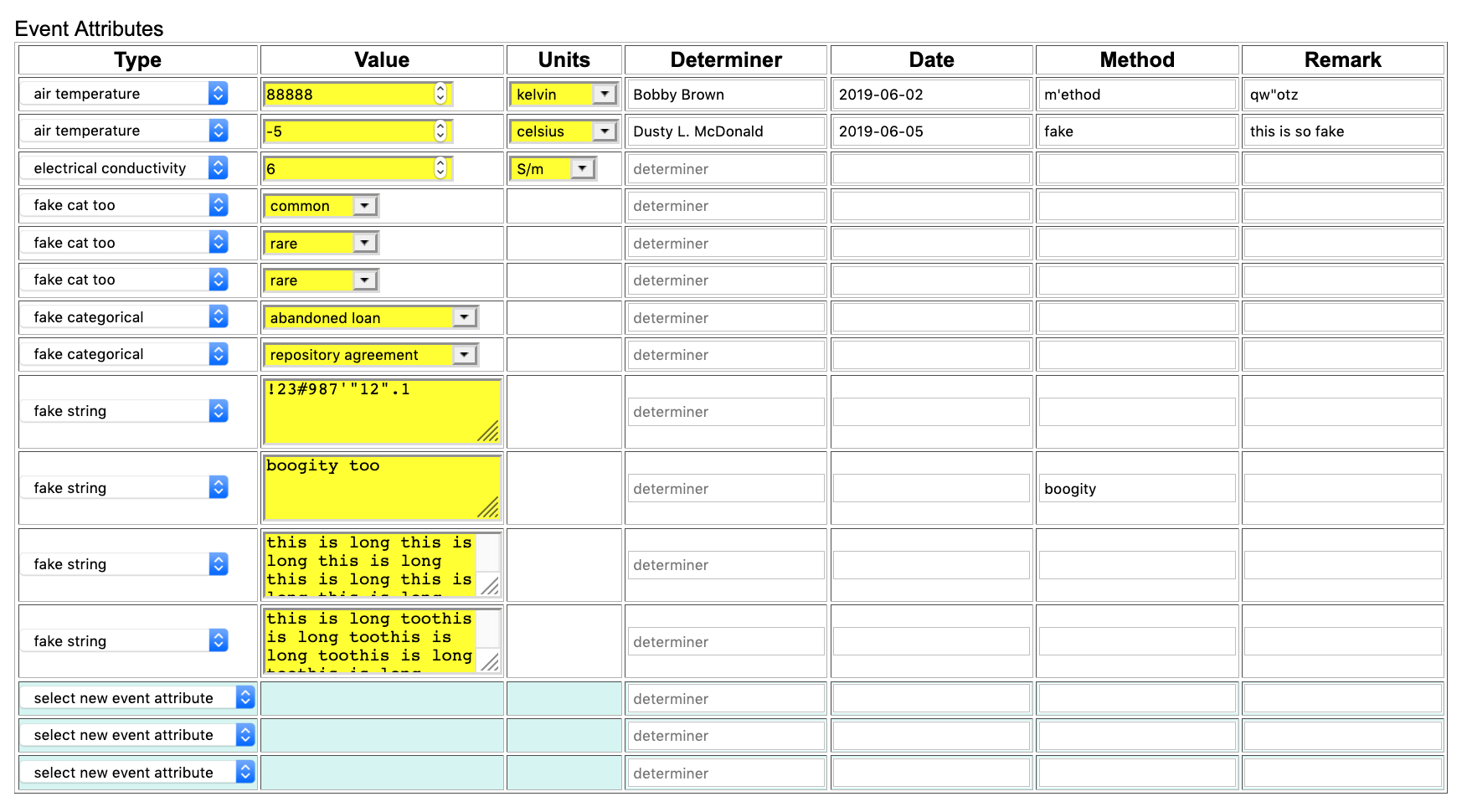

The core of this is functional, and I could use some feedback before I start sprinkling it around.

Code tables (CTCOLL_EVENT_ATTR_TYPE and CTCOLL_EVENT_ATT_ATT) are functional, and I'm 95% sure I won't need to flush that. I think it's safe to set up and datatype attributes, but I don't expect any problems there - that doesn't need much testing, it's simple even if it is broken, whatever. Let me know if my fake/test stuff is in your way and I'll delete it.

There is a management form on edit collecting event. Immediate feedback on it would be greatly appreciated; I need to copy it to fork-edit fairly soon (I need duplicates to stress-test some internal stuff), so it would be good to have any kinks ironed out first. I will almost certainly find a reason to flush the data again; we're not ready for "real" data yet, so please DO test, but not with anything you'll expect to find later.

I've been using http://arctos.database.museum/Locality.cfm?action=editCollEvnt&collecting_event_id=11316538 - feel free to use it or any other event that's not attached to anything permanent.

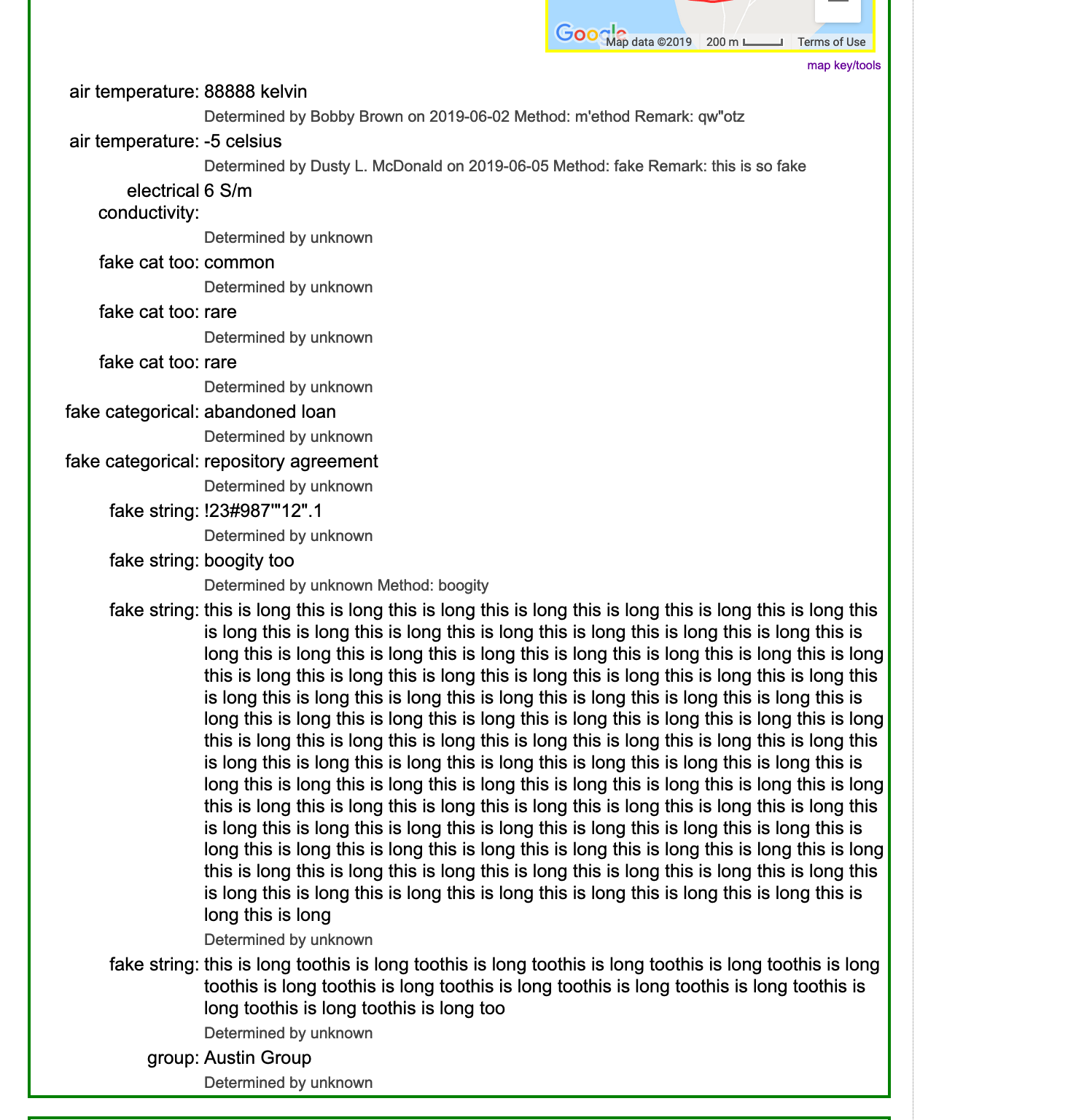

Much lower priority from my perspective: we'll want to discuss how these data are displayed in various places. You can see them only from specimendetail at the moment (e.g., http://arctos.database.museum/guid/UAMObs:Ento:238566); they're semi-sharing space with, and formatted identically to, geology.

Here is a test of adding one attribute. Works for me.

https://arctos.database.museum/guid/MSB:Fish:98278

For the display, I think having to click to display environmental data in the collecting event makes sense. Next step: how can we make these searchable? E.g., all Rhinichthys from the Pecos River drainage collected from date xx to date yy with flow rate z, pH 7.2, depth 1 m, salinity 0.5, etc.
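One way to think about the attribute part of that search, sketched in Python under loud assumptions: attribute rows are flattened to hypothetical `(collecting_event_id, type, value, units)` tuples, every criterion is an inclusive numeric range (an exact value such as pH 7.2 is just `(7.2, 7.2)`), and an event matches only if it satisfies all criteria. Units are ignored, so mixed-unit data would need normalizing first; taxon and date filters would come from the normal specimen search and are not shown.

```python
from typing import Iterable, Optional

# Hypothetical flattened attribute row:
# (collecting_event_id, event_attribute_type, event_attribute_value, event_attribute_units)
Row = tuple[int, str, str, Optional[str]]


def events_matching(rows: Iterable[Row],
                    criteria: dict[str, tuple[float, float]]) -> set[int]:
    """Return IDs of events meeting every inclusive numeric-range criterion.

    Units are ignored in this sketch, so values recorded in different units
    would have to be normalized before comparing.
    """
    satisfied: dict[int, set[str]] = {}
    for event_id, attr_type, value, _units in rows:
        if attr_type not in criteria:
            continue
        low, high = criteria[attr_type]
        try:
            number = float(value)
        except ValueError:
            continue  # free-text values cannot satisfy a numeric range
        if low <= number <= high:
            satisfied.setdefault(event_id, set()).add(attr_type)
    wanted = set(criteria)
    # AND semantics: an event must satisfy every requested attribute type.
    return {event_id for event_id, types in satisfied.items() if types >= wanted}
```

The same AND-across-attributes logic maps naturally onto one join (or `EXISTS` subquery) per requested attribute in SQL.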

File with Fish enviro attributes attached. We need to create the attributes to match these fields as a start.

enviro data and extra fields for test upload.csv

https://drive.google.com/a/carachupa.org/file/d/1VNmQ7QcywGF2vpX4QNTMZ9ln-cu1GbRl/view?usp=drive_web

(Email reply to dustymc's comment of Jun 21, 2019, 9:21 AM.)

Thanks!

I'm not convinced that "isn't visible" and "doesn't exist" are functionally distinct for most users, and I really dislike search results that don't contain what was searched for. We may end up with one or both of those anyway, but it's still evil and we should continue looking for a better solution.

As above, I think you can set up the code tables at any time.

Search should be straightforward. I'll probably add this with https://github.com/ArctosDB/arctos/issues/2097.

I can build a bulkloader at some point (I'm probably at least a week out from that, if I don't get pulled in some new direction). It would be a tremendous amount of fiddly work to find events from Arctos specimens, find specimens that should share events in your data (in what you attached, the event attributes are linked to specimens, not events), and reconcile all of that. Lacking better ideas, I recommend we use the "fork-edit" method, which will just make new events for each specimen and let the cleanup scripts put them back together when that's possible. That is very expensive computationally, so it would have to run asynchronously; that's not a problem, just some additional complexity. I don't think it's a problem here (I think MSB:Fish all have one event and we'll want to replace it), but for other stuff where we want to, e.g., archive one of many events and add a new one, that may get unbearably complicated. The merger scripts currently have a 30-day gestation period, which provides an opportunity to add denormalizing data to some of the specimens which should share events; just something to be aware of.
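A rough sketch of the fork-then-merge idea, assuming (hypothetically) that two events count as "the same" when locality, dates, and the full set of attributes match, and that the merger skips anything younger than the 30-day gestation period. The `Event` class, `fork`, and `merge_duplicates` are illustrative names, not Arctos code, and the real cleanup scripts surely compare more fields.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

GESTATION = timedelta(days=30)  # the merge delay mentioned above


@dataclass
class Event:
    event_id: int
    created: date
    locality_id: int
    began_date: str
    ended_date: str
    # frozenset of (type, value, units) so equal attribute sets compare equal
    attributes: frozenset = field(default_factory=frozenset)

    def content_key(self) -> tuple:
        return (self.locality_id, self.began_date, self.ended_date, self.attributes)


def fork(events: dict, specimen_event: dict, specimen_id, new_id: int, today: date) -> Event:
    """Give one specimen its own copy of its current event (the 'fork')."""
    old = events[specimen_event[specimen_id]]
    events[new_id] = Event(new_id, today, old.locality_id,
                           old.began_date, old.ended_date, old.attributes)
    specimen_event[specimen_id] = new_id
    return events[new_id]


def merge_duplicates(events: dict, specimen_event: dict, today: date) -> set:
    """Repoint specimens from duplicate events to the lowest-numbered twin.

    Returns the IDs of events whose specimens were repointed.
    """
    survivors: dict = {}
    remap: dict = {}
    for ev in sorted(events.values(), key=lambda e: e.event_id):
        if today - ev.created < GESTATION:
            continue  # still gestating; leave it alone for now
        key = ev.content_key()
        if key in survivors:
            remap[ev.event_id] = survivors[key]
        else:
            survivors[key] = ev.event_id
    for specimen, event_id in specimen_event.items():
        if event_id in remap:
            specimen_event[specimen] = remap[event_id]
    return set(remap)
```

Forking two specimens and loading identical attribute data onto both leaves two events until the gestation period passes, after which they collapse back into one; loading different data onto either fork during those 30 days keeps them apart.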

Does that sound workable, or do you (or anyone) have better bulkloader ideas?

Let's give it a shot. I added more attributes to that record, FYI, so you can see the kinds of fields we are dealing with.

(Email reply to dustymc's comment of Jun 21, 2019, 1:23 PM.)

It's working for me too. So cool!!

Is it possible to prevent users from changing the attribute type once a collecting event attribute has been created (like how specimen and part attributes work)? When I was playing around with the feature, changing the attribute type erased data that I'd previously saved and it seems like an easy thing to do inadvertently while editing other parts of a collecting event.

Also, could tracking collecting event attributes be integrated into the collecting event edit archive?

> prevent users from changing the attribute type

Yes, it's possible - I'll see what I can come up with.

> collecting event edit archive

I've been flip-flopping around on that too. My current wishy-washy thinking is that event attributes are metadata (they don't CHANGE the fundamental nature of an Event, they can only ADD) and as such don't need to be archived. I'm happy enough to go the other way if y'all don't agree.

- Existing attributes now offer only their existing type, plus delete

- The table/form is now in fork-edit specimen events
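A minimal sketch of the "type is fixed once saved" rule, written as a hypothetical server-side guard that mirrors the form change: an existing row's type can't be edited, and the only way to "change" it is to delete the row and add a new one. `apply_edit` and the dict-based row are illustrative assumptions, not Arctos code.

```python
def apply_edit(existing: dict, edit: dict) -> dict:
    """Return an edited copy of an existing event attribute row.

    The type of a saved row is fixed; to use a different type, delete the
    row and add a new one. The input row is never modified.
    """
    new_type = edit.get("event_attribute_type", existing["event_attribute_type"])
    if new_type != existing["event_attribute_type"]:
        raise ValueError("event_attribute_type is fixed once saved; "
                         "delete the attribute and add a new one")
    return {**existing, **edit}
```

Values, units, remarks, and so on stay editable; only a type change is refused, so an accidental dropdown change can no longer wipe saved data.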

Additional thoughts on archiving OLD event attribute values? It's fairly easy to set up an archive at this point; if we're going there I'd like to do it soon-ish. (Display/UI is less trivial, but that's more or less a separate low-priority issue.)

I agree with setting up an archive.

(Email reply to dustymc's comment of Jun 25, 2019, 10:07 AM.)

There's a link to the event attribute archive (which may get zapped and rebuilt in the near future...) from the event archive.

Unless someone has good reasons not to, I'm going to cascade delete both event attributes and event attribute archives from the parent event. I can't see any reason that's wrong, and it's more or less necessary to both maintaining the archives and cleaning up the messes left by the fork-edit form.
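A sketch of the archive-plus-cascade behavior in SQLite via Python's `sqlite3`, under assumptions: the table and column names are simplified stand-ins (the archive table name is hypothetical), the archive is filled by a `BEFORE UPDATE` trigger that copies the OLD values, and both the live attributes and their archive rows use `ON DELETE CASCADE` from the parent event. SQLite only enforces foreign keys, and therefore cascades, after `PRAGMA foreign_keys = ON`.

```python
import sqlite3

# Simplified stand-ins for the live and archive tables (hypothetical names).
SCHEMA = """
CREATE TABLE collecting_event (collecting_event_id INTEGER PRIMARY KEY);

CREATE TABLE collecting_event_attributes (
    collecting_event_attribute_id INTEGER PRIMARY KEY,
    collecting_event_id INTEGER NOT NULL
        REFERENCES collecting_event (collecting_event_id) ON DELETE CASCADE,
    event_attribute_type TEXT NOT NULL,
    event_attribute_value TEXT NOT NULL
);

CREATE TABLE collecting_event_attributes_archive (
    archive_id INTEGER PRIMARY KEY,
    collecting_event_attribute_id INTEGER NOT NULL,
    collecting_event_id INTEGER NOT NULL
        REFERENCES collecting_event (collecting_event_id) ON DELETE CASCADE,
    event_attribute_type TEXT NOT NULL,
    event_attribute_value TEXT NOT NULL,
    archived_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);

-- Copy the OLD values into the archive whenever an attribute row is updated.
CREATE TRIGGER archive_event_attribute
BEFORE UPDATE ON collecting_event_attributes
BEGIN
    INSERT INTO collecting_event_attributes_archive (
        collecting_event_attribute_id, collecting_event_id,
        event_attribute_type, event_attribute_value)
    VALUES (OLD.collecting_event_attribute_id, OLD.collecting_event_id,
            OLD.event_attribute_type, OLD.event_attribute_value);
END;
"""


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with cascading deletes enabled."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # required for ON DELETE CASCADE
    conn.executescript(SCHEMA)
    return conn
```

Editing an attribute leaves its previous value in the archive; deleting the parent event then removes both the live attributes and their archive rows in one statement, which is the cleanup the fork-edit form needs.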

There's a bulkloader in the usual place, with the option to fork events for specimens.

This is now searchable and has some skeletal documentation, and the code table viewer is happy. I need to do some more testing on merging; then, if nobody can find anything else missing/broken, I'll delete the test data and we can use this.

The big obvious gap is data entry. The only sorta-practical approaches I can think of are a new option in the "more..." widget, or dealing with event attributes either before or after specimen data entry. The "more..." thing is semi-complicated and seems to cause lots of complaints, so I'm not crazy about that option. I suspect these data will mostly be normalized in field-notes-or-whatever; if that's true, then de-normalizing them so they can be (mis)entered with every specimen (and then hopefully letting the merge scripts pull them back together, except the stuff that's inevitably entered wrong won't merge...) doesn't make much sense. The upload would have to run in fork-edit mode, so it could be doubly confusing when picking localities (and then moving the specimen out of them when you load the attribute data). Can we just expect (and instruct) data entry to use pre-created Events (or bulkload post-entry, but that's sort of messy as well) when there's environmental data? I think some collections do that for everything, so I don't think it's a particularly inefficient approach in general (and perhaps we can solicit a how-to - anyone??).

> Can we just expect (and instruct) data entry to use pre-created Events

Works for me

(Email reply to dustymc's comment of Jul 1, 2019, 3:37 PM.)

I can't break it so I cleaned up the testing mess - I think this is now 'in production,' and I will treat all data as such going forward.

@campmlc I'm happy to help get those data in, but I have no idea what most of that means, what the units might be, what belongs here vs. specimen attributes, etc. I think Step One is to set up the code tables, which will probably require creating more unit tables, etc. Lemme know how I can help....
