🚀 Feature

This COCO finetuning evolution will attempt to evolve hyps that are better tuned for finetuning on COCO, starting from the official (COCO-pretrained) YOLOv5 weights in https://github.com/ultralytics/yolov5/releases/tag/v3.0

A Docker image has been created at ultralytics/yolov5:evolve with a new hyp.coco.finetune.yaml file that is identical to hyp.scratch.yaml but adds hyps for warmup evolution:

```yaml
# Hyperparameters for COCO finetuning
# python train.py --batch 32 --weights yolov5m.pt --data coco.yaml --img 640 --epochs 30
# See tutorials for hyperparameter evolution https://github.com/ultralytics/yolov5#tutorials

lr0: 0.01 # initial learning rate (SGD=1E-2, Adam=1E-3)
lrf: 0.2 # final OneCycleLR learning rate (lr0 * lrf)
momentum: 0.937 # SGD momentum/Adam beta1
weight_decay: 0.0005 # optimizer weight decay 5e-4
warmup_epochs: 1.5 # warmup epochs (fractions ok)
warmup_momentum: 0.5 # warmup initial momentum
warmup_bias_lr: 0.05 # warmup initial bias lr
giou: 0.05 # box loss gain
cls: 0.5 # cls loss gain
cls_pw: 1.0 # cls BCELoss positive_weight
obj: 1.0 # obj loss gain (scale with pixels)
obj_pw: 1.0 # obj BCELoss positive_weight
iou_t: 0.20 # IoU training threshold
anchor_t: 4.0 # anchor-multiple threshold
anchors: 3.0 # anchors per output grid (0 to ignore)
fl_gamma: 0.0 # focal loss gamma (efficientDet default gamma=1.5)
hsv_h: 0.015 # image HSV-Hue augmentation (fraction)
hsv_s: 0.7 # image HSV-Saturation augmentation (fraction)
hsv_v: 0.4 # image HSV-Value augmentation (fraction)
degrees: 0.0 # image rotation (+/- deg)
translate: 0.1 # image translation (+/- fraction)
scale: 0.5 # image scale (+/- gain)
shear: 0.0 # image shear (+/- deg)
perspective: 0.0 # image perspective (+/- fraction), range 0-0.001
flipud: 0.0 # image flip up-down (probability)
fliplr: 0.5 # image flip left-right (probability)
mixup: 0.0 # image mixup (probability)
```
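To make the warmup hyps concrete, here is a minimal Python sketch of how lr0, lrf, momentum, warmup_epochs, warmup_momentum and warmup_bias_lr could combine into a per-iteration schedule. It assumes a cosine "OneCycle" decay of the LR from lr0 down to lr0 * lrf, plus a linear warmup in which the bias LR falls from warmup_bias_lr, the other LRs rise from 0, and momentum rises from warmup_momentum. The function names are hypothetical, and the real train.py may differ in details (for example by enforcing a minimum number of warmup iterations).

```python
import math

# Values copied from hyp.coco.finetune.yaml above (illustrative subset)
HYP = {
    "lr0": 0.01,
    "lrf": 0.2,
    "momentum": 0.937,
    "warmup_epochs": 1.5,
    "warmup_momentum": 0.5,
    "warmup_bias_lr": 0.05,
}


def one_cycle_factor(epoch: int, epochs: int, lrf: float) -> float:
    """Cosine decay of the LR multiplier: 1.0 at epoch 0 down to lrf at the final epoch."""
    return ((1 + math.cos(epoch * math.pi / epochs)) / 2) * (1 - lrf) + lrf


def lerp(x: float, x0: float, x1: float, y0: float, y1: float) -> float:
    """Linear interpolation of y at x between (x0, y0) and (x1, y1), clamped to [x0, x1]."""
    t = min(max((x - x0) / (x1 - x0), 0.0), 1.0)
    return y0 + t * (y1 - y0)


def warmup_values(it: int, epoch: int, epochs: int, iters_per_epoch: int, hyp=HYP):
    """Return (bias_lr, weight_lr, momentum) at global iteration `it` (assumed scheme)."""
    target_lr = hyp["lr0"] * one_cycle_factor(epoch, epochs, hyp["lrf"])
    n_warmup = max(round(hyp["warmup_epochs"] * iters_per_epoch), 1)  # fractional epochs ok
    if it >= n_warmup:  # warmup finished: plain schedule
        return target_lr, target_lr, hyp["momentum"]
    bias_lr = lerp(it, 0, n_warmup, hyp["warmup_bias_lr"], target_lr)  # bias LR falls
    weight_lr = lerp(it, 0, n_warmup, 0.0, target_lr)  # other LRs rise from 0
    momentum = lerp(it, 0, n_warmup, hyp["warmup_momentum"], hyp["momentum"])
    return bias_lr, weight_lr, momentum


if __name__ == "__main__":
    for it in (0, 75, 150):  # assuming 100 iterations per epoch, so warmup = 150 iterations
        print(it, warmup_values(it, epoch=it // 100, epochs=30, iters_per_epoch=100))
```

Starting the bias LR high and momentum low is a common way to keep the first few hundred updates from disturbing pretrained weights too much, which is exactly the kind of trade-off the evolution is meant to tune.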

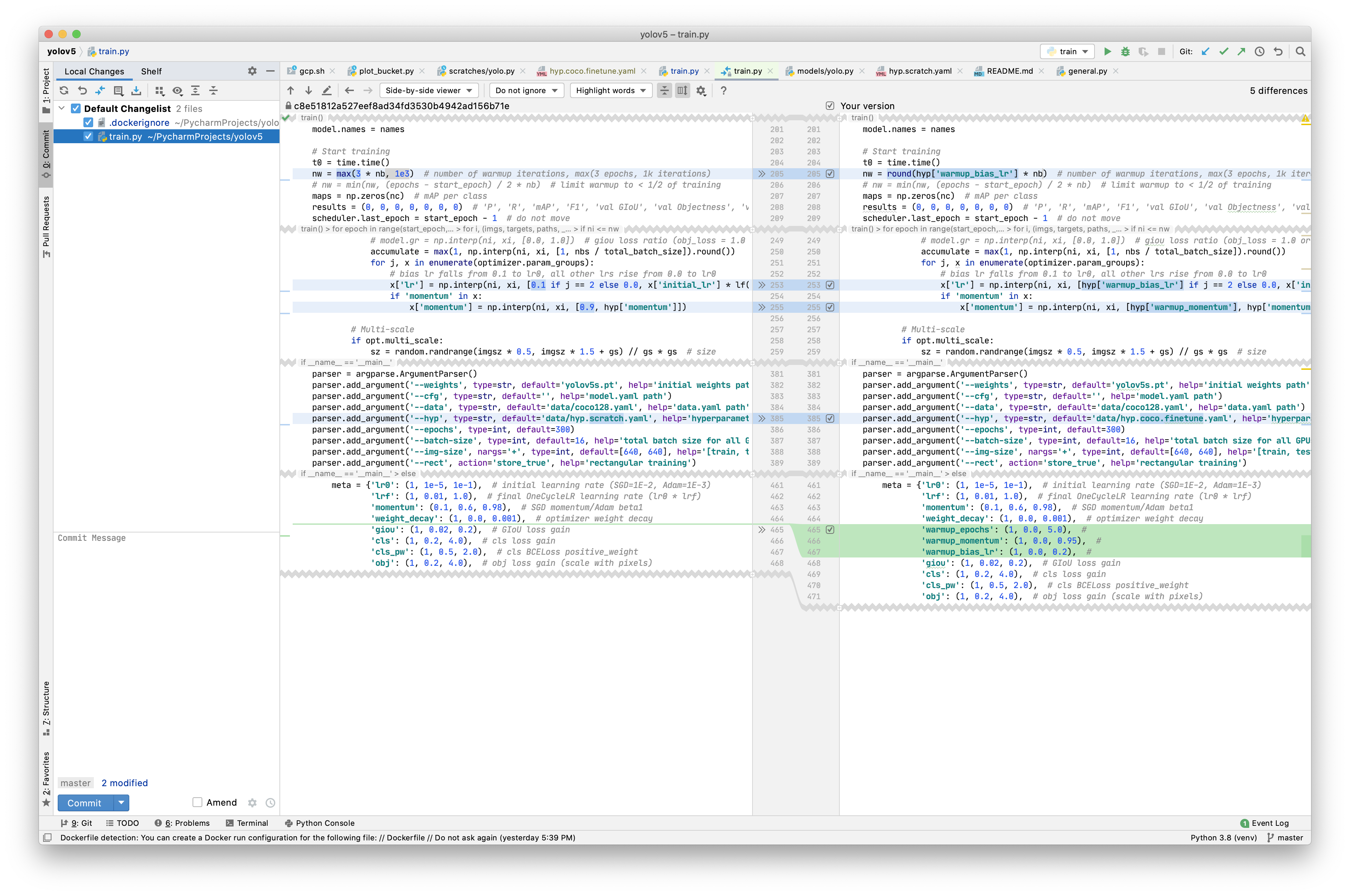

The train.py inside the image has been updated to accept these additional hyps.

Initial evolution will use a shorter 10-epoch training schedule to maximize the evolution rate, running for 300 generations:

```bash
python train.py --batch 32 --weights yolov5m.pt --data coco.yaml --epochs 10 --bucket ult/evolve/coco/fine10
```

Pending analysis of the above results, a longer 30-epoch schedule may be used to reduce overfitting to short trainings and improve results:

```bash
python train.py --batch 32 --weights yolov5m.pt --data coco.yaml --epochs 30 --bucket ult/evolve/coco/fine30
```

@NanoCode012 and anyone with available GPUs is invited to participate! We need all the help we can get to improve our hyps and help people better train on their own custom datasets. Our Docker image reads and writes each generation's evolution results to and from a centralized GCP bucket, so multiple distributed nodes can evolve simultaneously and seamlessly. The steps on a Unix machine are:

1. Navigate to (or download) COCO

Open a new terminal and navigate to the directory containing /coco, or download it using yolov5/data/scripts/get_coco.sh:

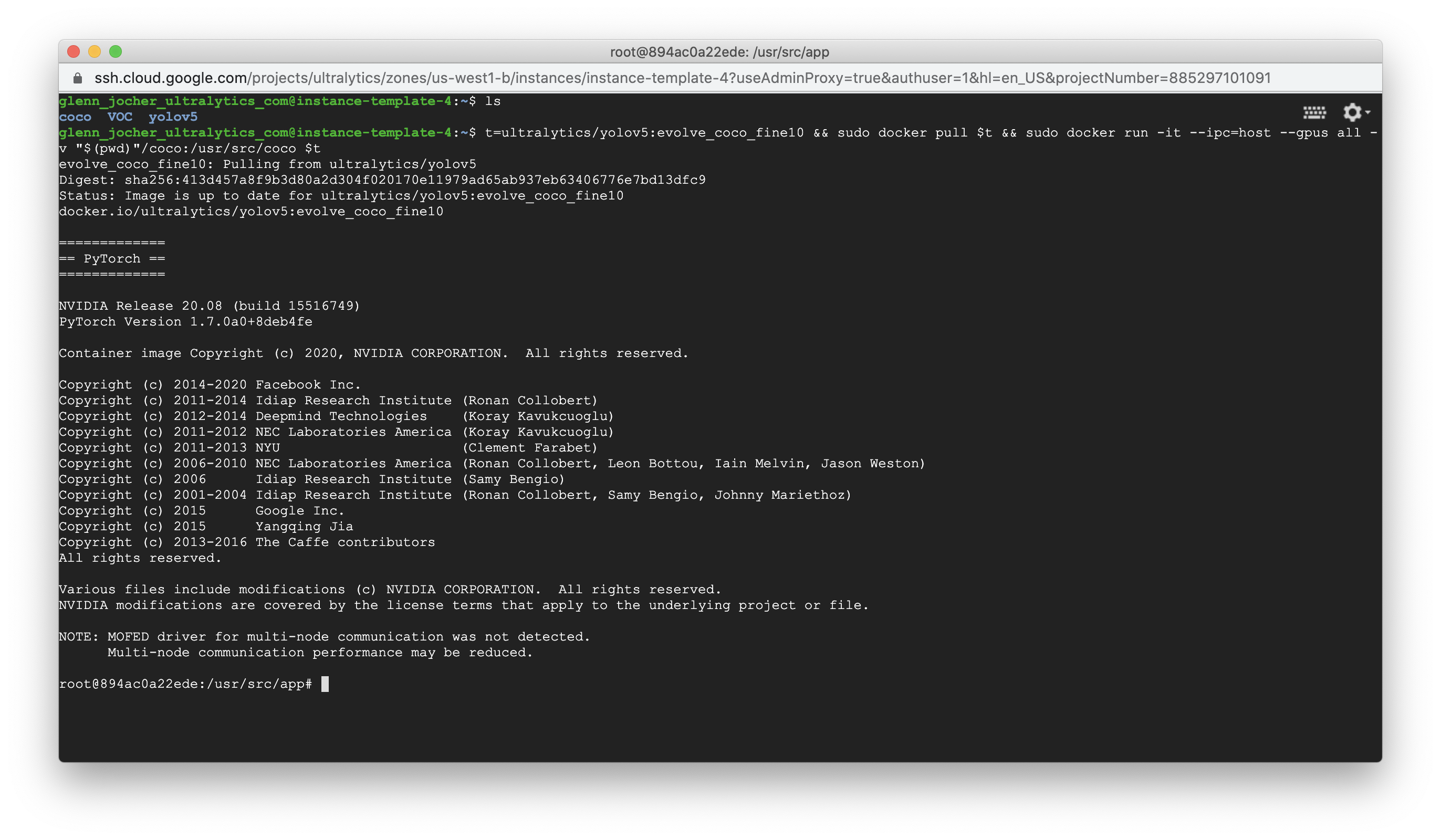

2. Pull Image

Pull and run the Docker image in interactive mode with access to all GPUs, mounting the coco volume:

```bash
t=ultralytics/yolov5:evolve_coco_fine10 && sudo docker pull $t && sudo docker run -it --ipc=host --gpus all -v "$(pwd)"/coco:/usr/src/coco $t
```

3. Evolve

From within the running container, select your --device and run this loop to start evolution. When you want to free up resources or stop contributing, simply terminate the Docker container: run sudo docker ps to display running containers, and then sudo docker kill CONTAINER_ID. You can restart anytime from step 2.

```bash
while true; do
  python train.py --batch 32 --weights yolov5m.pt --data coco.yaml --img 640 --epochs 10 --evolve --bucket ult/evolve/coco/fine10 --device 0
done
```

Optionally, run parallel evolution on a multi-GPU machine with one container per GPU (devices 0, 1, 2, 3):

```bash
for i in 0 1 2 3; do
  t=ultralytics/yolov5:evolve_coco_fine10 && sudo docker pull $t && sudo docker run -d --ipc=host --gpus all -v "$(pwd)"/coco:/usr/src/coco $t bash utils/evolve.sh $i
  sleep 60  # avoid simultaneous evolve.txt read/write
done
```
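For those who prefer Python over a shell loop, here is a hedged sketch of the same per-GPU launch: one detached container per device, started 60 s apart. It only wraps the docker commands shown above; launch and docker_cmd are hypothetical helper names, and dry_run=True just prints the commands without running anything.

```python
import os
import shlex
import subprocess
import time

IMAGE = "ultralytics/yolov5:evolve_coco_fine10"


def docker_cmd(device):
    """docker run command for one detached evolution container on one GPU."""
    return [
        "sudo", "docker", "run", "-d", "--ipc=host", "--gpus", "all",
        "-v", f"{os.getcwd()}/coco:/usr/src/coco",  # same as "$(pwd)"/coco in bash
        IMAGE, "bash", "utils/evolve.sh", str(device),
    ]


def launch(devices=(0, 1, 2, 3), stagger_s=60, dry_run=True):
    """Start one container per device, staggered to avoid simultaneous evolve.txt read/write."""
    cmds = [docker_cmd(d) for d in devices]
    if not dry_run:
        subprocess.run(["sudo", "docker", "pull", IMAGE], check=True)  # pull once
    for i, cmd in enumerate(cmds):
        if dry_run:
            print(shlex.join(cmd))
            continue
        subprocess.run(cmd, check=True)
        if i < len(cmds) - 1:  # no need to wait after the last container
            time.sleep(stagger_s)
    return cmds


if __name__ == "__main__":
    launch(dry_run=True)
```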

4. Batch size

--batch 32 uses about 11.9 GB of CUDA memory. If you are using a 2080 Ti with 11 GB, you can use the same commands above with --batch 28 or --batch 24 with minimal impact on results.
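A rough back-of-the-envelope check of those batch sizes, assuming memory scales proportionally with batch size from the 11.9 GB measured at --batch 32. Fixed costs such as model weights and optimizer state do not shrink with the batch, so real usage will be somewhat higher than these estimates; estimate_mem_gb is a hypothetical helper.

```python
def estimate_mem_gb(batch: int, ref_batch: int = 32, ref_mem_gb: float = 11.9) -> float:
    """Proportionally scale the reported 11.9 GB at --batch 32 to another batch size."""
    return ref_mem_gb * batch / ref_batch


for bs in (32, 28, 24):
    print(f"--batch {bs}: ~{estimate_mem_gb(bs):.1f} GB (2080 Ti has 11 GB)")
```

Under this assumption, both --batch 28 (about 10.4 GB) and --batch 24 (about 8.9 GB) leave some headroom on an 11 GB card, with 24 being the safer choice once fixed overhead is accounted for.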


Thanks @glenn-jocher. I've created a JupyterLab Docker container for evolution and set 4 containers running.

Would there be a bottleneck if those 4 containers read from the same coco directory?

@NanoCode012 Are the results of the above process model weights or hyperparameter files?

The evolve task is very time-consuming!

Thank you

Hi @buimanhlinh96, it evolves hyperparameters. It does indeed take a lot of time.

Since I'm not using my GPUs atm, I'm letting them all evolve. It's quite fast since one generation is only 3.3 hours on each of my GPUs.

BTW, @glenn-jocher, it may be a good idea to make the batch size an argument to the bash script too since, as you've said, a 2080 Ti needs a smaller batch size. On the other hand, I need a larger batch size.

Since you haven't uploaded the code here, I can't make a PR, but it should be something like this:

```bash
# Hyperparameter evolution commands
# $1: batch size, $2: CUDA device
while true; do
  python train.py --batch $1 --weights yolov5m.pt --data coco.yaml --img 640 --epochs 10 --evolve --bucket ult/evolve/coco/fine10 --device $2
done
```

Then:

```bash
# Start containers
bs=32  # batch size, passed to evolve.sh as $1 (bash assignment: no leading $, no spaces)
for i in 0 1 2 3; do
  t=ultralytics/yolov5:evolve_coco_fine10 && sudo docker pull $t && sudo docker run -d --ipc=host --gpus all -v "$(pwd)"/coco:/usr/src/coco $t bash utils/evolve.sh $bs $i
  sleep 60  # avoid simultaneous evolve.txt read/write
done
```

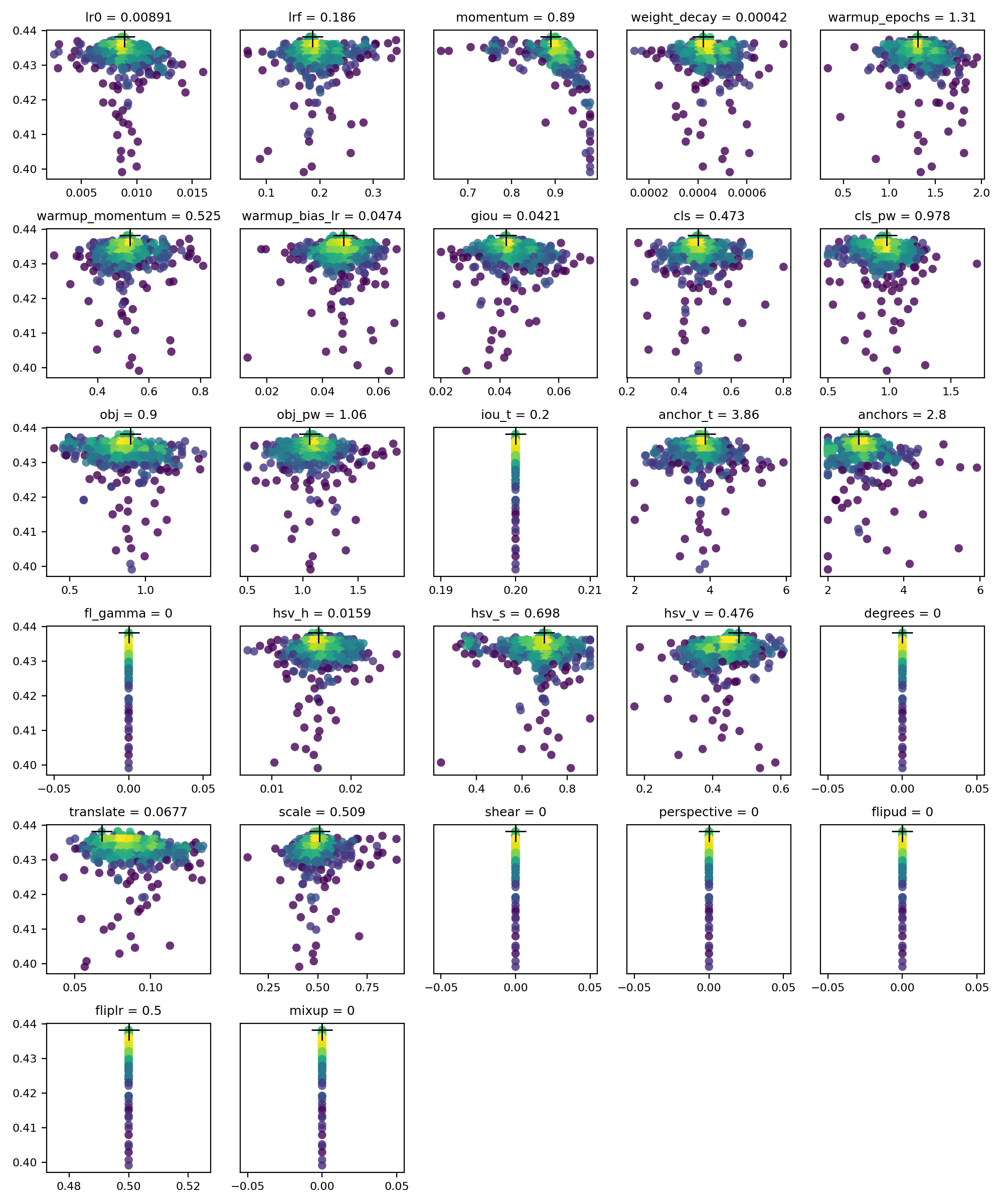

@NanoCode012 awesome, great! We can see the real-time results here; we already have a bunch of generations, though not much change yet:

https://storage.googleapis.com/ult/evolve/coco/fine10/evolve.txt

https://storage.googleapis.com/ult/evolve/coco/fine10/hyp_evolved.yaml

Ah yes, you are right. I'm not great at bash, but maybe there is a way to make the docker command execute an arbitrary bash script that would include the while loop with train.py and skip evolve.py?

EDIT: for reference, initial mAPs (gen 0) were 0.613 and 0.412.

@buimanhlinh96 the result of hyp evolution is a hyperparameter file. You then train with this hyperparameter file, e.g.:

```bash
python train.py --hyp hyp_evolved.yaml
```

> for reference, initial mAPs (gen 0) were 0.613 and 0.413.

Oh, I see. I thought the initial results were the lowest ones. Then I guess there isn't much change, as you've said.

> Ah yes, you are right. I'm not great at bash, but maybe there is a way to make the docker command execute an arbitrary bash script that would include the while loop with train.py and skip evolve.py?

I think the current method works well. All a user has to do is copy the "start container" code and run it.

I'm not sure there's a way to execute an arbitrary bash script, only static/hardcoded ones such as:

```dockerfile
# end dockerfile
CMD ["python", "train.py", "--evolve"]
```

But you could pass environment variables to the docker call, e.g. -e BATCHSIZE=64, and then let a bash script or the code above read that value.
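As a minimal sketch of the environment-variable approach, assuming a hypothetical Python entrypoint inside the image: the container would be started with docker run -e BATCHSIZE=64 ..., and the entrypoint reads BATCHSIZE (falling back to 32) when building the evolve command. build_train_cmd is an illustrative name, not part of the repo.

```python
import os
import shlex


def build_train_cmd(device: str, env=None) -> list:
    """Build the evolve command, taking the batch size from BATCHSIZE (default 32)."""
    env = os.environ if env is None else env
    batch = int(env.get("BATCHSIZE", "32"))
    return [
        "python", "train.py",
        "--batch", str(batch),
        "--weights", "yolov5m.pt",
        "--data", "coco.yaml",
        "--img", "640",
        "--epochs", "10",
        "--evolve",
        "--bucket", "ult/evolve/coco/fine10",
        "--device", device,
    ]


if __name__ == "__main__":
    print(shlex.join(build_train_cmd("0")))
```

Passing env explicitly (rather than always reading os.environ) keeps the helper easy to test without touching the real environment.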

@NanoCode012 no, evolve.txt is read, updated, sorted, saved, and uploaded (if a bucket is given) after each generation, so the top row is the best result. Unfortunately, chronological results are not currently available. I just checked: we have 60 generations, but the top result is not moving much, currently at 0.617 and 0.415, vs 0.613 and 0.412 originally (not 0.413). Let's wait a bit more.
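A minimal sketch of that read/append/sort cycle on evolve.txt, for illustration only. It assumes each row stores the result metrics first (P, R, mAP@0.5, mAP@0.5:0.95, ...) followed by the hyp values, and that fitness is the weighted sum 0.1 * mAP@0.5 + 0.9 * mAP@0.5:0.95; both are assumptions about the file layout, and the bucket download/upload step is left out. Because every node does a read-modify-write on the same file, this is presumably why the multi-GPU launch loop staggers containers by 60 s.

```python
FITNESS_W = (0.0, 0.0, 0.1, 0.9)  # assumed weights for P, R, mAP@0.5, mAP@0.5:0.95


def fitness(row):
    """Weighted sum of the first four result columns of one evolve.txt row."""
    return sum(w * v for w, v in zip(FITNESS_W, row[:4]))


def parse_rows(text):
    """Parse whitespace-separated float rows, skipping blank lines."""
    return [[float(v) for v in line.split()] for line in text.splitlines() if line.strip()]


def format_rows(rows):
    """Serialize rows back to fixed-width text, one generation per line."""
    return "".join(" ".join(f"{v:10.4g}" for v in row) + "\n" for row in rows)


def update_evolve_text(text, new_row):
    """Read, append one generation, sort best-first (top row = best), return text to save."""
    rows = parse_rows(text)
    rows.append(list(new_row))
    rows.sort(key=fitness, reverse=True)
    return format_rows(rows)


if __name__ == "__main__":
    start = format_rows([[0.5, 0.6, 0.613, 0.412, 0.01]])  # made-up P/R, one hyp column
    print(update_evolve_text(start, [0.5, 0.6, 0.617, 0.415, 0.012]))
```

Note that sorting by fitness is what discards the chronological order: after the sort, nothing in a row says which generation produced it.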

@glenn-jocher is there a way how to use weighted fine-tuning? So far I found in code only this hard-coded

https://github.com/ultralytics/yolov5/blob/c8e51812a527eef8ad34fd3530b4942ad156b71e/train.py#L496

which means that alternative elif parent == 'weighted' never runs... correct?

@Borda ah, this line is part of the genetic algorithm parent selection method for the next generation. If parent == 'single', then a single parent from the top n population is randomly selected for mutation based on their weights. The highest fitness parents carry higher weights, and are more likely to be selected. This is the default method.

If parent == 'weighted', then the top n population is instead merged together using a weighted mean, where the weights again are the individual fitnesses.

The selected parent is then mutated based on the mutation probability and sigma, and this child's fitness is subsequently evaluated, and appended to the population list in evolve.txt when complete, whereafter the process repeats.

```python
# Select parent(s)
parent = 'single'  # parent selection method: 'single' or 'weighted'
if parent == 'single' or len(x) == 1:
    # x = x[random.randint(0, n - 1)]  # random selection
    x = x[random.choices(range(n), weights=w)[0]]  # weighted selection
elif parent == 'weighted':
    x = (x * w.reshape(n, 1)).sum(0) / w.sum()  # weighted combination
```

EDIT: yes, since parent is hardcoded as 'single', the weighted combination method is never used.
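A self-contained sketch of both parent-selection modes plus a mutation step, written in plain Python rather than copied from train.py. The top-n cutoff, the mutation probability mp=0.8, sigma=0.2 and the [0.3, 3.0] gain clip are assumptions chosen for illustration, and select_parent/mutate are hypothetical names. The small 1e-6 offset keeps random.choices from receiving all-zero weights when every parent has the same fitness.

```python
import random


def select_parent(pop, fits, n=5, method="single"):
    """Pick a parent hyp vector from the top-n population by fitness."""
    ranked = sorted(zip(fits, pop), key=lambda t: t[0], reverse=True)[:n]
    n = len(ranked)
    lo = min(f for f, _ in ranked)
    w = [f - lo + 1e-6 for f, _ in ranked]  # fitter parents get larger weights
    if method == "single" or n == 1:
        return list(random.choices([x for _, x in ranked], weights=w)[0])
    if method == "weighted":  # fitness-weighted mean of the top-n hyp vectors
        total = sum(w)
        dims = len(ranked[0][1])
        return [sum(wi * x[j] for wi, (_, x) in zip(w, ranked)) / total for j in range(dims)]
    raise ValueError(f"unknown method: {method}")


def mutate(parent, mp=0.8, sigma=0.2, lo=0.3, hi=3.0, rng=random):
    """Multiply each hyp by a random gain; retry until at least one gain differs from 1."""
    if not parent:
        return []
    gains = [1.0] * len(parent)
    while all(g == 1.0 for g in gains):
        scale = rng.random() * sigma  # per-generation mutation strength
        gains = [
            min(max(1.0 + rng.gauss(0, 1) * scale, lo), hi) if rng.random() < mp else 1.0
            for _ in parent
        ]
    return [p * g for p, g in zip(parent, gains)]


if __name__ == "__main__":
    random.seed(0)
    pop = [[0.01, 0.9], [0.02, 0.8], [0.03, 0.7]]  # made-up (lr0, momentum) pairs
    fits = [0.1, 0.3, 0.2]
    print(mutate(select_parent(pop, fits, method="weighted")))
```

The 'single' mode keeps exploring around one good individual, while 'weighted' averages several, which tends to smooth the population toward its center.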

Hi @glenn-jocher, I think the evolution has plateaued. I recall that the top hyp has been constant for more than 100 generations (3 days).

What should be the next step? Should we try a full 300-epoch COCO scratch run on the 5m with the new hyps? Or evolve with 20 epochs?

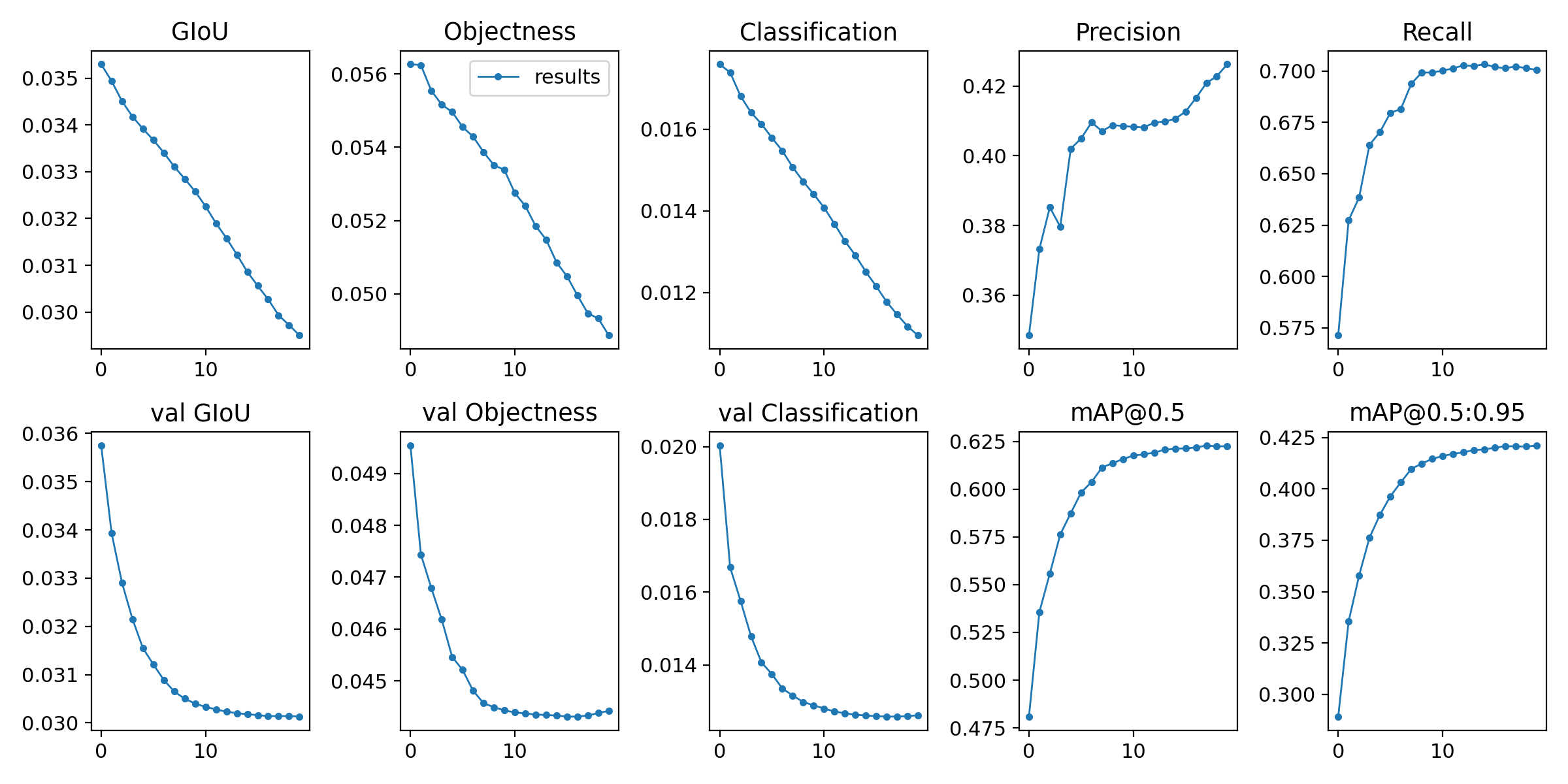

I used one of the top hyps to finetune for 20 epochs. I also notice that the warmup strategy doesn't cause an mAP drop at the start, which is good.

| Hyp | Highest mAP@0.5 | Highest mAP@0.5:0.95 |
|-|-|-|
| OG Scratch | 62.07 | 41.99 |
| Evolved Scratch | 62.29 (+0.22) | 42.11 (+0.12) |

Below are the results of finetuning for 10 epochs, for comparison.

| Hyp | Highest mAP@0.5 | Highest mAP@0.5:0.95 |
|-|-|-|
| OG Scratch | 61.3 | 41.2 |
| Evolved Scratch | 62 (+0.7) | 41.8 (+0.6) |

This suggests the hyps may be overfitting to the 10-epoch schedule.

Edit: I stand corrected. It seems mAP@0.5:0.95 just hit 41.9.

@NanoCode012 hey, good analysis! Yes, you are right: we have been settled into a local minimum for a while now, and, as you said, perhaps we are overfitting to 10 epochs. I've seen this pattern before, whereby most of the improvement occurs in the first 100 generations, i.e. the 80/20 rule is in effect here, and after sufficient generations (maybe 200-400) there is no more improvement, indicating that the parameters have settled into a minimum.

Unfortunately our results here are disappointing. For custom datasets it's not uncommon to see 5-10% improvement in both mAP@0.5 and mAP@0.5:0.95 when starting from the COCO hyps. I suppose this may be indicating that the optimal scratch hyps for a given dataset are not dissimilar to the optimal finetuning hyps.

Before we do anything else, I am going to add a timestamp to the evolution output. This should allow us to 1) automatically track the number of plateaued generations, and 2) plot the improvement vs. generation to see what the curve actually looks like (I've never seen this), which should be interesting.
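Once each row carries a timestamp, counting plateaued generations becomes a small post-processing step. A minimal sketch, assuming rows can be reduced to (timestamp, fitness) pairs in any order (e.g. the fitness-sorted evolve.txt); plateau_stats is a hypothetical helper, not part of the repo. The returned best-so-far curve is what you would plot against generation.

```python
def plateau_stats(rows):
    """rows: iterable of (timestamp, fitness). Returns (best-so-far curve, plateau length)."""
    history = [f for _, f in sorted(rows)]  # restore chronological order
    best_curve = []
    best = float("-inf")
    last_improved = -1
    for gen, f in enumerate(history):
        if f > best:  # strict improvement resets the plateau counter
            best, last_improved = f, gen
        best_curve.append(best)
    plateau = len(history) - 1 - last_improved if history else 0
    return best_curve, plateau


if __name__ == "__main__":
    rows = [(3, 0.43), (0, 0.40), (5, 0.42), (1, 0.42), (2, 0.41), (4, 0.43)]  # made-up data
    print(plateau_stats(rows))
```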

I've also integrated most of the Docker image changes into master now, and actually found out that the Docker image may add some overhead in certain cases, so I've come up with a better evolution setup that simply relies on cloning a branch and running each GPU in its own screen session (no Docker required).

I'll try to get these changes done today and then I'll update here.

Hi @glenn-jocher, could you provide the results_5m.txt file that produced the current results in the README? The files you provide in the Google Drive are old results. I would like to use it for comparison against the current finetuning results.

@NanoCode012 I've uploaded yolov5m results here:

https://github.com/ultralytics/yolov5/releases/download/v3.0/results_yolov5m.txt

The very last epoch shows a bump because we used to have a line in train.py that would pass pad=0.5 on the final epoch, to match the padding used when testing directly from test.py. This caused best.pt to end up identical to last.pt most of the time, so I eliminated the line. Epochs 0-298 should be the same as what we see now when training.

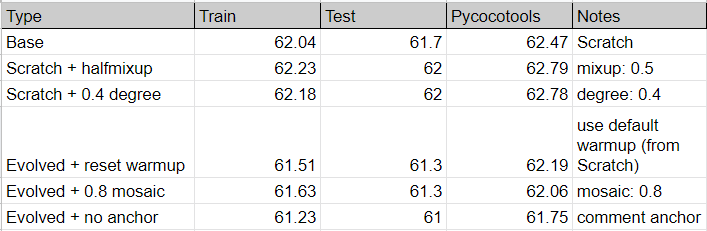

Hi @glenn-jocher, here is my brief analysis of the hyps so far.

| Method | Total epochs | Highest train mAP@0.5 (epoch) | test mAP@0.5 | pycocotools mAP@0.5 |
|-|-|-|-|-|
| scratch hyp (default) | 300 | 62.69 (269) | 62.4 | 63.167 |
| evolved hyp | 300 | 61.48 (211) | 61.5 | 62.26 |
| fine scratch hyp | 40 | 62.47 (40) | 62.3 | 63.14 |
| fine evolved hyp | 40 | 62.87 (25) | 62.4 | 63.188 |

Note:

- This uses the 5m model, batch size 64, single device.

- "Fine" is short for finetuning from the yolov5m weights.

- The evolved hyps come from different generations (838 vs. 939) but use the same values.

- Default scratch hyp results are from the results file linked above.

This shows that the evolved hyp does not help with a full COCO training from scratch.

Also, evolving on 10 epochs did not significantly improve finetuning over longer schedules, which was expected.

An interesting point is that the evolved hyp peaked quite fast (around epoch 211) and then dropped to 60.33 by the end (a loss of about 1 percentage point), compared to a drop of only about 0.1 for the scratch hyp. The same applies to finetuning.

I also noticed an mAP drop at epoch 2 (with warmup) for the evolved finetune. I suppose 10 epochs was too short to have a big impact on a 40-epoch run.

I have one curiosity: how would the new evolved hyp affect custom datasets? Since this hyp comes from finetuning the COCO-pretrained weights, could it be better suited to them, given that finetuning is how the repo is mainly used?

@NanoCode012 hey there, thanks for the analysis. I'm sorry, I've been busy recently with export and updating the iOS app. The app now has two new supercool features, realtime threshold settings (confidence and NMS) via slider and rotation to landscape/upside down, and uses all the very latest models with hardswish activations, including YOLOv5x, which incredibly runs at about 20-25 FPS (!) on an iPhone 11.

I've also drafted a plan for easier export with PyTorch Hub, so users can load a pretrained model and simply pass it cv2/PIL/numpy images for inference, with automatic letterboxing and NMS, without any complicated pre/post-processing steps. This should help usability, works without cloning the repo, and the same work can be reused for our pip package soon.

Regarding the evolution, it's very interesting. I've evolved on a few custom datasets, and that always produces a very good jump in mAP (e.g. +10%), but for COCO I suspect the hyps may already be sitting in a decent local minimum (from earlier evolutions on yolov3-spp), so further improvements are harder to come by.

My most recent experience is this. I had 3 datasets I was working with: COCO, VOC, supermarket (custom). I finetuned VOC using hyps_coco_scratch.yaml. I then evolved on VOC for 300 generations, which improved VOC mAP almost +8%, to SOTA basically, around 0.92. I saved these hyps as hyps_voc_finetune.yaml

I finetuned supermarket dataset using both hyps_voc_finetune.yaml and hyps_coco_scratch.yaml, and surprisingly found +10% better performance using hyps_coco_scratch.yaml (!?). I evolved 300 generations starting from hyps_coco_scratch.yaml, and ended up with 10% additional performance, so again custom dataset evolution produced very good results.

But our COCO finetuning evolution did not produce good results. The conclusion is that coco hyps may already be decently evolved. Second conclusion is that it seems very hard to generalize hyps across datasets, and indeed the VOC and supermarket hyps are nothing alike. Hyps do seem to generalize well across models though, as all 4 models improved similarly in my above experiments, even though I evolved only yolov5m.

One other item to note is that many hyps really require full training to evolve correctly. For example weight decay will always hurt short trainings (i.e. 10 epochs), but usually always helps out longer trainings (i.e. 300 epochs), so 10-epoch evolutions will almost surely push weight_decay and many augmentation settings down, which will hurt when those hyps are applied to 300-epoch trainings.

Hi @glenn-jocher

> I'm sorry, I've been busy recently with export and updating the iOS app.

No worries. I am amazed how you could manage this repo and your own work at the same time!

> I finetuned supermarket dataset using both hyps_voc_finetune.yaml and hyps_coco_scratch.yaml, and surprisingly found +10% better performance using hyps_coco_scratch.yaml (!?)

This is interesting. Do you mean that the COCO scratch hyps performed better in general in your experience? Could it be because COCO is a bigger dataset? What do you think of experimenting with mixing two or more of those datasets? Would hyps evolved on this bigger dataset generalize better?

> The conclusion is that coco hyps may already be decently evolved. Second conclusion is that it seems very hard to generalize hyps across datasets.

It could be a combination of both.

Maybe we have to create a more "general" hyp via a larger dataset, so that training/evolving from it on any custom dataset would be better/faster! I am not sure whether this approach is good or has been done before.

Since there are so many different kinds of datasets, I don't think a one-size-fits-all hyp is possible. It would be better to provide many hyps that people can choose from, although that would defeat this repo's goal of simplicity.

> For example weight decay will always hurt short trainings (i.e. 10 epochs), but usually always helps out longer trainings (i.e. 300 epochs)

I'm going to test with changing a few hyps (like warmup) from the coco evolved manually and see how the results turn out.

Edit: It could also be that a 10-epoch evolution is too short, and we should use more epochs.

Hi @glenn-jocher , glad to see you back. I've also got caught up with some stuff irl, but I got some results from some random trainings I proposed above.

Commit: 7220cee1d1dc1f14003dbf8d633bbb76c547000c

(Also, was there a change with Docker? The image now does not contain .git files, making it hard to track which commit it's on. I could not find any changes within the repo.)

Notes:

- This uses the 5m model, batch-size 64, single V100 for 300 epochs each.

- Evolved hyps are from gen 838 (best result).

- Mixup training takes around 30% longer!

Problems:

- The 5m base model is performing WORSE than your README results.

- Increasing batch size from 32 to 64 does not seem to speed up training.

I proposed 2 ideas above on what to do next for hyps.

- Try evolve finetuning with 30 epochs.

- Try evolving with a bigger dataset, maybe VOC+COCO?

Would it be logical to have hyps for each dataset and let users test them out?

@NanoCode012 hmm, ok lots of things to think about, I'll sleep on it. To quickly answer your questions though:

- Yes, .git was added to .dockerignore to lighten the images, but I see that you are right: this makes them hard to track, and older images also won't prompt users to git pull, because there is no .git to check. See https://github.com/ultralytics/yolov5/commit/cab36f72a852ef00e8b42d3283ba9b2fc757b17f#diff-f7c5b4068637e2def526f9bbc7200c4e

- Yes that's odd that you can't reproduce the table values. Table shows 63.2 and 44.3 for YOLOv5m. These are pycocotools results at 640.

- It's possible that beyond batch size 32 the dataloader is the constraint, and this is why you don't see faster training at 64.

- BTW, degrees hyperparameter is just rotation in degrees, so you can input values from 0-360 basically, but I've always tried smaller values around 5-10 degrees, which never helped much unfortunately. 0.4 degrees won't really produce a material change from 0 degrees, it's too small.
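A quick worked check of why 0.4 degrees is too small to matter: rotating about the image center moves the corners the most, by a chord of length 2 * r * sin(theta / 2), where r is the center-to-corner distance. This assumes a 640 x 640 training image and rotation about its center; if the angle is sampled within +/- degrees, most draws move pixels even less. max_corner_shift_px is a hypothetical helper.

```python
import math


def max_corner_shift_px(width, height, degrees):
    """Largest pixel displacement of an image corner when rotating about the image center."""
    r = math.hypot(width / 2, height / 2)  # center-to-corner distance
    return 2 * r * math.sin(math.radians(abs(degrees)) / 2)  # chord swept by the corner


for deg in (0.4, 5, 10):
    print(f"{deg:>4} deg -> corners move up to {max_corner_shift_px(640, 640, deg):.1f} px")
```

At 0.4 degrees the corners move only about 3 px, versus roughly 79 px at 10 degrees, which matches the intuition that such a small value is effectively no rotation.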

Yes, I think it definitely makes sense to simply label the hyps for each dataset. For example, hyp.finetune.yaml actually contains VOC finetuning hyps, which oddly enough are quite different from the COCO hyps:

https://github.com/ultralytics/yolov5/blob/master/data/hyp.finetune.yaml

Hi @glenn-jocher , I would like to just give an update.

I ran finetuning evolution with 30 epochs for ~150 generations and got around 62.8 and 42.6 for mAP@0.5 and mAP@0.5:0.95, respectively.

I then used those hyps to finetune from the 5m weights and only got 62.4 / 42.6 (from test.py). I'm not sure why using the same hyps does not give the same results. Is there some random factor at play?

Anyway, the results are just below the current 5m model, so I think finetuning is not the way to go. I haven't tried using the hyps to train a full model, but I doubt it'll fare much better.

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions.