YOLOv5: Difference in behavior of --img-size in detect.py vs test.py

❔Question

Hi

I have fine-tuned yolov5s on my custom dataset. Training goes very smoothly.

There is a slight discrepancy in the image size used by detect.py and test.py.

Training is done with img-size=960.

Please find attached terminal output files for detect and test for same model, same val set.

For detect.py, each image inference is carried out at 640x960

For test.py results are measured at 960x960

Can you explain the difference?

--DETECT.py--

nohup python3 detect.py --source=../coco128/images/val --img-size=960 --device=0 --iou-thres=0.65 --conf-thres=0.1 --weights=runs/exp9/weights/best.pt

Using CUDA device0 _CudaDeviceProperties(name='Tesla T4', total_memory=15109MB)

Model Summary: 140 layers, 7.25731e+06 parameters, 6.61683e+06 gradients

Namespace(agnostic_nms=False, augment=False, classes=None, conf_thres=0.1, device='0', img_size=960, iou_thres=0.65, output='inference/output', save_txt=False, source='../coco128/images/val', update=False, view_img=False, weights=['runs/exp9/weights/best.pt'])

Fusing layers...

image 1/600 /home/ubuntu/coco128/images/val/image_1.png: 640x960 18 Xs, 1 As, 1 Bs, 2 Cs, 1 Ds, Done. (0.015s)

image 2/600 /home/ubuntu/coco128/images/val/image_1008.png: 640x960 12 Xs, 3 As, 1 Bs, 2 Cs, 1 Ds, Done. (0.013s)

Results saved to inference/output

Done. (0.028s)

--TEST.py--

nohup python3 test.py --data=data/coco128.yaml --batch-size=16 --img-size=960 --device=0 --verbose --task=val --weights=runs/exp9/weights/best.pt

Using CUDA device0 _CudaDeviceProperties(name='Tesla T4', total_memory=15109MB)

Model Summary: 140 layers, 7.25731e+06 parameters, 6.61683e+06 gradients

0it [00:00, ?it/s]

Scanning labels ../coco128/labels/val.cache (600 found, 0 missing, 0 empty, 0 duplicate, for 600 images): 600it [00:00, 12336.79it/s]

Class Images Targets P R mAP@.5 mAP@.5:.95: 0%| | 0/38 [00:00<?, ?it/s]

Namespace(augment=False, batch_size=16, conf_thres=0.001, data='data/coco128.yaml', device='0', img_size=960, iou_thres=0.65, merge=False, save_json=False, save_txt=False, single_cls=False, task='val', verbose=True, weights=['runs/exp9/weights/best.pt'])

Fusing layers...

all 600 1.14e+04 0.812 0.998 0.995

Speed: 5.1/1.6/6.7 ms inference/NMS/total per 960x960 image at batch-size 16

Hello @ml5ah, thank you for your interest in our work! Please visit our Custom Training Tutorial to get started, and see our Jupyter Notebook, Docker Image, and Google Cloud Quickstart Guide for example environments.

If this is a bug report, please provide screenshots and minimum viable code to reproduce your issue, otherwise we cannot help you.

If this is a custom model or data training question, please note Ultralytics does not provide free personal support. As a leader in vision ML and AI, we do offer professional consulting, from simple expert advice up to delivery of fully customized, end-to-end production solutions for our clients, such as:

- Cloud-based AI systems operating on hundreds of HD video streams in realtime.

- Edge AI integrated into custom iOS and Android apps for realtime 30 FPS video inference.

- Custom data training, hyperparameter evolution, and model exportation to any destination.

For more information please visit https://www.ultralytics.com.

Hi,

I also noticed this behavior. In particular, I am troubled that test.py reports a much faster inference speed than detect.py for the same input size.

I wonder what causes the difference, and whether detect.py can reach the same speed.

Here is an example of the inference speed measured for detect.py (~42ms, slow):

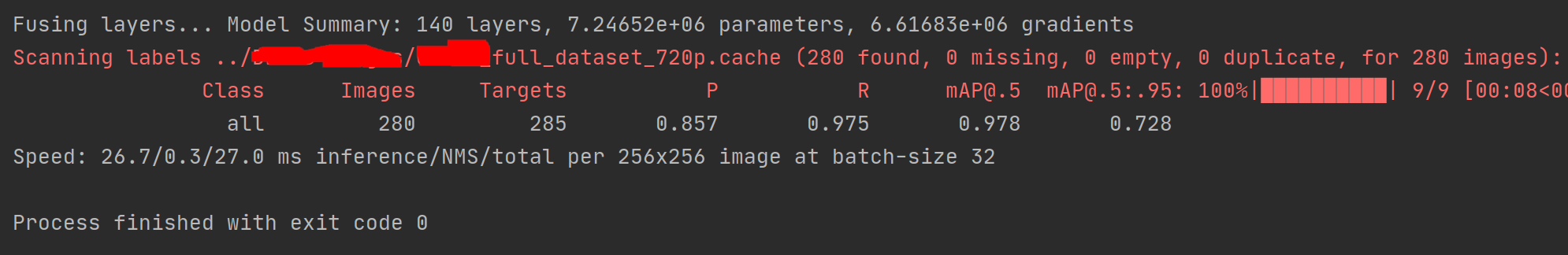

Here is an example of the inference speed measured for test.py (26.7ms, fast):

The gap is even bigger on my GPU (60 FPS for test.py vs. 30 FPS for detect.py).

This was observed on both machines:

- Intel core i7-6200U, Ubuntu 20.04, Python 3.8

- Nvidia Jetson Xavier AGX, Ubuntu 18.04 (JetPack), Python 3.6, MODE 30W 6 CORES

Thanks!

detect.py processes images one at a time, while test.py runs them in batches, which is why test.py reports a lower per-image time. You could modify detect.py to run in batches if your images are available as a batch (this applies to any source other than a single live camera feed).
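
A minimal sketch of the batching idea above, not code taken from detect.py: it only shows how a list of image paths could be split into fixed-size groups. The helper name `make_batches` and the file names are hypothetical. Each group would still need to be preprocessed to a common shape and stacked into one tensor before the forward pass, which is left out here.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def make_batches(items: Sequence[T], batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive chunks of at most `batch_size` items."""
    if batch_size < 1:
        raise ValueError("batch_size must be >= 1")
    for start in range(0, len(items), batch_size):
        # The last chunk may be shorter than batch_size.
        yield list(items[start:start + batch_size])


# Hypothetical file names, for illustration only.
paths = [f"image_{i}.png" for i in range(5)]
batches = list(make_batches(paths, batch_size=2))
```

With five paths and a batch size of 2 this gives three groups, the last holding a single image.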

@glenn-jocher any suggestions for img-size?
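
On the img-size question, one likely explanation is that detect.py letterboxes each image: it scales the image so the longer side equals --img-size, then pads only up to the next multiple of the model stride instead of padding to a full square. This is an assumption about the preprocessing, not something confirmed in this thread. The sketch below reproduces just that arithmetic. The function name `letterbox_shape`, the stride of 32, and the 1280x1920 source resolution are all assumptions chosen for illustration.

```python
from typing import Tuple


def letterbox_shape(h: int, w: int, img_size: int = 960,
                    stride: int = 32, minimal: bool = True) -> Tuple[int, int]:
    """Return the (height, width) of an image after scale-and-pad.

    minimal=True pads only to the next multiple of `stride` (rectangular);
    minimal=False pads all the way to img_size x img_size (square).
    """
    # Scale so the longer side fits img_size, keeping the aspect ratio.
    r = min(img_size / h, img_size / w)
    new_h, new_w = int(round(h * r)), int(round(w * r))
    # Padding needed to reach the full square.
    pad_h, pad_w = img_size - new_h, img_size - new_w
    if minimal:
        # Keep only the remainder needed to reach a stride multiple.
        pad_h, pad_w = pad_h % stride, pad_w % stride
    return new_h + pad_h, new_w + pad_w


# Assumed 3:2 source image of 1280x1920 (height x width).
rect = letterbox_shape(1280, 1920, img_size=960)
square = letterbox_shape(1280, 1920, img_size=960, minimal=False)
```

If that is what happens, 640x960 is simply the padded shape of a 3:2 image at --img-size=960. The 960x960 in test.py's Speed line may just echo the --img-size argument rather than the tensor shape actually fed to the model, but that would need checking in test.py.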

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions.