Yolov5: Refactoring the test.py and detect.py.

🚀 Feature

Refactor test.py and detect.py to make multi-GPU inference possible, improve readability, and reuse code between them.

Motivation

I found it hard to add multi-GPU inference (especially for validation inference during DDP training). Inference, post-processing, and evaluation (both the official COCO evaluation and the home-made one) should be split into separate functions. Otherwise it is difficult to apply the map-reduce pattern under DDP.
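The map-reduce split described above could look roughly like the sketch below. It is only an illustration under stated assumptions: `shard`, `infer_shard`, `gather`, and `evaluate` are hypothetical names, the "model" is a plain function, and the ranks are simulated in one process. Under real DDP the gather step would use a collective such as `torch.distributed.all_gather`.

```python
from typing import Callable, List, Sequence, Tuple


def shard(items: Sequence[int], rank: int, world_size: int) -> List[int]:
    """Map input: every world_size-th sample index, starting at `rank`."""
    return list(items[rank::world_size])


def infer_shard(indices: Sequence[int], model: Callable[[int], float]) -> List[Tuple[int, float]]:
    """Run the (hypothetical) model on one shard, keeping each sample index."""
    return [(i, model(i)) for i in indices]


def gather(per_rank: Sequence[List[Tuple[int, float]]]) -> List[float]:
    """Reduce: merge per-rank outputs and restore the original dataset order."""
    merged = sorted(pair for part in per_rank for pair in part)
    return [pred for _, pred in merged]


def evaluate(preds: Sequence[float], targets: Sequence[float]) -> float:
    """Stand-in metric (mean absolute error); an mAP computation would go here."""
    return sum(abs(p - t) for p, t in zip(preds, targets)) / len(targets)


# Simulate 4 ranks over a 10-sample dataset in a single process.
dataset = list(range(10))
world_size = 4
model = lambda i: i * 2.0  # hypothetical model: sample index -> prediction
per_rank = [infer_shard(shard(dataset, r, world_size), model) for r in range(world_size)]
preds = gather(per_rank)   # evaluation would then run on rank 0 only
```

Keeping inference, gathering, and evaluation as separate functions means only the gather step needs to know about the distributed backend.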

Scope

The affected code should mainly be test.py and detect.py, plus a very small part of train.py.

@glenn-jocher

I am currently working on it. I will open a PR later; please have a look at it. Hope it helps!

All 19 comments

Hi, I believe Glenn planned to combine them into one file called eval. You should check with him whether he has started on it, so you won't have a hard time merging later on.

Damn. I have begun doing it already!

@glenn-jocher May I ask what your plan is and how it is going?

After a day's hard work, I have finished the first key part of refactoring test.py lol.

Here it is.

If Glenn hasn't started on this, I think I can handle it now... Good night!

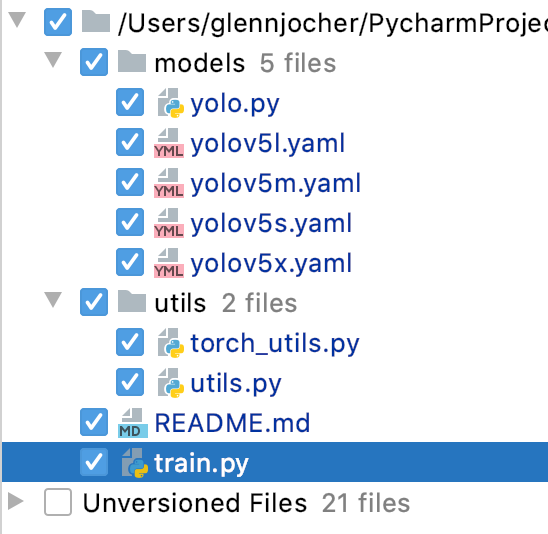

@MagicFrogSJTU @NanoCode012 this sounds like a good idea. Yes I want to eventually merge test.py with detect.py, but this is a long term goal, I have no immediate plans. My only immediate plans are a v2.0 release, I should have this done either today or tomorrow. This has no effect on test.py though. The affected files are here:

@MagicFrogSJTU your work looks really interesting. The final test.py solution needs to meet a few requirements:

- Ideally FP16 single/multi-GPU support (currently incompatible)

- FP32 CPU support (FP16 not supported on CPU)

- Speed >= current

- repo mAP >= current

- pycocotools mAP >= current

- same print screen functionality as current

- a pathway towards integrating detect.py into test.py (some of the commented text like save-txt relates to this).

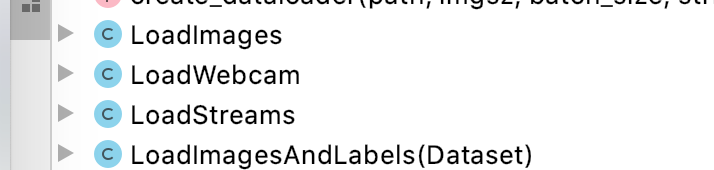

BTW, to explain datasets.py a bit more: there are currently 4 different dataloaders, one of which is a bit redundant:

- LoadImages(): Loads images, globs, files, folder, videos

- LoadWebcam(): Simple local webcam dataloader: python detect.py --source 0

- LoadStreams(): Loads single/multiple RTSP/HTTP streams as well as local webcam. Multithreaded solution. Can even run local webcam along with multiple streams simultaneously.

- LoadImagesAndLabels(): For training and testing, reads from data.yaml files to load *.txt file with list of images or a directory containing images. Performs augmentation optionally.

My initial goal is to split and wrap the code so that each part does its own job. I assumed performance would stay the same, but things are getting complex. Let's see how it goes.

@glenn-jocher

I have almost finished refactoring test.py. It has changed a lot, but luckily the mAP results remain exactly the same.

"I should have v2.0 released soon, probably later today"

It seems v2.0 has already been released? I will base my work on the v2.0 release, then.

I plan to finish everything before next Monday, so please hold off on any big changes to test.py and detect.py on the master branch.

```python
# test.py
inferencer = Inferencer(model, local_rank=local_rank)
inference_results = inferencer.infer(dataloader, augment, nms_kwargs, training, hooks=hooks)

# TODO: all reduce these.
t0 = inferencer.t0
t1 = inferencer.t1
loss = inferencer.loss

if local_rank in [0, -1]:
    ##############################
    # Calculate speeds
    t = tuple(x / len(dataloader.dataset) * 1E3 for x in (t0, t1, t0 + t1)) + (imgsz, imgsz, dataloader.batch_size)  # tuple
    # Speed is presently only valid in single-gpu mode.
    if not training and local_rank == -1:
        print('Speed: %.1f/%.1f/%.1f ms inference/NMS/total per %gx%g image at batch-size %g' % t)

    ##############################
    # Calculate home-made mAP
    iou_vec = torch.linspace(0.5, 0.95, 10).to(device)  # iou vector for mAP@0.5:0.95
    metric = MetricMAP(iou_vec, nc)
    eval_result = metric.eval(inference_results[0], inference_results[1])
    metric.print_result(eval_result, names, verbose)
    mAP = eval_result.mAP
    mAP50 = eval_result.mAP50

    ##############################
    # Save JSON, Calculate coco mAP
    if do_official_coco_evaluation:
        metric_coco = MetricCoco(dataloader.dataset.img_files)
        mAP_coco_official, mAP50_coco_official = metric_coco.eval(inference_results[0], inference_results[2], official_coco_evaluation_save_fname)
        if mAP_coco_official:
            mAP = mAP_coco_official
        if mAP50_coco_official:
            mAP50 = mAP50_coco_official
```

It looks compact and clean now!

As for detect.py, I don't have clear ideas now. I will dig deeper tomorrow.
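One way the `# TODO: all reduce these` note in the snippet above could be handled is to sum the per-rank timers before computing the per-image speed. The sketch below is an assumption, not code from the PR: `reduce_speed` is a hypothetical helper, and plain lists stand in for values that would be combined with a collective such as `torch.distributed.all_reduce` under DDP.

```python
from typing import Sequence, Tuple


def reduce_speed(t0_per_rank: Sequence[float],
                 t1_per_rank: Sequence[float],
                 num_images: int) -> Tuple[float, float, float]:
    """Return (inference, NMS, total) milliseconds per image.

    t0_per_rank / t1_per_rank hold the accumulated inference and NMS
    seconds measured on each rank; num_images is the full dataset size.
    """
    t0 = sum(t0_per_rank)  # stand-in for an all-reduce (sum) over ranks
    t1 = sum(t1_per_rank)
    return tuple(x / num_images * 1e3 for x in (t0, t1, t0 + t1))


# Two ranks, 4 images in total (made-up timings for illustration).
speeds = reduce_speed([1.0, 1.0], [0.5, 0.5], num_images=4)
```

The result is the average GPU time spent per image summed over ranks, not wall-clock throughput, so it stays comparable to the single-GPU figure.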

@MagicFrogSJTU great, I will suspend updates to test.py and detect.py. Don't worry too much about detect.py; merging it into test.py is more of a long-term goal, and there is no hurry. It might not even make sense, I'm not sure. The idea is to reduce the codebase and eliminate duplicated operations, but it may simply be easier to keep separate files as we do now.

- The test.py task is done.

- Due to limited time, I will leave detect.py unchanged. The datasets used by test.py and detect.py have different structures, so merging them is not an easy task. I may return to this issue in the future.

@MagicFrogSJTU ok sounds good! Yes, it is difficult for a variety of reasons to merge the two.

@MagicFrogSJTU are you still working on this? I had similar ideas and started a class to try to make things easier. Take a look at #668; maybe we could work something out to tie everything together!

@Ownmarc, if you're interested in implementing it on your branch, see #514.

Multi-GPU would be something I want to add, but I am currently working on Windows and PyTorch doesn't support it natively. I will see what I can do :)

Adding only multi-GPU inference should be easier than training, since we do not have to sync the GPUs, so there are many ways of doing this using multiprocessing/threading.
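A minimal sketch of the threading variant, assuming one worker per device and no communication between workers. `run_on_device` and `parallel_inference` are hypothetical names; a real worker would hold a model copy on its device and return detections rather than the placeholder strings used here.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def run_on_device(device_id: int, paths: List[str]) -> Dict[str, str]:
    """Hypothetical worker: a real one would run the model on `device_id`."""
    return {p: f"detections from device {device_id}" for p in paths}


def parallel_inference(paths: List[str], device_ids: List[int]) -> Dict[str, str]:
    """Split the inputs round-robin across devices and merge the results."""
    n = len(device_ids)
    chunks = [paths[i::n] for i in range(n)]
    results: Dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=n) as pool:
        # Each worker handles its own chunk independently; no syncing is needed.
        for part in pool.map(run_on_device, device_ids, chunks):
            results.update(part)
    return results


paths = [f"img{i}.jpg" for i in range(5)]
results = parallel_inference(paths, device_ids=[0, 1])
```

Because inference needs no gradient synchronization, the only shared step is merging the per-device outputs at the end.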

@Ownmarc, from what I've read, DDP does work with PyTorch on Windows, though I've never tested it myself. Are you saying the current code doesn't work on Windows?

It doesn't; it's a PyTorch limitation, see https://github.com/pytorch/pytorch/issues/42095

Thanks for clarifying it for me! I will update the guide to add that note. May I ask if multiprocessing.spawn works on Windows?

spawn() works, Windows has no true fork() tho

@Ownmarc

Per #514, Glenn tends to prefer keeping the current code layout. Do you want to make a PR, or maintain a fork for yourself? If the former, I suggest you talk with Glenn and settle things before you begin coding. If the latter, you may use my PR.
