Velero: failure of volumes --snapshot-volumes

What steps did you take and what happened:

(A clear and concise description of what the bug is, and what commands you ran.)

What did you expect to happen:

The output of the following commands will help us better understand what's going on:

(Pasting long output into a GitHub gist or other pastebin is fine.)

- kubectl logs deployment/velero -n velero
- velero backup describe <backupname> or kubectl get backup/<backupname> -n velero -o yaml
- velero backup logs <backupname>
- velero restore describe <restorename> or kubectl get restore/<restorename> -n velero -o yaml
- velero restore logs <restorename>

Anything else you would like to add:

[Miscellaneous information that will assist in solving the issue.]

Environment:

- Velero version (use velero version):
- Kubernetes version (use kubectl version):
- Kubernetes installer & version:
- Cloud provider or hardware configuration:
- OS (e.g. from /etc/os-release):

All 9 comments

We are trying to back up a namespace in our Kubernetes cluster using Velero (with restic), but the pod volumes are not being backed up.

Could anyone please look into this?

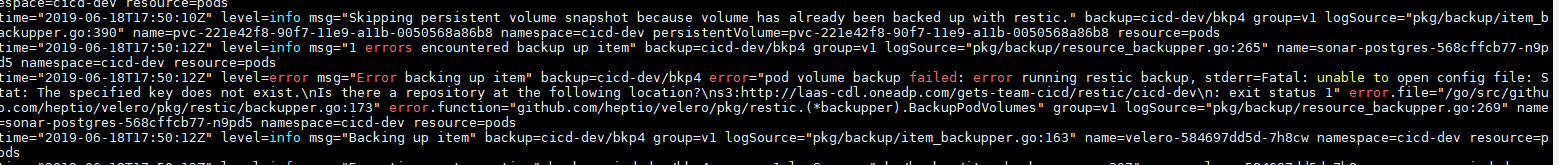

Command: velero backup create bkp4 --include-namespaces cicd-dev --snapshot-volumes

Output:

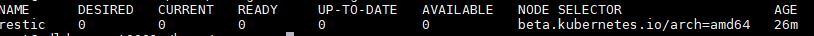

And the restic DaemonSet:

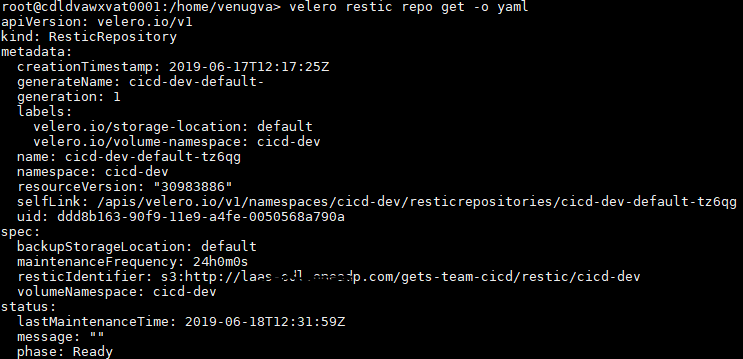

What object storage system are you using? Can you also provide the output of velero restic repo get -o yaml?

@skriss, I am using IBM S3 object storage.

Output of restic repo:

Oh, it looks like there are no pods for the restic daemonset. Do you have any node taints that are preventing pods from being scheduled on each node?

@skriss No, I didn't taint any nodes. My Kubernetes cluster has different node types, such as SUSE Linux nodes and IBM Z Linux nodes, so I used a node selector to schedule the pods on the SUSE Linux nodes.

OK - well the restic daemonset currently is not running any pods, so I'd look at why that's the case.
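For anyone hitting the same symptom, here is a minimal sketch of how one might inspect a daemonset that is running no pods. The helper name inspect_daemonset is hypothetical, and the defaults (a daemonset named restic in the velero namespace) are assumptions based on this thread; adjust them to match your install. It only wraps standard kubectl read commands and changes nothing in the cluster.

```shell
# Hypothetical helper: print the information usually needed to see why a
# DaemonSet has zero pods. Defaults ("restic" in namespace "velero") are
# assumptions taken from this thread, not verified against any cluster.
inspect_daemonset() {
  ds_name="${1:-restic}"
  ds_namespace="${2:-velero}"

  # Desired / current / ready pod counts and the node selector column.
  kubectl get daemonset "$ds_name" -n "$ds_namespace" -o wide

  # Events at the bottom of this output often explain scheduling failures.
  kubectl describe daemonset "$ds_name" -n "$ds_namespace"

  # The node selector in the pod template; every key/value listed here
  # must be present as a label on a node for a pod to be placed there.
  kubectl get daemonset "$ds_name" -n "$ds_namespace" \
    -o jsonpath='{.spec.template.spec.nodeSelector}'
  echo

  # Node labels, to compare against the selector above.
  kubectl get nodes --show-labels
}
```

Calling inspect_daemonset with no arguments uses the assumed defaults; inspect_daemonset NAME NAMESPACE targets a different daemonset. If the selector printed by the third command names a label that none of the nodes carry, the daemonset will report zero desired pods, which would match what is shown above.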

@gkdba87 did you need anything further here?

Closing as inactive, reopen as needed!