Vector: Trying to understand what vector is doing with memory (0.6.0)

Hello folks,

We're evaluating vector as an alternative to fluentd

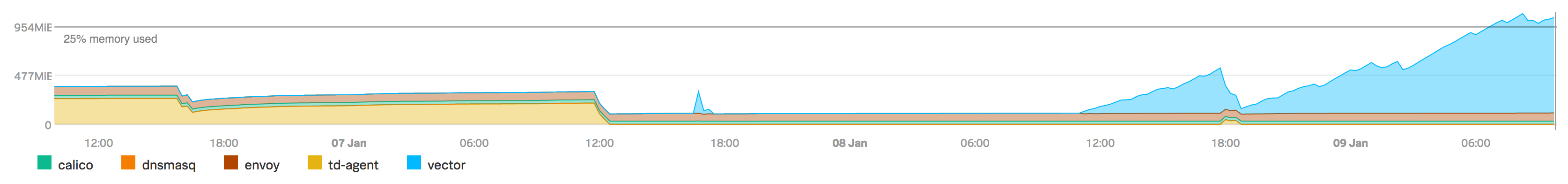

I like it so far, but I was checking out how it compares in terms of memory/cpu with our current logging agent. It seems favorable in terms of CPU usage but there seems to be a memory problem, possibly a leak, but I don't understand how this is possible with a rust application?

You can see the metrics, that I am basing this question on, here:

(td-agent is fluentd)

Our configuration consists of:

- 5 file sources, 2 of which are not written to past the initial startup of the server

- A few transforms (regex, lua)

- One sink, which sends logs to kinesis

I'm not sure what other information would be valuable here. The logs don't contain anything apart from TRACE-level messages about Kinesis being rate-limited.

All 10 comments

Thanks @cetanu, that definitely should not be the case. We'll have someone take a look and see what's going on.

@cetanu Thanks for this! Some more information that would be helpful as we try to track this down, if you're able to provide it:

- The config file you're running (the more detail you're able to provide, the better)

- Any other correlated spikes in your metrics (it doesn't look like a constant leak)

- Any info about relative data volume through each component, roughly speaking

My first instinct is to look into how you're using the Lua transform, since that involves FFI, a whole language runtime written in C, garbage collection, etc.

I'm also curious about your batching config for the kinesis sink and how often it's getting rate-limited (either internally or by AWS). If you've configured very large batches and requests are not succeeding very often, those batches could grow to take up quite a bit of memory.

Thanks again for opening the issue, and of course we totally understand if you're not able to share everything above!

I think I can share the config, nothing really private about it:

# -- Global Options --

# The directory used for persisting Vector state,

# such as on-disk buffers, file checkpoints, and more.

data_dir = "/var/lib/vector"

# -- Sources --

[sources.envoy_access]

type = "file"

include = ["/dev/shm/envoy_logs/access.log"]

[sources.envoy_application]

type = "file"

include = ["/var/log/envoy/application.log"]

[sources.cfn-init]

type = "file"

include = ["/var/log/cfn-init.log"]

[sources.salt]

type = "file"

include = ["/var/log/salt/masterless"]

[sources.syslog]

type = "file"

include = ["/var/log/syslog"]

# -- Transforms --

[transforms.parse_envoy_access]

type = "json_parser"

inputs = ["envoy_access"]

drop_invalid = false

[transforms.envoy_access_transforms]

type = "lua"

inputs = ["parse_envoy_access"]

source = """

tokens = {}

for token in string.gmatch(event["src_ip"], "[^:]+") do

table.insert(tokens, token)

end

for index, value in pairs(tokens) do

if index == 1 then

event["src_ip"] = value

elseif index == 2 then

event["src_port"] = value

end

end

"""

[transforms.parse_envoy_application]

type = "regex_parser"

inputs = ["envoy_application"]

regex = "^\\[(?P<timestamp>[^]]+)]\\[(?P<thread_id>[^]]+)]\\[(?P<level>[^]]+)]\\[(?P<logger>[^]]+)] (?P<message>.+)$"

[transforms.parse_envoy_application.types]

timestamp = "timestamp|%Y-%m-%d %H:%M:%S%.3f"

thread_id = "int"

[transforms.parse_salt]

type = "regex_parser"

inputs = ["salt"]

regex = "\\s*Name: (?P<name>.+) - Function: (?P<fn>[^-]+) - Result: (?P<result>\\S+) Started: - (?P<timestamp>\\S+) Duration: (?P<exec_ms>[\\d\\.]+) ms"

[transforms.parse_salt.types]

timestamp = "timestamp|%H:%M:%S%.6f"

exec_ms = "float"

[transforms.parse_cfn_init]

type = "regex_parser"

inputs = ["cfn-init"]

regex = "^(?P<timestamp>.*?) \\[(?P<level>[^ ]*)\\] (?P<message>.*)$"

[transforms.parse_cfn_init.types]

timestamp = "timestamp|%Y-%m-%d %H:%M:%S,%3f"

[transforms.parse_syslog]

type = "regex_parser"

inputs = ["syslog"]

regex = "^(?P<timestamp>[^ ]*) .+? (?P<proc>.*?)(\\[(?P<pid>[0-9]+)\\])?: (?P<message>.*)$"

[transforms.parse_syslog.types]

timestamp = "timestamp|%Y-%m-%dT%H:%M:%S%.6f%:z"

pid = "int"

[transforms.derive_envoy_type_from_filename]

type = "lua"

inputs = ["envoy_access_transforms", "parse_envoy_application"]

source = """

event["type"] = string.gsub(event["file"], "/(var/log/envoy|dev/shm/envoy_logs)/([^%.]+)%.log", "%1")

event["app"] = "envoy"

event["file"] = nil

"""

[transforms.derive_system_log_type_from_filename]

type = "lua"

inputs = ["parse_cfn_init", "parse_salt", "parse_syslog"]

source = """

event["type"] = string.gsub(event["file"], "/var/log/([a-z]+).*", "%1")

event["app"] = "system"

event["file"] = nil

"""

[transforms.add_metadata]

type = "add_fields"

inputs = ["derive_envoy_type_from_filename", "derive_system_log_type_from_filename"]

[transforms.add_metadata.fields]

instance_id = "i-aaaaaaaaaaaaa"

service_cluster = "D1"

service_cluster_type = "main"

service_id = "aaaaaaaa-cccc-bbbb-dddd-aaaaaaaaa"

region = "ap-southeast-2"

env = "dev"

[transforms.filter_logs]

type = "lua"

inputs = ["add_metadata"]

source = """

if event["source"] ~= nil and event["source"].match(str, "kernel") then

event = nil

end

"""

# -- Sinks --

[sinks.to_kinesis]

type = "aws_kinesis_streams"

inputs = ["filter_logs"]

region = "ap-southeast-2"

stream_name = "prod-logs"

encoding = "json"

healthcheck = false

A rough look at what was logged during testing shows that there were about 10 "rate limit exceeded, disabling service" messages per minute.

Reading your comment again, perhaps the Kinesis batches could be piling up in memory. I'll look at reducing the amount we try to send and test it.
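For anyone following along, here is a minimal sketch of what reducing the amount sent per request might look like, reusing the `to_kinesis` sink from the config above. The batching, request, and buffer option names are recalled from the 0.6-era documentation and are assumptions here, not verified against the release; the values are placeholders, not recommendations. Check the `aws_kinesis_streams` reference for your Vector version before using any of it.

```toml
# Illustrative only: option names below the required fields are assumptions
# based on the Vector 0.6-era docs; verify them for your version.
[sinks.to_kinesis]
  type = "aws_kinesis_streams"
  inputs = ["filter_logs"]
  region = "ap-southeast-2"
  stream_name = "prod-logs"
  encoding = "json"
  healthcheck = false

  # Smaller batches flushed more often hold less data in memory at once.
  batch_size = 262144   # assumed to be bytes per batch (placeholder value)
  batch_timeout = 1     # assumed to be seconds before a partial batch is flushed

  # Throttle inside Vector so fewer requests are rejected by AWS.
  request_rate_limit_duration_secs = 1
  request_rate_limit_num = 5
  request_in_flight_limit = 5

  # Cap the in-memory buffer in front of the sink; "block" is assumed to
  # apply back-pressure upstream rather than drop events.
  [sinks.to_kinesis.buffer]
    type = "memory"
    num_items = 500
    when_full = "block"
```

If memory stops growing with smaller batches and a capped buffer, that points at queued Kinesis requests rather than a true leak; if it keeps growing, the cause is more likely elsewhere in the pipeline.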

Thanks so much! We'll dig in and let you know what we find. One of our near-term goals is to improve Vector's observability so it's easier to understand what's going on in cases like this.

Related: I think doing https://github.com/timberio/vector/issues/1499 could reduce the likelihood of actual memory leaks (as opposed to expected growth in memory usage, for example in buffers).

It looks like we originally had a leak with our tracing-subscriber 0.1.6 dependency, but this has been fixed via #1670, and #1635 can be used to track that.

That said, I don't think this leak is related, since we don't use deeply nested spans heavily enough to trigger the slab bug. I'm leaving this here for future reference.

I'm going to kick off some more tests for this in the next week; I'm just organizing deployments.

Hi @cetanu, I have good news. This should be fixed via https://github.com/timberio/vector/pull/1990. Feel free to reopen if not.

Interesting! I'll give this another go. Love your work ❤️
