Vector: Journald Source crashes after few minutes

Software Versions

$ vector --version

vector 0.4.0-dev

$ journalctl --version

systemd 241 (241)

+PAM +AUDIT +SELINUX +IMA +APPARMOR +SMACK +SYSVINIT +UTMP +LIBCRYPTSETUP +GCRYPT +GNUTLS +ACL +XZ +LZ4 +SECCOMP +BLKID +ELFUTILS +KMOD -IDN2 +IDN -PCRE2 default-hierarchy=hybrid

lsb_release -a

No LSB modules are available.

Distributor ID: Debian

Description: Debian GNU/Linux 9.11 (stretch)

Release: 9.11

Codename: stretch

Vector Config

The following is our vector.toml config file

data_dir = "/var/lib/vector"

#INPPUT

[sources.journald_myservice]

type = "journald"

units = ["myservice"]

[sources.nginx_access]

type = "file"

include = ["/var/log/nginx/access.log"]

ignore_older = 86400

[transforms.journald_myservice_json]

type = "json_parser"

drop_invalid = false

inputs = ["journald_myservice"]

#OUTPUT JOURNALD

[sinks.journald_es]

type = "elasticsearch"

host = "https://xxxxxxxxxxxxxx"

inputs = ["journald_myservice_json"]

index = "journald-%Y-%m-%d"

batch_size = 10000000

[sinks.journald_es.basic_auth]

user = "xxxxxxx"

password = "xxxxxxxxx"

#OUTPUT NGINX

[sinks.es]

type = "elasticsearch"

host = "https://xxxxxxxxxxxxxx"

inputs = ["nginx_access"]

index = "vector-%Y-%m-%d"

batch_size = 10000000

[sinks.es.basic_auth]

user = "xxxxxxx"

password = "xxxxxxxxx"

Note that myservice is a custom nodejs service, it creates arround 4000 log per minutes

Issue

There appears to be an issue with the journald source, When vector is started everything goes smoothly, few minutes later (2-5 minutes) I stop receiving logs from journald on elasticsearch, but I keep receiving logs from nginx(source=file),

Once I run

systemctl restart vector

I start receiving again, then only journald crashes after few minutes,

I tried running in verbose mode, with a console sinks, here's an extract of the logs the moment that journald source crashes:

..........

...........

{"_BOOT_ID":"3726528d4b5a461192a0f971d127e5ae","..........}

Nov 17 11:54:07.540 DEBUG sink{name="journald_es"}: vector::sinks::util::http: response. status=200 OK version=HTTP/1.1

Nov 17 11:54:08.485 DEBUG sink{name="journald_es"}: vector::sinks::util::http: sending request.

Nov 17 11:54:08.514 DEBUG sink{name="journald_es"}: vector::sinks::util::http: response. status=200 OK version=HTTP/1.1

Nov 17 11:54:08.629 DEBUG sink{name="es"}: vector::sinks::util::http: sending request.

Nov 17 11:54:08.658 DEBUG sink{name="es"}: vector::sinks::util::http: response. status=200 OK version=HTTP/1.1

Nov 17 11:54:09.652 DEBUG sink{name="es"}: vector::sinks::util::http: sending request.

Nov 17 11:54:09.686 DEBUG sink{name="es"}: vector::sinks::util::http: response. status=200 OK

Thanks.

All 32 comments

This is odd, thanks for reporting @soufiane-bouchaara. @bruceg wrote this source so I'll have him take a look and see what's going on here.

Thank you @binarylogic for your help 🙏

@soufiane-bouchaara, I think I might see the problem here. type = "json" is not a valid transform. It should be type = "json_parser". Ex:

# ...

[transforms.journald_myservice_json]

type = "json_parser"

inputs = ["journald_myservice"]

#...

If that was the error, though, you wouldn't see any output what-so-ever. In fact you should see an error upon booting that the type is unrecognized.

Dear @binarylogic , my bad I just wanted to simplify the example, I checked my config

type = "json_parser" is there could it be drop_invalid = false ?

Ok, that makes more sense. drop_invalid = false should be fine. You'll see a warning in your logs if there are invalid entries, but it should not break downstream processing (within Vector). Let's see what @bruceg has to say.

Also, you're logs look ok. You didn't see any errors or warning in your logs? And if @bruceg cannot reproduce, would you be open to joining our Slack to help debug quicker?

I noticed no warning during my debugging, and I repeated it several times, I will be more than happy to help

It appears you may be running a development version of Vector. Do you recall when you last updated the sources? Several fixes to the journald source have been applied recently that might affect your situation.

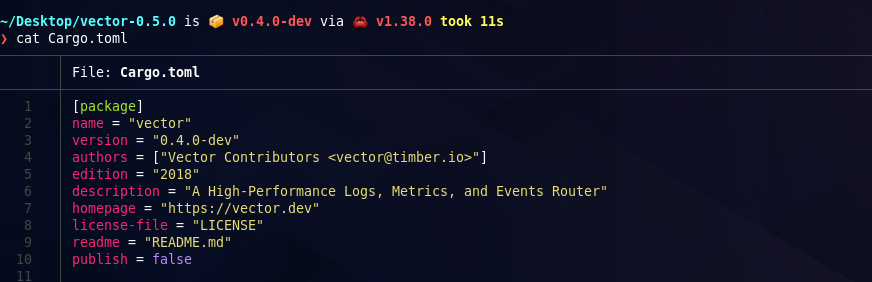

Dear @bruceg, Thank you for your answer, I checked the version of vector installed on the server :

$ vector --version

vector 0.4.0-dev

We installed vector using :

curl https://sh.vector.dev -sSf | sh

The installation was done in November, so I checked in Vector Release, the version 0.5 was released on Oct 10

So I think it's a packaging problem that comes from the installation link, correct me if I'm wrong

@bruceg @binarylogic I just ran on my local machine :

curl https://sh.vector.dev -sSf | sh

then

vector --version

vector 0.4.0-dev

I'm not getting the latest version,

So I downloaded the source manually open it and here a screenshot of what I get :

You are right, version 0.5.0 reported version 0.4.0-dev internally due to a glitch in the release process.

So I understand what the problem is, is this a problem that causes vector to crash (ie with a segmentation fault or something else), or that the journald source stops feeding new lines?

Are you able to install git and the rust compiler? If so, I would start with trying to see if the journald fixes that have been applied since the latest release resolve the issue for you.

@bruceg, As you mentioned before V0.5 must have many updates on journald, I'm not sure if updating to v0.5 will solve my problem,

I have git and rust compiler installed

As indicated, there have been several bugs resolved since the release of 0.5.0.

So, to build from git, clone this repo and build that:

git clone https://github.com/timberio/vector.git

cd vector

cargo build --release

Then use the newly built executable target/release/vector.

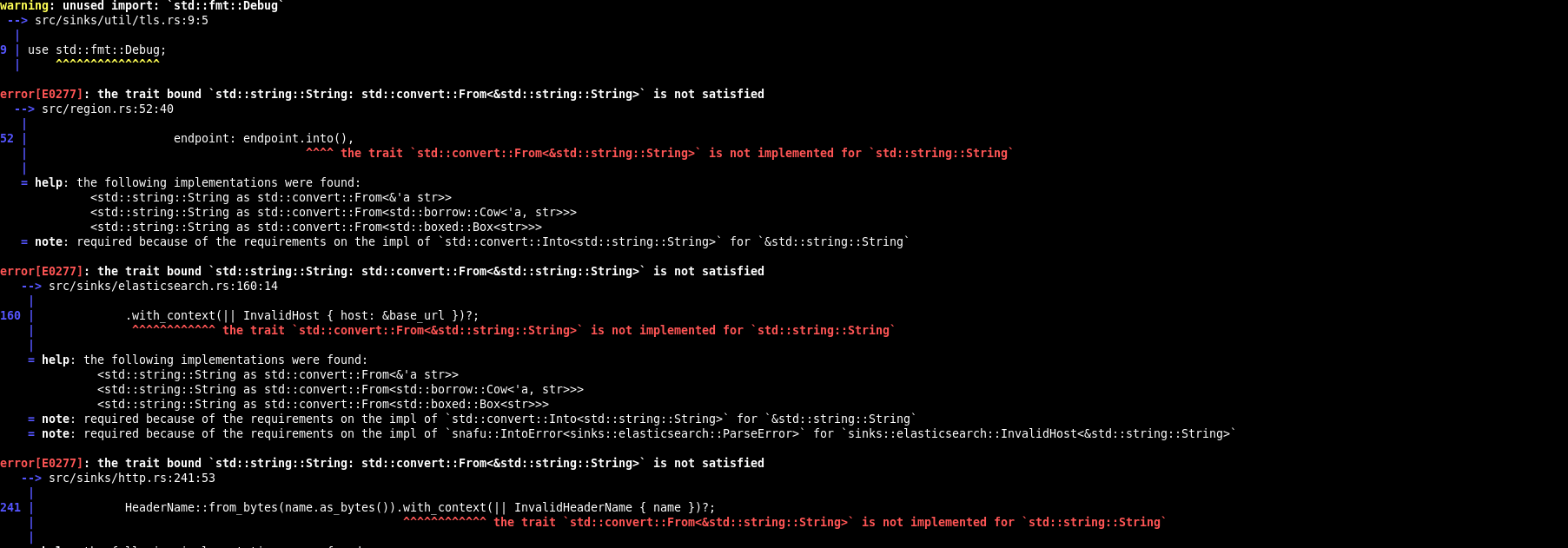

This is what I'm getting when the build is about to finish

Compiling tracing-tower v0.1.0 (https://github.com/tokio-rs/tracing#8720792d)

Compiling rusoto_credential v0.41.1

Compiling hyper-tls v0.3.2

Compiling hyperlocal v0.6.0

Compiling tower-hyper v0.1.1

Compiling shiplift v0.5.0 (https://github.com/LucioFranco/shiplift?branch=timber#e84e0047)

Compiling rusoto_logs v0.41.0

Compiling rusoto_s3 v0.41.0

Compiling rusoto_cloudwatch v0.41.0

Compiling rusoto_kinesis v0.41.0

warning: unused import: `std::fmt::Debug`

--> src/sinks/util/tls.rs:9:5

|

9 | use std::fmt::Debug;

| ^^^^^^^^^^^^^^^

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/region.rs:52:40

|

52 | endpoint: endpoint.into(),

| ^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/sinks/elasticsearch.rs:160:14

|

160 | .with_context(|| InvalidHost { host: &base_url })?;

| ^^^^^^^^^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

= note: required because of the requirements on the impl of `snafu::IntoError<sinks::elasticsearch::ParseError>` for `sinks::elasticsearch::InvalidHost<&std::string::String>`

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/sinks/http.rs:241:53

|

241 | HeaderName::from_bytes(name.as_bytes()).with_context(|| InvalidHeaderName { name })?;

| ^^^^^^^^^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

= note: required because of the requirements on the impl of `snafu::IntoError<sinks::http::BuildError>` for `sinks::http::InvalidHeaderName<&std::string::String>`

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/sinks/http.rs:243:18

|

243 | .with_context(|| InvalidHeaderValue { value })?;

| ^^^^^^^^^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

= note: required because of the requirements on the impl of `snafu::IntoError<sinks::http::BuildError>` for `sinks::http::InvalidHeaderValue<&std::string::String>`

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/sinks/prometheus.rs:166:74

|

166 | let opts = prometheus::Opts::new(name.clone(), name.clone()).namespace(namespace);

| ^^^^^^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

error[E0658]: use of unstable library feature 'iter_copied' (see issue #57127)

--> src/sinks/prometheus.rs:167:46

|

167 | let keys: Vec<_> = labels.keys().copied().collect();

| ^^^^^^

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/sinks/prometheus.rs:187:74

|

187 | let opts = prometheus::Opts::new(name.clone(), name.clone()).namespace(namespace);

| ^^^^^^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

error[E0658]: use of unstable library feature 'iter_copied' (see issue #57127)

--> src/sinks/prometheus.rs:188:46

|

188 | let keys: Vec<_> = labels.keys().copied().collect();

| ^^^^^^

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/sinks/prometheus.rs:211:18

|

211 | .namespace(namespace);

| ^^^^^^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

error[E0658]: use of unstable library feature 'iter_copied' (see issue #57127)

--> src/sinks/prometheus.rs:212:46

|

212 | let keys: Vec<_> = labels.keys().copied().collect();

| ^^^^^^

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/sinks/prometheus.rs:233:74

|

233 | let opts = prometheus::Opts::new(name.clone(), name.clone()).namespace(namespace);

| ^^^^^^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

error[E0658]: use of unstable library feature 'iter_copied' (see issue #57127)

--> src/sinks/prometheus.rs:234:46

|

234 | let keys: Vec<_> = labels.keys().copied().collect();

| ^^^^^^

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/sources/file.rs:140:35

|

140 | Regex::new(indicator).with_context(|| InvalidMessageStartIndicator { indicator })?;

| ^^^^^^^^^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

= note: required because of the requirements on the impl of `snafu::IntoError<sources::file::BuildError>` for `sources::file::InvalidMessageStartIndicator<&std::string::String>`

error[E0277]: the trait bound `std::string::String: std::convert::From<&std::string::String>` is not satisfied

--> src/sources/journald.rs:74:26

|

74 | unit.into()

| ^^^^ the trait `std::convert::From<&std::string::String>` is not implemented for `std::string::String`

|

= help: the following implementations were found:

<std::string::String as std::convert::From<&'a str>>

<std::string::String as std::convert::From<std::borrow::Cow<'a, str>>>

<std::string::String as std::convert::From<std::boxed::Box<str>>>

= note: required because of the requirements on the impl of `std::convert::Into<std::string::String>` for `&std::string::String`

error: enum variants on type aliases are experimental

--> src/sources/util/tcp.rs:152:13

|

152 | Self::SocketAddr(ref addr) => addr.fmt(f),

| ^^^^^^^^^^^^^^^^^^^^^^^^^^

error: enum variants on type aliases are experimental

--> src/sources/util/tcp.rs:153:13

|

153 | Self::SystemdFd(offset) => write!(f, "systemd socket #{}", offset),

| ^^^^^^^^^^^^^^^^^^^^^^^

error: enum variants on type aliases are experimental

--> src/sources/util/tcp.rs:160:9

|

160 | Self::SocketAddr(addr)

| ^^^^^^^^^^^^^^^^

error: aborting due to 17 previous errors

Some errors occurred: E0277, E0658.

For more information about an error, try `rustc --explain E0277`.

error: Could not compile `vector`.

To learn more, run the command again with --verbose.

What version of the rust compiler do you have installed? The sources require version 1.39.0 of the compiler, which is easiest managed through rustup.

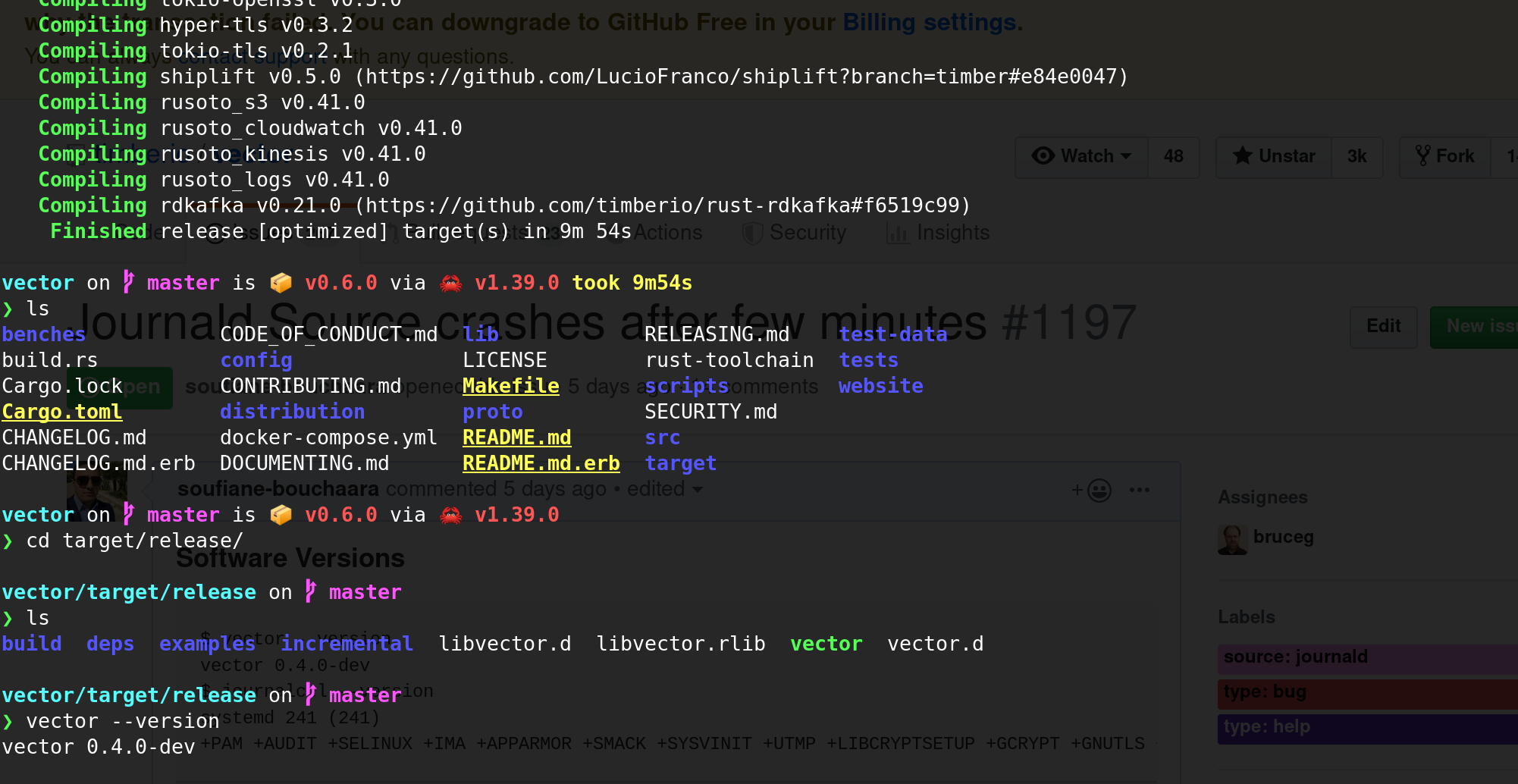

I did so, here's a screenshot

Ok, this looks like it built fine for you. However, it is not running the newly built version, probably because your $PATH (wisely) does not contain the current directory. To run it, use the explicit path (ie ./vector from in the release directory).

Hi, I built vector and I checked the version it was 0.6 , I ran ./vector and here's the following result

Nov 24 12:24:38.036 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:24:40.046 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:24:42.127 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:24:44.935 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:24:45.955 TRACE sink{name=es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:24:50.014 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:24:53.176 TRACE sink{name=es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:24:55.540 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:25:00.568 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:25:04.056 TRACE sink{name=es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:25:05.594 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:25:10.536 TRACE sink{name=es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Nov 24 12:25:10.611 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

^CNov 24 12:25:15.376 INFO vector: Shutting down.

Nov 24 12:25:15.377 TRACE sink{name=journald_es type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

I disabled rate limiting of journald in /etc/systemd/journald.conf but no clue,

@LucioFranco @lukesteensen is it possible our internal rate limit defaults are too low?

I disabled rate limiting of journald in

/etc/systemd/journald.confbut no clue,

Yeah, this is actually internal rate limiting that our elasticsearch sink does to avoid overwhelming the downstream ES service with requests. By default, it will allow 5 requests per second and up to 5 concurrent requests. When those limits are hit, the sink applies backpressure which would cause the journald source to temporarily stop reading data.

With your batch size of 10M, you should still see quite a lot of data getting through before any rate limiting takes effect.

@lukesteensen @binarylogic @bruceg

I tried with 10M and 100M , no clue, I checked the documentation about rate limiting

I pulled again from source and compile

and I updated my elasticsearch sink as follow

#OUTPUT JOURNALD

[sinks.journald_es]

type = "elasticsearch"

host = "https://xxxxxxxxxx:443"

inputs = ["myimput"]

index = "journald-%Y-%m-%d"

batch_size = 10490000 # default, bytes

batch_timeout = 1 # default, seconds

rate_limit_duration = 1 # default, seconds

rate_limit_num = 5 # default

request_in_flight_limit = 5 # default

request_timeout_secs = 60 # default, seconds

retry_attempts = 5 # default

retry_backoff_secs = 5 # default, seconds

Here's what I get :

Nov 26 05:56:43.440 INFO vector: Log level "info" is enabled.

Nov 26 05:56:43.440 INFO vector: Loading config. path="/etc/vector/vector.toml"

Nov 26 05:56:43.442 ERROR vector: Configuration error: unknown field `rate_limit_duration` for key `sinks.journald_es`

Please help

I'm sorry about that @soufiane-bouchaara, it seems like the documentation is off with some of the names of those options for the ES sink. I've opened #1262 to track a fix, and you should be able to use the actual option names listed there in the meantime.

Yes, apologies for that. Docs have been updated accordingly.

Thank you for the quick answers, @binarylogic I'm using ansible to deploy vector for more than 250 production instance , I'm going to do another retry to see if the journald source doesn't get affected by the rate limit

Thanks, @soufiane-bouchaara. One thing I was thinking about, if you notice that ES is failing to ingest data fast enough, it might be worth considering Kafka or Kinesis since it can handle much higher volumes with more variance. Then you can setup a Vector consumers behind Kakfa or Kinesis to flush to ES. Just food for thought if you find things are not as stable as you'd like.

Thanks @binarylogic for the advice I will check this option

@soufiane-bouchaara thanks for bringing all of this to our attention. I'm going to close this since it appears the issue has been resolved. If it has not, please reopen as we'd love to continue helping.

@binarylogic unfortunately I have exactly the same errors running in a docker container 0.7.2-alpine with defaults:

[sources.host_files]

type = "file"

include = [

"/host/var/log/auth.log",

"/host/var/log/syslog",

]

[sinks.logs_elasticsearch]

type = "elasticsearch"

inputs = ["host_files"]

host = "http://logs-elasticsearch:9200"

index = "logs-%F"

@sam701 thanks for letting us know. We'll attempt to reproduce. The journald source has been an interesting journey, but https://github.com/timberio/vector/pull/1611 should have resolved all of the errors here since we switched to leveraging journalctl. We'll dig in and see what's going on though.

@sam I just realized your config isn't even using the journald source 👀 . Would you mind being more specific on the exact issue you're seeing? I assume it's the rate limiting errors for the elasticsearch sink?

@binarylogic Sorry, my report may be misleading. Here are errors I have

Feb 06 20:43:51.701 INFO vector: Log level "info" is enabled.

Feb 06 20:43:51.702 INFO vector: Loading config. path="/etc/vector/vector.toml"

Feb 06 20:43:51.705 INFO vector: Vector is starting. version="0.7.2" git_version="v0.7.2" released="Fri, 31 Jan 2020 20:33:36 +0000" arch="x86_64"

Feb 06 20:43:51.738 INFO vector::topology: Running healthchecks.

Feb 06 20:43:51.738 INFO vector::topology: Starting source "host_files"

Feb 06 20:43:51.739 INFO vector::topology: Starting sink "logs_elasticsearch"

Feb 06 20:43:51.739 INFO source{name=host_files type=file}: vector::sources::file: Starting file server. include=["/host/var/log/auth.log", "/host/var/log/syslog", "/tmp/test"] exclude=[]

Feb 06 20:43:51.741 INFO source{name=host_files type=file}:file_server: file_source::file_server: Found file to watch. path="/host/var/log/syslog" file_position=677633

Feb 06 20:43:51.741 INFO source{name=host_files type=file}:file_server: file_source::file_server: Found file to watch. path="/host/var/log/auth.log" file_position=54879

Feb 06 20:43:51.749 INFO vector::topology::builder: Healthcheck: Passed.

Feb 06 20:43:52.746 TRACE sink{name=logs_elasticsearch type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Feb 06 20:43:54.806 TRACE sink{name=logs_elasticsearch type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Feb 06 20:44:03.023 TRACE sink{name=logs_elasticsearch type=elasticsearch}: tower_limit::rate::service: rate limit exceeded, disabling service

Got it. You should be able to fiddle with the request.* options. It's impossible for Vector to pick perfect defaults since clusters vary greatly in size. But you can raise the rate_limit_num option to something higher if your cluster can handle it.

👍 Solved with

[sinks.logs_elasticsearch.request]

rate_limit_num = 1000

Most helpful comment

👍 Solved with