Tfjs: Error: Invalid TF_Status: 8, Message: OOM when allocating tensor

My GPU is an NVIDIA GTX 970 and, in case it isn't clear from the issue title, I am using tfjs-node-gpu.

TensorFlow.js version

tfjs:"1.5.2"

tfjs-converter:"1.5.2"

tfjs-core:"1.5.2"

tfjs-data:"1.5.2"

tfjs-layers:"1.5.2"

tfjs-node:"1.5.2"

Browser version

ares:"1.15.0"

brotli:"1.0.7"

chrome:"78.0.3904.130"

electron:"7.1.7"

http_parser:"2.8.0"

icu:"64.2"

llhttp:"1.1.4"

modules:"75"

napi:"4"

nghttp2:"1.39.2"

node:"12.8.1"

openssl:"1.1.0"

Describe the problem or feature request

I get the following stacktrace after a while of using face-api.js's detectAllFaces method which utilizes tensorflow.js:

(node:18420) UnhandledPromiseRejectionWarning: Error: Invalid TF_Status: 8

Message: OOM when allocating tensor with shape[1,3688,3688,3] and type float on /job:localhost/replica:0/task:0/device:GPU:0 by allocator GPU_0_bfc

at Object.<anonymous> (<anonymous>)

at NodeJSKernelBackend.executeSingleOutput (C:\Users\Infinity\Documents\Code\NodeJS\face-rec\node_modules\@tensorflow\tfjs-node-gpu\dist\nodejs_kernel_backend.js:193:43)

at NodeJSKernelBackend.concat (C:\Users\Infinity\Documents\Code\NodeJS\face-rec\node_modules\@tensorflow\tfjs-node-gpu\dist\nodejs_kernel_backend.js:405:21)

at C:\Users\Infinity\Documents\Code\NodeJS\face-rec\node_modules\face-api.js\node_modules\@tensorflow\tfjs-core\dist\ops\concat_split.js:184:78

at C:\Users\Infinity\Documents\Code\NodeJS\face-rec\node_modules\@tensorflow\tfjs-node-gpu\node_modules\@tensorflow\tfjs-core\dist\engine.js:528:55

at C:\Users\Infinity\Documents\Code\NodeJS\face-rec\node_modules\@tensorflow\tfjs-node-gpu\node_modules\@tensorflow\tfjs-core\dist\engine.js:388:22

at Engine.scopedRun (C:\Users\Infinity\Documents\Code\NodeJS\face-rec\node_modules\@tensorflow\tfjs-node-gpu\node_modules\@tensorflow\tfjs-core\dist\engine.js:398:23)

at Engine.tidy (C:\Users\Infinity\Documents\Code\NodeJS\face-rec\node_modules\@tensorflow\tfjs-node-gpu\node_modules\@tensorflow\tfjs-core\dist\engine.js:387:21)

at kernelFunc (C:\Users\Infinity\Documents\Code\NodeJS\face-rec\node_modules\@tensorflow\tfjs-node-gpu\node_modules\@tensorflow\tfjs-core\dist\engine.js:528:29)

at C:\Users\Infinity\Documents\Code\NodeJS\face-rec\node_modules\@tensorflow\tfjs-node-gpu\node_modules\@tensorflow\tfjs-core\dist\engine.js:539:27

I've looked around for a possible solution but, in all honesty, I don't know how to implement the ones I found. They involved processing the images in _batches_, but I am using an API that is built on top of tfjs, so I don't know whether I could apply them, let alone how. I also read elsewhere that setting the TF_FORCE_GPU_ALLOW_GROWTH environment variable to true could potentially fix the problem; however, the only issue that has solved for me in the past was "Invalid TF_Status: 2. Message: Failed to get convolution algorithm. This is probably because cuDNN failed to initialize".

--

FWIW: the error (Invalid TF_Status: 8, Message: OOM when allocating tensor with shape...) occurs on the 78th image. On one run it got about 5 images further, failing around the 83rd image instead.
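To put the shape from the error message in perspective, the size of that single tensor can be worked out by hand. The sketch below assumes that "type float" means 32-bit floats (4 bytes per element); `tensorBytes` is a hypothetical helper written for this illustration, not part of tfjs.

```javascript
// Hypothetical helper: bytes needed for one dense tensor,
// i.e. the product of its dimensions times the bytes per element.
function tensorBytes(shape, bytesPerElement = 4) {
  return shape.reduce((count, dim) => count * dim, 1) * bytesPerElement;
}

// Shape taken from the OOM message; 4 bytes per element assumes float32.
const bytes = tensorBytes([1, 3688, 3688, 3]);
const mib = bytes / (1024 * 1024);

console.log(`${bytes} bytes (~${mib.toFixed(1)} MiB)`);
// prints "163216128 bytes (~155.7 MiB)"
```

At roughly 156 MiB each, about 23 buffers of this size would fill 3.5 GiB on their own, so tensors that stay alive across loop iterations could plausibly exhaust the card well before the 78th image.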

Code to reproduce the bug / link to feature request

const scrapped = [];
const faceapiOptions = new faceapi.SsdMobilenetv1Options({ minConfidence: 0.9 });

// file = json object {name, id} | allImagesInFolder = array of files (type object {name, id})
for (const file of allImagesInFolder) {
  // Get the image as a Buffer from Google Drive, and decode it using tensorflow
  const image = tf.node.decodeImage(await googleUtils.getImageAsBuffer(file.id));
  console.log(`Detecting faces in ${file.id} of size ${image.size}`);

  // Detect faces within the image and the landmarks for each face
  const detections = await faceapi.detectAllFaces(image, faceapiOptions).withFaceLandmarks(); // ERROR HERE
  if (detections.length == 1) {
    scrapped.push(detections[0]);
  }
}

For what it's worth, I created a simple benchmark for myself in order to test the time it took to detect faces using face-api.js. The following is a snippet that runs just fine and doesn't result in Error: Invalid TF_Status: 8. Why is it that the following snippet executes with no errors, but the snippet above does?

// Decode a single test image once and reuse the same tensor for every call
const testingImg = tf.node.decodeImage(await googleUtils.getImageAsBuffer('1wBKoDbjr8qGfVrzyhzFkiZ1i-O4FLhU3'));
const detection = await faceapi.detectAllFaces(testingImg).withFaceLandmarks();
console.log(detection);

const withoutPromise = [];
const withPromise = [];

// Queue 1000 detections of each kind on the same image tensor
for (let i = 0; i < 1000; i++) {
  withoutPromise.push(faceapi.detectAllFaces(testingImg));
  withPromise.push(faceapi.detectAllFaces(testingImg).withFaceLandmarks());
}

const start = new moment();

Promise.all(withoutPromise).then(() => {
  console.log(`Time to detect all faces w/o landmarks: ${moment.duration(new moment().diff(start)).asMilliseconds()} ms`);
});

Promise.all(withPromise).then(() => {
  console.log(`Time to detect all faces w/ landmarks: ${moment.duration(new moment().diff(start)).asMilliseconds()} ms`);
});

All 7 comments

I would like to add that when I run the facial recognition on just the one specific image that triggers the OOM error, it works fine by itself.

@Infinitay is it resolved? Can we close this issue?

Yes and no, really. Apparently 3.5 GB of VRAM isn't enough, as people with higher-end GPUs don't run into this issue. On the other hand, if I settle for a much slower alternative, tfjs-node on the CPU, which takes almost double the time, I no longer have the issue.

Since a new GPU is out of my budget, I had to settle for the double computation time.

@Infinitay try adding image.dispose() after calling faceapi.detectAllFaces. It might free up intermediate tensors faster.
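A minimal sketch of what that suggestion could look like in the loop from the question. `detectAndDispose` is a hypothetical helper name, not part of tfjs or face-api.js; it only assumes that the image object exposes a `dispose()` method, as tfjs tensors do. The `try`/`finally` releases the image even when detection throws.

```javascript
// Hypothetical helper: run an async detection on a decoded image tensor
// and always release the tensor afterwards, whether detection succeeds or fails.
async function detectAndDispose(image, detect) {
  try {
    return await detect(image);
  } finally {
    // Free the memory held by the decoded image before the next iteration.
    image.dispose();
  }
}

// Possible use inside the original loop (names taken from the question):
//
//   const image = tf.node.decodeImage(await googleUtils.getImageAsBuffer(file.id));
//   const detections = await detectAndDispose(image, (img) =>
//     faceapi.detectAllFaces(img, faceapiOptions).withFaceLandmarks());
```

To check whether tensors are still accumulating, logging `tf.memory().numTensors` once per iteration should show a count that stays roughly flat rather than growing with each image.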

I suppose I was doing this wrong. I tried it once by calling tf#dispose, but I would still end up with the same OOM error at times, if not crash Node.js completely.

This solves the issue I was having. Thank you very much Tafsiri!

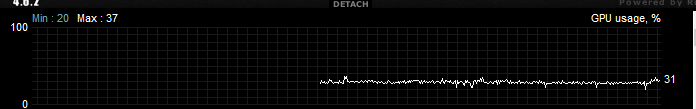

Also, would you happen to know if there is a way to force tfjs-node-gpu to take full priority on the GPU and utilize it at 100%? I noticed that it was only using about 30% of the GPU on average.

I would most likely interpret that graph as saying that your program doesn't saturate the GPU. If face-api accepts images in batches, you might be able to pass in more images at a time and thus utilize more of your GPU (you would need to look into the face-api API to find out whether this is doable).

I appreciate all the assistance, I will now close this issue.

Thanks again for all your help Rthadur and Tafsiri
