Tendermint: cs.wal growing without bound on gaia-8001

https://github.com/cosmos/cosmos-sdk/issues/2123

cs.wal is 15GB on my node


collating from riot chat:

seems like seed nodes are affected more, with >50GB usage

some nodes are relatively unaffected ~2GB usage

but most nodes are sitting around 15-20GB at the moment

$ du -h ~/.gaiad/

138M /home/johnzampolin/.gaiad/data/gaia.db

52K /home/johnzampolin/.gaiad/data/tx_index.db

20K /home/johnzampolin/.gaiad/data/mempool.wal

20K /home/johnzampolin/.gaiad/data/evidence.db

20G /home/johnzampolin/.gaiad/data/cs.wal

4.4M /home/johnzampolin/.gaiad/data/state.db

147M /home/johnzampolin/.gaiad/data/blockstore.db

20G /home/johnzampolin/.gaiad/data

8.0K /home/johnzampolin/.gaiad/config/gentx

704K /home/johnzampolin/.gaiad/config

20G /home/johnzampolin/.gaiad/

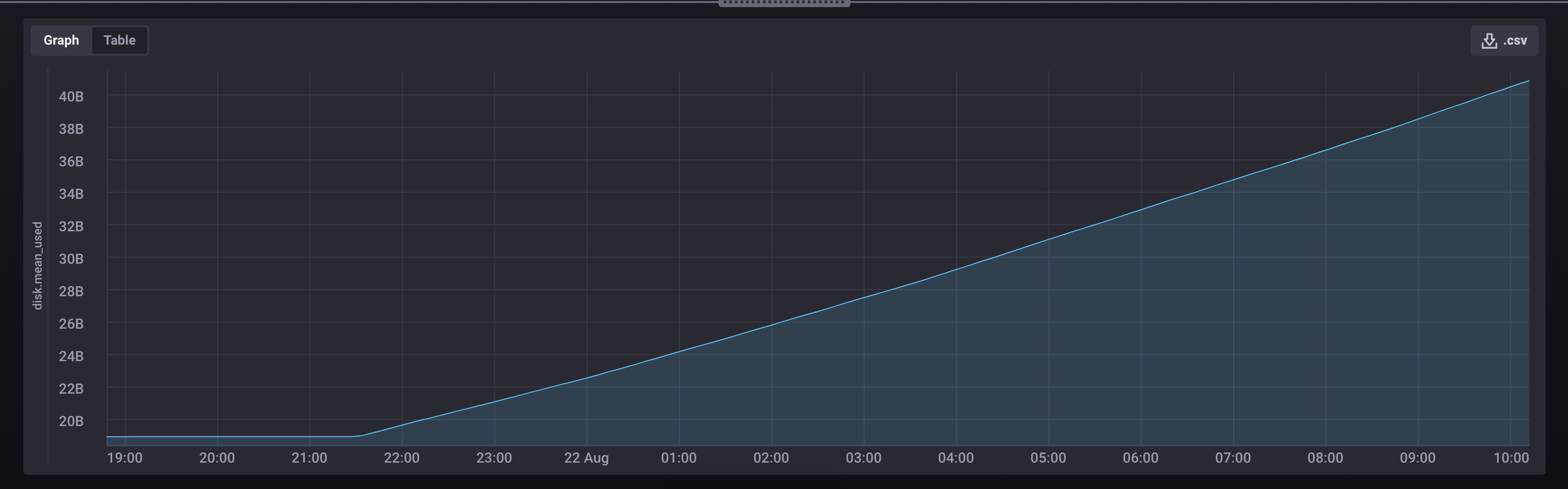

Growth over time:

From one sentry (using ncdu):

~/.gaiad/data ---------------------------------------------------

/..

18.0 GiB [##########] /cs.wal

146.5 MiB [ ] /blockstore.db

138.5 MiB [ ] /gaia.db

3.3 MiB [ ] /state.db

52.0 KiB [ ] /tx_index.db

20.0 KiB [ ] /mempool.wal

20.0 KiB [ ] /evidence.db

data folder:

140M data/gaia.db

1.9M data/state.db

60K data/tx_index.db

32G data/cs.wal

16K data/evidence.db

20K data/mempool.wal

149M data/blockstore.db

32G data

Validator seems unaffected. It's running behind 2 sentries. Sentry 1 is showing the numbers above; the other one is at half the file sizes of sentry 1.

@nckrtl Can you share your configuration file (~/.gaiad/config/config.toml) as a gist?

@ValarDragon could this be related to a change in https://github.com/tendermint/tendermint/pull/2113 ?

cs.wal size over 10 minutes, checked every minute (MB):

20180822-17:24:40 20519 /home/gaia/.gaiad/data/cs.wal

20180822-17:25:40 20551 /home/gaia/.gaiad/data/cs.wal

20180822-17:26:40 20574 /home/gaia/.gaiad/data/cs.wal

20180822-17:27:40 20605 /home/gaia/.gaiad/data/cs.wal

20180822-17:28:40 20631 /home/gaia/.gaiad/data/cs.wal

20180822-17:29:40 20657 /home/gaia/.gaiad/data/cs.wal

20180822-17:30:40 20683 /home/gaia/.gaiad/data/cs.wal

20180822-17:31:40 20710 /home/gaia/.gaiad/data/cs.wal

20180822-17:32:40 20741 /home/gaia/.gaiad/data/cs.wal

20180822-17:33:40 20766 /home/gaia/.gaiad/data/cs.wal
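For anyone who wants to collect the same kind of numbers, here is a minimal sketch of how such a log could be produced and summarized. It assumes `du -sm` is available (GNU and BSD `du` both support it) and that each log line has the size in MB as its second field, as in the samples above. The function names `sample_size` and `growth_per_interval` are made up for this example.

```shell
#!/bin/sh
# Print one sample line: timestamp, size in MB, path.
# Read-only: it only calls du on the directory passed in.
sample_size() {
    dir="$1"
    printf '%s %s %s\n' "$(date +%Y%m%d-%H:%M:%S)" "$(du -sm "$dir" | cut -f1)" "$dir"
}

# Read sample lines on stdin and print the average growth in MB
# per sampling interval: (last size - first size) / (samples - 1).
growth_per_interval() {
    awk 'NR == 1 { first = $2 }
         { last = $2 }
         END { if (NR > 1) printf "%.1f\n", (last - first) / (NR - 1) }'
}
```

A possible way to use it is to call `sample_size ~/.gaiad/data/cs.wal >> wal.log` once a minute (from cron or a sleep loop) and then run `growth_per_interval < wal.log`. For the ten samples above that works out to (20766 - 20519) / 9, roughly 27 MB per minute.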

The only difference between my first sentry and sentry 2 + validator is that the first sentry has seed_mode set to true. I just turned seed_mode off to see if it has any effect.

Do we use atomic write file to write to the wal? I only remember using it for priv validator and tests. If we don't, it's unlikely that #2113 caused this

I am only seeing the problem on my sentries, but not on my validator node.

Actually it is growing there on my validator as well, just at a much lower rate, and so I am not above 2 GB yet. My validator only has 3 peers while my sentries have 40.

cosmos@cosmos:~/.gaiad/data$

du -hs *

158M blockstore.db

13G cs.wal

20K evidence.db

147M gaia.db

12K mempool.wal

8.5M state.db

52K tx_index.db

Seed node London: brought up today with one persistent peer (1 core, 4 GB RAM)

du -h ~/.gaiad/

12G /home/cosmosseedlondon/.gaiad/data/cs.wal

168M /home/cosmosseedlondon/.gaiad/data/blockstore.db

20K /home/cosmosseedlondon/.gaiad/data/evidence.db

48K /home/cosmosseedlondon/.gaiad/data/tx_index.db

145M /home/cosmosseedlondon/.gaiad/data/gaia.db

9,0M /home/cosmosseedlondon/.gaiad/data/state.db

8,0K /home/cosmosseedlondon/.gaiad/data/mempool.wal

12G /home/cosmosseedlondon/.gaiad/data

608K /home/cosmosseedlondon/.gaiad/config

12G /home/cosmosseedlondon/.gaiad/

status sync

gaiacli status

{"node_info":{"id":"b7d8678daa644eba28174a9fec26577b7044a7f6","listen_addr":"54.36.163.150:26656","network":"gaia-8001","version":"0.23.0","channels":"4020212223303800","moniker":"melea-trust-seed-London","other":["amino_version=0.12.0","p2p_version=0.5.0","consensus_version=v1/0.2.2","rpc_version=0.7.0/3","tx_index=on","rpc_addr=tcp://0.0.0.0:26657"]},"sync_info":{"latest_block_hash":"E77306EF78D083757918AEE49F7A4D36DC9F8AD2","latest_app_hash":"FA126B530C346040D0A109991C0DCF548D449900","latest_block_height":"5937","latest_block_time":"2018-08-22T18:32:17.173650222Z","catching_up":false},"validator_info":{"address":"98C061003F3EBC21FFD4F3E8BF468D957F34B610","pub_key":{"type":"tendermint/PubKeyEd25519","value":"cXbnKMNJASsm8Sbx/F5YkbHI/D5bUwCpfFB01Eexzlk="},"voting_power":"0"}}

Sentry node London: brought up today with only one seed node configured (2 cores, 8 GB RAM)

du -h ~/.gaiad/

2,7G /home/cosmosvlondon/.gaiad/data/cs.wal

168M /home/cosmosvlondon/.gaiad/data/blockstore.db

16K /home/cosmosvlondon/.gaiad/data/evidence.db

60K /home/cosmosvlondon/.gaiad/data/tx_index.db

148M /home/cosmosvlondon/.gaiad/data/gaia.db

7,0M /home/cosmosvlondon/.gaiad/data/state.db

8,0K /home/cosmosvlondon/.gaiad/data/mempool.wal

3,0G /home/cosmosvlondon/.gaiad/data

596K /home/cosmosvlondon/.gaiad/config

3,0G /home/cosmosvlondon/.gaiad/

status sync

gaiacli status

{"node_info":{"id":"54e87a7baa9829b9579e1cf4f1d782800715ef5c","listen_addr":"51.38.71.229:26656","network":"gaia-8001","version":"0.23.0","channels":"4020212223303800","moniker":"melea-trust-London","other":["amino_version=0.12.0","p2p_version=0.5.0","consensus_version=v1/0.2.2","rpc_version=0.7.0/3","tx_index=on","rpc_addr=tcp://0.0.0.0:26657"]},"sync_info":{"latest_block_hash":"701E0104AB0EA5D6A66C3FBA0FA0B819D89E2E50","latest_app_hash":"ECC67D0B6CD2CDD97302ABAC469044C8B44512D4","latest_block_height":"5928","latest_block_time":"2018-08-22T18:30:56.1657453Z","catching_up":false},"validator_info":{"address":"4593BFD2D764244E2DE54C33ADE4F39D67C4BFBE","pub_key":{"type":"tendermint/PubKeyEd25519","value":"D8/X3Njt9xBS629sZkpzxWQgJEk7OVXVjRB5pyCdei0="},"voting_power":"0"}}

The only difference in config.toml is that one has a single persistent peer and the other has a single seed node.

Anyway, I can see that other nodes with the same setup as before also have a big wal, so it is not related to hardware or to the persistent peers / seed nodes in config.toml.

> Do we use atomic write file to write to the wal? I only remember using it for priv validator and tests. If we don't, it's unlikely that #2113 caused this

No, of course not. I'm sorry, I confused it with the autofile. Looks like we removed the loop that actually manages the log rotation: https://github.com/tendermint/tendermint/pull/2135/files#diff-70f4a8f7e82987d773349f21083cfa94R70

So the fix should be simple enough
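To illustrate what a rotation loop of this kind is responsible for, here is a rough sketch of the "total size" check only. This is not Tendermint's code (that is Go, in the PR linked above); it is a plain-text model under stated assumptions: the input is a list of rotated chunks, oldest first, one `name size_mb` pair per line, and the function name `chunks_to_prune` and its limit parameter are hypothetical. It only prints names and never touches any files.

```shell
#!/bin/sh
# Read "name size_mb" lines (rotated chunks, oldest first) on stdin.
# Print the names that would have to be dropped, oldest first, so that
# the remaining total is at most limit_mb. No files are read or deleted.
chunks_to_prune() {
    limit_mb="$1"
    awk -v limit="$limit_mb" '
        { name[NR] = $1; size[NR] = $2 + 0; total += size[NR] }
        END {
            for (i = 1; i <= NR && total > limit; i++) {
                print name[i]
                total -= size[i]
            }
        }'
}
```

For example, feeding it three 10 MB chunks with a limit of 15 prints the two oldest names. When nothing runs this check periodically, nothing is ever dropped and the directory just keeps growing, which matches the symptom in this thread.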

Ok I'm about to board a plane but I pushed a potential fix: https://github.com/cosmos/cosmos-sdk/releases/tag/v0.24.2-rc0

Looks like v0.24.2-rc0 fixes this issue for me! Thanks @ebuchman!

$ du -h ~/.gaiad

...

16M /home/johnzampolin/.gaiad/data/cs.wal

...

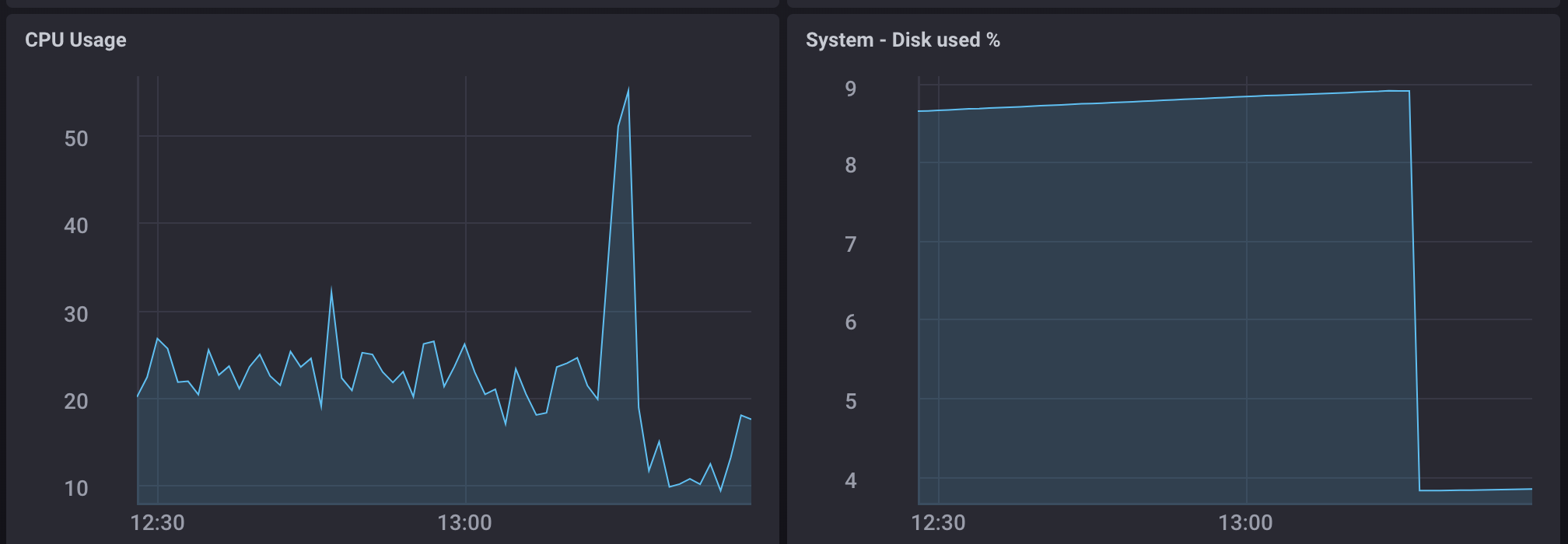

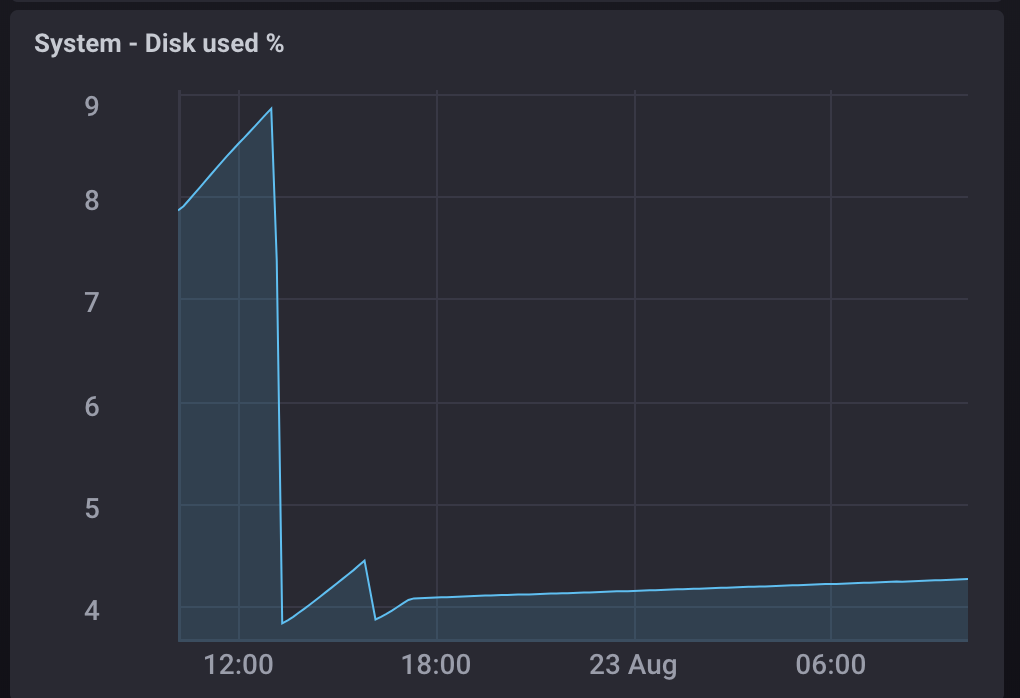

Graphs:

[gaia@sfo ~]$ gaiad version

0.24.2-0bf061b

[gaia@sfo ~]$ ls /home/gaia/.gaiad/data/cs.wal

wal wal.004 wal.009 wal.014 wal.019 wal.024 wal.029 wal.034 wal.039

wal.000 wal.005 wal.010 wal.015 wal.020 wal.025 wal.030 wal.035 wal.040

wal.001 wal.006 wal.011 wal.016 wal.021 wal.026 wal.031 wal.036 wal.041

wal.002 wal.007 wal.012 wal.017 wal.022 wal.027 wal.032 wal.037

wal.003 wal.008 wal.013 wal.018 wal.023 wal.028 wal.033 wal.038

[gaia@sfo ~]$ du -sh /home/gaia/.gaiad/data/cs.wal

460M /home/gaia/.gaiad/data/cs.wal

[gaia@sfo ~]$

20180822-22:03:31 Dir Size MB: 740 cs.wal File count: 69

20180822-22:04:31 Dir Size MB: 745 cs.wal File count: 70

20180822-22:05:31 Dir Size MB: 753 cs.wal File count: 70

20180822-22:06:31 Dir Size MB: 763 cs.wal File count: 71

20180822-22:07:31 Dir Size MB: 774 cs.wal File count: 72

20180822-22:08:31 Dir Size MB: 785 cs.wal File count: 73

20180822-22:09:31 Dir Size MB: 798 cs.wal File count: 75

@BaryonNetwork I'm also seeing similar:

$ du -h ~/.gaiad/

...

2.4G /home/johnzampolin/.gaiad/data/cs.wal

...

Looks like a restart wipes the wal however. I'll also update the forum post.

I seem to be running stable with this work around:

[gaia]$ crontab -e

*/5 * * * * rm /home/gaia/.gaiad/data/cs.wal/wal.*

The cron will clear out the wal.000 files but leave the wal file.

jackzampolin (01:20): Wouldn't recommend deleting the file while process is running. May cause failure.

Zaki: Your wal file should grow to ~1GB and then stop.

SD: My wal file on the upgraded node has been stable at ~1GB.

Me: the hotfix is also working on a new install on my node.

Before the hotfix, cs.wal varied from 42-47GB across my sentry nodes, and my public and private validators were at 17GB and 12GB respectively (unfortunately I didn't copy/paste their results for direct comparison).

Before hotfix:

du -h ~/.gaiad/

5.0M /root/.gaiad/data/state.db

52K /root/.gaiad/data/tx_index.db

220M /root/.gaiad/data/gaia.db

283M /root/.gaiad/data/blockstore.db

20K /root/.gaiad/data/mempool.wal

47G /root/.gaiad/data/cs.wal

20K /root/.gaiad/data/evidence.db

47G /root/.gaiad/data

624K /root/.gaiad/config

47G /root/.gaiad/

After Hotfix (same node):

du -h ~/.gaiad/

1.4M /root/.gaiad/data/state.db

40K /root/.gaiad/data/tx_index.db

211M /root/.gaiad/data/gaia.db

299M /root/.gaiad/data/blockstore.db

20K /root/.gaiad/data/mempool.wal

603M /root/.gaiad/data/cs.wal

20K /root/.gaiad/data/evidence.db

1.1G /root/.gaiad/data

636K /root/.gaiad/config

1.1G /root/.gaiad/

Public Validator:

du -h ~/.gaiad/

636K /root/.gaiad/data/state.db

40K /root/.gaiad/data/tx_index.db

212M /root/.gaiad/data/gaia.db

299M /root/.gaiad/data/blockstore.db

20K /root/.gaiad/data/mempool.wal

267M /root/.gaiad/data/cs.wal

20K /root/.gaiad/data/evidence.db

778M /root/.gaiad/data

604K /root/.gaiad/config

779M /root/.gaiad/

Private Validator

du -h ~/.gaiad/

5.4M /root/.gaiad/data/state.db

40K /root/.gaiad/data/tx_index.db

212M /root/.gaiad/data/gaia.db

301M /root/.gaiad/data/blockstore.db

20K /root/.gaiad/data/mempool.wal

48M /root/.gaiad/data/cs.wal

20K /root/.gaiad/data/evidence.db

564M /root/.gaiad/data

404K /root/.gaiad/config

565M /root/.gaiad/

For me this only works with a clean new install on a validator node.

After updating and running some tests on other nodes, I can't get it working properly.

Don't follow the steps below for now; in my opinion they don't help. Maybe try a clean new install instead.

Steps to upgrade to the hotfix (but this doesn't work):

Stop gaiad

cd go/src/github.com/cosmos/cosmos-sdk

git fetch --tags

git checkout v0.24.2-rc0

make get_vendor_deps

make install

start gaiad

Then, for the cs.wal problem, you need .....

du -h ~/.gaiad/

24G /home/cosmosvlondon/.gaiad/data/cs.wal

429M /home/cosmosvlondon/.gaiad/data/blockstore.db

Stop gaiad

cd .gaiad/data

rm -r cs.wal/*

du -h ~/.gaiad/

4,0K /home/cosmosvlondon/.gaiad/data/cs.wal

429M /home/cosmosvlondon/.gaiad/data/blockstore.db

cd ../

~/.gaiad$ cp -r data/ $HOME

gaiad unsafe_reset_all

Start gaiad

Wait for it to sync some blocks (almost 11 or 1)

Stop gaiad

sudo rm -r data/*

cd

cp -r data/* .gaiad/data

rm -rf data (or not)

Start gaiad

The node has to sync from the last block you backed up, and there are no cs.wal problems. For example:

du -h ~/.gaiad/

11M /home/cosmosvlondon/.gaiad/data/cs.wal

436M /home/cosmosvlondon/.gaiad/data/blockstore.db

gaiacli status

{"node_info":{"id":"54e87a7baa9829b9579e1cf4f1d782800715ef5c","listen_addr":"51.38.71.229:26656","network":"gaia-8001","version":"0.23.0","channels":"4020212223303800","moniker":"melea-trust-London","other":["amino_version=0.12.0","p2p_version=0.5.0","consensus_version=v1/0.2.2","rpc_version=0.7.0/3","tx_index=on","rpc_addr=tcp://0.0.0.0:26657"]},"sync_info":{"latest_block_hash":"206B91C8A6BE52580F20210CBEF8C59C8B17BF98","latest_app_hash":"A81C95B07B56E63C5D71D7E4E73527163634704D","latest_block_height":"15674","latest_block_time":"2018-08-23T14:07:18.185675383Z","catching_up":false},"validator_info":{"address":"4593BFD2D764244E2DE54C33ADE4F39D67C4BFBE","pub_key":{"type":"tendermint/PubKeyEd25519","value":"D8/X3Njt9xBS629sZkpzxWQgJEk7OVXVjRB5pyCdei0="},"voting_power":"0"}}

Now, when you see the cs.wal files growing up to 1GB, stop and start gaiad one more time.

Now the cs.wal files are OK.

Tested on a validator and two sentries; it works only with a clean new install on a validator node.

I had an issue with my initial cron post ^^: the asterisk at the end of wal. was not showing up. If you use that temporary workaround, be sure to specify only the files with a numeric extension (for example wal.101). The active write file should be the wal file without an extension. I have run stable overnight with this rm cron running every five minutes on my validator and sentry nodes. Also note I only run this with gaiad version 0.24.2-0bf061b.

Will also note that my node is stable at ~1GB wal. I had to rm -rf vendor and rebuild from scratch to get the benefit of the hotfix. Graph of disk usage below shows initial restart, wal growing unbounded again, rebuild and restart where hotfix is working:

I just read Zaki's post in the forum: "Expected behavior is for the wal file to grow ~1GB on v0.24.2 and then stabilize". I am removing my cron and testing now.

Yes, I am stable without rm any wal files after all. The middle column is cs.wal dir size in MB.

20180823-17:26:14 270 /home/gaia/.gaiad/data/cs.wal File count: 24

20180823-17:27:14 298 /home/gaia/.gaiad/data/cs.wal File count: 26

20180823-17:28:14 319 /home/gaia/.gaiad/data/cs.wal File count: 28

20180823-17:29:14 339 /home/gaia/.gaiad/data/cs.wal File count: 30

20180823-17:30:14 364 /home/gaia/.gaiad/data/cs.wal File count: 32

20180823-17:31:14 384 /home/gaia/.gaiad/data/cs.wal File count: 34

20180823-17:32:14 409 /home/gaia/.gaiad/data/cs.wal File count: 36

20180823-17:33:14 435 /home/gaia/.gaiad/data/cs.wal File count: 39

20180823-17:34:14 456 /home/gaia/.gaiad/data/cs.wal File count: 40

20180823-17:35:14 474 /home/gaia/.gaiad/data/cs.wal File count: 42

20180823-17:36:14 502 /home/gaia/.gaiad/data/cs.wal File count: 44

20180823-17:37:14 522 /home/gaia/.gaiad/data/cs.wal File count: 46

20180823-17:38:14 543 /home/gaia/.gaiad/data/cs.wal File count: 48

20180823-17:39:14 572 /home/gaia/.gaiad/data/cs.wal File count: 50

20180823-17:40:14 592 /home/gaia/.gaiad/data/cs.wal File count: 52

20180823-17:41:14 615 /home/gaia/.gaiad/data/cs.wal File count: 54

20180823-17:42:14 641 /home/gaia/.gaiad/data/cs.wal File count: 56

20180823-17:43:14 664 /home/gaia/.gaiad/data/cs.wal File count: 58

20180823-17:44:14 690 /home/gaia/.gaiad/data/cs.wal File count: 60

20180823-17:45:14 713 /home/gaia/.gaiad/data/cs.wal File count: 62

20180823-17:46:14 736 /home/gaia/.gaiad/data/cs.wal File count: 64

20180823-17:47:14 759 /home/gaia/.gaiad/data/cs.wal File count: 66

20180823-17:48:14 783 /home/gaia/.gaiad/data/cs.wal File count: 68

20180823-17:49:14 805 /home/gaia/.gaiad/data/cs.wal File count: 70

20180823-17:50:14 829 /home/gaia/.gaiad/data/cs.wal File count: 72

20180823-17:51:14 851 /home/gaia/.gaiad/data/cs.wal File count: 74

20180823-17:52:14 875 /home/gaia/.gaiad/data/cs.wal File count: 76

20180823-17:53:14 901 /home/gaia/.gaiad/data/cs.wal File count: 79

20180823-17:54:14 925 /home/gaia/.gaiad/data/cs.wal File count: 81

20180823-17:55:14 946 /home/gaia/.gaiad/data/cs.wal File count: 82

20180823-17:56:14 970 /home/gaia/.gaiad/data/cs.wal File count: 84

20180823-17:57:14 994 /home/gaia/.gaiad/data/cs.wal File count: 86

20180823-17:58:14 1017 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-17:59:14 1016 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:00:14 1016 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:01:14 1016 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:02:14 1018 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:03:14 1013 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:04:14 1025 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:05:14 1023 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:06:14 1015 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:07:14 1015 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:08:14 1021 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:09:14 1023 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:10:14 1025 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:11:14 1024 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:12:14 1024 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:13:14 1016 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:14:14 1013 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:15:14 1024 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:16:14 1014 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:17:14 1014 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:18:14 1013 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:19:14 1014 /home/gaia/.gaiad/data/cs.wal File count: 89

20180823-18:20:14 1018 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:21:14 1024 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:22:14 1016 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:23:14 1015 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:24:14 1016 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:25:14 1023 /home/gaia/.gaiad/data/cs.wal File count: 90

20180823-18:26:14 1021 /home/gaia/.gaiad/data/cs.wal File count: 90
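If you would rather not eyeball a log like this, here is a small sketch of a plateau check. It assumes the same line layout as above (size in MB as the second field). The function name `wal_is_stable` and the window and tolerance arguments are hypothetical choices, not anything gaiad or Tendermint provides.

```shell
#!/bin/sh
# Exit 0 if the last `window` samples on stdin stay within `tol_mb`
# of each other (max - min <= tol_mb); exit 1 otherwise, or when
# there are fewer than `window` samples.
wal_is_stable() {
    window="$1"
    tol_mb="$2"
    awk -v w="$window" -v tol="$tol_mb" '
        { s[NR] = $2 + 0 }
        END {
            if (NR < w) exit 1
            min = s[NR]; max = s[NR]
            for (i = NR - w + 1; i <= NR; i++) {
                if (s[i] < min) min = s[i]
                if (s[i] > max) max = s[i]
            }
            if (max - min <= tol) exit 0
            exit 1
        }'
}
```

Something like `wal_is_stable 10 15 < wal.log && echo stable` would then report whether the last ten samples moved by 15 MB or less. On the log above, the samples from 17:58 onward stay between 1013 and 1025, while the earlier ones climb by 20 MB or more per minute.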

A new install works 100% on 5 servers, syncing from block 0.

Updating doesn't work.

Install like this:

go get github.com/cosmos/cosmos-sdk

cd go/src/github.com/cosmos/cosmos-sdk

git fetch --tags

git checkout v0.24.2-rc0

make get_tools

make get_vendor_deps

make install

gaiad init --name melea

vi .gaiad/config/config.toml (add seeds and make your setup)

start gaiad

Hat tip to https://github.com/tendermint/tendermint/pull/2244 that caught this error but maybe didn't communicate it well enough.