Expected behavior

I have a simple application that I'd like to deploy with skaffold. Specifically, my application is a kind: Job.

Actual behavior

If I deploy with the run command, everything works well. If I deploy with dev, the first deployment works, but after I modify the source code and skaffold tries to apply the change, I get this error:

The Job "getting-started" is invalid: spec.template: Invalid value: core.PodTemplateSpec{ObjectMeta:v1.ObjectMeta{Name:"", GenerateName:"", Namespace:"", SelfLink:"", UID:"", ResourceVersion:"", Generation:0, CreationTimestamp:v1.Time{Time:time.Time{wall:0x0, ext:0, loc:(*time.Location)(nil)}}, DeletionTimestamp:(*v1.Time)(nil), DeletionGracePeriodSeconds:(*int64)(nil), Labels:map[string]string{"controller-uid":"a2085887-9cb4-11e8-9dca-02b390d668c6", "job-name":"getting-started"}, Annotations:map[string]string(nil), OwnerReferences:[]v1.OwnerReference(nil), Initializers:(*v1.Initializers)(nil), Finalizers:[]string(nil), ClusterName:""}, Spec:core.PodSpec{Volumes:[]core.Volume(nil), InitContainers:[]core.Container(nil), Containers:[]core.Container{core.Container{Name:"getting-started", Image:"224646542506.dkr.ecr.us-west-2.amazonaws.com/arraiy/roto:d22a845-dirty-1ee9974", Command:[]string(nil), Args:[]string(nil), WorkingDir:"", Ports:[]core.ContainerPort(nil), EnvFrom:[]core.EnvFromSource(nil), Env:[]core.EnvVar(nil), Resources:core.ResourceRequirements{Limits:core.ResourceList(nil), Requests:core.ResourceList(nil)}, VolumeMounts:[]core.VolumeMount(nil), VolumeDevices:[]core.VolumeDevice(nil), LivenessProbe:(*core.Probe)(nil), ReadinessProbe:(*core.Probe)(nil), Lifecycle:(*core.Lifecycle)(nil), TerminationMessagePath:"/dev/termination-log", TerminationMessagePolicy:"File", ImagePullPolicy:"IfNotPresent", SecurityContext:(*core.SecurityContext)(nil), Stdin:false, StdinOnce:false, TTY:false}}, RestartPolicy:"OnFailure", TerminationGracePeriodSeconds:(*int64)(0xc425c72988), ActiveDeadlineSeconds:(*int64)(nil), DNSPolicy:"ClusterFirst", NodeSelector:map[string]string(nil), ServiceAccountName:"", AutomountServiceAccountToken:(*bool)(nil), NodeName:"", SecurityContext:(*core.PodSecurityContext)(0xc4409610c0), ImagePullSecrets:[]core.LocalObjectReference(nil), Hostname:"", Subdomain:"", Affinity:(*core.Affinity)(nil), SchedulerName:"default-scheduler", 
Tolerations:[]core.Toleration(nil), HostAliases:[]core.HostAlias(nil), PriorityClassName:"", Priority:(*int32)(nil), DNSConfig:(*core.PodDNSConfig)(nil)}}: field is immutable

WARN[0051] Skipping Deploy due to error: deploying manifests: exit status 1

Skaffold YAML

To try this, I started from the "getting-started" example but modified k8s-pod.yaml:

apiVersion: batch/v1
kind: Job
metadata:
  name: getting-started
spec:
  template:
    spec:
      containers:
      - name: getting-started
        image: gcr.io/k8s-skaffold/skaffold-example
      restartPolicy: OnFailure

Information

- Skaffold version: 0.11.0 and 0.10.0

- Operating system: Linux Ubuntu 16.04.4 LTS

Steps to reproduce the behavior

skaffold dev

All 9 comments

I also would like to run a job using skaffold. My use case is for database seeding and migrations. For the moment we're just using a 'restartPolicy: Never' pod.

Just so that I understand this better - @bryanlarsen you would trigger the database seeding and migration task as a separate job before the app is deployed?

@lenlen - can you tell me more about your use case for a job?

I would also be interested in some sort of better support here to enable us to run one-off jobs as part of the deployment process.

We have a migration process that runs with each release of our code that updates schema. Ideally we'd like to do the following in our flows:

- skaffold dev: Used by developers and triggers the migration Job on the deploy step so we can ensure the DB (or any other stateful dependency) is set up correctly

- skaffold run: Used in CI/CD and triggers the migration Job on the deploy step to run any incremental schema changes (or other state migration related tasks) on deploy

Our current workaround is a pod that runs the migration script that is deployed alongside the application as @bryanlarsen suggested. This works but has a few limitations:

- We had to add a step in our CI pipeline that removes the pod before we run skaffold to ensure the migration script runs on every deploy

- We had some issues when spinning up new environments with skaffold dev since the development database service takes some time to provision. We have been using the restartPolicy: OnFailure to get around this issue for now. We would prefer the more robust retry / reschedule logic that the Job workload provides.

I think one possibility (not sure if this would be ideal) here is providing hooks that would let user-defined scripts run before/after each step of the deployment process. That level of flexibility would open up a lot of doors for more intricate integrations over time. For this particular issue, it would let us set up a script to clear out existing Jobs before redeploying.
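As a rough illustration of the kind of script such a hook could run, here is a minimal sketch that deletes an existing Job before a redeploy. The function name clear_job and the KUBECTL override variable are hypothetical, introduced only for this example. Skaffold hooks are not assumed to exist here, so the script would be called manually or from CI.

```shell
# Hypothetical pre-deploy cleanup: delete an existing Job so that the next
# apply creates it fresh instead of patching its immutable spec.template.

# KUBECTL is an assumed override so CI can point at a specific binary.
KUBECTL="${KUBECTL:-kubectl}"

# clear_job NAME [NAMESPACE]
# Deletes the named Job; succeeds even when the Job does not exist.
clear_job() {
  local name="$1"
  local namespace="${2:-default}"
  "$KUBECTL" delete job "$name" --namespace "$namespace" --ignore-not-found
}
```

With the manifest from this issue, a CI step could then run something like `clear_job getting-started && skaffold run`.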

I'm wondering if you tried using init containers? Would that be sufficient for your use cases?

@priyawadhwa as we discussed, let's attempt to deploy the k8s artifacts one by one and run kubectl delete -f on the ones that fail with an error message containing "field is immutable"
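The approach proposed in this comment could be sketched roughly as follows. This is only an illustration of the idea in shell, not how skaffold implements it. The function names and the KUBECTL override variable are hypothetical, and it assumes each manifest file is applied separately.

```shell
# Sketch: apply one manifest, and if the API server rejects the change
# because a field is immutable, delete the objects and apply again.

# KUBECTL is an assumed override, useful for testing with a stub.
KUBECTL="${KUBECTL:-kubectl}"

# is_immutable_error TEXT
# Succeeds when the given kubectl output reports an immutable field.
is_immutable_error() {
  printf '%s\n' "$1" | grep -q 'field is immutable'
}

# apply_or_recreate FILE
# Applies FILE; on an immutable-field error, deletes and re-applies it.
# Any other error is printed to stderr and reported as a failure.
apply_or_recreate() {
  local manifest output
  manifest="$1"
  if output="$("$KUBECTL" apply -f "$manifest" 2>&1)"; then
    printf '%s\n' "$output"
    return 0
  fi
  if is_immutable_error "$output"; then
    "$KUBECTL" delete -f "$manifest" && "$KUBECTL" apply -f "$manifest"
  else
    printf '%s\n' "$output" >&2
    return 1
  fi
}
```

Deleting and recreating a Job discards its previous pods and status, so this trade-off is only reasonable for workloads that are safe to rerun.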

/cc @r2d4

This should be fixed by #940

@balopat I get the same error when re-deploying a job which was already deployed using skaffold run (I guess this was fixed for skaffold dev). Looks like someone else is also getting the error - mentioned here: https://github.com/GoogleContainerTools/skaffold/pull/940#issuecomment-547389706

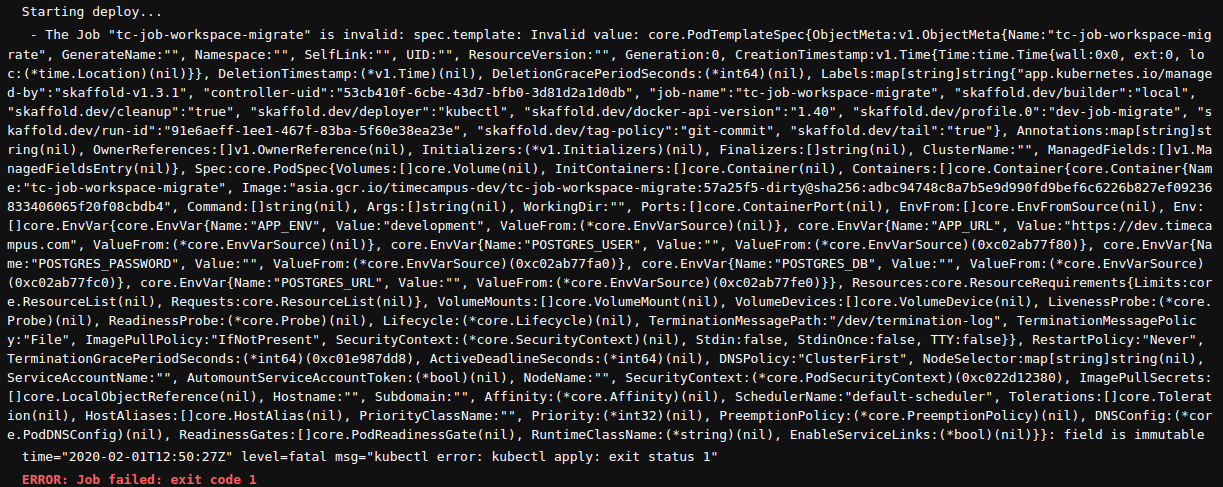

Screenshot below:

In my case, I don't think init containers will work, since I want the job to run only once per deployment, not for every pod. If I am not wrong, with init containers the job would run before every pod starts.

skaffold dev works this way because --force=true is its default; for skaffold run you have to specify --force=true explicitly. Does that solve it for you @tvvignesh?

@balopat Thanks. It seems to work with --force set so far, but I still have one doubt, since as I see it kubectl itself has not implemented this completely. Related issue here: https://github.com/kubernetes/kubernetes/issues/87747

Will that work in skaffold?
