Rawtherapee: Support for saving 16-bit floating-point or 32-bit TIFFs

Originally reported on Google Code with ID 2374

With regards to issue 2369 which brings read support for 16-bit, 24-bit and 32-bit floating-point

DNG files, it would be nice to have the ability to save 16-bit floating-point TIFFs

(and 24-bit and 32-bit ones?).

Update 2016-08-09:

The referenced old issue 2369 is new issue #2353

Reported by entertheyoni on 2014-05-08 05:47:04


I'm working on this.

Reported by natureh.510 on 2014-05-08 17:44:42

- Status changed: Started
- Labels added: OpSys-All

Just curious...

How many discrete levels can 16-bit float handle?

If I understand what happens, it should be 16384, distributed as 1024 levels for each stop. This looks equivalent to 14-bit int with gamma 2.0 encoding. Am I missing something?

Reported by iliasgiarimis on 2014-05-08 18:41:30

Ahh sorry... 1 sign bit, 5-bit exponent and 10-bit mantissa, so 2*32*1024 = 65536.

Equivalent to 16-bit int gamma 2.0 encoded?

Reported by iliasgiarimis on 2014-05-08 18:55:27
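
To put numbers on the question above, assuming the standard IEEE 754 half-precision layout (1 sign bit, 5 exponent bits, 10 mantissa bits): not every bit pattern is a usable level, because the all-ones exponent is reserved for infinity and NaN. The following is only a counting sketch; the function names are made up for illustration.

```cpp
#include <cstdint>

// IEEE 754 binary16 layout: 1 sign bit, 5 exponent bits, 10 mantissa bits.
constexpr int kExponentBits = 5;
constexpr int kMantissaBits = 10;

// Finite, non-negative half values (zero and subnormals included).
// The all-ones exponent (31) is reserved for infinity and NaN, so 31 exponents remain.
constexpr std::uint32_t finiteNonNegativeHalfCodes()
{
    return ((1u << kExponentBits) - 1u) * (1u << kMantissaBits);
}

// Half values in [0.0, 1.0]: biased exponents 0..14 cover [0, 1),
// plus one extra code for 1.0 itself (biased exponent 15, mantissa 0).
constexpr std::uint32_t halfCodesInUnitRange()
{
    return 15u * (1u << kMantissaBits) + 1u;
}

static_assert(finiteNonNegativeHalfCodes() == 31744, "31 exponents x 1024 mantissas");
static_assert(halfCodesInUnitRange() == 15361, "15 exponents x 1024 mantissas, plus 1.0");
```

Each normal exponent (one stop) gets 1024 levels, which matches the "1024 levels for each stop" estimate; the difference from a gamma-encoded integer is that this spacing holds per stop down to the subnormal range.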

I can't answer your question, Ilias, but it'll save to 32-bit fp, so it's worth the effort.

Reported by natureh.510 on 2014-05-10 23:22:25

One question: following the same thinking as for the HDR DNG patch, can I switch the internal output image type from IImage16 to IImagefloat, increasing the memory footprint (the conversion to 16 bit would be done at serialisation time), or should I handle two image formats through templates to use less memory for standard output (8/16 bit, i.e. 98% of cases)?

I've followed the second route so far, but the first one would be simpler to code.

Anyway, if I manage to finish the patch for the second solution, the question will be moot.

Reported by natureh.510 on 2014-05-10 23:40:15
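
The first route described above (keep the output image in float and convert to 16 bit only while writing) could look roughly like this. It is only a sketch: `floatRowTo16` is a hypothetical helper, not a function from the patch, and it assumes the float samples are scaled to [0, 65535].

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// Hypothetical helper: convert one row of float samples, assumed to be scaled
// to [0, 65535], into 16-bit integers at serialisation time.
std::vector<std::uint16_t> floatRowTo16(const std::vector<float>& row)
{
    std::vector<std::uint16_t> out;
    out.reserve(row.size());

    for (float v : row) {
        // Clamp before converting so out-of-range values cannot wrap around.
        // The negated comparison also sends NaN to 0.
        if (!(v > 0.f)) {
            v = 0.f;
        } else if (v > 65535.f) {
            v = 65535.f;
        }

        assert(v >= 0.f && v <= 65535.f);
        out.push_back(static_cast<std::uint16_t>(std::lround(v)));
    }

    return out;
}
```

The trade-off is the one named in the comment: the float image costs twice the memory of a 16-bit one, and the conversion cost moves into the save path.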

Hombre, I think, memory footprint doesn't matter at this stage.

Reported by [email protected] on 2014-05-11 00:35:28

Do we also care about the LCMS transform speed? It probably won't be the same when working with INT_16 and FLT values. I haven't quantified the difference though.

Reported by natureh.510 on 2014-05-11 01:19:00

#4, Hombre, my question is not about usefulness but about understanding the differences.

I think that even the "half float" format will be useful because the data will be linear, with no gamma function needed, so maybe the best accuracy and speed. No idea about compressibility though; we have to test how it goes.

Then there is the point that downsampling in linear space is better :) https://code.google.com/p/rawtherapee/issues/detail?id=2286#c1

Reported by iliasgiarimis on 2014-05-11 04:25:45

Working in pure float (not mixed with int16 as now) also makes it easier to make a vectorized

version for resizing.

Reported by [email protected] on 2014-05-11 11:19:49

Sounds like a change which would need significant testing. Hombre is it OK with you

if this becomes a 4.2 patch, and not a 4.1 one?

Reported by entertheyoni on 2014-05-11 16:04:12

Sure.

Reported by natureh.510 on 2014-05-11 22:12:20

- Labels added: 4.2

Ok, it's enough now!

I need someone to help me on how to use this shitty libtiff library!

Here is a patch that tries to add new TIFF output formats (32-bit float, LogLuv24, LogLuv32).

The biggest problem is in defining the BitsPerSample and SampleFormat tags because they have a variable length (rtengine/imageio.h, e.g. line 1129), as well as the strip settings (if necessary; line 1164).

One of the two save methods has been removed; createTIFFHeader from rtexif/rtexif.cc may be removed now, as everything occurs in imageio.cc. However, it shows an alternate way of creating a TIFF header...

The patch is not final (but quite close), but I need to be able to save proper and coherent TIFF tags before debugging the data format itself.

Moreover, do we have to save ICC profiles in the float and LogLuv files?

And lab2Imagefloat (formerly known as lab2rgb16 & 16b) in iplab2rgb.cc does handle a gamma transform for 16-bit images. Do we have to skip gamma for float and LogLuv images, given that the user can select a linear output in the GUI?

Reported by natureh.510 on 2014-05-24 10:10:51

- _Attachment: 2374-05WIP.patch_

Hombre, one thing about line 1165 of rtengine/imageio.cc:

TIFFSetField (out, TIFFTAG_STRIPBYTECOUNTS, width*height*getSampleSize());

Shouldn't you use explicit 64bit datatypes in the calculation to avoid int overflow

with big files?

Ingo

Reported by [email protected] on 2014-05-24 11:13:54
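
To illustrate the overflow concern: when `width`, `height` and the sample size are all `int`, the product is evaluated in `int` and can overflow before the result is ever widened. Casting only the last operand does not help, because `width*height` is still multiplied in `int` first. A minimal sketch that widens the first operand (the function name and parameters are illustrative, not taken from the patch, and non-negative dimensions are assumed):

```cpp
#include <cstdint>

// Illustrative helper: byte size of an uncompressed image.
// Widening the operands makes every multiplication happen in 64 bits.
// Assumes non-negative arguments.
constexpr std::uint64_t imageByteCount(int width, int height, int bytesPerPixel)
{
    return static_cast<std::uint64_t>(width)
           * static_cast<std::uint64_t>(height)
           * static_cast<std::uint64_t>(bytesPerPixel);
}

// 30000 x 30000 pixels with 3 float samples (12 bytes) per pixel
// needs more than 32 bits to express the byte count.
static_assert(imageByteCount(30000, 30000, 12) == 10800000000ULL, "needs 64 bits");
```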

The TIFF standard expects a 16- or 32-bit value for this tag. We could set it to 32 bit (LONG) and divide the image into several strips if the image is really big, but casting the result from getSampleSize to uint32 doesn't solve anything (yet it should be included in the patch).

If anyone knows how to set strips (i.e. several strips) using the libtiff functions,

let me know!

When saving a float TIFF image, I get a nice

"_TIFFVSetField: /media/jcf/Photos/Test/DrSlony 32 bits TIFF/convertis/test.tif: Invalid

tag "StripByteCounts" (not supported by codec)"

error, which is quite upsetting since StripByteCounts is part of the standard tag!

(AFAIK)

... followed by "TIFFWriteBufferSetup: No space for output buffer."

Reported by natureh.510 on 2014-05-24 11:54:44
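
On splitting a large image into several strips: libtiff is generally used by setting the RowsPerStrip tag and writing scanlines, leaving StripOffsets and StripByteCounts to the library. Treat that as an assumption to check against the libtiff version in use. What remains for the caller is choosing a row count, which is plain arithmetic. The sketch below contains no libtiff calls; `rowsPerStrip`, `stripCount` and the byte budget are hypothetical names and values for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

// Illustrative helper: how many rows fit into one strip without exceeding
// maxStripBytes. Returns at least 1 so that a very wide row still gets a strip.
std::uint32_t rowsPerStrip(std::uint32_t width, std::uint32_t bytesPerPixel,
                           std::uint64_t maxStripBytes)
{
    const std::uint64_t rowBytes = static_cast<std::uint64_t>(width) * bytesPerPixel;

    if (rowBytes == 0) {
        return 1;
    }

    const std::uint64_t rows = maxStripBytes / rowBytes;
    const std::uint64_t clamped =
        std::min<std::uint64_t>(std::max<std::uint64_t>(rows, 1), 0xFFFFFFFFu);
    return static_cast<std::uint32_t>(clamped);
}

// Number of strips needed to cover the image (rounding up).
std::uint32_t stripCount(std::uint32_t height, std::uint32_t rows)
{
    assert(rows > 0);
    const std::uint64_t total = (static_cast<std::uint64_t>(height) + rows - 1) / rows;
    return static_cast<std::uint32_t>(total);
}
```

For example, a 6000 x 4000 image with 12 bytes per pixel and an 8 MiB budget gives 116 rows per strip and 35 strips, each far below the 32-bit limit of a classic TIFF byte count.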

Ah, ok, I read here that it should be 64 bit. http://www.remotesensing.org/libtiff/v4.0.0.html

These TIFF tags require a 64-bit type as an argument in libtiff 4.0.0:

TIFFTAG_FREEBYTECOUNTS

TIFFTAG_FREEOFFSETS

TIFFTAG_STRIPBYTECOUNTS

TIFFTAG_STRIPOFFSETS

TIFFTAG_TILEBYTECOUNTS

TIFFTAG_TILEOFFSETS

but I don't know which libtiff you use (here it's 3.9.5 and so not 64 bit)

Ingo

Reported by [email protected] on 2014-05-24 12:46:44

I get this when I try to save to float TIFF

_TIFFVSetField: H:/aaaaa_new_cameras/converted\CRW_2090-1.tif: Invalid tag "StripByteCounts"

(not supported by codec).

H:/aaaaa_new_cameras/converted\CRW_2090-1.tif: Integer overflow in TIFFVStripSize.

Reported by [email protected] on 2014-05-24 12:50:40

I must have outdated documentation then >:(

Where the hell is all this centralized? libtiff seems to support the official Tiff

standard (where to find the most up-to-date one?) and the Big-TIFF changes.

Without proper documentation, no code (at least from me, sorry).

Reported by natureh.510 on 2014-05-24 12:53:25

#define TIFF_VERSION_CLASSIC 42

#define TIFF_VERSION_BIG 43

What to do with that?

Reported by natureh.510 on 2014-05-24 12:56:06

TIFFSetField (out, TIFFTAG_STRIPBYTECOUNTS, width*height*(uint64)getSampleSize());

...same error.

Return value from TIFFVersion: "LIBTIFF, Version 4.0.2"

What is yours?

Reported by natureh.510 on 2014-05-24 13:16:48

How is this going? I am interested in helping here, but I get merge problems with the

last commit. Hombre, can you post an updated patch?

Also, the "not supported by codec" error may mean that saving to floating point data

is not supported with strips. Try using tiles instead.

About documentation:

- TIFF 6.0 standard: http://partners.adobe.com/public/developer/en/tiff/TIFF6.pdf

Reported by jcelaya on 2014-08-06 17:12:35

Re #20: Here is the updated patch to apply to TIP. Thanks for any help jcelaya.

Reported by natureh.510 on 2014-08-06 20:13:31

Sorry, the previous patch missed 2 files. Here is the correct one.

Reported by natureh.510 on 2014-08-06 21:47:57

- _Attachment: 2374-06WIP-complete.patch_

Oops, I've just raised an issue https://github.com/Beep6581/RawTherapee/issues/4190, asking for save-as-floating-point, not realising it's already an issue. Sorry.

Hi, I just pushed an experimental branch tiff32float that allows saving in 32-bit floating-point format.

Please note that I had to change quite a few things, so I might have broken something -- it needs to be tested extensively!

diff --git a/rtgui/main-cli.cc b/rtgui/main-cli.cc

index 233e0495..a030ddef 100644

--- a/rtgui/main-cli.cc

+++ b/rtgui/main-cli.cc

@@ -438,8 +438,8 @@ int processLineParams ( int argc, char **argv )

case 'b':

bits = atoi (currParam.substr (2).c_str());

- if (bits != 8 && bits != 16) {

- std::cerr << "Error: specify -b8 for 8-bit or -b16 for 16-bit output." << std::endl;

+ if (bits != 8 && bits != 16 && bits != 32) {

+ std::cerr << "Error: specify -b8 for 8-bit, -b16 for 16-bit or -b32 for 32-bit output." << std::endl;

deleteProcParams (processingParams);

return -3;

}

@@ -575,7 +575,7 @@ int processLineParams ( int argc, char **argv )

std::cout << std::endl;

#endif

std::cout << "Options:" << std::endl;

- std::cout << " " << Glib::path_get_basename (argv[0]) << "[-o <output>|-O <output>] [-q] [-a] [-s|-S] [-p <one.pp3> [-p <two.pp3> ...] ] [-d] [ -j[1-100] [-js<1-3>] | [-b<8|16>] [-t[z] | [-n]] ] [-Y] [-f] -c <input>" << std::endl;

+ std::cout << " " << Glib::path_get_basename (argv[0]) << "[-o <output>|-O <output>] [-q] [-a] [-s|-S] [-p <one.pp3> [-p <two.pp3> ...] ] [-d] [ -j[1-100] [-js<1-3>] | [-b<8|16|32>] [-t[z] | [-n]] ] [-Y] [-f] -c <input>" << std::endl;

std::cout << std::endl;

std::cout << " -c <files> Specify one or more input files or directory." << std::endl;

std::cout << " When specifying directories, Rawtherapee will look for images files that comply with the" << std::endl;

@@ -606,7 +606,7 @@ int processLineParams ( int argc, char **argv )

std::cout << " Chroma halved horizontally." << std::endl;

std::cout << " 3 = Best quality: 1x1, 1x1, 1x1 (4:4:4)" << std::endl;

std::cout << " No chroma subsampling." << std::endl;

- std::cout << " -b<8|16> Specify bit depth per channel (default value: 16 for TIFF, 8 for PNG)." << std::endl;

+ std::cout << " -b<8|16|32> Specify bit depth per channel (default value: 16 for TIFF, 8 for PNG)." << std::endl;

std::cout << " Only applies to TIFF and PNG output, JPEG is always 8." << std::endl;

std::cout << " -t[z] Specify output to be TIFF." << std::endl;

std::cout << " Uncompressed by default, or deflate compression with 'z'." << std::endl;

@agriggio +1 for merging this. I ran into no issues while testing.

See also #4179 for enabling PREDICTOR_FLOATINGPOINT which significantly reduces file sizes.

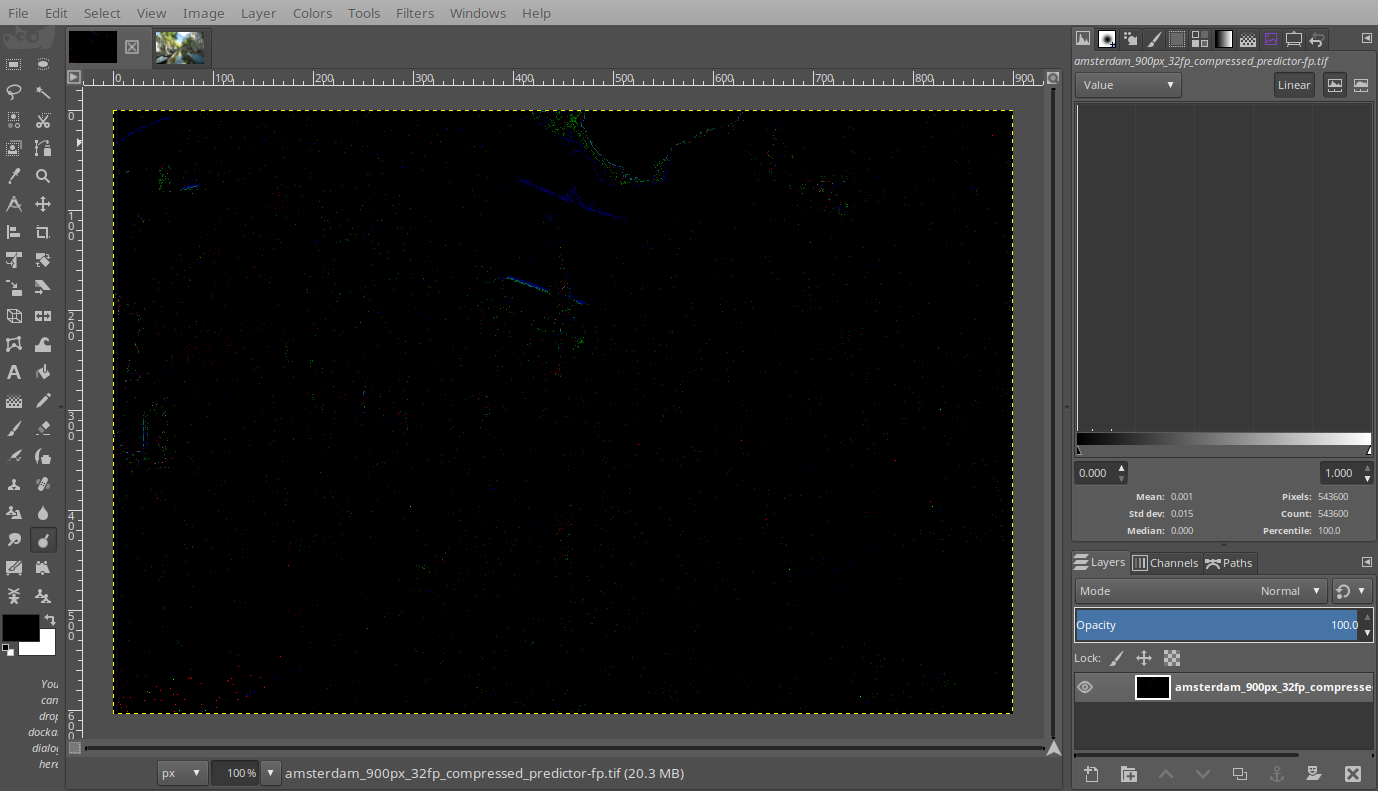

32-bit floating-point test files using PREDICTOR_FLOATINGPOINT and PREDICTOR_HORIZONTAL

https://filebin.net/l19s1pu36p5l2vjr

GIMP-2.9.6 opens the PREDICTOR_FLOATINGPOINT like this:

I can't tell whether RT is doing something wrong, or whether this predictor is not well supported. I am under the impression that it should be well supported, based on this comment:

https://github.com/OpenImageIO/oiio/blob/5b8bc225659b3b022b131cb3be71a63c3c2e6535/src/tiff.imageio/tiffoutput.cpp#L397

@Beep6581 thanks for testing! However, even if it works, this is almost useless at the moment, I'm afraid. Unfortunately RT clips to 65535 in various places during processing, which kind of defeats the purpose... Changing this is not five minutes' work, but we might attempt it when restructuring the pipeline.

@agriggio wouldn't it make sense to merge this, but to just hide it from the UI until those problems are resolved? To prevent merge conflicts and to make testing easier in the future.

yes, that seems a good idea indeed!

I tried to implement 16-bit float support for TIFF images, but although I set sampleformat to SAMPLEFORMAT_IEEEFP and the sample bit depth to 16, libtiff wrote 16-bit integer data to the output file.

At least we can read/write 32-bit float with RT. I'd concentrate on integrating OpenEXR (see #1895), which supports 16-bit float data and many more things, and is an industry standard.

So I'd merge 32b-tiff-output-cli and close this issue.

Ping @Beep6581 @heckflosse @agriggio

@Hombre57 have you seen this?

Note that the SampleFormat field does not specify the size of data samples; this is still done by the BitsPerSample field.

https://www.awaresystems.be/imaging/tiff/tifftags/sampleformat.html

https://www.awaresystems.be/imaging/tiff/tifftags/bitspersample.html
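
To make the division of labour between the two tags concrete: SampleFormat says how to interpret a sample, BitsPerSample says how wide it is, and a reader needs both to pick a decoder. A small sketch under stated assumptions: the numeric values 1 (unsigned integer) and 3 (IEEE floating point) come from the TIFF 6.0 definition of SampleFormat, while `SampleLayout` and `classify` are hypothetical names, not RawTherapee's actual enum or function.

```cpp
#include <cstdint>

// SampleFormat values as defined by TIFF 6.0.
constexpr std::uint16_t kSampleFormatUInt = 1;
constexpr std::uint16_t kSampleFormatIeeeFp = 3;

// Hypothetical classification of the sample layouts discussed in this thread.
enum class SampleLayout { Unsigned8, Unsigned16, Float16, Float24, Float32, Unsupported };

// Combine both tags: the format alone does not give the size, and vice versa.
constexpr SampleLayout classify(std::uint16_t sampleFormat, std::uint16_t bitsPerSample)
{
    if (sampleFormat == kSampleFormatUInt) {
        if (bitsPerSample == 8) { return SampleLayout::Unsigned8; }
        if (bitsPerSample == 16) { return SampleLayout::Unsigned16; }
    } else if (sampleFormat == kSampleFormatIeeeFp) {
        if (bitsPerSample == 16) { return SampleLayout::Float16; }
        if (bitsPerSample == 24) { return SampleLayout::Float24; }
        if (bitsPerSample == 32) { return SampleLayout::Float32; }
    }

    return SampleLayout::Unsupported;
}

static_assert(classify(3, 16) == SampleLayout::Float16, "half float");
static_assert(classify(1, 16) == SampleLayout::Unsigned16, "16-bit integer");
```

Note that the tags only describe the data. A writer that sets them to Float/16 still has to put genuine half-float bit patterns into the buffer it hands over.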

@Beep6581 I know, read my previous comment again. Here are example files where the sampleformat tag doesn't seem to correspond to the real data type, which seems to be 16-bit integer. Could you confirm that?

I tried to read those files in RT, but the values are only handled correctly when I force RT to read 16-bit integer. So I'm a bit lost here.

@Hombre57 I created a 16-bit floating-point image using GIMP and it matched your sample images.

Info about your images from various tools:

ExifTool:

-TIFF-IFD0:BitsPerSample=16 16 16

-TIFF-IFD0:SampleFormat=Float; Float; Float

ImageMagick:

Depth: 16-bit

quantum:format: floating-point

TiffInfo:

Bits/Sample: 16

Sample Format: IEEE floating point

I uploaded two more images to your bin.

So it looks to me like your two images are valid 16-bit floating-point images.

Except that your images look corrupt, and make RT dev 5.4-271-gfde0dccd crash.

@Beep6581 Yes, the tag says it's 16-bit float data, however libtiff doesn't know how to save this data and wrote 16-bit integer. Apply this patch, which forces images tagged as 16-bit float to be read as 16-bit integer:

diff --git a/rtengine/imageio.cc b/rtengine/imageio.cc

index d3e8150..68f39d0 100644

--- a/rtengine/imageio.cc

+++ b/rtengine/imageio.cc

@@ -706,7 +706,7 @@

}

} else if (samplesperpixel == 3 && sampleformat == SAMPLEFORMAT_IEEEFP) {

if (bitspersample==16) {

- sFormat = IIOSF_FLOAT16;

+ sFormat = IIOSF_UNSIGNED_SHORT;

return IMIO_SUCCESS;

}

if (bitspersample == 24) {

RT then shows the correct image, which proves that libtiff doesn't save what I ask it to save.

@Hombre57 I guess we need to generate the 16bit float. libtiff just writes what you give it.

If the format is the same as in 16bit float dng, then this function maybe useful:

https://github.com/jcelaya/hdrmerge/blob/0333ba1266ca87e08a1ba2c19dfd3015175ed655/src/DngFloatWriter.cpp#L403

@Hombre57 I will see what I can do.

Can someone please try this tif in latest gimp. There is still something wrong, but it should read it.

https://filebin.net/b657wkmku7fw21bo/amsterdam-6.tif?t=d21edjf8

Here's the patch which I used to produce the tiff from above:

diff --git a/rtengine/image16.cc b/rtengine/image16.cc

index 68dd4bb40..18cfd5a6b 100644

--- a/rtengine/image16.cc

+++ b/rtengine/image16.cc

@@ -60,7 +60,7 @@ Image16::~Image16 ()

{

}

-void Image16::getScanline (int row, unsigned char* buffer, int bps)

+void Image16::getScanline (int row, unsigned char* buffer, int bps, bool isFloat)

{

if (data == nullptr) {

diff --git a/rtengine/image16.h b/rtengine/image16.h

index 33e74f146..60197491c 100644

--- a/rtengine/image16.h

+++ b/rtengine/image16.h

@@ -55,7 +55,7 @@ public:

{

return 8 * sizeof(unsigned short);

}

- virtual void getScanline (int row, unsigned char* buffer, int bps);

+ virtual void getScanline (int row, unsigned char* buffer, int bps, bool isFloat = false);

virtual void setScanline (int row, unsigned char* buffer, int bps, unsigned int numSamples, float *minValue = nullptr, float *maxValue = nullptr);

// functions inherited from IImage16:

diff --git a/rtengine/image8.cc b/rtengine/image8.cc

index 69066f2dd..b6dfef324 100644

--- a/rtengine/image8.cc

+++ b/rtengine/image8.cc

@@ -37,7 +37,7 @@ Image8::~Image8 ()

{

}

-void Image8::getScanline (int row, unsigned char* buffer, int bps)

+void Image8::getScanline (int row, unsigned char* buffer, int bps, bool isFloat)

{

if (data == nullptr) {

diff --git a/rtengine/image8.h b/rtengine/image8.h

index 6444499d9..d26ff4560 100644

--- a/rtengine/image8.h

+++ b/rtengine/image8.h

@@ -50,7 +50,7 @@ public:

{

return 8 * sizeof(unsigned char);

}

- virtual void getScanline (int row, unsigned char* buffer, int bps);

+ virtual void getScanline (int row, unsigned char* buffer, int bps, bool isFloat = false);

virtual void setScanline (int row, unsigned char* buffer, int bps, unsigned int numSamples, float *minValue = nullptr, float *maxValue = nullptr);

// functions inherited from IImage*:

diff --git a/rtengine/imagefloat.cc b/rtengine/imagefloat.cc

index e79678194..aaff3816d 100644

--- a/rtengine/imagefloat.cc

+++ b/rtengine/imagefloat.cc

@@ -154,22 +154,41 @@ void Imagefloat::setScanline (int row, unsigned char* buffer, int bps, unsigned

namespace rtengine { extern void filmlike_clip(float *r, float *g, float *b); }

-void Imagefloat::getScanline (int row, unsigned char* buffer, int bps)

+void Imagefloat::getScanline (int row, unsigned char* buffer, int bps, bool isFloat)

{

if (data == nullptr) {

return;

}

- if (bps == 32) {

- int ix = 0;

- float* sbuffer = (float*) buffer;

+ if (isFloat) {

+ if (bps == 32) {

+ int ix = 0;

+ float* sbuffer = (float*) buffer;

- // agriggio -- assume the image is normalized to [0, 65535]

- for (int i = 0; i < width; i++) {

- sbuffer[ix++] = r(row, i) / 65535.f;

- sbuffer[ix++] = g(row, i) / 65535.f;

- sbuffer[ix++] = b(row, i) / 65535.f;

+ // agriggio -- assume the image is normalized to [0, 65535]

+ for (int i = 0; i < width; i++) {

+ sbuffer[ix++] = r(row, i) / 65535.f;

+ sbuffer[ix++] = g(row, i) / 65535.f;

+ sbuffer[ix++] = b(row, i) / 65535.f;

+ }

+ } else if (bps == 16) {

+ int ix = 0;

+ union {

+ float f;

+ uint32_t i;

+ } tmp;

+ uint16_t* sbuffer = (uint16_t*) buffer;

+ for (int i = 0; i < width; i++) {

+ tmp.f = r(row, i) / 65535.f;

+ sbuffer[ix++] = DNG_FloatToHalf(tmp.i);

+ tmp.f = g(row, i) / 65535.f;

+ sbuffer[ix++] = DNG_FloatToHalf(tmp.i);

+ tmp.f = b(row, i) / 65535.f;

+ sbuffer[ix++] = DNG_FloatToHalf(tmp.i);

+// sbuffer[ix++] = DNG_FloatToHalf(reinterpret_cast<uint32_t>(g(row, i) / 65535.f));

+// sbuffer[ix++] = DNG_FloatToHalf(reinterpret_cast<uint32_t>(b(row, i) / 65535.f));

+ }

}

} else {

unsigned short *sbuffer = (unsigned short *)buffer;

diff --git a/rtengine/imagefloat.h b/rtengine/imagefloat.h

index abb74bc8e..b52e4704f 100644

--- a/rtengine/imagefloat.h

+++ b/rtengine/imagefloat.h

@@ -59,7 +59,7 @@ public:

{

return 8 * sizeof(float);

}

- virtual void getScanline (int row, unsigned char* buffer, int bps);

+ virtual void getScanline (int row, unsigned char* buffer, int bps, bool isFloat = false);

virtual void setScanline (int row, unsigned char* buffer, int bps, unsigned int numSamples, float *minValue = nullptr, float *maxValue = nullptr);

// functions inherited from IImagefloat:

@@ -99,7 +99,37 @@ public:

{

delete this;

}

-

+inline uint16_t DNG_FloatToHalf(uint32_t i) {

+ int32_t sign = (i >> 16) & 0x00008000;

+ int32_t exponent = ((i >> 23) & 0x000000ff) - (127 - 15);

+ int32_t mantissa = i & 0x007fffff;

+ if (exponent <= 0) {

+ if (exponent < -10) {

+ return (uint16_t)sign;

+ }

+ mantissa = (mantissa | 0x00800000) >> (1 - exponent);

+ if (mantissa & 0x00001000)

+ mantissa += 0x00002000;

+ return (uint16_t)(sign | (mantissa >> 13));

+ } else if (exponent == 0xff - (127 - 15)) {

+ if (mantissa == 0) {

+ return (uint16_t)(sign | 0x7c00);

+ } else {

+ return (uint16_t)(sign | 0x7c00 | (mantissa >> 13));

+ }

+ }

+ if (mantissa & 0x00001000) {

+ mantissa += 0x00002000;

+ if (mantissa & 0x00800000) {

+ mantissa = 0; // overflow in significand,

+ exponent += 1; // adjust exponent

+ }

+ }

+ if (exponent > 30) {

+ return (uint16_t)(sign | 0x7c00); // infinity with the same sign as f.

+ }

+ return (uint16_t)(sign | (exponent << 10) | (mantissa >> 13));

+}

virtual void normalizeFloat(float srcMinVal, float srcMaxVal);

void normalizeFloatTo1();

void normalizeFloatTo65535();

diff --git a/rtengine/imageio.cc b/rtengine/imageio.cc

index d3e815027..8bfe81504 100644

--- a/rtengine/imageio.cc

+++ b/rtengine/imageio.cc

@@ -1468,9 +1468,9 @@ int ImageIO::saveTIFF (Glib::ustring fname, int bps, float isFloat, bool uncompr

}

for (int row = 0; row < height; row++) {

- getScanline (row, linebuffer, bps);

+ getScanline (row, linebuffer, bps, isFloat);

- /*if (bps == 16) {

+ if (bps == 16) {

if(needsReverse && !uncompressed) {

for(int i = 0; i < lineWidth; i += 2) {

char temp = linebuffer[i];

@@ -1478,7 +1478,7 @@ int ImageIO::saveTIFF (Glib::ustring fname, int bps, float isFloat, bool uncompr

linebuffer[i + 1] = temp;

}

}

- } else */ if (bps == 32) {

+ } else if (bps == 32) {

if(needsReverse && !uncompressed) {

for(int i = 0; i < lineWidth; i += 4) {

char temp = linebuffer[i];

diff --git a/rtengine/imageio.h b/rtengine/imageio.h

index 135927bbf..c8a0d71c2 100644

--- a/rtengine/imageio.h

+++ b/rtengine/imageio.h

@@ -112,7 +112,7 @@ public:

}

virtual int getBPS () = 0;

- virtual void getScanline (int row, unsigned char* buffer, int bps) {}

+ virtual void getScanline (int row, unsigned char* buffer, int bps, bool isFloat = false) {}

virtual void setScanline (int row, unsigned char* buffer, int bps, unsigned int numSamples = 3, float minValue[3] = nullptr, float maxValue[3] = nullptr) {}

virtual bool readImage (Glib::ustring &fname, FILE *fh)

As I don't have a running version of latest gimp I let @Hombre57 complete the work ;-)
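
The patch above reads the bit pattern of a float through a union. That works with common compilers, but strictly speaking reading the inactive member of a union is undefined behaviour in C++. A sketch of the usual alternative with `std::memcpy`; `floatBits` is a hypothetical helper name, and IEEE 754 single precision is assumed for `float`.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>

// Hypothetical helper: return the raw bit pattern of a 32-bit float.
// std::memcpy is the portable way to reinterpret the bytes; compilers
// typically turn it into a single register move.
std::uint32_t floatBits(float value)
{
    static_assert(sizeof(float) == sizeof(std::uint32_t), "expects a 32-bit float");

    std::uint32_t bits = 0;
    std::memcpy(&bits, &value, sizeof bits);
    return bits;
}
```

With such a helper, a call in the style of the patch would read `DNG_FloatToHalf(floatBits(r(row, i) / 65535.f))`, without the temporary union.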

I don't have GIMP-2.10, but it looks fine in GIMP-2.9.8 and in G'MIC.

Looks fine in GIMP-2.10, no warning message

@heckflosse Here is a patch that _should_ work but crashes RT miserably in Imagefloat::computeHistogramAutoWB. The image data values go beyond 1.0, something like 1.12xx, but that's not the value you'll find in the debugger. I don't understand what happens.

Patch above committed to the 32b-tiff-output-cli branch. Loading a 16-bit float image still crashes...

On IRC with @Hombre57 we agreed to work together on solving the issue of loading 16-bit float tif files.

Stay tuned...

Ingo
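
For the loading side, each 16-bit sample has to be expanded back to a 32-bit float before it is used. A minimal sketch of that decoding, assuming the file holds standard IEEE 754 half-precision values and that `float` is IEEE 754 single precision. `halfToFloat` is a hypothetical name; this is not the code from the branch and says nothing about the cause of the crash.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>

// Hypothetical decoder: expand an IEEE 754 half (binary16) bit pattern to float.
float halfToFloat(std::uint16_t h)
{
    static_assert(sizeof(float) == sizeof(std::uint32_t), "expects a 32-bit float");

    const std::uint32_t sign = (static_cast<std::uint32_t>(h) & 0x8000u) << 16;
    std::uint32_t exponent = (static_cast<std::uint32_t>(h) >> 10) & 0x1fu;
    std::uint32_t mantissa = static_cast<std::uint32_t>(h) & 0x3ffu;
    std::uint32_t bits = 0;

    if (exponent == 0) {
        if (mantissa == 0) {
            bits = sign; // signed zero
        } else {
            // Subnormal half: shift left until the implicit leading 1 appears,
            // lowering the float exponent by one for each shift.
            exponent = 127 - 15 + 1;
            while ((mantissa & 0x400u) == 0) {
                mantissa <<= 1;
                --exponent;
            }
            mantissa &= 0x3ffu; // drop the now-implicit leading 1
            bits = sign | (exponent << 23) | (mantissa << 13);
        }
    } else if (exponent == 0x1fu) {
        bits = sign | 0x7f800000u | (mantissa << 13); // infinity or NaN
    } else {
        // Normal number: rebias the exponent from 15 to 127.
        bits = sign | ((exponent + (127 - 15)) << 23) | (mantissa << 13);
    }

    float result = 0.f;
    std::memcpy(&result, &bits, sizeof result);
    return result;
}
```

Every half value is exactly representable as a float, so this direction needs no rounding, unlike the float-to-half conversion in the patch.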

Greetings!

I posted this issue a while back without reading through this thread well enough.

RawTherapee 5.4 currently has the ability to save 32bit float tiffs from the gui, but this is disabled in the commandline.

I have successfully compiled the 32b-tiff-output-cli branch, and have been using it to debayer raw files to 32bpp tiffs successfully using rawtherapee-cli.

Unfortunately, some of the newer features are missing, like AMaZE+VNG4 demosaicing and the changes introduced in the fullraw branch: https://github.com/Beep6581/RawTherapee/issues/4642

Would it be difficult to merge the upstream changes into this 32b-tiff-output-cli branch?

It's a naive question since I barely know enough to get myself into trouble compiling code let alone writing it! :P

Thanks!

It looks like merging can be done with a single click, there are no conflicts:

https://github.com/Beep6581/RawTherapee/compare/32b-tiff-output-cli

@Beep6581 @jedypod

Thanks to @heckflosse for fixing a silly bug from me :roll_eyes: . The branch has been merged into dev, this issue is now closed (at last).

Tested 5.4-778-g61e033ae

- RT tells me float output was detected when I selected integer output:

../rawtherapee/rawtherapee-cli -o /tmp/cli_16.tif -S -t -b16 -c ~/photos/test_images/tracteur.dng

Float output detected (16 bits)!

- The outputs of -b16 and -b16f are identical in size, metadata and ImageMagick's identify -verbose

Additional info:

$ et /tmp/cli_16.tif | egrep "SampleFormat|BitsPerSample"

-TIFF-IFD0:BitsPerSample=16 16 16

-TIFF-IFD0:SampleFormat=Unsigned; Unsigned; Unsigned

$ et /tmp/cli_16f.tif | egrep "SampleFormat|BitsPerSample"

-TIFF-IFD0:BitsPerSample=16 16 16

-TIFF-IFD0:SampleFormat=Unsigned; Unsigned; Unsigned

$ et /tmp/cli_32.tif | egrep "SampleFormat|BitsPerSample"

-TIFF-IFD0:BitsPerSample=32 32 32

-TIFF-IFD0:SampleFormat=Float; Float; Float

$ identify -verbose /tmp/cli_16.tif | grep -A4 "Depth:"

Depth: 16-bit

Channel depth:

red: 16-bit

green: 16-bit

blue: 16-bit

$ identify -verbose /tmp/cli_16f.tif | grep -A4 "Depth:"

Depth: 16-bit

Channel depth:

red: 16-bit

green: 16-bit

blue: 16-bit

$ identify -verbose /tmp/cli_32.tif | grep -A4 "Depth:"

Depth: 32/16-bit

Channel depth:

red: 16-bit

green: 16-bit

blue: 16-bit

I don't know what 32/16-bit means, perhaps it could be related to my ImageMagick being compiled as "Q16" and not "Q32".

In ImageMagick identify -verbose, "32/16-bit" means the image data is 32 bits/channel/pixel, but only the top 16 are significant.

A floating-point image should have the property "quantum:format: floating-point".

@Beep6581 @snibgo I've committed patch 5c10e19 that should solve the problem.

@Hombre57 fix mostly confirmed, there is one issue left.

The --help info mentions -b<8|16|16f|32>

I naturally tried -b32 and -b32f to see if I can break it, and found that -b32f saved an unsigned integer image.

$ ../rawtherapee/rawtherapee-cli -o /tmp/cli_32.tif -S -t -b32 -c ~/photos/test_images/tracteur.dng

RawTherapee, version 5.4-782-g5c10e195, command line.

Output is 32-bit, floating point

Processing: /home/morgan/photos/test_images/tracteur.dng

Merging sidecar procparams

$ ../rawtherapee/rawtherapee-cli -o /tmp/cli_32f.tif -S -t -b32f -c ~/photos/test_images/tracteur.dng

RawTherapee, version 5.4-782-g5c10e195, command line.

Output is 32-bit, integer

Processing: /home/morgan/photos/test_images/tracteur.dng

Merging sidecar procparams.

$ et /tmp/cli_32.tif | egrep "SampleFormat|BitsPerSample"

-TIFF-IFD0:BitsPerSample=32 32 32

-TIFF-IFD0:SampleFormat=Float; Float; Float

$ et /tmp/cli_32f.tif | egrep "SampleFormat|BitsPerSample"

-TIFF-IFD0:BitsPerSample=32 32 32

-TIFF-IFD0:SampleFormat=Unsigned; Unsigned; Unsigned

RT cannot read the unsigned integer image.

This patch fixes it, -b32f is now an alias for -b32:

diff --git a/rtgui/main-cli.cc b/rtgui/main-cli.cc

index df8e9937..347161cd 100644

--- a/rtgui/main-cli.cc

+++ b/rtgui/main-cli.cc

@@ -391,14 +391,15 @@ int processLineParams ( int argc, char **argv )

case 'b':

bits = atoi (currParam.substr (2).c_str());

- if (currParam.length() >=3 && currParam.at(2) == '8') {

+

+ if (currParam.length() >= 3 && currParam.at(2) == '8') { // -b8

bits = 8;

- } else if (currParam.length() >= 4 && currParam.at(2) == '1' && currParam.at(3) == '6') {

+ } else if (currParam.length() >= 4 && currParam.length() <= 5 && currParam.at(2) == '1' && currParam.at(3) == '6') { // -b16, -b16f

bits = 16;

if (currParam.length() == 5 && currParam.at(4) == 'f') {

isFloat = true;

}

- } else if (currParam.length() == 4 && currParam.at(2) == '3' && currParam.at(3) == '2') {

+ } else if (currParam.length() >= 4 && currParam.length() <= 5 && currParam.at(2) == '3' && currParam.at(3) == '2') { // -b32 == -b32f

bits = 32;

isFloat = true;

}

@@ -409,7 +410,7 @@ int processLineParams ( int argc, char **argv )

return -3;

}

- std::cout << "Output is " << bits << "-bit, " << (isFloat ? "floating point" : "integer") << std::endl;

+ std::cout << "Output is " << bits << "-bit " << (isFloat ? "floating-point" : "integer") << "." << std::endl;

break;
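
The character-by-character checks in the patch above can also be expressed as a comparison against the complete option text. The following is a sketch only: `BitDepthOption` and `parseBitDepth` are hypothetical names, and unlike the patch it rejects trailing characters after "8" (the patch accepts any parameter whose third character is '8').

```cpp
#include <cassert>
#include <string>

// Hypothetical result type for the text following "-b".
struct BitDepthOption {
    int bits = 0;
    bool isFloat = false;
    bool valid = false;
};

// Hypothetical parser for "8", "16", "16f", "32" and "32f".
// As in the patch, "32" and "32f" both mean 32-bit floating point.
BitDepthOption parseBitDepth(const std::string& arg)
{
    BitDepthOption result;

    if (arg == "8") {
        result.bits = 8;
    } else if (arg == "16") {
        result.bits = 16;
    } else if (arg == "16f") {
        result.bits = 16;
        result.isFloat = true;
    } else if (arg == "32" || arg == "32f") {
        result.bits = 32;
        result.isFloat = true;
    } else {
        return result; // valid stays false
    }

    result.valid = true;
    return result;
}
```

In the CLI this would be called with `currParam.substr (2)`, and an invalid result would take the existing error path.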

@Beep6581 Thanks for the fix, patch committed. Feel free to close.