Px4-autopilot: [FeatureRequest] Support VISLAM with PX4 Stack on Snapdragon Platform

Problem Statement

There is a VISLAM ROS example released at https://github.com/ATLFlight/ros-examples for Snapdragon Flight™. The example works standalone but does not work when the PX4 stack is running, because both "Snapdragon::ImuManager" and the PX4 stack try to use the same MPU9x50.

This request is to support VISLAM alongside the PX4 stack.

Proposed Solution/Steps:

The solution is to stop using Snapdragon::ImuManager directly and instead get the IMU data from PX4's ORB topics. Here is a summary of the changes:

- Create a module in PX4 for VISLAM

- The module must use the Snapdragon::CameraManager (https://github.com/ATLFlight/ros-examples/tree/master/src/camera) class to get the camera frames

- Create a PX4 IMUOrbWrapper class/implementation to subscribe to the "accel" and "gyro" ORB topics.

- For each ORB Topic the timestamp needs to be adjusted as follows:

// get the adsp offset.

int64_t dsptime;

static const char qdspTimerTickPath[] = "/sys/kernel/boot_adsp/qdsp_qtimer";

char qdspTicksStr[20] = "";

static const double clockFreq = 1 / 19.2;

FILE * qdspClockfp = fopen( qdspTimerTickPath, "r" );

fread( qdspTicksStr, 16, 1, qdspClockfp );

uint64_t qdspTicks = strtoull( qdspTicksStr, 0, 16 );

fclose( qdspClockfp );

dsptime = (int64_t)( qdspTicks*clockFreq*1e3 );

//get the apps proc timestamp;

int64_t appstimeInNs;

struct timespec t;

switch( clockType )

{

case SENSOR_CLOCK_SYNC_TYPE_MONOTONIC:

clock_gettime( CLOCK_MONOTONIC, &t );

break;

default:

clock_gettime( CLOCK_REALTIME, &t );

break;

}

uint64_t timeNanoSecMonotonic = (uint64_t)(t.tv_sec) * 1000000000ULL + t.tv_nsec;

appstimeInNs = (int64_t)timeNanoSecMonotonic;

int64_t offsetInNs = appstimeInNs - dsptime;

- Add the computed offset to the timestamp of each ORB topic sample

- This is needed because the timestamps of the ORB topics are based on the aDSP time.
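For reference, the offset computation above can be written as a self-contained sketch with the error handling the pseudocode leaves out. The helper names (`ticksToNs`, `readAdspTimeNs`, `appsTimeNs`, `computeOffsetNs`) are hypothetical; the sysfs path is passed in by the caller, and the 19.2 MHz tick rate is taken from the `clockFreq` constant in the pseudocode.

```cpp
#include <cstdint>
#include <cstdio>
#include <cstdlib>
#include <time.h>

// Convert aDSP qtimer ticks to nanoseconds, assuming a 19.2 MHz tick rate
// (1 tick = 1e9 / 19.2e6 ns = 625/12 ns), as implied by the pseudocode.
int64_t ticksToNs(uint64_t ticks) {
    return static_cast<int64_t>(ticks * 625ULL / 12ULL);
}

// Read the aDSP tick counter (a hex string) from the given sysfs path.
// Returns false if the file cannot be opened or is empty.
bool readAdspTimeNs(const char* path, int64_t* outNs) {
    FILE* fp = std::fopen(path, "r");
    if (fp == nullptr) {
        return false;
    }
    char buf[20] = "";  // zero-initialized, so the string stays terminated
    const size_t n = std::fread(buf, 1, 16, fp);
    std::fclose(fp);
    if (n == 0) {
        return false;
    }
    *outNs = ticksToNs(std::strtoull(buf, nullptr, 16));
    return true;
}

// Current apps-processor time in nanoseconds for the chosen clock
// (CLOCK_MONOTONIC or CLOCK_REALTIME).
int64_t appsTimeNs(clockid_t clock) {
    timespec t{};
    clock_gettime(clock, &t);
    return static_cast<int64_t>(t.tv_sec) * 1000000000LL + t.tv_nsec;
}

// Offset to add to an aDSP-based timestamp to express it in apps time.
bool computeOffsetNs(const char* path, clockid_t clock, int64_t* offsetNs) {
    int64_t dspNs = 0;
    if (!readAdspTimeNs(path, &dspNs)) {
        return false;
    }
    *offsetNs = appsTimeNs(clock) - dspNs;
    return true;
}
```

A caller would pass "/sys/kernel/boot_adsp/qdsp_qtimer" as `path` and add the resulting offset to each ORB sample timestamp. Integer arithmetic is used for the tick conversion to avoid the rounding that the floating-point `clockFreq` multiplication introduces.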

Modify the VISLAM Manager code to add an API to get the gyro and accel data into the VISLAM engine.

- The implementation should call the VISLAM's API mvVISLAM_AddAccel and mvVISLAM_AddGyro

An example implementation is in the "Snapdragon::VislamManager::Imu_IEventListener_ProcessSamples" located in the file: https://github.com/ATLFlight/ros-examples/blob/master/src/vislam/SnapdragonVislamManager.cpp

- Create a CMakeLists.txt file for the new module and add the dependencies on mv and libcamera. An example can be found at https://github.com/ATLFlight/ros-examples/blob/master/CMakeLists.txt

- Compile the PX4 code

- Update the start up script to start the px4 module.

All 158 comments

Sounds interesting. Have you thought about using the mavros bridge (https://github.com/mavlink/mavros) instead of accessing the IMU data directly?

I have heard of this, but have not actively used it. I am wondering whether latency may be an issue here. Also, if VISLAM's pose information needs to be injected into the PX4 stack, I think it may be easier to have VISLAM as a module within PX4.

Latency shouldn't be a problem as it's all on the same board.

We have been doing VIO for 1.5 years + : https://youtu.be/AdPpIjlAdKg

For injecting the data back into PX4, I have an API proposed here : https://github.com/PX4/Firmware/pull/6074

@rkintada this is great; I am attempting this now. Have you been able to complete and test this?

@rkintada if the intention is to allow the VISLAM Manager to have access to PX4's IMU ORB topics then why does the PX4 module need access to the camera frames?

> The module must use the Snapdragon::CameraManager(https://github.com/ATLFlight/ros-examples/tree/master/src/camera) class to get the camera Frames

@r1b4z01d Bryan It is great that you are going to look into this. Please go ahead and try it out. I did not get a chance to look into this.

The VISLAM manager needs both the camera frames (optic flow) and the IMU data. Hence the camera dependency comes from the VISLAM Manager and not directly from PX4.

For this to work, you will need the Machine Vision SDK for Snapdragon Flight from here: https://developer.qualcomm.com/hardware/snapdragon-flight/tools

Thanks

Rama

I am still a little confused by what your end goal is. Are you proposing to incorporate the entire ros-example into the PX4 module or just provide the PX4 IMU data to the ros example? If the latter then how do you propose to send PX4 the computed pose?

I have incorporated MAVROS into the ros-example like @mhkabir mentioned, successfully populating vision_position_estimate. I need to decide if I should build the module or use the IMU data from MAVROS. I am currently leaning towards MAVROS.

Hi,

The original goal is to get the VISLAM ros-example to work with PX4. Feeding the VISLAM pose back into PX4 is optional.

My original comment was based on the fact you are not using MAVROS. If you are using MAVROS, the changes to MAVROS do not need any dependency on the camera manager.

With limited knowledge of MAVROS, this is my understanding of your approach:

- Enhance MAVROS to publish IMU data (the timestamp correction still needs to be done).

- Update the ros-example(VISLAM) to subscribe to the ROS topics that publish IMU data from MAVROS.

- Update the VISLAM Manager code to do the following:

* Add an API to add the Accel/Gyro Data

* Remove the dependency of IMU Manager in the same code.

At run-time, both the nodes MAVROS and VISLAM ROS example need to run.

Thanks

Hi,

I just came to know that PX4 already does the timestamp synchronization between the aDSP and the apps processor. So the above pseudocode for the time adjustment is not needed.

thanks

@rkintada Yep :)

I'm all for pushing this ahead with Mavros now. We can just disable the Mavlink sync since the time bases will already be the same.

@r1b4z01d Can you please push your changes to the VI-slam example somewhere and I'll get it streamlined and working with the new API, since this has been merged : https://github.com/PX4/Firmware/pull/6074

@rkintada Where did you find that PX4 performs timestamp synchronization between aDSP and the apps processor?

@Seanmatthews

The synchronization is done when initializing muorb. It first computes the offset and then invokes a FastRPC call to set the offset on the aDSP. Here are the relevant source files:

- Offset computation: https://github.com/PX4/Firmware/blob/master/src/modules/muorb/krait/px4muorb_KraitRpcWrapper.cpp - line 85

- Call to the aDSP: https://github.com/PX4/Firmware/blob/master/src/modules/muorb/krait/px4muorb_KraitRpcWrapper.cpp - line 243

- Setting the offset on the aDSP: https://github.com/PX4/Firmware/blob/master/src/modules/muorb/adsp/px4muorb.cpp - line 76

@rkintada

In the approach you outlined above (frames from camera manager, IMU from MAVROS), I'm finding that there's still a timestamp synchronization problem between the IMU and camera frame timestamps. The discrepancy between the two prevents VISLAM from upgrading pose quality beyond "initializing". The timestamp on the IMU data from MAVROS reflects realtime (date +%s%N), while the camera timestamp uses monotonic time. Is this just a matter of manually synchronizing? Or should either MAVROS or VISLAM use a different clock?

@Seanmatthews The DSP time is synchronized to Monotonic clock( as seen in https://github.com/PX4/Firmware/blob/master/src/modules/muorb/krait/px4muorb_KraitRpcWrapper.cpp line 110 ). Do you know if the MavRos is overriding the IMU timestamp with ros::now()? This may explain why it is getting the real time clock. Also the camera timestamps are based off Monotonic clock and not realtime clock.

No it doesn't override anything.

Mavros syncs the IMU time to the ROS time base, which is the real-time clock.

I will add a parameter to mavros to disable the timesync and pass the timestamp straight through. Meanwhile, you can edit the imu_pub plugin and remove the sync_stamp call for the IMU message.

@mhkabir thanks for the clarification.

@Seanmatthews can you please share a sample log of the raw timestamps for the IMU and the camera?

Thanks

@rkintada is it feasible to timestamp the camera data using the realtime clock? That way, we'd be maintaining ROS standards.

@mhkabir No. That is not an easy change, and it also has a ripple effect, as other applications using the camera API rely on the monotonic-based timestamp.

Ok, understood. My proposal, in that case, would be to simply add the time offset to the camera image timestamp (so that its time base matches the IMU time) before pushing it into VISLAM. That way we have valid ROS timestamps for the IMU, and maintain standards across the board. VISLAM will also return the estimate in the ROS time base in that case, and PX4 will sync it back to monotonic on receiving.
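As a rough illustration of the offset idea in the comment above (this is not code from MAVROS or the ros-examples repository), a camera stamp on the monotonic clock could be shifted onto the realtime base as follows. The helper names are hypothetical, and sampling the two clocks back to back leaves a small error on the order of the time between the two calls.

```cpp
#include <cstdint>
#include <time.h>

// Convert a timespec to nanoseconds.
int64_t toNs(const timespec& t) {
    return static_cast<int64_t>(t.tv_sec) * 1000000000LL + t.tv_nsec;
}

// Sample both clocks and return (CLOCK_REALTIME - CLOCK_MONOTONIC) in ns.
int64_t realtimeMinusMonotonicNs() {
    timespec mono{};
    timespec real{};
    clock_gettime(CLOCK_MONOTONIC, &mono);
    clock_gettime(CLOCK_REALTIME, &real);
    return toNs(real) - toNs(mono);
}

// Shift a monotonic-clock timestamp onto the realtime time base.
int64_t monotonicToRealtimeNs(int64_t monoStampNs, int64_t offsetNs) {
    return monoStampNs + offsetNs;
}
```

In this sketch the offset would be sampled once (or refreshed occasionally) and applied to every camera frame stamp before the frame is handed to VISLAM, leaving the camera API itself untouched.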

@Seanmatthews Here is what you need for the onboard timesync (the mode timesync_mode::ONBOARD will calculate the offset between CLOCK_REALTIME for ROS and CLOCK_MONOTONIC for PX4 and timestamp data) :

https://github.com/mavlink/mavros/tree/uasys-upstreaming

@rkintada As per @mhkabir suggestion above, I removed the timestamp synchronization from the MAVROS imu plugin. Doing this results in camera/imu timestamps that are typically within 0.5ms of each other.

Big picture, VISLAM does output a pose, but the poseQuality goes through blips of INITIALIZATION and FAILED about every second. Also, the estimated pose varies wildly when retrieving IMU data through MAVROS (possibly due to the aforementioned blip). For equal sampling rates, the speed of IMU data through MAVROS and VISLAM appears to be somewhat equivalent to the speed of IMU data reception from imu_app, though the data does lag through ROS at higher sample rates (at 200Hz, for example, VISLAM receives/processes at 180-190Hz). Also, there's no difference in the number of samples received per callback between MAVROS and imu_app-- each sends one at a time.

Beyond guesses, I don't have a clear picture of what's wrong, but I have these questions:

- What properties of the IMU data, or its transmission, would cause mvVISLAM to transition from an initialized state to a failed (or initializing) state?

- Does MAVROS filter/process the IMU data from PX4 in any way?

- VISLAM uses IMU values for adding gyro and accelerometer data to mvVISLAM. This seems to work for running VISLAM in the previously prescribed manner. Is it essential to add gyro and accelerometer data from elsewhere when running with MAVROS (as @rkintada suggested in his latter design proposal)?

Pretty sure that IMU frame and/or units are wrong. No idea what vislam expects (NED?), but mavros follows the ROS convention of ENU.

Try swapping X, Y and negating Z in the mavros gyro and accel data to get it into NED.

Then there is the matter of extrinsics and intrinsics calibration for your camera-IMU system. A quick skim of the code base didn't reveal vislam taking in that information anywhere. I don't see how it could work without an accurate calib.

There are too many variables in the VIO chain to predict what exactly goes wrong without actually using it or having more information on the vislam approach. I'll bring out my Snapdragon Flight later and see if I can get something going.

I wondered about that as well-- there's no mention of calibration at ATLFlight/ros-examples. Though, the VISLAM example does seem to work on its own. @rkintada can you fill us in?

I'll post links to a couple bag files of IMU + pose data from both methods to this comment shortly.

The bag file with /vislam/imu is using imu_app for IMU data, while /mavros/imu/data uses MAVROS:

I suspect that the default intrinsics and extrinsics are just close enough for the filter to converge.

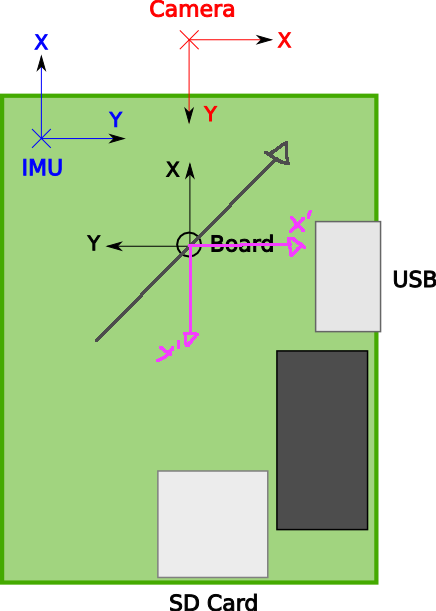

@mhkabir @Seanmatthews Sorry I could not get back to you sooner. Yes, the IMU to camera frame spatial transformation (extrinsic calibration) needs to be provided to mvVISLAM's initialize API (the tbc and ombc parameters). I will be updating the documentation with more details soon (with some diagrams). This may be the primary cause, as the ROS IMU frame is different from the IMU frame I am inputting into the mvVISLAM example code.

Thanks

Rama.

@rkintada What about the intrinsics?

Updating the transformation yields more stable pose values. However, the FAIL/INIT blip has become more frequent. On qualcomm's advice, I tried raising the frequency with which PX4 samples the IMU to 500Hz. Though MAVROS seems to publish IMU data up to only 250Hz, despite calling /mavros/set_sample_rate. I don't believe this causes the blips, since VISLAM works fine (though less accurately) at 100Hz.

You need to increase the rate on the PX4 side using the mavlink stream command to set rate of HIGHRES_IMU message.

Also, make sure that you're using the /mavros/imu/data_raw topic, not the filtered one.

I had already set HIGHRES_IMU and ATTITUDE to rates of 500 (the IMU data from MAVROS seems to also be limited by the ATTITUDE rate). Switching from /mavros/imu/data to /mavros/imu/data_raw prevents mvVISLAM from ever breaching its initialization phase.

@rkintada Do you have some insight into mvVISLAM's poseQuality, or what about the IMU data would prevent mvVISLAM from initializing?

@mhkabir The camera intrinsics are set via the mvCameraConfiguration structure. Here is where it is used in the example code:

https://github.com/ATLFlight/ros-examples/blob/master/src/nodes/SnapdragonRosNodeVislam.cpp

Lines 95 - 109. You can modify them if they need to be updated.

@Seanmatthews Thank you very much for trying the integration with PX4. Here are some of my comments:

- The standalone VISLAM example uses the following configuration for the MPU9x50:

- gyro_lpf = MPU9X50_GYRO_LPF_184HZ,

- acc_lpf = MPU9X50_ACC_LPF_184HZ,

- gyro_fsr = MPU9X50_GYRO_FSR_2000DPS,

- acc_fsr = MPU9X50_ACC_FSR_16G,

- Can you please make sure that the PX4 MPU driver uses the same settings? VISLAM is tuned for the above configuration.

- VISLAM needs to see at least 10 feature points to initialize correctly. Does the surface that the camera is pointing at have enough features?

- Regarding the RAW_imu stream: is the IMU frame the same as the one you were using before (i.e. the HIGHRES_IMU stream)? Otherwise the spatial transformation may need to be re-adjusted. Also make sure that the timestamps are based on the monotonic clock and not on ROS time.

- Regarding the test setup, can you describe how you are testing when you see the FAIL/INITIALIZE blips? Also, is this the transition you see: INITIALIZING->HIGH_QUALITY->FAIL?

- Regarding test scenario, can you try in two phase tests.

- Test1: Keep the board stationary and see if the VISLAM initializes properly( ie it should give pose with High Quality after initializing ). This should not go into the BLIP you are mentioning.

- Test2: Once test 1 is successful, can you please lift the board and see if the pose is generated correctly and with no blips( I assume this is what you are trying ).

- If blip happens, then it could be that the spatial transformation is not correct.

@rkintada @mhkabir

- Thanks for those values. I made the changes with PX4.

- I've been testing the camera on a flat surface, on top of a newspaper.

- I had already made adjustments to both the frame and timestamp. Both did not match up between imu_app and PX4/MAVROS. ROS uses ENU where imu_app/VISLAM uses local NED. And as you stated, VISLAM uses monotonic time.

- Yes-- INIT(x3-4)->HIGH_QUALITY(*)->FAIL(x1).

- I've only tested on a flat surface, since it never stayed initialized.

The good news is that I solved the blip issue. It was a matter of one IMU source accounting for g and the other not. When using IMU data from MAVROS with VISLAM, don't multiply by kNormG here: https://github.com/ATLFlight/ros-examples/blob/master/src/vislam/SnapdragonVislamManager.cpp#L202
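The units mismatch described above can be made explicit in code. This is a minimal sketch, assuming the standalone imu_app path reports acceleration in units of g (hence the `kNormG` multiplication in the example) while MAVROS already publishes m/s², as the ROS `sensor_msgs/Imu` message specifies. The `AccelUnits` enum and `toMetersPerSecSq` helper are hypothetical, and the constant is standard gravity, assumed here to match the example's `kNormG`.

```cpp
#include <array>

// Units in which an IMU source reports linear acceleration.
enum class AccelUnits { G, MetersPerSecSq };

// Standard gravity in m/s^2 (assumed to correspond to kNormG in the example).
constexpr double kStandardGravity = 9.80665;

// Scale an acceleration sample to m/s^2 exactly once, based on its source units.
std::array<double, 3> toMetersPerSecSq(const std::array<double, 3>& accel,
                                       AccelUnits units) {
    const double scale = (units == AccelUnits::G) ? kStandardGravity : 1.0;
    return {accel[0] * scale, accel[1] * scale, accel[2] * scale};
}
```

Tagging each source with its units keeps the scaling in one place, so switching from imu_app to MAVROS does not silently apply the factor to data that is already in m/s².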

@Seanmatthews Thanks for the inputs. Just so that I understand: with the blip issue solved, are you still seeing the VISLAM pose go from INIT-->HIGH_QUALITY-->FAIL?

Thanks

Rama

Essentially yes. It would cycle through INIT for a couple seconds at the beginning, until it reached HIGH_QUALITY. Then, about every 1s, it would hit a FAIL followed by ~4 cycles of INIT, then back to HIGH_QUALITY for another ~1s. The IMU data is coming from MAVROS at 250Hz. @rkintada recommends 500Hz, but I'm currently having trouble getting MAVROS to go any higher than 250Hz.

@Seanmatthews One more question, when you do the bench testing( with mavROS ), is there a fan or the props spinning? Also can you share the Imu logs for the test you ran?

mvVISLAM has an mvSRW_Writer class (mvSRW.h). Can you use the APIs of the mvSRW_Writer class to store the accel, gyro, camera frames and camera configurations? Once you have that, we can do some offline analysis internally. Make sure to call mvSRW_Writer_Initialize() first.

@rkintada I have only the Snapdragon Flight board by itself. To reiterate from above, I no longer have the blip issue after I fixed the linear acceleration values. Do you still want logs? If so, do you want them from the code pre or post-fix?

@Seanmatthews With the fix you have, what is the current issue you are seeing? Based on your earlier comment, I understood that you are still seeing the INIT->HighQuality( for 1s ) --> Fail after your fix to the linear acceleration values. If this is still correct, I would like to get the logs to analyze it.

The 250Hz thing isn't a mavros limit. PX4 samples the sensors at 1kHz, but the sensor_combined uORB topic (from which the HIGHRES_IMU message is generated) is published at 250 Hz.

@mhkabir Is that something that can be changed with a setting? I have no problem getting 500Hz using Qualcomm's imu_app.

@rkintada The remaining issue is that after flashing with image 3.1.2 and installing the system according to the PX4 wiki (with v1.6.0rc2 from PX4/Firmware), the ESCs no longer arm (as they did with ATLFlight/Firmware). Do I need to change some ESC settings or install special Qualcomm drivers? Do I need to also install the fc_addon drivers, as prescribed by ATLFlight/ATLFlightDocs?

@Seanmatthews Once the 3.1.2 image is installed, there is no need to load the add-on drivers as they are already part of the image. Regarding arming the ESCs, I am not aware of any ESC changes. However, we are seeing a different issue where some IMU-related messages appear on mini-dm (I guess this has already been reported on the QDN forum). I suspect this may be causing the issue. We are looking into this currently and will keep you posted.

Thanks

Rama.

@rkintada Just to be clear, you are (or were) able to load the 3.1.2 image from Intrinsyc, install the firmware from this repository, and then operate the Snapdragon Flight?

@mhkabir Can you spare any info regarding your last comment? Is there a PX4 setting that can be changed to send IMU data at 500Hz?

@Seanmatthews I had the same problem as you with the ESC board not arming. Maybe you have the same problem, namely an incorrect tty device mapping. I posted my findings on the qualcomm Forums (https://developer.qualcomm.com/comment/12329#comment-12329) and James Wilson already merged it to both, the px4 dev page as well as the ATLFlight docs.

Any news on making the ros-examples work with the px4 flight stack? I was about to tackle the same problem when I stumbled upon this issue here.

@potaito My arming problem was happening because I was following the dev.px4.io instructions for building PX4 for the Snapdragon Flight. The instructions have an error-- the build command should be make eagle_legacy_default.

You can find a version of ros-examples, which I adapted to work with PX4, here.

The instructions are not incorrect. The legacy_default target is only for building the legacy binary drivers (not open-source), such as that for the Snapdragon Flight ESC. In which case, you should be following the ATLFlight instructions.

@mhkabir Correct or not, the instructions listed specifically for the Snapdragon Flight on dev.px4.io do not produce a flyable unit.

EDIT: ...with the Snapdragon Flight dev kit.

They do, if you use the PWM ESCs, which is the fully open source build we support.

@mhkabir

> Here is what you need for the onboard timesync (the mode timesync_mode::ONBOARD will calculate the offset between CLOCK_REALTIME for ROS and CLOCK_MONOTONIC for PX4 and timestamp data) :
>
> https://github.com/mavlink/mavros/tree/uasys-upstreaming

The link is no longer leading anywhere... Can you help me track down your changes? It's probably not compatible with ros-indigo anyway, right?

It is already in mavros master. It will not be backported to indigo however.

Ah okay, thank you! Could have figured that out...

@potaito Follow source build instructions here to get the upstream timestamp passthrough functionality: https://github.com/mavlink/mavros/tree/master/mavros#source-installation

Note that step 3 should also include --rosdistro kinetic flag.

@Seanmatthews does VI-SLAM work for you at the moment?

@mhkabir Yes it does, to a certain degree. I have it integrated with MAVROS, but the algo itself seems to fail on takeoff due to not being able to hold onto points during shaky takeoffs.

@Seanmatthews ok, interesting. Would you mind doing a small writeup of what you had to do to get it running, including mavros config and camera-IMU calibration, etc ? We would like it on dev.px4.io!

So I thought I made it work, but the pose drifts away rather quickly, even when the quad is not moving. Here's what I did (similar to @Seanmatthews):

- Getting IMU messages from mavros instead of the board directly by replacing the IMU API calls in SnapdragonVislamManager with a ROS `sensor_msgs::Imu` callback.

- In the mavros sources (I'm on the indigo devel branch), I overwrite the IMU messages' synced time stamp with the board clock stamp `imu_hr.time_usec`. I logged the IMU and image timestamps and they seem fine:

- Mavros connects to a highres IMU stream delivering data at 250hz

- Before feeding the mavros IMU messages into vislam, X and Y are swapped and Z is negated, as suggested by @mhkabir.

```
lin_acc[0] = msg->linear_acceleration.y;
lin_acc[1] = msg->linear_acceleration.x;
lin_acc[2] = -msg->linear_acceleration.z;

ang_vel[0] = msg->angular_velocity.y;
ang_vel[1] = msg->angular_velocity.x;
ang_vel[2] = -msg->angular_velocity.z;

// ...

mvVISLAM_AddAccel(vislam_ptr_, current_timestamp_ns, lin_acc[0], lin_acc[1],
                  lin_acc[2]);
mvVISLAM_AddGyro(vislam_ptr_, current_timestamp_ns, ang_vel[0], ang_vel[1],
                 ang_vel[2]);
```

And here is what happens:

- I place the quad level on the edge of some object with a flat surface such that there are at least 10cm between snapdragon's optical flow camera and my table.

- Running the px4 stack + VISLAM initially seems fine. VISLAM initializes and switches to `MV_TRACKING_STATE_HIGH_QUALITY` right away.

- When moving the snapdragon around slowly, the pose starts drifting away and keeps doing it even after putting the quad back down on the surface. In this case `/vislam/odometry/twist` is non-zero even when the quad is resting on the table.

- Tracking state remains in high quality and never switches to failed or initializing throughout the entire time.

Ok, so apparently the IMU messages need to have Y and Z inverted before being passed to VISLAM, not X and Y swapped and Z inverted as I described above. Correct version:

lin_acc[0] = msg->linear_acceleration.x;

lin_acc[1] = -msg->linear_acceleration.y;

lin_acc[2] = -msg->linear_acceleration.z;

ang_vel[0] = msg->angular_velocity.x;

ang_vel[1] = -msg->angular_velocity.y;

ang_vel[2] = -msg->angular_velocity.z;

// ...

mvVISLAM_AddAccel(vislam_ptr_, current_timestamp_ns, lin_acc[0], lin_acc[1],

lin_acc[2]);

mvVISLAM_AddGyro(vislam_ptr_, current_timestamp_ns, ang_vel[0], ang_vel[1],

ang_vel[2]);

EDIT: There is a smarter way. The camera-imu transformation can be passed to Vislam, which makes the inversion/rotation I mentioned above unnecessary. Here are the corresponding changes.
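One possible reading of the two mappings tried in this thread, offered as an assumption rather than something confirmed here: swapping X and Y and negating Z is the conversion between world-fixed ENU and NED frames, whereas keeping X and negating Y and Z is a 180° rotation about X, which converts between a forward-left-up body frame (the ROS REP 103 convention) and a forward-right-down body frame. IMU samples are body-frame quantities, which would explain why the second mapping behaved correctly. The sketch below uses hypothetical helper names.

```cpp
#include <array>

using Vec3 = std::array<double, 3>;

// Body frame: forward-left-up (ROS convention) -> forward-right-down.
// This is a 180 degree rotation about the X axis and is its own inverse.
Vec3 fluToFrd(const Vec3& v) {
    return {v[0], -v[1], -v[2]};
}

// World frame: east-north-up -> north-east-down.
// Swaps X and Y and negates Z; also its own inverse.
Vec3 enuToNed(const Vec3& v) {
    return {v[1], v[0], -v[2]};
}
```

Applying `fluToFrd` to both the accelerometer and gyro vectors reproduces the corrected mapping above. If the camera-IMU transformation is passed to VISLAM instead, neither helper is needed.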

Is it better now? I don't see how that conversion makes sense though.

Yes, sorry I was not clear on that. The drifting occurred because of my wrong IMU transformation. Now the tracking seems accurate and reasonable. I did not actually fly with this odometry yet though.

Hi All,

It is excellent to know that the basic integration is working.

The example expects the IMU data to be in the raw IMU frame coordinates (this is configured in the tbc and ombc parameters of the VISLAM example). Here is the documentation:

https://github.com/ATLFlight/ros-examples#imu-to-camera-transformation

If you are not changing the VISLAM parameters then you will need this transformation.

If the orientation is different, the VISLAM parameters can also be updated instead.

Thanks

Rama.

@mhkabir

I would like to understand the timesync_mode::ONBOARD you added to mavros master. In the code I can see that an offset between CLOCK_REALTIME and CLOCK_MONOTONIC is computed. The IMU messages from mavros are then sent with the realtime time stamp. Shouldn't there be a way to obtain the offset from mavros too now? Assuming I want to adapt the image timestamp before passing it to VISLAM, I would want to add the offset so that its stamp is also with respect to CLOCK_REALTIME. Correct?

If anyone is interested, I pushed all my changes in the VISLAM example to my fork. The linked code allowed me to get the snapdragon to hover at a given waypoint, which serves as a proof of concept. The flight test has been done by flying in offboard mode and providing the px4 with a steady waypoint at (x,y,z)=(0, 0, 1.5). I don't think it is possible at this point to takeoff and fly in any of the autonomous px4 modes without a GPS lock.

Changes I had to make include most of the things discussed here:

- Compile mavros from source with the `-- ros-kinetic` build

- Create a mavlink stream for VISLAM with `HIGHRES_IMU 250`

- Adjust the VISLAM camera-IMU transformation. This is imo the best solution as then the VISLAM output does not need to be manipulated before being fed back to mavros. If we manually flip the coordinate system in the IMU message callback this would not be true.

- Use LPE (Local Position Estimator) on px4 instead of EKF2.

I hope this is helpful for anyone interested in running VISLAM + px4. If you find ways to improve any of this, please feel free to share. Specifically, I had to set the mavros yaml parameter time/timesync_mode to PASSTHROUGH. This results in time stamps having the time since boot instead of the wall time, which does not follow the ROS standard... Guess that can be solved better.

@potaito awesome! Thanks for that!

@potaito This is excellent. Thanks for the update. Also if you have a video can you please post it here as well.

@rkintada Here you go

https://streamable.com/shqxj (sorry for the shakiness, it's recorded with a smartphone)

The quad is moving a lot around the desired position, but that's the tame default px4 controller, not the state estimation. The LPE knows perfectly well what the quad's current position is.

@potaito Nice! What frame is that?

@potaito Thank you very much for posting the video.

@mhkabir I was following the Qualcomm recommendations and wanted to buy the QX 200. I managed to get the motors and propellers, but not the frame (hard to ship to Switzerland from the US). So I found the chinese seller "rakonheli" offering custom QX 200 upgrade frames, and this is it. It's a very nice kit, probably better than the original recommendation :)

However, now that the PX4 can output PWM through the Snapdragon's UARTs, I will just go back to buying normal motors and ESCs rather than using this proprietary ESC board and the QX200 motors/propellers.

Just a heads-up regarding the linked fork: The master has been rolled back (yes, evil, I know...) to ATLFlight/master in order to remain compatible. Sorry if that causes inconveniences for anyone.

@potaito Very nice work. How far does it lurch forward on takeoff? What do you suppose the reason for that is?

@Seanmatthews In my case it was almost 2 meters. It should be said though that I did not touch the controller gains at all. I think the default gains are very tame, which might be the reason, paired with a wrong level bias of the IMU.

On a different note: I think what is missing right now is integration of the velocities from VISLAM odometry into LPE. I made a few attempts at this, but LPE seems to completely ignore the provided twist messages sent through mavros.

Yeah you need to add the velocity update to LPE.

@potaito Did you use the PX4 example hover code with a different positive Z value? My own attempts to replicate your setup have not been successful. The differences I see are a different frame (possibly heavier, different legs), no floor mat to provide additional friction, possibly a different battery (?), and anything else? Sorry to barrage you here, but issues are not setup on your repo.

@Seanmatthews The issue is obvious in your case. You have no texture on the ground and lighting seems sub-optimal; Any visual odometry system (especially monocular ones), no matter how good - will fail without adequate texture for feature tracking. Without these image-space constraints, the system cannot perform adequately. Can you post an image from the bottom camera (at flight altitude) here?

If you notice, @potaito has perfect lighting and a highly textured mat on the floor. This is crucial for good performance.

I also think that anyone wanting to properly use this VIO system will need good camera intrinsics calibration. It currently just works because the bottom camera-lens system on the Snapdragon Flight boards are just "close enough" to whatever is set/hardcoded in the code. @rkintada Can you comment?

@Seanmatthews You will also need to take off manually and _then_ switch to position control after the VIO properly converges. Auto takeoff (in position control, if you don't want to change the code) is possible, but :

i) It will drift before VIO converges, and VIO may diverge at the beginning since the camera is out of focus.

ii) You will need to make sure to use the proper PX4 "takeoff jump" functionality (quickly gives a throttle burst to clear the ground and get to minimum camera focus altitude), which is not available in offboard mode yet. (It activates when you raise throttle above 50% in position control mode.)

@potaito It would be great if you could add all the instructions to get this working on a page on the PX4 Devguide : https://dev.px4.io/. You can send in a pull request to https://github.com/PX4/Devguide.

It would be a shame to have all this great work lost. I think we would also be interested in moving your working branch of the VINS repo to the PX4 organisation so that we can provide a reference setup, software and instructions to get it working.

@mhkabir It's not super obvious and I'd like to hear @potaito 's view since he has it working. I tested position estimation manually using my floor texture and it works very well. Particularly, I think seeing his takeoff/hover code will answer your and my questions. I did not get the impression that he performed a manual takeoff before entering offboard mode, given the 2m forward lunge.

@mhkabir Regarding the position control takeoff mode, can you point to code examples or further explain how one would use the "takeoff jump" functionality programmatically? I looked around the PX4 Devguide, but maybe I'm missing a page for this?

@Seanmatthews: Did you use the PX4 example hover code with a different positive Z value? My own attempts to replicate your setup have not been successful.

Yes you are correct, I used a modified example and did not take off manually. All I do in the video is hit the enter key and from there it goes. Unfortunately I am out of the office for a week, but here's what I did:

- Start VISLAM and px4 with the quad on the ground. It can be that the camera is too close to the ground with our small frames. With sub-optimal conditions I had to put the quad on the edge of a small box with the camera being over the edge. Otherwise VISLAM would fail. In that case my quad took off in a straight 45° line and I had to use the kill-switch or it would have crashed into the ceiling.

- Start the modified example code. The code does the following:

  - Keep sending `(0,0,0)` as a waypoint

  - Switch to offboard mode

  - Arm vehicle

  - Switch to sending `(0,0,1.5)` waypoints

We could do that better if we used the px4 takeoff in any of the other flight modes and then switch to OFFBOARD. But the px4 refuses to do anything autonomous due to the lack of a GPS lock it seems.

@mhkabir: If you notice, @potaito has perfect lighting and a highly textured mat on the floor. This is crucial for good performance.

That is true. However, the Snapdragon's optical flow lens has a rather wide angle. So in my opinion it comes down to whether or not VISLAM can initialize while landed. As noted above, I sometimes had to increase the starting height of my quad to help VISLAM a bit.

On a similar note: There is a setting to allow VISLAM initialization while the vehicle is moving. The default is false, i.e. do not allow this to happen. And I did not change that.

@mhkabir I also think that anyone wanting to properly use this VIO system will need good camera intrinsics calibration.

Also true. To me it was not entirely clear what kind of calibration VISLAM expects, i.e. how we can produce the currently hard-coded parameters ourselves.

@mhkabir: It would be great if you could add all the instructions to get this working on a page on the PX4 Devguide : https://dev.px4.io/. You can send in a pull request to https://github.com/PX4/Devguide.

I will gladly do that, always wanted to contribute to the docs. But perhaps we should first sort out all issues before providing an official HOWTO that might not even work properly. Plus, @rkintada wants to integrate the px4 compatible application into the existing Qualcomm VISLAM example, which will require changes in the code on my working branch. As a summary, here's what I want to do:

- [ ] Not only fuse position/orientation but also the velocities in LPE

- [x] Fix image/IMU timestamps to have `ros::Time::now()` as the reference clock instead of the time-since-boot.

- [ ] Have @Seanmatthews or someone else replicate the setup so we know for sure it's working and I did not forget some small but crucial modification that makes everything work ;) A friend of mine is also currently replicating the setup with yet another frame and regular ESCs + PWM output.

- [ ] Wait for restructuring of official ATLFlight example repository

@potaito That sounds like a good path. Regarding step 1, @rkintada refers to some potential problems with standardizing on ROS time. I'm not sure if that impacts what you're planning here.

I have a similar test mat coming tomorrow, as well as the rakonheli frame. I created a discord server if you'd all like to join @potaito @mhkabir @rkintada

@Seanmatthews That is true, the ROS time does not affect the performance at all. I am just under the impression that I did not fully understand the time sync mode in mavros and that there would be a better way of doing it (i.e. mavros outputting the offset between the two clocks or something, which we could then use).

To correctly solve the timebase issue, what you need to do is add an offset computation in the camera driver. That's a small change: it would just need to compute and average the time offset between the aDSP time (image timestamp) and the ROS wall clock (essentially the POSIX CLOCK_REALTIME). Then just use timesync_mode::ONBOARD in mavros and push all the data into VI-SLAM with the ROS timebase stamps.

I also think that anyone wanting to properly use this VIO system will need good camera intrinsics calibration. It currently just works because the bottom camera-lens system on the Snapdragon Flight boards are just "close enough" to whatever is set/hardcoded in the code. @rkintada Can you comment?

The intrinsics can be computed with ROS camera calibration (http://wiki.ros.org/camera_calibration) if needed. The existing code may need some changes. I will be able to update it in a week. However, the current values were generated from our internal calibration, and they were seen to be the same across identical sensors.

@potaito That sounds like a good path. Regarding step 1, @rkintada refers to some potential problems with standardizing on ROS time. I'm not sure if that impacts what you're planning here.

For step 1, can MAVROS be updated to generate a new ROS topic that includes the timestamp provided by the IMU? This would be an additional field of the ROS topic. In this case, the ROS timestamping will not be affected. The user of VISLAM will need to use the timestamp provided in the ROS topic instead of ros::Time.

can MAVROS be updated to generate a new ROS topic that includes the timestamp provided by the IMU?

For that you need to create a custom msg. MAVROS is publishing IMU data in sensor_msgs/IMU.msg, which uses ROS stamp on header. What I don't understand is that if you are using a system that is running ROS, which syncs all topics through POSIX clock, what makes you think that pushing the IMU timestamp directly to the VISLAM will avoid problems with ROS time?

@TSC21 By default, MAVROS sends a ROS time associated with the IMU data it obtains from PX4. MAVROS assigns the ros::Time::now() when it receives the data. There's a timesync_mode, PASSTHROUGH, that will deliver the timestamp associated with the data by PX4. However, there's no option for both timestamps. VISLAM gets this IMU data through MAVROS, but then gets its camera data from the Qualcomm camera drivers, which use the same time reference as the IMU data. To be able to properly sync the two in VISLAM, PASSTHROUGH was implemented.

Correct me if I'm wrong, but I believe @potaito's intention is to standardize on ROS time. However clean that sounds, the more I think about it, the more I think operating on the aDSP time in VISLAM (and other ROS nodes) will lead to less possibility for error. In that sense, I like @rkintada's idea to add an additional field for the IMU stamp. This allows the header timestamp to be used for housekeeping calculations, etc, but still gives us the original aDSP timestamp to use for sensor syncing activities.

@TSC21 @Seanmatthews Also, for algorithms like VISLAM the timestamp of when the event occurred is important (i.e. when the IMU sample was taken vs. the timestamp of when the data is received in mavros). So in this case using ros::Time::now(), without passthrough, will include the latency of getting from dsp-->px4-->mavros, which will not be good.

@TSC21 I agree, but having the ROS timestamp for non-time-critical components, like VISLAM, would be a useful addition. Also, adding an additional message field for the IMU timestamp will make their distinction more clear to MAVROS users.

So maybe the second bullet on your list is not so critical @potaito. What do you think?

Also, adding an additional message field for the IMU timestamp will make their distinction more clear to MAVROS users.

This is not upstreamable.

So in this case using ROS::Time::now(), without passthrough, will include the latency of getting from dsp-->px4-->mavros which will not be good.

Correct. Mavros never uses ros::Time::now() in the codebase, if you have a look. However all you need for the VISLAM usecase is adding a time offset (between aDSP and ros::WallTime i.e POSIX CLOCK_REALTIME) computation in VISLAM. For mavros, just use timesync_mode::ONBOARD which I recently added.

It would literally be 10 lines of code. If I'm not clear yet, I'll just propose a PR - let me know.

Mavros never uses ros::Time::now() in the codebase, if you have a look.

This statement is unclear.

What I mean is that the synchronised timestamp is used for all messages. The time of arrival (ros::Time::now()) is only used if synchronisation is unavailable or disabled.

Correct. Mavros never uses ros::Time::now() in the codebase, if you have a look. However all you need for the VISLAM usecase is adding a time offset (between aDSP and ros::WallTime i.e POSIX CLOCK_REALTIME) computation in VISLAM. For mavros, just use timesync_mode::ONBOARD which I recently added.

The question here is how often this offset needs to be updated. If CLOCK_REALTIME gets changed due to NTP or some other source, this offset will be incorrect. Also, in the current example the camera frames are not obtained as a ROS topic, and this poses an issue for the above solution as the timestamp is CLOCK_MONOTONIC.

@rkintada 10 Hz is what we use for offboard sync (when PX4 is running on an external board connected over a UART). This gives us excellent sync precision (well under 1ms) and very good visual-inertial odometry performance on our custom pipeline. I think that 10Hz will be more than enough to keep the clocks tightly synchronised - especially given that it's all onboard and no UART latency, unreliability, etc. is involved.

@mhkabir 10Hz would mean that the offset can potentially change every 100ms. In your testing how often does this change? My main concern is that the time stamp of the data changes( or can potentially change every 100ms), this will impact the VISLAM algorithm as they may cause potential gaps/overlaps in imu data. Hence I was thinking that passing the actual timestamp as is may be better. If passthrough mode is the way, then it should be fine.

@rkintada Passthrough mode does indeed work that way, and it works perfectly fine for VISLAM. This conversation branch was born out of @potaito's desire to use ROS timestamps for both IMU and camera, which isn't really necessary and might even be detrimental due to potential small conversion error. In my opinion, having both timestamps would be a nice-to-have, but definitely not necessary at this point.

@rkintada We low-pass the offset, so there are no spikes. A hard-set is only done when there is a > 10 ms spike (which usually means that NTP corrected the clock). This works quite well, and I have tested it with a VIO setup while manually skewing the realtime clock. And if I remember correctly, it's easy to configure NTP as a one-shot so that there are no runtime skews. I think it's best that I propose a PR and then you guys test it with your VISLAM setups versus the passthrough mode.
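As a rough illustration of the scheme described above (low-pass the clock offset, hard-set on a jump larger than 10 ms), here is a minimal sketch. The class name `ClockOffsetFilter`, the gain `alpha`, and the nanosecond units are assumptions made for this example; this is not the mavros timesync implementation.

```cpp
#include <cassert>
#include <cstdint>
#include <cstdlib>

// Hypothetical helper (not taken from mavros or the VISLAM example):
// tracks the offset between two clocks, e.g. CLOCK_REALTIME minus aDSP
// time, in nanoseconds.
class ClockOffsetFilter {
public:
    // alpha: low-pass gain in (0, 1] (assumed value, tune as needed).
    // reset_threshold_ns: jump size that triggers a hard set (10 ms here).
    explicit ClockOffsetFilter(double alpha = 0.05,
                               int64_t reset_threshold_ns = 10000000)
        : alpha_(alpha), reset_threshold_ns_(reset_threshold_ns) {
        assert(alpha > 0.0 && alpha <= 1.0);
    }

    // Feed one raw offset sample (realtime_ns - adsp_ns).
    // Returns the filtered offset.
    int64_t update(int64_t sample_ns) {
        const int64_t error = sample_ns - offset_ns_;
        if (!initialized_ || std::abs(error) > reset_threshold_ns_) {
            // First sample or a large jump (e.g. an NTP correction): hard set.
            offset_ns_ = sample_ns;
            initialized_ = true;
        } else {
            // Exponential low-pass: move a fraction alpha towards the sample.
            offset_ns_ += static_cast<int64_t>(alpha_ * static_cast<double>(error));
        }
        return offset_ns_;
    }

    // Convert an aDSP timestamp into the realtime timebase.
    int64_t apply(int64_t adsp_ns) const { return adsp_ns + offset_ns_; }

private:
    double alpha_;
    int64_t reset_threshold_ns_;
    int64_t offset_ns_ = 0;
    bool initialized_ = false;
};
```

A caller would sample both clocks back to back at a fixed rate (10 Hz is the figure mentioned earlier in the thread), pass the difference to `update()`, and stamp sensor data with `apply()`.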

@mhkabir Will the PR remove passthrough mode?

@Seanmatthews Nope. It'll be a PR to the VISLAM example repo. :)

Coming back to the original issue, I noticed two things about my setup:

- Although VISLAM begins tracking and sends a position to mavros, I don't think PX4 has a position lock because nothing is being published back to `/mavros/local_position/pose`.

- Mavros complains about a home position with `HP: requesting home position`.

Is not having GPS the culprit in these two issues? Is GPS required to provide a home position (the home position plugin does look for latlong)? Does PX4 need a home position before it sends back a local position? Do I need to send the home position? It's not part of the mavros offboard example, so I didn't think I needed to.

@Seanmatthews There is also a local home position, however, we have not extensively used that so far. This is something @ChristophTobler and @Stifael have been looking into extensively. I will sync with them tomorrow.

@ChristophTobler Can you please help to try to get this in shape for v1.7 (which should happen some time soon as well)? @vilhjalmur89 might be able to contribute as well.

I added param set MAV_DATA_STREAM 6 to px4.config and mavlink stream -u 14556 -s LOCAL_POSITION_NED -r 30 to mainapp.config. The additions do not cause local position to be published by mavros.

@LorenzMeier @ChristophTobler This would be a good opportunity to push for good vision support in EKF2 as well. We already have the core stuff for some time - it just needs some polishing.

@Seanmatthews:

1. Although VISLAM begins tracking and sends a position to mavros, I don't think PX4 has a position lock because nothing is being published back to /mavros/local_position/pose.

2. Mavros complains about a home position with HP: requesting home position.

I keep seeing the same messages regarding the home position, so that is not the cause of your problem. If everything is working you should however definitely see the pose estimate published on /mavros/local_position/pose.

@potaito I wonder what's the difference between our setups, since I get nothing from /mavros/local_position/pose. From your instructions, it looks like we're using the same version of mavros (Kinetic). Which branch/tag/release of PX4 are you using? Do you make any alterations to the mavros offboard example? Do you have any additional sensors attached? Thanks!

@Seanmatthews,

Is not having GPS the culprit in these two issues?

No.

Is GPS required to provide a home position (the home position plugin does look for latlong)?

Yes.

Does PX4 need a home position before it sends back a local position?

No.

Do I need to send the home position?

MAVROS sends a request, and if the FCU already has a Home Position, it will respond to the request.

Home Position is a different subject. You need to get something on /mavros/local_position/pose.

@Seanmatthews I can check the version of px4 on Monday.

- Are you certain you modified both `mainapp.conf` and `px4.config`? Specifically, is the parameter `SYS_MC_EST_GROUP == 1`?

- Did you start `local_position_estimator` (LPE) and `attitude_estimator_q`?

Thanks @potaito.

Are you certain you modified both mainapp.conf and px4.config? Specifically, is the parameter SYS_MC_EST_GROUP == 1?

Did you start local_position_estimator (LPE) and attitude_estimator_q?

Yes, I am using your mainapp.conf. I did add param set to the beginning of the line with LPE_VIS_DELAY.

Here's an oddity: Using param set SYS_MC_EST_GROUP 1 and starting the ekf2 estimator instead of the LPE estimator gives me a return on /mavros/local_position/pose. However, the height measurement it returns for Z is very inaccurate and jumps around by 10-30cm at a time, even though the height returned by VISLAM is accurate. Regardless, this leads me to believe that PX4 is receiving the VISLAM measurements, but something is getting held up in the LPE estimator.

@TSC21 How can I check in the ULG log that LPE started successfully?

Setting SYS_MC_EST_GROUP on Snapdragon (and other POSIX platforms) has no effect in the actual module startup.

You can check if LPE started by looking in the PX4 console where the startup script is actually run (i.e start mainapp manually over a remote shell and see the output). You should see some info messages from LPE if it starts correctly.

@mhkabir Good to know about SYS_MC_EST_GROUP. Looks like it does start:

[lpe] fuse gps: 0, flow: 0, vis_pos: 1, vis_yaw: 1, land: 0, pub_agl_z: 0, flow_gyro: 0

Enable land and baro (if it already isn't)

Funny, I disabled baro and land precisely because of the jumpy Z-axis readings. With the settings posted by @Seanmatthews I managed to get the onboard height estimate to properly track VISLAM's estimate.

@Seanmatthews By the way, this is the modified example code I used to hover at a specific waypoint:

#include <geometry_msgs/PoseStamped.h>

#include <mavros_msgs/CommandBool.h>

#include <mavros_msgs/SetMode.h>

#include <mavros_msgs/State.h>

#include <ros/ros.h>

mavros_msgs::State current_state;

void state_cb(const mavros_msgs::State::ConstPtr& msg)

{

current_state = *msg;

}

int main(int argc, char** argv)

{

ros::init(argc, argv, "offb_node");

ros::NodeHandle nh;

ros::Subscriber state_sub =

nh.subscribe<mavros_msgs::State>("mavros/state", 10, state_cb);

ros::Publisher local_pos_pub = nh.advertise<geometry_msgs::PoseStamped>(

"mavros/setpoint_position/local", 10);

ros::ServiceClient arming_client =

nh.serviceClient<mavros_msgs::CommandBool>("mavros/cmd/arming");

ros::ServiceClient set_mode_client =

nh.serviceClient<mavros_msgs::SetMode>("mavros/set_mode");

// the setpoint publishing rate MUST be faster than 2Hz

ros::Rate rate(20.0);

// wait for FCU connection

ROS_INFO("Waiting for FCU connection");

while (ros::ok() && !current_state.connected)

{

ros::spinOnce();

rate.sleep();

}

// Specify the setpoint in ENU frame

geometry_msgs::PoseStamped pose;

pose.pose.position.x = 0;

pose.pose.position.y = 0;

pose.pose.position.z = 0;

// send a few setpoints before starting

for (int i = 100; ros::ok() && i > 0; --i)

{

local_pos_pub.publish(pose);

ros::spinOnce();

rate.sleep();

}

ros::Time last_request = ros::Time(0);

const double kMinTimeBetweenRequests = 1.0;

// Try to switch to flightmode OFFBOARD

ROS_INFO("Requesting flight mode OFFBOARD");

mavros_msgs::SetMode offb_set_mode;

offb_set_mode.request.custom_mode = "OFFBOARD";

while (ros::ok())

{

local_pos_pub.publish(pose);

if (current_state.mode != "OFFBOARD" &&

(ros::Time::now() - last_request >

ros::Duration(kMinTimeBetweenRequests)))

{

if (set_mode_client.call(offb_set_mode) && offb_set_mode.response.success)

{

ROS_INFO("Offboard enabled");

last_request = ros::Time(0);

break;

}

last_request = ros::Time::now();

}

rate.sleep();

}

// Try to arm vehicle

ROS_INFO("Arming vehicle");

mavros_msgs::CommandBool arm_cmd;

arm_cmd.request.value = true;

while (ros::ok())

{

local_pos_pub.publish(pose);

if (!current_state.armed && (ros::Time::now() - last_request >

ros::Duration(kMinTimeBetweenRequests)))

{

if (arming_client.call(arm_cmd) && arm_cmd.response.success)

{

ROS_INFO("Vehicle armed");

break;

}

last_request = ros::Time::now();

}

rate.sleep();

}

pose.pose.position.z = 1.5;

ROS_INFO("Taking off!");

while (ros::ok())

{

local_pos_pub.publish(pose);

ros::spinOnce();

rate.sleep();

}

return 0;

}

@potaito I have VISLAM working well when holding the drone by hand, and now I am also trying to run VISLAM in flight. However, when using the recommended IMU settings (LPF 184 Hz etc.), the position diverges quickly and VISLAM fails. I checked the vibration level of the IMU sensors and found that the accelerometer, especially in the z axis, had 14m/s^2 noise variance, which is way higher than what the standard VISLAM settings assume. Things I tried were:

- Adjusting the noise standard deviation values in the VISLAM settings

- Lowering the LPF frequency of the accelerometer to 92 Hz and then to 40 Hz

Now VISLAM doesn't fail in flight anymore, but the drift is still higher than tolerable (about 2 m in a 5 min flight).

How did you cope with sensor noise, what kind of values are you seeing, or is the board on your frame just very well insulated?
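For anyone wanting to compare vibration levels across frames, a minimal sketch of how the noise figure for one accelerometer axis could be computed from logged samples is given below. The function names are hypothetical and the input is assumed to be a stationary or hovering segment in m/s^2; it uses Welford's single-pass update rather than anything specific to PX4 or VISLAM.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Unbiased sample variance of one accelerometer axis (Welford's algorithm).
// Hypothetical helper for offline log analysis.
double sampleVariance(const std::vector<double>& samples) {
    assert(samples.size() >= 2);
    double mean = 0.0;
    double m2 = 0.0;  // running sum of squared deviations from the mean
    std::size_t n = 0;
    for (double x : samples) {
        ++n;
        const double delta = x - mean;
        mean += delta / static_cast<double>(n);
        m2 += delta * (x - mean);
    }
    return m2 / static_cast<double>(n - 1);
}

// Standard deviation, the quantity a noise setting is usually expressed in.
double sampleStdDev(const std::vector<double>& samples) {
    return std::sqrt(sampleVariance(samples));
}
```

Running this on the same flight segment before and after changing the LPF cutoff or the board mounting gives a number that can be compared directly.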

@mmoiozo To be honest I did not have to bother with filter settings, but then again I never checked the noise/vibration levels. The longest time I was flying with the Snapdragon was probably a minute. But yes, it is probably well isolated as I attached it with thick double-sided tape to the frame :)

I guess I will have to look into the damping of the board.

Thanks anyway.

What's the current state here? Is there a small documentation? Are there any working branches? Or is everyone working independently? :)

Hey @ChristophTobler

I do have a working branch here: https://github.com/potaito/snapdragon_mavros_vislam/tree/devel/mavros-integration

You can also find the px4 configurations that I used in there. With this branch I am able to run VISLAM + Mavros + px4 and fly with an offboard node (the code of which I posted earlier).

However, it is still far from perfect as I ran into a new problem: Initially the VISLAM pose message in ROS has an age of 20-50 milliseconds once it is available on ROS. By age I mean the difference between the time the message is published and the capturing time of the image last fed to VISLAM. To be more precise, the timestamp is centered in the exposure of the image, so time_start_capture + exposure_time/2 in pseudo code.
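The age definition above can be written out as a small sketch. The function names are made up for illustration, and all three inputs are assumed to be nanosecond values in the same timebase; if the publish time and the capture time come from different clocks, the result is meaningless.

```cpp
#include <cassert>
#include <cstdint>

// Timestamp centered in the exposure window:
// time_start_capture + exposure_time / 2.
inline int64_t midExposureStampNs(int64_t start_capture_ns, int64_t exposure_ns) {
    assert(exposure_ns >= 0);
    return start_capture_ns + exposure_ns / 2;
}

// Age of a pose message: publish time minus the mid-exposure stamp of the
// image last fed to VISLAM. Hypothetical helper, not part of the branch.
inline int64_t poseAgeNs(int64_t publish_ns, int64_t start_capture_ns,
                         int64_t exposure_ns) {
    return publish_ns - midExposureStampNs(start_capture_ns, exposure_ns);
}
```

Logging this value for every published pose makes the slow growth from tens of milliseconds towards 200 ms easy to plot.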

Now the problem: After having VISLAM + Mavros + px4 running for 5-10 minutes, that message age increases up to 200ms, which is unusable for flying around. Restarting the VISLAM node alone resolves this problem.

Debugging the cause showed me that the function call mvVISLAM_AddImage() (located inside Snapdragon::VislamManager::GetPose()) gets slower, but I can't tell why. The original ATLFlight snap_ros_example does not experience this problem. The timings there are constant.

@potaito Thanks a lot! I'll have a look at your branch and try to reproduce your results first.

@ChristophTobler I was able to reproduce flight using the standard hardware platform and @potaito's mavros-integration branch.

@Seanmatthews That's good news! What fixed your initial issues where your quad dashed over the floor?

@potaito I see you are using ROS kinetic and not the suggested indigo. Were you able to follow these normal instructions? And do you have to install mavros from source? Are there changes we need which are not in the default binaries?

@ChristophTobler Ah no, sorry for the confusion. The ROS version is indigo. I guess you think of kinetic because of the --rosdistro kinetic flag @Seanmatthews and I used to compile mavros? Perhaps my memory serves me wrong, but the kinetic branch is fully compatible with indigo. And we need the up-to-date mavros because of the new feature timesync_mode.

@potaito I'm able to run the code and the topic VISION_POSITION_ESTIMATE seems to be correct. But LOCAL_POSITION_NED is mostly zero. Shouldn't that one change corresponding to VISION_POSITION_ESTIMATE?

I rebased your branch to use the newer mv release (1.0.2) https://github.com/ChristophTobler/snap-ros-examples/tree/mv-1.0.2/mavros-integration (README is still a mess...)

Yeah it should (somewhat poorly) reflect the vislam visual odometry topic. Did you see my px4 config files mainapp.conf and px4.config located here?

Yup, I pushed them according to your README. I'll check again.

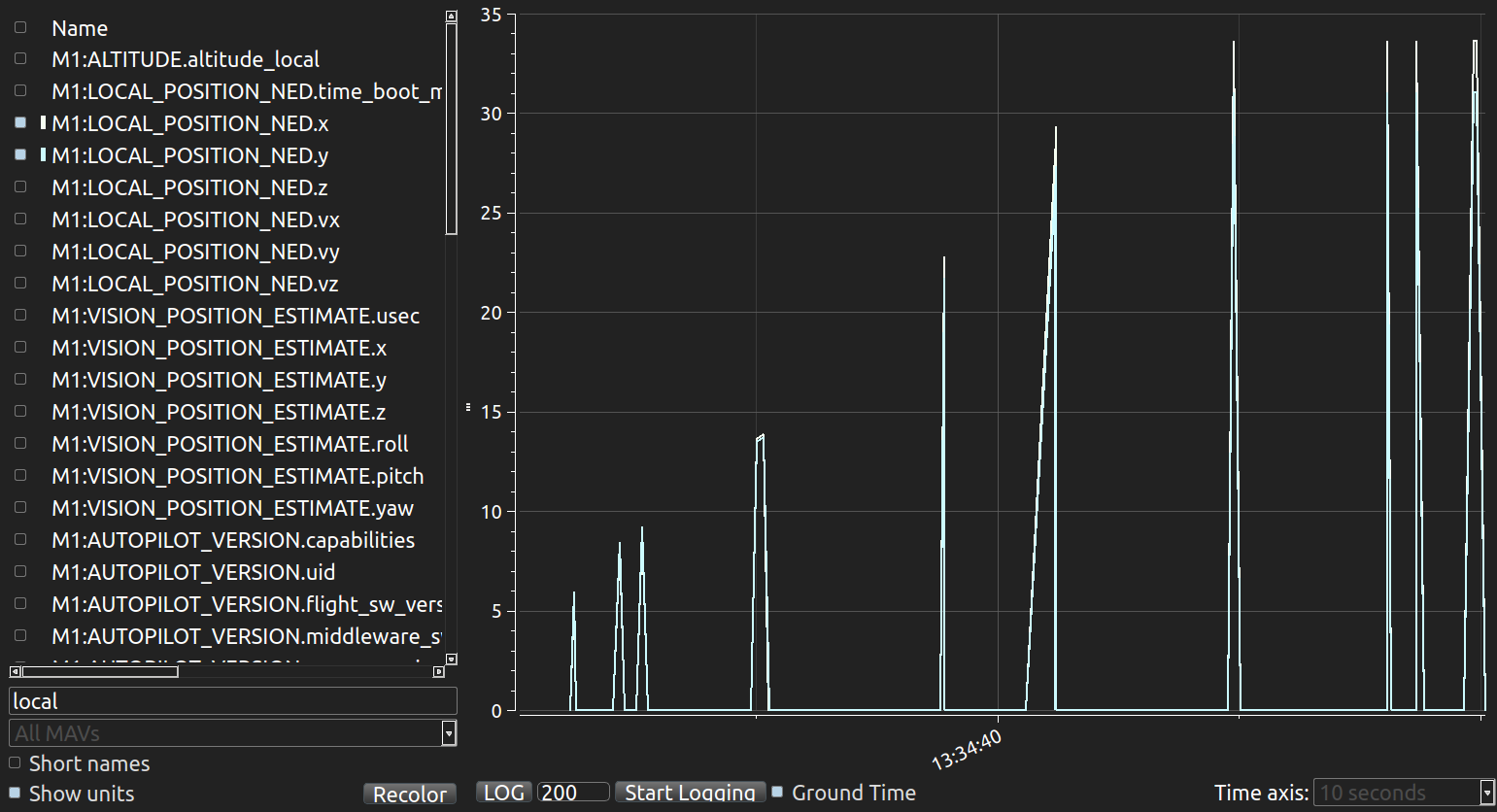

@potaito I used your mainapp.conf and px4.config. I get reasonable LOCAL_POSITION_NED estimates for a few seconds and then huge spikes (see image). However, VISION_POSITION_ESTIMATE does not have any spikes... Any ideas? Maybe I have to try with your branch and the older mv release (even though estimates look good).

@ChristophTobler This could be some LPE oddity. Can you try EKF2 after updating ECL and setting the necessary fusion flags? You will need this PR too: https://github.com/PX4/ecl/pull/309. We recently did a bunch of overhauling to the EKF2 vision handling, with more changes incoming soon.

@mhkabir That seems to work, thanks! Still have to do a real flight test, though.

@ChristophTobler Don't forget to post the updated configurations upon successful flying ;)

I did some flight tests (just manual, no position control). The results were not very consistent, though. Here are two logs:

http://logs.px4.io/plot_app?log=50d53cf2-4df2-42ac-9a2e-c62fb6b123d6

In this one, the z direction even seems to go in the opposite direction (z should be negative when going up).

The second log seems to be very good. But I wasn't able to reproduce this...

http://logs.px4.io/plot_app?log=e08f4503-0911-448d-8e64-17f8659f6fc9

I need to test a bit more. @potaito Can you test with ekf2 as well? Maybe not offboard but manual or handheld.

@ChristophTobler re: previous problems-- I had a few. One, unrelated to this thread, was a mavros_extras problem. The lurching forward was likely due to this.

And that's strange about the Z coordinate. I may have experienced something similar with the current setup. I found, when taking off from the ground, the quad would rise and then try to correct yaw in the wrong direction, causing it to slowly spiral around the z-axis. What appears to alleviate this problem is providing some distance between the optical flow camera and the feature-full ground. To that effect, I use a piece of clear acrylic on four Solo Cup legs. Again, this may be unrelated. I'm using mv_0.9.1_8x74.deb.

@ChristophTobler I will have to test later, currently the quad is disassembled and no px4 flashed on the aDSP. What exactly would I have to do? Only checkout and flash the pull-request branch in ecl mentioned by @mhkabir and then use EKF2 with the parameters contained in your log?

@potaito Exactly, that would be helpful. Just to see if it is related to my setup/environment. (I rebased the PR)

Thanks!

@ChristophTobler @potaito In further tests, I don't seem to get consistent flight. Though, the drone does consistently fly forward and to the left, with a slight left yaw (like it's spiraling upward). Pose coming from VISLAM follows a NWU convention. However, looking at MAVROS' /mavros/local_position/pose topic, its position follows the same NWU convention, while its orientation is completely reversed (SED). My only guess as to the reason it was able to hover before is perhaps because the initial yaw value fell within range where it wasn't trying to rotate to a correct orientation.

Something else I noticed about /mavros/local_position/pose is that on PX4 and VISLAM startup, the yaw value is very large and takes ~20s to settle around 0.

@Seanmatthews Hm that is odd. Indeed I never yawed during flights so maybe that problem was there all along.

So, let's go through this: The original VISLAM orientation matches the IMU frame as shown here

However, when MAVROS is used to obtain the IMU samples, the IMU messages are already rotated and translated to match the ROS convention. I believe this is coincidentally the board frame depicted in the image above. What I then did is configure VISLAM to consider this transformation by specifying the transformation between the camera frame and the mavros IMU messages.

Maybe this transformation is wrong...

@potaito Like you mention in some code comments, ROS seems to negate the IMU's Y and Z values, such that incoming IMU data is in the form NWU. This is perhaps standard. Your OMBC value, [2.2214, -2.2214, 0] seems correct in that it negates all values, then flips X and Y, putting our IMU in the camera frame of ESD. Then, however, the output of VISLAM comes out in board coordinates (NWU). It seems MAVROS expects NED though?

Something to note-- looking at the MAVROS local_position plugin, it appears to only present the user with heading based on IMU data even if there exists a visual heading estimate.

There's a better way to do this, but here's what I changed to deliver the ENU format from VISLAM that MAVROS expects:

// R.getRotation(q);

double r, p , y;

R.getRPY(r, p, y);

q.setRPY(-p, r, y);

pose_msg.pose.position.y = vislamPose.bodyPose.matrix[0][3];

pose_msg.pose.position.x = -vislamPose.bodyPose.matrix[1][3];

pose_msg.pose.position.z = vislamPose.bodyPose.matrix[2][3];

However, this configuration doesn't seem to want to hover at 0, 0, 1: http://logs.px4.io/plot_app?log=6c2491d2-5df1-4094-9bc0-4c4cea00b795

Changing back to your original configuration, @potaito , I may have misspoke about the orientation coming from mavros/local_position/pose. It looks like the orientation is indeed NWU.

What I have noticed between the two configurations, is that LPE does not seem to be estimating my height, causing the quad to shoot up into the air. See local position Z: http://logs.px4.io/plot_app?log=ee52a54e-af6c-4943-a44d-fd3cdefe07be

It's _never_ "NWU" in mavros. I can't stress enough on the never. Everything coming out of mavros is ENU, and unless you can point out a bug in mavros's NED-ENU transformation, you're wrong.

@mhkabir Hasty criticisms. The coordinate coming out of MAVROS is a function of the coordinate frame of the values input. All that happens in between are just simple transformations representative of moving from one frame to another. When I say 'NWU', I'm saying it relative to the coordinate frame being used before the values entered MAVROS. That's not a reference to the coordinate frame MAVROS intends to output.

@potaito @ChristophTobler Here's a log of a straight-out-of-the-box install: http://logs.px4.io/plot_app?log=2fdeef0c-d0f5-42b6-9942-85d40b662032 . Do you see anything blatantly wrong in the logs?

Details:

- Rakonheli frame

- Flight 3.1.3.1

- PX4 v1.6.5

- mv0.91

- mavros kinetic

- snapdragon_mavros_vislam/devel/mavros-integration (with accompanying px4.config and mainapp.conf)

- LPE_FUSION = vis_pos & vis_yaw

EDIT: Additional logs

- http://logs.px4.io/plot_app?log=19f419c4-97f7-4115-9944-f3f8a7cb3ab4

- The yaw is not set to 0 in the hover node and it seems to affect the 'Yaw Angular Rate'

- http://logs.px4.io/plot_app?log=bdf8b535-37f0-4955-9e20-693e34029b34

- After setting setpoint orientation to `[1, 0, 0, 0]` to ensure yaw=0

Oddly, what I'm seeing in QGroundControl is that lifting the drone by hand makes the local NED position and vision position go negative (what I expect). Upon takeoff, both go positive, causing the drone to keep going toward the ceiling.

@Seanmatthews MAVROS applies a static ENU<->NED transform, so it assumes that the data coming from the FCU is in NED and that the data coming from the ROS framework is in ENU. If the data coming from the FCU is not in NED, the frame resulting from the transform probably won't be ENU. So just be sure that the data coming from the SD Flight is in NED and, if it is not, apply the correct transform to NED.
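To make the point about the static transform concrete, here is a minimal sketch of the usual ENU<->NED mapping for a position vector in the local frame: swap x and y, negate z. The type alias and function names are chosen for this example and are not the mavros API; body-frame data (forward-left-up vs. forward-right-down) needs a different mapping.

```cpp
#include <array>

using Vec3 = std::array<double, 3>;

// Local-frame position: ENU (east, north, up) -> NED (north, east, down).
inline Vec3 enuToNed(const Vec3& enu) {
    return Vec3{{enu[1], enu[0], -enu[2]}};
}

// The mapping is its own inverse, so NED -> ENU has the same form.
inline Vec3 nedToEnu(const Vec3& ned) {
    return Vec3{{ned[1], ned[0], -ned[2]}};
}
```

Because the mapping is fixed, feeding it a vector that is not actually in the assumed input frame (for example NWU) silently produces a vector in some other frame rather than an error.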

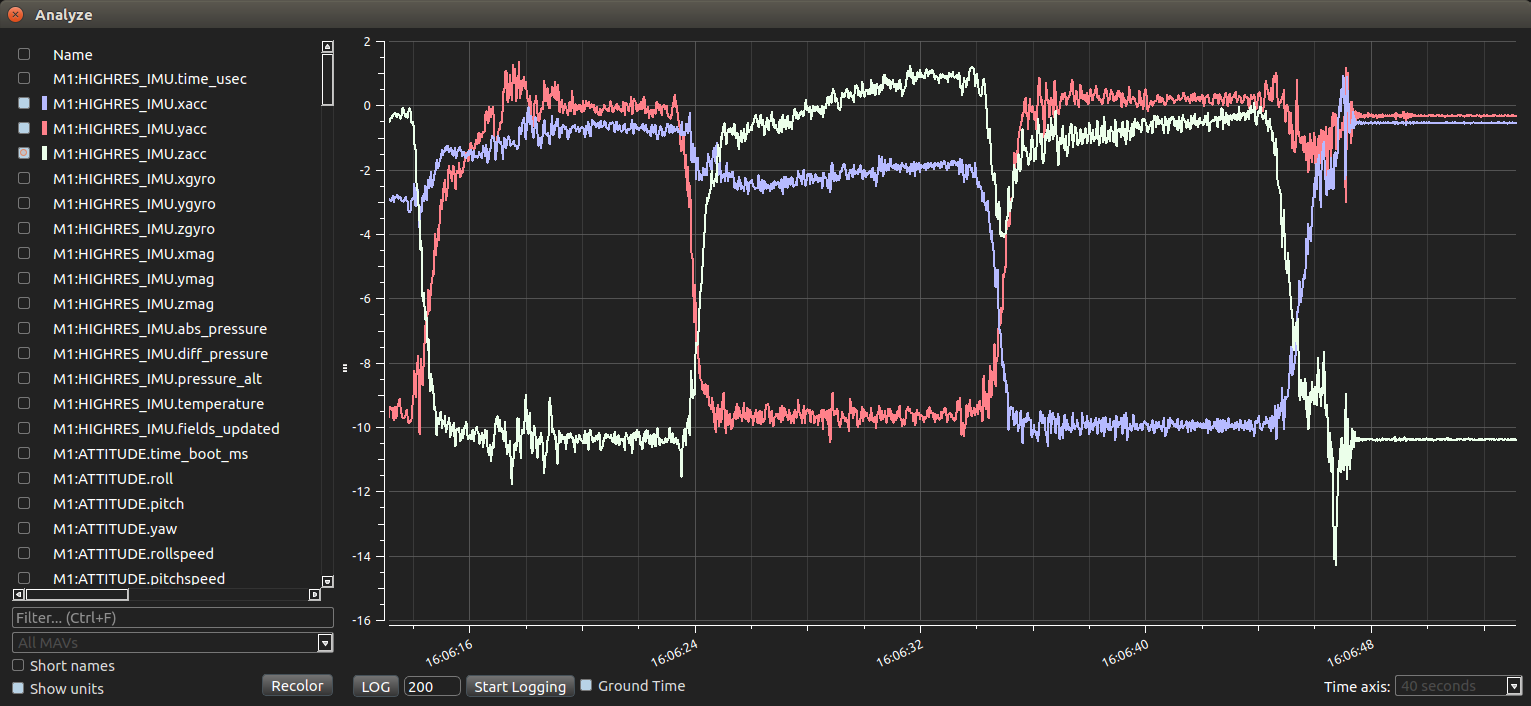

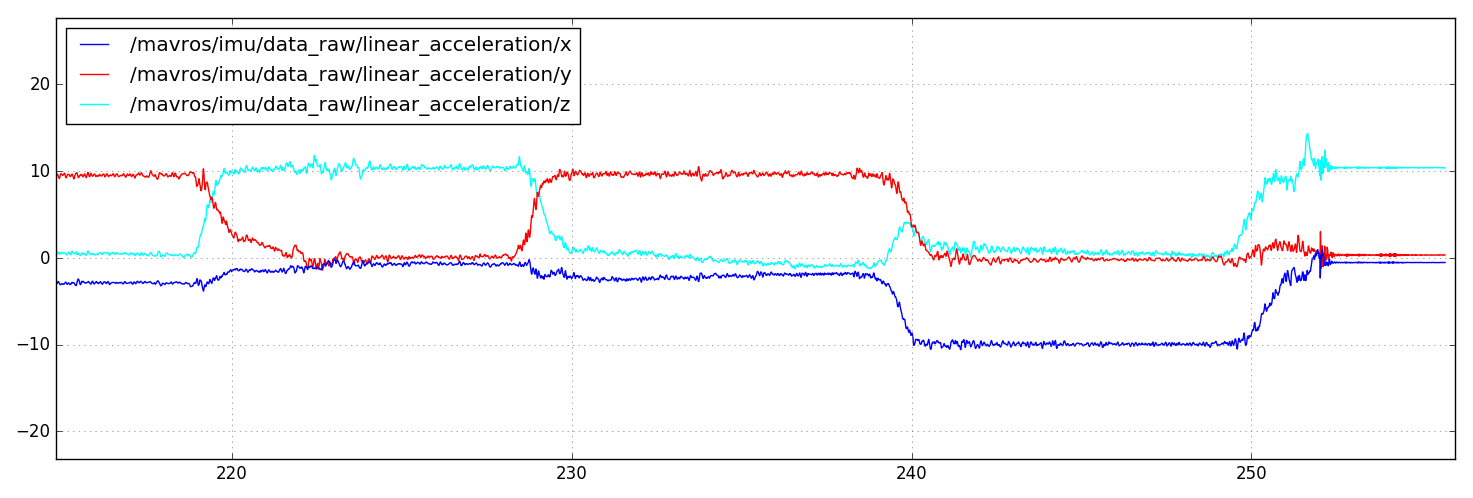

@TSC21 Here's a graph comparison of the IMU linear accelerations I see coming back from both PX4 and MAVROS. The three "humps" are with the snapdragon flight board with 1) bottom facing ground, 2) right side facing ground, and 3) front facing ground.

Perhaps also related is this issue I found in MAVROS: https://github.com/mavlink/mavros/issues/751 . When I run this setup (LPE, q attitude estimator, VISLAM, MAVROS), I also see a yaw offset based on the starting position. The yaw starts large-ish and settles at pi/2, before the vehicle has even moved.

EDIT:

I've confirmed that this is not a hardware issue by installing the same software (running the stock mavros/px4.launch) on a different Snapdragon Flight board. The same linear acceleration rotation occurs.

It's important to note that @potaito and I are installing mavros kinetic with upstream changes, according to these instructions.

@Seanmatthews: Changing back to your original configuration, @potaito , I may have misspoke about the orientation coming from mavros/local_position/pose. It looks like the orientation is indeed NWU.

Indeed. I just checked myself and the VISLAM output on my branch is in ENU frame. Just so we are all on the same page, as far as I understand ENU this corresponds to the board frame depicted in the image from ATLFlight I have linked earlier, correct?

@Seanmatthews: Here's a graph comparison of the IMU linear accelerations I see coming back from both PX4 and MAVROS. The three "humps" are with the snapdragon flight board with 1) bottom facing ground, 2) right side facing ground, and 3) front facing ground.

I think the last log with IMU data you have shown is correct and in ENU frame (mavros is transforming the raw IMU as expected and we told VISLAM about this with those obc variables etc.).

@potaito Maybe I'm misinterpreting something, but it seems that the first graph (original PX4 IMU data) reports NED as expected. When each axis is pointed positively into the ground, the board is accelerating in the opposite direction, counter to the force of gravity. Then the second graph (MAVROS transformed, and keeping in mind that Z would be negated because I still point the board's bottom toward the ground) reports NWU.

However, even if my interpretation is incorrect, if we assume MAVROS is reporting IMU data correctly and in the ENU frame, then:

- Shouldn't the conversion to camera frame be a 180 degree rotation about the X-axis, rather than your OMBC values?

- Shouldn't pointing the front of the board towards the ground affect the Y (and not X) in the MAVROS graph?

@Seanmatthews I think you might be correct. When looking at the Imu raw data coming from mavros alone, I made the following observations:

- Snapdragon lying on the table with optical flow camera facing the table, I read a linear acceleration equal to g in positive z direction.

- Snapdragon standing on its tail with highres camera looking to the ceiling, I get positive g-acceleration in x-direction.

- Snapdragon standing on the USB-port gives positive g-acceleration in y-direction.

Given these observations and the fact that the IMU (when resting) sees gravity as an acceleration opposite to the gravitational pull, it looks like the IMU data coming from mavros on the snapdragon is in a frame equal to the board frame in this picture:

And the depicted board frame resembles a NWU system, right? And if the IMU data should be in NED frame, something seems to be wrong indeed. Either that or we are both completely confused now.
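The three resting-pose checks above can be automated. Here is a minimal sketch, assuming only that a resting accelerometer reports the reaction to gravity (so the body axis that points up reads roughly +g). The names `UpAxis` and `upAxisFromRestingAccel` are made up for illustration and are not part of PX4 or mavros.

```cpp
#include <array>
#include <cmath>

// Result of inspecting one resting accelerometer sample.
struct UpAxis {
    int index;  // 0 = x, 1 = y, 2 = z
    int sign;   // +1 if the positive axis points up, -1 if the negative axis does
};

// A resting accelerometer measures the reaction to gravity, so the component
// with the largest magnitude identifies the body axis aligned with "up".
inline UpAxis upAxisFromRestingAccel(const std::array<double, 3>& accel) {
    int best = 0;
    for (int i = 1; i < 3; ++i) {
        if (std::fabs(accel[i]) > std::fabs(accel[best])) {
            best = i;
        }
    }
    return UpAxis{best, accel[best] >= 0.0 ? +1 : -1};
}
```

With the board flat on the table, a forward-left-up body frame gives index 2 with sign +1, while a forward-right-down frame gives index 2 with sign -1. Repeating the check for the "on its tail" and "on the USB port" poses pins down the other two axes.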

@potaito I suppose this makes sense according to the code:

- https://github.com/mavlink/mavros/blob/0.19.0/mavros/src/plugins/imu_pub.cpp#L256-L257

- https://github.com/mavlink/mavros/blob/0.19.0/mavros/src/plugins/imu_pub.cpp#L69-L72

- https://github.com/mavlink/mavros/blob/0.19.0/mavros/src/plugins/imu_pub.cpp#L75

There's an aircraft-baselink transform occurring on the data, but nothing else before it's published. Note the comments from the second link as well.
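For reference, a minimal sketch of what such an aircraft-to-base_link transform does to a single vector, assuming it is a plain 180 degree rotation about the X axis (forward-right-down to forward-left-up). The helper name `aircraftToBaselink` is illustrative only and is not the mavros function.

```cpp
#include <array>

using Vec3 = std::array<double, 3>;

// Rotate a vector by 180 degrees about the X axis: x is kept, y and z flip.
// This maps forward-right-down (aircraft) to forward-left-up (base_link)
// and is its own inverse.
inline Vec3 aircraftToBaselink(const Vec3& v) {
    Vec3 out = {v[0], -v[1], -v[2]};
    return out;
}
```

Under that assumption, a resting reading of roughly `[0 0 -g]` in the aircraft frame becomes `[0 0 +g]` after the transform, which is consistent with the positive z reading observed with the board lying flat.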

Please see : https://github.com/mavlink/mavros/issues/762#issuecomment-321727701

Thanks @mhkabir

@Seanmatthews The ROS conventions about axis-orientation in REP 103 state:

In relation to a body the standard is:

x forward

y left

z up

That's exactly how we both get the IMU readings from MAVROS. So far so good. Now let me go through the transformation from IMU to camera frame that is specified with vislamParams.ombc:

- The original `ombc` vector in ATLFlight is `[0 0 pi/2]`. The positive angle tells me `ombc` is rotating vectors from IMU to camera frame, i.e. `X_cam = R_cam_imu * X_imu + T_cam_imu` where `R_cam_imu` is a rotation matrix and `T_cam_imu` a translation vector.

  The [ATLFlight Readme](https://github.com/ATLFlight/ros-examples#imu-to-camera-transformation) states that the `ombc` rotation is the other way around, meaning `X_imu = R_imu_cam * X_cam + T_imu_cam`. But then their original `ombc` vector should have a negative rotation as far as I can tell...

- With the coordinate frame of the mavros IMU readings, we have to find a new rotation axis and angle, for example this one:

  The rotation unit-axis represented in the mavros IMU frame is `A_imu = [1 -1 0] / sqrt(2)`, the rotation angle `pi`. The resulting Euler rotation vector then reads `e_imu = [1 -1 0] * pi / sqrt(2)`. These are the values I put down for the `ombc` settings in my fork.

- Last but not least I also changed the translation `tbc` to `T_camera_imu = [0.009 0 0]` as this was what VISLAM always converged to. So I assumed that mavros not only rotates the IMU readings, but also translates them. Never confirmed with the source code!
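To make the rotation vector above easier to sanity-check, here is a minimal sketch that expands an axis-angle vector into a rotation matrix with Rodrigues' formula. It assumes the convention `X_cam = R_cam_imu * X_imu`, which is exactly the point under debate. The helpers `rotationVectorToMatrix` and `applyRotation` are illustrative and not part of the VISLAM API.

```cpp
#include <array>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<Vec3, 3>;

// Convert a rotation vector (unit axis times angle, radians) into a rotation
// matrix using Rodrigues' formula:
//   R = cos(t) * I + sin(t) * [a]x + (1 - cos(t)) * a * a^T
inline Mat3 rotationVectorToMatrix(const Vec3& r) {
    const double theta = std::sqrt(r[0] * r[0] + r[1] * r[1] + r[2] * r[2]);
    Mat3 R{};  // zero-initialised, then set to identity
    for (int i = 0; i < 3; ++i) {
        R[i][i] = 1.0;
    }
    if (theta < 1e-12) {
        return R;  // negligible rotation
    }
    const Vec3 a = {r[0] / theta, r[1] / theta, r[2] / theta};
    const double c = std::cos(theta);
    const double s = std::sin(theta);
    // Skew-symmetric cross-product matrix of the unit axis.
    const double K[3][3] = {{0.0, -a[2], a[1]}, {a[2], 0.0, -a[0]}, {-a[1], a[0], 0.0}};
    for (int i = 0; i < 3; ++i) {
        for (int j = 0; j < 3; ++j) {
            R[i][j] = c * (i == j ? 1.0 : 0.0) + s * K[i][j] + (1.0 - c) * a[i] * a[j];
        }
    }
    return R;
}

// Multiply a 3x3 matrix with a 3-vector.
inline Vec3 applyRotation(const Mat3& R, const Vec3& v) {
    Vec3 out{};
    for (int i = 0; i < 3; ++i) {
        for (int j = 0; j < 3; ++j) {
            out[i] += R[i][j] * v[j];
        }
    }
    return out;
}
```

Under that convention, `[1 -1 0] * pi / sqrt(2)` maps `(x, y, z)` in the IMU frame to `(-y, -x, -z)` in the camera frame, while the original `[0 0 pi/2]` maps `(x, y, z)` to `(-y, x, z)`. If the Readme's opposite convention holds, the transposed matrices apply instead.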

I am writing all this down to have a proper record and because there could very well be errors in it. Please find a mistake because then we would have something to fix :wink:

@potaito Thanks for writing down the logic. Above, I had suspected that the IMU data was coming back according to the REP 103 standard, but then this comment confused the matter, making me doubt the validity of my own results.

There's still the question of why the local_position comes back as forward-left-up, and why I'm forced to send setpoint_position coordinates in that same coordinate frame.

Update: I think we found a setup where the VISLAM works with PX4 just as nicely. Seems that the issue was the filtering in the mpu9250_wrapper which introduces a delay.

Branches:

Firmware: https://github.com/ChristophTobler/Firmware/tree/pr-raw_imu_snappy

Mavros: https://github.com/ChristophTobler/mavros/tree/pr-raw_imu_px4

VISLAM: https://github.com/ChristophTobler/snap-vislam-ros/tree/mv-1.0.2/mavros-integration

This still needs some minor cleanup and some integration for the EKF2

Most helpful comment

@ChristophTobler This could be some LPE oddity. Can you try EKF2 after updating ECL and setting the necessary fusion flags? You will need this PR too: https://github.com/PX4/ecl/pull/309. We recently did a bunch of overhauling to the EKF2 vision handling, with more changes incoming soon.