Prometheus: React UI: Implement more sophisticated autocomplete

It would be great to have more sophisticated expression field autocompletion in the new React UI.

Currently it only autocompletes metric names, and only when the expression field doesn't contain any other sub-expressions yet.

Things that would be nice to autocomplete:

- metric names anywhere within an expression

- label names

- label values

- function names

- etc.

For autocomplete functionality not to annoy users, it needs to be as highly performant, correct, and unobtrusive as possible. Grafana does many things right here already, but they also have a few really annoying bugs, like inserting closing parentheses in incorrect locations of an expression.

Currently @slrtbtfs has indicated interest in building a language-server-based autocomplete implementation.


I'm currently in the process of building that language server. It lives at https://github.com/slrtbtfs/promql-lsp.

The features you named are all intended to land there. Support for completing metric and function names will probably be implemented in the coming two weeks.

Other features include:

- Showing syntax error messages while typing (done)

- Showing documentation and type signatures on hover (in progress)

- PromQL Syntax highlighting (not part of the language server, but exists already)

The part that has to be done on the React UI side to support the language server would be to provide a language client. A good candidate for that seems to be the monaco editor which is also used by vscode and is surprisingly fast and responsive when included in a web UI (See https://microsoft.github.io/monaco-editor/playground.html).

cc @squat

@slrtbtfs Wonderful! Really looking forward to this :) Do you know if you'll have capacity to work on the React UI integration as well?

It would make sense to do so. Especially as there probably is a lot of overlap with the PromQL vscode extension (https://github.com/slrtbtfs/vscode-prometheus).

I'll discuss this with my managers.

@slrtbtfs One point to consider: Monaco is not exactly small. Even just the minified editor.main.js is >2MB large. That doesn't make me immediately rule it out, but if we could do what we need with a significantly smaller download size, that would be much better of course.

@juliusv Progress on the language server side is tracked here https://github.com/slrtbtfs/promql-lsp/issues?utf8=%E2%9C%93&q=+is%3Aissue+label%3A%22React+UI%22+

Edit: And here: https://github.com/slrtbtfs/promql-lsp/blob/master/doc/progress.md

@juliusv A possible alternative might be the CodeMirror editor (400kb unminified, 250kb minified). Someone has started building a language client for that one: https://github.com/wylieconlon/lsp-editor-adapter .

Although the development of the LSP client seems to have stalled, it already supports the features we need for our use case, according to https://langserver.org/ .

It also looks small enough that we can fix issues with it ourselves, if needed.

Edit: Added minified size

Ah cool, 250kb is getting into the realm of being less painful (although it's still quite big, I guess for what we want to do, that's a reasonable size).

As per https://bundlephobia.com/[email protected] it is even less: 165kb minified.

Hi,

With my colleague @celian-garcia, we made a library to provide PromQL in Monaco. It is quite close to the one provided in Grafana. Recently I presented it to @juliusv and he asked me if we implemented a real PromQL parser/grammar.

So no, we didn't, and from my point of view I don't think it's really worth implementing it on the client side. Autocompletion and syntax highlighting should be enough, at least for the Prometheus Console.

Implementing a new parser in TypeScript would mean maintaining two different parsers in two different languages.

The only advantage I see is that you would know in real time that you have an error in your PromQL query (the same way you do in an IDE).

Concerning this library, we'll try to provide PromQL as a native language in Monaco. We are waiting for a response from Microsoft about how autocompletion works for a native language (it's not crystal clear to us :)) (https://github.com/microsoft/monaco-editor/issues/1672).

It depends on what the LSP can provide in the JSON, but I think we could make a similar configuration in CodeMirror (the same way we did for Monaco).

And it may make sense to do it in a different repository (like prometheus/react-promQL-lsp), so it can be used in other UIs/IDEs if they want to integrate the Prometheus Console.

@juliusv I hope I didn't forget any particular point, and thanks for your patience :)

@Nexucis

Hi,

good to know you've been working on a similar thing.

> Recently I presented it to @juliusv and he asked me if we implemented a real PromQL parser/grammar.

> So no, we didn't, and from my point of view I don't think it's really worth implementing it on the client side.

> Implementing a new parser in TypeScript would mean maintaining two different parsers in two different languages.

One advantage of using the language server protocol is that the language server can be written in any language. In this case it is written in Go and uses the same parser as Prometheus.

> It depends on what the LSP can provide in the JSON, but I think we could make a similar configuration in CodeMirror (the same way we did for Monaco).

Not having to implement the same features multiple times for different editors is actually the point of the language server protocol. The LSP allows implementing these features once and then using them with basically any editor, including vim, emacs, monaco, codemirror, eclipse, ...

> Autocompletion and syntax highlighting should be enough, at least for the Prometheus Console.

Completion and syntax highlighting are not the only useful features one can provide for language support. Consider e.g. showing errors and documentation. See e.g. https://imgur.com/a/Lwv8G2e

Also note that completion is far less trivial than it looks at first:

- Completion for metric names and labels only works if it is known which time series reside on the server.

- When completing function arguments, the completer should be aware of the type signatures of the respective functions.

- When completing label names, the completer should be able to figure out which labels occur together with the given metric name.

- Context-sensitive completion on this level only works when the query is actually parsed to figure out the necessary metadata.
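As a rough illustration of the third point, here is a minimal Go sketch that derives the label names occurring together with a metric from a list of series label sets. It assumes the label sets have already been fetched (for example from the Prometheus series API, where the metric name is stored under the `__name__` label); the function name `labelNamesFor` and the sample data are made up for this example and are not part of the language server.

```go
package main

import (
	"fmt"
	"sort"
)

// labelNamesFor returns the sorted label names seen on series of the given
// metric. Each series is a label set with the metric name under "__name__".
func labelNamesFor(metric string, series []map[string]string) []string {
	seen := map[string]bool{}
	for _, s := range series {
		if s["__name__"] != metric {
			continue
		}
		for name := range s {
			if name != "__name__" {
				seen[name] = true
			}
		}
	}
	names := make([]string, 0, len(seen))
	for n := range seen {
		names = append(names, n)
	}
	sort.Strings(names)
	return names
}

func main() {
	// Hypothetical sample data standing in for a server response.
	series := []map[string]string{
		{"__name__": "http_requests_total", "job": "api", "code": "200"},
		{"__name__": "http_requests_total", "job": "api", "method": "GET"},
		{"__name__": "go_goroutines", "instance": "localhost:9090"},
	}
	fmt.Println(labelNamesFor("http_requests_total", series))
}
```

A completer can only offer such label names once it knows which metric the cursor's label matcher belongs to, which is where parsing the query comes in.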

Another important point to consider is that PromQL queries often occur as part of YAML files. For a useful editor integration this case should be supported, too. The PromQL language server already does this.

Thanks for the input, both of you!

@slrtbtfs That makes sense for the case of complex autocompletion at least, yeah. Just curious, do LSP clients cache certain completion information in a way that e.g. function / operator names can be autocompleted without constant roundtrips to the server, or does basically every keystroke lead to an LSP request?

For syntax highlighting, it seems there's no LSP support for it yet (https://github.com/Microsoft/language-server-protocol/issues/682#issuecomment-486676262), and it is generally considered to be better done on the client side (for speed / reliability reasons anyway)? So I guess the syntax highlighting portion of what @Nexucis did here would be applicable for client-side UI integration (if ported to whatever editor we choose to use)?

> Just curious, do LSP clients cache certain completion information in a way that e.g. function / operator names can be autocompleted without constant roundtrips to the server, or does basically every keystroke lead to an LSP request?

Usually there is a limit on how many completions the server offers for a single request (by default 100). If the returned list is complete, i.e. there are fewer than 100 available completions, the server can tell this to the client and the client won't ask for additional completions again.
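To make that mechanism concrete, here is a small Go sketch of a capped completion response. The JSON field names `isIncomplete`, `items` and `label` follow the LSP `CompletionList` type; the `complete` function, the candidate list and the limit of 2 are only illustrative assumptions, not code from the PromQL language server.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// CompletionItem and CompletionList mirror a small subset of the LSP types.
type CompletionItem struct {
	Label string `json:"label"`
}

type CompletionList struct {
	IsIncomplete bool             `json:"isIncomplete"`
	Items        []CompletionItem `json:"items"`
}

// complete returns at most limit candidates starting with prefix and sets
// IsIncomplete when further matches were cut off by the limit.
func complete(prefix string, candidates []string, limit int) CompletionList {
	list := CompletionList{Items: []CompletionItem{}}
	for _, c := range candidates {
		if !strings.HasPrefix(c, prefix) {
			continue
		}
		if len(list.Items) == limit {
			list.IsIncomplete = true
			break
		}
		list.Items = append(list.Items, CompletionItem{Label: c})
	}
	return list
}

func main() {
	names := []string{"rate", "resets", "round", "sum"}
	out, _ := json.Marshal(complete("r", names, 2))
	fmt.Println(string(out))
}
```

When `isIncomplete` is false, the client can keep filtering the list it already has as the user types more characters, without another request.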

> For syntax highlighting, it seems there's no LSP support for it yet (microsoft/language-server-protocol#682 (comment)), and it is generally considered to be better done on the client side (for speed / reliability reasons anyway)?

Yes, that would be reusable. I've actually already done something similar for the vscode extension for prometheus, too. The main difference seems to be that @Nexucis provides the syntax highlighting using some typescript and I use a textmate grammar.

The advantage of the TextMate grammar is that it can be used by tools other than VS Code, e.g. Eclipse and Atom. The advantage of the TypeScript approach is that it might allow slightly better highlighting.

> Just curious, do LSP clients cache certain completion information in a way that e.g. function / operator names can be autocompleted without constant roundtrips to the server, or does basically every keystroke lead to an LSP request?

The server can additionally tell the client to only ask for completions if the cursor is at a word separator. This way no completions will be requested if the cursor is somewhere in the middle of a word.

Thanks for the background! So I guess for completion we will just wait for server-side LSP support then, but need to decide what to do for highlighting in the meantime. That in turn seems gated on the editor decision. If anyone interested wants to give CodeMirror a deeper look in terms of usability, quality, and LSP support (for our use case), that seems like the next step.

Thanks for your feedback about the LSP feature @slrtbtfs. The future is bright with it :).

On our side we will try CodeMirror; we are also interested in reducing the footprint of Monaco.

I think we'll begin by just migrating from Monaco to CodeMirror without LSP.

Then once that is done, we'll try with LSP if nobody else has done it.

@Nexucis Sounds good, especially since I'm not that good with front end stuff.

In case it is useful, here is a TextMate grammar for PromQL. At least for VS-Code it needs to be converted to JSON before using it.

The language server isn't able to talk over a network, yet. I'll fix this soon, to make experiments with Code Mirror possible.

Thank you @slrtbtfs @juliusv for all the inputs ! I'm sure it will help us ;)

FYI, we also found this. https://github.com/codemirror/CodeMirror/pull/4948/files

It's pretty outdated, but maybe it's worth a look.

We'll keep you informed ;)

@juliusv @celian-garcia @Nexucis

The language server is now in a state where you can try it out locally. It even has some limited support for auto completion.

Support for remote clients is not yet there, however.

@slrtbtfs uuuh so cool! Thanks. On my side, I unfortunately haven't made much progress on the topic :(. PromCon gave me many things to think about and to tell my team :).

Some aspects of a typical LSP implementation have become apparent that are a problem for Prometheus.

Firstly communication is typically done via JSON-RPC over WebSocket, which is not at all like our current APIs.

Secondly the LSP needs to maintain state for each active client (https://microsoft.github.io/language-server-protocol/overview) of at least the document (query) it's working on. This is both a complexity and reliability risk.

I think any such functionality and communication needs to happen internal to the frontend, and any communication to the Prometheus server itself is done via our standard APIs.

> I think any such functionality and communication needs to happen internal to the frontend.

The idea of having a language server is kind of the opposite of having it in the frontend.

While I agree with some of the concerns, there are actually good reasons to not implement such features on the client side:

- Every client has to implement these features for itself. Right now we already have different completion implementations for Prometheus, Thanos and Grafana which leads to a lot of duplicate effort. Also each of these only implements a very limited feature set.

- Having the language server written in Go allows using the same parser as Prometheus. This gives much more insight into queries than existing tools have.

> Secondly the LSP needs to maintain state for each active client (https://microsoft.github.io/language-server-protocol/overview) of at least the document (query) it's working on. This is both a complexity and reliability risk.

For more complex setups like Thanos or Grafana it would be also possible to run the language server in a sidecar container which might reduce these risks.

> The idea of having a language server is kind of the opposite of having it in the frontend.

That's fine when it's running on a developer's desktop or dedicated SaaS. Not when it's living inside a key process of a critical monitoring system.

> Having the language server written in golang allows to use the same parser as Prometheus.

I'm not worried about the parser, I'm worried about the other parts. Namely the architecture that implicitly comes with an LSP.

> For more complex setups like Thanos or Grafana it would be also possible to run the language server in a sidecar container which might reduce these risks.

I don't think that solves the problem. I'm proposing to sidestep the problem by having the usual LSP client<-> server communication happen entirely inside the browser when it's a Prometheus type use case where the primary purpose of the binary in question is monitoring.

Creating a stateless API that uses the same logic as the PromQL language server should actually be possible from an implementation standpoint.

I have some doubts about whether it's a good idea though, since that would essentially mean creating a new protocol.

One option is to leave the parsing to Prometheus, and do the rest up in the frontend.

> One option is to leave the parsing to Prometheus, and do the rest up in the frontend.

Parsing only produces an AST. That's not really useful for a frontend.

We can add more than that, as long as the API is stateless and using our existing API way of doing things.

While I think building such an API on the server side would be doable with reasonable effort, the client-side part would be a huge amount of work, with questionable benefit.

But there is another problem with that. Features that the language server supports or will support include:

- Completion

- Hover information

- Diagnostics

- Function signatures (not yet, but soon)

- Formatting (maybe in the future)

- Quickfixes (maybe in the future)

Each of these things is quite complex to encode in an API. That's the reason why the LSP Specification is as complex as it is.

I think it's great that Microsoft was able to establish a standard way of doing this, even though I don't like all of their design decisions.

If we are deciding to build our own API we are basically inventing our own protocol and going against very much needed standardization in the field of language tools.

EDIT: Spelling

The best option may then be to code it all up in JavaScript.

I was proposing more that we handle the backend of the LSP via our API, rather than the LSP itself.

> The best option may then be to code it all up in JavaScript.

That would mean:

- Rewriting the PromQL parser in Javascript.

- Rewriting the PromQL language server in Javascript.

- Making the frontend much bigger and more complex than it is now.

- Someone would need to work several months full time to get another implementation of things that already exist.

- Someone would need to maintain all that.

- Someone would need to be actually willing to do this and/or be paid for it.

If the choice is between adding a stateful document manager to the Prometheus process with a brand new API transport, and not having autocomplete, then I'd prefer not having autocomplete. A nice feature doesn't trump reliability/maintainability of our core functions.

I would hope we can find some way to do this though.

I thought one of the benefits of a generated parser was that it could be used from other languages?

> I thought one of the benefits of a generated parser was that it could be used from other languages?

That's not actually the case. While this was assumed to be true in some past dev summit discussions it wasn't mentioned in the parser rewrite proposal any more.

However, having a language grammar as a source for the parser generator provides a human readable definition of what exactly a PromQL query is, which can make writing other parsers easier.

Just reading along here and getting worried that both of you have really good arguments for why things have to be one way or another, but I also don't see how to resolve that properly.

Obviously for every user of Prometheus it would be amazing to have proper and very intelligent autocomplete. But at the same time, introducing user session state into Prometheus (which I wasn't fully aware of regarding LSP) seems undesirable. Is it possible in any way to either limit LSP support in Prometheus itself to only stateless actions (no idea if this is even possible in LSP or if it always requires state - just saw a talk mentioning the option of either full or partial file delta syncs as an example), or alternatively, limit server-side LSP state to be really small / only allow few users at once?

I think there is a way in the middle where we autocomplete function names and metric names only; but instead of current implementation where we autocomplete on full line, we would autocomplete on full words.
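A word-based approach like this could be sketched in Go as follows. This is only an illustration under simplifying assumptions: the expression is ASCII, the cursor is a byte offset within the expression, and the helper names `wordBefore` and `suggest` as well as the candidate list are invented for the example.

```go
package main

import (
	"fmt"
	"strings"
)

// isIdentChar reports whether b can be part of a metric or function name.
func isIdentChar(b byte) bool {
	return b == '_' || b == ':' ||
		(b >= 'a' && b <= 'z') || (b >= 'A' && b <= 'Z') || (b >= '0' && b <= '9')
}

// wordBefore returns the start offset and text of the identifier fragment
// that ends at the cursor position.
func wordBefore(expr string, cursor int) (int, string) {
	start := cursor
	for start > 0 && isIdentChar(expr[start-1]) {
		start--
	}
	return start, expr[start:cursor]
}

// suggest returns the known names that start with the word under the cursor.
func suggest(expr string, cursor int, names []string) []string {
	_, word := wordBefore(expr, cursor)
	var out []string
	if word == "" {
		return out
	}
	for _, n := range names {
		if strings.HasPrefix(n, word) {
			out = append(out, n)
		}
	}
	return out
}

func main() {
	names := []string{"go_goroutines", "go_threads", "rate", "sum"}
	expr := "sum(rate(go_g"
	fmt.Println(suggest(expr, len(expr), names))
}
```

Because only the fragment before the cursor is matched, this works anywhere inside an expression, but it cannot tell a metric name position from a label name position; that distinction needs an actual parse.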

The more I read this conversation, the more I think it's actually not a good idea to implement the LSP feature in Prometheus, for the following reasons:

So let's say LSP is implemented with WebSocket or another fancy protocol and it's working as it should. Now every frontend can implement a light view that handles PromQL.

But let's be a bit realistic here:

- Let's say I developed a plugin for IntelliJ IDEA that handles PromQL. That would mean I need a live Prometheus server to get autocompletion and all the fancy features that come with it. So I would have to install Prometheus in my development environment, which I think is a bit overkill just to have my favorite IDE working properly. And it can only work if I am able to install this server at all.

- You could say that's not a big issue, since you could connect your fancy IDE to a remote Prometheus server. Here again I don't agree. You don't necessarily have access to a remote Prometheus.

- Prometheus could be in an isolated zone that nobody can access except through Grafana.

- Not everybody has only one Prometheus deployed in production. Criteo, for example, has around 600 Prometheis deployed, which makes it a bit complicated to say "I want to connect to this one in particular".

- At Amadeus, we have also deployed a lot of different Prometheus servers. To manage them easily and not leave our customers lost on the simple question "Where is my metric?", we also have Thanos in front of all of these Prometheis. So to have a nice LSP integration, Thanos would have to implement this feature too. Maybe they want to, maybe not.

Now let's say LSP is actually in a sidecar container.

- I still have to install a server in my local environment to have my fancy IDE working properly.

- When you have a hundred Prometheus servers deployed, it's not really realistic to say "just add the sidecar to every Prometheus already deployed" only for a frontend improvement such as autocompletion and so on.

- Deploying the sidecar alongside Grafana is not realistic either, because that would mean Grafana has an extra dependency when you are using the Prometheus datasource, which is not the case for the other datasources.

- Prometheus can be installed with only the binary. In that case, to have full access to all Prometheus functionality I would have to deploy the LSP binary too. That will be hard to explain to an SRE who is focused on stability.

Regarding why we are here: we are here because, thanks to Julius, we are now able to improve the Prometheus UI a bit. And again, let's be realistic: until now the Prometheus Console was really simple, with basically no features. So I think we can simply give the Prometheus console more or less the same things that have been achieved in Grafana or in Monaco. You will still have syntax highlighting and autocompletion of PromQL keywords, and I believe it's not rocket science to also have autocompletion of label names and metrics.

Yes, of course you won't get a real-time error saying your PromQL expression is incorrect. But does it really matter? We can still send the PromQL expression to Prometheus after the user has stopped typing in the console. That will fake the real-time behavior.

@Nexucis In case of using an IDE the language server would be shipped within an IDE extension and just run on the same workstation as the IDE.

So this is not really an issue.

To sum up the whole situation, we have two competing standards here:

- The jsonrpc based API specified in the LSP

- The RESTful APIs used in Prometheus

These two follow completely different designs, and I agree that it might be not the best idea to add a jsonrpc based API to Prometheus.

It should be actually quite easy to build a stateless RESTful API that provides access to most of the language server functionality.

However, right now other things like rewriting the PromQL parser and improving the language server have higher priority for me.

@juliusv

> Obviously for every user of Prometheus it would be amazing to have proper and very intelligent autocomplete. But at the same time, introducing user session state into Prometheus (which I wasn't fully aware of regarding LSP) seems undesirable. Is it possible in any way to either limit LSP support in Prometheus itself to only stateless actions (no idea if this is even possible in LSP or if it always requires state - just saw a talk mentioning the option of either full or partial file delta syncs as an example), or alternatively, limit server-side LSP state to be really small / only allow few users at once?

The state stored by the language server is basically the content of the open files of each user, plus the syntax tree and error messages resulting from parsing each file. The latter are recalculated every time the file changes.

The reason why this state must be kept is that notifying the server about a file update and asking for completions or other information happen in separate jsonrpc messages.

> just saw a talk mentioning the option of either full or partial file delta syncs as an example.

For larger files it makes sense to submit only diffs on file changes, which the PromQL language server supports. For this to work, state consistency is really important. For things as small as a single PromQL query doing this doesn't really bring performance improvements anyway, so it is as well possible to send the whole PromQL query on every change.
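The difference between the two sync styles can be shown with a deliberately simplified Go sketch. Real LSP change events address text by line/character positions; here a change is reduced to byte offsets within a single-line query, and the `Span`, `Change` and `apply` names are made up for the illustration.

```go
package main

import "fmt"

// Span is a simplified range: byte offsets into a single-line query.
// Real LSP ranges use line/character positions instead.
type Span struct{ Start, End int }

// Change models one document update. A nil Span means Text is the full
// new content; otherwise Text replaces only the given span.
type Change struct {
	Span *Span
	Text string
}

// apply produces the new document content from the old one and a change.
func apply(doc string, c Change) string {
	if c.Span == nil {
		return c.Text
	}
	return doc[:c.Span.Start] + c.Text + doc[c.Span.End:]
}

func main() {
	doc := "rate(foo[5m])"
	// Incremental sync: only the edited span travels, so the server must
	// hold exactly the same old text as the client.
	fmt.Println(apply(doc, Change{Span: &Span{5, 8}, Text: "bar"}))
	// Full sync: the whole query travels, so the old text does not matter.
	fmt.Println(apply(doc, Change{Text: "rate(bar[5m])"}))
}
```

With incremental changes a single missed update leaves server and client with different texts, which is why consistency matters there; for a query this short, sending the full text each time costs almost nothing.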

> Is it possible in any way to either limit LSP support in Prometheus itself to only stateless actions (no idea if this is even possible in LSP

The LSP does not even have the concept of stateless actions, so no it's not possible.

What would be possible is to create a stateless REST API that wraps around the LSP functionality, as briefly mentioned above.

The idea behind this is to have an API where the HTTP query would contain the PromQL query and a position inside the PromQL query and the response would be the JSON object that the LSP would have used to encode the desired information.

So an API call could look roughly like this:

$ curl "localhost:9090/api/langserver/hover?query=go_goroutines&line=1&col=5" | jq
{
  "contents": {
    "kind": "markdown",
    "value": "### go_goroutines\n\n__Metric Help:__ Number of goroutines that currently exist.\n\n__Metric Type:__ gauge\n\n__PromQL Type:__ vector\n\n"
  },
  "range": {
    "start": {
      "line": 0,
      "character": 0
    },
    "end": {
      "line": 0,
      "character": 13
    }
  }
}
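On the client side, consuming such a call could look roughly like the following Go sketch. The endpoint path and the `query`, `line` and `col` parameters are taken from the illustrative curl call above and are not a confirmed API; the struct types cover only the fields shown in the sample response, and `hoverURL` and `decodeHover` are hypothetical helper names.

```go
package main

import (
	"encoding/json"
	"fmt"
	"net/url"
	"strconv"
)

// These types cover only the fields that appear in the sample response.
type Position struct {
	Line      int `json:"line"`
	Character int `json:"character"`
}

type Range struct {
	Start Position `json:"start"`
	End   Position `json:"end"`
}

type MarkupContent struct {
	Kind  string `json:"kind"`
	Value string `json:"value"`
}

type Hover struct {
	Contents MarkupContent `json:"contents"`
	Range    Range         `json:"range"`
}

// hoverURL builds the request URL; the path is an assumption based on the
// curl example, with the query text safely URL-encoded.
func hoverURL(base, query string, line, col int) string {
	v := url.Values{}
	v.Set("query", query)
	v.Set("line", strconv.Itoa(line))
	v.Set("col", strconv.Itoa(col))
	return base + "/api/langserver/hover?" + v.Encode()
}

// decodeHover parses a response body into a Hover value.
func decodeHover(body []byte) (Hover, error) {
	var h Hover
	err := json.Unmarshal(body, &h)
	return h, err
}

func main() {
	fmt.Println(hoverURL("http://localhost:9090", "go_goroutines", 1, 5))
	body := []byte(`{"contents":{"kind":"markdown","value":"### go_goroutines"},` +
		`"range":{"start":{"line":0,"character":0},"end":{"line":0,"character":13}}}`)
	h, err := decodeHover(body)
	if err != nil {
		fmt.Println("decode error:", err)
		return
	}
	fmt.Println(h.Contents.Kind, h.Range.End.Character)
}
```

Since every request carries the full query and the position, nothing has to be remembered between two calls, which is the point of the stateless design.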

> So an API call could look roughly like this:

That could work, we'll likely want to mark any such API as permanently internal use only.

I've built a HTTP API for completion and other stuff now:

https://godoc.org/github.com/prometheus-community/promql-langserver/rest

@juliusv @brian-brazil How would you feel about eventually including this HTTP API in Prometheus?

And then maybe having a GSOC student doing the front end integration.

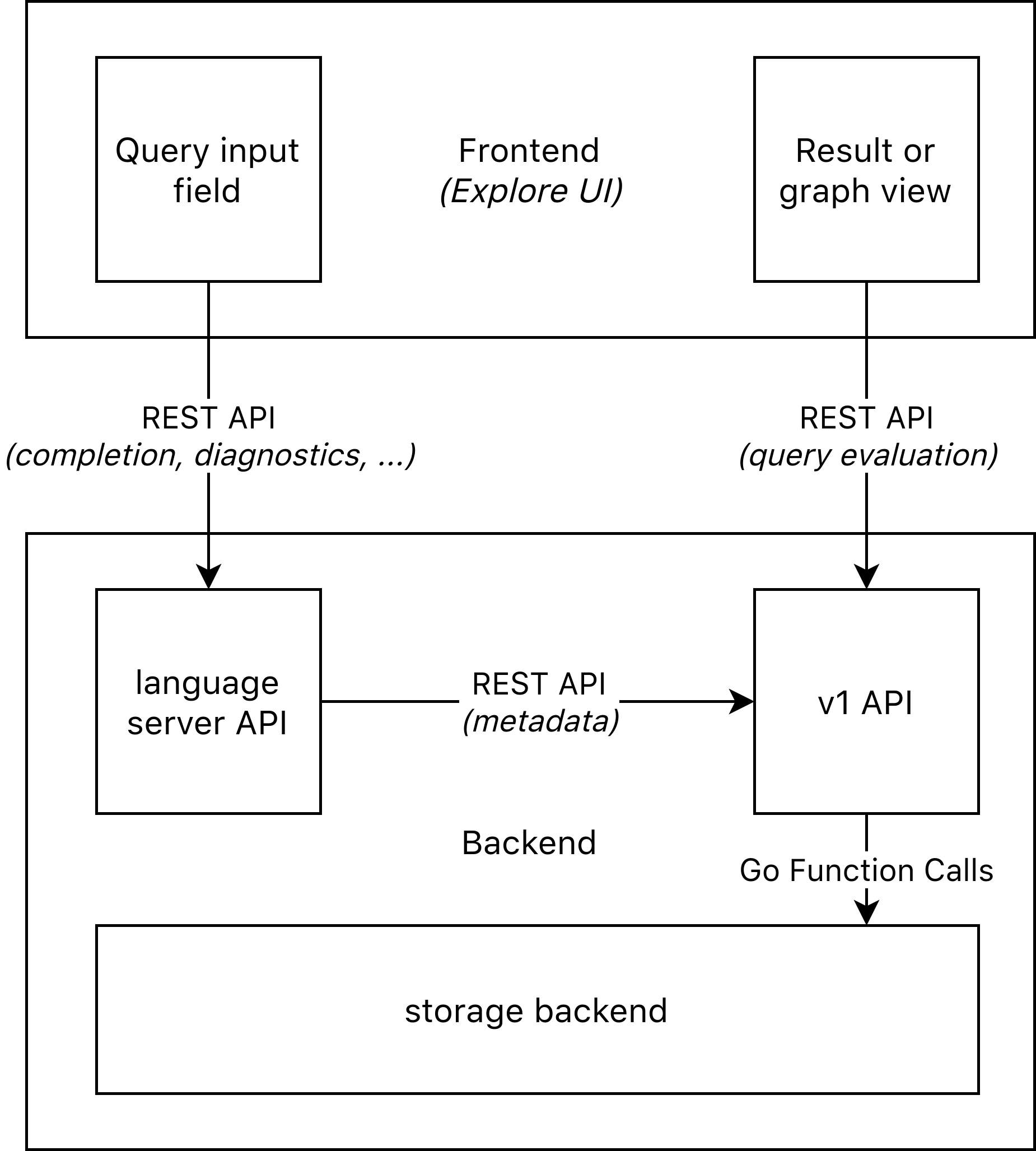

@slrtbtfs I think I may need a box diagram or similar to understand which component talks to which other one using which mechanism now exactly (with frontend and all) :)

@juliusv Here's a simple box diagram that describes what the architecture would look like if the language server API were included in Prometheus as is and someone had done the necessary front end integration.

I'm interested in working on the integration.

@slrtbtfs I see, thanks! So is my understanding of the following correct then, also summarizing what you said further above:

- None of these components speak the actual LSP with each other (as that would be jsonrpc and not REST), not even the wrapper to something below it.

- Instead, the wrapper introduces a completely new parallel REST-based API that is not the LSP, but offers some of the same functionality based on the language server code you wrote, but in a stateless way.

- Thus we cannot use any LSP integration that an editor like e.g. Monaco would offer (through extensions), but we will build our own completely custom frontend integration for this new REST API.

- The reasoning for all this is because LSP cannot be done in a stateless way, and we don't want to have stateful (per-LSP-client state) in the Prometheus server.

Sounds good to me if that's all the case.

@juliusv

> - None of these components speak the actual LSP with each other (as that would be jsonrpc and not REST), not even the wrapper to something below it.

All the internal communication happens over Golang function/interface calls.

> - Thus we cannot use any LSP integration that an editor like e.g. Monaco would offer (through extensions), but we will build our own completely custom frontend integration for this new REST API.

Yes.

We can reuse some of the type definitions from the vscode-languageserver-types npm package, though.

> - Instead, the wrapper introduces a completely new parallel REST-based API that is not the LSP, but offers some of the same functionality based on the language server code you wrote, but in a stateless way.

> - The reasoning for all this is because LSP cannot be done in a stateless way, and we don't want to have stateful (per-LSP-client state) in the Prometheus server.

The topic of statelessness needs some elaboration. With the current implementation the following state is kept in the API handler.

- A locked down instance of the langserver.server struct. "Locked down" in this case means that the instance cannot send or receive any JSONRPC communication. Logging messages that the instance tries to send over JSONRPC are redirected to stderr. This instance keeps the following state:

  - The URL of the Prometheus server to be used for the metadata API calls. This is obviously unavoidable.

  - An asynchronous cache that keeps track of all documents that are handled by the language server.

An API call triggers the following procedure:

- A document with the content passed by the query is created and added to the cache. This triggers asynchronous parsing of the document.

- The desired data is requested from the cache. This blocks until the parsing of the document is done.

- The data is written to the HTTP response.

- The document is removed from the cache and eventually garbage collected. This happens regardless of whether the query succeeded, failed or panicked.

If the existence of that cache is a concern, it is possible to change the code to not register the temporary documents there. Since the cache structure is just a synchronized hash map containing one item per currently handled HTTP request, I don't see too much of an issue with having it, though.
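The request lifecycle described above can be sketched in Go roughly as follows. This is not the actual promql-langserver code: the `cache` and `document` types, the request IDs and the parenthesis-counting "parser" are stand-ins chosen to keep the example self-contained. It only shows the pattern of registering a temporary document, blocking until background parsing finishes, and removing the entry with `defer` so that cleanup also happens on failure or panic.

```go
package main

import (
	"fmt"
	"strings"
	"sync"
)

// document is a temporary per-request entry; done is closed once the
// background parse has finished.
type document struct {
	content  string
	balanced bool
	done     chan struct{}
}

// cache is a synchronized map holding one document per in-flight request.
type cache struct {
	mu   sync.Mutex
	docs map[string]*document
}

func newCache() *cache { return &cache{docs: make(map[string]*document)} }

// add registers a document and starts parsing it asynchronously.
func (c *cache) add(id, content string) *document {
	d := &document{content: content, done: make(chan struct{})}
	c.mu.Lock()
	c.docs[id] = d
	c.mu.Unlock()
	go func() {
		// Stand-in for real parsing: only checks that parentheses balance.
		d.balanced = strings.Count(content, "(") == strings.Count(content, ")")
		close(d.done)
	}()
	return d
}

func (c *cache) remove(id string) {
	c.mu.Lock()
	delete(c.docs, id)
	c.mu.Unlock()
}

func (c *cache) size() int {
	c.mu.Lock()
	defer c.mu.Unlock()
	return len(c.docs)
}

// handle follows the four steps: add, wait for the parse, answer, remove.
func (c *cache) handle(id, query string) string {
	d := c.add(id, query)
	defer c.remove(id) // runs on success, failure and panic alike
	<-d.done           // block until parsing is finished
	if d.balanced {
		return "ok"
	}
	return "unbalanced parentheses"
}

func main() {
	c := newCache()
	fmt.Println(c.handle("req-1", "rate(foo[5m])"))
	fmt.Println(c.size()) // no entry outlives its request
}
```

Each entry lives only between `add` and the deferred `remove`, so the map never holds more items than there are requests currently being handled.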

TL;DR of the above:

- No JSONRPC is involved.

- The REST API exposes a stateless interface, that reuses some types from the language server protocol.

- The handler carries some state.

- Each client state lives only as long as the corresponding HTTP request.

- There exists a hash map containing all current client states. If this is considered a problem, it is possible to stop using that hash map.

@slrtbtfs Cool, thank you for the detailed explanation! That sounds reasonable to me.

Hello guys :)

Just wanted to tell you that I started the promQL mode for CodeMirror here

I'm quite satisfied with the syntax highlighting (I put a screenshot in the readme so you can judge for yourself).

I'm struggling a bit with the autocompletion. But I guess it's just a matter of time.

Otherwise, I'm wondering if I should put this library somewhere in a neutral zone right away (like prometheus-community). Any thoughts @juliusv?

And regarding the "lsp" integration, I'm thinking it would be nice if the CodeMirror mode had an "offline" mode. That way people who cannot access a Prometheus server (for example because of cross-origin issues) can still have a PromQL editor.

Do you think that would be interesting?

@Nexucis

> Just wanted to tell you that I started the promQL mode for CodeMirror here

Nice!

> Otherwise, I'm wondering if I should put this library somewhere in a neutral zone right away (like prometheus-community).

I would like that. Will raise it with the others.

> And regarding the "lsp" integration, I'm thinking it would be nice if the CodeMirror mode had an "offline" mode.

Yeah, definitely LSP-based autocompletion needs to be optional for cases where you don't use it directly from a Prometheus server.

> Yeah, definitely LSP-based autocompletion needs to be optional for cases where you don't use it directly from a Prometheus server.

The PromQL language server and the PromQL language server REST API both can work without a Prometheus server to get data from. Syntax error checks, function name completion and function documentation still work in that case.

The main issue here is that some host to run the language server would be required.

Alternatively, it should also be possible to run the language server in a browser using WebAssembly. However, that would require downloading the langserver wasm binary, which is about 4 MB gzipped.

> The PromQL language server and the PromQL language server REST API both can work without a Prometheus server to get data from. Syntax error checks, function name completion and function documentation still work in that case.

Good to know! I think in the normal Prometheus server we can always require the full backend support, as the Prometheus UI needs to be able to reach its API anyway. But editors might then use just the language server without a Prometheus server. Not sure if there's a good-enough use case for even pulling the language server itself into the UI using wasm (especially at 4MB size).

It can be "ok" if you still have internet access, I guess, which is not always the case.

But let's see how far we can go in the offline mode ^^

It might be that TinyGo will be able to compile the language server at some point in the future, which might significantly reduce binary sizes.

Hello Guys,

I hope you are all ok during this period.

I have some news regarding the light library I started a few weeks ago :)

So just after saying I had finished the syntax highlighting, I thought it would be nice for the "offline" mode to highlight when the syntax is not valid.

After a couple of rounds of intense coding and failure, I finally decided to do it the proper way, which is to have a grammar.

So now I have a light grammar of PromQL and I'm able to highlight some errors. Not all of them are highlighted, but it's a start (and the error shown is super ugly). I also have the autocompletion ready :)

Here are some kinds of errors that are not covered:

100 > 100 --> doesn't return an error. I did it on purpose, and I'm not sure it will be easy to improve.

100>my_tric --> it's not parsed. The parser waits for a space before analyzing it.

100 > group_left 15 --> doesn't return an error. Same problem as 100 > 100: the grammar is too permissive.

1 - 100 ) --> doesn't return an error. A bit weird, no idea why.
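For illustration, two of the cases above (no whitespace around an operator, and a stray closing parenthesis) can be caught with a small hand-written tokenizer. This is a minimal TypeScript sketch, not how codemirror-mode-promql works: the `Token` shape and the `tokenize` and `unbalancedParenOffset` names are hypothetical, and only numbers, identifiers, a few operators and parentheses are recognized.

```typescript
// Hypothetical token shape, for illustration only.
type Token = { type: "number" | "ident" | "op" | "paren"; text: string; pos: number };

// Split the input into tokens. Operators end a token on their own,
// so "100>my_tric" does not need spaces to be recognized.
function tokenize(input: string): Token[] {
  const re = /^\s*(?:(\d+(?:\.\d+)?)|([A-Za-z_:][A-Za-z0-9_:]*)|(==|!=|<=|>=|[-+*\/%^<>])|([()]))/;
  const end = input.replace(/\s+$/, "").length; // ignore trailing whitespace
  const tokens: Token[] = [];
  let pos = 0;
  while (pos < end) {
    const m = re.exec(input.slice(pos));
    if (m === null) {
      throw new Error("unexpected character at offset " + pos);
    }
    const text = m[1] || m[2] || m[3] || m[4];
    const type =
      m[1] !== undefined ? "number" :
      m[2] !== undefined ? "ident" :
      m[3] !== undefined ? "op" : "paren";
    // m[0] may start with whitespace; the token itself sits at its end.
    tokens.push({ type, text, pos: pos + m[0].length - text.length });
    pos += m[0].length;
  }
  return tokens;
}

// Return the offset of the first unmatched parenthesis, or null if balanced.
function unbalancedParenOffset(tokens: Token[]): number | null {
  const open: number[] = [];
  for (const t of tokens) {
    if (t.type !== "paren") continue;
    if (t.text === "(") {
      open.push(t.pos);
    } else if (open.length === 0) {
      return t.pos; // stray ")"
    } else {
      open.pop();
    }
  }
  return open.length > 0 ? open[open.length - 1] : null;
}
```

With this sketch, tokenize("100>my_tric") yields the three tokens 100, > and my_tric, and unbalancedParenOffset(tokenize("1 - 100 )")) points at the closing parenthesis.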

You can try it by yourself using this playground: https://nexucis.github.io/codemirror-mode-promql/

I hope you will like this new way of handling the syntax highlighting, the autocompletion and the beginnings of error highlighting.

cheers!

PS: @juliusv any news regarding if I should put it in a neutral zone ?

@Nexucis Thanks, doing well, hope you're ok too!

Oh wow, interesting! Are the current limitations of your client-side error display ones that run into fundamental problems with the CodeMirror grammar support, or is it just not "done" yet? Would be really cool to get 100% reliable client-side syntax error markings of course! Btw. not sure if you were aware of it, but we changed PromQL back to a generated parser (from a custom-built one), so now this file basically has the full grammar definition for the language: https://github.com/prometheus/prometheus/blob/master/promql/parser/generated_parser.y

PS: @juliusv any news regarding if I should put it in a neutral zone ?

I just filed https://github.com/prometheus-community/community/issues/19 and https://github.com/prometheus-community/community/issues/20. I had previously asked on Chat, but I think it got ignored, so thanks for reminding me.

@juliusv I'm ok too :) thanks !

I saw the yacc file and was actually searching for a lib that would generate the TypeScript/JavaScript code from the yacc file. But I didn't find one :(.

Regarding the current limitations, I'm not sure.

My fundamental issue so far is being able to tell the parser:

100 * 100 is a scalar

100 * my_metric is a vector

Because the last time I tried it, the second rule didn't work: it told me it expected a scalar.

So for the moment I have merged the two rules into a single one, which is the main cause of the current limitations.

Maybe if I take a deep dive into the yacc file I will find out how to solve it. I guess at the beginning of the PromQL grammar you maybe had a similar issue.

But I agree with you, it would be super cool to cover 100% of all possible syntax.

Finally, regarding the ugly error CodeMirror currently returns when you write a wrong expression, I think it is just that I don't handle all the functionality provided by the lib codeMirror-grammar.

I just filed prometheus-community/community#19 and prometheus-community/community#20. I had previously asked on Chat, but I think it got ignored, so thanks for reminding me.

Oh cool, thanks a lot !

@Nexucis So in Prometheus the grammar itself initially allows any expression type (vector/matrix/string/scalar) around binary operators (https://github.com/prometheus/prometheus/blob/44ad28dd5e7401bbcbf482a9a9be62c210229a00/promql/parser/generated_parser.y#L228-L244), but then only checks the types of the two operands in a separate step after parsing has succeeded: https://github.com/prometheus/prometheus/blob/fac7a4a0504404fa5d4c5abb8fcc9750bd5cbda7/promql/parser/parse.go#L510-L515

There are a bunch of post-parsing checks like that which would be infeasible to do entirely in the lexer + parser, so unless we port the entire parser + checker to JS manually, it seems infeasible to support all error cases client-side with just a grammar file. But maybe that's fine for a beginning.
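The two-phase approach described above (a permissive grammar, then a separate type check on the resulting tree) can be sketched roughly as follows. This is only an illustration in TypeScript, not a port of the Prometheus checker: the `Expr` node shapes and the `typeOf` and `checkTypes` names are made up for this sketch, and only two checks are shown (operands must be scalars or instant vectors, and comparisons between two scalars need the bool modifier).

```typescript
// Hypothetical value types and AST node shapes, for illustration only.
type ValueType = "scalar" | "vector" | "matrix" | "string";

type Expr =
  | { kind: "number"; value: number }
  | { kind: "string"; value: string }
  | { kind: "selector"; name: string; range?: string }
  | { kind: "binary"; op: string; bool?: boolean; lhs: Expr; rhs: Expr };

const COMPARISON_OPS = ["==", "!=", ">", "<", ">=", "<="];

// Derive the value type of an already-parsed expression.
function typeOf(e: Expr): ValueType {
  switch (e.kind) {
    case "number":
      return "scalar";
    case "string":
      return "string";
    case "selector":
      // A selector with a range (e.g. my_metric[5m]) is a range vector.
      return e.range !== undefined ? "matrix" : "vector";
    case "binary":
      // scalar op scalar stays a scalar; anything else yields a vector.
      return typeOf(e.lhs) === "scalar" && typeOf(e.rhs) === "scalar" ? "scalar" : "vector";
  }
}

// Second phase: walk the tree after parsing succeeded and collect type errors.
function checkTypes(e: Expr, errors: string[] = []): string[] {
  if (e.kind !== "binary") return errors;
  checkTypes(e.lhs, errors);
  checkTypes(e.rhs, errors);
  const lt = typeOf(e.lhs);
  const rt = typeOf(e.rhs);
  if ((lt !== "scalar" && lt !== "vector") || (rt !== "scalar" && rt !== "vector")) {
    errors.push("binary expression must contain only scalar and instant vector types");
  }
  if (lt === "scalar" && rt === "scalar" && COMPARISON_OPS.indexOf(e.op) !== -1 && !e.bool) {
    errors.push("comparisons between scalars must use the bool modifier");
  }
  return errors;
}
```

With a check like this, 100 * 100 and 100 * my_metric both pass (as a scalar and a vector respectively), while 100 > 100 is reported even though the grammar itself accepts it.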

Ah ok! Thanks for telling me. I was wondering if I had forgotten everything I knew about writing a grammar, because it was so irritating to fail on that :D.

So yes, in that case it won't be feasible to check this kind of error with the current implementation. I only wrote the grammar and I don't have control over the lexer + parser, so I cannot intercept the result and analyze it :(.

So I guess for the "offline" mode it should be enough for the moment. It's already an improvement over the monaco editor, so on my side I'm already quite happy with it :)!

It would actually be super nice to be able to generate the parser and lexer from the yacc file. But that would be an entire (though super interesting) project by itself.

Hello,

I have finished building a better development environment, and I will pause the offline mode for a bit to focus more on the "online mode".

Looking at PR #6872, it seems the functionality needed is not ready yet. Is it something that will be unlocked soon, @slrtbtfs? Do you need some help with it?

@juliusv do you think it makes sense to use the promql-langserver directly if the functionality is not yet available in Prometheus?

Looking at PR #6872, it seems the functionality needed is not ready yet. Is it something that will be unlocked soon, @slrtbtfs? Do you need some help with it?

The main blocker currently is https://github.com/prometheus-community/promql-langserver/issues/134 .

I currently don't have the capacity to work on implementing this myself but if someone is willing to work on this, I'll happily help with any questions that arise and review PRs. So help on this would be appreciated.

do you think it makes sense to use the promql-langserver directly if the functionality is not yet available in Prometheus?

In what context? For using it in VS Code there already exists a VS Code extension. Unfortunately publishing it to the VS Code Marketplace is blocked on https://github.com/redhat-developer/vscode-promql/issues/29 .

I currently don't have the capacity to work on implementing this myself but if someone is willing to work on this, I'll happily help with any questions that arise and review PRs. So help on this would be appreciated.

Ok I will take a look at it then :).

In what context? For using it in VS Code there already exists a VS Code extension. Unfortunately publishing it to the VS Code Marketplace is blocked on redhat-developer/vscode-promql#29

For using it in codeMirror

For using it in codeMirror

If you're ok with having a language server binary running on some host you can use https://godoc.org/github.com/prometheus-community/promql-langserver/rest . (Using the master branch).

If you're ok with downloading about 5 MB of compressed wasm code, you should be able to run the language server inside the browser. However, this requires a small patch in a dependency.

Ok I will take a look at it then :).

Great!

Thanks for your answer @slrtbtfs. I think it will depend mainly on how much time it will take to finish PR #6872, and on whether I'm able to handle the issue you pointed out :).
