Pipeline: Proposal: graceful sidecar support

Expected Behavior

Pods injected with sidecars run to completion.

Actual Behavior

They typically fail because the sidecar is not ready and the steps depend on it. If the steps succeed, the pod does not exit because the sidecar is not killed.

Proposal

Use Cases:

- Environments that require injected sidecars for pods to interface with the network correctly. Concrete example: we use clusters that have do not have internet access and direct all internet bound access through one cluster that does have it via istio (i.e. using envoy proxies)

- CI testing across multiple steps that requires a shared resource, e.g. a local test database.

Requirements:

The solution must handle both automatically injected sidecars and user-specified ones (via some yet to be determined configuration). To be clear, this proposal does not cover how a user might specify sidecars for Tekton to startup, instead it only offers a solution for how to manage sidecars, both injected and manually specified.

Implementation Details:

See https://github.com/tektoncd/pipeline/pull/728 for a POC. We are currently running this successfully with injected istio sidecars. Note that the PR is very wip and a true POC - it's not very clean, has no tests and is likely not production ready. With that said, feedback on the implementation would be appreciated.

At a high level we need to solve two problems with sidecars:

- Wait until the sidecars are ready before running steps.

- Kill the sidecars once all steps have terminated (due to success or failure)

For 1/ we can leverage the entrypointer by modifying it to (optionally) wait until the wait_file not only exists but has a non-zero size. Then we can set the first step in the TaskRun's wait_file to a downward projected volume from an annotation. The controller can then set this annotation to a non-empty value once it observes that all containers in the pod are ready.

For 2/ we have three options:

- Use a shared process namespace and a final container that kills all other containers that are still alive.

- Remote exec into the remaining containers and

kill 1. - Update the pod's spec changing the image for all sidecar containers to the nop image. This will cause the kubelet to trigger a restart of the container, which will immediately exit either because the nop image successfully runs or fails because it tries to run a command that the nop image does not have. Either way, we simply ignore the final result of sidecars for TaskRun status purposes.

Downsides for each approach:

- Introduces security implications of using a shared process namespace across containers. It can also break containers that expect to be run as the first process.

- Does not execute container lifecycle hooks (i.e. pre-stop) and effectively requires re-implementing the kubelet's graceful kill logic ourselves. It also requires giving fairly powerful permissions to the controller, that is, in the very least, the ability to exec into any container in a namespace where pipelines run.

- Is a bit of a hack, but is the only way to update a pod spec to force a container to be killed by the kubelet using the same logic it would use when deleting a pod. Otherwise, the downsides are that it could be confusing to the user to see sidecars always restarted once and subsequently failing.

Personally, I'm partial to 3/ and the POC implements it that way since it's actually rather simple to add, though 2/ seems reasonable as well.

Alternatives:

In order to solve the first problem we need a way to suspend execution of the TaskRun until the sidecars are ready. There are effectively two ways to do this, assuming we've suspended the execution on startup,

- Externally signal to the suspension mechanism (e.g. the entrypointer) that it's time to proceed (proposed above)

- Check if we're ready to run from within the suspension mechanism.

The downside of 2/ is that afaik there's no way to achieve this without elevated permissions of some sort, whether that's RBAC authorization to fetch the pod status that we're running as or access to the docker daemon to check the status of other containers, etc. etc.

Three options were given above for solving the second problem, so they will not be repeated here.

All 17 comments

Wait until the sidecars are ready before running steps.

Kill the sidecars once all steps have terminated (due to success or failure)

Oh this is awesome - I have some sidecar related plans I need to propose soon and I'll need this as well :pray::pray::pray: (plus did NOT anticipate handling this haha)

For 1/ we can leverage the entrypointer by modifying it to (optionally) wait until the wait_file not only exists but has a non-zero size. Then we can set the first step in the TaskRun's wait_file to a downward projected volume from an annotation. The controller can then set this annotation to a non-empty value once it observes that all containers in the pod are ready.

Brainstorming other ideas:

- Make the entrypointer binary responsible

- Make an (init ?) container repsonsible for this <-- could be cool?

For 2/ we have three options:

More brainstorming:

- Could we impose an interface on the sidecar containers, e.g. that they need to stop themselves in such-and-such circumstances, or do we need to be able to support off the shelf (istio?) arbitrary sidecars?

- Have the TaskRun controller decide when execution is done and stop the entire pod

Personally, I'm partial to 3/ and the POC implements it that way since it's actually rather simple to add, though 2/ seems reasonable as well.

My preference of these options is 2/ over 3/ simply because 2/ leaves the pod spec in the state that reflects what it was actually doing, but happy to hear a 3rd opinion here

The downside of 2/ is that afaik there's no way to achieve this without elevated permissions of some sort, whether that's RBAC authorization to fetch the pod status that we're running as or access to the docker daemon to check the status of other containers, etc. etc.

Would we be able to use the downward api for this?

If _we_ could, I'm tempted to suggest:

- Handle issue 1/ with an init container that uses the downward api to determine if the sidecars are ready

- Handle issue 2/ by removing the pod via controller when execution has finished (i.e. all steps have completed)

What do you think @dicarlo2 ?

p.s. https://github.com/kubernetes/kubernetes/issues/65502 looks relevant though during a quick scan I didn't see anything that would help us :thinking:

Handle issue 1/ with an init container that uses the downward api to determine if the sidecars are ready

Just realized this won't work b/c the sidecars won't start until the init containers are done :woman_facepalming:

Maybe the same thing with a container? I guess the question is whether it's simpler to have the controller take care of this (via writing to a volume as you are suggesting @dicarlo2 ) or have a container in the pod take care of it. I'm torn but leaning toward container in the pod :thinking:

Make the entrypointer binary responsible

Yup - I think this makes the most sense for waiting for sidecars to be ready and is the approach implemented in #728.

Could we impose an interface on the sidecar containers, e.g. that they need to stop themselves in such-and-such circumstances, or do we need to be able to support off the shelf (istio?) arbitrary sidecars?

Ideally we'd support off the shelf arbitrary sidecars, it's probably the most user-friendly and likely easiest to configure. E.g. for istio, we could certainly wrap the istio proxy with logic to make it conform to a sidecar interface as well as only use that specially wrapped image in the namespace that we're running tekton pipelines in, but that kind of customization would definitely fall under the advanced users only category (at least for istio).

Have the TaskRun controller decide when execution is done and stop the entire pod

Is there a way to stop the pod without deleting it?

My preference of these options is 2/ over 3/ simply because 2/ leaves the pod spec in the state that reflects what it was actually doing, but happy to hear a 3rd opinion here

Yea, this is probably the right way forward. Originally I was thinking that giving the tekton controller the ability to exec arbitrarily into pods would be a security issue, but thinking about it a bit more the controller can already create arbitrary pods, so I don't think giving it the ability to exec in and kill containers changes the security profile.

To summarize:

- We should definitely use the downward api with an annotation to signal to the entrypointer binary to start the first step in the pipeline once the tekton controller has determined that all sidecars are ready. (See #728 for implementation of this)

- We should let the tekton controller either

a. delete the pod once allTaskRunsteps are complete

b. exec into the remaining containers and kill them

2a. is easier to implement and likely cleaner since we don't have to worry about killing all processes, and any other issues I'm not thinking of when it comes to clearing out a running container. With that said, we'd have to wait until tekton provides a log archival solution since the pod will be deleted and the logs will not be (easily) accessible.

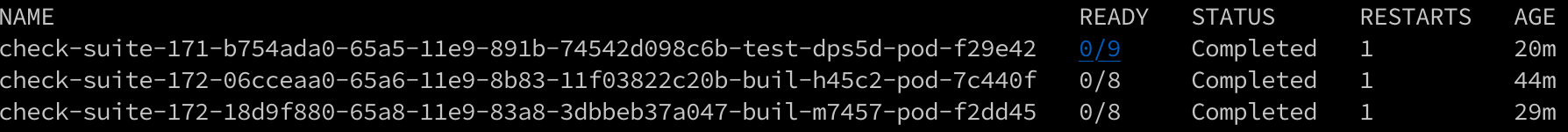

FWIW, we've been running with #728 for a few weeks now and it works pretty flawlessly, for our use-case anyways (istio sidecars). The main downside of course is that the sidecars/pod status is a bit strange since it will always have 1 restart, but we never manually inspect the resulting pods so it's a bit of a non-issue for us. See below for an example (all of these pods successfully exited, i.e. the TaskRun's final status is success):

Also, just fyi, I'll be relatively available to tackle implementing whatever solution we land on for the next week or two. (I.e. I won't disappear again for 7-10 days 😅)

Make the entrypointer binary responsible

Yup - I think this makes the most sense for waiting for sidecars to be ready and is the approach implemented in #728.

My bad, I didn't provide enough detail about what I was thinking here: I was thinking instead of having the controller responsible for knowing when the sidecars are ready, have the entrypoint binary do it - but looking at your implementation in #728, I think the direction you've gone is better.

I was thinking originally that having the controller be responsible for paying attention to the sidecars was complex, but looking at #728 it actually seems pretty slick :D

Ideally we'd support off the shelf arbitrary sidecars, it's probably the most user-friendly and likely easiest to configure.

kk sounds good!

Is there a way to stop the pod without deleting it?

Ah good point :(

With that said, we'd have to wait until tekton provides a log archival solution since the pod will be deleted and the logs will not be (easily) accessible.

I'm gonna get on this asap - and probably we'll implement this with a sidecar, so I guess we have a bit of a chicken and egg situation XD

To summarize:

- We should definitely use the downward api with an annotation to signal to the entrypointer binary to start the first step in the pipeline once the tekton controller has determined that all sidecars are ready. (See #728 for implementation of this)

- We should let the tekton controller either

a. delete the pod once all TaskRun steps are complete

b. exec into the remaining containers and kill them

I totally agree! I think a lot of this back and forth was me wrapping my head around your solution, so apologies for the delay, it seems really solid :D

As for whether 2a or 2b are better, if we feel like we can figure out how to generically kill the remaining containers I think I lean toward 2b after all, but probably they are equally good and we should just pick one and go with it :D

Also, just fyi, I'll be relatively available to tackle implementing whatever solution we land on for the next week or two.

Awesome!!

(I.e. I won't disappear again for 7-10 days :sweat_smile:)

haha no worries at all XD Super excited to have you contributing in general :sunglasses:

Oh yeah one more question: @dicarlo2 as part of this work are you also interested in extending the Task spec to include support for defining sidecars? No worries if not, once we have this we can add that in a later iteration :)

I totally agree! I think a lot of this back and forth was me wrapping my head around your solution, so apologies for the delay, it seems really solid :D

No worries! I've always found it a bit more difficult to work through proposals entirely remote, through text only no less, so I totally understand.

As for whether 2a or 2b are better, if we feel like we can figure out how to generically kill the remaining containers I think I lean toward 2b after all, but probably they are equally good and we should just pick one and go with it :D

Cool, sounds good, I'll take a look at what it will take to implement 2b.

I won't disappear again for 7-10 days

He says then disappears again for 10 days 😅

as part of this work are you also interested in extending the Task spec to include support for defining sidecars?

Definitely interested, we'll just have to see how much time I can devote to it. As a first iteration I'll implement without though.

He says then disappears again for 10 days 😅

lol happens to the best of us XD

Cool, sounds good, I'll take a look at what it will take to implement 2b.

So I started implementing 2b and realized we actually can't use this approach because there's no guarantee the container has a binary capable of killing other processes available. I.e. it's not guaranteed that the container will have kill or equivalent. So we either delete the pod entirely or use the third solution I mentioned in the original proposal.

Thanks for the update about implementation 2b. I'm going to start picking up work on sidecar support once I'm done with TaskRuns in resource constrained environments and this is v useful info.

So I started implementing 2b and realized we actually can't use this approach because there's no guarantee the container has a binary capable of killing other processes available. I.e. it's not guaranteed that the container will have kill or equivalent.

Ah makes sense, nice find @dicarlo2 !!

So we either delete the pod entirely or use the third solution I mentioned in the original proposal.

Both options seem to have some downsides and personally I don't feel like either is immediately more appealing, so I think we should just go with one and see what happens :D

Both options seem to have some downsides and personally I don't feel like either is immediately more appealing, so I think we should just go with one and see what happens :D

I take it back - after seeing demos of the cli tool @vdemeester + co are working on, and the dashboard that @skaegi + co are working on, I think deleting the pods (and the logs with them) is _not_ the best option (even once we do have log persistence).

So let's use the third option and overwrite the spec with the nop image?

So let's use the third option and overwrite the spec with the nop image?

SGTM, in that case the POC I have is pretty much the full implementation - it just needs to be cleaned up, tested and probably properly abstracted (I just kinda stuck the logic in as quickly and hackily as possible)

Sounds perfect @dicarlo2 !! :D

Hey, have you looked at the sidecars containers KEP for Kubernetes? I would be interested to see if you think it would solve this issue for you, tracking issue: https://github.com/kubernetes/enhancements/issues/753 (design doc).

Now that we've agreed on the implementation details of the proposal (and it's already most of the way done in the PR! :tada: thanks @dicarlo2 ) I'm going to close this issue and carry on with the remaining TODOs in https://github.com/tektoncd/pipeline/issues/926

@Joseph-Irving I've read over the sidecar KEP. In principal it does seem applicable to this use case. Given its current status I'm not sure it buys us much right now but thanks for keeping us posted on it. Feel free to ping me on tekton's slack (user Scott) if you want to discuss further.