PIConGPU: problems with running topic-collision

topic-collision: https://github.com/ComputationalRadiationPhysics/picongpu/pull/3416 (@pordyna)

Simulations with collisions (adjusted in collision.param) either run only up to ~20% for low laser energies or up to 100% without output for high laser energies. For the low energies I get no error, just that the time ran out (I set the walltime to 20 hrs). The same simulations without collisions (turned off in collision.param) run without a problem.

I tried making the box smaller (to a half), reducing ppc (to a half, 32) and using more GPUs (8), but it gets just a tiny bit better (runs up to ~30%) for some, and for others there is no change. The high energies run without output for every adjustment.

here are the input setups (cases in cmakeFlags):

a5_tp4_n31_input.zip

a5_tp40_n31_input.zip

(the densities shown in cmakeFlags are incorrect; the real values are a sixth of that because I messed up the density ratio)

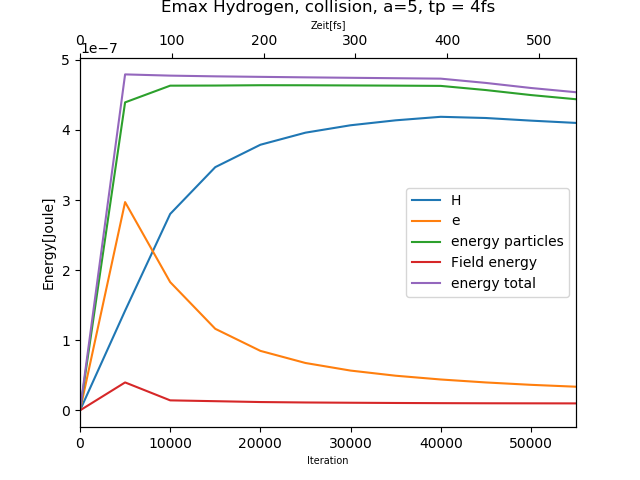

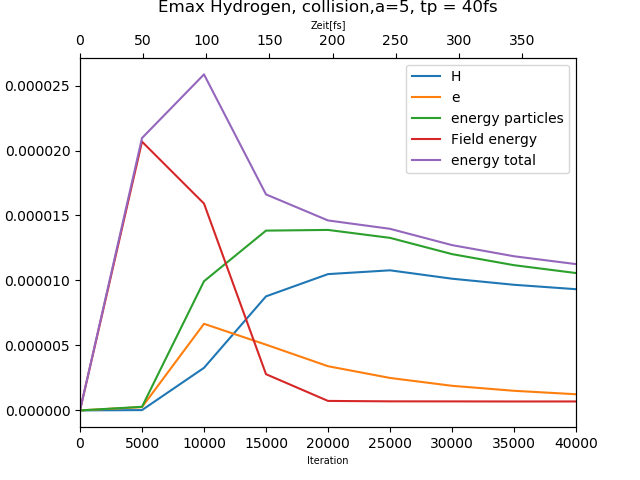

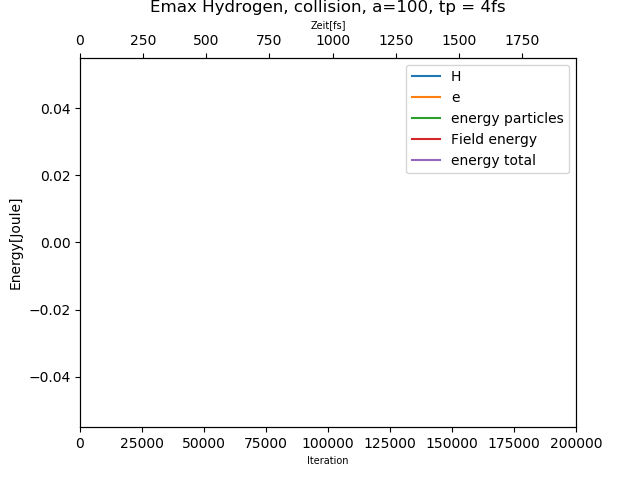

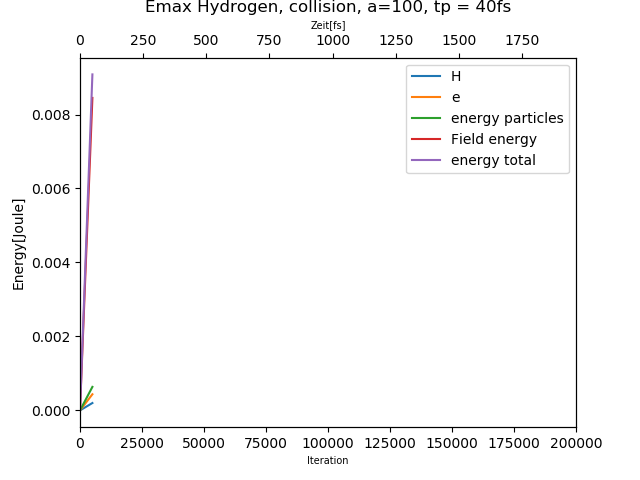

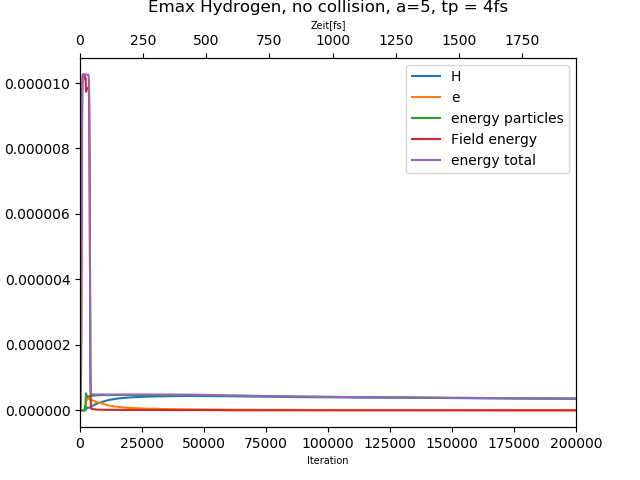

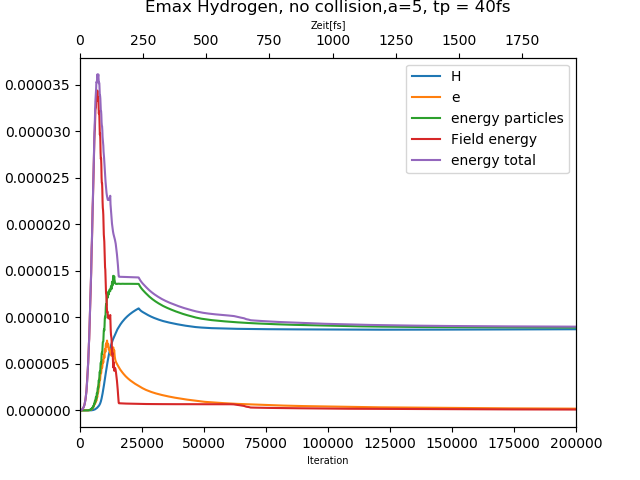

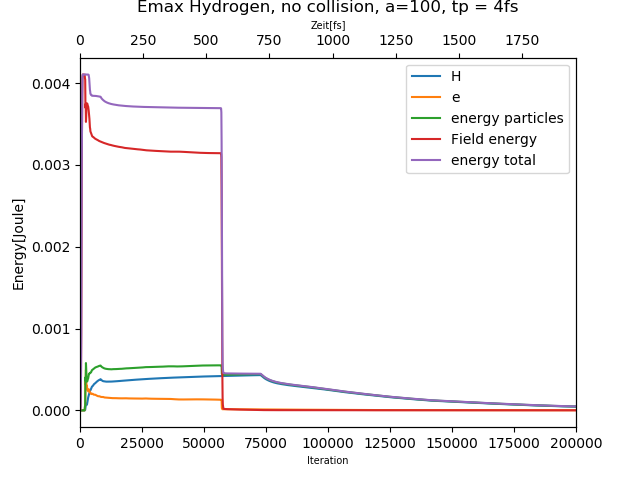

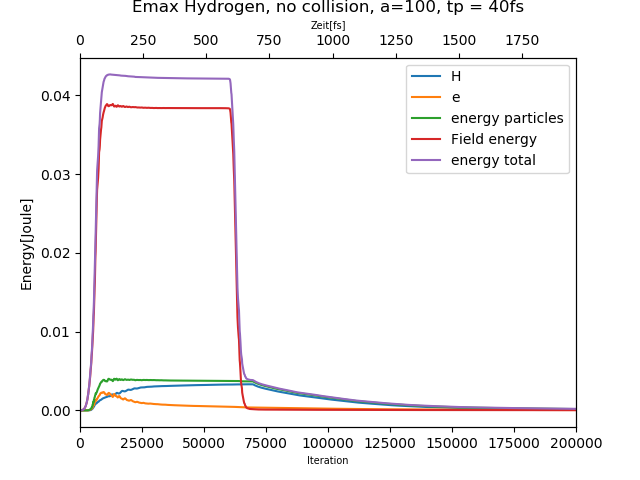

here are the energy diagrams:

collisions turned on:

100% = 200000 iterations

collisions turned off:

All 12 comments

@paschk31 at 200k iterations and a walltime of 20 h, your simulation has to be faster than 360 ms per iteration. That is already quite fast without collisions but with IO included (of course it highly depends on your setup).

Most likely you run into the walltime because, with collisions included, one iteration requires more than a second. Could you confirm this via the stdout output?

To overcome the issue, please activate checkpoints and restart from the checkpoint after you reached the walltime.
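The walltime argument above is plain arithmetic and can be sketched as follows. The function names and the 1.0 s per-iteration figure are hypothetical placeholders, and the restart count ignores the time needed to write and load checkpoints.

```python
import math

def max_step_time(walltime_s: float, total_steps: int) -> float:
    """Largest average time per iteration that still fits into one walltime."""
    return walltime_s / total_steps

def runs_needed(step_time_s: float, total_steps: int, walltime_s: float) -> int:
    """Number of walltime-limited runs when restarting from checkpoints.

    Checkpoint write/load overhead is not accounted for.
    """
    return math.ceil(step_time_s * total_steps / walltime_s)

WALLTIME_S = 20 * 3600   # 20 h walltime from the issue
TOTAL_STEPS = 200_000    # 100% = 200000 iterations

budget = max_step_time(WALLTIME_S, TOTAL_STEPS)
print(f"budget per iteration: {budget * 1e3:.0f} ms")

# Hypothetical measured value: 1.0 s per iteration with collisions enabled.
print("runs needed at 1.0 s/iteration:",
      runs_needed(1.0, TOTAL_STEPS, WALLTIME_S))
```

Replacing the placeholder step time with the value reported in stdout gives a rough estimate of how many restarts a full run would take.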

Hello @paschk31 ,

The data missing on plots can be caused by two reasons:

- data is indeed not part of the output

- data is not valid, e.g. NaN or maybe not in valid range depending on how you plot it

I would imagine the data being invalid (e.g. due to a bug in collisions or somewhere else) is actually more likely than some data being in the output and other data not. That is easy to check by manually looking at the respective output files. Could you please check it? Or share the files in case you are unsure how to do so.

What @PrometheusPi wrote is also a very valid concern, but I think it's not what happened yet.
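A minimal sketch of the manual check suggested above, assuming the output is a plain-text table with whitespace-separated numeric columns and `#` comment lines. That layout is an assumption, not a statement about the actual plugin format, and `count_non_finite` is a hypothetical helper.

```python
import math

def count_non_finite(lines):
    """Return (rows_checked, bad_rows).

    bad_rows lists the 1-based line numbers that contain NaN, inf,
    or a field that does not parse as a number.
    """
    rows_checked = 0
    bad_rows = []
    for line_no, line in enumerate(lines, start=1):
        text = line.strip()
        if not text or text.startswith("#"):
            continue  # skip blank lines and comments
        rows_checked += 1
        for field in text.split():
            try:
                value = float(field)  # float() also parses "nan" and "inf"
            except ValueError:
                bad_rows.append(line_no)
                break
            if not math.isfinite(value):
                bad_rows.append(line_no)
                break
    return rows_checked, bad_rows

# Made-up sample in the assumed layout; not taken from the attached files.
sample = [
    "# step  total_energy",
    "0  1.25e-3",
    "100  1.31e-3",
    "200  nan",
    "300  nan",
]
print(count_non_finite(sample))
```

For a real file, pass an open file handle instead of the sample list. The first entry in `bad_rows` then points at the earliest output step with invalid data.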

@PrometheusPi okay, I will try checkpoints (after sharing the output), thanks!

The iterations take quite a normal time at the beginning: 0% to 10% takes about 10 minutes, but after that the same range takes up to 7 hrs. After the bigdata maintenance I will add a stdout file to the issue.

Nevertheless, checkpoints are not possible for the high energy range (where the simulations run up to 100%, so it is not clear where to checkpoint).

@sbastrakov yes, I'm not sure how to check the validity of the output. I will add these files as well as soon as bigdata allows it. Thanks!
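Taking the timings reported above at face value (10 minutes for the first 10%, up to 7 hours for a later 10% span, with 100% = 200000 iterations), the per-iteration time can be estimated as below. This is only a rough sketch; the stdout file remains the authoritative source.

```python
def step_time(elapsed_s: float, steps: int) -> float:
    """Average wall-clock time per iteration over a span of steps."""
    return elapsed_s / steps

TOTAL_STEPS = 200_000
steps_per_10_percent = TOTAL_STEPS // 10

early = step_time(10 * 60, steps_per_10_percent)   # first 10%: about 10 min
late = step_time(7 * 3600, steps_per_10_percent)   # later 10%: up to 7 h

print(f"early: {early * 1e3:.0f} ms/step")
print(f"late:  {late:.2f} s/step")
print(f"slowdown: {late / early:.0f}x")
```

The late value is above one second per iteration, which is consistent with the walltime explanation, while the early value is well inside the 360 ms budget.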

@paschk31 To quickly check the actual run time/number of iterations, could you please post a stdout file of one of your simulations with collisions. If NaNs are the issue, the file should still show more iterations completed than plotted.

As @sbastrakov said, we should first check if there are NaNs in the simulation.

After that we need to check whether the collision implementation is maybe not freeing all dynamically allocated memory.

Once bigdata is back, we need the stderr file in addition to stdout.

here are the requested outputs:

stdout:

5_tp4_n31_stdout.txt

5_tp40_n31_stdout.txt

100_tp4_n31_stdout.txt

100_tp40_n95_stdout.txt

stderr:

5_tp4_n31_stderr.txt

5_tp40_n31_stderr.txt

100_tp4_n31_stderr.txt

100_tp40_n95_stderr.txt

simOutput/e_energy_all:

5_tp4_n31_e_energy_all.txt

5_tp40_n31_e_energy_all.txt

100_tp4_n31_e_energy_all.txt

100_tp40_n95_e_energy_all.txt

@sbastrakov as you predicted, there are NaNs in the high energy simulations

Yes, so it means the issue is not in the output, but that the simulation somehow produces wrong results.

NaNs result from mathematical operations that have neither a defined meaning nor a limit in the mathematical sense, like infinity / infinity, 0 / 0, log(negative number). At the same time, operations like finite_number / 0 result in infinities, not NaNs. And all operations where one operand is NaN also result in NaN, so they spread. E.g. if due to some mistake a particle momentum becomes NaN at some point, it would then produce NaN currents at the neighboring grid cells, the field solver would propagate these NaNs around, and those would produce NaN Lorentz forces and hence NaN momenta of other particles. So it spreads very quickly over all data sets, and to investigate you probably need to find the place where it first occurs. I guess the collision code itself is the prime suspect, since without it your simulation ran fine.
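The spreading described above can be illustrated with a toy example. The 3-point averaging stencil below is a made-up stand-in for a grid update, not the PIConGPU field solver, and `smooth_step` and `nan_count_history` are hypothetical names.

```python
import math

inf = float("inf")
nan = float("nan")

# Undefined operations yield NaN ...
print(inf - inf, inf / inf, 0.0 * inf)
# ... and any arithmetic with a NaN operand yields NaN again.
print(nan + 1.0, nan * 0.0, math.sqrt(nan))
# Note: plain Python raises ZeroDivisionError for 1.0 / 0.0 and ValueError
# for math.log(-1.0) instead of returning the IEEE 754 inf / NaN results.

def smooth_step(values):
    """One step of a 3-point average with periodic boundaries (toy stencil)."""
    n = len(values)
    return [(values[i - 1] + values[i] + values[(i + 1) % n]) / 3.0
            for i in range(n)]

def nan_count_history(n_cells, steps):
    """Number of NaN cells after each step, starting from one bad cell."""
    field = [1.0] * n_cells
    field[n_cells // 2] = nan  # a single corrupted cell
    counts = [1]
    for _ in range(steps):
        field = smooth_step(field)
        counts.append(sum(math.isnan(v) for v in field))
    return counts

print(nan_count_history(11, 5))
```

In this toy grid the corrupted region grows by one cell in each direction per step, so after a handful of steps every cell is NaN. This is why the first occurrence is the useful thing to look for.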

okay will look for the place it first occurs! thanks!

I reviewed the collision PR again and found a few potential sources of NaNs: https://github.com/ComputationalRadiationPhysics/picongpu/pull/3416#pullrequestreview-551791987

@paschk31 Can you check which commit you used for your simulation? Let me know if you need help with it :smile:

@psychocoderHPC @sbastrakov So NaNs are one thing and I'm looking into this right now but do you have any idea why a simulation would freeze like the low energy ones here?

@sbastrakov @psychocoderHPC @franzpoeschel A little update on this. It appears that the source of the NaNs was precision problems for highly relativistic particles. My current solution is to perform binary collisions in double precision, also in a 32-bit simulation. What I want to do is check each particle's velocity and have a customizable threshold for beta, above which double precision is used. The check would be done in single precision simulations only.

The other thing is that this setup still freezes for smaller laser parameters. I will have to look for infinite loops again.
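To illustrate how single precision can break down for highly relativistic particles, here is a small sketch that evaluates gamma = 1 / sqrt(1 - beta^2) with float32-rounded intermediates. It is one plausible mechanism, not necessarily the failure mode found in the PR, and `to_f32`, `gamma_mixed` and the default `beta_threshold` are hypothetical.

```python
import math
import struct

def to_f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]

def gamma_f64(beta: float) -> float:
    """Lorentz factor in double precision."""
    return 1.0 / math.sqrt(1.0 - beta * beta)

def gamma_f32(beta: float) -> float:
    """Same formula with every intermediate rounded to binary32."""
    b = to_f32(beta)
    one_minus_b2 = to_f32(1.0 - to_f32(b * b))
    if one_minus_b2 == 0.0:
        # IEEE 754 result of 1/sqrt(0); plain Python would raise instead.
        return math.inf
    return to_f32(1.0 / to_f32(math.sqrt(one_minus_b2)))

def gamma_mixed(beta: float, beta_threshold: float = 0.99) -> float:
    """Hypothetical selection rule: double precision above the threshold."""
    if abs(beta) >= beta_threshold:
        return gamma_f64(beta)
    return gamma_f32(beta)

beta = 1.0 - 1e-9                  # rounds to exactly 1.0 in binary32
print(gamma_f32(beta))             # infinite in single precision
print(gamma_f64(beta))             # finite in double precision
print(gamma_f32(beta) - gamma_f32(beta))  # inf - inf gives NaN downstream
```

The threshold keeps the cheaper single-precision path for slow particles and switches to double precision only where the rounding of beta becomes dangerous.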