Peertube: Allow uploads to be resumed

Uploading videos takes hours with most connections available today. Waiting for hours only to get nothing because the connection drops for a couple of minutes, the server restarts, or the power fails at 5am is highly frustrating.

Users should be able to resume their uploads. Ideally, it should be possible to resume a partial upload. At the very least, it should be possible to complete the publishing process after an upload is done. For example, if an error happens during the processing stage, users should not have to upload the whole video again.

A checksum of the bytes already uploaded could be compared to ensure the local file has not been modified in the meantime.
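A minimal sketch of that check, assuming the server records how many bytes it has received plus a SHA-256 digest of them, and the client recomputes the digest over the same prefix of its local file before resuming. The function names and the choice of SHA-256 are illustrative assumptions, not an existing PeerTube API; the sketch uses Node's `crypto` module.

```typescript
import { createHash } from "node:crypto";

// Hypothetical helper: hex SHA-256 digest of the first `length` bytes of the file.
function prefixDigest(data: Uint8Array, length: number): string {
  return createHash("sha256").update(data.subarray(0, length)).digest("hex");
}

// Hypothetical pre-resume check: the server reports how many bytes it holds and the
// digest it computed over them; the client recomputes that digest over its local copy.
function canResume(localFile: Uint8Array, serverOffset: number, serverDigest: string): boolean {
  // The local file is now shorter than what was already uploaded: it changed.
  if (serverOffset > localFile.length) return false;
  return prefixDigest(localFile, serverOffset) === serverDigest;
}
```

Holding a multi-gigabyte video in memory is only for brevity here; a real client would hash the prefix incrementally, slice by slice.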

Partial uploads should not be kept on the server indefinitely. I guess keeping them for 10 days would be long enough.
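A minimal sketch of such a retention rule. It assumes each partial upload stores the time its last chunk arrived (`lastActivity`, a hypothetical field) and measures the 10 days from that point rather than from creation, so a slow but active upload is not discarded; both the field and the function name are illustrative.

```typescript
// Retention window suggested above; the constant name is hypothetical.
const PARTIAL_UPLOAD_TTL_MS = 10 * 24 * 60 * 60 * 1000;

interface PartialUpload {
  id: string;
  lastActivity: Date; // time the last chunk was received
}

// Returns the partial uploads that have been idle longer than the retention window,
// i.e. the ones a periodic cleanup job would delete.
function expiredUploads(
  uploads: PartialUpload[],
  now: Date,
  ttlMs: number = PARTIAL_UPLOAD_TTL_MS,
): PartialUpload[] {
  return uploads.filter((u) => now.getTime() - u.lastActivity.getTime() > ttlMs);
}
```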

All 26 comments

How about uploading the video with WebTorrent as well (with the current method as a fallback)? It could even open up some interesting functionalities down the road, like allowing the video to be watched before it's completely transferred, and maybe helping pave the road to livestreaming :)

@MayeulC actually, uploading the video with WebTorrent would mean sending it over a WebRTC data channel to the server and using BitTorrent inside that channel to declare the file (creating a torrent) and then transfer it. WebRTC acts as a mere transport layer for a BitTorrent session whose goal is to share a file. The first problem is that torrents are immutable, which rules out livestreaming (see issue #151). In our case the file is a video, but what prevents a client from declaring a torrent for other file types? The server would have to check that the file really is a video, which means downloading it in its entirety anyway before forwarding the declared torrent. So I'm afraid using WebTorrent for the upload wouldn't bring anything except added complexity.
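To illustrate why the server needs the whole file, here is a minimal sketch of the cheapest check it could run: sniffing the container signature at the start of the file. It relies on two well-known signatures, the ISO BMFF `ftyp` box at byte offset 4 (MP4/MOV) and the EBML magic `1A 45 DF A3` (WebM/Matroska); the function is hypothetical. A matching header only proves the file *starts* like a video, which is exactly why real validation still requires the complete file.

```typescript
// Hypothetical container sniffing based on well-known file signatures.
type Container = "mp4" | "webm" | "unknown";

function sniffContainer(head: Uint8Array): Container {
  // ISO Base Media File Format (MP4, MOV): bytes 4..7 spell "ftyp".
  if (head.length >= 8 && head[4] === 0x66 && head[5] === 0x74 && head[6] === 0x79 && head[7] === 0x70) {
    return "mp4";
  }
  // EBML header shared by WebM and Matroska.
  if (head.length >= 4 && head[0] === 0x1a && head[1] === 0x45 && head[2] === 0xdf && head[3] === 0xa3) {
    return "webm";
  }
  return "unknown";
}
```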

@rigelk I've been thinking about this feature. The underlying .torrent could potentially be uploaded, but doing so would require generating the .torrent file locally. I'm not sure whether this is possible with how things are currently designed.

From my understanding, the only way to allow that, short of someone manually creating the torrent locally, then uploading it (and seeding it), would be to do it with JavaScript in the browser.
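For reference, the core of generating a .torrent locally is hashing the file in fixed-size pieces: a BitTorrent v1 metainfo file stores the concatenated 20-byte SHA-1 digests of every piece under `info.pieces`. A minimal sketch using Node's `crypto` module; in a browser, `crypto.subtle.digest("SHA-1", piece)` can compute the same digests asynchronously. Bencoding the info dictionary and deriving the infohash are left out, and `pieceHashes` is a hypothetical name.

```typescript
import { createHash } from "node:crypto";

// Splits the file into fixed-size pieces and returns the concatenated 20-byte SHA-1
// digests, i.e. the value a BitTorrent v1 metainfo file stores under info.pieces.
function pieceHashes(data: Uint8Array, pieceLength: number): Uint8Array {
  if (!Number.isInteger(pieceLength) || pieceLength <= 0) {
    throw new RangeError("pieceLength must be a positive integer");
  }
  const count = Math.ceil(data.length / pieceLength);
  const out = new Uint8Array(count * 20);
  for (let i = 0; i < count; i++) {
    // The last piece may be shorter than pieceLength.
    const piece = data.subarray(i * pieceLength, (i + 1) * pieceLength);
    out.set(createHash("sha1").update(piece).digest(), i * 20);
  }
  return out;
}
```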

I don't know whether that is a feasible approach either. But if there were an option to use the torrent protocol for uploads, that could allow for the creation of clusters. Without that, I think the issues I've had with watching videos will probably continue, as I suspect that the limited number of peers is a significant factor.

It is also possible that the peers that do exist for a given video with performance issues are behind a "too slow connection."

> Without that, I think the issues I've had with watching videos will probably continue, as I suspect that the limited number of peers is a significant factor.

If you have playback problems on PeerTube, please create an issue so we can investigate.

We should not mix things up in this issue. Correct me if I'm wrong, but two options have been considered so far:

1. keep it simple by improving the current HTTP upload mechanism. Resumable file uploads are nothing out of the ordinary, and there seem to be quite a few libraries out there: https://github.com/23/resumable.js/ , https://tus.io/ , and even integrations like https://uppy.io/

2. go a step further, with a client (in the browser or outside it) creating a torrent and seeding it.

Frankly, I think option 1 is way more realistic, since option 2 complicates things a lot.
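A minimal sketch of what the simpler option looks like on the wire with the tus protocol (version 1.0.0): the client asks the server for the current `Upload-Offset` with a `HEAD` request, then sends the remaining bytes with `PATCH` requests. It assumes the upload URL was already obtained from a tus creation (`POST`) request and that `fetch` is available (browsers, Node 18+); the helper names are hypothetical, and in practice a client library such as tus-js-client or Uppy would handle this.

```typescript
const TUS_VERSION = "1.0.0";

// Headers for a tus PATCH request that appends bytes starting at `offset`.
function patchHeaders(offset: number): Record<string, string> {
  return {
    "Tus-Resumable": TUS_VERSION,
    "Upload-Offset": String(offset),
    "Content-Type": "application/offset+octet-stream",
  };
}

// Validates the Upload-Offset header returned by HEAD and PATCH responses.
function parseOffset(headerValue: string | null): number {
  if (headerValue === null || !/^\d+$/.test(headerValue)) {
    throw new Error(`invalid Upload-Offset header: ${headerValue}`);
  }
  return Number(headerValue);
}

// Resumes the upload at `uploadUrl`, assumed to come from an earlier tus creation (POST) request.
async function resumeTusUpload(uploadUrl: string, file: Uint8Array, chunkSize: number): Promise<void> {
  // Ask the server how many bytes it already has.
  const head = await fetch(uploadUrl, { method: "HEAD", headers: { "Tus-Resumable": TUS_VERSION } });
  if (!head.ok) throw new Error(`HEAD failed with HTTP ${head.status}`);
  let offset = parseOffset(head.headers.get("Upload-Offset"));

  // Send the rest in chunks; the server answers each PATCH with 204 and the new offset.
  while (offset < file.length) {
    const chunk = file.subarray(offset, Math.min(offset + chunkSize, file.length));
    const res = await fetch(uploadUrl, { method: "PATCH", headers: patchHeaders(offset), body: chunk });
    if (res.status !== 204) throw new Error(`PATCH failed with HTTP ${res.status}`);
    const next = parseOffset(res.headers.get("Upload-Offset"));
    if (next <= offset) throw new Error("server did not advance Upload-Offset");
    offset = next;
  }
}
```

Because the server is the source of truth for the offset, a dropped connection, a server restart, or a closed tab only costs the chunk that was in flight.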

I will look into it next time. I'm not sure I can really figure out which ones were a problem in the past. I have enough problems with other things that I'm never quite sure what the cause might be.

There is a third option:

- allow the uploading of a seeded torrent file.

I don't know the codebase at all, so I have no idea whether that option ends up "even more complex" or "less complex". The more I see of the community and the development team, the more I'm leaning toward staying with YouTube and Vimeo...

@JigmeDatse Don't misunderstand me. I'm merely trying to give hints as to whether or not this is easy, not refusing any option, so that we can prioritize the best ways to solve the problem. Whatever the solution, if you want to get to know the codebase to implement it yourself, we'll be more than willing to help. I don't think YouTube will ever give you that much.

I don't believe I am misunderstanding you. It's not merely your comments; I have repeatedly seen comments along the lines of "we don't want to do this." You're quite right that I'd never be given the right to "rewrite" YouTube and call it YouTube, but I would also never see bickering between a developer and users on YouTube or Vimeo.

Perhaps the problem isn't so much that you disagree with people, but that you present roadblocks with no indication of any interest, on your part or any other dev member's, in helping to find a way around them. To you that may be "merely trying to give hints," but to most people who aren't already involved, it is probably a strong indication of "we won't do this unless you are willing and able to do it yourself."

Which to me means "please feel free to fork, because you're going to basically be doing that anyway."

I think you've made my decision for me. Thanks for the effort to be "more than willing to help," as you've helped enormously. I'm going to go back to looking into MediaGoblin, where "more than willing to help" was never mentioned; they just proved it by saying, "oh yeah, that's something to fix..." and went ahead and fixed it. If something required more work than that, they did the work. I don't have the time or energy to try to work with your team...

Maybe we should keep discussing how to fix this issue.

I really think we should have the ability to resume uploads, and as a first step make it work simply, so we can then take our time to think about a more sophisticated way to do it.

Or maybe it is possible to upload files straight to a folder without a web browser, using an FTP client like FileZilla?

I would like to help with introducing resumable.js and implementing this.

@xcffl if you are still around, I've settled on https://github.com/kukhariev/ngx-uploadx for the client part of the upload process, and https://github.com/kukhariev/node-uploadx for the server part receiving the chunks. I'll be giving it a stab in the next few weeks if all goes well, to see if that stack is satisfactory. You can have a try at it too if you want 🙂
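A minimal sketch of the server-side bookkeeping for chunked uploads, assuming chunks arrive with a `Content-Range: bytes start-end/total` header in the style of Google's resumable-upload protocol (whether node-uploadx uses exactly this format is not confirmed here). Both functions are hypothetical: a chunk is only accepted if it starts exactly where the stored data ends, so a client that lost its connection can ask how much was stored and continue from there.

```typescript
interface ByteRange {
  start: number;
  end: number; // inclusive, as in the Content-Range header
  total: number;
}

// Parses "bytes 0-524287/2000000"; returns null for malformed or inconsistent headers.
function parseContentRange(header: string): ByteRange | null {
  const m = /^bytes (\d+)-(\d+)\/(\d+)$/.exec(header.trim());
  if (!m) return null;
  const [start, end, total] = [Number(m[1]), Number(m[2]), Number(m[3])];
  if (start > end || end >= total) return null;
  return { start, end, total };
}

type ChunkResult =
  | { status: "resume-incomplete"; received: number }
  | { status: "complete"; received: number }
  | { status: "conflict"; received: number };

// Decides what to do with an incoming chunk, given how many bytes are already stored.
function acceptChunk(storedBytes: number, range: ByteRange, chunkLength: number): ChunkResult {
  // Reject chunks that do not continue the stored data or whose body size disagrees with the header.
  if (range.start !== storedBytes || range.end - range.start + 1 !== chunkLength) {
    return { status: "conflict", received: storedBytes };
  }
  const received = range.end + 1;
  return received === range.total
    ? { status: "complete", received }
    : { status: "resume-incomplete", received };
}
```

A server would typically map `resume-incomplete` to a response telling the client how many bytes it holds, `complete` to handing the assembled file to the existing processing pipeline, and `conflict` to an error prompting the client to re-query its offset.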

@xcffl if you are still around, I've come down to https://github.com/kukhariev/ngx-uploadx to the client part of the upload process, and https://github.com/kukhariev/node-uploadx for the server part receiving the chunks. I'll be giving it a stab in the next weeks if all is well, to see if that stack is statisfying. You can have a try at it too if you want 🙂

Great to hear that! That's definitely helpful.

I've realized that PeerTube is not exactly suitable for my use case, so I might not continue to contribute here.

OK, I'm coming back to this again. If there is anything I can help with, I may have a look.

I have used resume.js in a Node/Angular project, and it was easy to add to the main project with webpack. Here is the approach I used:

https://morioh.com/p/8f10c2038aca

I may try integrating it with the PeerTube uploader.

@rigelk Is there still interest in adding this feature? I don't want to waste time if it is not planned to be merged into mainline.

@Salmon-Bard there is still interest in this feature, although I would suggest you use the above module.

> @xcffl if you are still around, I've settled on https://github.com/kukhariev/ngx-uploadx for the client part of the upload process, and https://github.com/kukhariev/node-uploadx for the server part receiving the chunks. I'll be giving it a stab in the next few weeks if all goes well, to see if that stack is satisfactory. You can have a try at it too if you want

There is something confusing, as you're referring to both the client and server parts with the same link!

Also I have found ngx-flow

https://github.com/flowjs/ngx-flow

looks much simpler to implement

What are your thoughts?

> There is something confusing, as you're referring to both the client and server parts with the same link!

ngx-uploadx != node-uploadx

> looks much simpler to implement

How so? Its server implementation example is shallow at best.

Thanks for the clarification.

> How so? Its server implementation example is shallow at best.

By shallow, you mean fewer features and hooks to work with. But maybe we do not want to alter the current PeerTube server-side processes that handle files, and only want to work on resuming uploads on the client side, with the same event triggers and hooks.

I'm looking for a simple solution that will not require rewriting most of the current code around uploading and storage handling.

In any case, I will take a deeper look into ngx-uploadx and do some testing.

Also, please consider showing an error message if/when uploads fail.

Simply throwing the user out at the upload view/state again (current behaviour) is terrible UX:

I don't use PT much, but I just spent the evening attempting to upload a ~400MB file on a 3MB connection (10-20 attempts), and now I'm giving up. I don't know why it keeps failing, and I'm not hopeful of it ever working. Slow connections should mean slow uploads, not failed uploads. Is there an overzealous timeout setting?

Hope this helps

Sincerely
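One way to make slow or flaky connections produce slow uploads rather than failed ones is to retry each chunk with exponential backoff instead of aborting the whole upload. A minimal sketch, assuming a hypothetical `sendChunk` function that rejects on network errors; the base delay, cap, and attempt count are arbitrary illustrative values, not PeerTube settings.

```typescript
// Delay before retry number `attempt` (0-based): baseMs * 2^attempt, capped at maxMs.
function backoffDelay(attempt: number, baseMs = 1000, maxMs = 60_000): number {
  return Math.min(maxMs, baseMs * 2 ** attempt);
}

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// Retries `sendChunk` until it succeeds or `maxAttempts` is reached, then rethrows the
// last error so the UI can show a message instead of silently resetting the form.
async function withRetry<T>(sendChunk: () => Promise<T>, maxAttempts = 8): Promise<T> {
  let lastError: unknown;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await sendChunk();
    } catch (err) {
      lastError = err;
      // No need to wait after the final failed attempt.
      if (attempt < maxAttempts - 1) await sleep(backoffDelay(attempt));
    }
  }
  throw lastError;
}
```

With these defaults the waits are 1 s, 2 s, 4 s, and so on up to one minute, which tolerates a connection dropping for a couple of minutes without the user noticing more than a pause.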

> We should not mix things up in this issue. Correct me if I'm wrong, but two options have been considered so far:
>
> 1. keep it simple by improving the current HTTP upload mechanism. Resumable file uploads are nothing out of the ordinary, and there seem to be quite a few libraries out there: https://github.com/23/resumable.js/ , https://tus.io/ , and even integrations like https://uppy.io/
>
> 2. go a step further, with a client (in the browser or outside it) creating a torrent and seeding it.
>
> Frankly, I think option 1 is way more realistic, since option 2 complicates things a lot.

I've played around with both Uppy and Tus, and there's quite a lot you can do with them (Uppy in particular could be used to add support for sources like Google Drive, Dropbox, OneDrive, etc., which currently require a publicly accessible URL for link import, though that's another discussion).

More importantly, chunked uploading with Tus also has the added benefit that uploads work behind more restrictive proxies like Cloudflare, which has a maximum request size of 100MB on the free plan. Beyond 200MB, you're looking at enterprise pricing.
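The arithmetic behind that: a chunked client only has to keep each request body under the proxy's cap. A minimal sketch assuming a 90 MB chunk size, chosen here only to leave some headroom below the 100MB limit mentioned above; with it, the ~400MB upload described earlier in the thread becomes five requests of at most 90 MB each. `chunkRanges` is a hypothetical helper.

```typescript
const MB = 1_000_000;

// Splits a file of `totalBytes` into half-open [start, end) byte ranges no larger than `maxChunk`.
function chunkRanges(totalBytes: number, maxChunk: number): Array<[number, number]> {
  if (!Number.isInteger(maxChunk) || maxChunk <= 0) {
    throw new RangeError("maxChunk must be a positive integer");
  }
  const ranges: Array<[number, number]> = [];
  for (let start = 0; start < totalBytes; start += maxChunk) {
    ranges.push([start, Math.min(start + maxChunk, totalBytes)]);
  }
  return ranges;
}
```

For example, `chunkRanges(400 * MB, 90 * MB)` gives four full 90 MB ranges followed by a final 40 MB range `[360 * MB, 400 * MB)`.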

Well, this might come as a by-product of livestreaming, depending on how it is to be implemented, to reference my first comment ^^"

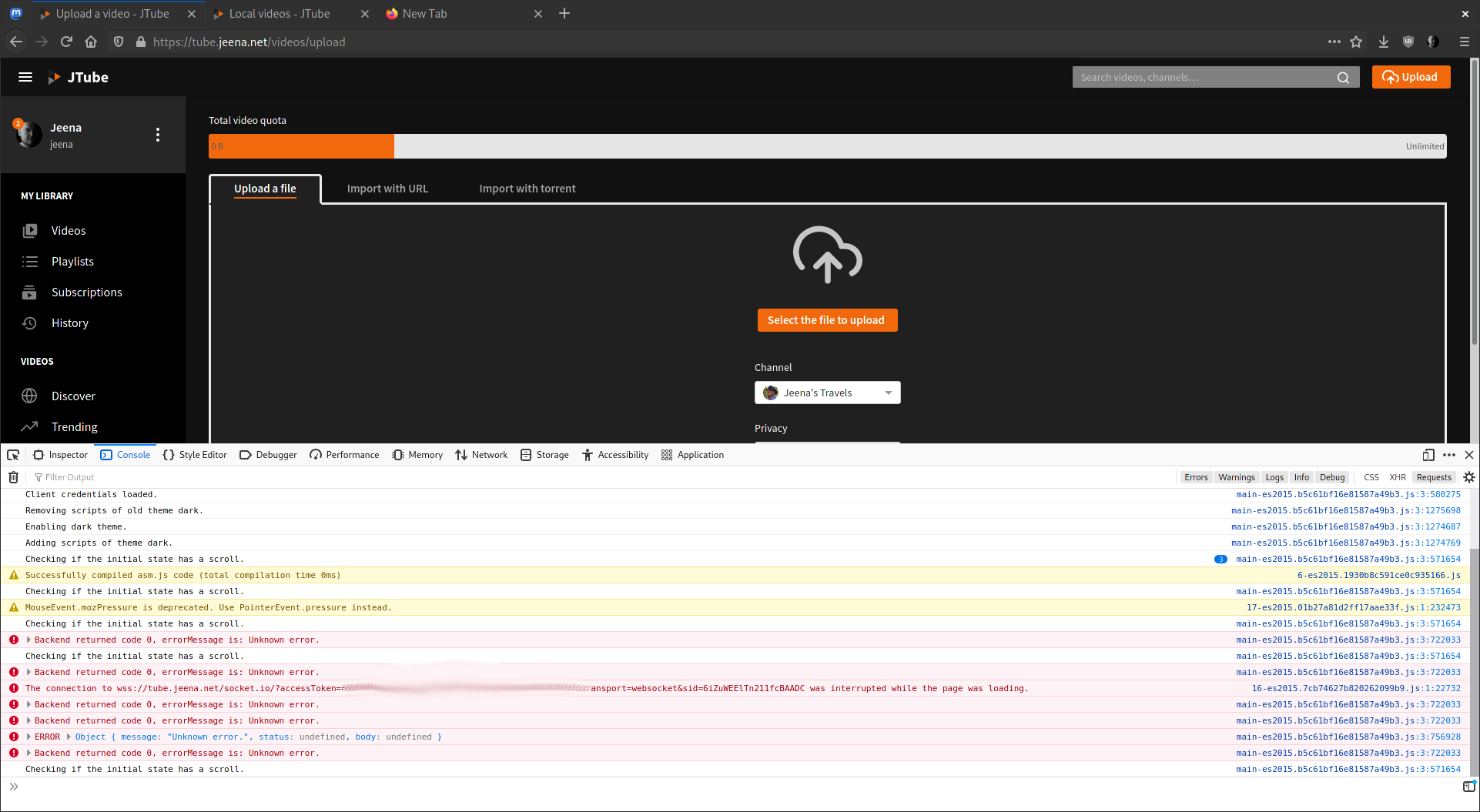

I think at least an error message in the UI when a video upload fails would be a good first step. I was confused for hours because the UI just goes back to the "Please choose a file" state, and I thought I had accidentally closed the tab (uploading takes about an hour and I keep using my browser). But when I looked in the browser console, there were error messages about it. The Wi-Fi seems really flaky here at the hotel, so I often need to re-upload a video 5 or 7 times before it actually appears on my instance. This means a full day of manually uploading a 20-minute video over and over.
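One way to avoid that reset is to model the upload form's state so that a failure keeps the selected file and the progress made, alongside an error message. A minimal sketch; the state names are hypothetical, `file` is a plain file name for brevity (a browser would hold a `File`), the fix actually merged in #3347 may be structured differently, and resuming from `sentBytes` assumes a server that supports resumable uploads.

```typescript
// Hypothetical upload UI states. "failed" keeps everything needed to retry.
type UploadState =
  | { kind: "idle" }
  | { kind: "uploading"; file: string; sentBytes: number; totalBytes: number }
  | { kind: "failed"; file: string; sentBytes: number; totalBytes: number; message: string }
  | { kind: "done"; file: string };

// On error, move to "failed" instead of back to "idle", so the form is not reset.
function onError(state: UploadState, message: string): UploadState {
  if (state.kind !== "uploading") return state;
  return { kind: "failed", file: state.file, sentBytes: state.sentBytes, totalBytes: state.totalBytes, message };
}

// The retry button resumes from the recorded progress.
function onRetry(state: UploadState): UploadState {
  if (state.kind !== "failed") return state;
  return { kind: "uploading", file: state.file, sentBytes: state.sentBytes, totalBytes: state.totalBytes };
}
```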

@ldexterldesign @jeena I added a way for us to display helpful (hopefully) messages and a retry button while not throwing users out of their upload form, in #3347. Thanks for your feedback!

Most helpful comment

Also, please consider an error message if/when uploads fail

Simply throwing the user out at the upload view/state again (current behaviour) is terrible UX:

I don't use PT much but I just spent the evening attempting to upload a ~400MB file on a 3MB connection (10-20 attempts) and now I'm giving up, don't know why it keeps failing and very unhopeful of it ever working. Slow connections should mean slow uploads not failed uploads - is there an overzealous timeout setting?

Hope this helps

Sincerely