Orleans: "Too many connections" error when using MySQL as a membership provider with Microsoft.Orleans.Clustering.AdoNet 2.1.0

When I use MySQL as a membership provider with Microsoft.Orleans.Clustering.AdoNet 2.1.0, a "too many connections" error occurs.

In previous versions, a silo had only 2-5 connections. Now one silo has more than 30.

I am very surprised to see such a problem.

My .NET version is .NET Framework 4.6.1.

Orleans version is 2.1.0.

I am so surprised that there is such a problem. Are the connections not being closed?

Btw, I upgraded from 1.5.5 to 2.1.0. Has the SQL changed?

@HermesNew Has anything else changed? Could the connections come from something other than membership traffic, such as grain storage?

For reference: https://dev.mysql.com/doc/refman/5.5/en/too-many-connections.html. That number of connections shouldn't be high. But if you previously had only a few and now that many, something must have changed. I don't think anything changed between 1.5.5 and 2.1.0 that would cause that.

@veikkoeeva I'm sure these are membership connections. This may be a bug that needs to be fixed quickly.

@HermesNew To reiterate, 2.0.x functions correctly with a few connections, but 2.1.0 does not and opens an order of magnitude more connections in the membership protocol. Unfortunately I do not currently know where to look for the problem. Maybe some of the core people have an educated, "first line" guess. Ping @ReubenBond and @xiazen.

@veikkoeeva I will be testing next week to find the problem. This problem is too serious.

@benjaminpetit Is the Microsoft.Orleans.OrleansSQLUtils package required? I did not install it.

But I found that Microsoft.Orleans.OrleansSQLUtils only contains these references:

Orleans.Clustering.AdoNet.csproj

Orleans.Persistence.AdoNet.csproj

Orleans.Reminders.AdoNet.csproj

When using UseAdoNetReminderService, the number of MySQL connections increases by several.

@benjaminpetit @sergeybykov I have not done anything. From observation, the number of MySQL connections increases on its own.

Each silo adds about one connection an hour.

I hope this gets fixed as soon as possible. This is a serious problem.

Microsoft.Orleans.OrleansSQLUtils is just a meta package to make migration from 1.5 to 2.x easier.

I am looking into the issue.

What providers are you using? Only Clustering, like you said in the first post? And did you add reminders afterwards?

With only Clustering, there is 1 connection at first, and 1 more is added after tens of minutes. With both Clustering and Reminders, there are 3 connections at the beginning, and the count then gradually increases until the MySQL connection limit is exhausted.

Surprisingly, the number of connections only increases and never decreases.

In the 1.5.x version, there is no such problem.

I don't know what changed in the 2.x version. How can such a serious problem occur? I have been using Orleans for two years.

I found that all MySQL connections from the Orleans ADO.NET provider are in use.

Don't you reuse an existing connection?

If a new connection is created every time, it should be closed after use. I think these are the most basic things.

Is there any other configuration?

And you are sure that 2.0.x does not have this issue, correct?

@benjaminpetit Maybe 2.0.x has the same issue. I will look at it again.

@benjaminpetit I have tested it, 2.0.x has the same problem.

AdoNetReminderService config code:

```csharp
siloHostBuilder.UseAdoNetReminderService(options =>
{
    options.ConnectionString = siloHostServerConfig.AdoNetReminderServiceConnectionString;
    options.Invariant = siloHostServerConfig.AdoNetReminderServiceInvariant;
});
```

https://github.com/dotnet/orleans/issues/4207

This issue has been fixed, so I think there is no problem with the configuration code.

I'll just add here tangentially that if this occurs in SQL Server, extra certainty about the origin can be had by using the Application Name attribute in the connection string. The Oracle MySQL connector doesn't have this option, but the open source alternative does (it isn't loaded currently; there's an issue open to change that), see https://stackoverflow.com/questions/51848391/add-application-name-program-name-in-mysql-connection-string.

@HermesNew The code opening and closing the connection is at https://github.com/dotnet/orleans/blob/master/src/AdoNet/Shared/Storage/RelationalStorage.cs#L263 . If multiple connections are opened and then not closed, it can imply several things:

- Connections opened rapidly. Does the error occur quickly or slowly after starting? As this is membership protocol, the framework is responsible, but it'd stand to reason if it's higher level Oracle functionality that it'd occur with others too. I'm not sure if multiple connections would be opened if there are a lot of entries in the membership table.

- If you have a lot of reminders, it may happen you exceed the limit either in ADO.NET pool or server set limit.

- The server is experiencing resource starvation and answers very slowly, which keeps connections open.

@HermesNew As a quick measure, can you increase the connection limit on the server? See at https://dev.mysql.com/doc/refman/5.5/en/too-many-connections.html .

Increasing the connection limit is not a good solution. The server connection limit is already 1000.

MySQL connections keep increasing on their own, so no limit is ever enough.

To see this issue you need to monitor the MySQL server for many minutes; you will find that the membership database connections are constantly increasing.

I will not be the only one to hit this issue. Many people may not notice it because it grows slowly.

@veikkoeeva MySQL knows which application is connected to it.
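One way to watch the growth described above is to poll the server's process list and group the open connections by account, client host and schema. The following is only a sketch: the server address and the `monitor` account are placeholders, and it assumes the MySql.Data connector used in this thread. Note that the monitoring connection itself shows up in the count.

```csharp
using System;
using MySql.Data.MySqlClient; // NuGet package MySql.Data

static class ConnectionCounter
{
    // Groups the server's current connections by user, client host and schema.
    // HOST is reported as "host:port", so the port is cut off before grouping.
    const string Sql = @"
        SELECT user,
               SUBSTRING_INDEX(host, ':', 1) AS client_host,
               db,
               COUNT(*) AS connections
        FROM information_schema.processlist
        GROUP BY user, client_host, db
        ORDER BY connections DESC;";

    static void Main()
    {
        // Placeholder credentials (assumption). The account needs the PROCESS
        // privilege to see connections that belong to other users.
        const string connectionString = "Server=localhost;Uid=monitor;Pwd=changeme;";

        using (var connection = new MySqlConnection(connectionString))
        using (var command = new MySqlCommand(Sql, connection))
        {
            connection.Open();
            using (var reader = command.ExecuteReader())
            {
                while (reader.Read())
                {
                    // Columns by position: user, client_host, db, connections.
                    Console.WriteLine($"{reader[0]}@{reader[1]} db={reader[2]}: {reader[3]} connection(s)");
                }
            }
        }
    }
}
```

Running this every few minutes on a test cluster should show whether the count attributed to the silo hosts only ever goes up.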

@HermesNew To double-check, you have nothing but a system running and the only traffic to the database is the membership protocol? I mean nothing else such as reminders, persistence, application code, nothing. Just to make sure, since if it is only a membership protocol issue, it can give an extra clue.

Are you in a position to run the membership tests against MySQL? If so, and the problem can be replicated in a test, it's easier for others to chime in.

Membership and reminders both have the issue. It works well in version 1.5.x.

@HermesNew To synchronize, you have membership and reminder traffic and combined they increase the outstanding connections. Can you give an idea of the number of reminders you have and at what rate they're firing? Which version of the MySQL connector are you using? Which version of MySQL?

<edit: Something of relevance though not the same library: https://github.com/mysql-net/MySqlConnector/issues/305 on thread pool behavior on blocking. Due to this Orleans currently explicitly offloads MySQL connections to the thread pool (i.e. otherwise connections do get blocked easily, and if there were streaming, even deadlocked).

@HermesNew https://dev.mysql.com/doc/connector-net/en/connector-net-programming-connection-pooling.html

Resource Usage

Starting with Connector/NET 6.2, there is a background job that runs every three minutes and removes connections from pool that have been idle (unused) for more than three minutes. The pool cleanup frees resources on both client and server side. This is because on the client side every connection uses a socket, and on the server side every connection uses a socket and a thread.

Prior to this change, connections were never removed from the pool, and the pool always contained the peak number of open connections. For example, a web application that peaked at 1000 concurrent database connections would consume 1000 threads and 1000 open sockets at the server, without ever freeing up those resources from the connection pool. Connections, no matter how old, will not be closed if the number of connections in the pool is less than or equal to the value set by the Min Pool Size connection string parameter.

And just to make sure, the ADO.NET pooling should be on by default. An assumption here is also that it's the same DB but a 1.5 Orleans and 2.x version of Orleans that have something different going on to this same database with the same usage characteristics if it's a shared DB.

MySQL version is 5.6.

MySQL connector is the official package MySql.Data.

Only Orleans connects to the MySQL database. This is my test environment; no other application connects to MySQL.

So I can conclude that this version of Orleans has the problem.

This is my test environment. In this state, how can I put it into production?

@HermesNew Can you try the latest 8.n line and see if the problem persists?

@veikkoeeva My .NET version is .NET Framework 4.6.1, so the version of MySql.Data I am using is 6.9.12.

@veikkoeeva Is it because of .NET Framework 4.6.1? With .NET Standard, would there be no problem?

@HermesNew I do not know. There have been changes for sure, but I don't know if there is something in the .NET 4.6.1 / MySql.Data 6.9.12 interaction. If it is possible, you could upgrade to the latest MySQL database and to a newer .NET Framework (or use .NET Core). If an upgrade removes the problem, we're that much wiser.

@veikkoeeva I have upgraded the MySQL connector to version 8.0.12, but the problem is still not solved.

@veikkoeeva This is my code, is there an error?

```csharp
if (clusterClient == null)
{
    var clientBuilder = new ClientBuilder()
        .Configure<ClusterOptions>(options =>
        {
            options.ClusterId = siloHostClientConfig.ClusterId;
            options.ServiceId = siloHostClientConfig.ServiceId;
        })
        .UseAdoNetClustering(options =>
        {
            options.Invariant = siloHostClientConfig.Invariant;
            options.ConnectionString = siloHostClientConfig.ConnectionString;
        })
        // The type arguments and bodies of the next two .Configure calls
        // were lost in the original post, so they are left commented out.
        //.Configure
        //.Configure
        //.UsePerfCounterEnvironmentStatistics()
        .ConfigureLogging(builder => builder.SetMinimumLevel((LogLevel)siloHostClientConfig.MinLogLevel).AddConsole());

    if (ApplicationPartAssemblys != null && ApplicationPartAssemblys.Count > 0)
    {
        foreach (var assembly in ApplicationPartAssemblys)
        {
            clientBuilder.ConfigureApplicationParts(parts => parts.AddApplicationPart(assembly).WithReferences());
        }
    }

    clusterClient = clientBuilder.Build();
}

if (!clusterClient.IsInitialized)
{
    await clusterClient.Connect(RetryFilter);
}

private int attemptCount = 0;
private int initializeAttemptsBeforeFailing = 3;

// Retry only while the silo is unavailable, up to initializeAttemptsBeforeFailing attempts.
private async Task<bool> RetryFilter(Exception exception)
{
    if (exception.GetType() != typeof(SiloUnavailableException))
    {
        Console.WriteLine($"exception: {exception}");
        return false;
    }

    attemptCount++;
    Console.WriteLine($"Client Connect {attemptCount} times {initializeAttemptsBeforeFailing} failed. exception: {exception}");

    if (attemptCount > initializeAttemptsBeforeFailing)
    {
        return false;
    }

    await Task.Delay(TimeSpan.FromSeconds(3));
    return true;
}
```

@benjaminpetit I found that the orleansmembershipversiontable table is queried very frequently. Have you changed this in Orleans 2.x?

I guess that because the queries are so frequent, the database connection pool opens more connections. Can the query frequency be reduced?

@benjaminpetit @veikkoeeva Thank you all.

This issue has not been solved, and I can't do anything about it.

I have already changed Clustering to Consul.

The AdoNetClustering problem is still very serious; I hope it gets attention.

BTW, after switching Clustering to Consul, the number of MySQL connections is normal.

Now my Reminders use MySQL and Clustering uses Consul.

Hi,

I'm using the ADO membership with MySql.

I was hoping to upgrade to 2.1 but now it seems dangerous.

It's very important to solve this one...

@shlomiw Do you have the chance to test if the problem replicates?

@shlomiw please test also to see if you have this issue

@HermesNew if you could provide a memory dump when using MySQL, it would be very useful. There are a lot of unknown parameters here.

@veikkoeeva / @benjaminpetit - when I finally do the upgrade, I'll be sure to test it and let you know. I don't know yet when it's going to happen.

@benjaminpetit It is very easy to reproduce, as I said before. With more than 30 clusters and more than 60 nodes, this problem arises immediately. The issue is that queries on the membership-related tables are frequent.

@benjaminpetit In short, if there are enough clusters, the database will not be able to withstand the high-frequency membership queries, and the number of connections in the database connection pool increases. If you test with too few clusters, you will not find this problem.

@benjaminpetit @shlomiw @veikkoeeva

I read the source code of Orleans and I know the reason for this problem: the MySQL load in my test environment is high, and the thread pool mechanism leads to an increasing number of connections to serve the high-frequency membership queries. To solve this problem, you must reduce the frequency of AdoNetClustering's queries on the membership-related tables. This is a place for improvement and I hope this helps you.

@HermesNew - my environment currently consists of only 5 silos, so it'll be ok for now (without the fix)?

@shlomiw With only 5 silos I think there should be no problem unless your MySQL load is too high. But if you add more and more silos in the future, this may become a serious problem, and your MySQL will not work properly.

@HermesNew - thank you very much. I'll test it and let you guys know. And of course I will continue keep track on this issue, which is very important for future growth.

@HermesNew I think this is a job for the silo membership oracle and, if I understand the situation correctly, it should affect all membership protocol providers.

So, based on our understanding:

- The database connection pool is exhausted due to open connections from the Orleans cluster.

- The number of open MySQL connections when upgrading from 1.5 to 2.x increases by an order of magnitude.

- It looks like this increase is due to a higher number of membership protocol calls to the database.

A corollary seems to be that this change should affect all membership protocol providers and not just AdoNet. Certainly at least all ADO.NET databases.

A definite proof of query frequencies could be had by analyzing the MySQL query log, info: https://blog.toadworld.com/2017/08/09/logging-and-analyzing-slow-queries-in-mysql .

Further pondering:

- There is the new Orleans Scheduler and I don't know if it has a bearing on this matter.

- We know from the previously linked https://github.com/dotnet/orleans/blob/master/src/AdoNet/Shared/Storage/RelationalStorage.cs#L274 that MySQL is amongst the DBs that have queries offloaded to a separate task due to some driver blocking. The queries do use `.ConfigureAwait(false)` in any case, so they should escape the Orleans scheduler due to that also. This code is in 1.5 (and has been before that too).

- It cannot be ruled out that there is some adverse effect due to .NET 4.6.1 / .NET Core 2.0 / MySQL connector incompatibility that leaves the connections open in the pool longer than they should be. In another MySQL connector, not the one distributed by Oracle and loaded by default by Orleans, there has been this kind of incident with open connections, so we have a case showing it's not outlandish. We also know the Oracle MySQL library has had bugs which are being fixed on a regular basis (like other software).

@benjaminpetit Do you have idea if there should be something in the new scheduler or otherwise that should increase the membership protocol query frequency?

> - It looks like this increase is due to higher number of membership protocol calls to the database.

I don't see why this would happen. We didn't touch the membership protocol at all. All timing and frequencies should have stayed unchanged.

> - There is the new Orleans Scheduler and I don't know if it has a bearing on this matter.

The new Scheduler was only introduced in 2.1. So 2.0 could not have been affected.

My suspicion is that if there is an issue, it must come from the AdoNet provider down - the code of the provider (if it changed), AdoNet, .NET Framework, etc.

@sergeybykov I estimate that SQL Server will have the same problem, which is determined by the mechanism of the database thread pool. When the database is overloaded, more connections are added to satisfy the query.

But this is a vicious circle ;)

@sergeybykov High-frequency queries are used to discover node joins and exits in a timely manner, but this is not a good idea, maybe storage without monitoring mechanisms is not suitable for service discovery.

Why do you say the queries are high-frequency? During normal operation, as part of the cluster membership protocol, each silo reads the table once a minute (configurable). So if you have 30 silos in the cluster, it would amount to 30 reads a minute.

Most importantly, this protocol hasn't changed between 1.5 and 2.0 (and between 2.0 and 2.1). So I'm still confused by what we are comparing to what here exactly.

@sergeybykov This is inconsistent with the data I have monitored. In my monitoring, the database is queried about 30 times a second.

This problem confuses me too, but the fact is that it does exist. I have been using Orleans for two years, and I was confused when I encountered it.

Btw, I have 60 silos.

@sergeybykov Now I have changed the clustering to Consul, only the reminders use MySQL, and the number of database connections is normal.

@sergeybykov This time I upgraded from 1.5.5 to 2.1.0. Apart from the changes required by the upgrade, nothing else was modified.

@sergeybykov This issue can be confirmed once other people have tested it. When I am free I will do more detailed testing.

@HermesNew I'm trying to sort out what you have reported so far. Please help me understand.

You mentioned both clustering and reminders. Let's disable (not configure) reminders for now and focus on just clustering first. Like I said, clustering alone should not generate any significant load on the membership table. So I want to understand what traffic you are seeing coming to your MySQL database.

- The configuration code you posted is for the cluster client. Can you share your silo config code?

- Can you share silo logs?

- Can you run just silos with no clients connecting to the cluster to see what load on the DB the silos generate?

@sergeybykov

- These simple tests have been completed. Even with no client connected, the number of connections to the database increases; it just grows more slowly, mainly because the database query pressure is lower.

- The log level is set to Warning and there are no warning entries; more detailed logging is not enabled.

- Silo config code:

```csharp
var siloHostBuilder = new SiloHostBuilder()
    .Configure<ClusterOptions>(options =>
    {
        options.ClusterId = siloHostServerConfig.ClusterId;
        options.ServiceId = siloHostServerConfig.ServiceId;
    })
    .UseAdoNetClustering(options =>
    {
        options.ConnectionString = siloHostServerConfig.AdoNetClusteringConnectionString;
        options.Invariant = siloHostServerConfig.AdoNetClusteringInvariant;
    })
    .AddMemoryGrainStorage("PubSubStore")
    .Configure<SiloMessagingOptions>(options => options.ResponseTimeout = TimeSpan.FromSeconds(ResponseTimeoutSeconds))
    //.Configure<SerializationProviderOptions>(options => options.SerializationProviders.Add(typeof(BondSerializer).GetTypeInfo()))
    .UsePerfCounterEnvironmentStatistics()
    .ConfigureEndpoints(siloHostServerConfig.IP, siloHostServerConfig.SiloPort, siloHostServerConfig.GatewayPort)
    .ConfigureLogging(builder => builder.SetMinimumLevel((LogLevel)siloHostServerConfig.MinLogLevel).AddConsole());

//if (ServiceCollection != null)
//{
//    siloHostBuilder.ConfigureServices(ServiceCollection);
//}

if (ApplicationPartAssemblys != null && ApplicationPartAssemblys.Count > 0)
{
    foreach (var assembly in ApplicationPartAssemblys)
    {
        siloHostBuilder.ConfigureApplicationParts(parts => parts.AddApplicationPart(assembly).WithReferences());
    }
}

if (StartupTaskFunc != null)
{
    siloHostBuilder.AddStartupTask(StartupTaskFunc);
}

if (siloHostServerConfig.OrleansDashboardPort > 0)
{
    siloHostBuilder.UseDashboard(options =>
    {
        options.Port = siloHostServerConfig.OrleansDashboardPort;
    });
}

if (!string.IsNullOrEmpty(siloHostServerConfig.AdoNetReminderServiceInvariant)
    && !string.IsNullOrEmpty(siloHostServerConfig.AdoNetReminderServiceConnectionString))
{
    siloHostBuilder.UseAdoNetReminderService(options =>
    {
        options.ConnectionString = siloHostServerConfig.AdoNetReminderServiceConnectionString;
        options.Invariant = siloHostServerConfig.AdoNetReminderServiceInvariant;
    });
}

if (siloHostServerConfig.GrainCollectionMinutes <= 1)
{
    siloHostServerConfig.GrainCollectionMinutes = 10;
}

siloHostBuilder.Configure<GrainCollectionOptions>(options =>
{
    options.CollectionAge = TimeSpan.FromMinutes(siloHostServerConfig.GrainCollectionMinutes);
});

if (Clients != null)
{
    foreach (var client in Clients)
    {
        Console.Title = $"{siloHostServerConfig.ClusterId} Connect to Cluster:{client.ServerName}";
        var success = await client.InitConfig(100);
    }
}

siloHost = siloHostBuilder.Build();
await siloHost.StartAsync();

Console.Title = $"{siloHostServerConfig.ClusterId}";
callBack?.Invoke(siloHost);

Console.WriteLine("Orleans Silo is running.\nPress Enter to terminate...");
Console.ReadLine();

await siloHost.StopAsync();
```

Try disabling reminders and the Dashboard for now to isolate the SQL traffic related to clustering.

I see that you originally posted config code with Consul used for clustering. Did you try that? What was the picture with that?

I'm thinking maybe it's reminders that actually drive traffic to SQL?

> @sergeybykov Now I have changed the clustering to consul, only the reminder uses mysql

I'm confused - the code above sets up AdoNet for clustering:

.UseAdoNetClustering(options =>

Is the picture different when you run with Consul?

@sergeybykov It seems that it may be caused by OrleansDashboard and the Reminder queries. But this also causes serious problems if there are enough silos.

Config code when using Consul:

```csharp
.UseConsulClustering(options =>
{
    options.Address = new Uri(siloHostServerConfig.AdoNetClusteringConnectionString);
    options.KvRootFolder = siloHostServerConfig.AdoNetClusteringInvariant;
})
```

siloHostServerConfig.AdoNetClusteringConnectionString and siloHostServerConfig.AdoNetClusteringInvariant are read from my custom configuration file; I just did not rename the fields in the configuration file.

With Consul for clustering, everything works well and the MySQL connection count does not increase.

I'm not sure I understand. When you remove Dashboard, everything is fine? Or was it caused by a configuration mistake? Is it working fine now?

@sergeybykov

My configuration code is correct. I have not yet tested removing the Dashboard.

@sergeybykov

I have given up using AdoNetClustering because it is a potential risk.

Now my clustering uses Consul. Everything works well and the MySQL connection count does not increase.

@HermesNew, @sergeybykov Looking at database query logs should give the exact number of calls and their times. For instance at https://blacksaildivision.com/mysql-query-log there are instructions on how to log to a table; after that one can SELECT from the table for queries (we know what membership queries look like) and determine the frequency. I think looking at queries using even the latest bits should be enough to determine if the frequency is abnormally high.

Regardless of that, connections shouldn't be held from the pool for long; the calls should complete in subsecond time. This method should also indicate if something else is blocking the queries in the DB.

Some more info at https://blog.toadworld.com/2017/08/09/logging-and-analyzing-slow-queries-in-mysql (as was already linked).
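As a rough illustration of the query-log approach, the sketch below turns on the general query log with table output, waits one minute, and counts the logged statements whose text mentions a membership table. Several things here are assumptions rather than facts from this thread: the `admin` account (changing global log settings needs the SUPER privilege), the placeholder server address, and the filter on the word "membership", which relies on the table names following the OrleansMembership... pattern mentioned earlier. The general log is expensive, so this is only suitable for a test environment.

```csharp
using System;
using System.Threading;
using MySql.Data.MySqlClient; // NuGet package MySql.Data

static class MembershipQueryRate
{
    // Counts statements from the last minute that mention a membership table.
    // The second condition keeps this counting query from counting itself.
    const string CountSql = @"
        SELECT COUNT(*)
        FROM mysql.general_log
        WHERE event_time >= NOW() - INTERVAL 1 MINUTE
          AND CONVERT(argument USING utf8) LIKE '%membership%'
          AND CONVERT(argument USING utf8) NOT LIKE '%general_log%';";

    static void Main()
    {
        // Placeholder credentials (assumption).
        const string connectionString = "Server=localhost;Uid=admin;Pwd=changeme;";

        using (var connection = new MySqlConnection(connectionString))
        {
            connection.Open();

            // Send the general query log to the mysql.general_log table and enable it.
            Execute(connection, "SET GLOBAL log_output = 'TABLE';");
            Execute(connection, "SET GLOBAL general_log = 'ON';");

            // Sampling window: let the silos run for one minute.
            Thread.Sleep(TimeSpan.FromMinutes(1));

            using (var command = new MySqlCommand(CountSql, connection))
            {
                long perMinute = Convert.ToInt64(command.ExecuteScalar());
                Console.WriteLine($"Membership-related statements in the last minute: {perMinute}");
            }

            // Switch the log off again; it slows the server down.
            Execute(connection, "SET GLOBAL general_log = 'OFF';");
        }
    }

    static void Execute(MySqlConnection connection, string sql)
    {
        using (var command = new MySqlCommand(sql, connection))
        {
            command.ExecuteNonQuery();
        }
    }
}
```

Comparing the printed number with the expected rate of roughly one read per silo per minute would show whether the membership traffic is really abnormal, or whether the load comes from elsewhere.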

.UseConsulClustering(options => { options.Address = new Uri(siloHostServerConfig.AdoNetClusteringConnectionString); options.KvRootFolder = siloHostServerConfig.AdoNetClusteringInvariant; })

FYI, the AdoNet-functions (probably just a typo). :)

@HermesNew - just a thought - there's no automatic maintenance of the SQL membership table. It keeps all rows, and creates new ones when a new silo comes up (as I've seen in my environment, also when it's the same silo restarting). See #3261.

Have you checked the row count of this table? (You have many silos.) I'm not familiar with the membership queries but I'm also not sure if they are properly indexed, so queries might take a long time and over time start exhausting the pool.

@shlomiw A worthy point to make, and indexing could be added. I could imagine the row count would need to be in some thousands before indexing makes a measurable difference, and before that indexes likely harm performance a bit. I could imagine the queries take some tens of milliseconds (including network), especially since the table is likely cached in DB memory. All this is subject to one's hardware setup.

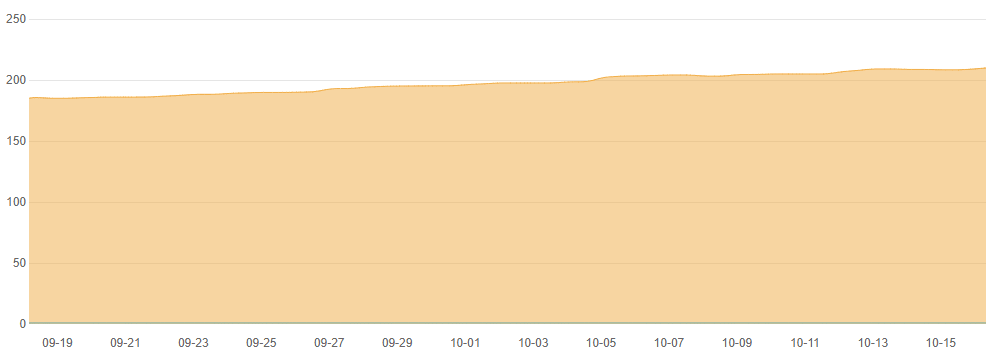

Here is a graph of the number of database connections in the production environment over the last month. This database is used only for Cluster and Reminder.

The number of connections to the database keeps increasing and never decreases.

The version of Orleans is 1.5.5. This problem is always present, but it develops slowly; it appears when the database performance is insufficient and there are enough silos.

As I said before, the root of this problem is that the performance of the database cannot meet the query needs of the cluster, so the connection pool grows.

@HermesNew It occurs to me you could simply set the maximum number of connections in the pool, see at https://www.connectionstrings.com/mysql-connector-net-mysqlconnection/connection-pool-size/. This should prevent unbounded increase in connections.
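A minimal sketch of that idea for the clustering provider follows. The pool keywords (`Pooling`, `Min Pool Size`, `Max Pool Size`, `Connection Lifetime`) are Connector/NET connection string options; the server name, credentials, the numeric values and the `UseBoundedAdoNetClustering` helper name are illustrative assumptions, not recommendations.

```csharp
using Orleans.Hosting; // ISiloHostBuilder and the UseAdoNetClustering extension

static class BoundedPoolClustering
{
    // Placeholder server and credentials (assumption). With a bounded pool, a
    // silo that needs more than "Max Pool Size" connections at once waits for a
    // free one, and fails with a timeout if none is released in time, instead
    // of opening yet another server connection.
    const string ClusteringConnectionString =
        "Server=db.example.local;Database=orleans;Uid=orleans;Pwd=changeme;" +
        "Pooling=true;" +
        "Min Pool Size=0;" +
        "Max Pool Size=20;" +
        "Connection Lifetime=300;"; // seconds before a pooled connection is recycled

    // Hypothetical helper that applies the bounded connection string.
    public static ISiloHostBuilder UseBoundedAdoNetClustering(this ISiloHostBuilder builder)
    {
        return builder.UseAdoNetClustering(options =>
        {
            options.Invariant = "MySql.Data.MySqlClient";
            options.ConnectionString = ClusteringConnectionString;
        });
    }
}
```

This caps the symptom per silo process rather than explaining the growth, so a cap that is too low trades "too many connections" on the server for pool timeouts in the silo.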

There are reasons for connection growth, enumerated at https://docs.microsoft.com/en-us/dotnet/framework/data/adonet/sql-server-connection-pooling, but they're not plausible in Orleans case. Maybe from client gateway with integrated security?

To recap, there is a problem in 1.5.5 also and the problem is that more queries for membership and reminders are made than can be closed, i.e. the frequency of new queries is faster than responses. Then in 2.x series this problem gets significantly worse?

I think database logs ( https://blog.toadworld.com/2017/08/09/logging-and-analyzing-slow-queries-in-mysql) definitely would give more understanding.

I noticed there is https://github.com/dotnet/corefx/issues/24966 that may affect this. The PostgreSQL and MySQL implementation have some synchronous, blocking behaviour (or at least have had) and the database calls are being explicitly offloaded to a background task. The linked issue _might explain_ the problem and explicitly setting values for minimum, maximum and timeout for the connection pooling might alleviate the issue.

One explicit measure to robustify the system could be to open only one connection per membership provider and per other providers that are guaranteed to issue calls serially per silo (serializing state calls isn't sensible). If this is an issue, this will affect also application database calls.

Tangentially related, exposing ADO.NET pool to application code, in this case to Orleans to make very large scale deployments more robust and efficient: https://github.com/dotnet/corefx/issues/26714 .

I have not verified this situation myself, so take it with a pinch of salt.

/cc @HermesNew, @shlomiw.

<edit: Something that might make the situation worse, assuming this is a problem:

When connection pooling is enabled, and if a timeout error or other login error occurs, an exception will be thrown and subsequent connection attempts will fail for the next five seconds, the "blocking period". If the application attempts to connect within the blocking period, the first exception will be thrown again. Subsequent failures after a blocking period ends will result in a new blocking periods that is twice as long as the previous blocking period, up to a maximum of one minute.

Source: https://docs.microsoft.com/en-us/dotnet/framework/data/adonet/sql-server-connection-pooling .

One might get into trouble quickly in Orleans cluster with potentially thousands of persistence operations, reminders etc.

Assuming returning connections to the pool is a problem, one idea (in addition to mitigating with more robust pooling settings) is to implement https://github.com/dotnet/orleans/issues/3908#issuecomment-367689247 and/or items 3. and 4. at https://github.com/dotnet/orleans/issues/4691. In the MySQL case another connector could be used, and in the PostgreSQL/Npgsql case a newer version of the library.

It might make sense to encourage everyone to update to the latest version of Npgsql and remove offloading to the background, then test whether the MySQL tests with the newest libraries are still problematic and, if not, remove offloading to a background task there too.

We have connection pool exhaustion in our Orleans client. We are using PostgreSQL with the AdoNetClustering option in our Orleans client. It's very strange that an Orleans client needs more than 15 connections.

```
[Error] Exception occurred during RefreshSnapshotLiveGateways_TimerCallback -> listProvider.GetGateways()
Npgsql.NpgsqlException (0x80004005): The connection pool has been exhausted, either raise MaxPoolSize (currently 15) or Timeout (currently 15 seconds)
   at Npgsql.ConnectorPool.AllocateLong(NpgsqlConnection conn, NpgsqlTimeout timeout, Boolean async, CancellationToken cancellationToken)
   at Npgsql.NpgsqlConnection.<>c__DisplayClass32_0.<
--- End of stack trace from previous location where exception was thrown ---
   at Orleans.Clustering.AdoNet.Storage.RelationalStorage.ExecuteAsyncTResult
   at Orleans.Runtime.Membership.AdoNetGatewayListProvider.GetGateways()
   at Orleans.Messaging.GatewayManager.RefreshSnapshotLiveGateways_TimerCallback(Object context)
```

@fduman The pool should keep some connections open and multiplex queries over the connections, and if you are using only membership queries, exhausting fifteen connections in a short period of time seems fairly implausible (pooling or not). But there are potential issues (see previous discussion and search engines) and it's difficult to tell from this description what the issue could be.

From the stack I see RefreshSnapshotLiveGateways_TimerCallback -> listProvider.GetGateways (maybe @ReubenBond or @xiazen can tell something about that faster than I can currently), but unfortunately I'm not currently able to tell how often and at what points this query happens. I'm thinking that cluster-side membership queries happen fairly rarely but the client-side queries could be more frequent. More frequent queries could mean exhausting the pool, but it would still mean that on this particular machine there would need to be over 15 queries open that have taken over 15 seconds to run, so that no connection could be taken from the pool to satisfy the outstanding queries. Which is odd. Another possibility is other applications connecting to the database server that take resources, specifically connection handles. As a more general mitigation in addition to client-side pooling, pgBouncer might be helpful, see https://www.percona.com/blog/2018/06/27/scaling-postgresql-with-pgbouncer-you-may-need-a-connection-pooler-sooner-than-you-expect/ (this is a bit tangential).

If you want to double-check, you can paste the connection strings from the cluster and client side and other relevant setup information here or ping me in Gitter.

@veikkoeeva The connection is used only for the AdoNetClustering option. If I don't limit the max pool size to 15 connections, it grows to 100 within an hour. I had to switch to StaticClustering, but that is not a scalable solution for us.

RefreshSnapshotLiveGateways_TimerCallback is on the client side. By default it is invoked once a minute.

@fduman Are you reusing a single IClusterClient and not creating a new one for every request?

@sergeybykov You are right. We have just discovered a bug in our app which reinstantiates IClusterClient many, many times. It also causes a serious memory leak. I think the connection pooling issue is related to this bug.

Thank you.
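For readers hitting the same symptom, the pattern being suggested is to build and connect one IClusterClient per process and hand that same instance to every request. A minimal sketch, assuming Orleans 2.x with the ADO.NET clustering package; the cluster and service ids, the connection string and the `SharedClusterClient` class name are placeholders:

```csharp
using System;
using System.Threading.Tasks;
using Orleans;
using Orleans.Configuration; // ClusterOptions
using Orleans.Hosting;       // UseAdoNetClustering extension

public static class SharedClusterClient
{
    // Lazy<T> runs the factory at most once, even if many requests
    // ask for the client concurrently on first use.
    private static readonly Lazy<Task<IClusterClient>> Instance =
        new Lazy<Task<IClusterClient>>(CreateAndConnectAsync);

    // Every caller awaits the same task and so shares the same client.
    public static Task<IClusterClient> GetAsync() => Instance.Value;

    private static async Task<IClusterClient> CreateAndConnectAsync()
    {
        IClusterClient client = new ClientBuilder()
            .Configure<ClusterOptions>(options =>
            {
                options.ClusterId = "my-cluster"; // placeholder
                options.ServiceId = "my-service"; // placeholder
            })
            .UseAdoNetClustering(options =>
            {
                options.Invariant = "Npgsql"; // PostgreSQL, as in the report above
                options.ConnectionString =
                    "Host=db.example.local;Database=orleans;Username=orleans;Password=changeme"; // placeholder
            })
            .Build();

        await client.Connect();
        return client;
    }
}
```

Request handlers would then call `var client = await SharedClusterClient.GetAsync();` instead of building a new client. One limitation of this sketch: if the first connection attempt fails, the faulted task stays cached, so a real application would want a retry filter on `Connect` or a way to reset the instance.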

Great. Should we close this then? Seems like the rest here is not Orleans specific. @HermesNew @veikkoeeva

@sergeybykov I don't know if this was the issue @HermesNew experienced, but having a lot of clients, each one opening (though also closing) connections, can definitely cause a situation where the number of connections grows. Traditionally 100 isn't that many, I think it's the ADO.NET default (though on Azure-hosted databases the server-side limit is around 60, I think) and it is often even raised. Other than that, I think there isn't much we can do without new information.

@sergeybykov I think this issue can be closed. This can't be solved in Orleans. I am using Consul now.
