hi im toying around with orleans and on my machine. i can receive 10 000 "hello world" msgs (without doing anthing with em). i know that the main advantage of orleans is its ability to work in a cluster but i wonder why that number is that small.

Is there a known botlleneck? (Schedulers/Networking/Locking)

Are there any plans to improve?

Looking at the code i at least think there should be potential.

small changes:

Network code does not use async io! why?

IncomingMessageAcceptor and OutgoingMessageSender use socket directly. a BufferedStream for both would make sense from my point of view.

bigger changes propably beyond 2.0 scope:

I would like to experiment with some of the currently in the work stuff of netcore 2.1 eg. System.IO.Pipelines, System.Channels. using that new stuff should significantly improve perf.

sidenote:

i created a prototype/experiment for mqttnet which boosted msgs/sec 10 times with the new networking / channel api.

https://github.com/JanEggers/MQTTnet/tree/system.io.pipelines

thoughts?

All 44 comments

also i cannot get the benchmark project running.

i added Orleans.CodeGeneration.Build to the dependencies of grain contracts and implementation but i still get an exception saying theat no implementation for the grain can be found.

Can you share how exactly you are measuring throughput? There are many subtleties in benchmarking in general, and doing it with Orleans in particular. How are driving load? With a single client thread or more? How many cores does the machine have?

If you are sending request from client, even if on the same machine, messages go over TCP with full mem-copy and serialization. If requests were produced by grains within a silo, the picture would be very different, especially with immutable data being passed.

With a single client thread or more?

yup that is exactly what im doing.

How many cores does the machine have?

https://ark.intel.com/de/products/88970/Intel-Core-i7-6820HQ-Processor-8M-Cache-up-to-3_60-GHz

i send request to one grain and intentionally dont await the response in the client so i can send requests with maximum throughput and that is causing my 32 GB Memory to be eaten up in minutes and all cores are at 100%.

code can be found at:

https://github.com/JanEggers/OrleansTest

if i add await here:

https://github.com/JanEggers/OrleansTest/blob/bf7b87cc38d927295a1b1126672d1aed6b201235/OrleansTest/Program.cs#L72

i get 1000 msgs/sec and memory ist stable but cpu is still at 100 %.

Not calling await has other implications, You are then relying on the .NET TPL library to execute those tasks on the thread pool.. If no one is awaiting the tasks, the behavior beings to change from what you'd expect.

Data serialization is expensive, too. I have seen more throughput using 2 SiloHosts, and 4+ clients. (all separate VMs)

Data serialization is expensive, too.

yup but im just sending a "hello world" and whatever is the msgframe. with binary serialization and on localhost id expect that should work for at least 100k msg/sec. if kestrel can handle 1.7 mio "hello world" request i just wonder why orleans cannot.

@davidfowl: do you think the packages builded for project Bedrock https://github.com/aspnet/KestrelHttpServer/issues/1980 could help improve performance of orleans networking?

The observed behavior is expected for use case of stacking requests to single grain without awaiting.

If to call that case benchmark - from memory standpoint it will show overhead from storing pending grain calls in memory (memory footprint of metadata of average grain method call). As for CPU - assuming that grain method doesn't have any logic - costs of Orleans internal scheduling and synchronization + optional IO - serialization overhead.

But the recommended approach is to fanout requests into multiple grains so that more cores could be utilized at time, and either await the results or lose the backpressure.

The issue with low throughput with 100 % CPU is due to spinning around internal Orleans queues and currently in process of fixing.

@dVakulen thx for the explanation

sry im mixing things now but: i think about plugging a package based on my mqtt experiments in as Orleans.Streams.IStreamProvider to see if that would improve throughput. can i just implement that interface and stick it in a servicecollection and orleans picks it up when requesting that streamprovider by name or is there some more glue code involved?

i send request to one grain and intentionally dont await the response in the client so i can send requests with maximum throughput and that is causing my 32 GB Memory to be eaten up in minutes and all cores are at 100%.

This benchmarking methodology is problematic for multiple reasons.

As was already pointed out, using a single grain as a target is insufficient due to the single-threaded execution guarantee. We usually recommend sending load to a relatively large number of grains (e.g. 1000) by round-robining or otherwise distributing requests across them in order to better utilize capacity of a silo and to be closer to real life scenarios.

Sending a request without awaiting it doesn't actually send anything. It merely add the request to the queue of requests to be sent. With no back pressure mechanism it is easy for a client thread to quickly grow that queue to an unreasonable size. The recommended approach is to control the number of in-flight requests and measure by either a) slowly rowing the rate of requests or b) (my preference) using the

AsyncPipelineclass that implements a simple backpressure mechanism.Running client (load generator) and silo(s) on the same machine makes them compete for system resources and muddies the picture. In some cases, client thread(s) aren't generating work fast enough to fully saturate a silo. When a silo physically isolated from clients, it's easier to see which side is the bottleneck.

Judging by CPU utilization numbers is often misleading because of the spinlocks used by Orleans Scheduler and in general by how those counters work. We've seen in some cases that doubling load on a silo would increase its reported CPU utilization by 5-10%. We found it more reliable to measure max sustained throughput using the

AsyncPipelinemechanism on the load generators side. That allows to fully saturate silos without tripping them over due to be built-in backpressure.

We've measured a silo processing >100K simple remote requests per second when running on 7y old 8-core servers. So you should be able to get much more than the 10K you reported on your hardware.

i think about plugging a package based on my mqtt experiments in as Orleans.Streams.IStreamProvider to see if that would improve throughput. can i just implement that interface and stick it in a servicecollection and orleans picks it up when requesting that streamprovider by name or is there some more glue code involved?

That's an interesting thought. I wonder what @jason-bragg and @xiazen think about it. Orleans Streaming was designed and optimized primarily for high throughput consuming and producing events from/to persistent queues. That's why there's a non-trivial stack there with caches, backpressure, load balancers, etc.

If your target scenario is to ingest high volumes of events from clients and process them with grains, then I think you could simply use the existing SMS stream provider that does not use persistent queues, and sends events directly as grain calls. The key way to increase throughput is to batch events, so that fewer messages are used for delivering same number of messages.

if i add await here:

https://github.com/JanEggers/OrleansTest/blob/bf7b87cc38d927295a1b1126672d1aed6b201235/OrleansTest/Program.cs#L72

That's inefficient because you are awaiting one call at a time. A more efficient way is to add a bunch of Tasks (e.g. 1000) into a list, and then await them all with await Task.WhenAll(list), but the AsyncPipeline approach is even better.

@JanEggers, first I want to thank you for your interest and thoughts on improving our networking. Your suggestions and efforts are very welcome. Various engineers have taken optimization passes and the layer has improved over time, but I believe there is still room for improvement.

Network code does not use async io! why?

There was (is?) a bug in the async api. However, I'm unconvinced the use of async would make a significant difference. Orleans, at the networking layer, is mostly an RPC system. It's networking is tuned for efficiency more than response time or throughput. That is, it's important for the rpc layer to leave as much resources as is practicable to the application layer, even at the cost of some response time or throughput. With proper batching of incoming and outgoing messages, I'm not convinced async would benefit us much under load. Having said that, we still intend to move to async calls when the issues with the api have been resolved.

can i just implement that interface and stick it in a servicecollection and orleans picks it up when requesting that streamprovider by name or is there some more glue code involved?

Currently stream providers must implement IStreamProviderImpl and must be registered via RegisterStreamProvider (or xml config). When this pattern is followed, the stream provider should automatically show up as a names service in the services container. We're working on making this more open, but we're not there yet. While what you describe is possible, it will not be trivial, as the queue balancing and stream subscription management are not generalized enough to be standalone systems so you may need to implement your own versions of these, which is going to hurt.. I don't want to discourage you, but neither do I want to give the impression that implementing a stream provider from scratch is simple.

thx for the info and answering some of my initial questions.

can someone please have a look at

https://github.com/dotnet/orleans/tree/master/test/Benchmarks/Benchmarks

even with some changes i cant get it running. i made it a console app and added Orleans.Codegeneration.Build to the GrainInterfaces and Implementations.

the error i get after starting:

Cannot find an implementation class for grain interface: BenchmarkGrainInterfaces.MapReduce.ITransformGrain2[System.String,System.Collections.Generic.List1[System.String]]. Make sure the grain assembly was correctly deployed and loaded in the silo.

i think it is important to use your benchmarking code when tinkering with the networkstack or integrating other components,

That's weird, adding Orleans.Codegeneration.Build should make the codegen work. How is your app loading assembly ? Did you load the grain assembly into your silo?

@JanEggers We've discussed the Benchmarks project internally and it does look like it is out of date and no longer working, though I'm not familiar with the project so I may be in error. We'll get a PR out to fix the project.

I apologize for the inconvenience, that project does not seem to be part of our nightly runs so it slipped through the cracks. Thanks for finding this.

Submitted a PR #3652 to fix the code gen of our Benchmark app. This should get the benchmark app running again. You are welcome to take a look if you want to run our benchmark app before the PR is merged. Sorry for the inconvenience that brought you. And thanks again for finding this.

But I wasn't involved in the benchmark development, so I'm not quite familiar on what it is testing. If I recall correctly, our benchmarks were introduced by @dVakulen , and later revised by @ReubenBond. Maybe they can bring more insights on this. Also thanks both for contributing these benchmarks to our code base.

@JanEggers, this is a (poor) presentation I made on the Orleans networking layer a while back. It's 'mostly' still accurate.

https://www.youtube.com/watch?v=F1Yoe88HEvg&t=1228s

Note: I was working on streaming a lot when I gave this presentation, so I sometimes misspeak and refer to streaming, but this presentation has nothing to do with streaming, it's all networking.

@JanEggers yes this is a perfect use case for bedrock 😄

@davidfowl how do we follow bedrock so we can start prototyping asap?

There's nothing concrete yet. We're still working through the priorities. The closest thing are "pubternal" transport APIs that kestrel exposes.

While trying to understand the performance characteristics of Orleans Transactions, I needed to have a baseline of normal grain calls to compare against, so I created a ping benchmark.

Ping Benchmark #3674

This benchmark should not be considered an accurate measure of Orleans performance, as it uses two silos and a single client in the same process and the load is actually generated within the silo (not by clients), but it would probably be useful for prototyping networking optimizations and exploring the actor model's performance characteristics.

@sergeybykov why was this closed? did you improve lately?

also @jason-bragg thx for adding another benchmark. as you state the load is generated by a silo so for my usecase this is not realistic. i have mqtt devices and each device generates 1K msgs per second. if i use orleans i should at least be able to process 10K msgs/sec in one cluster node. I also had a look at Azure IOT Hub but that is way to expensive for the ammount of messages i need to process.

i updated to 2.0 Beta1 memory usage is much reduced compared to 1.5 which is nice but im still just seeing 500 msgs/sec.

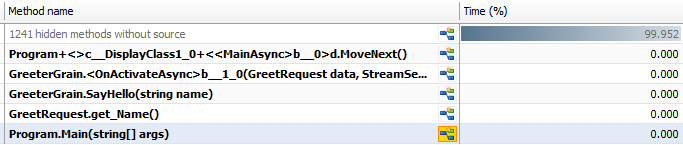

as pictures are easier to grasp Ants Performance Profiler sais 99.95 % methods without source (== Overhead)

I ran ping benchmark on my machine and it sais 1330 msg/sec cold and 2300 msg/sec warm

@JanEggers I was under the impression the discussion exhausted itself. Happy to reopen if you'd like to continue.

im on vacation next 3 weaks so i hope i have some time to provide a prototype with System.Threading.Channel instead of BlockingCollection......

@JanEggers

"1330 msg/sec cold and 2300 msg/sec warm"

I'm seeing radically different numbers.

From command line run of release build on local dev machine:

Running Ping benchmark

Cold Run.

3252923 calls in 30443ms.

106852.90542982 calls per second.

0 calls failed.

Warm Run.

3361778 calls in 30415ms.

110530.264672037 calls per second.

0 calls failed.

Elapsed milliseconds: 63515

@jason-bragg sry visual studio (devtools) seems to slow it down that much.

I ran the test again from console and now things look way better:

Running Ping benchmark

Cold Run.

2120324 calls in 31804ms.

66668.4693749214 calls per second.

0 calls failed.

Warm Run.

2168648 calls in 30692ms.

70658.4126156653 calls per second.

0 calls failed.

Elapsed milliseconds: 69728

Press any key to continue ...

@JanEggers It's usually not VS itself but debugger attached by default (on F5). Ctrl-F5 should be equivalent to running outside VS.

first experiments with System.Threading.Channel dont look good 50000 calls per second. have to find something else to speed things up....

@JanEggers Can you share the code (a gist of something) of what you were trying to do? @dVakulen has done a series of major performance improvements, and he is looking at rewriting the scheduler for better perf.

here is the code https://github.com/JanEggers/orleans/commit/0cc7bbe7721c69a5f9ee8737fa2aa8099065ea38

ill keep you posted i i find a way to squeeze more calls out of it.

i already raised an issue in corefx while experimentig with channels they need an api to get multiple items (reduced locking overhead) and a count for buffer level

@sergeybykov can you please have a look at https://github.com/JanEggers/orleans/tree/System.Threading.Channles-experiments

I did not provide a pr as it is just experiments and the channel package is still in preview and i made some unrelated changes that need to be cleaned up.

but i managed to boost perf in pingbenchmark from 80K (current master) to 120K msg/sec.

i also modified the loadgrain so i can use it to generate load from client. (to compare and analyze overhead of remoting) roughly I get half the number of messages when using the client to generate load.

i cant push it further since visual studio performance profiler and dev tools skrew up perf, at work we use ANTS profiler but at home i have no license. can you advice on tooling i could use to analyze performance?

@JanEggers Thank you! Sounds promising. Although I likely won't be able to look closer before the new year, as I'm technically on vacation. :-)

Tagging also @dVakulen and @ReubenBond.

I was going to suggest using the JetBrains dotTrace EAP, since the EAP version is free and has async tracing support, but they just released the final version today, meaning the EAP is closed for now. https://blog.jetbrains.com/dotnet/2017/12/19/meet-resharper-ultimate-2017-3/

Otherwise, I suggest trial versions of ANTS or dotTrace. There's Intel vTune, but it is rather expensive. No other CPU profiling tools come to mind.

Changes in https://github.com/JanEggers/orleans/tree/System.Threading.Channles-experiments will have conflicts with ThreadPools consolidation PR.

I'd consider to either delay experiments with channels until merge of that PR or perform them on top of it.

ill delay my pr as i said channels are still in preview.... just give me a ping when it is merged please.

Sure, will do.

@JanEggers Maybe Windows Performance Toolkit (some example by Alois Kraus here) and for memory his MemAnalyzer.

Could be interesting to @ReubenBond too, Alois' the Definitive Serialization Performance Guide.

Alois' blog has plenty of example cases, but there are other blogs in the Internet too utilizing Windows Performance Toolkit.

@JanEggers the https://github.com/dotnet/orleans/pull/3792 was merged, you can continue the experiments. But the BlockingCollections is now mostly gone, and replaced with work-stealing enabled queues.

thx for notifing. i will have a look if i can squeeze out more.

any updates ablout System.Threading.Channels?

nope didnt have time yet

as orleans now is using pipelines ill close this issue and open a new one if I find room for improvement somewhere

Most helpful comment

im on vacation next 3 weaks so i hope i have some time to provide a prototype with System.Threading.Channel instead of BlockingCollection......