Openrefine: Reconciliation: how to improve the "guess column type" functionality ?

As already pointed out in the documentation, the "guess column type" function of reconciliation services is unsatisfactory. The choices are often too specific. As a result, it's rare that a user choose one of the propositions.

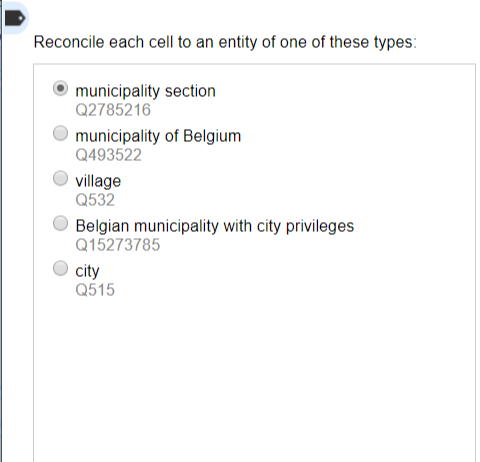

Example: I have a column containing a mix of Belgian municipalities and sections of municipalities. Here are the propositions of the reconciliation service with Wikidata:

You can see that none of them really corresponds to what I need, that is to say a sort of "territorial administrative entity (of Belgium)".

I think it's very difficult (if not impossible) to find a system that works well with any reconciliation service. But for Wikidata, the default one, why not look for Least common subsumers (the Lowest common superclasses in the ontology) of the first two or three propositions ? This can be done with a Sparql Query.

In the case of Municipalities of Belgium and Municipality Sections, the LCS would be "political territorial entity (Q1048835)" and "administrative territorial entity of Belgium (Q15623264)", which would be in both cases a much better definition of my column.

All 9 comments

Very interesting question! I guess the current heuristics were appropriate for Freebase, but not for Wikidata. What it currently does is count the number of times each type appears in the suggestions for the first few rows, and order the types by decreasing frequency.

The queries made to guess the types look pretty much like any normal query so reconciliation services are not expected to return something tailored for the type detection algorithm. That is usually quite nice because as a reconciliation service developer you do not have to worry about this part. But it makes it harder to tweak the type detection results without changing the global behavior of the interface.

One thing that could potentially solve the issue without changing anything in OpenRefine would be that the Wikidata service returns not only the direct types, but also the indirect types via the "subclass of" (P279) property, up to a certain level. In this way, more general types would emerge. The problem is that fetching these more general types is expensive, so we probably don't want to do it for all queries - ideally it should happen only during type detection (and even so - we want this part to be quick too!).

@wetneb The Sparql query I'm talking about seems fast. I'd rather wait five seconds for relevant proposals than get bad ones in two seconds.

oh sorry I overlooked your proposal, just dumped my own ideas >_<

So, given a list of names in the column, how would you find the qids to feed to that query?

You're putting your finger on the difficult part. That's why I thought we could use the QID of the proposals made by the standard reconciliation service. For example, the QID of the first two props (or all five, that would work just as well) in my screenshot. But of course, it's always easy to make suggestions when you do not code them yourself.

Ah right, I see, that would make sense. It would probably be quite accurate and efficient. The only issue is that this would be specific to Wikidata, so we would hard-code something for Wikidata in OpenRefine (which has been done for Freebase, but it's better if we can avoid that).

I guess the "clean" solution to implement that is to add a new (optional) endpoint to the reconciliation API, that takes a list of strings and returns type suggestions. If the reconciliation service does not provide it we would resort to the current heuristics. That would enable us to use a custom algorithm for Wikidata. Potentially we could reuse that for the data extension feature too (so that we get more property suggestions, for instance in the case where the column was reconciled against no particular type).

The only issue is that this would be specific to Wikidata

This is obviously a problem. I personally think that Wikidata (Open Refine's standard reconciliation service) might deserve special treatment.

That said, the Least common subsumer method can be transposed to any RDF graph with a basic ontology (ie, a system of entities and classes linked by rdf:type or instanceOf relationships).

If someone wants to test the idea on a list of QID, here is a little Python3 script (remember that i'm not a developer...)

# -*- coding: utf-8 -*-

import requests #need to be installed : pip install requests

import json

from bs4 import BeautifulSoup #need to be installed : pip install bs4

def getLCS(list_of_qids):

"""

Get the Least common subsumers between several Wikidata qids in a list

Return a Python list with their english labels

"""

array_string = ", ".join(["wd:" + x for x in list_of_qids])

query = {"query": """

SELECT ?lcs ?lcsLabel WHERE {

?lcs ^wdt:P279* %s .

FILTER not exists {

?sublcs ^wdt:P279* %s ;

wdt:P279 ?lcs .

}

SERVICE wikibase:label { bd:serviceParam wikibase:language "[AUTO_LANGUAGE],en " . } }""" % (array_string, array_string)

}

url = "https://query.wikidata.org/sparql"

r = requests.get(url, params=query)

soup = BeautifulSoup(r.text, "lxml")

return [x.text for x in soup.find_all("literal")]

if __name__ == '__main__':

list_of_qids = ['Q2785216', 'Q493522' ]

print(getLCS(list_of_qids))

One thing that _could_ potentially solve the issue without changing anything in OpenRefine would be that the Wikidata service returns not only the direct types, but also the indirect types via the "subclass of" (P279) property, up to a certain level. In this way, more general types would emerge.

This sounds like a good solution and would mimic the behavior of Freebase's much flatter type hierarchy.

The problem is that fetching these more general types is expensive, so we probably don't want to do it for all queries - ideally it should happen only during type detection (and even so - we want this part to be quick too!).

That sounds like an implementation issue. How often does the type hierarchy change? Not very often, I bet, so it probably can be cached effectively. As with the Freebase practice of denylisting Topic, you probably want to exclude any top level Thing equivalent types.

facet of P1269 as well as part of P361 and maybe opposite of P461 could also be very useful to put into type guessing.

As a good example: _materialism_ Q7081

Just one simple concept I've thought of in the past of improving the UI for type guessing. Have 2 columns. Direct types, and Indirect types (which might just be labeled simply as "Other" and that are inclusive of P1269, P361, P461, maybe P31?). And where type guessing is a step in a workflow (Next/Previous buttons). And the boxing could be stretched horizontally for space savings and quick eyeballing and quick clicking like so:

Click a link to choose a type

Direct | Other

-------- | -----------------

materialism (Q7081) | monism (Q178801) , idealism (Q33442)

racism (Q8461) | prejudice (Q179742) , crime (Q83267) , soci(et)al entirety (Q96108364) , identity politics (Q2914650) , ideology (Q7257)

antisemitism (Q22649) | racism (Q8461) , bigotry (Q859781)