Openapi-specification: nullable vs type arrays (JSON Schema compatibility)

I understand that fully supporting type arrays is a challenge for a variety of reasons. I would like to suggest that a compromise that would be functionally identical to nullable but compatible with JSON Schema:

typeMUST be a string or a two-element array with unique items- if an array, one of the unique items MUST be

"null"

The only way in which this is inherently more difficult to implement than nullable is that parsing type becomes slightly harder. The functionality, validation outcomes, code generation, etc. should be identical.

All 18 comments

I know this has been discussed greatly in the past, and as a brief recap:

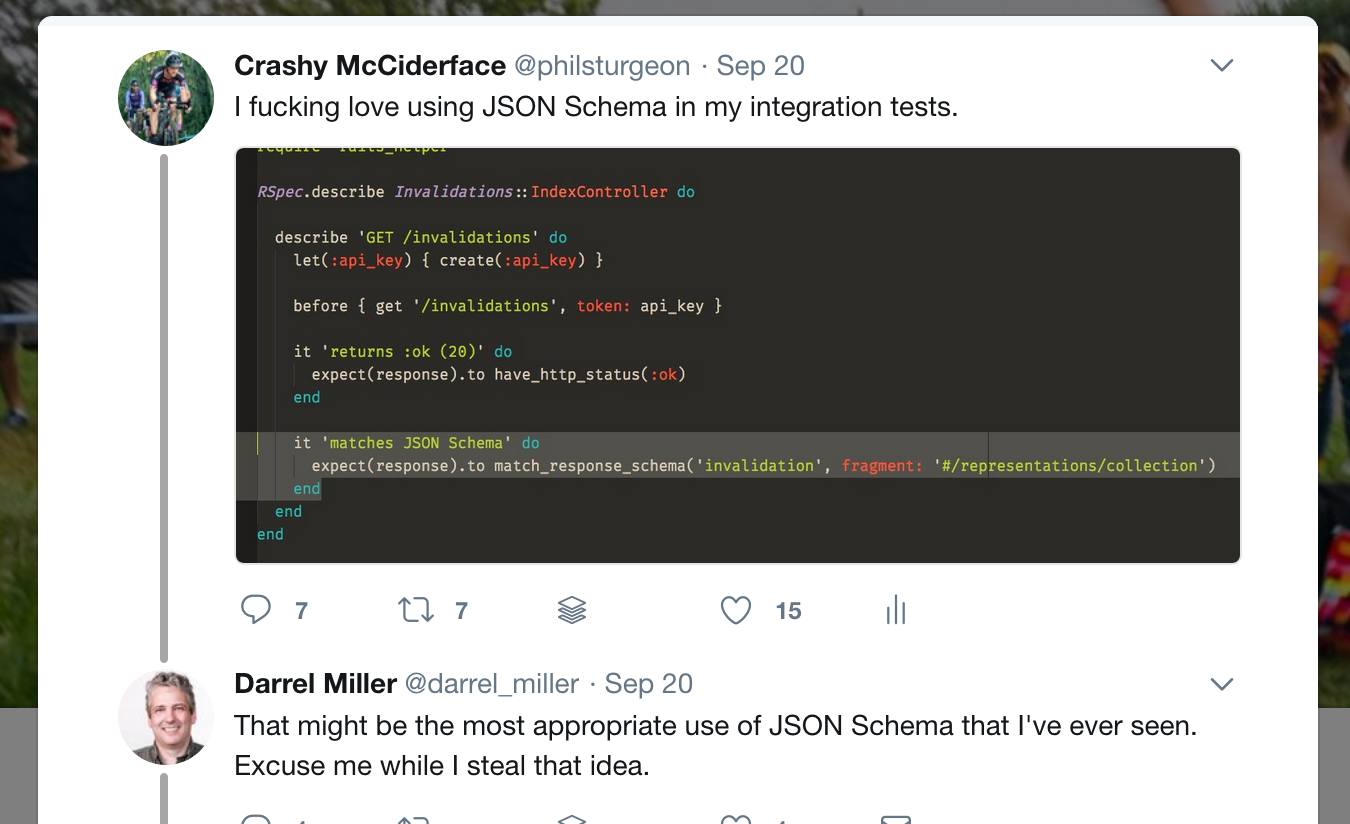

I love OpenAPI, have been pushing it hard at work, and I also love contract testing. JSON Schema is fantastic at contract testing, doing this sort of thing:

@darrelmiller likes the idea, but this is really tricky as the very act of using JSON Schema is causing me to write _invalid OAI files_. I regularly use type: ['string', 'null'] and luckily various parsers support that, even though its invalid.

It's so common for OAI users to use type: ['string', 'null'] that tools are _already_ hacking in support on top of the spec, creating a superset of functionality that only works on some tools...

So that same thread @darrelmiller asked this question:

Do we special case that particular use of the array? Or do we give the middle finger to people who want to generate models?

Honestly it would be fantastic if there was some sort of solution that lead to JSON Schema and OAI handling types identically (supporting a recommendation for "Parameter Bags" to avoid giving out middle fingers as not all languages support union types, etc), but I will be happy to just avoid that whole can of worms.

I change my stance on this and suggest we go with @handrews' proposal:

if an array, one of the unique items MUST be

"null"

That would cover 99% of what people are trying to do. We'd still be left with an item on the "JSON Schema vs OAI discrepancies" list, but it wouldn't cut quite so bad, and it would make thousands of invalid OAI files valid... 👍

We'd still be left with an item on the "JSON Schema vs OAI discrepancies" list

I think the key thing is that the remaining item would be a "feature of JSON Schema that is not supported", while the current situation is "feature of JSON Schema that is functionally supported but requires a conflicting syntax".

I'm not that bothered by a system saying "hey, we don't quite want to use all of JSON Schema as defined for validation". In fact, I want to spend effort in draft-08 making it easier to do that in general, so that projects like OpenAPI don't have to awkwardly document the deltas and either write their own tooling or try to wrap up existing tooling some way to forbid bits and pieces.

With the two-element array option, and some effort in draft-08 around being able to declare support for things like vocabulary subsets, we could get OAI and JSON Schema fully compatible with each other, without requiring OAI to support everything JSON Schema dreams up for validation. After all, validation and code generation are really not the same things, and we should not pretend that they are.

Another reason for supporting @handrews suggestion is that despite commentary/opinion otherwise on whether the v2.0 spec formally permits this or not, the real world continues to intrude.

Companies like SAP (see http://api.sap.com/) produce exactly that construct in their v2.0 output. They expect tool providers to consume it because:

- It is formally valid from the official spec,

- The Swagger 2 on-line editor is happy with it,

- A null value is essential in business APIs,

- And they are big enough to insist

I can confirm that the first three bullet points in @RobJDean185's comment led to our use of two- and three-element array values for type in Swagger 2.0 descriptions published on https://api.sap.com/.

The fourth bullet point actually didn't play a role :smile:

Once we support publishing OpenAPI 3.0.0 descriptions we'll of course strictly use single string values for type, combined with nullable and anyOf.

In Swagger 2.0 descriptions we also use "type":["number","string","null"] for

- numeric data types that allow

-Infinity,Infinity, andNaN, which unfortunately don't have a native representation in JSON and have to be represented as strings, or - numeric data types whose precision is larger than that of

double, so numbers have to be wrapped as strings to avoid precision loss duringJSON.parse()in JavaScript clients

(e.g. for data types DECFLOAT34, or NUMBER(...) with precision greater than 17)

This might be an addition to @handrews' proposal: also allow (a permutation of) this special three-element array for numeric data types.

We use the following script to convert nullable

def _convert_nullable(schema):

ret = copy.deepcopy(schema)

if ret['type'] == 'object':

for key, _ in ret['properties'].items():

ret['properties'][key] = _convert_nullable(

ret['properties'][key])

if ret['type'] == 'array':

ret['items'] = _convert_nullable(ret['items'])

if 'nullable' in ret and ret['nullable']:

ret['type'] = [ret['type'], 'null']

return ret

This would be a very helpful step towards aligning OpenAPI and JSON Schema.

Can we also confirm that null is permitted as a value in an OAS Schema that doesn't specify a type, and doesn't specify nullable as true or false? AFAICT, the current OpenAPI specification doesn't make it clear whether null is valid against a typeless schema.

If we say that null values are valid by default, it's another step towards aligning OAS and JSON Schema, and it should make the transition to the new 2-element array syntax that much easier. With today's syntax, you can still disallow null values by specifying nullable: false or by specifying a type without nullable.

So the translation would be:

| Valid Types

| Current OAS Syntaxtype | nullable | New OAS Syntax

|

| :--- | :--- | :--- | :--- |

| any type including null | not specified | not specified or true | {} |

| any type except null | not specified | false | not: {type: 'null'} |

| specific type T | T | not specified or false | type: T |

| specific type T or null | T | true | type: [T, 'null'] |

Correct?

... Just revisited the spec and I see that nullable has a default value of false. There's no qualifier there about presence or absence of a type assertion. So here's a corrected table:

| Valid Types

| Current OAS Syntaxtype | nullable | New OAS Syntax

|

| :--- | :--- | :--- | :--- |

| any type except null | not specified | not specified or false | not: {type: 'null'} |

| any type including null | not specified | true | {} |

| specific type T | T | not specified or false | type: T |

| specific type T or null | T | true | type: [T, 'null'] |

This has implications for allOf inheritance: A subtype cannot make a non-nullable type nullable. Even if the base schema doesn't specify a type and doesn't specify nullable: false, it is non-nullable by default. So unless OpenAPI has some totally novel inheritance semantics for nullable, making a subtype schema nullable has no effect.

This is a subtle point that is probably going to trip up some API designers. See #1368 for an example. Maybe there's a way we can clarify this in the spec.

@tedepstein if you have a keyword that can possibly fail validation by being absent, that is very, very bad. The empty schema {} must always pass. Is this really the current behavior? Because if so that is a conceptually huge (if hopefully very rare in practice) divergence from JSON Schema.

nullable is a fatally flawed keyword and the single biggest headache we have for interoperability. Breaking the empty schema is so fundamental... I assume the only reason this works is that people either have never implemented it accurately or just very rarely use null.

If there is any wiggle room in the wording of the spec, the only way out of this that I can think of is to define the default behavior in terms of type. Namely:

If type is present with any value, nullable defaults to false

If type is absent, nullable defaults to true

I wonder if any implementations effectively do that anyway.

@tedepstein if you have a keyword that can possibly fail validation by _being absent_, that is very, very bad. The empty schema

{}must always pass. Is this really the current behavior? Because if so that is a conceptually huge (if hopefully very rare in practice) divergence from JSON Schema.

nullableis a fatally flawed keyword and the single biggest headache we have for interoperability. Breaking the empty schema is so fundamental...

Totally. nullable throws a monkey wrench into the whole JSON Schema paradigm. It's the only keyword that _expands_ the allowed range of values. As such its neither an assertion, nor an annotation, nor an applicator. It's something else entirely.

I assume the only reason this works is that people either have never implemented it accurately or just very rarely use

null.

I tend to think that this problem (and others) haven't surfaced very often because people rarely do message schema validation in actual API implementations and client libraries.

The mainstream code generators don't implement it. I don't know if there are open source libraries that implement OAS-compliant schema validation; and if there are, they may not be widely used. Which is kind of unfortunate, really. A missed opportunity.

If there is any wiggle room in the wording of the spec, the only way out of this that I can think of is to define the default behavior in terms of

type. Namely:If

typeis present with any value,nullabledefaults tofalse

Iftypeis absent,nullabledefaults totrue

That's really what I was hoping for. Having a keyword with different default values, depending on another keyword, is definitely odd. But in this case, it would be much better than the alternative.

Strictly speaking, this would be a breaking change. But maybe the TSC will have an opinion about this, or some insight into the original intent.

I wonder if any implementations effectively do that anyway.

If anyone can point to any OpenAPI-compliant schema validators in the wild, that would help.

http://OpenAPI.tools/ plenty on there.

--

Phil Sturgeon

@philsturgeon

On Apr 29, 2019, at 07:09, Ted Epstein notifications@github.com wrote:

@tedepstein if you have a keyword that can possibly fail validation by being absent, that is very, very bad. The empty schema {} must always pass. Is this really the current behavior? Because if so that is a conceptually huge (if hopefully very rare in practice) divergence from JSON Schema.

nullable is a fatally flawed keyword and the single biggest headache we have for interoperability. Breaking the empty schema is so fundamental...Totally. nullable throws a monkey wrench into the whole JSON Schema paradigm. It's the only keyword that expands the allowed range of values. As such its neither an assertion, nor an annotation, nor an applicator. It's something else entirely.

I assume the only reason this works is that people either have never implemented it accurately or just very rarely use null.

I tend to think that this problem (and others) haven't surfaced very often because people rarely do schema validation. The mainstream code generators don't implement it. I don't know if there are open source libraries that implement OAS-compliant schema validation; and if there, they may not be widely used.

If there is any wiggle room in the wording of the spec, the only way out of this that I can think of is to define the default behavior in terms of type. Namely:

If type is present with any value, nullable defaults to false

If type is absent, nullable defaults to trueThat's really what I was hoping for. Having a keyword with different default values, depending on another keyword, is definitely odd. But in this case, it would be much better than the alternative.

Strictly speaking, it would be a breaking change. But maybe the TSC will have an opinion about this, or some insight into the original intent.

I wonder if any implementations effectively do that anyway.

If anyone can point to any OpenAPI-compliant schema validators in the wild, that would help.

—

You are receiving this because you commented.

Reply to this email directly, view it on GitHub, or mute the thread.

Thanks, @philsturgeon .

@handrews , I think that answers our question. Assuming at least some of those message validators follow the spec exactly, changing nullable to a different default would be a breaking change.

But allowing the 2-element type array as you've suggested, and possibly deprecating nullable, would not be.

I thought the idea was to add alternativeSchemas in 3.1 to avoid needing to worry about fixing any of this, then 4.0 kicks out Schema and makes alternativeSchema the new Schema.

Instead of trying to ad hoc fix single discrepancies between OpenAPI Schema Objects and the already out of date versions of JSON Schema it was roughly based on, the idea as I understood it was to get rid of OpenAPI Schema Objects entirely and let JSON Schema, XML Schema, Protobuf, etc take over the domain model.

--

Phil Sturgeon

@philsturgeon

On Apr 29, 2019, at 12:18, Ted Epstein notifications@github.com wrote:

Thanks, @philsturgeon .

@handrews , I think that answers our question. Assuming at least some of those message validators follow the spec exactly, changing nullable to a different default would be a breaking change.

But allowing the 2-element array as you've suggested, and possibly deprecating nullable, would not be.

—

You are receiving this because you were mentioned.

Reply to this email directly, view it on GitHub, or mute the thread.

@philsturgeon there's also https://github.com/OAI/OpenAPI-Specification/pull/1766

Why can't we just add a type: null and then use oneOf for situations where you can provide an explicit value or null? Then we don't need to support some foreign/new type where it's value is an array of types, yet we still have a way to indicate that null is a valid value.

@whitlockjc are you aware of how normal JSON Schema handles this?

I mean, that's an option, but why the excessive resistance to compatibility?

Here is the proposal to clarify nullable. It is currently under review by the TSC, hopefully to be included in a v3.0.3 patch release of the OpenAPI spec:

This was fixed in #1977 so we can close this.

Most helpful comment

I know this has been discussed greatly in the past, and as a brief recap:

I love OpenAPI, have been pushing it hard at work, and I also love contract testing. JSON Schema is fantastic at contract testing, doing this sort of thing:

@darrelmiller likes the idea, but this is really tricky as the very act of using JSON Schema is causing me to write _invalid OAI files_. I regularly use

type: ['string', 'null']and luckily various parsers support that, even though its invalid.It's so common for OAI users to use

type: ['string', 'null']that tools are _already_ hacking in support on top of the spec, creating a superset of functionality that only works on some tools...So that same thread @darrelmiller asked this question:

Honestly it would be fantastic if there was some sort of solution that lead to JSON Schema and OAI handling types identically (supporting a recommendation for "Parameter Bags" to avoid giving out middle fingers as not all languages support union types, etc), but I will be happy to just avoid that whole can of worms.

I change my stance on this and suggest we go with @handrews' proposal:

That would cover 99% of what people are trying to do. We'd still be left with an item on the "JSON Schema vs OAI discrepancies" list, but it wouldn't cut quite so bad, and it would make thousands of invalid OAI files valid... 👍