Octoprint: small corners dont print smoothly (raspberry pi)

Hi,

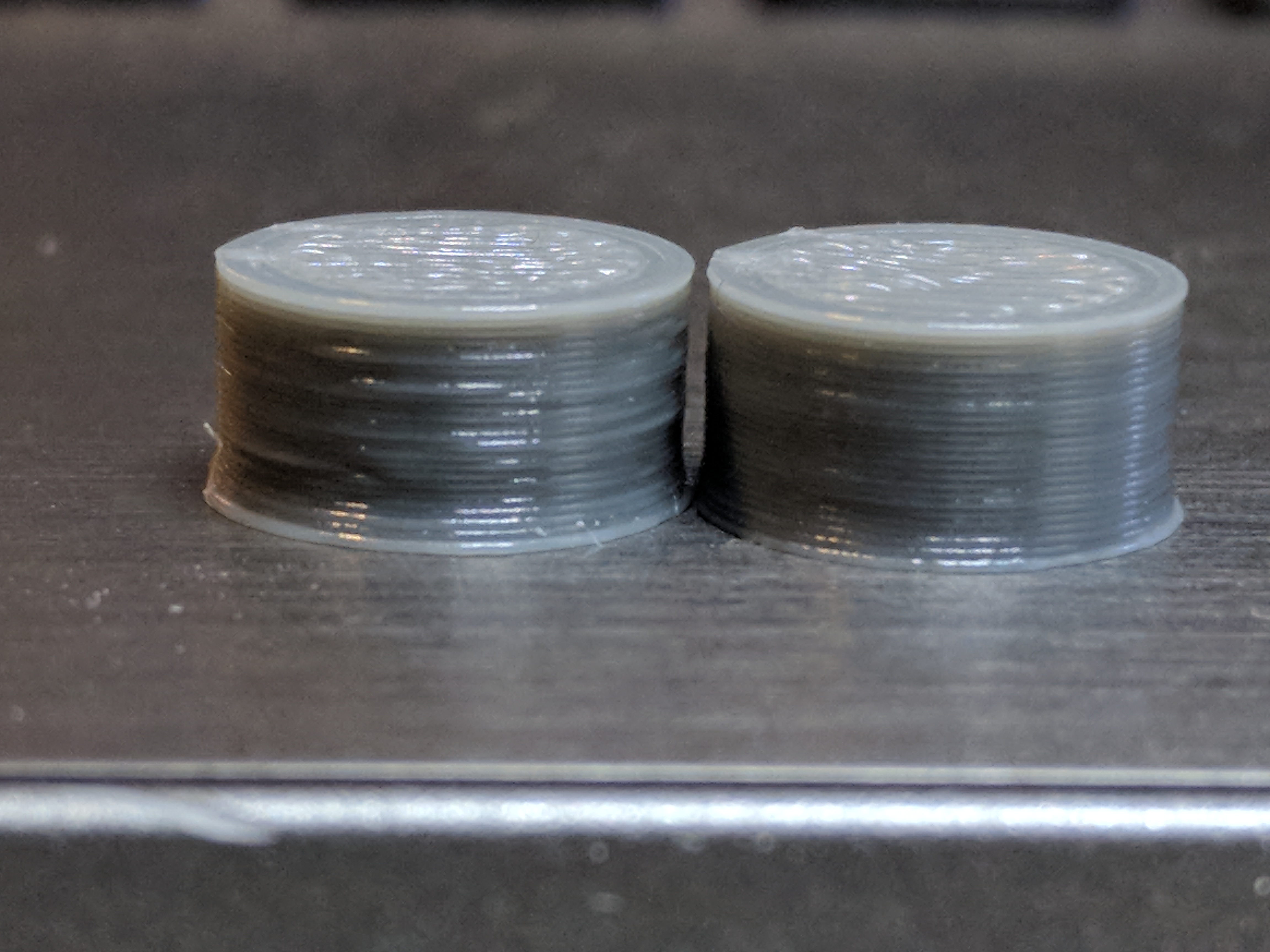

I have had a couple of gear prints that fail get bad print results when using OcotPrint. The same gcode in repetier via a pc works perfectly without any small stops or hesitations in the movement.

This is one of the gears in thing:243278

example images of full print from pc/repetier vs stopped print OcotPrint.

A video of the problem. Long straight or circles work without any problems but a few seconds in when it prints the "gears" it starts to stop mid movement.

https://www.youtube.com/watch?v=tbUntyq7djY

The log from the heart gear print

https://gist.github.com/LangBalthazar/11037683

The gcode from the heart gear print:

https://gist.github.com/LangBalthazar/11037928

I am running Branch: devel, Commit: bf9d5efe43a1e57aacd8512125082ddca06b4efc

I have done a update and upgrade (so the pyserial should be 2.7 and work on baud 250000, correct or could this be my problem?) My printer is a Velleman K8200 / 3drag.

Any ideas on why this happens when printing from octoprint?

Best regards

Balthazar Lang

All 163 comments

You are printing from the Pi, right? You might simply crash against it's

limitations there. For toolpaths with a lot of small lines (e.g. lots of

rounds and direction changes, as in gears) you will have a lot of gcode

lines generated, each only moving the head a tiny fraction. Now, all those

lines of gcode need to be transfered to the printer, and that quite fast.

On a regular work pc, you won't run into problems here. On a pi though,

transfer plus house keeping can be too much. Personally, I've taken to

doing prints like that from SD instead of streaming them via USB. Serial

communication is a bottleneck. Printing slower might help.

It might be better than just stating that raspi might be too slow, then maybe Octoprint can detect that it is falling behind, and report an warning or such.

Suggestions on how to do this (without making the whole processing even

slower) are welcome

First suggestion is you could (while anaylsing the gcode), try to detect Perimeter Movements, e.g. by Speed, and check if there are many "small" movements after each other. Just one suggestion, I believe if I think that further I'll find a few more ways to check that.

If the errors are happening because the processor gets too loaded, maybe just having a graph showing the processor utilization?

Hello,

I had an idea about speeding up the whole Analysis Process and even detecting for small movements... I don*t know if it's a good idea so i post it before doing any programming.

Idea:

Do the analysis in the browser before uploading everything to Octoprint.

e.g.: I want to upload File XY, i drop it onto the Browser, now a Javascript Gcode Analyser will process the whole file, sending all necessary informations with the file (as a comment or JSON-Data), Octoprint wouldn't need to do anything anymore.

If you notice areas with many small movements you could inject a Gcode to slow things down, make a Comment, or warn the uploader.

The problem I have with this approach is that it's moving too much of the core work over to the client. This is critical due to two reasons.

- Never trust the client

- My guess is that this would make uploading from a mobile device or remotely (think: cloud slicer) way more complicated/close to impossible.

IMHO the gcode analysis should be the server's responsibility (and right now it doesn't add much anyways besides some stats, which is why it's done asynchronously and should never be done when printing), as should be anything to do with file processing.

Recognizing small movements might be possible on the fly during printing, so we could display a warning in such a case. What I want to prevent though is adding even more detection code and stuff in the send loop that will put even more strain on the poor CPU and make serial communication even more fiddly (which it already is anyways thanks to the protocol), which is why I'm quite reluctant to add large "am I slow now?" detection mechanisms in there. This is also the reason for my backtalk above ;)

I'm not even sure if a software solution is the best approach here to be honest (due to the serial bottleneck and also the fact that as said above, anything done in Software will only put more strain on the system). A small part of me is rather thinking in the direction of emulated SD card attachments connected via hi-speed-USB to the host.

In any case, at the current state it's a non trivial issue.

anyway to compress gcode before sending it over the wire ?

Does this problem still occur in OctoPrint 1.2.x? I backported a lot of the fixes I did on the commRefactoring branch (which sadly proofed after a lot of work on it to be unviable), so the problem shouldn't exist as much anymore (although the serial line will still always be a bottleneck the smaller the segments get, there's nothing I can do about that).

I have been bit by this as well.

I made a small g-code file, only containing a few G0 commands and tried to have octoprint(1.2.9) send it to marlin over raspi serial (the one on the GPIO) at 250000 baud.

I then hooked up a LSA to RX and TX and measured time between "ok" and the next "G0" command: approx 10ms.

So octoprint seems to be busy doing stuff for 10ms between each command getting sent. seems to me like a place to look for some improvements. If I make file long enough octoprint maxes the raspi to 100% CPU load.

First place to look could be disabling terminal logging during prints. (i tried with a filter, but it did not help much)

The firmware should buffer up G0 commands, so unless you are trying to print more than 100 line segments per second you shouldn't notice. What speed are you printing at and how short are your line segments during a curve?

The test data mentioned is crafted only track down where the bottlenecks are. No real printing going on here. But if I make small enough curve segments it will exhaust the buffer in the firmware. I'm pointing out that the send loop isn't particular fast, which I think I read some of the comments above to confirm as well.

Well yes it will always be possible to exhaust the buffer with a finite comms speed, but is 10ms a problem in a practical sense? It doesn't seem to affect my prints but then I don't print segments shorter than my filament width.

Perhaps I should explain why I came across this in the first place. I was wondering why upload to sd card takes to long. And here I more or less constantly see a 6.6ms delay from previous "ok" till next line starts transmitting.

Sorry for trying to make the example simpler when all that it did was to confuse you guys.

So I thought I would investigate this but I can't get upload to SD to work at all with Version: 1.2.9 (master branch). The log just says octoprint.filemanager.analysis - INFO - Starting analysis of local:cal.gco and that is it. It never sends anything to the serial port.

Does this work for anybody else?

There is a bug with sd uploads in 1.2.9 that I already have fixed on the

maintenance branch, but I got sick before I could release that and since

then it has been on hold. See #1224

OK I downgraded to 1.2.8 using your instructions. The sudo service octoprint restart did not find a service but a manual reboot worked.

The delay from OK to the next line is 16ms on my RPI B. Marlin only takes about 0.68ms to process the line and reply with OK. The line takes 5.3ms to send so it could potentially run a lot faster.

I agree, there's something up here.

@MortenGuldager also pinged me on another channel and suggested he'd open a new ticket for that. He observed a significant performance drop between 1.2.2 and 1.2.9 so it definitely looks like there was some issue introduced somewhere between those versions. For this a new ticket indeed might be better (specific issue introduced through a code change somewhere).

It's actually a perfect timing now since the 1.2.10 has gotten delayed anyhow, this way that could contain another valuable fix if we figure this out. I have to admit, I'm unsure how to best measure this stuff (not sure my cheap logic analyser is up to that actually) and I'm still not fit again, so any help from you two in that matter is welcome to get that ironed out as fast as possible.

I measured it with an extremely cheap Chinese clone of a Saleae logic analyser. Although I do have much better equipment that was the easiest to attach to the machine in my garage that uses ttyAMA0 at 115200, rather than USB at 250000. A long time ago I found that faster for SD upload but I can't remember if that was an early OctoPrint or Pronterface.

The next step I will try is 1.2.2. To get further it might be necessary to use a Python profiler. Or I could add code to pulse GPIO lines at specific points in the code as I have six more channels on this device.

my fault. perhaps not the latest octopi. The "fast" one reports Version: 1.1.1-30-g4fede5a (master branch)

and the img file I loaded on the RPI sd was 2015-01-31-octopi-0.11.0.img

That is bad then, because 1.1.1 contained bugs in the comm layer that could basically ruin prints under the right conditions (race conditions in resend handling and overrunning the firmware's receive buffer) that the big rework in 1.2.x solved. So no going back to the old version without basically breaking everything left and right again for a lot of people.

There are still points in the code where I could imagine there are optimization possibilities though.

well, lets leave it as it is here. I will look into other ways for speed-uploading and eventually make a feature request for a faster serial communication.

Sorry for the fuss I stirred up.

I found I had the serial log enabled. Turning that off reduces the latency to just over 9ms, so similar to @MortenGuldager's finding. If the latency was zero it would go nearly three times faster.

The sd upload via serial is more like a hobbled wheel anyhow. Even if you blast at full serial rate, a large file still takes ages. I've recently been rather experimenting with FlashAir (sd card with built in Wi-Fi) and that looks like a way less annoying approach.

I'll still take another look at the current code though once I'm back on my feet, your mention of a Saleae clone reminded me that I have a ScanaPlus that might be able to help after all.

After the file is upload OctoPrint seems to get into a strange state where it doesn't send anything. I have to disconnect and reconnect. The final comms looks like this:

Send: N33708 M84 X Y E*116

Recv: ok

Send: N33709 M29*22

Changing monitoring state from 'Printing' to 'Operational'

Recv: Done saving file.

Then nothing sent until I disconnect and reconnect. It will display output from the machine, for example if I reset it but won't send anything, even from the send button.

I have noticed this happen a lot since I updated from an old version. I am using /dev/ttyAMA0, which unlike USB is connectionless, so previously I could even power off the machine and power it back on again and OctoPrint wouldn't care.

Nothing suspicious in the log, just a couple of warnings that don't look related to comms.

2016-02-28 10:17:25,789 - py.warnings - WARNING - /home/pi/oprint/local/lib/python2.7/site-packages/requests-2.7.0-py2.7.egg/requests/packages/urllib3/util/ssl_.py:90: InsecurePlatformWarning: A true SSLContext object is not available. This prevents urllib3 from configuring SSL appropriately and may cause certain SSL connections to fail. For more information, see https://urllib3.readthedocs.org/en/latest/security.html#insecureplatformwarning.

InsecurePlatformWarning

and

2016-02-28 10:23:13,179 - octoprint.server.util.sockjs - WARNING - Could not send message to client 192.168.1.97: object of type 'NoneType' has no len()

Is it perhaps because Marlin doesn't send an OK after Done saving file.?

Exactly. Another lovely Marlin bug.

You can get stuff going again with the fake ack button in the terminal advanced options. I thought I'd also built in a work around for that recently, might mistake it for another Marlin bug though. They do like to forget their ok here and there.

I seem to be in bug city now. I can get things moving with a fake acc but I don't seem to be able to reset the line numbers. M110 on its own seems to do nothing and M110 N0 gives:-

Recv: Error:No Checksum with line number, Last Line: 33709

Recv: Resend: 33710

What is supposed to happen here? How did it work before I updated OctoPrint?

The last time I looked at FlashAir it seemed to create its own network access point rather than joining an existing network so I didn't see how it could be useful

It did work before because OctoPrint didn't care about missing oks in the old version, leading to the problems I described above since it just kept sending then even if the firmware didn't report readiness to receive. Solving this leads to problems with buggy firmware which doesn't signal it's ready to receive in all such cases where it actually is (hello marlin), so basically doing it right on the host side makes firmware issues better observable.

Just from the two lines up there I'd suggest to send an M110 with line number and checksum. Marlin doesn't accept just a line number without a checksum and older versions have a bug where they interpret an N parameter to an otherwise line number less command as the prefixed line number and then fall flat on their faces when the line turns out to not be checksummed (because it always had to be both line number and checksum together). So basically your Marlin version is seeing "M110 N0" as if it were "N0 M110" where it would expect "N0 M110*checksum" (sorry, I don't have the checksum at hand right now), so it complains about having received a line number without checksum and wants a resend. Cute, isn't it? Oh, and I've also seen flavors which didn't accept an N parameter to M110 but demanded M110 to be sent with line number and checksum to do anything. So there's some attempt in OctoPrint to take care of that case too, I can't remember right now under which circumstances that holds, could also be at fault here.

I don't know why a regular M110 refuses to work though, are you still in state streaming/printing or operational again?

Did I mention that I hate how all those various firmware versions that are out there are riddled with horrible bugs and how hard it is to write code that tries to not have everything crash and burn regardless of if they are present or not?

dunno if it is intended, but sometimes 1.2.9, when uploading to sdcard, have more than one line outstanding, and then marlin will soon respond with two OK's in a row. seen both in terminal tab and with my LSA (which of course is a Chinese Saleae clone)

Send: M28 /small.gco

Recv: echo:Now fresh file: /small.gco

Recv: Writing to file: /small.gco

Changing monitoring state from 'Operational' to 'Sending file to SD'

Recv: ok

Send: N15 G1 X0.001*75

Send: N16 G1 X0.002*75

Recv: ok

Send: N17 G1 X0.003*75

Recv: ok

Send: N18 G1 X0.004*67

Recv: ok

Send: N19 G1 X0.005*67

Recv: ok

Send: N20 M29*42

Changing monitoring state from 'Printing' to 'Operational'

Recv: ok

Send: M20

Recv: Done saving file.

N15 and N16 are output really quick after each other, only 1.86ms in between, much faster than octoprint usually output commands.

Looking at the source to the version of Marlin I am using M110 on its own is a NOP. The only mention of M110 is it suppresses the line number check. So by side effect sending M110 with a line number and checksum will change the next expected line number. There is no explicit handler for M110 though.

I note that OctoPrint now sends naked M110 at the beginning. Is that intended as a NOP or is it intended to reset the line counter?

Does OctoPrint expect the line counter to go up forever or does it expect to reset it for each file?

I can easily fix up my Marlin to match what OctoPrint expects I just need to know what that is.

At the beginning of a connection M110 is expected to be a nop (if there was something like an actual nop, I'd send it there, all I want to do is to get the firmware to respond with something recognizable to make sure it's a printer and not a coffee machine). Although for firmwares that require line numbers to be always used (default config for repetier afaik) it also resets the number if the corresponding flag is set, which is important in cases where establishing a serial connection does not also reset the printer firmware. So welcome side effect under certain scenarios, don't care in others.

First line of an actually printed file should be a checksummed M110 to reset to 0. I've thought about doing regular resets over the course of printing to not exceed firmware side line number restrictions (uint32 I think?), but that might break resends in scenarios where commands are not acknowledged in the order they are sent (another firmware issue) and hence is tricky to get right.

About the two ok's, that would be interesting to get a log of, but it depends on which firmware version (order of command acknowledgement), read buffer, planner buffer, previous commands, command order, etc.

In general, what the protocol should do is, when I send it a command, process that (in case of G0 through G4 that means put it into the planner buffer if there's space, in case of other stuff do whatever it does, produce output) and then acknowledged with "ok". If there's a line number and checksum present, that should be checked before that processing step if the number is in sequence and the checksum matches and if not a resend line should be sent instead of processing the line and the input buffer flushed.

What's the situation instead is that on some firmware versions G0 through G4 get ok'd as soon as they are parsed from the serial input buffer but before they are processed, so their ok can over take commands that were preceding them, some answers lack an ok and the resend request includes an ok though it shouldn't, other firmware continuous sending resend requests several times to make sure they arrive, others don't flush their input on a resend and keep getting confused by whatever else was still in the buffer, some firmwares send "wait" every second if the planner buffer runs dry, others just don't do anything, yet others can cope with having the serial buffer getting full and just block writing whereas other implementations crash in such cases...

... If you two don't mind, I think I need to take a break here, I'm seeing red again ;)

OK, I can see how frustrating this must be for you. It winds me up and I am

only dealing with one version of Marlin at a time and don't have any comms

errors that need to be handled.

One last clarification though please: are you saying that I should put M110

at the start of my gcode files now?

I will go back to the latest release and do the patch to make uploads work

and I will patch Marlin to make M29 send OK.

On 28 February 2016 at 14:44, Gina Häußge [email protected] wrote:

At the beginning of a connection M110 is expected to be a nop (if there

was something like an actual nop, I'd send it there, all I want to do is to

get the firmware to respond with something recognizable to make sure it's a

printer and not a coffee machine). Although for firmwares that require line

numbers to be always used (default config for repetier afaik) it also

resets the number if the corresponding flag is set, which is important in

cases where establishing a serial connection does not also reset the

printer firmware. So welcome side effect under certain scenarios, don't

care in others.First line of an actually printed file should be a checksummed M110 to

reset to 0. I've thought about doing regular resets over the course of

printing to not exceed firmware side line number restrictions (uint32 I

think?), but that might break resends in scenarios where commands are not

acknowledged in the order they are sent (another firmware issue) and henceis tricky to get right.

About the two ok's, that would be interesting to get a log of, but it

depends on which firmware version (order of command acknowledgement), read

buffer, planner buffer, previous commands, command order, etc.In general, what the protocol should do is, when I send it a command,

process that (in case of G0 through G4 that means put it into the planner

buffer if there's space, in case of other stuff do whatever it does,

produce output) and then acknowledged with "ok". If there's a line number

and checksum present, that should be checked before that processing step if

the number is in sequence and the checksum matches and if not a resend line

should be sent instead of processing the line and the input buffer flushed.What's the situation instead is that on some firmware versions G0 through

G4 get ok'd as soon as they are parsed from the serial input buffer but

before they are processed, so their ok can over take commands that were

preceding them, some answers lack an ok and the resend request includes an

ok though it shouldn't, other firmware continuous sending resend requests

several times to make sure they arrive, others don't flush their input on a

resend and keep getting confused by whatever else was still in the buffer,

some firmwares send "wait" every second if the planner buffer runs dry,

others just don't do anything, yet others can cope with having the serial

buffer getting full and just block writing whereas other implementations

crash in such cases...... If you two don't mind, I think I need to take a break here, I'm seeing

red again ;)—

Reply to this email directly or view it on GitHub

https://github.com/foosel/OctoPrint/issues/450#issuecomment-189885040.

Sorry, I was unclear there. The first line OctoPrint should send from a printed file should be M110. OctoPrint basically injects that before it returns the actual file lines. I wrote should there since I'm currently unsure about the exact logic in place, I'm fairly sure it will force that with a checksum, but I can't check right now (phone's browser doesn't like github file listings with syntax highlighting at all, always crashes on anything with more than a few hundred lines).

I also fixed a bunch of typos and clarified some things in my last reply after I posted it, so if you are following this thread via mail, maybe take another look at that in the ticket.

So I patched 1.2.9 with the fix in devel and it uploads to SD OK without any change to Marlin. It isn't missing the OK at the end any more but the OKs do seem out of step. I think perhaps OctoPrint doesn't wait for one from M28 but gets one, so there is always an extra OK kicking about so it doesn't get stuck at the end.

Here is a log of a small transfer. I have added line numbers to the OKs manually and it seems to bear out my theory. Marlin M28 sends OK but OctoPrint doesn't expect it and M29 doesn't send OK but OctoPrint does expect it.

The extra OK during the upload might actually be beneficial because it makes OctoPrint send the next line early. It works because Marlin can buffer the next line while processing a command.

OctoPrint sends M110 N0 to start a print over serial but it doesn't when it does an SD upload, so the numbers just continue on up. This goes wrong if host or printer are reset.

Ah... then it was M28 for which there is a workaround in place, and your version doesn't have that firmware bug yet or anymore, but on the other hand has the M29 issue and that indeed cancels out each other.

I can't send the M110 when it already has sent the M28 since that would have it become part of the file on the sd (I'm unsure what consequences that would have, might be that it would have the printer reset line numbers "on its own" when the file is printed, without the host being able to know that, bad thing). But I should probably force a checksummed M110 before the M28.

I might be wrong but as far as I can see Marlin's behaviour hasn't changed over the lifetime of OctoPrint. M28 is a normal command and is encountered in command mode so it sends an OK using the default mechanism.

M29 is sent during streaming so is not executed but a special case check is made to allow it to terminate the stream (otherwise it would get written to the card). See https://github.com/MarlinFirmware/Marlin/blob/RC/Marlin/Marlin_main.cpp#L749. That is why it doesn't send an OK. It does if you send it when not in stream mode, but then it is just a NOP, see https://github.com/MarlinFirmware/Marlin/blob/RC/Marlin/Marlin_main.cpp#L3415.

I'm fairly sure I've seen it send an ok in the past (I've gotten a bit sensitive when it comes to missing oks over the past year or so), but that might have been some non-mainline-form of Marlin or even some other firmware.

In any case, even if it's handled before the actual command handling, it should still send an ok.

Of course, since it's so wide spread, it's basically impossible to eradicate this bug at this point, even if mainline Main gets that patched up, so I guess I need yet another special case here. Hooray.

Btw, having to check for stuff like that also makes the code slower. Still thinking that moving the ok handling to a point as early as possible into the read loop should give a nice boost (right now it's at the bottom, that's stupid), so that's the current battle plan.

Yes there no reason why G29 couldn't send OK but it seems it has always just sent "Done saving file" instead, so perhaps that is the de-facto protocol.

Am I correct in thinking it is this line: https://github.com/foosel/OctoPrint/blob/master/src/octoprint/util/comm.py#L1048 that makes OctoPrint not wait for ok after M28? Should that just be moved to here: https://github.com/foosel/OctoPrint/blob/master/src/octoprint/util/comm.py#L1067 to make it not wait for one after "Done saving file" instead?

I will have a look at the effect on timing later today. As I said before the extra OK during the transfer probably speeds it up and any optimisation you do by moving the ok handling up the loop will not beat sending the command before getting the OK for the last one as it does now.

I am surprised a few lines of Python code can eat up 10ms. Are we sure is not accessing SD files somewhere? Turning on logging seems to add only another 6ms.

I clarified the last comment so if you are following this thread via mail take another look at it in the ticket.

I'm not sure that the clear_to_send should actually be removed there vs. just replicated in the M29 handling. I probably added it for a reason there (searching for the history), and I'm suspecting that it was some firmware that lacked the ok. Which makes things tricky now since it basically gets the ok count out of sync depending on the firmware. Which sucks. I started working on another attempt at a better abstracted comm layer at the beginning of the year, that also includes firmware flavor specific handling of stuff like that. Learning from past mistakes ;)

In general having an extra ok in the loop is something that should be avoided. Yes, it can speed things up if the buffer can cope with it, but it can also kill the firmware quite good depending on the board, the version etc. I've seen this happening in the past (especially printrboards appear to like locking up for a couple of seconds if they get too much stuff on the serial, and I'm not meaning that in a "they don't accept commands anymore" kinda way, which would be ok, but in a "the whole CPU seems to just stop working" kinda way. Not a hardware person, no idea what's going on there, but that's what I've seen. So I'm a bit reluctant to add that generally. But I'm also unhappy with having to add loads of new flags all the time to cope with different firmware implementations and their various bugs and differences.

In this specific case it might make sense to introduce something like a configurable "answers from the firmware that lack an ok" setting. That would allow to add or remove whatever the user's firmware does or doesn't have with regards to bugged responses there and be done with it hopefully. But it's still hackish and ugly. Meh.

Also, I might have been unclear in my last post. I don't think that the full 10ms come from just the location of the ok parsing. I'm thinking though that some fraction of it does, and that there are several places across the code here that add up to 10ms.

I also have to take another look at the PySerial docs to see if flush should maybe actually be called on the serial object after each write, or if that is a no-op in the current implementation.

Finally, there might be some issue with locks interfering here which is tremendously tricky to get a) diagnosed and b) debugged. So the idea was to first get the obvious idiocies out of the code before moving on to the more difficult stuff, to ensure the trivial stuff isn't masking the non-trivial stuff.

Once I'm back in action that is. Still down. Argh.

I think I will bring forth a small observation: While uploading to sdcard CPU maxes on the RPI.

This could indicate[1] that the problem is not "execution order" - simply said, but rather "too much work" being done per line of g-code being pumped through the engine.

The parts I mean are less a case of execution order and more of "is it this

message? is it maybe that one? Oh, or this one? maybe this one here? oh, I

know, that one? still not, hmm, maybe...". But it's also only a hunch so

far.

Since you are observing it while uploading to sd card it shouldn't be gcode

processing which would have been my first guess, have to double check that

though.

Perhaps we should have Chris, or somebody else, to confirm my observation, just to make sure I haven't fooled my self (again). At least before you burn too much fuel in your debugger.

Right now I'm sadly still a coughing mess on my couch, so not much burnt

yet ;) But any additional input on this is probably very valuable once I

can tackle it, so keep it coming!

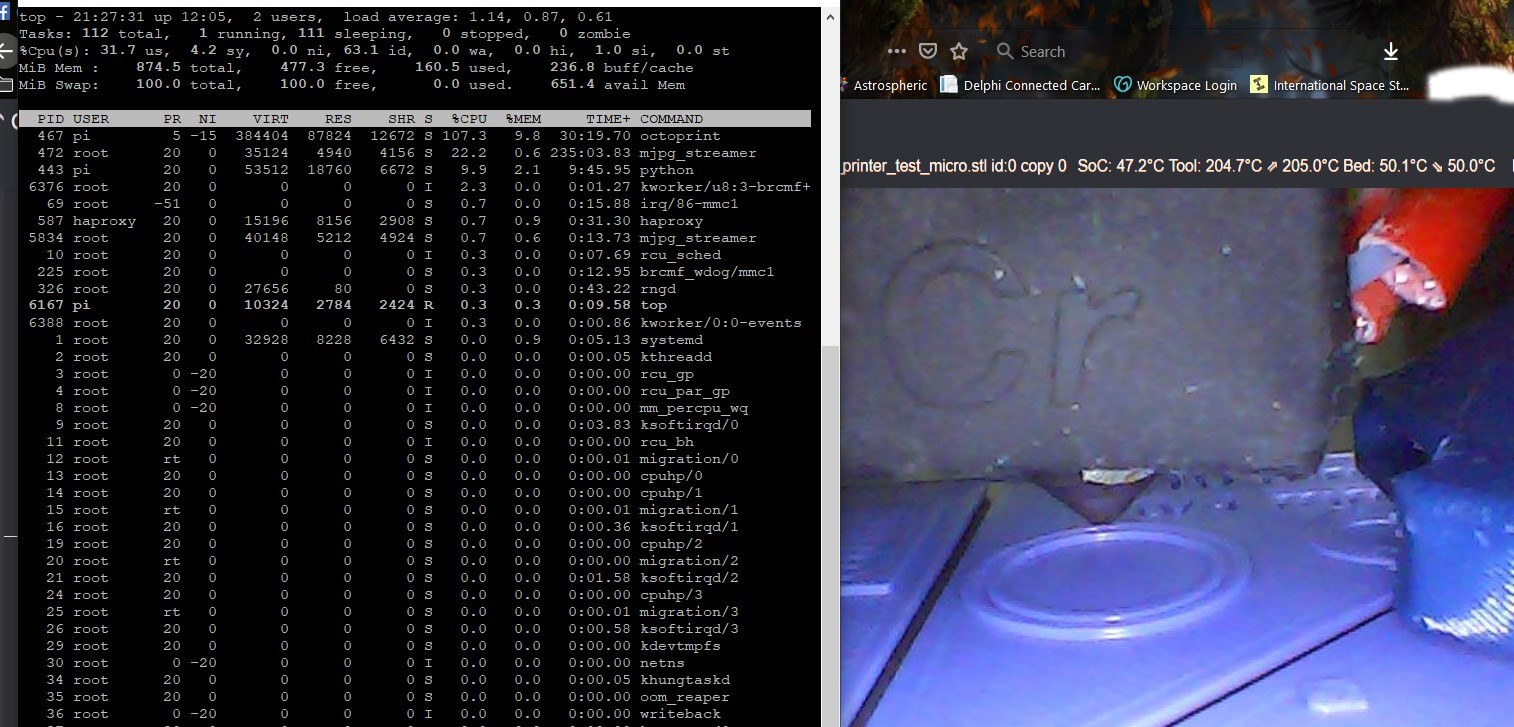

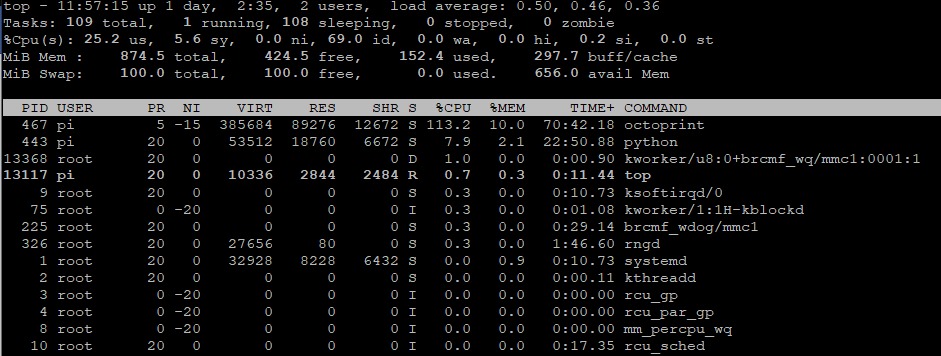

I can confirm the CPU is maxed out at 100% during upload on an RPI B with a direct serial link running at 115200. Here is htop:

I was hoping to look at the signals again today but I got an order to print something and my make shift probes fell off! Unfortunately my £3.50 logic analyser didn't come with any probes so I will have to improvise something better tomorrow. Maybe steal some from my £24000 Tektronix logic analyser from the mid 90's.

I think a python profiler might be the best way to find where the CPU time goes.

Thinking about it some more - let's ignore the 10ms for a bit but shouldn't a 100% cpu load be expected? We basically have a process here that is constantly busy either reading from or writing to the serial port. When is the core supposed to come up for a breather (= give control over to some other process) if everything runs as fast as possible here? Data trader as fast as possible is the actual goal, we want to have as high a throughput we can manage, no? (And again, please ignore that there might be some of those cycles spent on unnecessary stuff, that's another problem)

In fact, the more I think of it, the better it actually is that a 100% cpu load it's observable, since it means that the delay we are observing isn't caused by some misbehaving locks on which the code is waiting (which would give control back to the OS to give done cycles for another process), but by actual processing input and output data (which needs a closer look to remove fat, and for that a profile indeed is the tool of choice).

Or am I completely misreading things here?

It depends how efficient the serial driver of the RPI is. You should be able to transmit a string using interrupts or DMA so the CPU is available while the characters are being sent and the OK is being received. Similarly with USB where the hardware does most of the hard work and interrupts or DMAs bulk data to the CPU.

And a 700mHz 32 bit ARM should be able to send faster than a 16mHz 8 bit AVR can receive it, parse it and write it to SD via 1 bit SPI. So the RPI should be hanging around waiting for OKs not the AVR waiting for data.

Python and pyserial is indeed accountable for some of the CPU cycles being eaten. Wrote this small test program to illustrate:

#!/usr/bin/env python

import serial

import time

ser = serial.Serial('/dev/ttyAMA0', 250000)

for i in xrange(1, 10):

s = 'U' * i

t=time.time()

for n in xrange(100000 / len(s)):

ser.write(s)

t = time.time() - t

print i, t

which, when running on the RPI, model B, outputs

1 15.7945480347

2 8.64547085762

3 6.3043179512

4 4.74336218834

5 4.07250404358

6 4.0721051693

7 4.09759402275

8 4.04491209984

9 4.02989697456

This shows that size of chunks being sent down the stack and out the line matters too. For the bare bone program above line rate alone, in my case set to 250kbaud, becomes the limiting factor when outputting more than 5 bytes at a time. Below that the RPI CPU is the bottleneck.

In the extreme case, with one byte at a time, a quick calculation shows that only 25% of line capacity is utilized.

This is dumb sending alone, of course nowhere close to the work octoprint has to do, but at least it shows that the RPI is capable to saturate it's GPIO UART transmit channel using pyserial.

I am back on this now with probes that stay on!

In comms.py why is there a startFileTransfer() and a startSdFileTransfer()? Am I correct in thinking startSdFileTransfer is not used?

Now that is embarrassing, you are right.

Had some time to play now and get some results and they are very confusing!

I added resetLineNumbers() before the M28 and that works fine, see https://github.com/nophead/OctoPrint/commit/db9a8369a52a626fa8cfb385dbda7520330e2458. Having discovered I can get rid of line numbers altogether in the features settings I think resetLineNumbers() should check self._neverSendChecksum and skip sending M110 if that is set.

I looked at the transfer with the extra OK sloshing around and that looks like this:

The message takes 4.8ms down the wire. Marlin thinks about it for 0.45ms and then sends OK which takes a further 0.24ms, making a total of 5.5ms. Octoprint takes 6.5ms after the OK to send the next message making a total of 12ms per line. Note that the OK it is replying to is actually the one before and the delay from that is 17ms.

Because of the extra OK you sometimes get too messages back to back. Here is an example:

A message gets delayed a whopping 32ms from the OK that triggered it and 24ms from the last OK. Somehow that manages to make the next two messages come back to back with absolutely no delay in between. That means it must have got around the send loop is less time than it takes to send a message when normally it takes more than twice as long.

I modified OctoPrint to expect OK after G28 instead of G29 and that gets rid of the double messages as it is now waiting for the OK from the last message before sending the next one. See https://github.com/nophead/OctoPrint/commit/a2b761ed2bac09262ff65db1f46e8f3baaed1554. I remove the line = "ok" because I couldn't see a reason for it.

I expected it to get a lot slower but it is actually about the same. So it looks like the getting the OK in advance doesn't make the send loop go any faster.

I tried turning off line numbers and checksums expecting it to get a little bit faster due to shorter messages but to my surprise it got significantly slower.

I can't explain that but reading about threads in Python it seems like they are broken due the global interpreter lock. It can cause weird things like more cores making it go slower and adding CPU intensive threads can then make it go faster.

I got an RPi 3 today so I will see if that goes faster or maybe slower!

@nophead Interesting result. Now to figure out whether it is the python context switch (from the read thread that sees the ok and clears to send to the send loop) or latency in pyserial (post read or pre-write). Probably some logging we can come up with to help with that though we'll have to worry about Heisenberg :-).

I wonder what the timing graph looked like before the wait for ok when OctoPrint used to just go.

I will try rolling back to the last version I was using.

I noticed this on the OctoPrint web page: "Profiling is done with the help of PyVmMonitor." Does it work on a RPi and does anybody have experience with it?

I tried to roll back to the last version I used on this machine, which was 1.1.1-22-g83790fc. Git said "You are in 'detached HEAD' state." so just call me NOP from now on!

The ./oprint/bin/python setup.py install failed so I removed oprint and set up a new venv and tried with that. It falls over installing mock 1.3.0. mock requires setuptools>=17.1. Aborting installation

I tried sudo apt-get install python-setuptools but that says python-setuptools is already the newest version. so I gave up.

Onwards and upwards to RPi3.

Haha nophead in a detached head state.

Oh shoot, I forgot there'd be complications like those trying to go backwards.

I'm feeling like an idiot right now. I just lost half of the afternoon hunting down a delay of up to 6ms I observed when I just threw in some print debug statements out of curiosity. I found it. The serial.log was enabled m( After disabling that, back to square one...

Yes I got 6ms extra when I had the log on. That seems slow for writing a block or two to an SD card. I don't think Marlin takes that long and it is only using a single bit SPI port. RPi uses four bits.

Funny thing, that even seems to actually be the logging framework (Python's

own logging module)... I got the 6ms even on my development machine (i7

with SSD). But if you don't mind I'm not going to do a deep dive now into

getting the serial logging performant that's only for debug purposes anyhow

but rather look into the send loop ;)

I start to doubt it will be an easy problem to fix. I just made a small program that waits for "ok" and then sends a "M110 N123". To try something different I this time wrote it in javascript (node) and ran it on my regular linux desktop box. Even here I see in the area of 2ms delay between last bit of ok before the next command start to transmit.

Usually I'm very impressed of execution speed node programs. Perhaps linux serial simply isn't meant for low latency stuff...

What I did the last two days was spreading some simple print debugging over the critical parts of the comm code, mainly measuring the time between the received ok and the sent line, and then a couple of additional measurements in between. This I did on a pi2.

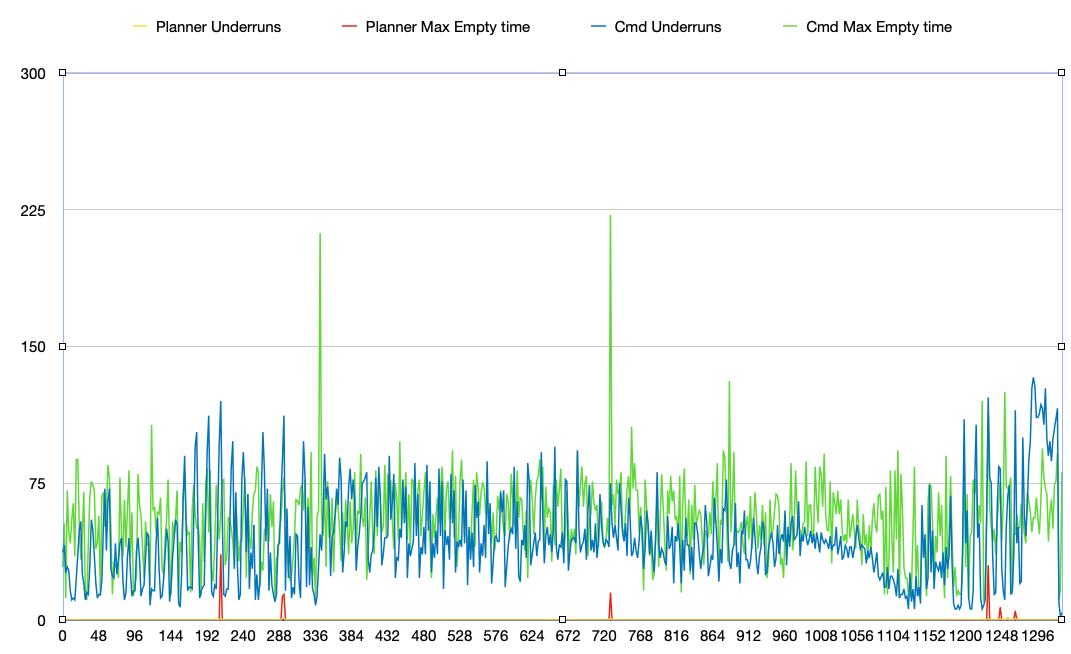

Then I started swapping things around and benchmarking a couple of parts. First of all moving the ok processing further up did indeed do improve things at least a tiny bit (up to half a ms maybe). What takes surprisingly long is a) reading a line from disk (up to 1ms), b) calculating the checksum (I benchmarked that and tried a couple of alternate implementations, didn't really change anything, still opted for the slightly fastest, half a ms to 1ms), and weirdly c) 2ms for waiting for the send queue get to complete. I also sometimes saw values of over 1ms for the sending itself. The queue issue might be caused by the implementation of pythons queue class itself. While looking at all that I got a really nice idea to decouple things further and I'll try and see if that might get rid of that particular delay.

Overall, when uploading to sd I saw delays between ok and line sent of 2ms up to 7, sometimes 8 or even 10, with most values seeming to be located around 4ms (note: that's just from watching the values scroll through, I'll have to write something to output that stuff via udp or something to another system and write into a quantifiable csv file, don't want to bog down the system under test with additional file i/o but also want to be able to analyse the data more effectively). This was not measured on the actual serial line but basically on the outer border of the comm layer. I'll see if I can combine both measure methods maybe to get some insight on how much gets lost in pyserial and os, but I'll first have to figure out how to get probes hooked up to that particular setup (hephestos 2) or get my spare ramps prepped for some tests.

Anyway, I now have at least some ideas where to look more closely, but I'm also thinking that there is time getting lost outside of my control considering your timings on the line vs mine in the code. What certainly was a relief is thatjust the presence of the hooks for processing the queued and sent lines indeed didn't cause much harm (200-300us iirc, I also measured during regular printing).

Anyway this just as a small first feedback from my side.

Observations: (I read the whole thread, have LA, 5 marlins.)

- From past experiences with 'serial' communication way up in user space, GHz of horse power on thousands of lines is being burned to perform a hundred or less instructions for real communication.

- comm.py in OctoPrint is structured (to the reader) as if it might have to speak to a thousand different

Marlins at the same instant. (Instead of -- Here is a marlin 1.0 actual product shipped - 100 printers still going)

What happens here is there may be only (exaggerated #) 3 real marlins, but the code complexity gets extremely confusing due to fixing something for this, that and not being allowed to toss obsolete printers.

OctoPrint is speaking to only 1 firmware. - There is missing feedback to show which firmwares are in use and when. So I'd expect the developer of this section of product to become extremely protective because the complexity of the fixes impact ALL and not JUST the one firmware.

- The manufacturers/Marlin owners are not "clearly" in the loop. IE ("Your dreaded machine is still running

the original version of Marlin and is clearly capable of going up to, errr, let's say 1.1.0 on your cute little 16MHz 8bit 1980's modelish processor. Why not upgrade your firmware for free!")

I would also recon the "manufacturers->selling shop/brand" would enjoy feedback/facetime with their darling customers.

Questions:

- Is it possible to "objectize" the code so that slowly over time the whole picture becomes:

"I am the code for Original Marlin",

"I handle most marlins since they added 'xxxxxx'"

When OctoPrint talks to a printer it starts only uses 1 version, only has 1 extruder, etc.

Let the customer deal with that object. (You start adding extruders, the objects getting updated. Someday we may just save your printbed for you...) - I am amazed python can respond that quickly to a hardware device. It just by brute force that it can. Pushing serial communication and command response somehow down the

stack so there is almost '0' delay would really show that the Pi is almost completely free, not 100%.

It would also allow easier printer objects in python to talk with to do higher level customer/service interactions.

I also assume socket streams and newer faster methods are out the door somewhere. They'll come to you to interface if it looks easier.

- Shouldn't octoprint be the consumers interface, the manufacturers interface, the brands interface,

the slicer's interface? Collect the knowledge. Know more than a single manufacturer knows.

@nophead, @MortenGuldager I think I've found a very big culprit, and it was a quite idiotic one (hint: commit e2ec1ae1652c69fb4fd5c142d6901a39d05ad7a6). Could you guys check out the improve/commPerformance branch (https://github.com/foosel/OctoPrint/tree/improve/commPerformance) and tell me what you are seeing?

I had to revert a lot of what I did the last few days with regards to swapping things around since it broke things severely, but in the end with the small rather non-invasive changes I now did I arrived at a very consistent 1.5-2ms per sent line on a pi2 while uploading to SD (without the patches: 2-3, some peaks around 4 -- the values in my previous post were measured with more debug output and hence differ). Again measured when seeing the ok and when sending the next line (so without additional delays added by pyserial or the OS. It might be possible to shave off some more by changing the queue handling in general, but that's nothing that could be done as part of a maintenance release since that would mean very invasive changes and those I rather reserve for larger releases that see more intermittent testing by the community over a long time.

_edit_ value range and comparison

I tried, chopped off head and everything, but nope, perhaps it went down from 7ms to 6ms, give and take. - not very consistent - will test some more tomorrow.

RPi-1B, GPIO serial, 250000baud. Lets hear it from some other headless guys.

Ah yes, octoprint says "Version: 1.2.9.post19+ge2ec1ae (HEAD branch)", so the version change should be fine. I did: git checkout e2ec1ae ; ./venv/bin/python setup.py install

With Version: 1.2.10.dev21+g7301012 (improve/commPerformance branch) I get pretty much the same performance to my last test on my branch. About 12ms cycle time and 6ms latency, i.e. 50% utilisation of 115200 kb serial link.

I also have to do fake acknowledgement at the end to get it moving again. It looks like it expects OK from M28 and M29.

At the start it sends an M110 N0 with the current line number, so that has no effect with the Marlin I am using.

For some unknown reason I couldn't start it as a service but it worked from the command line. Switching back to my fork it works as a service again.

I'm starting to think it is pyserial.

I just added a serial.flush() after my writes, which as far as I can see in the code should basically make it wait until the data has actually been sent over the serial line, and that made the line sending take 8-9ms instead of 2:

##### received line: 0.450927734375

##### received ok: 0.64111328125

##### sent line: 9.0400390625

##### received line: 0.406982421875

##### received ok: 0.61376953125

##### sent line: 8.83911132812

##### received line: 0.537109375

##### received ok: 0.61279296875

##### sent line: 8.96606445312

##### received line: 0.25927734375

##### received ok: 0.626953125

##### sent line: 9.01293945312

##### received line: 0.375

##### received ok: 0.756103515625

##### sent line: 8.85888671875

For comparison, this is without flush:

##### received line: 4.25512695312

##### received ok: 0.849853515625

##### sent line: 2.0

##### received line: 4.15087890625

##### received ok: 0.826171875

##### sent line: 1.87084960938

##### received line: 4.28100585938

##### received ok: 0.900146484375

##### sent line: 1.97802734375

##### received line: 6.89086914062

##### received ok: 0.925048828125

##### sent line: 1.88598632812

##### received line: 4.173828125

##### received ok: 0.8203125

##### sent line: 1.85083007812

##### received line: 4.34106445312

##### received ok: 0.885009765625

##### sent line: 2.22998046875

##### received line: 5.28002929688

##### received ok: 0.886962890625

##### sent line: 1.91796875

##### received line: 4.17993164062

##### received ok: 0.8671875

##### sent line: 2.06909179688

So then I dug deeper again and have now arrived at this (values not comparable to the above ones, more output means slower performance overall):

##### received line: 8.52099609375

##### --- received ok: 0.39697265625

##### --- sending from file: 0.7060546875

##### --- fetched line from file: 1.17114257812

##### --- enqueued new line: 1.4951171875

##### --- sent from file: 1.71313476562

##### --- new line to send: 1.96313476562

##### --- increment line number: 2.3310546875

##### --- calculating checksum: 2.50805664062

##### --- sending line: 2.91015625

##### --- sent line: 3.29321289062

##### received line: 8.63012695312

##### --- received ok: 0.400146484375

##### --- sending from file: 0.701904296875

##### --- fetched line from file: 1.06689453125

##### --- enqueued new line: 1.3720703125

##### --- new line to send: 1.55590820312

##### --- sent from file: 1.73193359375

##### --- increment line number: 1.94995117188

##### --- calculating checksum: 2.22119140625

##### --- sending line: 2.63500976562

##### --- sent line: 3.0439453125

##### received line: 8.48291015625

##### --- received ok: 0.39404296875

##### --- sending from file: 0.718994140625

##### --- fetched line from file: 1.21997070312

##### --- enqueued new line: 1.5390625

##### --- new line to send: 1.71508789062

##### --- sent from file: 1.88305664062

##### --- increment line number: 2.123046875

##### --- calculating checksum: 2.40625

##### --- sending line: 2.82495117188

##### --- sent line: 3.22705078125

##### received line: 8.62817382812

##### --- received ok: 0.466796875

##### --- sending from file: 0.7919921875

##### --- fetched line from file: 1.13891601562

##### --- enqueued new line: 1.44604492188

##### --- new line to send: 1.63793945312

##### --- increment line number: 1.91772460938

##### --- sent from file: 2.06079101562

##### --- calculating checksum: 2.19995117188

##### --- sending line: 2.7138671875

##### --- sent line: 3.10888671875

##### received line: 8.52294921875

##### --- received ok: 0.402099609375

##### --- sending from file: 0.7041015625

##### --- fetched line from file: 1.02099609375

##### --- enqueued new line: 1.3359375

##### --- new line to send: 1.52001953125

##### --- sent from file: 1.74194335938

##### --- increment line number: 1.98486328125

##### --- calculating checksum: 2.27685546875

##### --- sending line: 2.70703125

##### --- sent line: 3.10083007812

First of all a short explanation what the measure points are:

- read thread

received line: Right after fetching a new line from the serial port, without any processing yetreceived ok: Line logged (+ sanitized to ASCII, which is the biggest chunk of time here) and parsed asok, right after thisclear_to_send.set()gets calledsending from file: We are currently printing, so we now try to send the next line from the current file - the 0.3ms spent here are due to also detecting that we are in fact streaming, so the regular command queue doesn't apply and shouldn't be processed.fetched line from file: next line to send fetched from file, comments are stripped alreadyenqueued new line: next line has entered send queuesent from file: that's when we "surface" after entering thesending from fileprocess

- send thread

new line to send: send queue has detected that a new line to process is available (different thread from the prior steps, so we see a bit of order switchups here)increment line number: capture lock, increase line number, add line to past lines to be able to resendcalculating checksum: now going to calculate the line's checksum (prefix withN<linenumber>, build checksum, postfix*<checksum>)sending line: just beforeserial.writesent line: after returning from sending the line via serial

So what we are seeing here is the following "slow runners":

- reading a line from the file (

sending from filetofetched line from file): this is because I must strip comments from the lines I read (since I must not send comments to the firmware), and since that might have the line end up completely empty, I potentially have to read more than one line from the file too (must also not send empty lines to the firmware) - so here there's nothing to save - line number + checksum: the checksum part cannot be further optimized as far as I can see - I need to follow the algorithm here, and that means iterating over every char on the line and XORing it with the running result, I don't see how to do that differently than iterating over every char and... well... XORing. The line number delay appears to be caused by the locking I have to do here in order to prevent a potential resend response being read at the same time from jumbling up things

- processing of send queue (

enqueued new linetonew line to send): this is thegetmethod of a PythonQueueat work. Apparently there are some performance issues here. Thing is, I can't use a simpledequeuehere since I don't want a busy wait in the send loop (that would bring the CPU to its knees), so I need a lock here, and aQueueis adequeue+ lock, so I don't really see how to optimize that part - The elephant in the room, the sent-read delay (

sent lineto nextreceived line): I'm seeing a lot of time spent here. After myflushexperiment it appears to in fact be that the printer DOES send a new line quite fast, but after if fire a line to theserial.writeit takes ages to actually get sent out. As long as this is the case, basically all optimization I might be able to do in code under my control have no chance to really influence things. I'm going to give the new pyserial version a spin, maybe that improved things, but I also did a quick google search for slow writes with pyserial and the results were a bit disheartening.

Finally, how things look right now if I limit the output to received line, received ok and sent line and have received ok and sent line report relative to received line:

##### received line: 7.1240234375

##### --- received ok: 0.6630859375

##### --- sent line: 2.93310546875

##### received line: 8.42797851562

##### --- received ok: 0.680908203125

##### --- sent line: 2.61010742188

##### received line: 7.25903320312

##### --- received ok: 0.684814453125

##### --- sent line: 2.71411132812

##### received line: 7.19213867188

##### --- received ok: 0.67578125

##### --- sent line: 2.81079101562

##### received line: 7.1298828125

##### --- received ok: 0.6630859375

##### --- sent line: 2.68090820312

##### received line: 7.1259765625

##### --- received ok: 0.722900390625

##### --- sent line: 2.9130859375

##### received line: 8.46484375

##### --- received ok: 0.671142578125

##### --- sent line: 2.94116210938

##### received line: 8.65234375

##### --- received ok: 0.661865234375

##### --- sent line: 2.62573242188

##### received line: 7.20288085938

##### --- received ok: 0.73779296875

##### --- sent line: 2.78784179688

##### received line: 7.10693359375

##### --- received ok: 0.656982421875

##### --- sent line: 2.72998046875

##### received line: 7.19799804688

##### --- received ok: 0.682861328125

##### --- sent line: 2.66381835938

##### received line: 7.1181640625

##### --- received ok: 0.663818359375

##### --- sent line: 2.92797851562

##### received line: 8.32983398438

##### --- received ok: 0.755126953125

##### --- sent line: 2.68701171875

##### received line: 10.2429199219

##### --- received ok: 0.72998046875

##### --- sent line: 3.42407226562

Note the huge delay between sent and received.

And the same with flush:

##### received line: 10.0180664062

##### --- received ok: 0.7109375

##### --- sent line: 9.798828125

##### received line: 10.0437011719

##### --- received ok: 0.667236328125

##### --- sent line: 9.62622070312

##### received line: 9.99829101562

##### --- received ok: 0.552734375

##### --- sent line: 9.90283203125

##### received line: 10.0048828125

##### --- received ok: 0.81201171875

##### --- sent line: 9.494140625

##### received line: 9.85009765625

##### --- received ok: 0.517822265625

##### --- sent line: 9.65283203125

##### received line: 10.0209960938

##### --- received ok: 0.5390625

##### --- sent line: 9.59887695312

##### received line: 10.3669433594

##### --- received ok: 0.39697265625

##### --- sent line: 9.38793945312

##### received line: 9.75903320312

##### --- received ok: 0.531005859375

##### --- sent line: 9.66015625

##### received line: 9.90478515625

##### --- received ok: 0.770263671875

##### --- sent line: 9.59008789062

##### received line: 9.97119140625

##### --- received ok: 0.5087890625

##### --- sent line: 9.6328125

##### received line: 9.9970703125

##### --- received ok: 0.520751953125

##### --- sent line: 9.76977539062

##### received line: 10.0190429688

##### --- received ok: 0.682861328125

##### --- sent line: 9.77294921875

##### received line: 10.1318359375

##### --- received ok: 0.554931640625

##### --- sent line: 9.48803710938

##### received line: 9.84619140625

##### --- received ok: 0.630859375

##### --- sent line: 9.63500976562

##### received line: 10.0026855469

##### --- received ok: 0.524169921875

##### --- sent line: 9.63818359375

##### received line: 9.98828125

##### --- received ok: 0.533935546875

##### --- sent line: 9.806640625

##### received line: 10.0400390625

##### --- received ok: 0.6826171875

##### --- sent line: 9.79760742188

So - there we have the ~10ms. Around 2ms of them are actual "oh, look, new line can be sent, let's send it" OctoPrint code, plus ~0.5ms for "read bytes, encode to ascii". Rest appears to be waiting for pyserial. Or am I misreading things here?

Possibly it is because you give away the CPU during the serial write to

another python thread and don't get it back until it releases the GIL. I.e.

pre-emptive OS multitasking becomes almost cooperative multitasking in

Python due to the GIL. I see there are many Python threads now in

OctoPrint, not just send and receive.

I will try a simple single threaded script that just sends without line

number and checksums, waits for OK and loops. That will show the fastest

achievable with Pyserial.

On 8 March 2016 at 11:06, Gina Häußge [email protected] wrote:

I'm starting to think it is pyserial.

I just added a serial.flush() after my writes, which as far as I can see

in the code should basically make it wait until the data has actually been

sent over the serial line, and that made the line sending take 8-9ms

instead of 2:received line: 0.450927734375

received ok: 0.64111328125

sent line: 9.0400390625

received line: 0.406982421875

received ok: 0.61376953125

sent line: 8.83911132812

received line: 0.537109375

received ok: 0.61279296875

sent line: 8.96606445312

received line: 0.25927734375

received ok: 0.626953125

sent line: 9.01293945312

received line: 0.375

received ok: 0.756103515625

sent line: 8.85888671875

For comparison, this is without flush:

received line: 4.25512695312

received ok: 0.849853515625

sent line: 2.0

received line: 4.15087890625

received ok: 0.826171875

sent line: 1.87084960938

received line: 4.28100585938

received ok: 0.900146484375

sent line: 1.97802734375

received line: 6.89086914062

received ok: 0.925048828125

sent line: 1.88598632812

received line: 4.173828125

received ok: 0.8203125

sent line: 1.85083007812

received line: 4.34106445312

received ok: 0.885009765625

sent line: 2.22998046875

received line: 5.28002929688

received ok: 0.886962890625

sent line: 1.91796875

received line: 4.17993164062

received ok: 0.8671875

sent line: 2.06909179688

So then I dug deeper again and have now arrived at this (values not

comparable to the above ones, more output means slower performance overall):received line: 8.52099609375

--- received ok: 0.39697265625

--- sending from file: 0.7060546875

--- fetched line from file: 1.17114257812

--- enqueued new line: 1.4951171875

--- sent from file: 1.71313476562

--- new line to send: 1.96313476562

--- increment line number: 2.3310546875

--- calculating checksum: 2.50805664062

--- sending line: 2.91015625

--- sent line: 3.29321289062

received line: 8.63012695312

--- received ok: 0.400146484375

--- sending from file: 0.701904296875

--- fetched line from file: 1.06689453125

--- enqueued new line: 1.3720703125

--- new line to send: 1.55590820312

--- sent from file: 1.73193359375

--- increment line number: 1.94995117188

--- calculating checksum: 2.22119140625

--- sending line: 2.63500976562

--- sent line: 3.0439453125

received line: 8.48291015625

--- received ok: 0.39404296875

--- sending from file: 0.718994140625

--- fetched line from file: 1.21997070312

--- enqueued new line: 1.5390625

--- new line to send: 1.71508789062

--- sent from file: 1.88305664062

--- increment line number: 2.123046875

--- calculating checksum: 2.40625

--- sending line: 2.82495117188

--- sent line: 3.22705078125

received line: 8.62817382812

--- received ok: 0.466796875

--- sending from file: 0.7919921875

--- fetched line from file: 1.13891601562

--- enqueued new line: 1.44604492188

--- new line to send: 1.63793945312

--- increment line number: 1.91772460938

--- sent from file: 2.06079101562

--- calculating checksum: 2.19995117188

--- sending line: 2.7138671875

--- sent line: 3.10888671875

received line: 8.52294921875

--- received ok: 0.402099609375

--- sending from file: 0.7041015625

--- fetched line from file: 1.02099609375

--- enqueued new line: 1.3359375

--- new line to send: 1.52001953125

--- sent from file: 1.74194335938

--- increment line number: 1.98486328125

--- calculating checksum: 2.27685546875

--- sending line: 2.70703125

--- sent line: 3.10083007812

First of all a short explanation what the measure points are:

- read thread

- received line: Right after fetching a new line from the serial

port, without any processing yet

- received ok: Line logged (+ sanitized to ASCII, which is the

biggest chunk of time here) and parsed as ok, right after this

clear_to_send.set() gets called

- sending from file: We are currently printing, so we now try to

send the next line from the current file - the 0.3ms spent here are due to

also detecting that we are in fact streaming, so the regular command queue

doesn't apply and shouldn't be processed.

- fetched line from file: next line to send fetched from file,

comments are stripped already

- enqueued new line: next line has entered send queue

- sent from file: that's when we "surface" after entering the sending

from file process

- send thread

- new line to send: send queue has detected that a new line to process

is available (different thread from the prior steps, so we see a bit of

order switchups here)

- increment line number: capture lock, increase line number, add

line to past lines to be able to resend

- calculating checksum: now going to calculate the line's checksum

(prefix with N, build checksum, postfix * )

- sending line: just before serial.write

- sent line: after returning from sending the line via serial

So what we are seeing here is the following "slow runners":

- reading a line from the file (sending from file to fetched line from

file): this is because I must strip comments from the lines I read

(since I must not send comments to the firmware), and since that might have

the line end up completely empty, I potentially have to read more than one

line from the file too (must also not send empty lines to the firmware) -

so here there's nothing to save- line number + checksum: the checksum part cannot be further

optimized as far as I can see - I need to follow the algorithm here, and

that means iterating over every char on the line and XORing it with the

running result, I don't see how to do that differently than iterating over

every char and... well... XORing. The line number delay appears to be

caused by the locking I have to do here in order to prevent a potential

resend response being read at the same time from jumbling up things- processing of send queue (enqueued new line to new line to send):

this is the get method of a Python Queue at work. Apparently there are

some performance issues here. Thing is, I can't use a simple dequeue

here since I don't want a busy wait in the send loop (that would bring the

CPU to its knees), so I need a lock here, and a Queue is a dequeue +

lock, so I don't really see how to optimize that part- The elephant in the room, the sent-read delay (sent line to next received

line): I'm seeing a lot of time spent here. After my flush experiment

it appears to in fact be that the printer DOES send a new line quite fast,

but after if fire a line to the serial.write it takes ages to actually

get sent out. As long as this is the case, basically all optimization I

might be able to do in code under my control have no chance to really

influence things. I'm going to give the new pyserial version a spin, maybe

that improved things, but I also did a quick google search for slow writes

with pyserial and the results were a bit disheartening.Finally, how things look right now if I limit the output to received line,

received ok and sent line and have received ok and sent line report

relative to received line:received line: 7.1240234375

--- received ok: 0.6630859375

--- sent line: 2.93310546875

received line: 8.42797851562

--- received ok: 0.680908203125

--- sent line: 2.61010742188

received line: 7.25903320312

--- received ok: 0.684814453125

--- sent line: 2.71411132812

received line: 7.19213867188

--- received ok: 0.67578125

--- sent line: 2.81079101562

received line: 7.1298828125

--- received ok: 0.6630859375

--- sent line: 2.68090820312

received line: 7.1259765625

--- received ok: 0.722900390625

--- sent line: 2.9130859375

received line: 8.46484375

--- received ok: 0.671142578125

--- sent line: 2.94116210938

received line: 8.65234375

--- received ok: 0.661865234375

--- sent line: 2.62573242188

received line: 7.20288085938

--- received ok: 0.73779296875

--- sent line: 2.78784179688

received line: 7.10693359375

--- received ok: 0.656982421875

--- sent line: 2.72998046875

received line: 7.19799804688

--- received ok: 0.682861328125

--- sent line: 2.66381835938

received line: 7.1181640625

--- received ok: 0.663818359375

--- sent line: 2.92797851562

received line: 8.32983398438

--- received ok: 0.755126953125

--- sent line: 2.68701171875

received line: 10.2429199219

--- received ok: 0.72998046875

--- sent line: 3.42407226562

Note the huge delay between sent and received.

And the same with flush:

received line: 10.0180664062

--- received ok: 0.7109375

--- sent line: 9.798828125

received line: 10.0437011719

--- received ok: 0.667236328125

--- sent line: 9.62622070312

received line: 9.99829101562

--- received ok: 0.552734375

--- sent line: 9.90283203125

received line: 10.0048828125

--- received ok: 0.81201171875

--- sent line: 9.494140625

received line: 9.85009765625

--- received ok: 0.517822265625

--- sent line: 9.65283203125

received line: 10.0209960938

--- received ok: 0.5390625

--- sent line: 9.59887695312

received line: 10.3669433594

--- received ok: 0.39697265625

--- sent line: 9.38793945312

received line: 9.75903320312

--- received ok: 0.531005859375

--- sent line: 9.66015625

received line: 9.90478515625

--- received ok: 0.770263671875

--- sent line: 9.59008789062

received line: 9.97119140625

--- received ok: 0.5087890625

--- sent line: 9.6328125

received line: 9.9970703125

--- received ok: 0.520751953125

--- sent line: 9.76977539062

received line: 10.0190429688

--- received ok: 0.682861328125

--- sent line: 9.77294921875

received line: 10.1318359375

--- received ok: 0.554931640625

--- sent line: 9.48803710938

received line: 9.84619140625

--- received ok: 0.630859375

--- sent line: 9.63500976562

received line: 10.0026855469

--- received ok: 0.524169921875

--- sent line: 9.63818359375

received line: 9.98828125

--- received ok: 0.533935546875

--- sent line: 9.806640625

received line: 10.0400390625

--- received ok: 0.6826171875

--- sent line: 9.79760742188

So - there we have the ~10ms. Around 2ms of them are actual "oh, look, new

line can be sent, let's send it" OctoPrint code, plus ~0.5ms for "read

bytes, encode to ascii". Rest appears to be waiting for pyserial. Or am I

misreading things here?—

Reply to this email directly or view it on GitHub

https://github.com/foosel/OctoPrint/issues/450#issuecomment-193730781.

I think I was faster than you: upload.py.

Usage: upload.py <port> <baudrate> <path> <targetname> [ flush ]

If flush is given, serial.flush will be called after each write.

Comm log will be written to stdout, timings to stderr.

Timings without flush:

##### line received: 4.37521934509

##### ---ok received: 0.103950500488

##### ---line sent: 0.389814376831

##### line received: 4.24885749817

##### ---ok received: 0.103950500488

##### ---line sent: 0.353097915649

##### line received: 4.2519569397

##### ---ok received: 0.105142593384

##### ---line sent: 0.355005264282

##### line received: 4.37617301941

##### ---ok received: 0.113964080811

##### ---line sent: 0.361919403076

##### line received: 4.24885749817

##### ---ok received: 0.1060962677

##### ---line sent: 0.353097915649

##### line received: 4.25410270691

##### ---ok received: 0.113964080811

##### ---line sent: 0.362873077393

##### line received: 4.2519569397

##### ---ok received: 0.104904174805

##### ---line sent: 0.351905822754

##### line received: 4.2450428009

##### ---ok received: 0.103950500488

##### ---line sent: 0.351905822754

##### line received: 4.12893295288

##### ---ok received: 0.104904174805

##### ---line sent: 0.356912612915

##### line received: 4.24790382385

##### ---ok received: 0.104188919067

##### ---line sent: 0.354051589966

##### line received: 8.37898254395

##### ---ok received: 0.11420249939

##### ---line sent: 0.365018844604

##### line received: 4.24909591675

##### ---ok received: 0.114917755127

##### ---line sent: 0.363111495972

##### line received: 4.1229724884

##### ---ok received: 0.103950500488

##### ---line sent: 0.354051589966

##### line received: 4.24814224243

##### ---ok received: 0.104904174805

##### ---line sent: 0.35285949707

and with flush:

##### line received: 10.0440979004

##### ---ok received: 0.108003616333

##### ---line sent: 9.4039440155

##### line received: 9.97090339661

##### ---ok received: 0.107049942017

##### ---line sent: 1.0621547699

##### line received: 2.91514396667

##### ---ok received: 0.105857849121

##### ---line sent: 6.51693344116

##### line received: 7.10582733154

##### ---ok received: 0.105142593384

##### ---line sent: 9.41300392151

##### line received: 9.97710227966

##### ---ok received: 0.1060962677

##### ---line sent: 9.44089889526

##### line received: 10.0128650665

##### ---ok received: 0.1060962677

##### ---line sent: 9.42611694336

##### line received: 9.99116897583

##### ---ok received: 0.105857849121

##### ---line sent: 9.43684577942

##### line received: 10.0319385529

##### ---ok received: 0.1060962677

##### ---line sent: 9.4039440155

##### line received: 9.95898246765

##### ---ok received: 0.1060962677

##### ---line sent: 9.44495201111

##### line received: 9.99999046326

##### ---ok received: 0.103950500488

##### ---line sent: 9.44709777832

##### line received: 9.99999046326

##### ---ok received: 0.105142593384

##### ---line sent: 9.4530582428

##### line received: 10.195016861

##### ---ok received: 0.113010406494

##### ---line sent: 9.2670917511

Yes much faster than me and more thorough.

Without flush it was the same as OctoPrint, around 10ms per cycle with about 6ms latency.

With flush it is three times slower, no idea why.

Then I removed the print statements and the latency dropped to about 1.2ms without flush.

With flush it is still much slower.

According to this: http://pyserial.readthedocs.org/en/latest/pyserial_api.html write is blocking when no timeout is specified, so I would have expected a flush after a write to come back immediately. I can't think why it can wait 25ms.

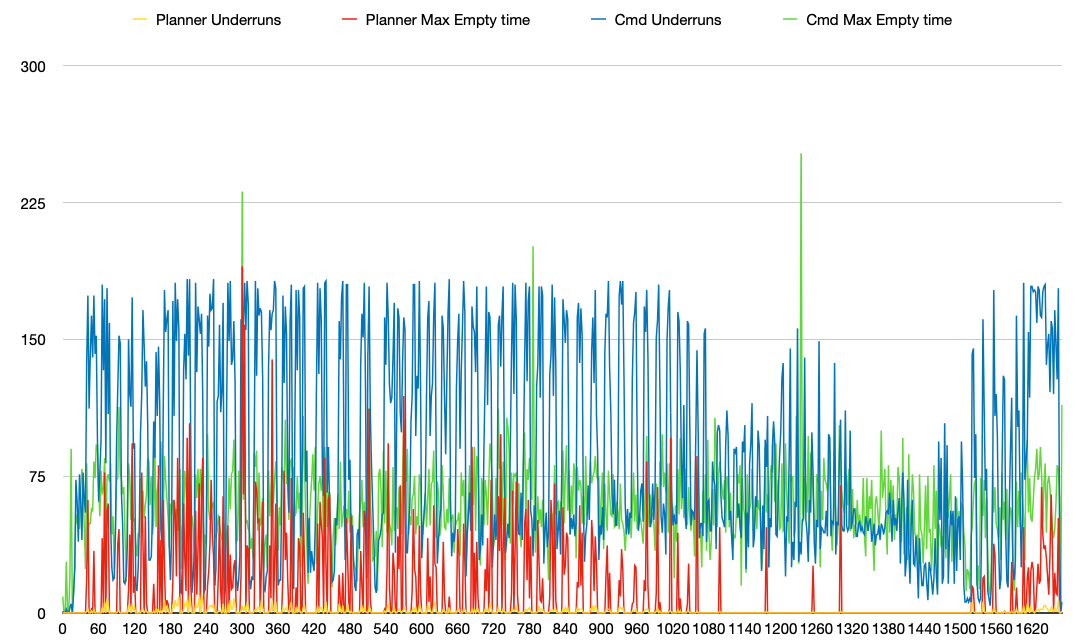

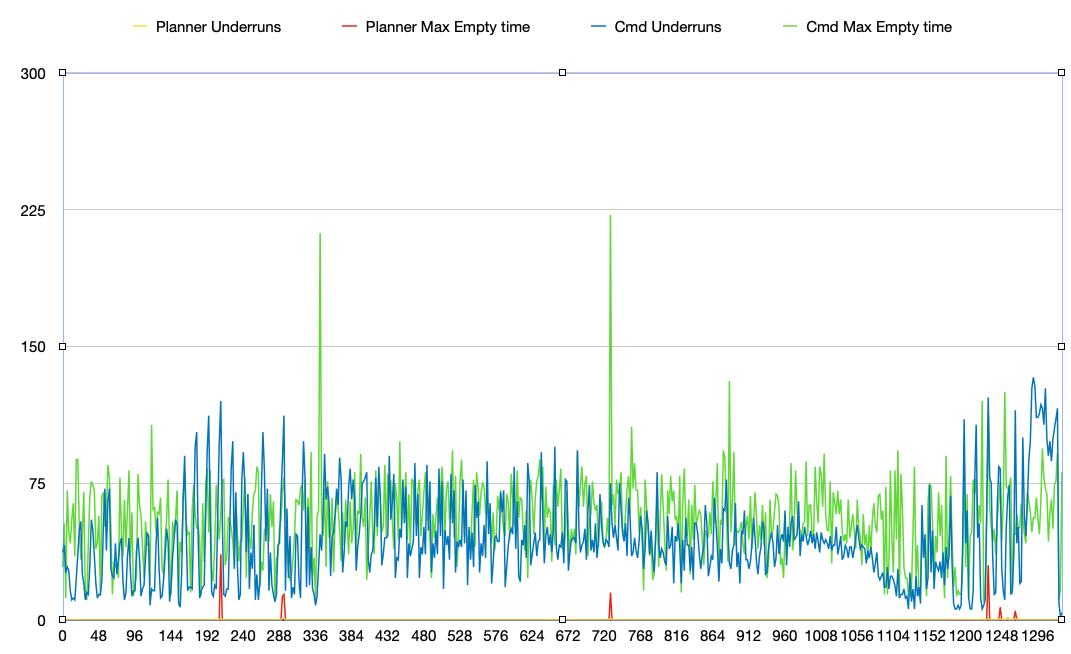

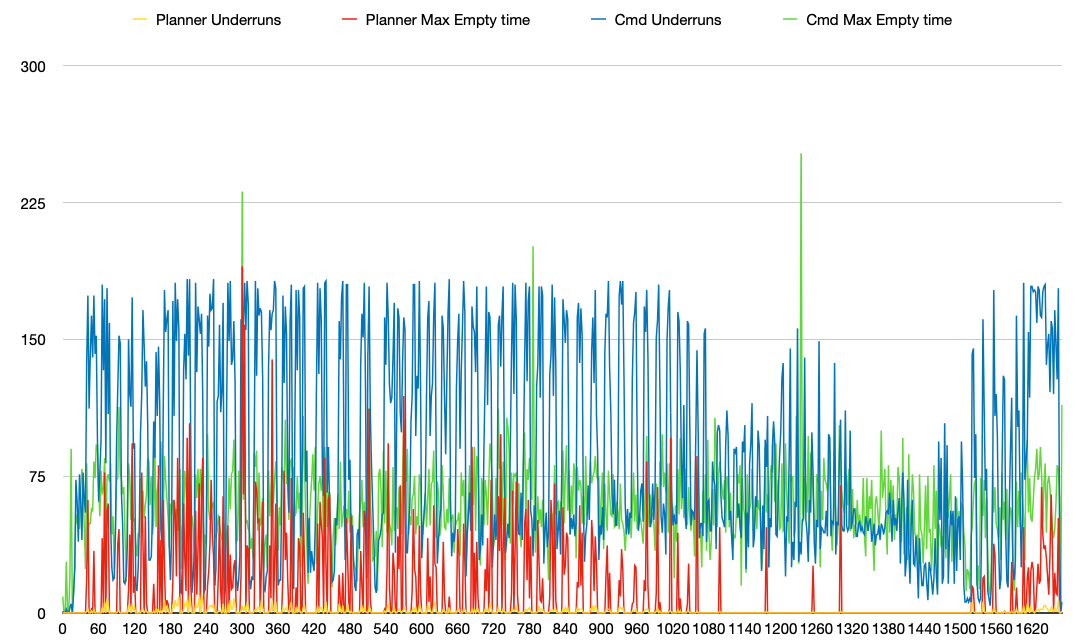

I plotted the data I got from three separate runs and calculated min, max and average, one with upload.py, one with OctoPrint with the CountedEvent used for ok tracking, one with a regular threading.Event (I was wondering if there was a difference - there isn't).

So on average, upload.py is 3ms faster for each round trip. flush always has everything run against the magical 10ms boundary for the received line round trips, regardless of whether I use OctoPrint, upload.py or even Printrun (I modified printcore to also output the same kind of timings).

Just for the record, do you also see similar roundtrip values in the log output on stderr as you see on the actual line?

It's also very weird that while from Python it looks like it's the write that is slow and the read that is fast, on the line it looks just the other way around. Makes me wonder if the readline method might be the real culprit (I so far assumed write).

Ah, just saw your reference to the API docs.

From what I see in the actual code of PySerial, write with no timeout appears to be writing it to the output buffer and flush waits until that is actually processed and hence really went over the line. But I agree that that shouldn't take _that_ long...

Also, forget what I said about read vs write being slow, the timing diagram can be interpreted just as easily the other way around.

So, at this point I'm a bit stumped. I have absolutely no idea what's happening with flush there and where the read delays are coming from that I'm saying. And the only explanation that I have for the avg. 3ms between OctoPrint and upload.py I'm seeing in my current tests is the GIL. But that is just a hunch, and I'm a bit wary of sinking the amount of time I'd need into the current comm layer in order to make it multiprocessing capable to test that theory (from my understanding of multiprocessing, it should get rid of the GIL since it would indeed use separate processes instead of threads in the same process then, but there is a bit of an overhead involved there, and especially on single core CPUs (Pi1) it seems to not be that good of an idea depending on who you ask). So I guess I need to think about this some more, read up on multiprocessing a bit more and figure out if it's viable at all (especially considering the gcode hook situation).

I hacked your upload to accumulate stats in a dictionary instead of printing them. This is what I get:

Uploading file /mnt/reprap/tmp/Dice.gco as dice.gco to printer on port /dev/ttyAMA0, baudrate 115200. Serial flush is disabled

Connected.

line received count 13352 ave 5.7 min 0.8 max 59.5

ok received count 13349 ave 0.1 min 0.1 max 6.4

line sent count 13350 ave 0.5 min 0.4 max 10.8

Done.

So on the face of it looks like receive is slow but send is faster than the line so actually it looks like write is non-blocking and read has to wait for the write to happen before the OK comes back. This is running at almost the full line half duplex bandwidth. The Python is only wasting about 1ms.

With flush both the read and the write get much longer:

Uploading file /mnt/reprap/tmp/Dice.gco as dice.gco to printer on port /dev/ttyAMA0, baudrate 115200. Serial flush is enabled

Connected.

line received count 13352 ave 20.0 min 0.8 max 58.4

ok received count 13349 ave 0.1 min 0.1 max 0.6

line sent count 13350 ave 19.1 min 0.8 max 29.5

Done.

What I would expect is for sent to be around 5ms longer and received to be 5ms shorter.

In conclusion I think pyserial flush must be broken. Raw Python is about twice as fast as OctoPrint. I don't think it is necessary or wise to have send and receive in separate threads for a half duplex protocol. I have always done it with one but then I only allow my slaves to reply to the master, they never speak out of turn and they must reply within a specified timeout. Again I think RepRap is horribly and irrecoverably broken and will go back to my own simple system design, blow the cobwebs of HydraRaptor and bring it up to modern standards with a 32 bit controller and an RPi 2 host or perhaps 3 if I can get the serial port to work.

I don't think multiprocessing will work to separate the reader from the writer on Windows because only one process at a time can have the device open.