MMDetection: why do my losses keep getting bigger during training?

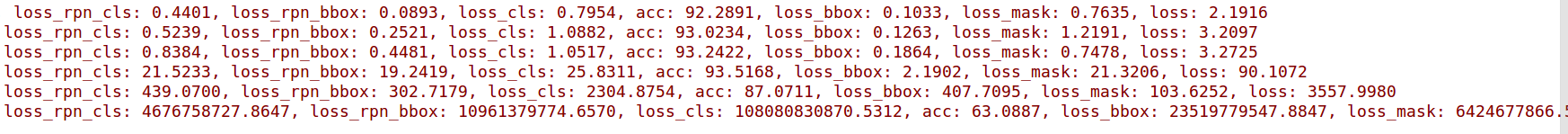

I have not changed the code, but my losses get bigger and bigger when I train mask_rcnn_r101_fpn_1x. Can anyone tell me why, please?

All 7 comments

I had the same problem. Reducing lr in the config file helped me.

Important: The default learning rate in config files is for 8 GPUs and 2 img/gpu (batch size = 8*2 = 16). According to the Linear Scaling Rule, you need to set the learning rate proportional to the batch size if you use different GPUs or images per GPU, e.g., lr=0.01 for 4 GPUs * 2 img/gpu and lr=0.08 for 16 GPUs * 4 img/gpu.

just follow https://github.com/open-mmlab/mmdetection/blob/master/docs/GETTING_STARTED.md
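For anyone who wants the arithmetic spelled out, here is a minimal sketch of the Linear Scaling Rule quoted above. `scaled_lr` is a hypothetical helper, not an MMDetection function. The reference point (lr=0.02 at 8 GPUs * 2 img/gpu) follows from the examples in the note, and the `optimizer` dict with its `momentum` and `weight_decay` values is an assumption about what a typical SGD entry in the config looks like, so check it against your own config file.

```python
def scaled_lr(num_gpus, imgs_per_gpu, base_lr=0.02, base_batch_size=16):
    """Scale the learning rate linearly with the total batch size.

    base_lr / base_batch_size describe the reference setup
    (8 GPUs * 2 img/gpu = 16 images per iteration).
    """
    batch_size = num_gpus * imgs_per_gpu
    return base_lr * batch_size / base_batch_size


print(scaled_lr(4, 2))    # 4 GPUs * 2 img/gpu   -> 0.01
print(scaled_lr(16, 4))   # 16 GPUs * 4 img/gpu  -> 0.08
print(scaled_lr(1, 2))    # single GPU, 2 img/gpu -> 0.0025

# Assumed shape of the optimizer entry in the config; only `lr` is the point here.
optimizer = dict(type='SGD', lr=scaled_lr(1, 2), momentum=0.9, weight_decay=0.0001)
```

If you train on a single GPU with the default lr, you are effectively using a rate 8x larger than the rule suggests, which is a common reason for a growing loss.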

My problem is quite similar to yours. My loss stays unchanged after the first 50 iters.

lr = 0.01 with 4 GPUs and 2 img/gpu.

Has anyone run into this problem?

Looking forward to your advice.

2019-10-09 13:05:46,773 - INFO - workflow: [('train', 1)], max: 80 epochs

2019-10-09 13:06:34,358 - INFO - Epoch [1][50/93] lr: 0.00399, eta: 1:57:10, time: 0.951, data_time: 0.014, memory: 9053, focal_loss: 685.2113, iou_loss: 13.7145, loss: 698.9258

2019-10-09 13:08:11,904 - INFO - Epoch [2][50/93] lr: 0.00523, eta: 1:26:43, time: 1.088, data_time: 0.014, memory: 9053, focal_loss: 18.4207, iou_loss: 13.8155, loss: 32.2362

2019-10-09 13:10:00,893 - INFO - Epoch [3][50/93] lr: 0.00647, eta: 1:21:53, time: 1.180, data_time: 0.013, memory: 9053, focal_loss: 18.4207, iou_loss: 13.8155, loss: 32.2362

2019-10-09 13:11:54,490 - INFO - Epoch [4][50/93] lr: 0.00771, eta: 1:19:45, time: 1.209, data_time: 0.013, memory: 9053, focal_loss: 18.4207, iou_loss: 13.8155, loss: 32.2362

2019-10-09 13:13:47,784 - INFO - Epoch [5][50/93] lr: 0.00895, eta: 1:18:16, time: 1.220, data_time: 0.015, memory: 9053, focal_loss: 18.4207, iou_loss: 13.8155, loss: 32.2362

2019-10-09 13:15:40,929 - INFO - Epoch [6][50/93] lr: 0.01000, eta: 1:16:54, time: 1.215, data_time: 0.015, memory: 9053, focal_loss: 18.4207, iou_loss: 13.8155, loss: 32.2362
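One thing worth noting in this log: the lr column climbs from 0.00399 to 0.01000, which looks like a linear warmup. The sketch below is an assumption, not something stated in the thread: `warmup_iters=500` and `warmup_ratio=1/3` are guessed values, and `warmup_lr` is a hypothetical helper rather than MMDetection's own code. With those values and 93 iterations per epoch it reproduces the logged learning rates, which suggests the loss was already stuck at 32.2362 while the lr was still about half of its target.

```python
def warmup_lr(cur_iter, base_lr=0.01, warmup_iters=500, warmup_ratio=1.0 / 3):
    """Linear warmup: ramp from base_lr * warmup_ratio up to base_lr."""
    if cur_iter >= warmup_iters:
        return base_lr
    k = (1 - cur_iter / warmup_iters) * (1 - warmup_ratio)
    return base_lr * (1 - k)


ITERS_PER_EPOCH = 93  # from the "[50/93]" field in the log


def lr_at(epoch, it):
    """Learning rate at a 1-based epoch and iteration, as printed in the log."""
    cur_iter = (epoch - 1) * ITERS_PER_EPOCH + (it - 1)  # 0-based global step
    return warmup_lr(cur_iter)


for epoch in range(1, 7):
    print(f"Epoch [{epoch}][50/93] lr: {lr_at(epoch, 50):.5f}")
```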

I had the same problem. Reducing lr in the config file helped me.

Thank you very much! It really helped.

I changed lr from 0.02 to 0.0001, but the problem still happens...

Did you change your code? The loss in epoch 1 is very large, which suggests there is an error somewhere in your code.
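A possible clue, offered as a guess since the custom `focal_loss` and `iou_loss` code is not shown in the thread: the stuck values 18.4207 and 13.8155 in the log are exactly -ln(1e-8) and -ln(1e-6). That pattern would be consistent with a log term being clamped at a small epsilon, i.e. the predictions having saturated, rather than with a learning rate that is merely too high. The check is plain arithmetic:

```python
import math

# Negative log of two common epsilon values used to keep log() finite.
neg_log_1e8 = -math.log(1e-8)
neg_log_1e6 = -math.log(1e-6)

print(f"{neg_log_1e8:.4f}")                # 18.4207, the logged focal_loss
print(f"{neg_log_1e6:.4f}")                # 13.8155, the logged iou_loss
print(f"{neg_log_1e8 + neg_log_1e6:.4f}")  # 32.2362, the logged total loss
```

If that is what is happening, it would be worth checking the loss code and the labels for NaNs, empty targets, or out-of-range class indices before tuning the lr any further.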

Thank you for your advice!
