ML-Agents: Asymmetric self-play agents with two different behavior names perform the same action

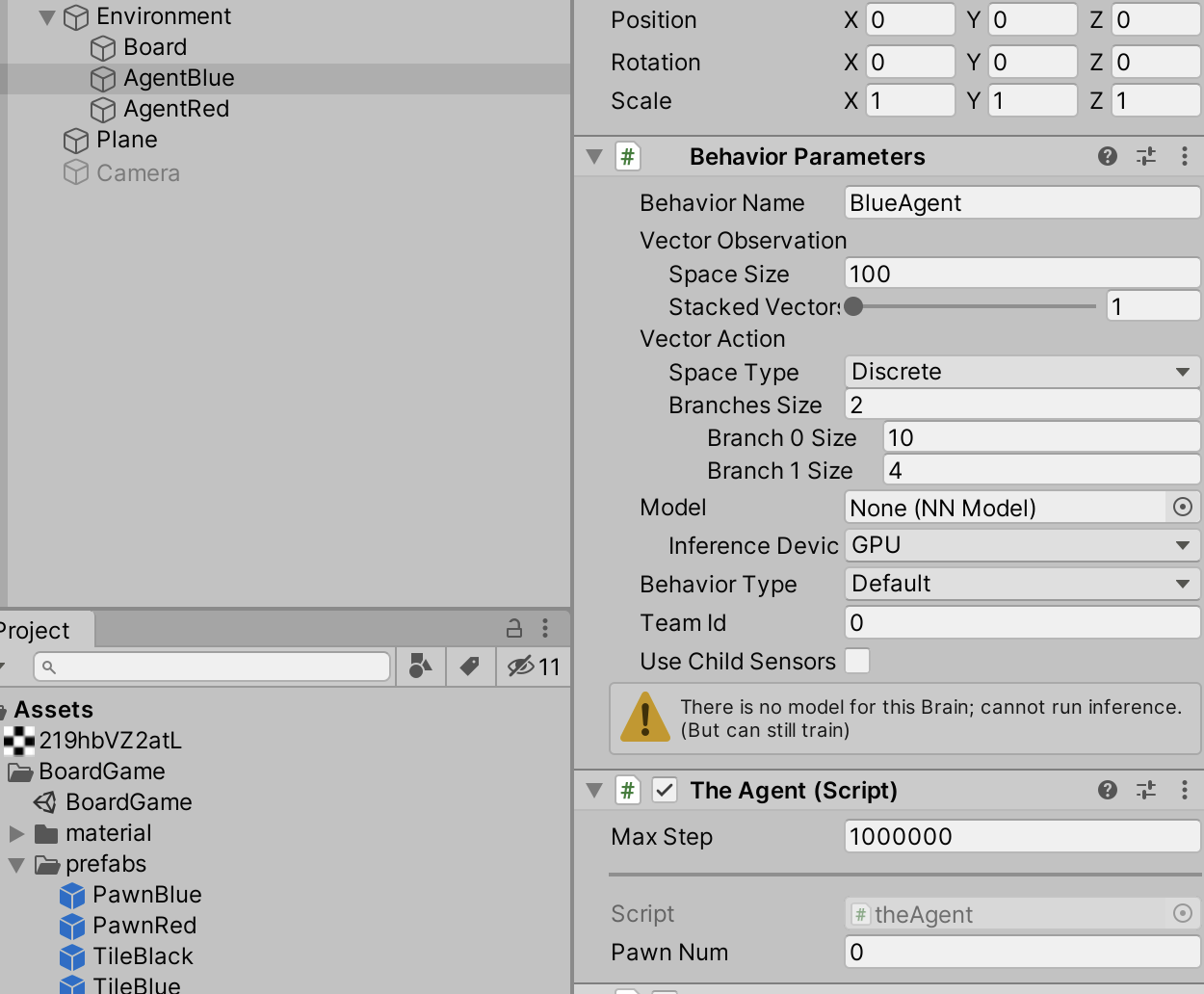

I am setting up a competitive multi-agent snake scene that is asymmetric. The two agents have different behavior names and configs (SnakeA, SnakeB), yet during training they both perform exactly the same action at each step.

This doesn't seem correct. Can you please confirm?

Vector inputs: 1600 (each square of the game board, with its type one-hot encoded) [VectorSensor]
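To make the observation layout concrete, here is a minimal sketch of that kind of one-hot encoding. The board size (20 x 20) and the four cell types are assumptions chosen only so the total comes to 1600; the post does not state the actual grid dimensions or type list, and `encode_grid` is a hypothetical helper, not part of ml-agents.

```python
from typing import List

# Hypothetical cell types; the original post does not list them.
CELL_TYPES = ["empty", "food", "snake_a", "snake_b"]


def encode_grid(grid: List[List[str]]) -> List[float]:
    """Flatten a grid into one one-hot group per cell, row by row."""
    observation: List[float] = []
    for row in grid:
        for cell in row:
            one_hot = [0.0] * len(CELL_TYPES)
            one_hot[CELL_TYPES.index(cell)] = 1.0
            observation.extend(one_hot)
    return observation


# Assumed 20 x 20 board with 4 cell types: 20 * 20 * 4 = 1600 values.
grid = [["empty"] * 20 for _ in range(20)]
grid[0][0] = "snake_a"
grid[19][19] = "snake_b"
grid[10][10] = "food"

obs = encode_grid(grid)
print(len(obs))
```

Each cell contributes exactly one `1.0`, so the vector length is the number of cells times the number of types.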

▄▄▄▓▓▓▓

╓▓▓▓▓▓▓█▓▓▓▓▓

,▄▄▄m▀▀▀' ,▓▓▓▀▓▓▄ ▓▓▓ ▓▓▌

▄▓▓▓▀' ▄▓▓▀ ▓▓▓ ▄▄ ▄▄ ,▄▄ ▄▄▄▄ ,▄▄ ▄▓▓▌▄ ▄▄▄ ,▄▄

▄▓▓▓▀ ▄▓▓▀ ▐▓▓▌ ▓▓▌ ▐▓▓ ▐▓▓▓▀▀▀▓▓▌ ▓▓▓ ▀▓▓▌▀ ^▓▓▌ ╒▓▓▌

▄▓▓▓▓▓▄▄▄▄▄▄▄▄▓▓▓ ▓▀ ▓▓▌ ▐▓▓ ▐▓▓ ▓▓▓ ▓▓▓ ▓▓▌ ▐▓▓▄ ▓▓▌

▀▓▓▓▓▀▀▀▀▀▀▀▀▀▀▓▓▄ ▓▓ ▓▓▌ ▐▓▓ ▐▓▓ ▓▓▓ ▓▓▓ ▓▓▌ ▐▓▓▐▓▓

^█▓▓▓ ▀▓▓▄ ▐▓▓▌ ▓▓▓▓▄▓▓▓▓ ▐▓▓ ▓▓▓ ▓▓▓ ▓▓▓▄ ▓▓▓▓`

'▀▓▓▓▄ ^▓▓▓ ▓▓▓ └▀▀▀▀ ▀▀ ^▀▀ `▀▀ `▀▀ '▀▀ ▐▓▓▌

▀▀▀▀▓▄▄▄ ▓▓▓▓▓▓, ▓▓▓▓▀

`▀█▓▓▓▓▓▓▓▓▓▌

¬`▀▀▀█▓

Version information:

ml-agents: 0.15.0,

ml-agents-envs: 0.15.0,

Communicator API: 0.15.0,

TensorFlow: 2.0.1

WARNING:tensorflow:From c:\users\gigaf\source\repos\ml-agents\ml-agents-venv\lib\site-packages\tensorflow_core\python\compat\v2_compat.py:65: disable_resource_variables (from tensorflow.python.ops.variable_scope) is deprecated and will be removed in a future version.

Instructions for updating:

non-resource variables are not supported in the long term

2020-04-08 15:34:53 INFO [trainer_controller.py:167] Hyperparameters for the GhostTrainer of brain SnakeA:

trainer: ppo

batch_size: 1024

beta: 0.005

buffer_size: 10240

epsilon: 0.2

hidden_units: 256

lambd: 0.95

learning_rate: 0.0003

learning_rate_schedule: constant

max_steps: 5.0e7

memory_size: 128

normalize: True

num_epoch: 3

num_layers: 2

time_horizon: 1000

sequence_length: 64

summary_freq: 10000

use_recurrent: False

vis_encode_type: simple

reward_signals:

extrinsic:

strength: 1.0

gamma: 0.99

summary_path: snake-1_SnakeA

model_path: ./models/snake-1/SnakeA

keep_checkpoints: 5

self_play:

window: 10

play_against_latest_model_ratio: 0.5

save_steps: 50000

swap_steps: 50000

team_change: 100000

2020-04-08 15:34:53.403082: I tensorflow/core/platform/cpu_feature_guard.cc:142] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX2

2020-04-08 15:34:54 INFO [trainer_controller.py:167] Hyperparameters for the GhostTrainer of brain SnakeB:

trainer: ppo

batch_size: 1024

beta: 0.005

buffer_size: 10240

epsilon: 0.2

hidden_units: 256

lambd: 0.95

learning_rate: 0.0003

learning_rate_schedule: constant

max_steps: 5.0e7

memory_size: 128

normalize: True

num_epoch: 3

num_layers: 2

time_horizon: 1000

sequence_length: 64

summary_freq: 10000

use_recurrent: False

vis_encode_type: simple

reward_signals:

extrinsic:

strength: 1.0

gamma: 0.99

summary_path: snake-1_SnakeB

model_path: ./models/snake-1/SnakeB

keep_checkpoints: 5

self_play:

window: 10

play_against_latest_model_ratio: 0.5

save_steps: 50000

swap_steps: 50000

team_change: 100000

2020-04-08 15:36:37 INFO [trainer.py:213] snake-1: SnakeA: Step: 10000. Time Elapsed: 104.249 s Mean Reward: 0.800. Std of Reward: 0.400. Not Training.

2020-04-08 15:36:37 INFO [trainer.py:102] Learning brain SnakeA?team=0 ELO: 1199.001

Mean Opponent ELO: 1200.091 Std Opponent ELO: 0.287

2020-04-08 15:36:37 INFO [trainer.py:213] snake-1: SnakeB: Step: 10000. Time Elapsed: 102.920 s Mean Reward: 1.000. Std of Reward: 0.000. Not Training.

2020-04-08 15:36:37 INFO [trainer.py:102] Learning brain SnakeB?team=1 ELO: 1198.513

Mean Opponent ELO: 1200.135 Std Opponent ELO: 0.427

2020-04-08 15:38:15 INFO [trainer.py:213] snake-1: SnakeA: Step: 20000. Time Elapsed: 202.252 s Mean Reward: 0.667. Std of Reward: 0.471. Not Training.

2020-04-08 15:38:15 INFO [trainer.py:102] Learning brain SnakeA?team=0 ELO: 1199.010

Mean Opponent ELO: 1200.090 Std Opponent ELO: 0.285

2020-04-08 15:38:15 INFO [trainer.py:213] snake-1: SnakeB: Step: 20000. Time Elapsed: 200.922 s Mean Reward: 1.000. Std of Reward: 0.000. Not Training.

2020-04-08 15:38:15 INFO [trainer.py:102] Learning brain SnakeB?team=1 ELO: 1197.030

Mean Opponent ELO: 1200.270 Std Opponent ELO: 0.854

2020-04-08 15:39:48 INFO [trainer.py:213] snake-1: SnakeA: Step: 30000. Time Elapsed: 295.421 s Mean Reward: 1.000. Std of Reward: 0.000. Not Training.

2020-04-08 15:39:48 INFO [trainer.py:102] Learning brain SnakeA?team=0 ELO: 1198.017

Mean Opponent ELO: 1200.180 Std Opponent ELO: 0.570

SnakeA:
    normalize: true
    max_steps: 5.0e7
    learning_rate_schedule: constant
    batch_size: 1024
    buffer_size: 10240
    hidden_units: 256
    time_horizon: 1000
    self_play:
        window: 10
        play_against_latest_model_ratio: 0.5
        save_steps: 50000
        swap_steps: 50000
        team_change: 100000

SnakeB:
    normalize: true
    max_steps: 5.0e7
    learning_rate_schedule: constant
    batch_size: 1024
    buffer_size: 10240
    hidden_units: 256
    time_horizon: 1000
    self_play:
        window: 10
        play_against_latest_model_ratio: 0.5
        save_steps: 50000
        swap_steps: 50000
        team_change: 100000

All 19 comments

Hi @ElliotWood

Have you set different team_ids for both agents?

Also, the asymmetric self-play feature is currently on the master branch and is not yet part of an official release. It seems you are using the latest release 0.15 which only supports symmetric games. Are you also looking at the documentation on the master branch?

If you are comfortable doing so, I encourage you to try this out on master and let me know how it goes.

OK, I pulled master (.dev4) before testing, but will double-check now.

Yes, I'm using different teams as well.
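One way to sanity-check the team assignment is to look at the policy names in the trainer log, which appear earlier in this thread as `SnakeA?team=0` and `SnakeB?team=1`. The sketch below splits such a string into its behavior name and team id. `split_behavior_id` is a hypothetical helper written for illustration, not an ml-agents API, and the default of team 0 when no `team` parameter is present is an assumption.

```python
from typing import Tuple
from urllib.parse import parse_qs


def split_behavior_id(behavior_id: str) -> Tuple[str, int]:
    """Split 'Name?team=N' into (name, team id).

    Assumption: a missing team parameter is treated as team 0.
    """
    name, _, query = behavior_id.partition("?")
    params = parse_qs(query)
    team = int(params.get("team", ["0"])[0])
    return name, team


for behavior_id in ["SnakeA?team=0", "SnakeB?team=1"]:
    print(split_behavior_id(behavior_id))
```

If both snakes reported the same behavior name or the same team id here, they would not be set up as two opposing sides.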

OK, I upgraded to the latest master and the agents still appear to be mirroring each other's actions. I'd expect them to take different actions; is that assumption correct?

(mlagents) (base) \repos\ml-agents>mlagents-learn config/trainer_config.yaml --run-id=snake-02

WARNING:tensorflow:From \repos\ml-agents\python-envs\mlagents\lib\site-packages\tensorflow_core\python\compat\v2_compat.py:65: disable_resource_variables (from tensorflow.python.ops.variable_scope) is deprecated and will be removed in a future version.

Instructions for updating:

non-resource variables are not supported in the long term

Version information:

ml-agents: 0.16.0.dev0,

ml-agents-envs: 0.16.0.dev0,

Communicator API: 0.16.0,

TensorFlow: 2.0.1

WARNING:tensorflow:From \repos\ml-agents\python-envs\mlagents\lib\site-packages\tensorflow_core\python\compat\v2_compat.py:65: disable_resource_variables (from tensorflow.python.ops.variable_scope) is deprecated and will be removed in a future version.

Instructions for updating:

non-resource variables are not supported in the long term

2020-04-09 12:17:42.015424: I tensorflow/core/platform/cpu_feature_guard.cc:142] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX2

2020-04-09 12:17:42 INFO [stats.py:130] Hyperparameters for behavior name snake-02_SnakeB:

trainer: ppo

batch_size: 1024

beta: 0.005

buffer_size: 10240

epsilon: 0.2

hidden_units: 256

lambd: 0.95

learning_rate: 0.0003

learning_rate_schedule: constant

max_steps: 5.0e7

memory_size: 128

normalize: True

num_epoch: 3

num_layers: 2

time_horizon: 1000

sequence_length: 64

summary_freq: 10000

use_recurrent: False

vis_encode_type: simple

reward_signals:

extrinsic:

strength: 1.0

gamma: 0.99

summary_path: snake-02_SnakeB

model_path: ./models/snake-02/SnakeB

keep_checkpoints: 5

self_play:

window: 10

play_against_latest_model_ratio: 0.5

save_steps: 50000

swap_steps: 50000

team_change: 100000

2020-04-09 12:17:43 INFO [stats.py:130] Hyperparameters for behavior name snake-02_SnakeA:

trainer: ppo

batch_size: 1024

beta: 0.005

buffer_size: 10240

epsilon: 0.2

hidden_units: 256

lambd: 0.95

learning_rate: 0.0003

learning_rate_schedule: constant

max_steps: 5.0e7

memory_size: 128

normalize: True

num_epoch: 3

num_layers: 2

time_horizon: 1000

sequence_length: 64

summary_freq: 10000

use_recurrent: False

vis_encode_type: simple

reward_signals:

extrinsic:

strength: 1.0

gamma: 0.99

summary_path: snake-02_SnakeA

model_path: ./models/snake-02/SnakeA

keep_checkpoints: 5

self_play:

window: 10

play_against_latest_model_ratio: 0.5

save_steps: 50000

swap_steps: 50000

team_change: 100000

2020-04-09 12:20:09 INFO [stats.py:111] snake-02_SnakeB: Step: 10000. Time Elapsed: 151.135 s Mean Reward: 0.667. Std of Reward: 0.471. Training.

2020-04-09 12:20:09 INFO [stats.py:116] snake-02_SnakeB ELO: 1199.500.

2020-04-09 12:22:19 INFO [stats.py:111] snake-02_SnakeB: Step: 20000. Time Elapsed: 280.779 s Mean Reward: 1.000. Std of Reward: 0.000. Training.

2020-04-09 12:22:19 INFO [stats.py:116] snake-02_SnakeB ELO: 1198.176.

SnakeA:
    normalize: true
    max_steps: 5.0e7
    learning_rate_schedule: constant
    batch_size: 1024
    buffer_size: 10240
    hidden_units: 256
    time_horizon: 1000
    self_play:
        window: 10
        play_against_latest_model_ratio: 0.5
        save_steps: 50000
        swap_steps: 50000
        team_change: 100000

SnakeB:
    normalize: true
    max_steps: 5.0e7
    learning_rate_schedule: constant
    batch_size: 1024
    buffer_size: 10240
    hidden_units: 256
    time_horizon: 1000
    self_play:
        window: 10
        play_against_latest_model_ratio: 0.5
        save_steps: 50000
        swap_steps: 50000
        team_change: 100000

[Update] After 5000 steps they now perform different actions ^_^ Can't wait to see them train!

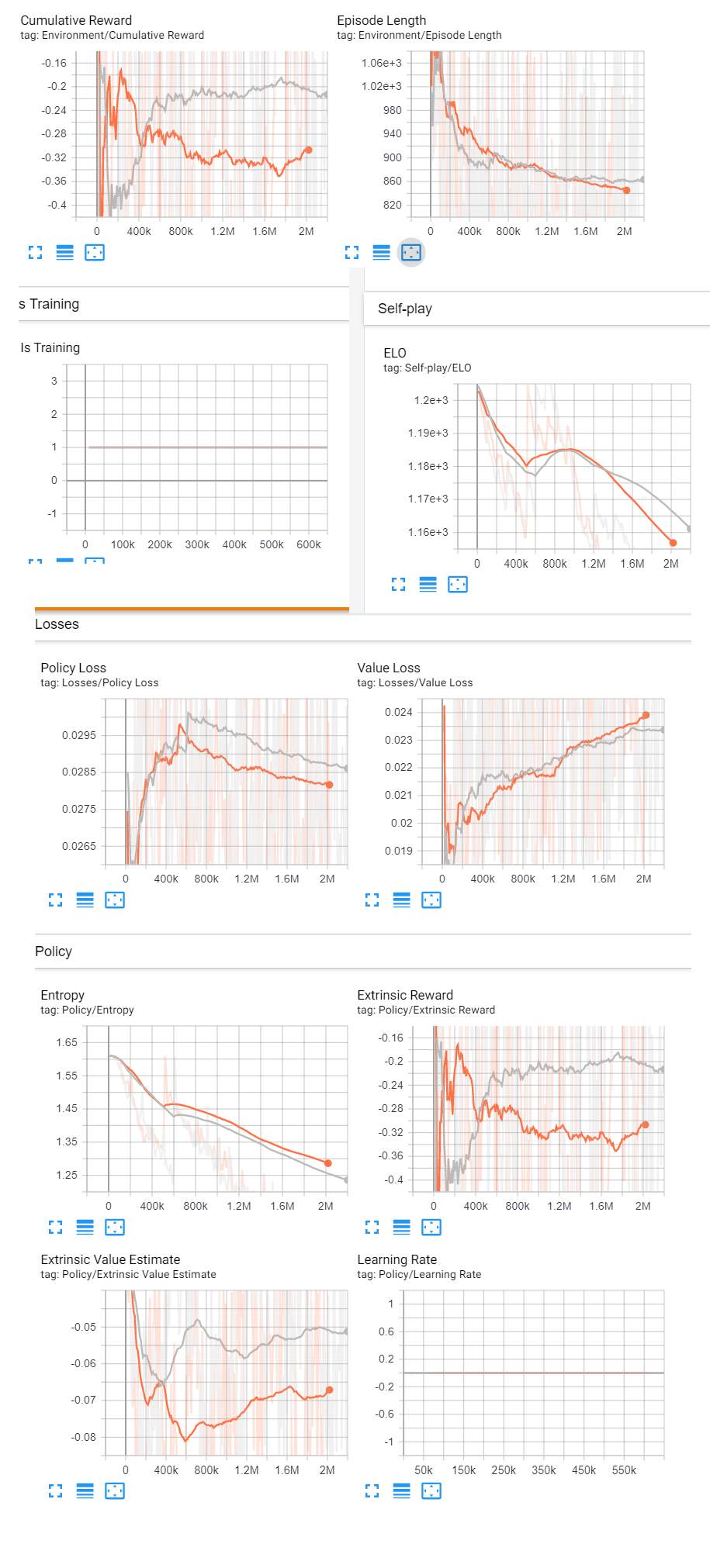

[Update] After 2,000,000 steps, the ELO of the agents steadily decreased. I believe this was because the reward wasn't zero-sum: +1 point for food (without the other snakes losing a point).

Retraining new brains with new rules:

-1 point for hitting snake, other snakes get +1. End of episode.

+1 point for hitting food, other snakes get -1. Continue episode.

Hopefully this will create an environment where the ELO of the agents steadily increases.
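The two new rules can be written down as a small sketch to check that the rewards really cancel out. The function names are hypothetical, and this is plain Python for illustration rather than the actual agent code in the Unity scene.

```python
from typing import Dict, List


def collision_rewards(agents: List[str], crashed: str) -> Dict[str, float]:
    """-1 for the snake that crashed, +1 for every other snake."""
    return {a: (-1.0 if a == crashed else 1.0) for a in agents}


def food_rewards(agents: List[str], eater: str) -> Dict[str, float]:
    """+1 for the snake that ate the food, -1 for every other snake."""
    return {a: (1.0 if a == eater else -1.0) for a in agents}


agents = ["SnakeA", "SnakeB"]
crash = collision_rewards(agents, crashed="SnakeA")
food = food_rewards(agents, eater="SnakeB")

# With exactly two snakes, each event's rewards sum to zero.
print(crash, sum(crash.values()))
print(food, sum(food.values()))
```

Note that these rules are zero-sum only for two snakes. With three or more, "every other snake gets +1" adds more reward than the single -1 removes.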

[Update] Similar results, the ELO of the agents steadily decreases.

Is this a bug, or is there something I've not configured correctly? I'm open to suggestions.
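For reference when reading the ELO lines in the logs: in the textbook Elo formula, a rating falls only when results are worse than the expected score, and whatever one side loses the other side gains. The sketch below is that standard formula, not necessarily the exact calculation ml-agents performs. The K-factor of 16 and the function names are assumptions.

```python
from typing import Tuple


def expected_score(rating: float, opponent: float) -> float:
    """Expected result (between 0 and 1) for `rating` against `opponent`."""
    return 1.0 / (1.0 + 10.0 ** ((opponent - rating) / 400.0))


def elo_update(
    rating: float, opponent: float, score: float, k: float = 16.0
) -> Tuple[float, float]:
    """Return (new_rating, new_opponent_rating).

    score is 1.0 for a win, 0.5 for a draw, 0.0 for a loss.
    k is an assumed K-factor.
    """
    change = k * (score - expected_score(rating, opponent))
    return rating + change, opponent - change


# Two equally rated players: the winner gains what the loser gives up.
winner, loser = elo_update(1200.0, 1200.0, score=1.0)
print(winner, loser)
```

Under this formula a rating that keeps drifting down means the rated player keeps scoring below its expected result against the opponents it is matched with.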

Videos of the process are on my Twitch channel, and the live training is also being streamed.

Version information:

ml-agents: 0.16.0.dev0,

ml-agents-envs: 0.16.0.dev0,

Communicator API: 0.16.0,

TensorFlow: 2.0.1

WARNING:tensorflow:From c:\users\gigaf\source\repos\ml-agents\python-envs\mlagents\lib\site-packages\tensorflow_core\python\compat\v2_compat.py:65: disable_resource_variables (from tensorflow.python.ops.variable_scope) is deprecated and will be removed in a future version.

Instructions for updating:

non-resource variables are not supported in the long term

2020-04-11 17:27:35.337986: I tensorflow/core/platform/cpu_feature_guard.cc:142] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX2

2020-04-11 17:27:35 INFO [stats.py:130] Hyperparameters for behavior name snake-04_SnakeA:

trainer: ppo

batch_size: 1024

beta: 0.005

buffer_size: 10240

epsilon: 0.2

hidden_units: 256

lambd: 0.95

learning_rate: 0.0003

learning_rate_schedule: constant

max_steps: 5.0e7

memory_size: 128

normalize: True

num_epoch: 3

num_layers: 2

time_horizon: 1000

sequence_length: 64

summary_freq: 10000

use_recurrent: False

vis_encode_type: simple

reward_signals:

extrinsic:

strength: 1.0

gamma: 0.99

summary_path: snake-04_SnakeA

model_path: ./models/snake-04/SnakeA

keep_checkpoints: 5

self_play:

window: 10

play_against_latest_model_ratio: 0.5

save_steps: 50000

swap_steps: 50000

team_change: 100000

2020-04-11 17:27:36 INFO [tf_policy.py:118] Loading model for brain SnakeA?team=0 from ./models/snake-04/SnakeA.

2020-04-11 17:27:36 INFO [tf_policy.py:147] Resuming training from step 602741.

2020-04-11 17:27:36 INFO [stats.py:130] Hyperparameters for behavior name snake-04_SnakeB:

trainer: ppo

batch_size: 1024

beta: 0.005

buffer_size: 10240

epsilon: 0.2

hidden_units: 256

lambd: 0.95

learning_rate: 0.0003

learning_rate_schedule: constant

max_steps: 5.0e7

memory_size: 128

normalize: True

num_epoch: 3

num_layers: 2

time_horizon: 1000

sequence_length: 64

summary_freq: 10000

use_recurrent: False

vis_encode_type: simple

reward_signals:

extrinsic:

strength: 1.0

gamma: 0.99

summary_path: snake-04_SnakeB

model_path: ./models/snake-04/SnakeB

keep_checkpoints: 5

self_play:

window: 10

play_against_latest_model_ratio: 0.5

save_steps: 50000

swap_steps: 50000

team_change: 100000

2020-04-11 17:27:37 INFO [tf_policy.py:118] Loading model for brain SnakeB?team=1 from ./models/snake-04/SnakeB.

2020-04-11 17:27:37 INFO [tf_policy.py:147] Resuming training from step 516693.

2020-04-11 17:29:54 INFO [stats.py:111] snake-04_SnakeA: Step: 610000. Time Elapsed: 143.277 s Mean Reward: -0.500. Std of Reward: 1.118. Training.

2020-04-11 17:29:54 INFO [stats.py:116] snake-04_SnakeA ELO: 1199.501.

2020-04-11 17:32:38 INFO [stats.py:111] snake-04_SnakeA: Step: 620000. Time Elapsed: 307.693 s Mean Reward: -0.125. Std of Reward: 1.452. Training.

2020-04-11 17:32:38 INFO [stats.py:116] snake-04_SnakeA ELO: 1198.466.

2020-04-11 17:35:24 INFO [stats.py:111] snake-04_SnakeA: Step: 630000. Time Elapsed: 473.317 s Mean Reward: -0.357. Std of Reward: 1.288. Training.

2020-04-11 17:35:24 INFO [stats.py:116] snake-04_SnakeA ELO: 1197.231.

2020-04-11 17:38:14 INFO [stats.py:111] snake-04_SnakeA: Step: 640000. Time Elapsed: 643.950 s Mean Reward: -0.357. Std of Reward: 1.445. Training.

2020-04-11 17:38:14 INFO [stats.py:116] snake-04_SnakeA ELO: 1196.027.

2020-04-11 17:41:02 INFO [stats.py:111] snake-04_SnakeA: Step: 650000. Time Elapsed: 811.586 s Mean Reward: -0.500. Std of Reward: 1.258. Training.

2020-04-11 17:41:02 INFO [stats.py:116] snake-04_SnakeA ELO: 1194.594.

2020-04-11 17:41:48 INFO [trainer_controller.py:100] Saved Model

2020-04-11 17:44:02 INFO [stats.py:111] snake-04_SnakeA: Step: 660000. Time Elapsed: 991.859 s Mean Reward: 0.375. Std of Reward: 1.728. Training.

2020-04-11 17:44:02 INFO [stats.py:116] snake-04_SnakeA ELO: 1194.168.

2020-04-11 17:46:49 INFO [stats.py:111] snake-04_SnakeA: Step: 670000. Time Elapsed: 1158.775 s Mean Reward: 0.636. Std of Reward: 1.367. Training.

2020-04-11 17:46:49 INFO [stats.py:116] snake-04_SnakeA ELO: 1194.561.

2020-04-11 17:49:41 INFO [stats.py:111] snake-04_SnakeA: Step: 680000. Time Elapsed: 1330.236 s Mean Reward: 0.091. Std of Reward: 1.621. Training.

2020-04-11 17:49:41 INFO [stats.py:116] snake-04_SnakeA ELO: 1195.269.

2020-04-11 17:52:45 INFO [stats.py:111] snake-04_SnakeA: Step: 690000. Time Elapsed: 1514.293 s Mean Reward: -0.333. Std of Reward: 1.491. Training.

2020-04-11 17:52:45 INFO [stats.py:116] snake-04_SnakeA ELO: 1194.551.

2020-04-11 17:55:41 INFO [stats.py:111] snake-04_SnakeA: Step: 700000. Time Elapsed: 1690.137 s Mean Reward: -0.357. Std of Reward: 1.674. Training.

2020-04-11 17:55:41 INFO [stats.py:116] snake-04_SnakeA ELO: 1192.097.

2020-04-11 17:56:13 INFO [trainer_controller.py:100] Saved Model

2020-04-11 17:57:21 INFO [stats.py:111] snake-04_SnakeB: Step: 520000. Time Elapsed: 1790.171 s Mean Reward: -1.000. Std of Reward: 0.816. Training.

2020-04-11 17:57:21 INFO [stats.py:116] snake-04_SnakeB ELO: 1199.810.

2020-04-11 18:00:17 INFO [stats.py:111] snake-04_SnakeB: Step: 530000. Time Elapsed: 1966.579 s Mean Reward: -0.750. Std of Reward: 1.250. Training.

2020-04-11 18:00:17 INFO [stats.py:116] snake-04_SnakeB ELO: 1197.653.

2020-04-11 18:03:18 INFO [stats.py:111] snake-04_SnakeB: Step: 540000. Time Elapsed: 2147.085 s Mean Reward: 0.444. Std of Reward: 1.165. Training.

2020-04-11 18:03:18 INFO [stats.py:116] snake-04_SnakeB ELO: 1195.218.

2020-04-11 18:06:07 INFO [stats.py:111] snake-04_SnakeB: Step: 550000. Time Elapsed: 2316.644 s Mean Reward: -0.286. Std of Reward: 1.436. Training.

2020-04-11 18:06:07 INFO [stats.py:116] snake-04_SnakeB ELO: 1194.191.

2020-04-11 18:08:50 INFO [stats.py:111] snake-04_SnakeB: Step: 560000. Time Elapsed: 2479.585 s Mean Reward: -0.091. Std of Reward: 1.621. Training.

2020-04-11 18:08:50 INFO [stats.py:116] snake-04_SnakeB ELO: 1192.709.

2020-04-11 18:10:36 INFO [trainer_controller.py:100] Saved Model

2020-04-11 18:11:52 INFO [stats.py:111] snake-04_SnakeB: Step: 570000. Time Elapsed: 2661.293 s Mean Reward: -0.231. Std of Reward: 1.527. Training.

2020-04-11 18:11:52 INFO [stats.py:116] snake-04_SnakeB ELO: 1189.171.

2020-04-11 18:14:32 INFO [stats.py:111] snake-04_SnakeB: Step: 580000. Time Elapsed: 2821.262 s Mean Reward: -0.143. Std of Reward: 1.245. Training.

2020-04-11 18:14:32 INFO [stats.py:116] snake-04_SnakeB ELO: 1186.538.

2020-04-11 18:17:25 INFO [stats.py:111] snake-04_SnakeB: Step: 590000. Time Elapsed: 2994.530 s Mean Reward: -0.900. Std of Reward: 1.300. Training.

2020-04-11 18:17:25 INFO [stats.py:116] snake-04_SnakeB ELO: 1185.070.

2020-04-11 18:20:24 INFO [stats.py:111] snake-04_SnakeB: Step: 600000. Time Elapsed: 3173.927 s Mean Reward: 0.400. Std of Reward: 1.665. Training.

2020-04-11 18:20:24 INFO [stats.py:116] snake-04_SnakeB ELO: 1183.891.

2020-04-11 18:23:13 INFO [stats.py:111] snake-04_SnakeB: Step: 610000. Time Elapsed: 3342.301 s Mean Reward: -0.375. Std of Reward: 1.218. Training.

2020-04-11 18:23:13 INFO [stats.py:116] snake-04_SnakeB ELO: 1182.343.

2020-04-11 18:25:02 INFO [trainer_controller.py:100] Saved Model

2020-04-11 18:27:37 INFO [stats.py:111] snake-04_SnakeA: Step: 710000. Time Elapsed: 3606.930 s Mean Reward: -0.333. Std of Reward: 1.535. Training.

2020-04-11 18:27:37 INFO [stats.py:116] snake-04_SnakeA ELO: 1207.336.

2020-04-11 18:30:22 INFO [stats.py:111] snake-04_SnakeA: Step: 720000. Time Elapsed: 3771.369 s Mean Reward: -0.571. Std of Reward: 1.498. Training.

2020-04-11 18:30:22 INFO [stats.py:116] snake-04_SnakeA ELO: 1210.250.

2020-04-11 18:33:10 INFO [stats.py:111] snake-04_SnakeA: Step: 730000. Time Elapsed: 3939.134 s Mean Reward: -0.231. Std of Reward: 1.804. Training.

2020-04-11 18:33:10 INFO [stats.py:116] snake-04_SnakeA ELO: 1206.974.

2020-04-11 18:36:03 INFO [stats.py:111] snake-04_SnakeA: Step: 740000. Time Elapsed: 4112.371 s Mean Reward: -0.125. Std of Reward: 1.364. Training.

2020-04-11 18:36:03 INFO [stats.py:116] snake-04_SnakeA ELO: 1204.815.

2020-04-11 18:38:51 INFO [stats.py:111] snake-04_SnakeA: Step: 750000. Time Elapsed: 4280.429 s Mean Reward: 0.500. Std of Reward: 1.190. Training.

2020-04-11 18:38:51 INFO [stats.py:116] snake-04_SnakeA ELO: 1202.430.

2020-04-11 18:39:22 INFO [trainer_controller.py:100] Saved Model

2020-04-11 18:41:45 INFO [stats.py:111] snake-04_SnakeA: Step: 760000. Time Elapsed: 4454.462 s Mean Reward: -0.357. Std of Reward: 1.540. Training.

2020-04-11 18:41:45 INFO [stats.py:116] snake-04_SnakeA ELO: 1199.935.

2020-04-11 18:44:49 INFO [stats.py:111] snake-04_SnakeA: Step: 770000. Time Elapsed: 4638.846 s Mean Reward: -0.562. Std of Reward: 1.767. Training.

2020-04-11 18:44:49 INFO [stats.py:116] snake-04_SnakeA ELO: 1197.384.

2020-04-11 18:47:31 INFO [stats.py:111] snake-04_SnakeA: Step: 780000. Time Elapsed: 4800.156 s Mean Reward: 0.000. Std of Reward: 1.414. Training.

2020-04-11 18:47:31 INFO [stats.py:116] snake-04_SnakeA ELO: 1195.512.

2020-04-11 18:50:31 INFO [stats.py:111] snake-04_SnakeA: Step: 790000. Time Elapsed: 4980.861 s Mean Reward: -0.067. Std of Reward: 1.806. Training.

2020-04-11 18:50:31 INFO [stats.py:116] snake-04_SnakeA ELO: 1193.248.

2020-04-11 18:53:23 INFO [stats.py:111] snake-04_SnakeA: Step: 800000. Time Elapsed: 5152.071 s Mean Reward: -0.400. Std of Reward: 1.497. Training.

2020-04-11 18:53:23 INFO [stats.py:116] snake-04_SnakeA ELO: 1191.557.

2020-04-11 18:53:43 INFO [trainer_controller.py:100] Saved Model

2020-04-11 18:55:02 INFO [stats.py:111] snake-04_SnakeB: Step: 620000. Time Elapsed: 5251.974 s Mean Reward: -1.000. Std of Reward: 1.080. Training.

2020-04-11 18:55:02 INFO [stats.py:116] snake-04_SnakeB ELO: 1184.269.

2020-04-11 18:57:42 INFO [stats.py:111] snake-04_SnakeB: Step: 630000. Time Elapsed: 5411.535 s Mean Reward: -0.091. Std of Reward: 1.881. Training.

2020-04-11 18:57:42 INFO [stats.py:116] snake-04_SnakeB ELO: 1187.915.

2020-04-11 19:00:33 INFO [stats.py:111] snake-04_SnakeB: Step: 640000. Time Elapsed: 5582.488 s Mean Reward: -1.000. Std of Reward: 1.617. Training.

2020-04-11 19:00:33 INFO [stats.py:116] snake-04_SnakeB ELO: 1187.008.

2020-04-11 19:03:29 INFO [stats.py:111] snake-04_SnakeB: Step: 650000. Time Elapsed: 5758.259 s Mean Reward: -0.438. Std of Reward: 1.619. Training.

2020-04-11 19:03:29 INFO [stats.py:116] snake-04_SnakeB ELO: 1185.719.

2020-04-11 19:06:18 INFO [stats.py:111] snake-04_SnakeB: Step: 660000. Time Elapsed: 5927.364 s Mean Reward: 0.778. Std of Reward: 1.618. Training.

2020-04-11 19:06:18 INFO [stats.py:116] snake-04_SnakeB ELO: 1185.457.

2020-04-11 19:08:03 INFO [trainer_controller.py:100] Saved Model

2020-04-11 19:09:17 INFO [stats.py:111] snake-04_SnakeB: Step: 670000. Time Elapsed: 6106.813 s Mean Reward: -0.091. Std of Reward: 1.164. Training.

2020-04-11 19:09:17 INFO [stats.py:116] snake-04_SnakeB ELO: 1183.079.

2020-04-11 19:12:02 INFO [stats.py:111] snake-04_SnakeB: Step: 680000. Time Elapsed: 6271.915 s Mean Reward: -0.615. Std of Reward: 1.496. Training.

2020-04-11 19:12:02 INFO [stats.py:116] snake-04_SnakeB ELO: 1181.817.

2020-04-11 19:15:00 INFO [stats.py:111] snake-04_SnakeB: Step: 690000. Time Elapsed: 6449.091 s Mean Reward: -0.438. Std of Reward: 1.368. Training.

2020-04-11 19:15:00 INFO [stats.py:116] snake-04_SnakeB ELO: 1180.721.

2020-04-11 19:17:52 INFO [stats.py:111] snake-04_SnakeB: Step: 700000. Time Elapsed: 6621.489 s Mean Reward: -0.214. Std of Reward: 1.473. Training.

2020-04-11 19:17:52 INFO [stats.py:116] snake-04_SnakeB ELO: 1181.449.

2020-04-11 19:20:53 INFO [stats.py:111] snake-04_SnakeB: Step: 710000. Time Elapsed: 6802.054 s Mean Reward: 0.077. Std of Reward: 1.141. Training.

2020-04-11 19:20:53 INFO [stats.py:116] snake-04_SnakeB ELO: 1179.990.

2020-04-11 19:22:20 INFO [trainer_controller.py:100] Saved Model

2020-04-11 19:24:58 INFO [stats.py:111] snake-04_SnakeA: Step: 810000. Time Elapsed: 7047.450 s Mean Reward: 0.000. Std of Reward: 1.414. Training.

2020-04-11 19:24:58 INFO [stats.py:116] snake-04_SnakeA ELO: 1194.419.

2020-04-11 19:27:55 INFO [stats.py:111] snake-04_SnakeA: Step: 820000. Time Elapsed: 7224.915 s Mean Reward: -0.667. Std of Reward: 1.546. Training.

2020-04-11 19:27:55 INFO [stats.py:116] snake-04_SnakeA ELO: 1193.342.

2020-04-11 19:30:51 INFO [stats.py:111] snake-04_SnakeA: Step: 830000. Time Elapsed: 7400.112 s Mean Reward: -1.000. Std of Reward: 1.414. Training.

2020-04-11 19:30:51 INFO [stats.py:116] snake-04_SnakeA ELO: 1191.796.

2020-04-11 19:33:41 INFO [stats.py:111] snake-04_SnakeA: Step: 840000. Time Elapsed: 7570.229 s Mean Reward: 0.067. Std of Reward: 1.482. Training.

2020-04-11 19:33:41 INFO [stats.py:116] snake-04_SnakeA ELO: 1187.466.

2020-04-11 19:36:26 INFO [stats.py:111] snake-04_SnakeA: Step: 850000. Time Elapsed: 7735.707 s Mean Reward: -0.538. Std of Reward: 1.500. Training.

2020-04-11 19:36:26 INFO [stats.py:116] snake-04_SnakeA ELO: 1185.915.

2020-04-11 19:36:46 INFO [trainer_controller.py:100] Saved Model

2020-04-11 19:39:16 INFO [stats.py:111] snake-04_SnakeA: Step: 860000. Time Elapsed: 7905.441 s Mean Reward: 0.182. Std of Reward: 1.402. Training.

2020-04-11 19:39:16 INFO [stats.py:116] snake-04_SnakeA ELO: 1183.606.

2020-04-11 19:42:21 INFO [stats.py:111] snake-04_SnakeA: Step: 870000. Time Elapsed: 8090.905 s Mean Reward: 0.000. Std of Reward: 1.749. Training.

2020-04-11 19:42:21 INFO [stats.py:116] snake-04_SnakeA ELO: 1184.406.

2020-04-11 19:45:11 INFO [stats.py:111] snake-04_SnakeA: Step: 880000. Time Elapsed: 8260.113 s Mean Reward: -0.077. Std of Reward: 1.639. Training.

2020-04-11 19:45:11 INFO [stats.py:116] snake-04_SnakeA ELO: 1183.248.

2020-04-11 19:47:53 INFO [stats.py:111] snake-04_SnakeA: Step: 890000. Time Elapsed: 8422.684 s Mean Reward: -0.375. Std of Reward: 1.495. Training.

2020-04-11 19:47:53 INFO [stats.py:116] snake-04_SnakeA ELO: 1181.091.

2020-04-11 19:50:46 INFO [stats.py:111] snake-04_SnakeA: Step: 900000. Time Elapsed: 8595.280 s Mean Reward: 0.250. Std of Reward: 1.714. Training.

2020-04-11 19:50:46 INFO [stats.py:116] snake-04_SnakeA ELO: 1180.205.

2020-04-11 19:51:03 INFO [trainer_controller.py:100] Saved Model

2020-04-11 19:52:25 INFO [stats.py:111] snake-04_SnakeB: Step: 720000. Time Elapsed: 8694.513 s Mean Reward: -0.333. Std of Reward: 1.491. Training.

2020-04-11 19:52:25 INFO [stats.py:116] snake-04_SnakeB ELO: 1184.269.

2020-04-11 19:55:23 INFO [stats.py:111] snake-04_SnakeB: Step: 730000. Time Elapsed: 8872.134 s Mean Reward: 0.182. Std of Reward: 2.167. Training.

2020-04-11 19:55:23 INFO [stats.py:116] snake-04_SnakeB ELO: 1189.932.

2020-04-11 19:58:13 INFO [stats.py:111] snake-04_SnakeB: Step: 740000. Time Elapsed: 9042.987 s Mean Reward: -1.000. Std of Reward: 1.354. Training.

2020-04-11 19:58:13 INFO [stats.py:116] snake-04_SnakeB ELO: 1189.568.

2020-04-11 20:00:57 INFO [stats.py:111] snake-04_SnakeB: Step: 750000. Time Elapsed: 9206.850 s Mean Reward: 0.000. Std of Reward: 1.569. Training.

2020-04-11 20:00:57 INFO [stats.py:116] snake-04_SnakeB ELO: 1187.115.

2020-04-11 20:03:54 INFO [stats.py:111] snake-04_SnakeB: Step: 760000. Time Elapsed: 9383.820 s Mean Reward: -0.462. Std of Reward: 1.393. Training.

2020-04-11 20:03:54 INFO [stats.py:116] snake-04_SnakeB ELO: 1187.250.

2020-04-11 20:05:18 INFO [trainer_controller.py:100] Saved Model

2020-04-11 20:06:52 INFO [stats.py:111] snake-04_SnakeB: Step: 770000. Time Elapsed: 9561.236 s Mean Reward: -0.357. Std of Reward: 1.540. Training.

2020-04-11 20:06:52 INFO [stats.py:116] snake-04_SnakeB ELO: 1186.516.

2020-04-11 20:09:43 INFO [stats.py:111] snake-04_SnakeB: Step: 780000. Time Elapsed: 9732.210 s Mean Reward: -0.750. Std of Reward: 1.199. Training.

2020-04-11 20:09:43 INFO [stats.py:116] snake-04_SnakeB ELO: 1186.631.

2020-04-11 20:12:57 INFO [stats.py:111] snake-04_SnakeB: Step: 790000. Time Elapsed: 9926.597 s Mean Reward: -0.231. Std of Reward: 1.423. Training.

2020-04-11 20:12:57 INFO [stats.py:116] snake-04_SnakeB ELO: 1185.840.

2020-04-11 20:16:25 INFO [stats.py:111] snake-04_SnakeB: Step: 800000. Time Elapsed: 10134.928 s Mean Reward: 0.188. Std of Reward: 1.590. Training.

2020-04-11 20:16:25 INFO [stats.py:116] snake-04_SnakeB ELO: 1184.083.

2020-04-11 20:21:11 INFO [stats.py:111] snake-04_SnakeB: Step: 810000. Time Elapsed: 10420.211 s Mean Reward: -1.077. Std of Reward: 1.269. Training.

2020-04-11 20:21:11 INFO [stats.py:116] snake-04_SnakeB ELO: 1181.978.

2020-04-11 20:23:29 INFO [trainer_controller.py:100] Saved Model

2020-04-11 20:28:48 INFO [stats.py:111] snake-04_SnakeA: Step: 910000. Time Elapsed: 10877.291 s Mean Reward: -0.100. Std of Reward: 1.700. Training.

2020-04-11 20:28:48 INFO [stats.py:116] snake-04_SnakeA ELO: 1180.927.

2020-04-11 20:33:42 INFO [stats.py:111] snake-04_SnakeA: Step: 920000. Time Elapsed: 11171.210 s Mean Reward: 0.545. Std of Reward: 1.924. Training.

2020-04-11 20:33:42 INFO [stats.py:116] snake-04_SnakeA ELO: 1182.729.

2020-04-11 20:38:16 INFO [stats.py:111] snake-04_SnakeA: Step: 930000. Time Elapsed: 11445.918 s Mean Reward: -0.273. Std of Reward: 1.656. Training.

2020-04-11 20:38:16 INFO [stats.py:116] snake-04_SnakeA ELO: 1181.630.

2020-04-11 20:43:19 INFO [stats.py:111] snake-04_SnakeA: Step: 940000. Time Elapsed: 11748.095 s Mean Reward: -0.818. Std of Reward: 1.527. Training.

2020-04-11 20:43:19 INFO [stats.py:116] snake-04_SnakeA ELO: 1180.658.

2020-04-11 20:48:07 INFO [stats.py:111] snake-04_SnakeA: Step: 950000. Time Elapsed: 12036.358 s Mean Reward: 0.062. Std of Reward: 1.560. Training.

2020-04-11 20:48:07 INFO [stats.py:116] snake-04_SnakeA ELO: 1179.148.

2020-04-11 20:48:11 INFO [trainer_controller.py:100] Saved Model

2020-04-11 20:53:02 INFO [stats.py:111] snake-04_SnakeA: Step: 960000. Time Elapsed: 12331.939 s Mean Reward: -0.556. Std of Reward: 1.257. Training.

2020-04-11 20:53:02 INFO [stats.py:116] snake-04_SnakeA ELO: 1178.580.

2020-04-11 20:58:08 INFO [stats.py:111] snake-04_SnakeA: Step: 970000. Time Elapsed: 12637.124 s Mean Reward: 0.000. Std of Reward: 1.472. Training.

2020-04-11 20:58:08 INFO [stats.py:116] snake-04_SnakeA ELO: 1175.634.

2020-04-11 21:02:21 INFO [stats.py:111] snake-04_SnakeA: Step: 980000. Time Elapsed: 12890.494 s Mean Reward: 0.000. Std of Reward: 1.477. Training.

2020-04-11 21:02:21 INFO [stats.py:116] snake-04_SnakeA ELO: 1172.973.

2020-04-11 21:05:36 INFO [stats.py:111] snake-04_SnakeA: Step: 990000. Time Elapsed: 13085.959 s Mean Reward: -0.133. Std of Reward: 1.628. Training.

2020-04-11 21:05:36 INFO [stats.py:116] snake-04_SnakeA ELO: 1170.151.

2020-04-11 21:08:50 INFO [trainer_controller.py:100] Saved Model

2020-04-11 21:08:56 INFO [stats.py:111] snake-04_SnakeA: Step: 1000000. Time Elapsed: 13285.374 s Mean Reward: 0.643. Std of Reward: 1.493. Training.

2020-04-11 21:08:56 INFO [stats.py:116] snake-04_SnakeA ELO: 1170.362.

2020-04-11 21:10:32 INFO [stats.py:111] snake-04_SnakeB: Step: 820000. Time Elapsed: 13381.480 s Mean Reward: -0.556. Std of Reward: 1.165. Training.

2020-04-11 21:10:32 INFO [stats.py:116] snake-04_SnakeB ELO: 1184.831.

2020-04-11 21:13:43 INFO [stats.py:111] snake-04_SnakeB: Step: 830000. Time Elapsed: 13572.639 s Mean Reward: -0.500. Std of Reward: 1.500. Training.

2020-04-11 21:13:43 INFO [stats.py:116] snake-04_SnakeB ELO: 1190.957.

2020-04-11 21:17:06 INFO [stats.py:111] snake-04_SnakeB: Step: 840000. Time Elapsed: 13775.078 s Mean Reward: -1.000. Std of Reward: 1.265. Training.

2020-04-11 21:17:06 INFO [stats.py:116] snake-04_SnakeB ELO: 1187.480.

2020-04-11 21:20:18 INFO [stats.py:111] snake-04_SnakeB: Step: 850000. Time Elapsed: 13968.033 s Mean Reward: -1.000. Std of Reward: 1.247. Training.

2020-04-11 21:20:18 INFO [stats.py:116] snake-04_SnakeB ELO: 1185.761.

2020-04-11 21:23:22 INFO [stats.py:111] snake-04_SnakeB: Step: 860000. Time Elapsed: 14152.014 s Mean Reward: -0.615. Std of Reward: 1.496. Training.

2020-04-11 21:23:22 INFO [stats.py:116] snake-04_SnakeB ELO: 1182.368.

2020-04-11 21:24:49 INFO [trainer_controller.py:100] Saved Model

2020-04-11 21:26:37 INFO [stats.py:111] snake-04_SnakeB: Step: 870000. Time Elapsed: 14346.438 s Mean Reward: -0.250. Std of Reward: 1.090. Training.

2020-04-11 21:26:37 INFO [stats.py:116] snake-04_SnakeB ELO: 1183.259.

2020-04-11 21:29:48 INFO [stats.py:111] snake-04_SnakeB: Step: 880000. Time Elapsed: 14537.165 s Mean Reward: -0.214. Std of Reward: 1.655. Training.

2020-04-11 21:29:48 INFO [stats.py:116] snake-04_SnakeB ELO: 1181.874.

2020-04-11 21:32:58 INFO [stats.py:111] snake-04_SnakeB: Step: 890000. Time Elapsed: 14727.921 s Mean Reward: 0.143. Std of Reward: 1.457. Training.

2020-04-11 21:32:58 INFO [stats.py:116] snake-04_SnakeB ELO: 1180.243.

2020-04-11 21:36:21 INFO [stats.py:111] snake-04_SnakeB: Step: 900000. Time Elapsed: 14930.543 s Mean Reward: -1.083. Std of Reward: 1.187. Training.

2020-04-11 21:36:21 INFO [stats.py:116] snake-04_SnakeB ELO: 1178.691.

2020-04-11 21:39:24 INFO [stats.py:111] snake-04_SnakeB: Step: 910000. Time Elapsed: 15113.566 s Mean Reward: -0.333. Std of Reward: 1.333. Training.

2020-04-11 21:39:24 INFO [stats.py:116] snake-04_SnakeB ELO: 1176.351.

2020-04-11 21:40:53 INFO [trainer_controller.py:100] Saved Model

2020-04-11 21:44:06 INFO [stats.py:111] snake-04_SnakeA: Step: 1010000. Time Elapsed: 15395.179 s Mean Reward: -0.500. Std of Reward: 1.190. Training.

2020-04-11 21:44:06 INFO [stats.py:116] snake-04_SnakeA ELO: 1166.808.

2020-04-11 21:47:20 INFO [stats.py:111] snake-04_SnakeA: Step: 1020000. Time Elapsed: 15589.591 s Mean Reward: -0.750. Std of Reward: 1.534. Training.

2020-04-11 21:47:20 INFO [stats.py:116] snake-04_SnakeA ELO: 1164.072.

2020-04-11 21:50:46 INFO [stats.py:111] snake-04_SnakeA: Step: 1030000. Time Elapsed: 15795.345 s Mean Reward: -0.538. Std of Reward: 1.500. Training.

2020-04-11 21:50:46 INFO [stats.py:116] snake-04_SnakeA ELO: 1160.634.

2020-04-11 21:53:39 INFO [stats.py:111] snake-04_SnakeA: Step: 1040000. Time Elapsed: 15969.008 s Mean Reward: -0.333. Std of Reward: 1.633. Training.

2020-04-11 21:53:39 INFO [stats.py:116] snake-04_SnakeA ELO: 1159.198.

2020-04-11 21:56:54 INFO [trainer_controller.py:100] Saved Model

2020-04-11 21:57:07 INFO [stats.py:111] snake-04_SnakeA: Step: 1050000. Time Elapsed: 16176.361 s Mean Reward: -0.231. Std of Reward: 1.576. Training.

2020-04-11 21:57:07 INFO [stats.py:116] snake-04_SnakeA ELO: 1159.886.

2020-04-11 22:00:22 INFO [stats.py:111] snake-04_SnakeA: Step: 1060000. Time Elapsed: 16371.785 s Mean Reward: 0.417. Std of Reward: 1.847. Training.

2020-04-11 22:00:22 INFO [stats.py:116] snake-04_SnakeA ELO: 1158.896.

2020-04-11 22:03:32 INFO [stats.py:111] snake-04_SnakeA: Step: 1070000. Time Elapsed: 16561.604 s Mean Reward: 0.083. Std of Reward: 1.754. Training.

2020-04-11 22:03:32 INFO [stats.py:116] snake-04_SnakeA ELO: 1158.272.

2020-04-11 22:06:32 INFO [stats.py:111] snake-04_SnakeA: Step: 1080000. Time Elapsed: 16741.352 s Mean Reward: -0.647. Std of Reward: 1.747. Training.

2020-04-11 22:06:32 INFO [stats.py:116] snake-04_SnakeA ELO: 1157.351.

2020-04-11 22:10:01 INFO [stats.py:111] snake-04_SnakeA: Step: 1090000. Time Elapsed: 16950.146 s Mean Reward: -0.688. Std of Reward: 1.310. Training.

2020-04-11 22:10:01 INFO [stats.py:116] snake-04_SnakeA ELO: 1155.528.

2020-04-11 22:12:58 INFO [trainer_controller.py:100] Saved Model

2020-04-11 22:13:02 INFO [stats.py:111] snake-04_SnakeA: Step: 1100000. Time Elapsed: 17131.114 s Mean Reward: 0.300. Std of Reward: 1.100. Training.

2020-04-11 22:13:02 INFO [stats.py:116] snake-04_SnakeA ELO: 1154.695.

2020-04-11 22:14:56 INFO [stats.py:111] snake-04_SnakeB: Step: 920000. Time Elapsed: 17246.024 s Mean Reward: -0.333. Std of Reward: 1.563. Training.

2020-04-11 22:14:56 INFO [stats.py:116] snake-04_SnakeB ELO: 1175.569.

2020-04-11 22:18:04 INFO [stats.py:111] snake-04_SnakeB: Step: 930000. Time Elapsed: 17433.267 s Mean Reward: 0.375. Std of Reward: 1.409. Training.

2020-04-11 22:18:04 INFO [stats.py:116] snake-04_SnakeB ELO: 1187.395.

2020-04-11 22:21:11 INFO [stats.py:111] snake-04_SnakeB: Step: 940000. Time Elapsed: 17620.314 s Mean Reward: -0.467. Std of Reward: 1.543. Training.

2020-04-11 22:21:11 INFO [stats.py:116] snake-04_SnakeB ELO: 1186.636.

2020-04-11 22:24:36 INFO [stats.py:111] snake-04_SnakeB: Step: 950000. Time Elapsed: 17825.110 s Mean Reward: 0.077. Std of Reward: 1.859. Training.

2020-04-11 22:24:36 INFO [stats.py:116] snake-04_SnakeB ELO: 1184.472.

2020-04-11 22:27:50 INFO [stats.py:111] snake-04_SnakeB: Step: 960000. Time Elapsed: 18019.956 s Mean Reward: -0.583. Std of Reward: 1.441. Training.

2020-04-11 22:27:50 INFO [stats.py:116] snake-04_SnakeB ELO: 1182.798.

2020-04-11 22:28:58 INFO [trainer_controller.py:100] Saved Model

2020-04-11 22:31:06 INFO [stats.py:111] snake-04_SnakeB: Step: 970000. Time Elapsed: 18215.720 s Mean Reward: -1.000. Std of Reward: 1.713. Training.

2020-04-11 22:31:06 INFO [stats.py:116] snake-04_SnakeB ELO: 1180.118.

2020-04-11 22:34:21 INFO [stats.py:111] snake-04_SnakeB: Step: 980000. Time Elapsed: 18410.055 s Mean Reward: 0.083. Std of Reward: 1.382. Training.

2020-04-11 22:34:21 INFO [stats.py:116] snake-04_SnakeB ELO: 1177.228.

2020-04-11 22:37:27 INFO [stats.py:111] snake-04_SnakeB: Step: 990000. Time Elapsed: 18596.943 s Mean Reward: -0.556. Std of Reward: 1.771. Training.

2020-04-11 22:37:27 INFO [stats.py:116] snake-04_SnakeB ELO: 1176.074.

2020-04-11 22:40:41 INFO [stats.py:111] snake-04_SnakeB: Step: 1000000. Time Elapsed: 18790.494 s Mean Reward: -1.111. Std of Reward: 0.994. Training.

2020-04-11 22:40:41 INFO [stats.py:116] snake-04_SnakeB ELO: 1174.535.

2020-04-11 22:44:00 INFO [stats.py:111] snake-04_SnakeB: Step: 1010000. Time Elapsed: 18989.156 s Mean Reward: 0.077. Std of Reward: 1.439. Training.

2020-04-11 22:44:00 INFO [stats.py:116] snake-04_SnakeB ELO: 1172.697.

2020-04-11 22:45:00 INFO [trainer_controller.py:100] Saved Model

2020-04-11 22:48:43 INFO [stats.py:111] snake-04_SnakeA: Step: 1110000. Time Elapsed: 19272.428 s Mean Reward: -0.429. Std of Reward: 1.450. Training.

2020-04-11 22:48:43 INFO [stats.py:116] snake-04_SnakeA ELO: 1154.498.

2020-04-11 22:52:05 INFO [stats.py:111] snake-04_SnakeA: Step: 1120000. Time Elapsed: 19474.882 s Mean Reward: -0.154. Std of Reward: 1.610. Training.

2020-04-11 22:52:05 INFO [stats.py:116] snake-04_SnakeA ELO: 1154.134.

2020-04-11 22:55:11 INFO [stats.py:111] snake-04_SnakeA: Step: 1130000. Time Elapsed: 19660.831 s Mean Reward: -0.091. Std of Reward: 1.311. Training.

2020-04-11 22:55:11 INFO [stats.py:116] snake-04_SnakeA ELO: 1154.989.

2020-04-11 22:58:17 INFO [stats.py:111] snake-04_SnakeA: Step: 1140000. Time Elapsed: 19846.078 s Mean Reward: -0.667. Std of Reward: 1.054. Training.

2020-04-11 22:58:17 INFO [stats.py:116] snake-04_SnakeA ELO: 1153.679.

2020-04-11 23:01:04 INFO [trainer_controller.py:100] Saved Model

2020-04-11 23:01:30 INFO [stats.py:111] snake-04_SnakeA: Step: 1150000. Time Elapsed: 20039.368 s Mean Reward: -0.636. Std of Reward: 1.367. Training.

2020-04-11 23:01:30 INFO [stats.py:116] snake-04_SnakeA ELO: 1151.967.

2020-04-11 23:04:57 INFO [stats.py:111] snake-04_SnakeA: Step: 1160000. Time Elapsed: 20246.637 s Mean Reward: -0.091. Std of Reward: 1.379. Training.

2020-04-11 23:04:57 INFO [stats.py:116] snake-04_SnakeA ELO: 1150.728.

2020-04-11 23:07:56 INFO [stats.py:111] snake-04_SnakeA: Step: 1170000. Time Elapsed: 20425.704 s Mean Reward: -0.462. Std of Reward: 1.447. Training.

2020-04-11 23:07:56 INFO [stats.py:116] snake-04_SnakeA ELO: 1151.245.

2020-04-11 23:11:07 INFO [stats.py:111] snake-04_SnakeA: Step: 1180000. Time Elapsed: 20616.655 s Mean Reward: 0.083. Std of Reward: 1.320. Training.

2020-04-11 23:11:07 INFO [stats.py:116] snake-04_SnakeA ELO: 1150.758.

2020-04-11 23:14:28 INFO [stats.py:111] snake-04_SnakeA: Step: 1190000. Time Elapsed: 20817.958 s Mean Reward: -0.154. Std of Reward: 1.460. Training.

2020-04-11 23:14:28 INFO [stats.py:116] snake-04_SnakeA ELO: 1150.670.

2020-04-11 23:17:04 INFO [trainer_controller.py:100] Saved Model

2020-04-11 23:17:43 INFO [stats.py:111] snake-04_SnakeA: Step: 1200000. Time Elapsed: 21012.677 s Mean Reward: 0.000. Std of Reward: 1.651. Training.

2020-04-11 23:17:43 INFO [stats.py:116] snake-04_SnakeA ELO: 1149.904.

2020-04-11 23:19:11 INFO [stats.py:111] snake-04_SnakeB: Step: 1020000. Time Elapsed: 21100.697 s Mean Reward: -0.200. Std of Reward: 1.470. Training.

2020-04-11 23:19:11 INFO [stats.py:116] snake-04_SnakeB ELO: 1171.715.

2020-04-11 23:22:22 INFO [stats.py:111] snake-04_SnakeB: Step: 1030000. Time Elapsed: 21291.565 s Mean Reward: -1.000. Std of Reward: 0.471. Training.

2020-04-11 23:22:22 INFO [stats.py:116] snake-04_SnakeB ELO: 1170.145.

2020-04-11 23:25:38 INFO [stats.py:111] snake-04_SnakeB: Step: 1040000. Time Elapsed: 21487.653 s Mean Reward: -0.062. Std of Reward: 1.784. Training.

2020-04-11 23:25:38 INFO [stats.py:116] snake-04_SnakeB ELO: 1167.505.

2020-04-11 23:28:48 INFO [stats.py:111] snake-04_SnakeB: Step: 1050000. Time Elapsed: 21677.219 s Mean Reward: -0.556. Std of Reward: 1.499. Training.

2020-04-11 23:28:48 INFO [stats.py:116] snake-04_SnakeB ELO: 1166.734.

2020-04-11 23:32:02 INFO [stats.py:111] snake-04_SnakeB: Step: 1060000. Time Elapsed: 21871.503 s Mean Reward: -0.273. Std of Reward: 1.135. Training.

2020-04-11 23:32:02 INFO [stats.py:116] snake-04_SnakeB ELO: 1163.598.

2020-04-11 23:33:06 INFO [trainer_controller.py:100] Saved Model

2020-04-11 23:35:09 INFO [stats.py:111] snake-04_SnakeB: Step: 1070000. Time Elapsed: 22058.822 s Mean Reward: -0.667. Std of Reward: 1.700. Training.

2020-04-11 23:35:09 INFO [stats.py:116] snake-04_SnakeB ELO: 1160.459.

2020-04-11 23:38:35 INFO [stats.py:111] snake-04_SnakeB: Step: 1080000. Time Elapsed: 22264.543 s Mean Reward: -0.235. Std of Reward: 1.628. Training.

2020-04-11 23:38:35 INFO [stats.py:116] snake-04_SnakeB ELO: 1158.593.

2020-04-11 23:41:42 INFO [stats.py:111] snake-04_SnakeB: Step: 1090000. Time Elapsed: 22451.168 s Mean Reward: -0.167. Std of Reward: 1.344. Training.

2020-04-11 23:41:42 INFO [stats.py:116] snake-04_SnakeB ELO: 1155.800.

2020-04-11 23:44:56 INFO [stats.py:111] snake-04_SnakeB: Step: 1100000. Time Elapsed: 22645.917 s Mean Reward: 0.583. Std of Reward: 1.706. Training.

2020-04-11 23:44:56 INFO [stats.py:116] snake-04_SnakeB ELO: 1154.976.

2020-04-11 23:48:03 INFO [stats.py:111] snake-04_SnakeB: Step: 1110000. Time Elapsed: 22832.392 s Mean Reward: -0.200. Std of Reward: 1.641. Training.

2020-04-11 23:48:03 INFO [stats.py:116] snake-04_SnakeB ELO: 1157.000.

2020-04-11 23:49:07 INFO [trainer_controller.py:100] Saved Model

2020-04-11 23:53:01 INFO [stats.py:111] snake-04_SnakeA: Step: 1210000. Time Elapsed: 23130.514 s Mean Reward: 0.125. Std of Reward: 1.364. Training.

2020-04-11 23:53:01 INFO [stats.py:116] snake-04_SnakeA ELO: 1165.965.

2020-04-11 23:56:02 INFO [stats.py:111] snake-04_SnakeA: Step: 1220000. Time Elapsed: 23311.548 s Mean Reward: -0.231. Std of Reward: 1.761. Training.

2020-04-11 23:56:02 INFO [stats.py:116] snake-04_SnakeA ELO: 1166.748.

2020-04-11 23:59:25 INFO [stats.py:111] snake-04_SnakeA: Step: 1230000. Time Elapsed: 23514.342 s Mean Reward: -0.385. Std of Reward: 1.389. Training.

2020-04-11 23:59:25 INFO [stats.py:116] snake-04_SnakeA ELO: 1164.529.

2020-04-12 00:02:41 INFO [stats.py:111] snake-04_SnakeA: Step: 1240000. Time Elapsed: 23710.870 s Mean Reward: -0.667. Std of Reward: 1.075. Training.

2020-04-12 00:02:41 INFO [stats.py:116] snake-04_SnakeA ELO: 1161.919.

2020-04-12 00:05:08 INFO [trainer_controller.py:100] Saved Model

2020-04-12 00:05:41 INFO [stats.py:111] snake-04_SnakeA: Step: 1250000. Time Elapsed: 23890.075 s Mean Reward: -0.300. Std of Reward: 1.616. Training.

2020-04-12 00:05:41 INFO [stats.py:116] snake-04_SnakeA ELO: 1159.095.

2020-04-12 00:09:06 INFO [stats.py:111] snake-04_SnakeA: Step: 1260000. Time Elapsed: 24095.517 s Mean Reward: -0.083. Std of Reward: 1.801. Training.

2020-04-12 00:09:06 INFO [stats.py:116] snake-04_SnakeA ELO: 1160.573.

2020-04-12 00:12:39 INFO [stats.py:111] snake-04_SnakeA: Step: 1270000. Time Elapsed: 24308.527 s Mean Reward: 0.083. Std of Reward: 1.605. Training.

2020-04-12 00:12:39 INFO [stats.py:116] snake-04_SnakeA ELO: 1158.920.

2020-04-12 00:15:35 INFO [stats.py:111] snake-04_SnakeA: Step: 1280000. Time Elapsed: 24484.479 s Mean Reward: -0.923. Std of Reward: 1.492. Training.

2020-04-12 00:15:35 INFO [stats.py:116] snake-04_SnakeA ELO: 1157.934.

2020-04-12 00:18:56 INFO [stats.py:111] snake-04_SnakeA: Step: 1290000. Time Elapsed: 24685.957 s Mean Reward: 0.450. Std of Reward: 1.857. Training.

2020-04-12 00:18:56 INFO [stats.py:116] snake-04_SnakeA ELO: 1158.580.

2020-04-12 00:21:29 INFO [trainer_controller.py:100] Saved Model

2020-04-12 00:22:02 INFO [stats.py:111] snake-04_SnakeA: Step: 1300000. Time Elapsed: 24871.159 s Mean Reward: 0.333. Std of Reward: 1.247. Training.

2020-04-12 00:22:02 INFO [stats.py:116] snake-04_SnakeA ELO: 1156.593.

2020-04-12 00:23:51 INFO [stats.py:111] snake-04_SnakeB: Step: 1120000. Time Elapsed: 24980.555 s Mean Reward: -0.211. Std of Reward: 1.507. Training.

2020-04-12 00:23:51 INFO [stats.py:116] snake-04_SnakeB ELO: 1156.145.

2020-04-12 00:27:04 INFO [stats.py:111] snake-04_SnakeB: Step: 1130000. Time Elapsed: 25174.014 s Mean Reward: -0.286. Std of Reward: 1.868. Training.

2020-04-12 00:27:04 INFO [stats.py:116] snake-04_SnakeB ELO: 1159.499.

2020-04-12 00:30:11 INFO [stats.py:111] snake-04_SnakeB: Step: 1140000. Time Elapsed: 25360.208 s Mean Reward: -0.111. Std of Reward: 1.286. Training.

2020-04-12 00:30:11 INFO [stats.py:116] snake-04_SnakeB ELO: 1159.085.

2020-04-12 00:33:23 INFO [stats.py:111] snake-04_SnakeB: Step: 1150000. Time Elapsed: 25552.456 s Mean Reward: -0.462. Std of Reward: 1.393. Training.

2020-04-12 00:33:23 INFO [stats.py:116] snake-04_SnakeB ELO: 1159.264.

2020-04-12 00:36:45 INFO [stats.py:111] snake-04_SnakeB: Step: 1160000. Time Elapsed: 25754.860 s Mean Reward: -0.444. Std of Reward: 1.674. Training.

2020-04-12 00:36:45 INFO [stats.py:116] snake-04_SnakeB ELO: 1156.413.

2020-04-12 00:37:27 INFO [trainer_controller.py:100] Saved Model

2020-04-12 00:39:51 INFO [stats.py:111] snake-04_SnakeB: Step: 1170000. Time Elapsed: 25940.540 s Mean Reward: 0.615. Std of Reward: 1.211. Training.

2020-04-12 00:39:51 INFO [stats.py:116] snake-04_SnakeB ELO: 1155.084.

2020-04-12 00:43:05 INFO [stats.py:111] snake-04_SnakeB: Step: 1180000. Time Elapsed: 26134.058 s Mean Reward: -0.846. Std of Reward: 1.657. Training.

2020-04-12 00:43:05 INFO [stats.py:116] snake-04_SnakeB ELO: 1155.048.

2020-04-12 00:46:29 INFO [stats.py:111] snake-04_SnakeB: Step: 1190000. Time Elapsed: 26338.495 s Mean Reward: -0.067. Std of Reward: 1.526. Training.

2020-04-12 00:46:29 INFO [stats.py:116] snake-04_SnakeB ELO: 1153.108.

2020-04-12 00:49:43 INFO [stats.py:111] snake-04_SnakeB: Step: 1200000. Time Elapsed: 26533.013 s Mean Reward: -0.357. Std of Reward: 1.394. Training.

2020-04-12 00:49:43 INFO [stats.py:116] snake-04_SnakeB ELO: 1152.770.

2020-04-12 00:52:43 INFO [stats.py:111] snake-04_SnakeB: Step: 1210000. Time Elapsed: 26712.894 s Mean Reward: -0.250. Std of Reward: 1.785. Training.

2020-04-12 00:52:43 INFO [stats.py:116] snake-04_SnakeB ELO: 1151.588.

2020-04-12 00:53:28 INFO [trainer_controller.py:100] Saved Model

2020-04-12 00:55:52 INFO [stats.py:111] snake-04_SnakeB: Step: 1220000. Time Elapsed: 26901.646 s Mean Reward: 0.000. Std of Reward: 1.592. Training.

2020-04-12 00:55:52 INFO [stats.py:116] snake-04_SnakeB ELO: 1149.928.

2020-04-12 00:57:43 INFO [stats.py:111] snake-04_SnakeA: Step: 1310000. Time Elapsed: 27012.907 s Mean Reward: -0.222. Std of Reward: 1.685. Training.

2020-04-12 00:57:43 INFO [stats.py:116] snake-04_SnakeA ELO: 1157.049.

2020-04-12 01:00:50 INFO [stats.py:111] snake-04_SnakeA: Step: 1320000. Time Elapsed: 27199.294 s Mean Reward: -0.667. Std of Reward: 1.491. Training.

2020-04-12 01:00:50 INFO [stats.py:116] snake-04_SnakeA ELO: 1159.557.

2020-04-12 01:04:08 INFO [stats.py:111] snake-04_SnakeA: Step: 1330000. Time Elapsed: 27397.509 s Mean Reward: -0.053. Std of Reward: 1.317. Training.

2020-04-12 01:04:08 INFO [stats.py:116] snake-04_SnakeA ELO: 1158.290.

2020-04-12 01:07:14 INFO [stats.py:111] snake-04_SnakeA: Step: 1340000. Time Elapsed: 27583.939 s Mean Reward: -0.889. Std of Reward: 1.370. Training.

2020-04-12 01:07:14 INFO [stats.py:116] snake-04_SnakeA ELO: 1155.745.

2020-04-12 01:09:26 INFO [trainer_controller.py:100] Saved Model

2020-04-12 01:10:21 INFO [stats.py:111] snake-04_SnakeA: Step: 1350000. Time Elapsed: 27770.227 s Mean Reward: -0.533. Std of Reward: 1.668. Training.

2020-04-12 01:10:21 INFO [stats.py:116] snake-04_SnakeA ELO: 1152.737.

2020-04-12 01:13:37 INFO [stats.py:111] snake-04_SnakeA: Step: 1360000. Time Elapsed: 27966.708 s Mean Reward: 0.000. Std of Reward: 1.519. Training.

2020-04-12 01:13:37 INFO [stats.py:116] snake-04_SnakeA ELO: 1154.140.

2020-04-12 01:16:44 INFO [stats.py:111] snake-04_SnakeA: Step: 1370000. Time Elapsed: 28153.638 s Mean Reward: 0.000. Std of Reward: 1.342. Training.

2020-04-12 01:16:44 INFO [stats.py:116] snake-04_SnakeA ELO: 1154.694.

2020-04-12 01:19:53 INFO [stats.py:111] snake-04_SnakeA: Step: 1380000. Time Elapsed: 28342.753 s Mean Reward: -0.200. Std of Reward: 1.990. Training.

2020-04-12 01:19:53 INFO [stats.py:116] snake-04_SnakeA ELO: 1154.708.

2020-04-12 01:23:17 INFO [stats.py:111] snake-04_SnakeA: Step: 1390000. Time Elapsed: 28546.455 s Mean Reward: -0.500. Std of Reward: 1.740. Training.

2020-04-12 01:23:17 INFO [stats.py:116] snake-04_SnakeA ELO: 1153.353.

2020-04-12 01:25:28 INFO [trainer_controller.py:100] Saved Model

2020-04-12 01:26:27 INFO [stats.py:111] snake-04_SnakeA: Step: 1400000. Time Elapsed: 28736.979 s Mean Reward: -0.353. Std of Reward: 1.678. Training.

2020-04-12 01:26:27 INFO [stats.py:116] snake-04_SnakeA ELO: 1153.930.

2020-04-12 01:31:16 INFO [stats.py:111] snake-04_SnakeB: Step: 1230000. Time Elapsed: 29025.535 s Mean Reward: -0.053. Std of Reward: 1.356. Training.

2020-04-12 01:31:16 INFO [stats.py:116] snake-04_SnakeB ELO: 1154.351.

2020-04-12 01:34:41 INFO [stats.py:111] snake-04_SnakeB: Step: 1240000. Time Elapsed: 29230.166 s Mean Reward: -0.600. Std of Reward: 1.583. Training.

2020-04-12 01:34:41 INFO [stats.py:116] snake-04_SnakeB ELO: 1153.625.

2020-04-12 01:37:41 INFO [stats.py:111] snake-04_SnakeB: Step: 1250000. Time Elapsed: 29410.466 s Mean Reward: -0.800. Std of Reward: 1.327. Training.

2020-04-12 01:37:41 INFO [stats.py:116] snake-04_SnakeB ELO: 1150.649.

2020-04-12 01:41:00 INFO [stats.py:111] snake-04_SnakeB: Step: 1260000. Time Elapsed: 29609.695 s Mean Reward: -0.600. Std of Reward: 1.665. Training.

2020-04-12 01:41:00 INFO [stats.py:116] snake-04_SnakeB ELO: 1147.895.

2020-04-12 01:41:28 INFO [trainer_controller.py:100] Saved Model

2020-04-12 01:44:09 INFO [stats.py:111] snake-04_SnakeB: Step: 1270000. Time Elapsed: 29798.619 s Mean Reward: -0.667. Std of Reward: 1.563. Training.

2020-04-12 01:44:09 INFO [stats.py:116] snake-04_SnakeB ELO: 1147.175.

2020-04-12 01:47:23 INFO [stats.py:111] snake-04_SnakeB: Step: 1280000. Time Elapsed: 29992.961 s Mean Reward: -0.357. Std of Reward: 1.586. Training.

2020-04-12 01:47:23 INFO [stats.py:116] snake-04_SnakeB ELO: 1147.803.

2020-04-12 01:50:27 INFO [stats.py:111] snake-04_SnakeB: Step: 1290000. Time Elapsed: 30176.823 s Mean Reward: -1.091. Std of Reward: 1.311. Training.

2020-04-12 01:50:27 INFO [stats.py:116] snake-04_SnakeB ELO: 1147.055.

2020-04-12 01:53:54 INFO [stats.py:111] snake-04_SnakeB: Step: 1300000. Time Elapsed: 30383.445 s Mean Reward: -0.846. Std of Reward: 1.099. Training.

2020-04-12 01:53:54 INFO [stats.py:116] snake-04_SnakeB ELO: 1143.994.

2020-04-12 01:56:52 INFO [stats.py:111] snake-04_SnakeB: Step: 1310000. Time Elapsed: 30561.179 s Mean Reward: -0.455. Std of Reward: 1.437. Training.

2020-04-12 01:56:52 INFO [stats.py:116] snake-04_SnakeB ELO: 1142.274.

2020-04-12 01:57:28 INFO [trainer_controller.py:100] Saved Model

2020-04-12 02:00:15 INFO [stats.py:111] snake-04_SnakeB: Step: 1320000. Time Elapsed: 30764.200 s Mean Reward: -0.333. Std of Reward: 1.300. Training.

2020-04-12 02:00:15 INFO [stats.py:116] snake-04_SnakeB ELO: 1139.901.

2020-04-12 02:01:54 INFO [stats.py:111] snake-04_SnakeA: Step: 1410000. Time Elapsed: 30863.609 s Mean Reward: 0.167. Std of Reward: 1.280. Training.

2020-04-12 02:01:54 INFO [stats.py:116] snake-04_SnakeA ELO: 1155.790.

2020-04-12 02:05:07 INFO [stats.py:111] snake-04_SnakeA: Step: 1420000. Time Elapsed: 31056.080 s Mean Reward: 0.000. Std of Reward: 1.633. Training.

2020-04-12 02:05:07 INFO [stats.py:116] snake-04_SnakeA ELO: 1160.270.

2020-04-12 02:08:21 INFO [stats.py:111] snake-04_SnakeA: Step: 1430000. Time Elapsed: 31250.652 s Mean Reward: 0.000. Std of Reward: 1.683. Training.

2020-04-12 02:08:21 INFO [stats.py:116] snake-04_SnakeA ELO: 1160.316.

2020-04-12 02:11:20 INFO [stats.py:111] snake-04_SnakeA: Step: 1440000. Time Elapsed: 31429.261 s Mean Reward: 0.100. Std of Reward: 1.578. Training.

2020-04-12 02:11:20 INFO [stats.py:116] snake-04_SnakeA ELO: 1159.025.

2020-04-12 02:13:31 INFO [trainer_controller.py:100] Saved Model

2020-04-12 02:14:35 INFO [stats.py:111] snake-04_SnakeA: Step: 1450000. Time Elapsed: 31624.282 s Mean Reward: 0.273. Std of Reward: 1.286. Training.

2020-04-12 02:14:35 INFO [stats.py:116] snake-04_SnakeA ELO: 1158.857.

2020-04-12 02:17:44 INFO [stats.py:111] snake-04_SnakeA: Step: 1460000. Time Elapsed: 31813.890 s Mean Reward: -0.100. Std of Reward: 1.700. Training.

2020-04-12 02:17:44 INFO [stats.py:116] snake-04_SnakeA ELO: 1157.633.

2020-04-12 02:21:05 INFO [stats.py:111] snake-04_SnakeA: Step: 1470000. Time Elapsed: 32014.860 s Mean Reward: -0.214. Std of Reward: 1.423. Training.

2020-04-12 02:21:05 INFO [stats.py:116] snake-04_SnakeA ELO: 1157.586.

2020-04-12 02:24:30 INFO [stats.py:111] snake-04_SnakeA: Step: 1480000. Time Elapsed: 32219.718 s Mean Reward: 0.222. Std of Reward: 1.872. Training.

2020-04-12 02:24:30 INFO [stats.py:116] snake-04_SnakeA ELO: 1155.864.

2020-04-12 02:27:37 INFO [stats.py:111] snake-04_SnakeA: Step: 1490000. Time Elapsed: 32406.337 s Mean Reward: 0.222. Std of Reward: 1.750. Training.

2020-04-12 02:27:37 INFO [stats.py:116] snake-04_SnakeA ELO: 1157.937.

2020-04-12 02:29:37 INFO [trainer_controller.py:100] Saved Model

2020-04-12 02:30:47 INFO [stats.py:111] snake-04_SnakeA: Step: 1500000. Time Elapsed: 32596.529 s Mean Reward: -0.727. Std of Reward: 1.213. Training.

2020-04-12 02:30:47 INFO [stats.py:116] snake-04_SnakeA ELO: 1156.351.

2020-04-12 02:35:55 INFO [stats.py:111] snake-04_SnakeB: Step: 1330000. Time Elapsed: 32904.088 s Mean Reward: 0.500. Std of Reward: 1.258. Training.

2020-04-12 02:35:55 INFO [stats.py:116] snake-04_SnakeB ELO: 1141.081.

2020-04-12 02:39:13 INFO [stats.py:111] snake-04_SnakeB: Step: 1340000. Time Elapsed: 33102.586 s Mean Reward: -0.583. Std of Reward: 1.656. Training.

2020-04-12 02:39:13 INFO [stats.py:116] snake-04_SnakeB ELO: 1139.342.

2020-04-12 02:42:19 INFO [stats.py:111] snake-04_SnakeB: Step: 1350000. Time Elapsed: 33288.111 s Mean Reward: 0.000. Std of Reward: 1.183. Training.

2020-04-12 02:42:19 INFO [stats.py:116] snake-04_SnakeB ELO: 1134.991.

2020-04-12 02:45:44 INFO [stats.py:111] snake-04_SnakeB: Step: 1360000. Time Elapsed: 33493.774 s Mean Reward: -0.250. Std of Reward: 1.436. Training.

2020-04-12 02:45:44 INFO [stats.py:116] snake-04_SnakeB ELO: 1132.011.

2020-04-12 02:45:48 INFO [trainer_controller.py:100] Saved Model

2020-04-12 02:48:52 INFO [stats.py:111] snake-04_SnakeB: Step: 1370000. Time Elapsed: 33681.319 s Mean Reward: -0.600. Std of Reward: 1.356. Training.

2020-04-12 02:48:52 INFO [stats.py:116] snake-04_SnakeB ELO: 1129.378.

2020-04-12 02:52:06 INFO [stats.py:111] snake-04_SnakeB: Step: 1380000. Time Elapsed: 33875.392 s Mean Reward: -0.667. Std of Reward: 1.776. Training.

2020-04-12 02:52:06 INFO [stats.py:116] snake-04_SnakeB ELO: 1127.144.

2020-04-12 02:55:13 INFO [stats.py:111] snake-04_SnakeB: Step: 1390000. Time Elapsed: 34062.104 s Mean Reward: 0.231. Std of Reward: 2.154. Training.

2020-04-12 02:55:13 INFO [stats.py:116] snake-04_SnakeB ELO: 1126.916.

2020-04-12 02:58:26 INFO [stats.py:111] snake-04_SnakeB: Step: 1400000. Time Elapsed: 34255.340 s Mean Reward: -0.600. Std of Reward: 1.428. Training.

2020-04-12 02:58:26 INFO [stats.py:116] snake-04_SnakeB ELO: 1127.256.

2020-04-12 03:01:46 INFO [stats.py:111] snake-04_SnakeB: Step: 1410000. Time Elapsed: 34455.941 s Mean Reward: 0.308. Std of Reward: 1.856. Training.

2020-04-12 03:01:46 INFO [stats.py:116] snake-04_SnakeB ELO: 1127.327.

2020-04-12 03:01:50 INFO [trainer_controller.py:100] Saved Model

2020-04-12 03:05:01 INFO [stats.py:111] snake-04_SnakeB: Step: 1420000. Time Elapsed: 34650.378 s Mean Reward: -0.889. Std of Reward: 0.994. Training.

2020-04-12 03:05:01 INFO [stats.py:116] snake-04_SnakeB ELO: 1126.332.

2020-04-12 03:06:11 INFO [stats.py:111] snake-04_SnakeA: Step: 1510000. Time Elapsed: 34720.205 s Mean Reward: -0.333. Std of Reward: 1.333. Training.

2020-04-12 03:06:11 INFO [stats.py:116] snake-04_SnakeA ELO: 1153.556.

2020-04-12 03:09:33 INFO [stats.py:111] snake-04_SnakeA: Step: 1520000. Time Elapsed: 34922.186 s Mean Reward: -0.353. Std of Reward: 1.570. Training.

2020-04-12 03:09:33 INFO [stats.py:116] snake-04_SnakeA ELO: 1151.561.

2020-04-12 03:12:43 INFO [stats.py:111] snake-04_SnakeA: Step: 1530000. Time Elapsed: 35112.729 s Mean Reward: 0.316. Std of Reward: 1.893. Training.

2020-04-12 03:12:43 INFO [stats.py:116] snake-04_SnakeA ELO: 1154.236.

2020-04-12 03:15:57 INFO [stats.py:111] snake-04_SnakeA: Step: 1540000. Time Elapsed: 35306.703 s Mean Reward: -0.500. Std of Reward: 1.360. Training.

2020-04-12 03:15:57 INFO [stats.py:116] snake-04_SnakeA ELO: 1153.129.

2020-04-12 03:17:47 INFO [trainer_controller.py:100] Saved Model

2020-04-12 03:19:16 INFO [stats.py:111] snake-04_SnakeA: Step: 1550000. Time Elapsed: 35505.269 s Mean Reward: -0.231. Std of Reward: 1.310. Training.

2020-04-12 03:19:16 INFO [stats.py:116] snake-04_SnakeA ELO: 1151.528.

2020-04-12 03:22:23 INFO [stats.py:111] snake-04_SnakeA: Step: 1560000. Time Elapsed: 35692.225 s Mean Reward: -0.500. Std of Reward: 1.384. Training.

2020-04-12 03:22:23 INFO [stats.py:116] snake-04_SnakeA ELO: 1151.601.

2020-04-12 03:25:28 INFO [stats.py:111] snake-04_SnakeA: Step: 1570000. Time Elapsed: 35877.962 s Mean Reward: 0.000. Std of Reward: 1.758. Training.

2020-04-12 03:25:28 INFO [stats.py:116] snake-04_SnakeA ELO: 1150.880.

2020-04-12 03:28:50 INFO [stats.py:111] snake-04_SnakeA: Step: 1580000. Time Elapsed: 36079.955 s Mean Reward: 0.100. Std of Reward: 1.044. Training.

2020-04-12 03:28:50 INFO [stats.py:116] snake-04_SnakeA ELO: 1149.522.

2020-04-12 03:31:56 INFO [stats.py:111] snake-04_SnakeA: Step: 1590000. Time Elapsed: 36265.047 s Mean Reward: -0.250. Std of Reward: 1.689. Training.

2020-04-12 03:31:56 INFO [stats.py:116] snake-04_SnakeA ELO: 1149.447.

2020-04-12 03:33:48 INFO [trainer_controller.py:100] Saved Model

2020-04-12 03:35:00 INFO [stats.py:111] snake-04_SnakeA: Step: 1600000. Time Elapsed: 36449.448 s Mean Reward: 0.400. Std of Reward: 0.917. Training.

2020-04-12 03:35:00 INFO [stats.py:116] snake-04_SnakeA ELO: 1145.790.

2020-04-12 03:40:24 INFO [stats.py:111] snake-04_SnakeB: Step: 1430000. Time Elapsed: 36773.770 s Mean Reward: -0.615. Std of Reward: 1.389. Training.

2020-04-12 03:40:24 INFO [stats.py:116] snake-04_SnakeB ELO: 1131.809.

2020-04-12 03:43:50 INFO [stats.py:111] snake-04_SnakeB: Step: 1440000. Time Elapsed: 36980.028 s Mean Reward: -0.533. Std of Reward: 1.204. Training.

2020-04-12 03:43:50 INFO [stats.py:116] snake-04_SnakeB ELO: 1129.131.

2020-04-12 03:46:56 INFO [stats.py:111] snake-04_SnakeB: Step: 1450000. Time Elapsed: 37165.895 s Mean Reward: -1.000. Std of Reward: 1.155. Training.

2020-04-12 03:46:56 INFO [stats.py:116] snake-04_SnakeB ELO: 1127.143.

2020-04-12 03:49:58 INFO [trainer_controller.py:100] Saved Model

2020-04-12 03:50:07 INFO [stats.py:111] snake-04_SnakeB: Step: 1460000. Time Elapsed: 37356.800 s Mean Reward: 0.300. Std of Reward: 1.616. Training.

2020-04-12 03:50:07 INFO [stats.py:116] snake-04_SnakeB ELO: 1127.676.

2020-04-12 03:53:21 INFO [stats.py:111] snake-04_SnakeB: Step: 1470000. Time Elapsed: 37551.006 s Mean Reward: 0.214. Std of Reward: 1.567. Training.

2020-04-12 03:53:21 INFO [stats.py:116] snake-04_SnakeB ELO: 1125.781.

2020-04-12 03:56:38 INFO [stats.py:111] snake-04_SnakeB: Step: 1480000. Time Elapsed: 37747.352 s Mean Reward: -0.500. Std of Reward: 1.722. Training.

2020-04-12 03:56:38 INFO [stats.py:116] snake-04_SnakeB ELO: 1124.364.

2020-04-12 03:59:41 INFO [stats.py:111] snake-04_SnakeB: Step: 1490000. Time Elapsed: 37930.231 s Mean Reward: -1.111. Std of Reward: 1.197. Training.

2020-04-12 03:59:41 INFO [stats.py:116] snake-04_SnakeB ELO: 1120.950.

2020-04-12 04:03:01 INFO [stats.py:111] snake-04_SnakeB: Step: 1500000. Time Elapsed: 38130.410 s Mean Reward: 0.154. Std of Reward: 1.703. Training.

2020-04-12 04:03:01 INFO [stats.py:116] snake-04_SnakeB ELO: 1119.407.

2020-04-12 04:05:56 INFO [trainer_controller.py:100] Saved Model

2020-04-12 04:06:13 INFO [stats.py:111] snake-04_SnakeB: Step: 1510000. Time Elapsed: 38322.469 s Mean Reward: -0.818. Std of Reward: 1.113. Training.

2020-04-12 04:06:13 INFO [stats.py:116] snake-04_SnakeB ELO: 1116.038.

2020-04-12 04:09:34 INFO [stats.py:111] snake-04_SnakeB: Step: 1520000. Time Elapsed: 38523.468 s Mean Reward: -0.200. Std of Reward: 1.327. Training.

2020-04-12 04:09:34 INFO [stats.py:116] snake-04_SnakeB ELO: 1116.631.

2020-04-12 04:10:48 INFO [stats.py:111] snake-04_SnakeA: Step: 1610000. Time Elapsed: 38597.727 s Mean Reward: -0.533. Std of Reward: 1.628. Training.

2020-04-12 04:10:48 INFO [stats.py:116] snake-04_SnakeA ELO: 1145.695.

2020-04-12 04:14:09 INFO [stats.py:111] snake-04_SnakeA: Step: 1620000. Time Elapsed: 38798.908 s Mean Reward: 0.100. Std of Reward: 1.446. Training.

2020-04-12 04:14:09 INFO [stats.py:116] snake-04_SnakeA ELO: 1150.387.

2020-04-12 04:17:36 INFO [stats.py:111] snake-04_SnakeA: Step: 1630000. Time Elapsed: 39005.673 s Mean Reward: -0.364. Std of Reward: 1.666. Training.

2020-04-12 04:17:36 INFO [stats.py:116] snake-04_SnakeA ELO: 1149.909.

2020-04-12 04:20:49 INFO [stats.py:111] snake-04_SnakeA: Step: 1640000. Time Elapsed: 39198.362 s Mean Reward: 0.000. Std of Reward: 1.633. Training.

2020-04-12 04:20:49 INFO [stats.py:116] snake-04_SnakeA ELO: 1148.314.

2020-04-12 04:22:20 INFO [trainer_controller.py:100] Saved Model

2020-04-12 04:23:46 INFO [stats.py:111] snake-04_SnakeA: Step: 1650000. Time Elapsed: 39375.496 s Mean Reward: -0.100. Std of Reward: 1.972. Training.

2020-04-12 04:23:46 INFO [stats.py:116] snake-04_SnakeA ELO: 1146.984.

2020-04-12 04:27:08 INFO [stats.py:111] snake-04_SnakeA: Step: 1660000. Time Elapsed: 39577.675 s Mean Reward: 0.000. Std of Reward: 1.852. Training.

2020-04-12 04:27:08 INFO [stats.py:116] snake-04_SnakeA ELO: 1145.823.

2020-04-12 04:30:13 INFO [stats.py:111] snake-04_SnakeA: Step: 1670000. Time Elapsed: 39762.695 s Mean Reward: 0.000. Std of Reward: 1.275. Training.

2020-04-12 04:30:13 INFO [stats.py:116] snake-04_SnakeA ELO: 1144.808.

2020-04-12 04:33:41 INFO [stats.py:111] snake-04_SnakeA: Step: 1680000. Time Elapsed: 39970.160 s Mean Reward: -0.846. Std of Reward: 1.747. Training.

2020-04-12 04:33:41 INFO [stats.py:116] snake-04_SnakeA ELO: 1141.466.

2020-04-12 04:36:41 INFO [stats.py:111] snake-04_SnakeA: Step: 1690000. Time Elapsed: 40150.071 s Mean Reward: 0.111. Std of Reward: 1.370. Training.

2020-04-12 04:36:41 INFO [stats.py:116] snake-04_SnakeA ELO: 1140.195.

2020-04-12 04:38:23 INFO [trainer_controller.py:100] Saved Model

2020-04-12 04:39:58 INFO [stats.py:111] snake-04_SnakeA: Step: 1700000. Time Elapsed: 40347.428 s Mean Reward: -0.250. Std of Reward: 1.601. Training.

2020-04-12 04:39:58 INFO [stats.py:116] snake-04_SnakeA ELO: 1139.269.

2020-04-12 04:45:13 INFO [stats.py:111] snake-04_SnakeB: Step: 1530000. Time Elapsed: 40662.481 s Mean Reward: 0.133. Std of Reward: 1.586. Training.

2020-04-12 04:45:13 INFO [stats.py:116] snake-04_SnakeB ELO: 1127.101.

2020-04-12 04:48:29 INFO [stats.py:111] snake-04_SnakeB: Step: 1540000. Time Elapsed: 40858.889 s Mean Reward: -0.273. Std of Reward: 1.601. Training.

2020-04-12 04:48:29 INFO [stats.py:116] snake-04_SnakeB ELO: 1126.854.

2020-04-12 04:51:24 INFO [stats.py:111] snake-04_SnakeB: Step: 1550000. Time Elapsed: 41033.830 s Mean Reward: -0.538. Std of Reward: 1.550. Training.

2020-04-12 04:51:24 INFO [stats.py:116] snake-04_SnakeB ELO: 1126.889.

2020-04-12 04:54:19 INFO [trainer_controller.py:100] Saved Model

2020-04-12 04:54:35 INFO [stats.py:111] snake-04_SnakeB: Step: 1560000. Time Elapsed: 41224.517 s Mean Reward: -0.600. Std of Reward: 1.800. Training.

2020-04-12 04:54:35 INFO [stats.py:116] snake-04_SnakeB ELO: 1125.519.

2020-04-12 04:57:49 INFO [stats.py:111] snake-04_SnakeB: Step: 1570000. Time Elapsed: 41418.427 s Mean Reward: -0.286. Std of Reward: 1.943. Training.

2020-04-12 04:57:49 INFO [stats.py:116] snake-04_SnakeB ELO: 1124.214.

2020-04-12 05:00:59 INFO [stats.py:111] snake-04_SnakeB: Step: 1580000. Time Elapsed: 41608.310 s Mean Reward: 0.000. Std of Reward: 1.541. Training.

2020-04-12 05:00:59 INFO [stats.py:116] snake-04_SnakeB ELO: 1125.151.

2020-04-12 05:04:09 INFO [stats.py:111] snake-04_SnakeB: Step: 1590000. Time Elapsed: 41798.193 s Mean Reward: 1.000. Std of Reward: 1.291. Training.

2020-04-12 05:04:09 INFO [stats.py:116] snake-04_SnakeB ELO: 1123.388.

2020-04-12 05:07:21 INFO [stats.py:111] snake-04_SnakeB: Step: 1600000. Time Elapsed: 41990.951 s Mean Reward: -0.400. Std of Reward: 1.562. Training.

2020-04-12 05:07:21 INFO [stats.py:116] snake-04_SnakeB ELO: 1122.976.

2020-04-12 05:10:16 INFO [trainer_controller.py:100] Saved Model

2020-04-12 05:10:38 INFO [stats.py:111] snake-04_SnakeB: Step: 1610000. Time Elapsed: 42187.461 s Mean Reward: -0.267. Std of Reward: 1.340. Training.

2020-04-12 05:10:38 INFO [stats.py:116] snake-04_SnakeB ELO: 1120.821.

2020-04-12 05:13:43 INFO [stats.py:111] snake-04_SnakeB: Step: 1620000. Time Elapsed: 42372.960 s Mean Reward: -0.750. Std of Reward: 1.738. Training.

2020-04-12 05:13:43 INFO [stats.py:116] snake-04_SnakeB ELO: 1117.986.

2020-04-12 05:15:10 INFO [stats.py:111] snake-04_SnakeA: Step: 1710000. Time Elapsed: 42459.101 s Mean Reward: 0.111. Std of Reward: 2.079. Training.

2020-04-12 05:15:10 INFO [stats.py:116] snake-04_SnakeA ELO: 1138.475.

2020-04-12 05:18:13 INFO [stats.py:111] snake-04_SnakeA: Step: 1720000. Time Elapsed: 42643.032 s Mean Reward: 0.308. Std of Reward: 1.136. Training.

2020-04-12 05:18:13 INFO [stats.py:116] snake-04_SnakeA ELO: 1138.359.

2020-04-12 05:21:41 INFO [stats.py:111] snake-04_SnakeA: Step: 1730000. Time Elapsed: 42850.160 s Mean Reward: 0.333. Std of Reward: 1.660. Training.

2020-04-12 05:21:41 INFO [stats.py:116] snake-04_SnakeA ELO: 1136.779.

2020-04-12 05:24:50 INFO [stats.py:111] snake-04_SnakeA: Step: 1740000. Time Elapsed: 43039.579 s Mean Reward: -0.111. Std of Reward: 1.286. Training.

2020-04-12 05:24:50 INFO [stats.py:116] snake-04_SnakeA ELO: 1133.651.

2020-04-12 05:26:11 INFO [trainer_controller.py:100] Saved Model

2020-04-12 05:27:52 INFO [stats.py:111] snake-04_SnakeA: Step: 1750000. Time Elapsed: 43221.244 s Mean Reward: 0.308. Std of Reward: 1.635. Training.

2020-04-12 05:27:52 INFO [stats.py:116] snake-04_SnakeA ELO: 1132.621.

2020-04-12 05:31:02 INFO [stats.py:111] snake-04_SnakeA: Step: 1760000. Time Elapsed: 43411.871 s Mean Reward: 0.077. Std of Reward: 1.439. Training.

2020-04-12 05:31:02 INFO [stats.py:116] snake-04_SnakeA ELO: 1132.136.

2020-04-12 05:34:14 INFO [stats.py:111] snake-04_SnakeA: Step: 1770000. Time Elapsed: 43603.327 s Mean Reward: -0.900. Std of Reward: 1.300. Training.

2020-04-12 05:34:14 INFO [stats.py:116] snake-04_SnakeA ELO: 1131.740.

2020-04-12 05:37:24 INFO [stats.py:111] snake-04_SnakeA: Step: 1780000. Time Elapsed: 43793.586 s Mean Reward: -0.900. Std of Reward: 1.578. Training.

2020-04-12 05:37:24 INFO [stats.py:116] snake-04_SnakeA ELO: 1130.553.

2020-04-12 05:40:35 INFO [stats.py:111] snake-04_SnakeA: Step: 1790000. Time Elapsed: 43984.048 s Mean Reward: -0.133. Std of Reward: 1.784. Training.

2020-04-12 05:40:35 INFO [stats.py:116] snake-04_SnakeA ELO: 1129.343.

2020-04-12 05:42:04 INFO [trainer_controller.py:100] Saved Model

2020-04-12 05:43:48 INFO [stats.py:111] snake-04_SnakeA: Step: 1800000. Time Elapsed: 44177.412 s Mean Reward: 0.000. Std of Reward: 1.572. Training.

2020-04-12 05:43:48 INFO [stats.py:116] snake-04_SnakeA ELO: 1129.174.

2020-04-12 05:48:54 INFO [stats.py:111] snake-04_SnakeB: Step: 1630000. Time Elapsed: 44483.068 s Mean Reward: -1.000. Std of Reward: 1.069. Training.

2020-04-12 05:48:54 INFO [stats.py:116] snake-04_SnakeB ELO: 1117.618.

2020-04-12 05:52:05 INFO [stats.py:111] snake-04_SnakeB: Step: 1640000. Time Elapsed: 44674.869 s Mean Reward: -0.600. Std of Reward: 1.583. Training.

2020-04-12 05:52:05 INFO [stats.py:116] snake-04_SnakeB ELO: 1116.484.

2020-04-12 05:55:11 INFO [stats.py:111] snake-04_SnakeB: Step: 1650000. Time Elapsed: 44860.186 s Mean Reward: -0.462. Std of Reward: 1.737. Training.

2020-04-12 05:55:11 INFO [stats.py:116] snake-04_SnakeB ELO: 1115.036.

2020-04-12 05:57:59 INFO [trainer_controller.py:100] Saved Model

2020-04-12 05:58:29 INFO [stats.py:111] snake-04_SnakeB: Step: 1660000. Time Elapsed: 45058.630 s Mean Reward: -1.308. Std of Reward: 1.136. Training.

2020-04-12 05:58:29 INFO [stats.py:116] snake-04_SnakeB ELO: 1113.030.

2020-04-12 06:01:38 INFO [stats.py:111] snake-04_SnakeB: Step: 1670000. Time Elapsed: 45247.355 s Mean Reward: 0.077. Std of Reward: 1.328. Training.

2020-04-12 06:01:38 INFO [stats.py:116] snake-04_SnakeB ELO: 1111.287.

2020-04-12 06:04:54 INFO [stats.py:111] snake-04_SnakeB: Step: 1680000. Time Elapsed: 45443.128 s Mean Reward: -0.091. Std of Reward: 1.621. Training.

2020-04-12 06:04:54 INFO [stats.py:116] snake-04_SnakeB ELO: 1110.589.

2020-04-12 06:08:03 INFO [stats.py:111] snake-04_SnakeB: Step: 1690000. Time Elapsed: 45632.391 s Mean Reward: -1.091. Std of Reward: 1.164. Training.

2020-04-12 06:08:03 INFO [stats.py:116] snake-04_SnakeB ELO: 1109.980.

2020-04-12 06:11:12 INFO [stats.py:111] snake-04_SnakeB: Step: 1700000. Time Elapsed: 45821.215 s Mean Reward: -1.000. Std of Reward: 1.472. Training.

2020-04-12 06:11:12 INFO [stats.py:116] snake-04_SnakeB ELO: 1109.146.

2020-04-12 06:13:56 INFO [trainer_controller.py:100] Saved Model

2020-04-12 06:14:26 INFO [stats.py:111] snake-04_SnakeB: Step: 1710000. Time Elapsed: 46015.110 s Mean Reward: 0.083. Std of Reward: 1.656. Training.

2020-04-12 06:14:26 INFO [stats.py:116] snake-04_SnakeB ELO: 1109.244.

2020-04-12 06:17:42 INFO [stats.py:111] snake-04_SnakeB: Step: 1720000. Time Elapsed: 46211.128 s Mean Reward: -1.600. Std of Reward: 1.200. Training.

2020-04-12 06:17:42 INFO [stats.py:116] snake-04_SnakeB ELO: 1108.712.

2020-04-12 06:18:58 INFO [stats.py:111] snake-04_SnakeA: Step: 1810000. Time Elapsed: 46287.225 s Mean Reward: -0.933. Std of Reward: 1.652. Training.

2020-04-12 06:18:58 INFO [stats.py:116] snake-04_SnakeA ELO: 1126.790.

2020-04-12 06:22:07 INFO [stats.py:111] snake-04_SnakeA: Step: 1820000. Time Elapsed: 46476.931 s Mean Reward: 0.154. Std of Reward: 1.460. Training.

2020-04-12 06:22:07 INFO [stats.py:116] snake-04_SnakeA ELO: 1132.483.

2020-04-12 06:25:35 INFO [stats.py:111] snake-04_SnakeA: Step: 1830000. Time Elapsed: 46684.615 s Mean Reward: -0.133. Std of Reward: 1.258. Training.

2020-04-12 06:25:35 INFO [stats.py:116] snake-04_SnakeA ELO: 1131.841.

2020-04-12 06:28:34 INFO [stats.py:111] snake-04_SnakeA: Step: 1840000. Time Elapsed: 46863.234 s Mean Reward: 0.167. Std of Reward: 1.462. Training.

2020-04-12 06:28:34 INFO [stats.py:116] snake-04_SnakeA ELO: 1129.398.

2020-04-12 06:29:52 INFO [trainer_controller.py:100] Saved Model

2020-04-12 06:31:54 INFO [stats.py:111] snake-04_SnakeA: Step: 1850000. Time Elapsed: 47063.585 s Mean Reward: -0.294. Std of Reward: 1.774. Training.

2020-04-12 06:31:54 INFO [stats.py:116] snake-04_SnakeA ELO: 1127.901.

2020-04-12 06:35:07 INFO [stats.py:111] snake-04_SnakeA: Step: 1860000. Time Elapsed: 47256.382 s Mean Reward: -0.333. Std of Reward: 1.434. Training.

2020-04-12 06:35:07 INFO [stats.py:116] snake-04_SnakeA ELO: 1125.924.

2020-04-12 06:38:28 INFO [stats.py:111] snake-04_SnakeA: Step: 1870000. Time Elapsed: 47457.190 s Mean Reward: -0.529. Std of Reward: 1.913. Training.

2020-04-12 06:38:28 INFO [stats.py:116] snake-04_SnakeA ELO: 1124.543.

2020-04-12 06:41:22 INFO [stats.py:111] snake-04_SnakeA: Step: 1880000. Time Elapsed: 47631.939 s Mean Reward: 0.182. Std of Reward: 1.402. Training.

2020-04-12 06:41:22 INFO [stats.py:116] snake-04_SnakeA ELO: 1125.390.

2020-04-12 06:44:46 INFO [stats.py:111] snake-04_SnakeA: Step: 1890000. Time Elapsed: 47835.480 s Mean Reward: -1.091. Std of Reward: 1.443. Training.

2020-04-12 06:44:46 INFO [stats.py:116] snake-04_SnakeA ELO: 1123.697.

2020-04-12 06:45:51 INFO [trainer_controller.py:100] Saved Model

2020-04-12 06:47:47 INFO [stats.py:111] snake-04_SnakeA: Step: 1900000. Time Elapsed: 48016.379 s Mean Reward: -0.286. Std of Reward: 1.750. Training.

2020-04-12 06:47:47 INFO [stats.py:116] snake-04_SnakeA ELO: 1122.461.

2020-04-12 06:52:49 INFO [stats.py:111] snake-04_SnakeB: Step: 1730000. Time Elapsed: 48318.070 s Mean Reward: -1.000. Std of Reward: 1.758. Training.

2020-04-12 06:52:49 INFO [stats.py:116] snake-04_SnakeB ELO: 1106.498.

2020-04-12 06:55:52 INFO [stats.py:111] snake-04_SnakeB: Step: 1740000. Time Elapsed: 48501.093 s Mean Reward: -0.429. Std of Reward: 1.450. Training.

2020-04-12 06:55:52 INFO [stats.py:116] snake-04_SnakeB ELO: 1103.889.

2020-04-12 06:59:02 INFO [stats.py:111] snake-04_SnakeB: Step: 1750000. Time Elapsed: 48691.449 s Mean Reward: -0.077. Std of Reward: 1.639. Training.

2020-04-12 06:59:02 INFO [stats.py:116] snake-04_SnakeB ELO: 1102.653.

2020-04-12 07:01:44 INFO [trainer_controller.py:100] Saved Model

2020-04-12 07:02:16 INFO [stats.py:111] snake-04_SnakeB: Step: 1760000. Time Elapsed: 48885.364 s Mean Reward: 0.000. Std of Reward: 1.710. Training.

2020-04-12 07:02:16 INFO [stats.py:116] snake-04_SnakeB ELO: 1104.321.

2020-04-12 07:05:26 INFO [stats.py:111] snake-04_SnakeB: Step: 1770000. Time Elapsed: 49075.830 s Mean Reward: 0.154. Std of Reward: 1.511. Training.

2020-04-12 07:05:26 INFO [stats.py:116] snake-04_SnakeB ELO: 1106.664.

2020-04-12 07:08:43 INFO [stats.py:111] snake-04_SnakeB: Step: 1780000. Time Elapsed: 49272.394 s Mean Reward: 0.556. Std of Reward: 1.257. Training.

2020-04-12 07:08:43 INFO [stats.py:116] snake-04_SnakeB ELO: 1107.944.

2020-04-12 07:11:46 INFO [stats.py:111] snake-04_SnakeB: Step: 1790000. Time Elapsed: 49455.644 s Mean Reward: 0.000. Std of Reward: 1.633. Training.

2020-04-12 07:11:46 INFO [stats.py:116] snake-04_SnakeB ELO: 1107.476.

2020-04-12 07:15:01 INFO [stats.py:111] snake-04_SnakeB: Step: 1800000. Time Elapsed: 49650.318 s Mean Reward: 0.625. Std of Reward: 1.317. Training.

2020-04-12 07:15:01 INFO [stats.py:116] snake-04_SnakeB ELO: 1106.004.

2020-04-12 07:17:37 INFO [trainer_controller.py:100] Saved Model

2020-04-12 07:18:14 INFO [stats.py:111] snake-04_SnakeB: Step: 1810000. Time Elapsed: 49843.819 s Mean Reward: -0.400. Std of Reward: 1.306. Training.

2020-04-12 07:18:14 INFO [stats.py:116] snake-04_SnakeB ELO: 1105.080.

2020-04-12 07:21:22 INFO [stats.py:111] snake-04_SnakeB: Step: 1820000. Time Elapsed: 50031.788 s Mean Reward: -0.400. Std of Reward: 1.685. Training.

2020-04-12 07:21:22 INFO [stats.py:116] snake-04_SnakeB ELO: 1104.044.

2020-04-12 07:22:46 INFO [stats.py:111] snake-04_SnakeA: Step: 1910000. Time Elapsed: 50115.730 s Mean Reward: 0.167. Std of Reward: 1.344. Training.

2020-04-12 07:22:46 INFO [stats.py:116] snake-04_SnakeA ELO: 1121.775.

2020-04-12 07:26:01 INFO [stats.py:111] snake-04_SnakeA: Step: 1920000. Time Elapsed: 50310.951 s Mean Reward: -0.538. Std of Reward: 1.337. Training.