Maskrcnn-benchmark: PyCharm configuration - multi-GPU training

❓ Questions and Help

Hi,

I'm trying to run multi-GPU training in PyCharm and can't figure out how to configure my run.

I've tried:

Module name (instead of script path): torch.distributed.launch

Parameters: --nproc_per_node=2 tools/train_net.py --config-file ...

with some variations, and with no success.

How should I configure my run?

Thanks


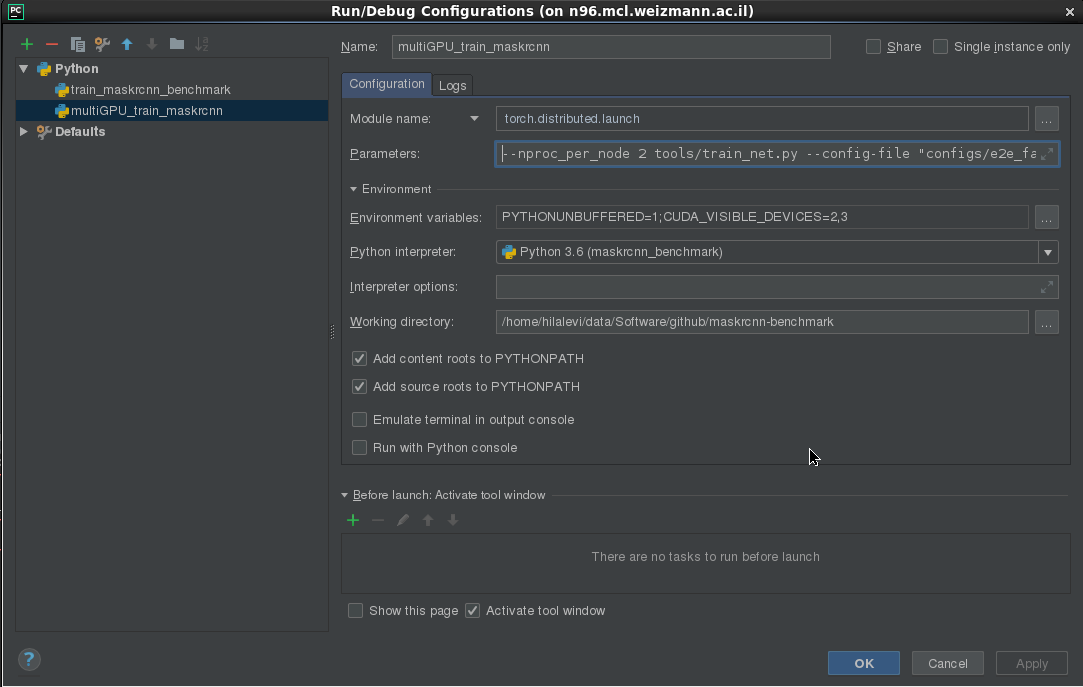

Here you go

Closing thanks to @miguelvr's suggestion

Hi,

I tried Miguel's suggestion - but got:

"No module named tools/train_net.py"

Have I missed anything?

Thanks,

tools/train_net.py is not a module, so you are not filling in the correct fields

Look at the picture
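For reference, the "Module name" and "Parameters" fields map roughly onto a `python -m` invocation. Here is a minimal sketch of that mapping. `build_launch_argv` is a hypothetical helper written only for illustration (it is not part of PyCharm, PyTorch, or maskrcnn-benchmark), and the `...` after `--config-file` is left exactly as in the original post:

```python
import shlex
import sys


def build_launch_argv(module_name, parameters):
    """Hypothetical helper: approximate the argv of a 'Module name' run config.

    The 'Module name' field becomes the argument of -m, and the
    'Parameters' field is split like a shell command line and appended.
    """
    return [sys.executable, "-m", module_name] + shlex.split(parameters)


argv = build_launch_argv(
    "torch.distributed.launch",  # goes in "Module name", not "Script path"
    "--nproc_per_node=2 tools/train_net.py --config-file ...",
)
# Show everything after the interpreter path.
print(" ".join(argv[1:]))
```

If `tools/train_net.py` ends up right after `-m` instead of `torch.distributed.launch`, Python tries to import it as a module, which would be consistent with the "No module named tools/train_net.py" error.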

This is my config:

Where is my mistake? Can't find it

that indeed looks ok... can you show me the output of the terminal?

It is working well from the terminal.

When I run the configuration, it also works.

The problem only occurs while debugging the configuration.

I don't understand the reason, but I guess it's a question for PyCharm, not for you.

Thanks, anyway

You are better off debugging with a single GPU, because torch.distributed.launch spawns multiple processes. Not sure if PyCharm can handle that.
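A rough sketch of why running the script directly gives a single process: the launcher sets the `WORLD_SIZE` environment variable for each process it spawns, and this is unset when the script is started on its own (Script path: tools/train_net.py, no launcher). The helpers below are hypothetical and only illustrate that check, on the assumption that the training script decides whether to run distributed from `WORLD_SIZE`:

```python
import os


def world_size(env=None):
    """Hypothetical helper: number of processes the launcher reported.

    Falls back to 1 when WORLD_SIZE is unset, i.e. when the script was
    started directly rather than through torch.distributed.launch.
    """
    env = os.environ if env is None else env
    return int(env.get("WORLD_SIZE", "1"))


def is_distributed(env=None):
    """True only when more than one process takes part in the run."""
    return world_size(env) > 1


# Started directly as a script (e.g. from the debugger): single process.
print(is_distributed({}))
# Started by the launcher with --nproc_per_node=2.
print(is_distributed({"WORLD_SIZE": "2"}))
```

With a single process there is nothing extra for the debugger to attach to, so breakpoints in the training code behave like those in any ordinary script.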
