Mask_rcnn: Loss increases after restarting training with model.find_last()

Hi,

When I restart model training after loading the last weights with model.find_last(), my losses jump up.

I use the following to load the weights from the last run and resume training:

model.load_weights(model.find_last(), by_name=True)

model.train(train, test, learning_rate=train_config.LEARNING_RATE / 10, epochs=60, layers='all')

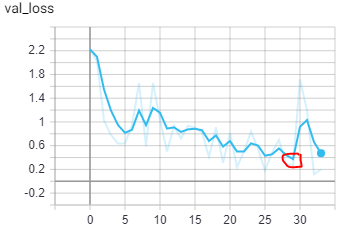

In the image, the circled point (epoch 30) is where I stopped my last run. When I restarted training, the loss jumped up. Any ideas why that might be happening?

Thanks,

Amit

All 4 comments

I also encountered this problem. I noticed that the optimizer state is not saved at the end of each epoch, so every time training is restarted, a new optimizer is initialized. This might cause the loss to increase.
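To illustrate why a missing optimizer state can matter, here is a toy sketch in plain Python. It is not Mask_RCNN or Keras code: `MomentumSGD`, `grad`, and `run` are hypothetical helpers, and it assumes an optimizer that keeps a momentum-style velocity between steps. It only shows that resuming from weights alone follows a different trajectory than uninterrupted training, while restoring the velocity reproduces it exactly. It does not claim to reproduce the loss jump from the plot.

```python
class MomentumSGD:
    """Toy SGD with momentum for a single scalar parameter (illustrative only)."""

    def __init__(self, lr=0.1, momentum=0.9):
        self.lr = lr
        self.momentum = momentum
        # Optimizer state: a weights-only checkpoint does not contain this.
        self.velocity = 0.0

    def step(self, w, g):
        self.velocity = self.momentum * self.velocity - self.lr * g
        return w + self.velocity


def grad(w):
    # Gradient of the toy loss(w) = w ** 2.
    return 2.0 * w


def run(w, opt, steps):
    for _ in range(steps):
        w = opt.step(w, grad(w))
    return w


# Reference: 20 uninterrupted steps.
w_full = run(5.0, MomentumSGD(), 20)

# Interrupted run: stop after 10 steps and checkpoint weights and velocity.
opt_a = MomentumSGD()
w_ckpt = run(5.0, opt_a, 10)
saved_velocity = opt_a.velocity

# Resume with the optimizer state restored.
opt_b = MomentumSGD()
opt_b.velocity = saved_velocity
w_restored = run(w_ckpt, opt_b, 10)

# Resume with a fresh optimizer (weights only), as described above.
w_fresh = run(w_ckpt, MomentumSGD(), 10)

print("uninterrupted:", w_full)
print("restored state:", w_restored)
print("fresh optimizer:", w_fresh)
```

In this toy setup the restored run matches the uninterrupted one, while the fresh-optimizer run ends up somewhere else. Whether this fully explains the jump in a real Mask_RCNN run is not established in this thread.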

@akrsrivastava @ZhenghanFang But did you guys manage to understand how to deal with this problem? Is there a way to save the optimizer at the end of the epoch?

I went back to using the Keras LearningRateScheduler callback to change the learning rate, so I don't have to restart training.
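A minimal sketch of that workaround, under some assumptions: `BASE_LR` and `DROP_EPOCH` are made-up values, `step_schedule` is a hypothetical name, and the commented-out call assumes your copy of Mask_RCNN exposes a `custom_callbacks` argument on `model.train` (check your version before relying on it). The schedule itself is plain Python, so it can be checked without Keras installed.

```python
BASE_LR = 0.001   # assumed starting learning rate, for illustration only
DROP_EPOCH = 30   # assumed epoch at which to divide the rate by 10


def step_schedule(epoch, lr=None):
    """Return the learning rate for a 0-based epoch index.

    The optional `lr` argument lets this work with Keras versions that call
    the schedule as schedule(epoch) as well as schedule(epoch, lr).
    """
    if epoch < DROP_EPOCH:
        return BASE_LR
    return BASE_LR / 10


# Possible wiring (not executed here; assumes Keras and a Mask_RCNN version
# whose train() accepts custom_callbacks):
#
# from keras.callbacks import LearningRateScheduler
# model.train(train, test,
#             learning_rate=BASE_LR,
#             epochs=60,
#             layers='all',
#             custom_callbacks=[LearningRateScheduler(step_schedule)])
```

Because training runs in a single call, the optimizer is created once and keeps its state across the learning rate change.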

I can confirm that the training loss definitely jumps up after restarting training with model.find_last().
