Machinelearning: WeakReference<IHost> memory leak?

System information

- ML.NET Version: 1.4.0

Issue

- What did you do?

- Created a pipeline of ImageLoadingEstimator + ImageResizingEstimator + ImagePixelExtractingEstimator + OnnxScoringEstimator.

- Created an ITransformer object by fitting the pipeline.

- Performed ITransformer.Transform multiple times.

PS: Creating a PredictionEngine and performing PredictionEngine.Predict seems to lock the image as mentioned in issue 4585

- What happened?

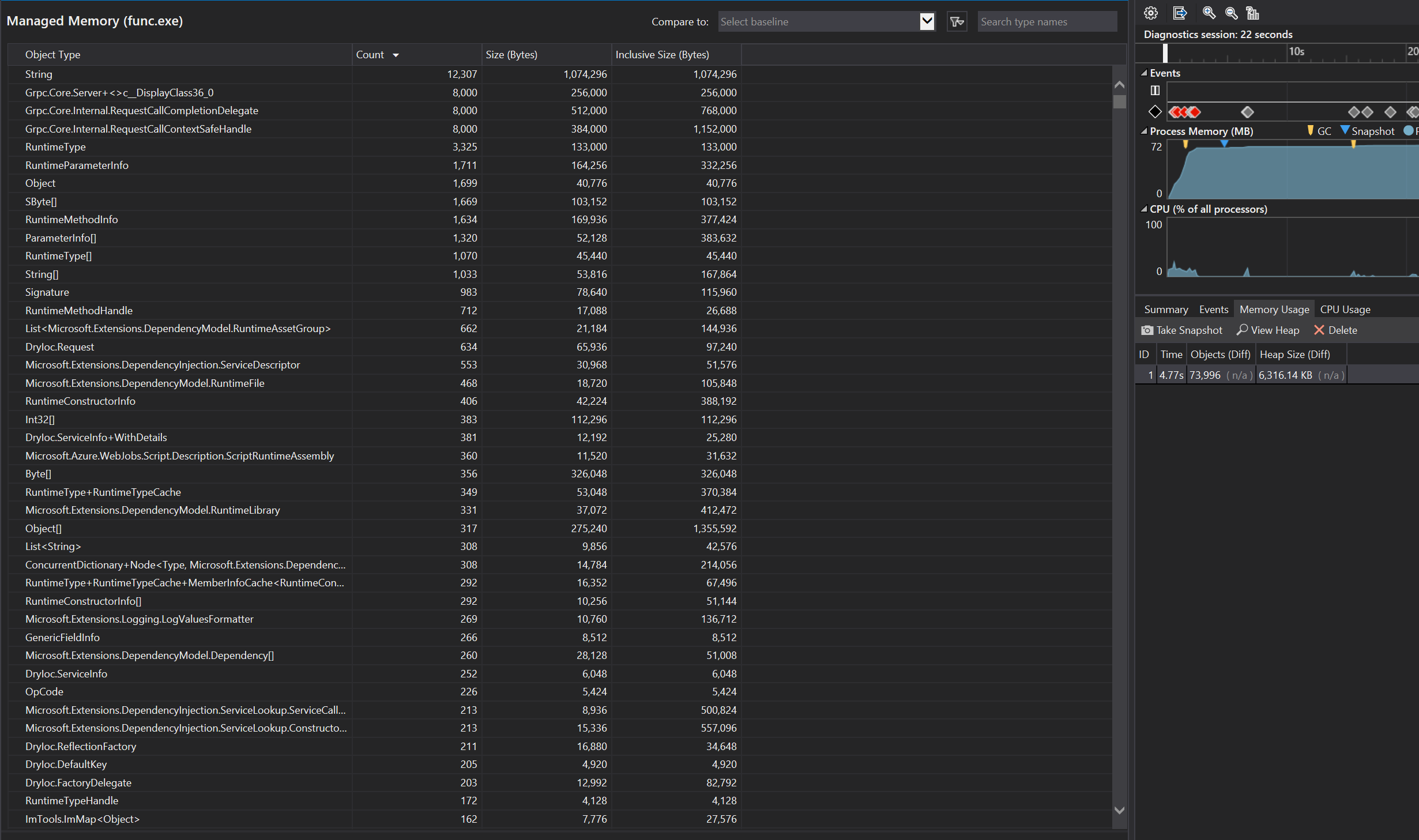

The number of WeakReference<IHost> objects does not change even after performing GC.Collect().

Is this possibly a memory leak?

The application is built in Release configuration.
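One way to check the observation above outside a profiler is to compare the managed heap size before and after a batch of iterations, forcing a full collection each time. The following is a minimal sketch using only the base class library; `LeakCheck` and `MeasureGrowth` are hypothetical names, not part of ML.NET, and the action passed in stands for whatever call is under suspicion.

```csharp
using System;

public static class LeakCheck
{
    // Runs `action` `iterations` times and returns the change in managed heap
    // size (in bytes), measured after a forced full collection on both sides.
    public static long MeasureGrowth(Action action, int iterations)
    {
        long before = SettledHeapSize();
        for (int i = 0; i < iterations; i++)
        {
            action();
        }
        long after = SettledHeapSize();
        return after - before;
    }

    // Collects twice around finalizers so that finalizable garbage is
    // released before the heap size is read.
    private static long SettledHeapSize()
    {
        GC.Collect();
        GC.WaitForPendingFinalizers();
        GC.Collect();
        return GC.GetTotalMemory(forceFullCollection: true);
    }
}
```

A growth figure that keeps rising roughly in proportion to `iterations` suggests that something is still strongly referenced. This only covers the managed heap; native allocations (for example inside an ONNX runtime) would not show up here.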

All 21 comments

Hey @crvkumar, thank you for letting us know about this possible memory leak. Could you please share the code with which you observed this behavior? I will run it and check whether WeakReference objects accumulate and how that affects memory.

Hello @mstfbl. Thanks for the response.

I created sample code reproducing this behavior in the repo below. Please check.

Hi @crvkumar, I ran the sample code you linked in Visual Studio 2019 with OnnxTransformer v1.3.1. I paused at the two points in the code where the first and second snapshots are taken. I did not see a memory leak in either Debug or Release mode; process memory stayed stable at 61-62 MB. I also did not see any unusual increase in the number of WeakReference objects, so I was not able to reproduce your issue.

Perhaps there were other factors influencing the results you got. Please try running it again and let us know if you consistently see a memory leak. Thanks!

Hi @mstfbl. Thanks for checking the issue.

I am consistently getting the same results. I have attached a video for your reference.

I was using OnnxTransformer 1.4.0 but versioning down to 1.3.1 didn't change the behavior either.

Hi @crvkumar ,

I see, in your case memory does indeed increase within a short time. I have uploaded my own demonstration, which shows no increase during one snapshot and a small but expected increase between the first and second snapshots. (I move from the first to the second snapshot around the middle of the video; unfortunately the terminal is not shown.)

Hello @mstfbl. Thank you. May I know which version of onnxruntime you are using?

I guess ML.NET only supports 0.5.1.

Correct, I'm running OnnxRuntime v0.5.1 and OnnxTransformer v1.3.1.

Edit: We support OnnxRuntime 1.0 in ML.NET 1.5.0-preview.

May I know how you were able to run the project in the "Any CPU" config? Is the project set to "x64"?

Of course, the "Any CPU" config on my computer is just set to "x64", so it is effectively running in "x64".

Also, I'd like to make an addition to my earlier comment. We currently support OnnxRuntime 1.0 as seen in this PR for ML.NET 1.5.0-preview. (Thanks @antoniovs1029 for the heads up!)

OK. Thank you.

Do you have any idea why the behavior is different in the two machines? Do you have any pointers where to look?

I have the same problem. I see it with both 1.5.0-preview2 and 1.4.

By the way, even without image prediction, plain multiclass text classification leaks.

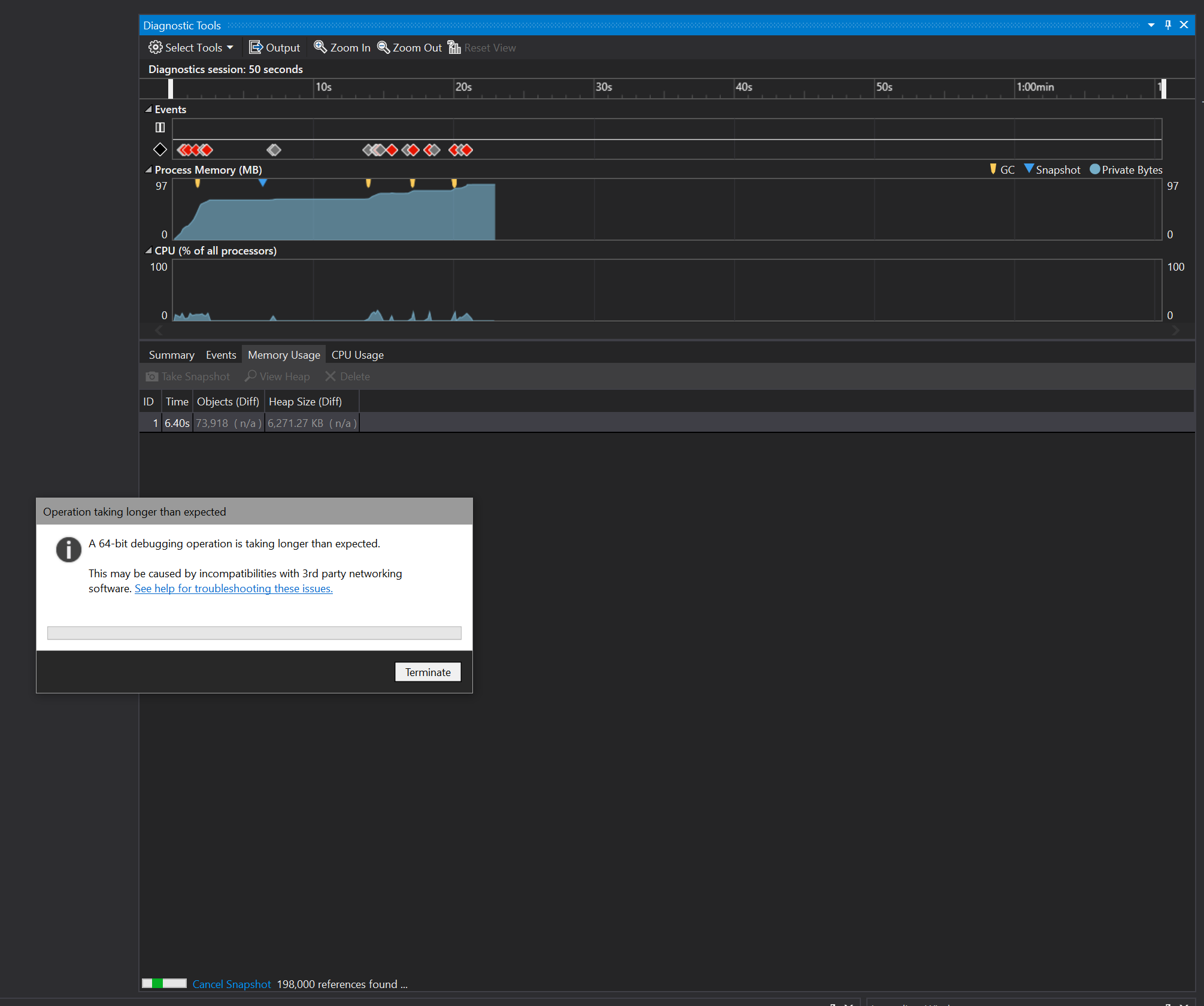

The first picture was when I started the application.

When I started 5 text classification predictions, I could no longer capture snapshots, but I could see that memory was still growing after GC:

```csharp
using System;
using System.IO;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

public class ModelMInput
{
    [ColumnName("Action"), LoadColumn(0)]
    public string Action { get; set; }

    [ColumnName("DMString"), LoadColumn(1)]
    public string DMString { get; set; }
}

public class PredictedEngineOutput
{
    [ColumnName("PredictedLabel")]
    public String Prediction { get; set; }

    public float[] Score { get; set; }

    [ColumnName("Action")]
    [KeyType(1)]
    public UInt32 InputAction { get; set; }
}

public class DemoModel
{
    private MLContext _MLContext;
    private DataViewSchema _inputschema;
    private ITransformer _Model;

    public string ModelName { get; set; }
    public string[] TaginModel { get; private set; }

    public DemoModel(string Path)
    {
        _MLContext = new MLContext();
        _Model = _MLContext.Model.Load(Path, out _inputschema);

        // Read the class labels from the slot names of the "Score" column.
        var OutputSchema = _Model.GetOutputSchema(_inputschema);
        var column = OutputSchema.GetColumnOrNull("Score");
        var slotNames = new VBuffer<ReadOnlyMemory<char>>();
        column.Value.GetSlotNames(ref slotNames);
        var alltag = slotNames.DenseValues().ToArray();

        TaginModel = new string[alltag.Length];
        for (int i = 0; i < TaginModel.Length; i++)
        {
            TaginModel[i] = alltag[i].ToString();
        }

        ModelName = new FileInfo(Path).Name;
    }

    public PredictedEngineOutput Predict(string dmstring)
    {
        // A new PredictionEngine is created and disposed on every call.
        using (var PredictionEngine = _MLContext.Model.CreatePredictionEngine<ModelMInput, PredictedEngineOutput>(_Model))
        {
            var x = PredictionEngine.Predict(new ModelMInput() { DMString = dmstring });
            return x;
        }
    }
}
```

Hi @crvkumar and @strikene ,

Thank you for following up on this. We're still working on reproducing this issue locally, and will get back to you soon.

Hi @strikene ,

I'm attempting to run the sample code you've written. Do you have the dataset where Path is pointing?

> Hi @strikene, I'm attempting to run the sample code you've written. Do you have the dataset where Path is pointing?

Path = "C:MLModel.zip"

Hi @strikene , I meant, do you have a sample of the actual dataset of what you are trying to classify? For example, the zip file that Path points to.

Hey guys,

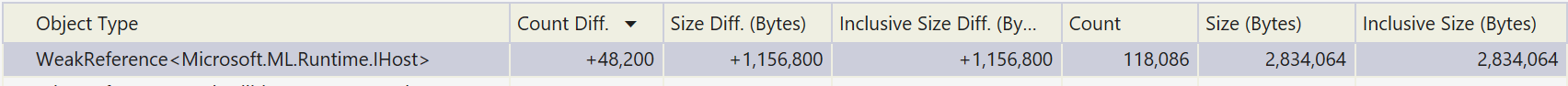

I think I found the root cause of the issue: the accumulation of WeakReference<IHost> objects in the _children list.

Between each iteration, this _children list is not cleaned, and instead WeakReference objects accumulate, and the C# garbage collector does not clean it up as the comment above it says it does.

Thanks for following up @mstfbl . Will there be any fix/modifications for this subsequently?

Also why does this not occur in your environment?

Of course @crvkumar ! I'm currently working on a fix for this, and will push out a PR when it's ready.

This memory accumulation does happen in my environment, but it's in the order of 8-12 kilobytes.

Thank you @mstfbl. Yes, the leak seems to be small but adds up to a considerable size over a few hours of continuous operation.

Thanks for your support.

> Hi @strikene, I meant, do you have a sample of the actual dataset of what you are trying to classify? For example, the zip file that Path points to.

20200102MLModel.zip

> the leak seems to be small but adds up to a considerable size over a few hours of continuous operation.

I noticed this as well debugging #4981. I sent ~2,000 requests to an Azure Function, and in the course of about 40 seconds there were 48,200 of these WeakReference objects created, which added up to over 1 MB. Leaking that much memory on a production server is going to cause issues.
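As a rough sanity check on those figures, the arithmetic can be sketched as follows. The 24-byte per-object size is an assumption for a 64-bit process (8-byte object header, 8-byte type pointer, one 8-byte handle field); it was not measured in this thread, and `WeakReferenceCost` is a hypothetical helper.

```csharp
using System;

public static class WeakReferenceCost
{
    // Assumption: 64-bit process, where one WeakReference<T> instance takes
    // 24 bytes (8-byte header + 8-byte type pointer + 8-byte handle field).
    public const int AssumedBytesPerWrapper = 24;

    // Estimated managed bytes held by the wrapper objects alone.
    public static long EstimateBytes(long wrapperCount)
    {
        return wrapperCount * AssumedBytesPerWrapper;
    }
}
```

Under that assumption, `WeakReferenceCost.EstimateBytes(48200)` gives 1,156,800 bytes, which is consistent with "over 1 MB". The estimate leaves out the 8-byte slot each wrapper occupies in the list's backing array and any runtime bookkeeping for the weak handles.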

> Between each iteration, this _children list is not cleaned, and instead WeakReference objects accumulate, and the C# garbage collector does not clean it up as the comment above it says it does.

Note that the IHost objects themselves will get garbage collected. The objects that are accumulating are the WeakReference objects themselves, which can't get garbage collected, because they are put in the List and never removed.

We need to remove these from the List at some point, preferably when the IHost is no longer needed.
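The distinction above (targets get collected, wrappers do not) can be illustrated with a small standalone sketch. This is not the ML.NET code and not the eventual fix; `ChildTracker<T>`, `Register`, and `Prune` are hypothetical names used only to show one possible way of dropping dead entries from such a list.

```csharp
using System;
using System.Collections.Generic;
using System.Runtime.CompilerServices;

// Hypothetical stand-in for a parent that tracks children via weak references.
public sealed class ChildTracker<T> where T : class
{
    private readonly List<WeakReference<T>> _children = new List<WeakReference<T>>();
    private readonly object _lock = new object();

    public int Count
    {
        get { lock (_lock) { return _children.Count; } }
    }

    public void Register(T child)
    {
        lock (_lock)
        {
            _children.Add(new WeakReference<T>(child));
        }
    }

    // Removes wrappers whose targets have been collected; returns how many were removed.
    public int Prune()
    {
        lock (_lock)
        {
            return _children.RemoveAll(wr => !wr.TryGetTarget(out _));
        }
    }
}

public static class Demo
{
    // Kept in a separate, non-inlined method so no local keeps the children alive.
    [MethodImpl(MethodImplOptions.NoInlining)]
    private static void RegisterShortLived(ChildTracker<object> tracker, int n)
    {
        for (int i = 0; i < n; i++)
        {
            tracker.Register(new object());
        }
    }

    public static void Main()
    {
        var tracker = new ChildTracker<object>();
        RegisterShortLived(tracker, 1000);

        GC.Collect();
        GC.WaitForPendingFinalizers();
        GC.Collect();

        // The list still holds every wrapper, whether or not its target survived.
        Console.WriteLine(tracker.Count); // prints 1000

        int removed = tracker.Prune();
        Console.WriteLine($"pruned {removed}, remaining {tracker.Count}");
    }
}
```

Pruning on a schedule (for example every N registrations) is only one option; removing the entry explicitly when the child is done, as suggested above, avoids scanning the list at all.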
