Logstash: Persistent Queues fails to deserialize after writing extremely large numeric values

- Logstash version: any version with PQs enabled - currently tested on 5.5 upto 5.6.1

- Platform: any

- Fully reproduceable test case: https://github.com/rdsubhas/logstash-queue-corruption

logstash.yml:

http.host: "0.0.0.0"

path.config: /usr/share/logstash/pipeline

xpack.monitoring.enabled: false

queue.type: persisted

pipeline.conf

input {

http { }

}

output {

stdout { codec => rubydebug }

}

Send any event with a large int value:

curl -XPOST -H 'content-type:application/json'` \

-d '{ "some_value": 9223372036854776000 }' \

http://127.0.0.1:8080

List of resources:

- Reproduce: https://github.com/rdsubhas/logstash-queue-corruption

- https://discuss.elastic.co/t/logstash-5-6-0-deserialization-errors-when-persistent-queues-enabled/100952

- https://discuss.elastic.co/t/logstash-crash-with-persistent-queue-and-kafka-input/90479/3

- https://discuss.elastic.co/t/logstash-5-4-repeated-deserialization-errors-and-losing-data-with-persistent-queues/91503/2

- https://discuss.elastic.co/t/logstash-persistent-queues-have-serious-data-loss-bugs-not-suitable-for-production/101566

- PR that could be a fix, scheduled for Logstash v6.1: https://github.com/elastic/logstash/issues/8131 https://github.com/elastic/logstash/pull/8371

(edited by @jordansissel to reflect accuracy)

All 33 comments

cc @original-brownbear @guyboertje

@andrewvc the is basically a duplicate of #8131 and some related issues. The issue reproduced here (using a long overflow) is def. fixed in master and 6.x already.

Maybe we should consider backporting the BiValue removal to at least 6.0?

@rdsubhas thanks for bringing this up. It looks pretty bad and I'm thinking we'll need to take action relatively quickly on this issue.

@original-brownbear yes, I think we need to open a PR for to patch this in 6.0 as well. Is BiValue removal the only approach we have here?

We will definitely need a fix for 5.6 as well.

@colinsurprenant @guyboertje and @jordansissel , I would love you guys to weigh in on this issue as well as far as options go.

I've confirmed the bug.

I had to use a slightly different config with 5.6.0 to explicitly decode the JSON

input {

http { codec => json }

}

output {

stdout { codec => rubydebug }

}

As an aside, thank you for the incredibly comprehensive writeup and test cases @rdsubhas , it is very much appreciated.

@andrewvc The problem here really is that the BiValue solution does not, in fact, work for types that aren't native JSON or CBOR in kind.

We had the same issue for Timestamp for example, see #8128.

Removing BiValue itself isn't so much the important part here though, but rather adding the custom serializers and deserializers we now have in master and 6.x. Those don't operate on BiValue but rather on the underlying IRubyObject type that the BiValue referenced before.

This means, that any solution that isn't "remove BiValue" would mean adding new custom serializers and deserializers that work wtih BiValue which will be a lot more complicated and risky than just porting the solution from 6.x in my opinion (note that 6.x and master have much better tests than 6.0 and earlier, that cover all these corner cases and rare type serializations too).

@andrewvc also note that https://github.com/elastic/logstash/pull/8371 needs to be merged to get full coverage on the serialization issues. Currently serializing null fields is broken in master still :(

@andrewvc @original-brownbear I've updated the git repo to use json codec for http as well. Pls let us know if we can help in case with any form of testing, we have a few GBs of corrupted queue data folders saved, and I believe we can start a test pipeline with path.queue pointed to one of those. Thank you so much again!

The exception when reproduced (showing only the exception + caused-by context)

Exception in thread "[main]>worker1" Exception in thread "[main]>worker2" Exception in thread "[main]>worker3" Exception in thread "[main]>worker0" org.logstash.ackedqueue.QueueRuntimeException: deserialize invocation error

at org.logstash.ackedqueue.Queue.deserialize(Queue.java:658)

at org.logstash.ackedqueue.Page.readBatch(Page.java:60)

at org.logstash.ackedqueue.Queue._readPageBatch(Queue.java:525)

at org.logstash.ackedqueue.Queue.readBatch(Queue.java:516)

at org.logstash.ackedqueue.ext.JrubyAckedQueueExtLibrary$RubyAckedQueue.ruby_read_batch(JrubyAckedQueueExtLibrary.java:162)

at org.logstash.ackedqueue.ext.JrubyAckedQueueExtLibrary$RubyAckedQueue$INVOKER$i$2$0$ruby_read_batch.call(JrubyAckedQueueExtLibrary$RubyAckedQueue$INVOKER$i$2$0$ruby_read_batch.gen)

at org.jruby.runtime.callsite.CachingCallSite.call(CachingCallSite.java:202)

at rubyjit.LogStash::Util::WrappedAckedQueue$$read_batch_652566213d0cb31c8b444e96975325b8b8be385d1975012498.__file__(/home/jls/build/logstash-5.6.0/logstash-core/lib/logstash/util/wrapped_acked_queue.rb:68)

at rubyjit.LogStash::Util::WrappedAckedQueue$$read_batch_652566213d0cb31c8b444e96975325b8b8be385d1975012498.__file__(/home/jls/build/logstash-5.6.0/logstash-core/lib/logstash/util/wrapped_acked_queue.rb)

...

Caused by: java.lang.reflect.InvocationTargetException

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

--

Caused by: java.lang.IllegalArgumentException: Missing Valuefier handling for full class name=[B, simple name=byte[]

at org.logstash.Valuefier.convertNonCollection(Valuefier.java:48)

at org.logstash.Valuefier.convert(Valuefier.java:87)

at org.logstash.ConvertedMap.newFromMap(ConvertedMap.java:20)

--

Caused by: java.lang.IllegalArgumentException: No enum constant org.logstash.bivalues.BiValues.[B

at java.lang.Enum.valueOf(Enum.java:238)

at org.logstash.bivalues.BiValues.valueOf(BiValues.java:18)

at org.logstash.bivalues.BiValues.newBiValue(BiValues.java:88)

```

@jordansissel if it helps with a backport of a full fix, this version of the round trip event test from one of my PRs https://github.com/elastic/logstash/pull/8371/files#diff-eec69687901066a54bfa2ff47bcc0ea7R30 should cover/reproduce all cases broken in 5.6 and 6.0 (every time I fixed on in 6.x I added it to that test :)) that I know of.

As far as I can tell, it is not corrupt. It serializes to a Bignum based on my very cursory reading of the CBOR spec. What fails is that we fail to deserialize it and somehow view it as a byte array instead of a Bignum-ish value.

tested on 5.6.0. I can confirm it is encoded correctly in the queue:

# hexdump -C from the queue only showing the `some_value` entry:

# 00000120 6a 73 6f 6d 65 5f 76 61 6c 75 65 c2 49 00 80 00 |jsome_value.I...|

# 00000130 00 00 00 00 00 c0 ff ff 46 bf 85 ca 00 00 00 00 |........F.......|

# Per the CBOR spec, C2 49 means "Bignum 9 bytes long"

>> CBOR.load("C2 49 00 80 00 00 00 00 00 00 c0".split(/\s+/).map{ |x| x.to_i(16) }.pack("C*"))

=> 9223372036854776000

So this problem must be happening during deserialization that we are somehow skipping the "This is a bignum" marker from the encoded value.

Its full data loss from this point onwards

Is it data loss? The queue looks correct to me, it is just that Logstash fails (due to a bug) to read it correctly. Can you demonstrate that data is lost ?

I can also reproduce as a Java unit test using

Event e = new Event();

e.setField("foo", new BigInteger("9223372036854776000"));

byte[] binary = e.serialize();

Event deseriealized = Event.deserialize(binary);

java.lang.IllegalArgumentException: Missing Valuefier handling for full class name=[B, simple name=byte[]

at org.logstash.Valuefier.convertNonCollection(Valuefier.java:48)

at org.logstash.Valuefier.convert(Valuefier.java:87)

at org.logstash.ConvertedMap.newFromMap(ConvertedMap.java:18)

at org.logstash.Event.<init>(Event.java:62)

at org.logstash.Event.fromSerializableMap(Event.java:196)

at org.logstash.Event.deserialize(Event.java:410)

at org.logstash.EventTest.queueableBigNumInterfaceRoundTrip(EventTest.java:44)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:50)

at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:47)

at org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)

at org.junit.runners.ParentRunner.runLeaf(ParentRunner.java:325)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:78)

at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:57)

at org.junit.runners.ParentRunner$3.run(ParentRunner.java:290)

at org.junit.runners.ParentRunner$1.schedule(ParentRunner.java:71)

at org.junit.runners.ParentRunner.runChildren(ParentRunner.java:288)

at org.junit.runners.ParentRunner.access$000(ParentRunner.java:58)

at org.junit.runners.ParentRunner$2.evaluate(ParentRunner.java:268)

at org.junit.runners.ParentRunner.run(ParentRunner.java:363)

at org.junit.runner.JUnitCore.run(JUnitCore.java:137)

at com.intellij.junit4.JUnit4IdeaTestRunner.startRunnerWithArgs(JUnit4IdeaTestRunner.java:68)

at com.intellij.rt.execution.junit.IdeaTestRunner$Repeater.startRunnerWithArgs(IdeaTestRunner.java:47)

at com.intellij.rt.execution.junit.JUnitStarter.prepareStreamsAndStart(JUnitStarter.java:242)

at com.intellij.rt.execution.junit.JUnitStarter.main(JUnitStarter.java:70)

Caused by: java.lang.IllegalArgumentException: No enum constant org.logstash.bivalues.BiValues.[B

at java.lang.Enum.valueOf(Enum.java:238)

at org.logstash.bivalues.BiValues.valueOf(BiValues.java:18)

at org.logstash.bivalues.BiValues.newBiValue(BiValues.java:88)

at org.logstash.Valuefier.convertNonCollection(Valuefier.java:45)

... 28 more

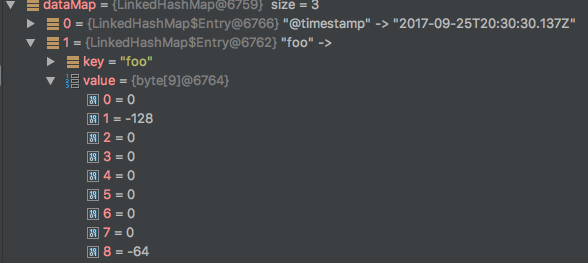

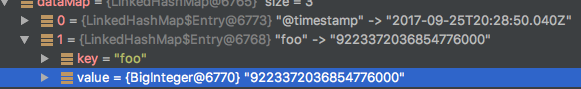

Ok, so here it is: Jackson support for decoding CBOR BigInteger started in 2.8 and we are at 2.7.

Running my test above, using out 2.7 Jackson result in:

while with 2.8 it is:

So I see that the fix introduced in #8205 by @original-brownbear was to actually work around the serialization/deserialization problem in Jackson by providing a custom serializer to serialize these Bignum/BigInteger to string.

At this point we have these options for 6.0 I believe:

- merge #8205 and #8371 in 6.0. The risk is that this has not been much "field" tested as of yet.

- upgrade jackson to 2.8 or 2.9 - not sure about potential conflict with JrJackon, we have to verify.

- add the minimal changes required for custom

Bignum/BigIntegerserializer

And for 5.6:

- upgrade jackson to 2.8 or 2.9 - not sure about potential conflict with JrJackon, we have to verify.

- add the minimal changes required for custom

Bignum/BigIntegerserializer

In think that for 5.6 it would make sense to just add a custom serializer. For 6.0 unless we are confident with merging #8205 and #8371 we could temporarily also just add the extra custom serializer.

Opinions?

We should update Jackson to 2.9 regardless (See #7615) for 5.6 and 6.0.

For 6.0, since we are already in the RC stage, I would also do the minimal changes to add a custom serializer (same for 5.6 as well)

if we plan on upgrading Jackson to 2.9 for 5.6 and 6.0 then there is no need for a custom serializer. I will test on 6.0 that it works end-to-end.

@colinsurprenant I'm +1 on bumping Jackson. Would love buy-in from @guyboertje WRT potential issues there related to JRJackson.

Can we be more precise as to what the risks might be in upgrading? Could something break between 2.8 and 2.9.

We should also make sure to remove the custom serializers in the 6.x and master branches after upgrading.

I confirm that a simple upgrade of Jackson to 2.9.1 solves in 6.0. It solves both cases:

- data queued using Jackson 2.7 that would produce deserialization exception is correctly deserialized if dequeued using Jackson 2.9.1.

- and obviously, data queued using Jackson 2.9.1 is correctly dequeued using 2.9.1 also.

bin/logstash -e 'input{stdin{codec => json_lines}} output{stdout{codec => rubydebug}}'

Sending Logstash's logs to /Users/colin/dev/src/elasticsearch/logstash/logs which is now configured via log4j2.properties

[2017-09-25T17:38:05,335][INFO ][logstash.modules.scaffold] Initializing module {:module_name=>"fb_apache", :directory=>"/Users/colin/dev/src/elasticsearch/logstash/modules/fb_apache/configuration"}

[2017-09-25T17:38:05,338][INFO ][logstash.modules.scaffold] Initializing module {:module_name=>"netflow", :directory=>"/Users/colin/dev/src/elasticsearch/logstash/modules/netflow/configuration"}

[2017-09-25T17:38:05,388][WARN ][logstash.config.source.multilocal] Ignoring the 'pipelines.yml' file because modules or command line options are specified

[2017-09-25T17:38:05,577][INFO ][logstash.agent ] Successfully started Logstash API endpoint {:port=>9600}

[2017-09-25T17:38:07,605][INFO ][logstash.pipeline ] Starting pipeline {:pipeline_id=>"main", "pipeline.workers"=>8, "pipeline.batch.size"=>125, "pipeline.batch.delay"=>5, "pipeline.max_inflight"=>1000, :thread=>"#<Thread:0x1d96273d@/Users/colin/dev/src/elasticsearch/logstash/logstash-core/lib/logstash/pipeline.rb:290 run>"}

[2017-09-25T17:38:07,630][INFO ][logstash.pipeline ] Pipeline started {"pipeline.id"=>"main"}

The stdin plugin is now waiting for input:

[2017-09-25T17:38:07,649][INFO ][logstash.agent ] Pipelines running {:count=>1, :pipelines=>["main"]}

{ "some_value": 9223372036854776000 }

{

"@version" => "1",

"host" => "mbp15r",

"@timestamp" => 2017-09-25T21:38:27.016Z,

"some_value" => 9223372036854776000

}

data queued using Jackson 2.7 that would produce deserialization exception is correctly deserialized if dequeued using Jackson 2.9.1.

:heart::heart::heart:

Just tested same scenarios for 5.6, same result.

I confirm that:

- Jackson upgrade to 2.9.1 solved the

Bignum/BigIntegerCBOR deserialization problem - data in the queue is not corrupted, the problem is with the deserialization of the dequeued data

@colinsurprenant what will happen to previously serialized Bignum objects after the upgrade? Will they be deserialized correctly?

@andrewvc see comment: https://github.com/elastic/logstash/issues/8379#issuecomment-332023211

data queued using Jackson 2.7 that would produce deserialization exception is correctly deserialized if dequeued using Jackson 2.9.1.

To me, this means data queued with Logstash 5.6.0 (jackson 2.7) would be safely deserialized in a Logstash release having the jackson 2.9.1 fix.

data queued using Jackson 2.7 that would produce deserialization exception is correctly deserialized if dequeued using Jackson 2.9.1.

@andrewvc per ^^ yes.

Maybe I wasn't clear, I'll re-explain my test steps: using both 5.6 and 6.0 I did the following tests using this command with PQ enabled.

$ bin/logstash -e 'input{stdin{codec => json_lines}} output{stdout{codec => rubydebug}}'

1- Push { "some_value": 9223372036854776000 } in stdin which resulted in the reported deserialization exception.

2- ^C to terminate logstash.

3- At this point the event is still in the PQ since it was not acknowledged by the output because of the exception.

4- Modify logstash to use Jackson 2.9.1. (a bit tricky, details omitted)

5- Launch logstash with same command above.

6- Logstash is able to pick the previously queued element and correctly deserialized and displayed by the rubydebug codec.

7- Push once more { "some_value": 9223372036854776000 } and see that it is also correctly enqueued, dequeued, deserialized and displayed by the rubydebug codec.

oups, concurrency problem with @jordansissel ;)

I stole the lock.

@colinsurprenant this should depend on #7615 which should now rely on Jackson 2.9.1 and jrjackson 0.4.3

@aburmeis yes, thanks - I forgot to link that issue and the bump PR #8373

Also we can now do #8405 to leverage Jackson proper serialization for BigInteger and BigDecimal.

So this is officially solved by upgrading Jackson for LS versions 5.5, 5.6 and 6.0.

6.1 and up will also benefit the BiValue refactor.

5.6.3 will be the next point release with the fix. Closing.

Thanks so much @colinsurprenant @andrewvc @original-brownbear and everyone!

Seems like the same issue. I can't tell if it was a long number written to the page file.

Can't read it.

logstash-6.2.4-1.noarch

CentOS Linux release 7.4.1708 (Core)

27GB of unusable data.

/var/log/messages

Apr 24 22:24:00 systemd: Starting logstash...

Apr 24 22:24:22 logstash: Sending Logstash's logs to /var/log/logstash which is now configured via log4j2.properties

Apr 24 22:25:22 logstash: Exception in thread "Ruby-0-Thread-9@[main]>worker0: /usr/share/logstash/logstash-core/lib/logstash/pipeline.rb:385" org.logstash.ackedqueue.QueueRuntimeException: deserialize invocation error

Apr 24 22:25:22 logstash: at org.logstash.ackedqueue.Queue.deserialize(Queue.java:733)

/var/log/logstash/logstash-plain.log

[2018-04-24T18:04:31,049][DEBUG][org.logstash.ackedqueue.Queue] opening head page: 56285, in: /opt/logstash/queue/main, with checkpoint: pageNum=56285, firstUnackedPageNum=55850, firstUnackedSeqNum=0, minSeqNum=0, elementCount=0, isFullyAcked=no

[2018-04-24T18:04:31,136][DEBUG][org.logstash.ackedqueue.io.AbstractByteBufferPageIO] PageIO recovery element index:0, readNextElement exception: Element invalid length

[2018-04-24T18:04:31,243][INFO ][logstash.pipeline ] Starting pipeline {:pipeline_id=>"main", "pipeline.workers"=>4, "pipeline.batch.size"=>1024, "pipeline.batch.delay"=>50}

Most helpful comment

So this is officially solved by upgrading Jackson for LS versions 5.5, 5.6 and 6.0.

6.1 and up will also benefit the BiValue refactor.

5.6.3 will be the next point release with the fix. Closing.