Linkerd2: linkerd-proxy throwing load balancer discovery error

Bug Report

What is the issue?

linkerd-proxy started throwing an error and was unable to connect to other meshed services

How can it be reproduced?

Unknown

Logs, error output, etc

ERR! [249010.471746s] outbound:accept{peer.addr=100.96.11.27:34552}:source{target.addr=100.69.16.83:80}: linkerd2_app_core::errors unexpected error: buffered service failed: load balancer discovery error: discovery task failed

linkerd check output

kubernetes-api

--------------

√ can initialize the client

√ can query the Kubernetes API

kubernetes-version

------------------

× is running the minimum Kubernetes API version

Kubernetes is on version [1.11.8], but version [1.13.0] or more recent is required

see https://linkerd.io/checks/#k8s-version for hints

√ is running the minimum kubectl version

linkerd-config

--------------

√ control plane Namespace exists

√ control plane ClusterRoles exist

√ control plane ClusterRoleBindings exist

√ control plane ServiceAccounts exist

√ control plane CustomResourceDefinitions exist

√ control plane MutatingWebhookConfigurations exist

√ control plane ValidatingWebhookConfigurations exist

√ control plane PodSecurityPolicies exist

linkerd-existence

-----------------

√ 'linkerd-config' config map exists

√ heartbeat ServiceAccount exist

√ control plane replica sets are ready

√ no unschedulable pods

√ controller pod is running

√ can initialize the client

√ can query the control plane API

linkerd-identity

----------------

√ certificate config is valid

√ trust roots are using supported crypto algorithm

√ trust roots are within their validity period

√ trust roots are valid for at least 60 days

√ issuer cert is using supported crypto algorithm

√ issuer cert is within its validity period

√ issuer cert is valid for at least 60 days

√ issuer cert is issued by the trust root

linkerd-api

-----------

√ control plane pods are ready

√ control plane self-check

√ [kubernetes] control plane can talk to Kubernetes

√ [prometheus] control plane can talk to Prometheus

linkerd-version

---------------

√ can determine the latest version

‼ cli is up-to-date

is running version 20.1.2 but the latest edge version is 20.1.3

see https://linkerd.io/checks/#l5d-version-cli for hints

control-plane-version

---------------------

‼ control plane is up-to-date

is running version 20.1.2 but the latest edge version is 20.1.3

see https://linkerd.io/checks/#l5d-version-control for hints

√ control plane and cli versions match

Environment

- Kubernetes Version:

- Cluster Environment: (GKE, AKS, kops, ...)

- Host OS:

- Linkerd version:

Possible solution

Additional context

Verfied the linkerd-proxy sidecar version was also 20.1.2. Similar to https://github.com/linkerd/linkerd2/issues/3935

All 30 comments

As per https://github.com/linkerd/linkerd2/issues/3935#issuecomment-576891452, The Proxy is moved back to the stable version.

Can you confirm if it is not because of the min k8s version supported error, by running it in >= 1.13?

× is running the minimum Kubernetes API version

Kubernetes is on version [1.11.8], but version [1.13.0] or more recent is required

see https://linkerd.io/checks/#k8s-version for hints

Can't test on >=1.13 for the moment. Also, I have lots of meshed services. Only saw this error on one service(3 pods) and all 3 pods exhibited the same error. I also verified that linkerd-proxy is running v2.84.0.

Had this happen again. Same service, different namespace. Prior to installing 20.1.2, I was on 19.12.2 and didn't see this error. Again validated that the linkerd-proxy sidecar on the affected pods was running v2.84.0

Additional info. I rolled back the linkerd-proxy version on the service that's been throwing the error to 19.12.3 (v2.82.0) but I left the control plane at 20.1.2 and I still see the same error.I never saw this error at all prior to my upgrade to 20.1.2. Also the fact that I only ever see this error on one service out of the many I have meshed would seem to indicate that there's some "trigger" related to this particular service causing this error to happen.

Just upgraded everything to 20.1.4(latest) but same error. One more tidbit. The service that's throwing this error is the only one that connects to the downstream service via websocket. I have other meshed services that use standard HTTP as well as one service that uses HTTP/2 grpc and they are all fine. The error also doesn't happen right away, only after some period of time. (in the last test case it lasted about 1-1/2 hours before erroring out).

Full testing scenario (using linkerd edge-20.1.4 as the controlplane) with my (now) 2 failing services that utilize a websocket connection:

PROXY_VERSION RESULT

v2.84.0 buffered service failed error

v2.82.0 buffered service failed error

v2.81.0 OK

I did notice that https://github.com/linkerd/linkerd2-proxy/commit/588609becbe9372b4bb3cfa752630ed320f76d3b made changes to the buffer settings between v2.81.0 and v2.82.0. Don't know how to troubleshoot further on why these websocket services throw that error on linkerd-proxy version >=v2.82.0

Upgraded to edge-20.2.1.Now getting a slightly different "buffer service failed" error.

[329592.297712814s] WARN outbound:accept{peer.addr=100.96.28.225:40908}:source{target.addr=100.69.16.83:80}: linkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed

Still causes the meshed service to fail with a 502.

This sounds like one of the staleness issues that we're working on getting taken care of right now. The next major version of the proxy will have quite a few updates to hopefully address this style of issue. We'll make sure to update the issue and ask for testing when it is ready to go.

Guess my options at this point are to stay on 2.6.1 (the last working version for me) or unmesh the failing services. I know the proxy in 2.7 and 2.6.1 are "supposed" to be the same but there's no getting around the fact that in my use case, v2.81.0 works and any version after has the buffer failure issues.

@gamer22026 when you see this error, is there a message containing the string "dropping resolution due to watchdog" in the logs as well?

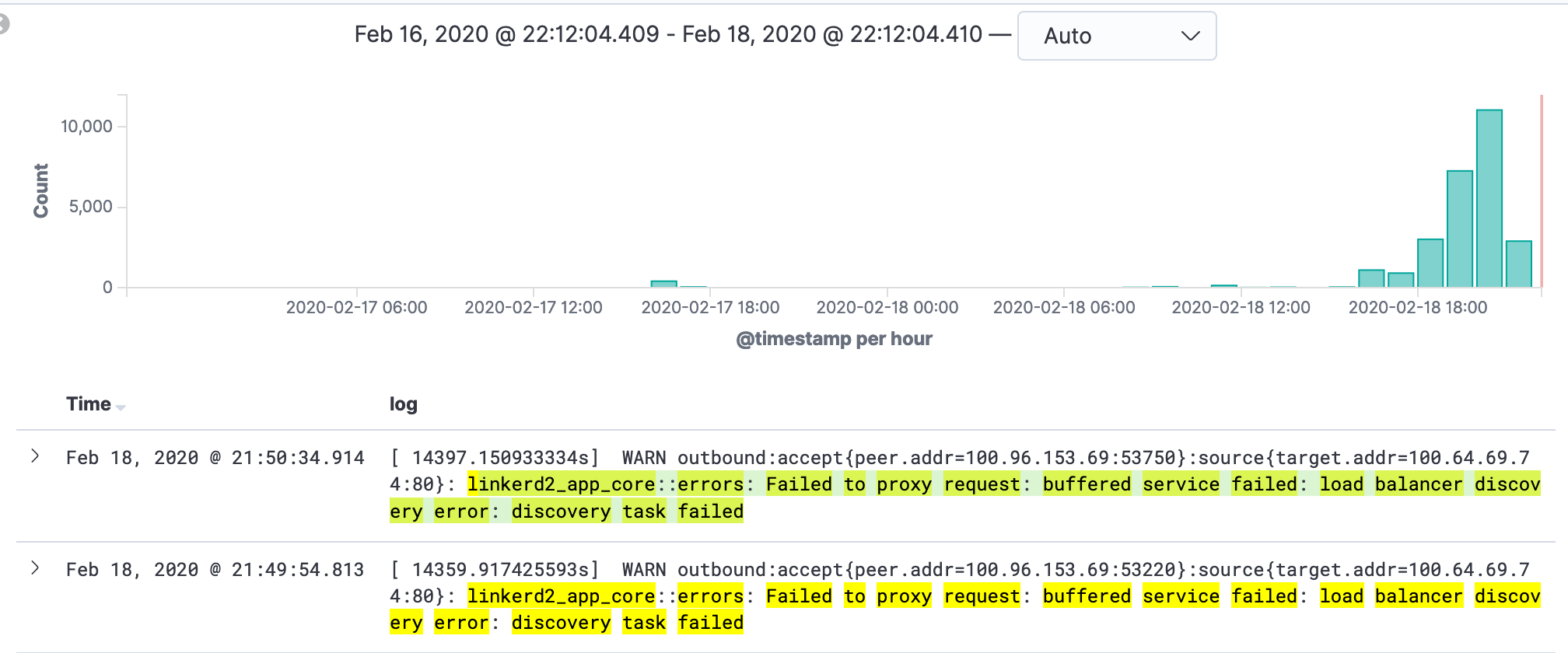

@hawkw Nope just those. Over 5K failures on one pod in a little over an hour.

Hmm, that's interesting, thanks!

The change in linkerd/linkerd2-proxy@588609b added a "watchdog" timeout that fires to cancel and restart a service discovery resolution for a given destination if it is observed to not be updated after a period of time. This is in order to help prevent stuck service discovery resolutions. I think if your discovery tasks were being killed by the watchdog timeout introduced in that change, we should see that log message as well. It's emitted here: https://github.com/linkerd/linkerd2-proxy/blob/588609becbe9372b4bb3cfa752630ed320f76d3b/linkerd/proxy/discover/src/buffer.rs#L143-L149

I wonder if the watchdog timeout fired way in the past (and the corresponding log line was lost due to log rotation or something), but the discovery task was never restarted?

Got it all in ELK so think I got all the lines.

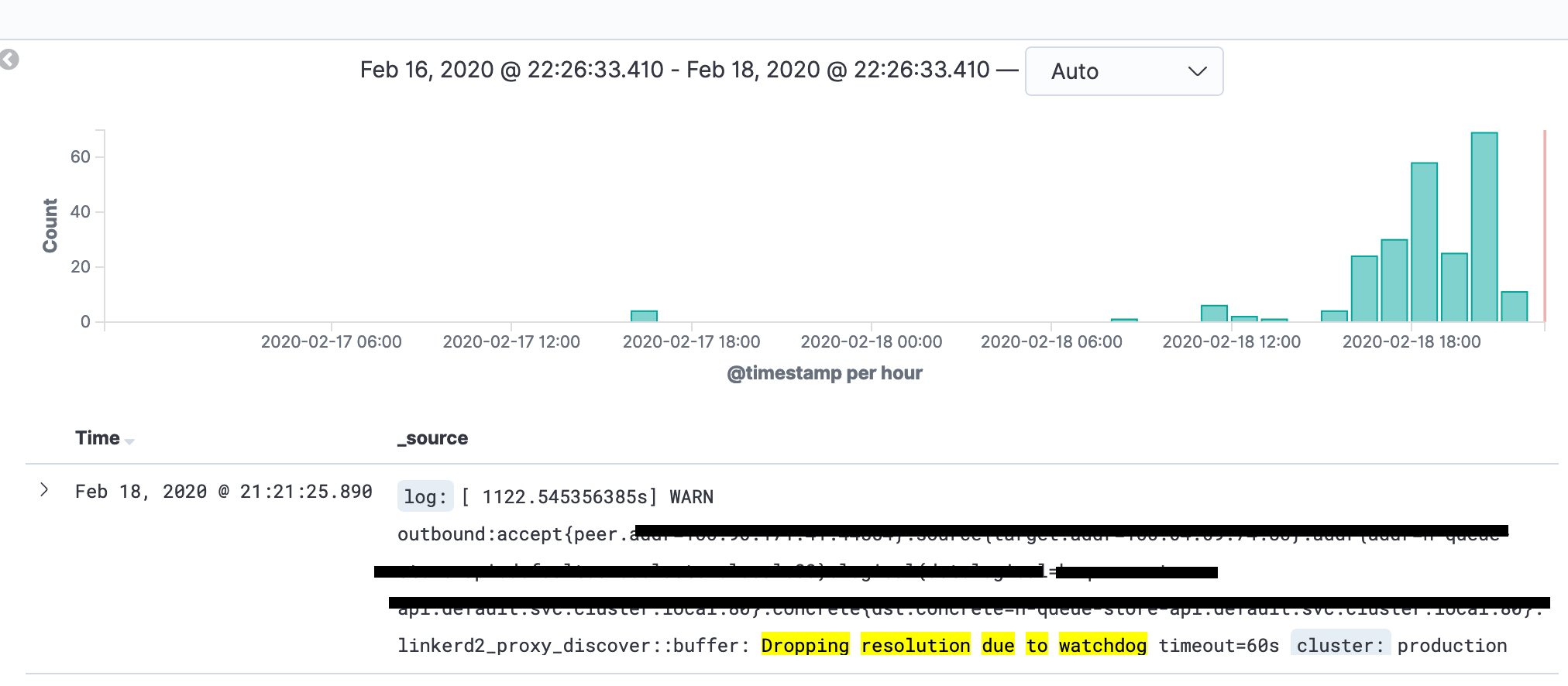

@hawkw Apologize. You were right! (bad Kibana mojo on my part).

Feb 7, 2020 @ 17:10:50.292 [102569.774966826s] WARN outbound:accept{peer.addr=100.96.28.225:49722}:source{target.addr=100.69.16.83:80}:addr{addr=engine:80}:logical{dst.logical=engine.qa3.svc.cluster.local:80}:concrete{dst.concrete=engine.qa3.svc.cluster.local:80}: linkerd2_proxy_discover::buffer: Dropping resolution due to watchdog timeout=60s

Feb 10, 2020 @ 08:03:26.399 [328925.881430445s] WARN outbound:accept{peer.addr=100.96.28.225:44098}:source{target.addr=100.69.16.83:80}: linkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed

That's first appearance of the failure after the watchdog timeout fired.

Happened again. (second entry is from linkerd-destination pod, which happened at the same time as the watchdog). The timing of the watchdog corresponds to the time the pods backing the service engine:80 were redeployed.

Feb 11, 2020 @ 17:20:53.143 [ 20017.560711102s] WARN outbound:accept{peer.addr=100.96.7.45:37696}:source{target.addr=100.69.16.83:80}:addr{addr=engine:80}:logical{dst.logical=engine.qa3.svc.cluster.local:80}:concrete{dst.concrete=engine.qa3.svc.cluster.local:80}: linkerd2_proxy_discover::buffer: Dropping resolution due to watchdog timeout=60s

Feb 11, 2020 @ 17:20:53.144 time="2020-02-12T00:20:53Z" level=info msg="Stopping watch on endpoint [qa3/engine:80]" addr=":8086" component=endpoints-watcher

Feb 12, 2020 @ 08:52:16.279 [ 75900.696150835s] WARN outbound:accept{peer.addr=100.96.7.45:54224}:source{target.addr=100.69.16.83:80}: linkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed

Oh, fantastic, that's definitely a clue! Thanks for catching that.

We just had the same issue. Suddenly, network connections staled. A lot of inkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed appeared. As well as the 'watchdog' messages: linkerd2_proxy_discover::buffer: Dropping resolution due to watchdog timeout=60s

We were using linkerd stable-2.7.0 on kubernetes 1.15.10. Let me know if you need further info.

So I've just witnessed the same thing in one of our pods in our staging environment on :

~ ❯ kubectl logs daedalus-updater-69fbb949fc-6mc6s linkerd-proxy -f

time="2020-02-17T15:49:54Z" level=info msg="running version stable-2.7.0"

[ 0.10338367s] INFO linkerd2_proxy: Admin interface on 0.0.0.0:4191

[ 0.10365568s] INFO linkerd2_proxy: Inbound interface on 0.0.0.0:4143

[ 0.10371468s] INFO linkerd2_proxy: Outbound interface on 127.0.0.1:4140

[ 0.10393368s] INFO linkerd2_proxy: Tap interface on 0.0.0.0:4190

[ 0.10491068s] INFO linkerd2_proxy: Local identity is default.one.serviceaccount.identity.linkerd.cluster.local

[ 0.10513669s] INFO linkerd2_proxy: Identity verified via linkerd-identity.linkerd.svc.cluster.local:8080 (linkerd-identity.linkerd.serviceaccount.identity.linkerd.cluster.local)

[ 0.10519369s] INFO linkerd2_proxy: Destinations resolved via linkerd-dst.linkerd.svc.cluster.local:8086 (linkerd-destination.linkerd.serviceaccount.identity.linkerd.cluster.local)

[ 0.12083579s] INFO inbound: linkerd2_app_inbound: serving listen.addr=0.0.0.0:4143

[ 0.32760114s] INFO daemon:identity: linkerd2_app: Certified identity: default.one.serviceaccount.identity.linkerd.cluster.local

[154791.991986182s] WARN outbound:accept{peer.addr=10.1.0.31:46516}:source{target.addr=172.16.12.128:80}:addr{addr=hermes-api:80}:logical{dst.logical=hermes-api.one.svc.cluster.local:80}:concrete{dst.concrete=hermes-api.one.svc.cluster.local:80}: linkerd2_proxy_discover::buffer: Dropping resolution due to watchdog timeout=60s

[155203.91360616s] WARN outbound:accept{peer.addr=10.1.0.31:35284}:source{target.addr=172.16.12.128:80}: linkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed

[155224.476833031s] WARN outbound:accept{peer.addr=10.1.0.31:35780}:source{target.addr=172.16.12.128:80}: linkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed

[155329.657839425s] WARN outbound:accept{peer.addr=10.1.0.31:37844}:source{target.addr=172.16.12.128:80}: linkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed

[156882.508075123s] WARN outbound:accept{peer.addr=10.1.0.31:40094}:source{target.addr=172.16.12.128:80}: linkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed

[157461.359183618s] WARN outbound:accept{peer.addr=10.1.0.31:51468}:source{target.addr=172.16.12.128:80}: linkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed

[157507.388896661s] WARN outbound:accept{peer.addr=10.1.0.31:52394}:source{target.addr=172.16.12.128:80}: linkerd2_app_core::errors: Failed to proxy request: buffered service failed: load balancer discovery error: discovery task failed

Output of kubectl version

~ ❯ kubectl version

Client Version: version.Info{Major:"1", Minor:"15", GitVersion:"v1.15.7", GitCommit:"6c143d35bb11d74970e7bc0b6c45b6bfdffc0bd4", GitTreeState:"clean", BuildDate:"2019-12-11T12:42:56Z", GoVersion:"go1.12.12", Compiler:"gc", Platform:"darwin/amd64"}

Server Version: version.Info{Major:"1", Minor:"15", GitVersion:"v1.15.9", GitCommit:"2e808b7cb054ee242b68e62455323aa783991f03", GitTreeState:"clean", BuildDate:"2020-01-18T23:24:23Z", GoVersion:"go1.12.12", Compiler:"gc", Platform:"linux/amd64"}

Output of linkerd version

~ ❯ linkerd version

Client version: stable-2.7.0

Server version: stable-2.7.0

Output of linkerd check

~ took 6s ❯ linkerd check

kubernetes-api

--------------

√ can initialize the client

√ can query the Kubernetes API

kubernetes-version

------------------

√ is running the minimum Kubernetes API version

√ is running the minimum kubectl version

linkerd-existence

-----------------

√ 'linkerd-config' config map exists

√ heartbeat ServiceAccount exist

√ control plane replica sets are ready

√ no unschedulable pods

√ controller pod is running

√ can initialize the client

√ can query the control plane API

linkerd-config

--------------

√ control plane Namespace exists

√ control plane ClusterRoles exist

√ control plane ClusterRoleBindings exist

√ control plane ServiceAccounts exist

√ control plane CustomResourceDefinitions exist

√ control plane MutatingWebhookConfigurations exist

√ control plane ValidatingWebhookConfigurations exist

√ control plane PodSecurityPolicies exist

linkerd-identity

----------------

√ certificate config is valid

√ trust roots are using supported crypto algorithm

√ trust roots are within their validity period

√ trust roots are valid for at least 60 days

√ issuer cert is using supported crypto algorithm

√ issuer cert is within its validity period

√ issuer cert is valid for at least 60 days

√ issuer cert is issued by the trust root

linkerd-api

-----------

√ control plane pods are ready

√ control plane self-check

√ [kubernetes] control plane can talk to Kubernetes

√ [prometheus] control plane can talk to Prometheus

√ tap api service is running

linkerd-version

---------------

√ can determine the latest version

√ cli is up-to-date

control-plane-version

---------------------

√ control plane is up-to-date

√ control plane and cli versions match

linkerd-ha-checks

-----------------

√ pod injection disabled on kube-system

Status check results are √

Deleting the pod resolved the issue.

I have another instance of this problem.

I am not sure if they are related, however, I am using opencensus tracing and I know that the trace collector backend is full, so linkerd cannot write spans.

On my production system I do not have tracing and have not seen this bug.

I have a proxy version which I believe fixes these issues. It should be released in tomorrow's edge release, but it can be tested manually by adding an annotation to your workload:

config.linkerd.io/proxy-version: ver-backpressure-2020-03-04.0

Please let us know if you observe any similar issues with the newer version!

Just rolled 20.3.1 into our testing environment. Will update this issue accordingly.

I've finally deployed 20.3.1 into our Dev, Staging and Sandbox environments. I'll report back if I see anything odd.

Thanks @gamer22026 and @cpressland looking forward to hearing the results

@gamer22026 @cpressland thanks for checking this out! I currently lack time, but appreciate your efforts!

Think we can close this one out. Any idea when the edge fixes will land in a 2.x release?

There isn't a date set yet, but we're getting good feedback about the edge releases.

Assuming no new issues come up, my best guess is that one of the upcoming edges will be an RC.

We're starting to prepare stable-2.7.1 to include this fix, hopefully released later this week.

In the meantime, I suggest setting the annotation to:

config.linkerd.io/proxy-version: edge-20.3.4

Please report back if you experience this issue again! Thanks

That's awesome news! Thanks

Most helpful comment

I have a proxy version which I believe fixes these issues. It should be released in tomorrow's edge release, but it can be tested manually by adding an annotation to your workload:

Please let us know if you observe any similar issues with the newer version!